You don’t need another list of AEO tools. You need to know what real users discovered after paying for them.

Since G2 officially established the AEO software category in March 2025, demand for AEO software has grown by more than 2,000%. The Winter 2026 Grid was the first to formally map the category, with nine products making the initial cut. What separates the tools users kept from the ones they abandoned isn’t on the marketing pages. It’s buried in the 1-3 star reviews, the usability scores, and the patterns that repeat across hundreds of real user accounts.

Here are seven of those patterns, and what they mean when you’re about to write a check.

Insight 1: Most AEO Tools Track Mentions. The Best Ones Track What Mentions Mean.

A mention in an AI answer isn’t inherently good.

ChatGPT might cite your brand in a sentence like: “While Product A is expensive and prone to errors, it remains one of the available options.” That’s a mention. It’s also a reputation problem. The 2025 Conductor AEO/GEO Benchmarks Report identified what researchers call the “Brand-Citation Gap”: in real estate, Zillow achieves a brand mention share of 7.36%, yet consistently fails to rank among the top-cited domains. High awareness, low authority.

High-rated tools on G2 pair an Answer Placement Score (APS) with sentiment polarity analysis, so they can distinguish between being recommended and being referenced in a negative context. Tools that only count citation rates miss this entirely.

If a tool can’t tell you the difference between a positive citation and a backhanded mention, the numbers it’s producing are misleading at best.
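To make the difference concrete, here is a minimal sketch that scores a batch of AI answers that have already been labeled by polarity. The `Mention` schema, the three polarity labels, and the +1 / 0 / -1 weighting are illustrative assumptions, not any vendor’s Answer Placement Score formula.

```python
from dataclasses import dataclass
from typing import Literal, Optional

# Hypothetical polarity labels; real tools use their own classifiers and scales.
Polarity = Literal["positive", "neutral", "negative"]
POLARITY_WEIGHT = {"positive": 1.0, "neutral": 0.0, "negative": -1.0}


@dataclass
class Mention:
    """One sampled AI answer for a tracked prompt (illustrative schema)."""
    prompt: str
    brand_mentioned: bool
    polarity: Optional[Polarity] = None  # set only when the brand is mentioned


def mention_rate(answers: list[Mention]) -> float:
    """Fraction of answers that name the brand at all: the naive headline number."""
    if not answers:
        return 0.0
    return sum(a.brand_mentioned for a in answers) / len(answers)


def net_sentiment_visibility(answers: list[Mention]) -> float:
    """Mean polarity weight per answer; answers without a mention contribute 0."""
    if not answers:
        return 0.0
    total = sum(
        POLARITY_WEIGHT[a.polarity]
        for a in answers
        if a.brand_mentioned and a.polarity is not None
    )
    return total / len(answers)


answers = [
    Mention("best CRM for startups", True, "positive"),
    Mention("cheapest CRM", True, "negative"),
    Mention("most reliable CRM", True, "negative"),
    Mention("CRM alternatives", False),
]
print(mention_rate(answers))              # 0.75
print(net_sentiment_visibility(answers))  # -0.25
```

A 75% mention rate looks healthy on a dashboard. The negative net score tells the real story: most of those mentions are doing damage.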

Insight 2: “Full Platform Coverage” Is the Claim G2 Users Fact-Check First

The phrase appears in a lot of product descriptions. User reviews peel it back fast.

ChatGPT now processes over 2 billion queries daily, and Google AI Overviews reaches a comparable number of users each month. Perplexity, Claude, and Microsoft Copilot each hold significant share in specific intent categories. A brand’s visibility can vary dramatically between these models because each one has a distinct retrieval architecture. Perplexity leans on real-time news sources. ChatGPT draws on pre-training and RAG pipelines. An optimization that moves the needle on one won’t automatically transfer to the others.

The most common finding in negative G2 reviews: tools that claimed broad coverage were running deep integrations on ChatGPT and light, infrequent polling on everything else. G2 users consistently reward platforms that offer a unified dashboard tracking seven or more engines, including ChatGPT, Gemini, Perplexity, Claude, and Copilot.
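One way to spot the “deep on ChatGPT, light everywhere else” pattern is to break visibility out by engine and look at sample counts, not just rates. The sketch below assumes you can export raw `(engine, brand_was_mentioned)` records from a tool; the record format and the 50-sample threshold are hypothetical choices for illustration.

```python
from collections import defaultdict


def per_engine_visibility(records, min_samples=50):
    """Break visibility out by engine and flag engines polled too rarely to trust.

    records: iterable of (engine_name, brand_was_mentioned) pairs.
    Returns {engine: (visibility_rate, sample_count, undersampled)}.
    """
    tally = defaultdict(lambda: [0, 0])  # engine -> [mentions, samples]
    for engine, mentioned in records:
        tally[engine][0] += int(mentioned)
        tally[engine][1] += 1
    return {
        engine: (hits / n, n, n < min_samples)
        for engine, (hits, n) in tally.items()
    }


# Made-up sample: deep polling on one engine, light polling on the rest.
records = (
    [("ChatGPT", i < 60) for i in range(200)]
    + [("Perplexity", i < 1) for i in range(10)]
    + [("Gemini", False)] * 12
)

pooled = sum(m for _, m in records) / len(records)
print(f"Pooled: {pooled:.0%}")  # Pooled: 27%
for engine, (rate, n, thin) in per_engine_visibility(records).items():
    flag = " (undersampled)" if thin else ""
    print(f"{engine}: {rate:.0%} over {n} samples{flag}")
```

The pooled 27% looks like a respectable cross-platform number, but it is almost entirely ChatGPT. Perplexity and Gemini have too few samples to support any conclusion.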

Topify covers ChatGPT, Gemini, Perplexity, DeepSeek, Grok, Copilot, Doubao, Qwen, and others, tracking seven distinct metrics across all of them simultaneously: visibility, sentiment, position, volume, mentions, intent, and CVR (conversion rate). That breadth is where the actual visibility picture lives.

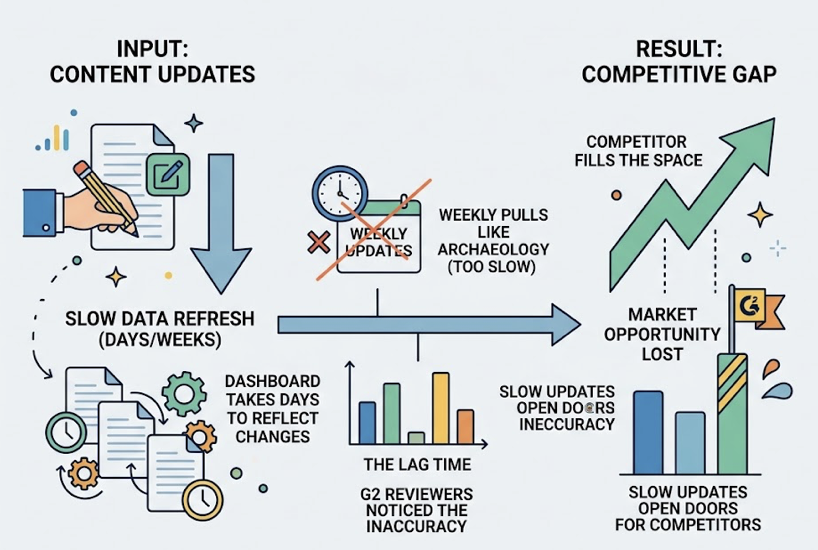

Insight 3: Data Freshness Divides the G2 Grid More Than Any Feature Set

AI models update faster than most monitoring tools assumed they would.

In Q1 2026, RAG datasets and model weights are shifting at a frequency that makes weekly data pulls look like archaeology. G2 reviewers noticed the gap in real terms: they’d correct a piece of content that was causing an AI to generate inaccurate brand information, and the dashboard would take days to reflect the change. By then, a competitor had already filled the space.

The high-rated tools have moved to hourly updates or real-time browser-rendered capture, meaning they simulate actual AI queries across distributed environments rather than relying on cached API responses. That technical distinction matters enormously when a competitor’s aggressive content push can shift your visibility position within 24 hours.

One question cuts through most vendor pitches: is your data coming from live browser rendering or static API caches? If the answer is vague, that’s your answer.
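If a tool exposes when each engine was last captured, you can check freshness yourself rather than taking the vendor’s word for it. This sketch assumes a hypothetical mapping of engine name to last-capture timestamp. The 24-hour default mirrors the window in which a competitor’s push can shift your position; it is an assumption, not a standard.

```python
from datetime import datetime, timedelta, timezone


def stale_engines(
    last_capture: dict[str, datetime],
    now: datetime,
    max_age: timedelta = timedelta(hours=24),
) -> list[str]:
    """Return engines whose latest capture is older than max_age, oldest first."""
    stale = [
        (now - ts, engine)
        for engine, ts in last_capture.items()
        if now - ts > max_age
    ]
    # Sort by age, largest first, so the worst offenders lead the list.
    return [engine for _, engine in sorted(stale, reverse=True)]


now = datetime(2026, 3, 1, 12, 0, tzinfo=timezone.utc)
last_capture = {  # made-up timestamps for illustration
    "ChatGPT": now - timedelta(hours=1),
    "Perplexity": now - timedelta(days=3),
    "Gemini": now - timedelta(days=7),
}
print(stale_engines(last_capture, now))  # ['Gemini', 'Perplexity']
```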

Insight 4: The Setup Problem Nobody Shows You in the Demo

Look at the 1-2 star reviews. The words “onboarding” and “setup” appear with striking consistency in the Cons sections.

Industry data puts this in context: 75% of SaaS users abandon a product within the first week if they struggle with initial configuration. For AEO tools specifically, time-to-value is hindered by the need for custom prompt engineering, API integrations, and the time required to build a historical data baseline. Unlike traditional SEO tools that can pull years of historical keyword data, most AEO platforms only begin collecting data the day you sign up.

Top performers on G2’s Usability Index take a different approach: opinionated onboarding that guides users toward a working setup rather than presenting 40 empty fields for them to figure out on their own. Platforms cited for ease of use, like Peec AI and Visby AI, allow users to see an initial AI Search Score within minutes. That immediate feedback loop is what reduces abandonment.

Enterprise-grade tools, by contrast, can take more than 100 days to reach full configuration. At that pace, you’ve already missed more than a full quarter of visibility data.

Insight 5: Competitor Benchmarking Went from “Nice to Have” to Non-Negotiable

Without competitive context, AI visibility metrics are unactionable.

A 15% visibility rate on ChatGPT means something only when set against a competitor’s 30%, or a competitor’s 5%. G2 users are direct about this: tools that don’t provide comparative data are “data without direction.” The shift toward Answer Share of Voice marks a departure from keyword-centric thinking toward entity-level dominance across AI engines.
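The 15% / 30% / 5% comparison above can be reproduced with a small sketch that computes both an absolute visibility rate and a simple share of voice. Here share of voice is defined, as an assumption, as each brand’s fraction of all tracked-brand mentions; vendors may weight by answer position or sentiment instead. The brand names and answer data are made up.

```python
def visibility_rates(answers: list[set[str]], brands: list[str]) -> dict[str, float]:
    """Fraction of sampled answers in which each brand appears at all."""
    n = len(answers)
    return {b: (sum(b in a for a in answers) / n if n else 0.0) for b in brands}


def share_of_voice(answers: list[set[str]], brands: list[str]) -> dict[str, float]:
    """Each brand's fraction of all tracked-brand mentions (one simple definition)."""
    counts = {b: sum(b in a for a in answers) for b in brands}
    total = sum(counts.values())
    return {b: (c / total if total else 0.0) for b, c in counts.items()}


# 20 made-up answers; each set holds the brands named in one AI answer.
answers = [{"YourBrand", "RivalA"}] * 3 + [{"RivalA"}] * 3 + [{"RivalB"}] + [set()] * 13
brands = ["YourBrand", "RivalA", "RivalB"]

vis = visibility_rates(answers, brands)
sov = share_of_voice(answers, brands)
for b in brands:
    print(b, vis[b], sov[b])
# YourBrand 0.15 0.3
# RivalA 0.3 0.6
# RivalB 0.05 0.1
```

The same 15% visibility rate translates to 30% of the conversation here, but only half of RivalA’s share. That gap is the actionable number.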

High-performing platforms have built “Missed Answer Detection” into their core product: features that identify specific queries where a competitor is cited but your brand isn’t. That list is an immediate content roadmap. Citation Gap Analysis, offered by tools like Writesonic and Otterly AI, takes this further by identifying the high-authority sites mentioning rivals, giving brands a clear list of external targets for digital PR.
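The core logic of Missed Answer Detection is a set comparison, and a rough citation gap view falls out of the same data. The sketch below assumes a hypothetical `Answer` record holding the brands named and the source domains cited. It approximates “sites mentioning rivals” as domains cited in answers where a rival appears and you don’t, which is a simplification of what commercial tools do. All domains are placeholders.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class Answer:
    """One AI answer to a tracked prompt (illustrative schema)."""
    prompt: str
    brands: set[str]  # brands named in the answer
    cited_domains: list[str] = field(default_factory=list)  # sources it cites


def missed_answers(answers: list[Answer], you: str, competitors: set[str]) -> list[str]:
    """Prompts where at least one competitor appears and your brand does not."""
    return [a.prompt for a in answers if you not in a.brands and a.brands & competitors]


def citation_gap(answers: list[Answer], you: str, competitors: set[str], top_n: int = 3):
    """Most frequently cited domains behind the answers you are missing from."""
    counts = Counter(
        d
        for a in answers
        if you not in a.brands and a.brands & competitors
        for d in a.cited_domains
    )
    return counts.most_common(top_n)


answers = [
    Answer("best CRM for startups", {"YourBrand", "RivalA"}, ["reviews.example"]),
    Answer("CRM with best support", {"RivalA"}, ["reviews.example", "forum.example"]),
    Answer("cheapest CRM", {"RivalB"}, ["forum.example", "directory.example"]),
    Answer("CRM for agencies", set(), []),
]
rivals = {"RivalA", "RivalB"}
print(missed_answers(answers, "YourBrand", rivals))
# ['CRM with best support', 'cheapest CRM']
print(citation_gap(answers, "YourBrand", rivals))
# [('forum.example', 2), ('reviews.example', 1), ('directory.example', 1)]
```

The first list is the content roadmap; the second is a starting point for digital PR outreach.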

Topify’s Competitor Monitoring goes further still, tracking not just who is mentioned but how they’re positioned relative to you, and surfacing new competitors entering your visibility space in real time.

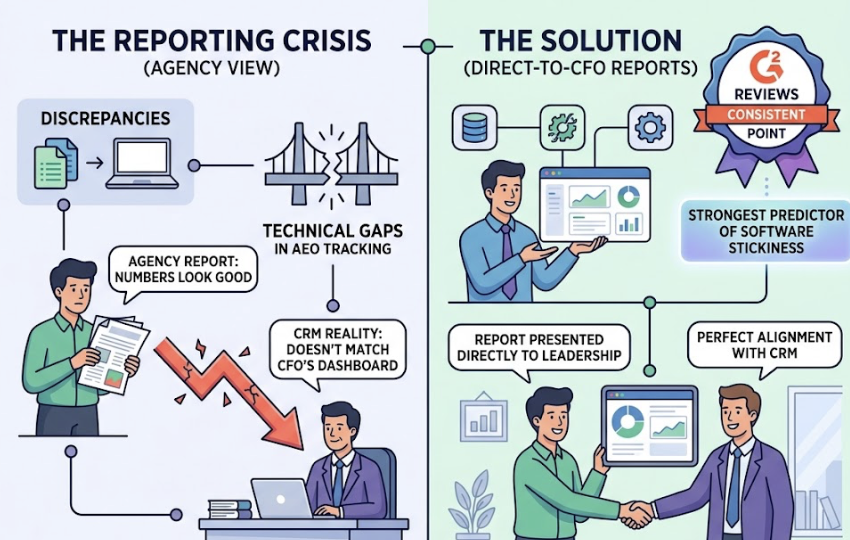

Insight 6: Reporting Quality Predicts Whether Agencies Keep Their Clients

For marketing agencies, this is the variable that quietly determines renewal rates.

A “reporting crisis” has emerged in the agency world: technical gaps in AEO tracking create discrepancies between agency reports and client financial reality. When the numbers don’t match what a CFO sees in the CRM, trust erodes quickly. G2 reviews are consistent on this point: reports that can be presented directly to leadership, without manual adjustment, are the strongest predictor of software stickiness.

The productivity data supports this. Automated reporting platforms save agencies an average of 137 billable hours per month, a 30% productivity increase. Real-time dashboards, white-label outputs, and “What’s Next” analysis that shifts focus from historical data to forward-looking strategy each contribute to a measurable increase in client satisfaction.

Topify integrates reporting as a core execution layer, synthesizing visibility across platforms into a single AI Search Score. The goal is to move agencies from defending numbers in a spreadsheet to discussing strategy in a boardroom.

Insight 7: A High G2 Score Today Doesn’t Tell You Much About Tomorrow

This is the one most buyers overlook.

G2 ratings reflect historical satisfaction. In a category where LLM algorithms change monthly, a tool’s current score may not reflect its current technological relevance. Reports indicate that up to 26% of G2 reviews in the AI category may be synthetic or AI-generated, which further complicates the trust signal. Legacy SEO tools often maintain high G2 scores due to established user bases, while power users describe their AEO modules as “beta-quality” or “standard SEO advice from the past decade.”

What static scores can’t capture is iteration velocity: how fast a tool is improving, and whether it’s moving toward agentic execution or staying at the level of a monitoring dashboard. The maturity model emerging from the market runs from Level 1 (basic mention tracking) through Level 5 (autonomous content deployment and one-click fixes). Most of the tools with impressive G2 averages sit at Level 2 or 3. Level 5 is where Topify and a small number of purpose-built platforms are operating.

The question isn’t just “what does this tool do today?” It’s “where is this tool in 12 months?”

Use These 7 Insights as a Pre-Purchase Checklist

Before you book a demo or start a trial, run through these seven questions:

| Dimension | What to Ask | Why It Matters |
|---|---|---|
| Citation vs. Mention | Does it distinguish positive citations from negative references? | Mention volume without sentiment context is misleading |
| Platform Breadth | Does it track 7+ engines in a unified dashboard? | Single-platform coverage creates dangerous blind spots |
| Data Freshness | Is data from live browser rendering or cached APIs? | Weekly data is too slow for the current AI update cycle |
| Time-to-Value | How long to first useful insight? | 75% of users abandon tools with poor onboarding within a week |
| Competitor Intelligence | Does it include Missed Answer Detection and Share of Voice? | Absolute metrics are unactionable without competitive context |
| Reporting Quality | Can reports go directly to leadership without manual work? | Reporting quality predicts client retention and executive trust |
| Agentic Potential | Does it close the loop from insight to execution? | Monitoring without action capability is a dashboard, not a platform |

Topify’s Basic plan starts at $99/month and covers 100 prompts across ChatGPT, Perplexity, and AI Overviews, with 9,000 AI answer analyses per month. The Pro plan at $199/month extends to 250 prompts and 22,500 analyses across the full engine ecosystem. If you want to see where your brand actually stands across AI platforms before committing, start with a trial.

Conclusion

The 2,000% growth in AEO software demand since early 2025 reflects a real shift: brands have accepted that AI search is a channel they can’t ignore. But the tools serving that demand vary enormously in depth and execution.

G2 data consistently points to the same differentiators: sentiment analysis over raw mention counts, genuine multi-platform coverage, data that updates fast enough to be actionable, and reporting that holds up in front of a CFO. The tools that score well on all seven dimensions are a small subset of the grid.

Use the checklist. Pressure-test the onboarding. Ask the data freshness question directly. And remember that a strong G2 score tells you what users thought last quarter, not what the tool will do for your brand in the next one.

FAQ

What is AEO and how is it different from SEO in 2026?

SEO focuses on ranking in link-based search results and driving clicks. AEO focuses on being cited by AI systems that synthesize answers directly, often without the user ever visiting a website. As Semrush data indicates, 93% of AI searches end in zero clicks, which means AEO is how brands influence decisions they’ll never see in their traffic analytics.

How reliable are G2 reviews for evaluating AEO tools?

Overall star ratings are useful but contain noise, including factors like customer support speed that don’t reflect core platform performance. More reliable signals are the Usability Index and Results Index scores, and specifically the 1-3 star reviews, where patterns around data accuracy and platform coverage are most visible. Industry reports also estimate that up to 26% of AI category reviews on G2 may be AI-generated, so look for specificity and detail as indicators of authentic feedback.

What’s the minimum feature set an AEO tool needs in 2026?

At minimum: real-time or near-real-time tracking across at least five AI platforms, sentiment polarity analysis (not just mention counting), competitor benchmarking with Share of Voice data, and reporting that doesn’t require manual cleanup before it reaches a client or executive. Agentic execution, the ability to act on insights automatically, is rapidly moving from differentiator to expectation.