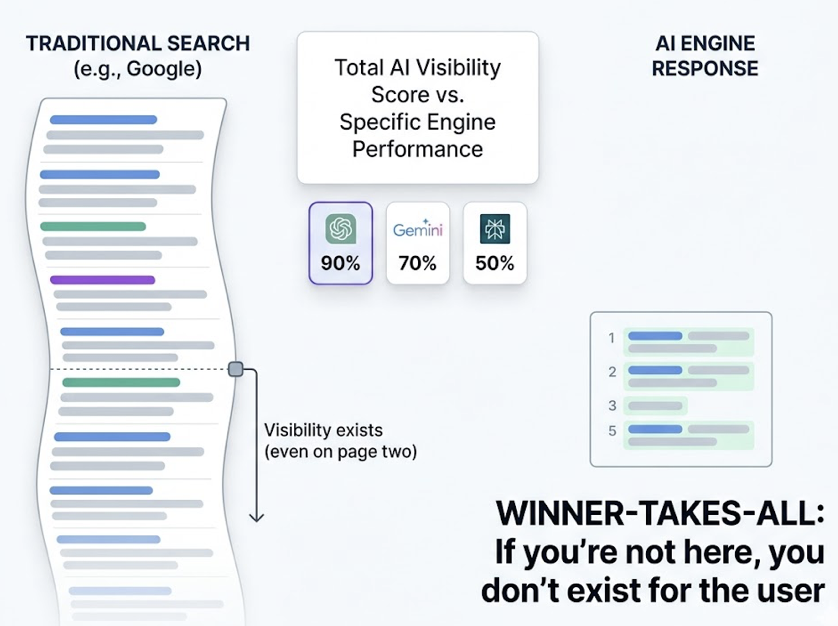

Your brand’s AI visibility score reads 72%. The quarterly report looks solid. Then you pull the platform-level data and the story falls apart: ChatGPT ranks you in the top three, Perplexity doesn’t mention you at all, and Gemini describes your product as a “budget option.” Three platforms, three completely different versions of your brand.

That single number in your dashboard isn’t telling you where you’re winning or losing. It’s averaging out the gaps that actually determine whether high-intent buyers find you or your competitor first.

Same Brand, Three Different AI Realities

Think of a mid-market B2B SaaS company with strong domain authority and a decade of content. In a standard monthly AI visibility report, that brand might show a score of 70%. Looks fine.

But split it by platform and the picture changes. ChatGPT treats the brand as a category leader because its pre-training data absorbed years of backlinks, press coverage, and directory listings. Perplexity skips the brand entirely because the company hasn’t published data-rich content in the past quarter. And Gemini pulls pricing info from an outdated third-party directory, labeling the product as “low-cost.”

That’s not a bug. It’s a structural feature of AI brand visibility in 2026.

Each AI platform perceives the internet through a different lens. ChatGPT leans on historical authority. Perplexity rewards recency. Gemini trusts structured entities. When you average those into one number, you’re hiding the signal that matters most: where your brand is invisible to the audience segments you care about.

Why ChatGPT, Perplexity, and Gemini Don’t Agree on Your Brand

The disagreement comes down to architecture. These platforms don’t read the same internet, and they don’t trust the same signals.

ChatGPT: Historical Authority Wins

ChatGPT uses a hybrid approach. It can run live searches through Bing, but that browsing capability activates on only about 34.5% of queries. The remaining 65% or so rely on what the model absorbed during training, which skews heavily toward established sources. An analysis of 680 million citations found that Wikipedia alone accounts for 47.9% of the citations among ChatGPT’s most-cited sources. If your brand has years of third-party coverage and directory presence, ChatGPT already “knows” you. If you’re newer or pivoting, you’re fighting an uphill battle against its training data.

Perplexity: Freshness Is Everything

Perplexity operates as a 100% retrieval-augmented generation (RAG) engine. Every single query triggers a live web search. It retrieves roughly 10 candidate pages and cites 3 to 4 in its response. This means Perplexity is extremely sensitive to what’s been published recently. Content updated within the past 30 days is 3.2 times more likely to get cited than evergreen material. Perplexity also favors niche expertise: in unbranded queries, niche sources account for 24% of all citations, higher than any other major model.

Gemini: Entity Ownership Matters

Gemini sits inside Google’s ecosystem and draws heavily from the Google Knowledge Graph. A Yext study found that 52.15% of Gemini citations come from brand-owned websites, compared to ChatGPT’s reliance on third-party directories. If your structured data is clean, your Google Business Profile is accurate, and your schema markup is tight, Gemini trusts you. If those signals are fragmented or contradictory, Gemini either mischaracterizes you or drops you entirely.

Here’s the thing: only 11% of cited domains overlap between ChatGPT and Perplexity. Optimizing for one platform doesn’t automatically help you on another. That’s why platform-level tracking isn’t optional anymore.

The Total Score Trap: Why Averages Are Dangerous

In traditional SEO, a domain’s average ranking gave you a reasonable proxy for digital health. In AI search, averages aren’t just misleading. They’re actively harmful to decision-making.

A total AI brand visibility score of 70% could mean 90% on ChatGPT, 70% on Gemini, and 50% on Perplexity. That 50% doesn’t mean your brand shows up half the time. In most cases, it means you’re missing from the 3 to 5 citations an AI engine provides for high-intent comparison queries. Unlike Google’s search results, where you might still appear on page two, an AI response is a winner-takes-all environment. If you’re not in the top recommendations, you don’t exist for that user.
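To see how the blended number hides the spread, here is a minimal Python sketch using the illustrative 90/70/50 split from the paragraph above. The 60% "floor" used to flag weak platforms is a hypothetical threshold chosen for the example, not an industry standard.

```python
from statistics import mean

# Illustrative per-platform visibility scores (percent) from the example above.
platform_scores = {"ChatGPT": 90.0, "Gemini": 70.0, "Perplexity": 50.0}


def summarize(scores: dict[str, float], floor: float = 60.0) -> dict:
    """Return the blended score alongside the per-platform detail it hides."""
    return {
        "blended": mean(scores.values()),                  # the single dashboard number
        "weakest": min(scores, key=scores.get),            # platform with the lowest score
        "spread": max(scores.values()) - min(scores.values()),
        "below_floor": sorted(p for p, s in scores.items() if s < floor),
    }


if __name__ == "__main__":
    print(summarize(platform_scores))
```

A flat 70/70/70 profile produces the same blended score with a spread of zero. That difference is exactly what the single number erases.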

This creates three specific failures:

| Failure Type | What Happens | Why It Hurts |
|---|---|---|
| Platform Growth Blindness | A 20% drop in Perplexity visibility barely moves your total score | You miss that Perplexity is where your most technical buyers research |
| Resource Misallocation | Teams keep investing in PR for ChatGPT consensus | The real gap is technical schema for Gemini or content freshness for Perplexity |
| Broken Conversion Funnels | Strong ChatGPT visibility feels like success | Perplexity and AI Overviews drive higher-intent, closer-to-purchase traffic |

The conversion data makes this concrete. AI-driven traffic from ChatGPT converts at 15.9%, compared to 1.76% for traditional Google organic. Perplexity follows at 10.5%. When you’re invisible on these platforms, you’re not just losing impressions. You’re losing buyers who are already most of the way through their decision.

What Cross-Platform AI Brand Visibility Gaps Actually Tell You

The gap between platforms isn’t random noise. It’s a diagnostic signal pointing to a specific problem in your content and authority ecosystem.

If you’re visible on ChatGPT but invisible on Perplexity, you most likely have a freshness problem. Your historical authority is strong, but your real-time content output is weak. The fix: implement a 30-day content refresh cycle, submit new and updated URLs via IndexNow for faster crawling, and publish original data that RAG systems can easily extract.
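Here is a minimal sketch of that freshness workflow. It assumes you already have a last-updated date for each URL, for example from your CMS or a sitemap `lastmod`. The code flags pages outside the 30-day window and builds the JSON body that the IndexNow protocol accepts for bulk submissions. The host, key, and URLs are placeholders, and the key file is assumed to be hosted at the site root. You would submit URLs only after you have actually refreshed them.

```python
import json
import urllib.request
from datetime import date, timedelta

REFRESH_WINDOW = timedelta(days=30)  # the 30-day refresh cycle from the text
INDEXNOW_ENDPOINT = "https://api.indexnow.org/indexnow"


def stale_urls(last_updated: dict[str, date], today: date) -> list[str]:
    """Return URLs last updated more than REFRESH_WINDOW ago, sorted for stable output."""
    return sorted(url for url, updated in last_updated.items() if today - updated > REFRESH_WINDOW)


def indexnow_payload(host: str, key: str, urls: list[str]) -> bytes:
    """Build the JSON body for an IndexNow bulk submission.

    Assumes the key file is hosted at https://<host>/<key>.txt.
    """
    body = {
        "host": host,
        "key": key,
        "keyLocation": f"https://{host}/{key}.txt",
        "urlList": urls,
    }
    return json.dumps(body).encode("utf-8")


def submit(payload: bytes) -> int:
    """POST the payload to IndexNow and return the HTTP status (not called below)."""
    req = urllib.request.Request(
        INDEXNOW_ENDPOINT,
        data=payload,
        headers={"Content-Type": "application/json; charset=utf-8"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=10) as resp:
        return resp.status


if __name__ == "__main__":
    pages = {
        "https://www.example.com/pricing": date(2026, 3, 20),
        "https://www.example.com/guide": date(2025, 12, 1),
        "https://www.example.com/benchmarks": date(2026, 2, 28),
    }
    to_refresh = stale_urls(pages, today=date(2026, 3, 31))
    print(to_refresh)
    print(indexnow_payload("www.example.com", "your-indexnow-key", to_refresh).decode())
```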

If you’re visible on Perplexity but invisible on ChatGPT, you most likely have an authority depth problem. You’re producing content that ranks in real-time search, but ChatGPT’s model weights don’t yet recognize you as a category authority. The fix: earn mentions in high-authority (high-DA) encyclopedic sources, contribute to industry publications, and ensure consistent categorization across third-party directories.

If Gemini’s description of your brand doesn’t match your positioning, you have an entity integrity problem: your Knowledge Graph footprint is fragmented. The fix: audit your Schema.org Organization and Product markup, update your Google Business Profile, and make sure your brand-owned properties are the strongest signal for how you’re categorized.
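A good first step in the markup audit is to compare the Organization JSON-LD that each brand-owned page declares and flag any fields that disagree. This sketch assumes two things. First, you have already extracted each page's JSON-LD block as a string, for example with an HTML parser. Second, `@type` is a single string. Real pages often nest entities in an `@graph` array, which this sketch does not handle. The field list is a hypothetical starting point.

```python
import json
from collections import defaultdict

# Hypothetical starting set of fields to compare across pages.
FIELDS = ("name", "url", "logo", "description")


def audit_organization_markup(pages: dict[str, str],
                              fields: tuple[str, ...] = FIELDS) -> dict[str, dict[str, list[str]]]:
    """Return {field: {value: [pages]}} for every field declared with more than one value."""
    seen: dict[str, dict[str, list[str]]] = defaultdict(lambda: defaultdict(list))
    for page, raw in pages.items():
        data = json.loads(raw)
        if data.get("@type") != "Organization":
            continue  # only compare Organization entities
        for field in fields:
            if field in data:
                seen[field][str(data[field])].append(page)
    # Keep only the fields where pages disagree.
    return {field: dict(values) for field, values in seen.items() if len(values) > 1}


if __name__ == "__main__":
    pages = {
        "/": json.dumps({"@type": "Organization", "name": "Acme Analytics", "url": "https://www.example.com"}),
        "/about": json.dumps({"@type": "Organization", "name": "Acme Analytics Inc.", "url": "https://www.example.com"}),
        "/blog": json.dumps({"@type": "BlogPosting", "headline": "Hello"}),
    }
    print(audit_organization_markup(pages))
```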

Each pattern requires a different optimization strategy. That’s why a single “AI visibility” metric can’t drive action. You need the platform-level breakdown to know what to fix.

How to Track AI Brand Visibility at the Platform Level

Manual checks don’t scale. Asking ChatGPT “What’s the best CRM?” once a week gives you a snapshot of a stochastic model, not a trend. LLM outputs vary by session, and the results shift as training data and retrieval indexes update.

Professional tracking in 2026 has moved toward automated “Share of Model” analysis. The methodology works by running thousands of natural language prompt variations across multiple platforms and geographic nodes, then calculating reliable visibility scores per engine.
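The sampling logic behind per-engine scores can be sketched in a few lines of Python. `ask` is a hypothetical stand-in for whatever client you use to query each platform; no real vendor API is assumed, and `fake_ask` returns canned text purely for illustration. Each prompt is run several times because outputs vary between sessions. A plain regex match on the brand name stands in for the entity matching a production tool would need.

```python
import re
from typing import Callable

# Hypothetical interface: (platform, prompt) -> answer text.
AskFn = Callable[[str, str], str]


def share_of_model(ask: AskFn, platforms: list[str], prompts: list[str],
                   brand: str, runs: int = 3) -> dict[str, float]:
    """Return, per platform, the fraction of sampled answers that mention `brand`."""
    # Word-boundary match; assumes the brand name starts and ends with a letter or digit.
    pattern = re.compile(rf"\b{re.escape(brand)}\b", re.IGNORECASE)
    scores: dict[str, float] = {}
    for platform in platforms:
        hits = total = 0
        for prompt in prompts:
            for _ in range(runs):  # repeat: the same prompt can yield different answers
                total += 1
                if pattern.search(ask(platform, prompt)):
                    hits += 1
        scores[platform] = hits / total if total else 0.0
    return scores


def fake_ask(platform: str, prompt: str) -> str:
    """Canned answers for illustration only."""
    canned = {
        "ChatGPT": "Top picks: Acme CRM, Globex, Initech.",
        "Perplexity": "Consider Globex or Initech.",
    }
    return canned.get(platform, "")


if __name__ == "__main__":
    print(share_of_model(fake_ask, ["ChatGPT", "Perplexity", "Gemini"],
                         ["best CRM", "CRM for startups"], "Acme CRM"))
```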

Topify takes this approach by treating LLMs as behavioral systems rather than searchable databases. Instead of tracking keywords, it tracks prompt-level brand appearance across ChatGPT, Perplexity, Gemini, DeepSeek, and other major AI platforms. The platform breaks the “total score” into the metrics that actually drive decisions:

Mention Frequency tells you how often your brand appears in answers to relevant queries, typically expressed per 1,000 queries. Top brands in a category typically hit around 12% (roughly 120 mentions per 1,000 queries), while the average brand sits at 0.3% (about 3 per 1,000). Position Tracking shows whether you’re the first recommendation or buried as an afterthought. Source Analysis reveals which domains AI engines cite when they talk about your category, so you can see where competitors are getting their authority. And Sentiment Monitoring catches cases where an AI engine technically mentions you but describes you inaccurately.
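Once answers have been parsed into ranked brand lists, mention frequency and position become simple ratios. This sketch assumes that parsing step has already happened; the `Answer` record is a hypothetical representation. Sentiment is left out because it needs a separate classifier.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Answer:
    """One AI answer, reduced to the brands it recommends, in order (hypothetical parsed form)."""
    brands: list[str]


def visibility_metrics(answers: list[Answer], brand: str) -> dict[str, Optional[float]]:
    """Mention frequency per 1,000 answers, first-position rate, and average position."""
    n = len(answers)
    # 1-based position of the brand in each answer that mentions it
    positions = [a.brands.index(brand) + 1 for a in answers if brand in a.brands]
    return {
        "mentions_per_1000": 1000 * len(positions) / n if n else 0.0,
        "first_position_rate": sum(p == 1 for p in positions) / n if n else 0.0,
        "avg_position": sum(positions) / len(positions) if positions else None,
    }


if __name__ == "__main__":
    sample = [
        Answer(["Acme", "Globex"]),
        Answer(["Globex", "Initech", "Acme"]),
        Answer(["Initech"]),
        Answer(["Acme"]),
    ]
    print(visibility_metrics(sample, "Acme"))
```

The first-position rate divides by all answers rather than only the answers that mention you. That way, a brand that is rarely mentioned cannot look strong on position alone.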

In practice, this means you can spot a drop in Perplexity visibility, trace it to a specific content gap, and know exactly which type of content to publish next. No guessing required.

Closing the Gap: From Diagnosis to Action

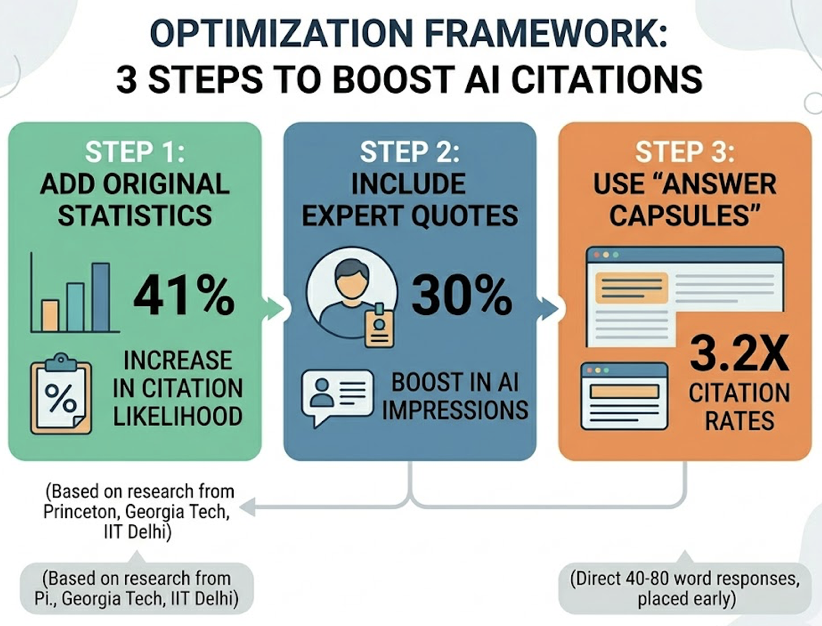

Once you’ve identified which platforms are underperforming, the execution framework is straightforward. Academic research from Princeton, Georgia Tech, and IIT Delhi found that adding original statistics to content increases AI citation likelihood by 41%. Including expert quotes with verifiable credentials provides a 30% boost in AI impressions. And structuring content with “answer capsules,” direct 40-to-80-word responses placed early in the page, increases citation rates by 3.2x.
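You can run a rough pre-publish check for the answer-capsule pattern: does the page open with a direct answer of 40 to 80 words? This sketch makes three simplifying assumptions. It treats "placed early" as "the first paragraph," splits paragraphs on blank lines, and counts words by plain whitespace splitting.

```python
import re


def answer_capsule_check(page_text: str, low: int = 40, high: int = 80) -> tuple[bool, int]:
    """Return (passes, word_count) for the first non-empty paragraph of the page."""
    paragraphs = [p.strip() for p in re.split(r"\n\s*\n", page_text) if p.strip()]
    if not paragraphs:
        return False, 0
    words = len(paragraphs[0].split())
    return low <= words <= high, words


if __name__ == "__main__":
    draft = " ".join(["word"] * 50) + "\n\nSupporting detail follows here."
    print(answer_capsule_check(draft))
```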

For Gemini specifically, implementing FAQPage and Organization schema increases AI citation likelihood by up to 40%. Entity linking, connecting your brand to founders, social profiles, and certifications through structured data, adds another 19.72% lift in AI Overview appearances.
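For the schema work, here is a minimal sketch that emits Organization and FAQPage JSON-LD using standard Schema.org types (`Organization`, `Person`, `FAQPage`, `Question`, `Answer`). Entity links to founders and social profiles go in `founder` and `sameAs`; certifications are left out. All names and URLs are placeholders.

```python
import json


def organization_jsonld(name: str, url: str, founders: list[str], profiles: list[str]) -> dict:
    """Organization entity linked to its founders and official profiles."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "founder": [{"@type": "Person", "name": f} for f in founders],
        "sameAs": profiles,  # official social and directory profiles
    }


def faq_jsonld(pairs: list[tuple[str, str]]) -> dict:
    """FAQPage built from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {"@type": "Question", "name": q,
             "acceptedAnswer": {"@type": "Answer", "text": a}}
            for q, a in pairs
        ],
    }


def script_tag(data: dict) -> str:
    """Wrap JSON-LD in a script tag; escaping "</" stops a stray "</script>" from closing it early."""
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">{payload}</script>'


if __name__ == "__main__":
    org = organization_jsonld("Acme Analytics", "https://www.example.com",
                              ["Jane Doe"], ["https://www.linkedin.com/company/example"])
    print(script_tag(org))
    print(script_tag(faq_jsonld([("What is Acme Analytics?", "A placeholder answer.")])))
```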

The economic case is clear. Gartner projects that by 2026, 30% of brand perception will be shaped by AI before a buyer ever visits a brand’s website. A SOCi audit of over 350,000 business locations found that ChatGPT recommends only 1.2% of local businesses. With so few brands making the cut, the gap between brands that track platform-level AI brand visibility and those that don’t is likely to keep widening.

The action loop is simple: identify the gap, diagnose the cause, deploy targeted content, and track the results continuously. AI models are iterative. Your visibility score isn’t a trophy. It’s a signal that changes every time an index refreshes.

Conclusion

Your total AI visibility score is an average. And averages hide the platform-level gaps that determine whether high-intent buyers find you or your competitor. The brands that win in 2026 won’t be the ones with the highest single number. They’ll be the ones that know exactly where they’re strong, where they’re invisible, and what to do about it, platform by platform.

Stop flying blind on a number that smooths out the peaks and valleys. Start tracking the cross-platform AI brand visibility data that actually tells you where to act.

FAQ

Q: What is AI brand visibility?

A: AI brand visibility measures how often and in what context your brand appears in AI-generated answers from platforms like ChatGPT, Perplexity, and Gemini. Unlike traditional search rankings, it focuses on “Share of Model,” the frequency with which a model selects your brand as a recommendation for a user’s prompt.

Q: Why does my brand show up on ChatGPT but not Perplexity?

A: The two platforms use fundamentally different architectures. ChatGPT relies heavily on pre-training data that rewards historical authority and internet consensus. Perplexity runs 100% real-time retrieval and prioritizes content freshness and niche expertise. If you’re missing from Perplexity, your recent content strategy and real-time SEO signals likely need work.

Q: How often should I check AI brand visibility across platforms?

A: Weekly monitoring is recommended for category leaders, given that RAG indexes can update in 24 to 48 hours and LLM outputs are stochastic. Monthly audits are the minimum for standard brand health.

Q: Can I improve visibility on one AI platform without affecting others?

A: Yes. Because only 11% of cited domains overlap between ChatGPT and Perplexity, you can target specific platforms. Implementing Schema.org markup primarily boosts Gemini and AI Overview visibility, while publishing original research statistics most directly impacts Perplexity citations.