Your marketing team tracks everything. Organic rankings, paid search CTR, GA4 sessions, conversion funnels. Then someone on the leadership team asks, “What does ChatGPT say when a customer searches for our product category?” and nobody has an answer.

That’s not a minor blind spot. ChatGPT now processes 2.5 billion daily prompts across 900 million weekly active users. Perplexity handles 780 million monthly queries. Google AI Overviews appear in over 25% of desktop searches. None of that activity shows up in your current analytics stack. AI visibility analytics exists to close that gap.

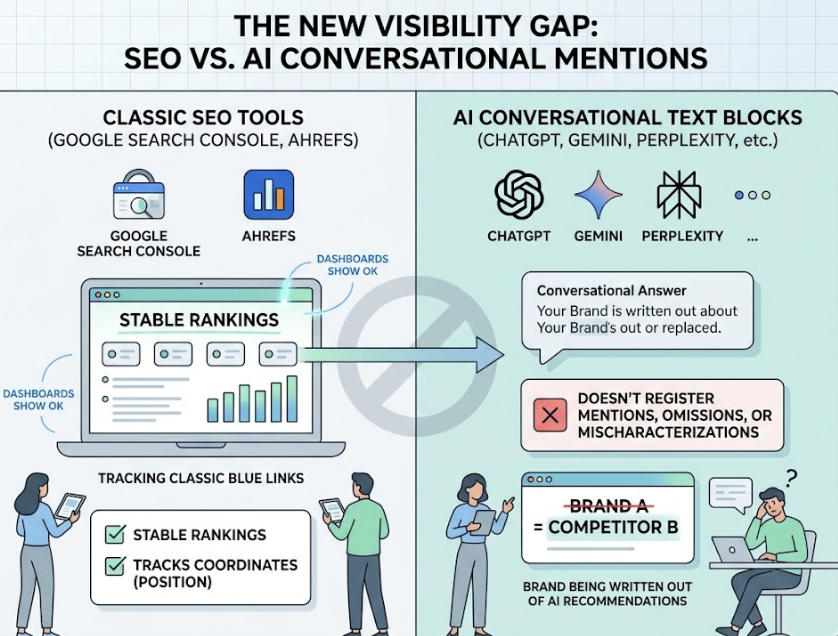

Most Analytics Dashboards Can’t See What AI Is Saying About You

Traditional web analytics was built to measure clicks, rankings, and sessions. It works for a world where users type a query, scan ten blue links, and click one.

That world is shrinking fast.

Zero-click searches have risen from 56% to 69% globally. When a Google AI Overview appears, that rate jumps to 80–83%. Users get synthesized answers directly in the interface, and traditional organic results get pushed down by 1,562 to 1,630 pixels.

Tools like Google Search Console and Ahrefs track where classic blue links rank. They don’t register when a brand is mentioned, omitted, or mischaracterized inside a conversational text block. That means your dashboard can show stable rankings while your brand is actively being written out of AI-generated recommendations.

AI visibility analytics is a different discipline entirely. Instead of tracking user clicks, it tracks model outputs: whether your brand appears in AI responses, how it’s described, where it’s positioned relative to competitors, and which sources the model cites to justify its answer.

What AI Visibility Analytics Actually Measures

The core framework breaks down into seven dimensions. Each one maps to a traditional SEO metric but measures something fundamentally different.

| Metric | What It Tracks | Traditional SEO Equivalent |
|---|---|---|
| Visibility | Whether your brand appears in AI responses for a given prompt set | Impression share / keyword ranking |
| Sentiment | How the AI describes your brand (positive, neutral, critical) | Backlink sentiment / anchor text |
| Position | Where your brand appears in the generated text (early = better recall) | SERP rank position |
| Volume | Search demand for the prompts that trigger your brand mentions | Monthly search volume |
| Mentions | Frequency of brand name occurrences across responses | Keyword density |
| Source | Which URLs and domains the AI cites when referencing your brand | Referring domains / backlinks |
| CVR | Predicted likelihood that an AI mention drives a downstream action | Click-through rate |

The key distinction: traditional analytics tells you what users did. AI visibility analytics tells you what the model said. And in a zero-click environment, what the model says often determines whether a user ever reaches your site.
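To make three of those dimensions concrete, here is a minimal Python sketch that derives Visibility, Mentions, and Position from a batch of captured AI responses. The `brand_stats` function, the `BrandStats` fields, and the sample brands are illustrative assumptions, not the scoring method of any particular platform; Position is approximated as the relative character offset of the first mention.

```python
import re
from dataclasses import dataclass
from typing import Optional


@dataclass
class BrandStats:
    visibility: float               # share of responses mentioning the brand
    mentions: int                   # total brand-name occurrences
    avg_position: Optional[float]   # mean relative offset of first mention (0 = start)


def brand_stats(responses: list, brand: str) -> BrandStats:
    """Hypothetical helper: summarize how one brand shows up in a response set."""
    pattern = re.compile(rf"\b{re.escape(brand)}\b", re.IGNORECASE)
    hits, mentions, positions = 0, 0, []
    for text in responses:
        found = list(pattern.finditer(text))
        if not found:
            continue
        hits += 1
        mentions += len(found)
        # Earlier first mention -> smaller value -> better recall.
        positions.append(found[0].start() / max(len(text), 1))
    visibility = hits / len(responses) if responses else 0.0
    avg_position = sum(positions) / len(positions) if positions else None
    return BrandStats(visibility, mentions, avg_position)


if __name__ == "__main__":
    sample = [
        "Acme and Globex are popular options. Acme is cheaper.",
        "Most teams choose Globex.",
        "For small teams, consider Initech or Acme.",
        "Globex leads this category.",
    ]
    print(brand_stats(sample, "Acme"))
```

A plain word-boundary match like this will miss misspellings and product nicknames, so a production tracker would likely need an alias list per brand.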

One metric that tends to get overlooked is Source analysis. When you know exactly which domains the AI is citing for your competitors but not for you, you’ve found the content gap to fix.

Why Tracking Perplexity Mentions Is Harder Than You Think

Perplexity isn’t ChatGPT with citations bolted on. It runs a multi-layered retrieval pipeline that makes brand tracking genuinely complex.

When a user submits a query, Perplexity’s intent mapping system classifies it using an internal embedding model and routes it to either a trending or evergreen index. A candidate pool of web snippets gets assembled, then scored by an L3 XGBoost reranker evaluating semantic depth, domain authority, engagement signals, and freshness. Snippets below the similarity threshold get discarded. What survives gets synthesized into a response with inline citations.

That pipeline is dynamic and query-dependent. A single manual check doesn’t account for regional differences, personalized search histories, or the model’s variable parameters. Plus, Perplexity enforces source diversity constraints, which means your brand’s visibility can shift depending on what else appears in the candidate pool.

Manual monitoring doesn’t scale. With 45 million active users running research-oriented queries with high commercial intent, Perplexity is too important to track with spot checks. Automated tools that run scheduled simulations across thousands of regional nodes are the only way to establish a reliable baseline of brand presence, citation frequency, and competitor co-occurrence.

5 Metrics That Separate Real AI Visibility Analytics from Dashboard Noise

Not all AI visibility data is worth acting on. Here’s a checklist that isolates the signals that actually drive decisions:

1. Share of Model (SoM) across a prompt cluster. If your SoM drops by more than 15%, it typically means competitor content now matches the intent behind those prompts more closely than yours does. Time to audit what changed.

2. Citation Attribution Rate. This is the ratio of explicit URL citations to raw text mentions. If the model mentions your brand but doesn’t cite your domain, your site likely lacks the structured, easily extractable markup that AI crawlers prefer.

3. Target Prompt Coverage. Track your inclusion rate across categorized prompt variants. A drop on comparison queries often signals that third-party review sites are outranking your brand in the model’s index.

4. NLP Sentiment Velocity. Monitor the shift in context sentiment scores over a 30-day window. A downward trend often means outdated press coverage or unaddressed negative reviews are feeding the model’s retrieval pipeline.

5. Attributed Session Yield. Map GA4 traffic using custom AI channel filters. If session volume drops while your SoM stays stable, the model is likely satisfying user intent directly in the generated answer without sending a click.
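The first two items on that checklist reduce to simple ratios. The sketch below is one way to compute them, under stated assumptions: Share of Model is taken to be a brand's share of all tracked brand mentions in a prompt cluster, the 15% threshold is read as a relative drop, and the record layout (`text`, `citations`) and function names are hypothetical.

```python
from collections import Counter
from urllib.parse import urlparse


def share_of_model(responses: list, brands: list) -> dict:
    """Assumed definition: each brand's share of all tracked brand mentions."""
    counts = Counter()
    for text in responses:
        lowered = text.lower()
        for brand in brands:
            counts[brand] += lowered.count(brand.lower())
    total = sum(counts.values())
    return {b: (counts[b] / total if total else 0.0) for b in brands}


def citation_attribution_rate(records: list, brand: str, domain: str) -> float:
    """Explicit citations of `domain` divided by raw text mentions of `brand`."""
    mentions, citations = 0, 0
    domain = domain.lower()
    for record in records:
        mentions += record["text"].lower().count(brand.lower())
        for url in record["citations"]:
            host = urlparse(url).netloc.lower()
            if host == domain or host.endswith("." + domain):
                citations += 1
    return citations / mentions if mentions else 0.0


def som_drop_alert(previous: float, current: float, threshold: float = 0.15) -> bool:
    """Flag a relative SoM drop larger than `threshold` (assumed interpretation)."""
    if previous <= 0:
        return False
    return (previous - current) / previous > threshold


if __name__ == "__main__":
    records = [
        {"text": "Acme and Globex both work. Acme is cheaper.",
         "citations": ["https://www.acme.example/pricing",
                       "https://reviews.example/acme-vs-globex"]},
        {"text": "Globex is the usual pick.",
         "citations": ["https://globex.example/"]},
        {"text": "Acme suits small teams.", "citations": []},
    ]
    texts = [r["text"] for r in records]
    print(share_of_model(texts, ["Acme", "Globex"]))
    print(citation_attribution_rate(records, "Acme", "acme.example"))
```

A low attribution rate alongside a healthy SoM is the pattern item 2 describes: the model knows the brand but is sourcing its description from someone else's pages.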

The most common mistake in AI visibility analytics? Tracking raw visibility while ignoring contextual sentiment. A high volume of brand mentions is counterproductive if the model regularly positions you as a negative example or references pricing you retired two years ago.
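The 30-day sentiment trend from the checklist can be approximated as the slope of a least-squares line through daily sentiment scores. This is a rough sketch: it assumes you already have one score per day from whatever sentiment model you use, and `sentiment_velocity` is a hypothetical name.

```python
def sentiment_velocity(daily_scores: list, window: int = 30) -> float:
    """Least-squares slope of the last `window` daily scores (score units per day)."""
    recent = daily_scores[-window:]
    n = len(recent)
    if n < 2:
        return 0.0  # not enough data to estimate a trend
    mean_x = (n - 1) / 2
    mean_y = sum(recent) / n
    cov = sum((i - mean_x) * (y - mean_y) for i, y in enumerate(recent))
    var = sum((i - mean_x) ** 2 for i in range(n))
    return cov / var


if __name__ == "__main__":
    # Illustrative data: sentiment sliding by 0.01 per day.
    scores = [0.6 - 0.01 * day for day in range(30)]
    print(sentiment_velocity(scores))
```

A negative slope that persists across several consecutive runs is a stronger signal than a single low reading, since individual model responses vary from run to run.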

Another frequent pitfall: focusing exclusively on ChatGPT while ignoring Perplexity. Perplexity’s research-oriented users convert at significantly higher rates, making citation changes on that platform an early signal of high-intent buying shifts.

Research backs this up. 96% of content selected for Google’s AI Overviews features verified E-E-A-T trust signals. The Princeton GEO study found that integrating expert quotes with clear attributions improves generative visibility by 41%, and adding verified data tables with inline citations increases selection probability by 30%.

How to Build an AI Visibility Analytics Strategy from Scratch

Step 1: Define your target prompt portfolio. Unlike traditional keyword lists, these prompts mirror natural language query paths. Include category-level prompts (share of voice), problem-solving prompts (early-stage buyers), and comparison prompts (high-intent evaluations).
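A prompt portfolio can start as a small, typed list. The sketch below shows one possible shape, with the three prompt types from Step 1 as an enum; the class names and example prompts are placeholders, not a required format.

```python
from dataclasses import dataclass
from enum import Enum


class PromptType(Enum):
    CATEGORY = "category"            # share of voice
    PROBLEM_SOLVING = "problem"      # early-stage buyers
    COMPARISON = "comparison"        # high-intent evaluations


@dataclass(frozen=True)
class Prompt:
    text: str
    kind: PromptType


# Placeholder prompts for a hypothetical project-management product.
PORTFOLIO = [
    Prompt("What are the best project management tools?", PromptType.CATEGORY),
    Prompt("How do I keep a remote team on schedule?", PromptType.PROBLEM_SOLVING),
    Prompt("Acme vs Globex for a 20-person agency", PromptType.COMPARISON),
]


def group_by_kind(portfolio: list) -> dict:
    """Group prompt texts by type so coverage can be reported per type."""
    grouped = {kind: [] for kind in PromptType}
    for prompt in portfolio:
        grouped[prompt.kind].append(prompt.text)
    return grouped


if __name__ == "__main__":
    for kind, texts in group_by_kind(PORTFOLIO).items():
        print(kind.value, len(texts))
```

Tagging each prompt with its type up front makes it straightforward to report inclusion rates per type later.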

Step 2: Establish a baseline audit. Run your prompt set across ChatGPT, Gemini, Perplexity, and Google AI Overviews. Document brand presence, explicit citations, competitor co-occurrences, and the third-party domains models cite when your brand is absent.
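Once the responses are captured, the baseline boils down to two summaries: how often your brand appears per engine, and which domains get cited when it doesn't. Here is a minimal sketch, assuming each audit row is a dict with `engine`, `response`, and `citations` keys (a hypothetical layout) and that the responses were collected separately.

```python
from collections import Counter, defaultdict
from urllib.parse import urlparse


def summarize_audit(rows: list, brand: str):
    """Return (presence rate per engine, domains cited when the brand is absent)."""
    presence = defaultdict(lambda: [0, 0])  # engine -> [responses with brand, total]
    gap_domains = Counter()
    for row in rows:
        present = brand.lower() in row["response"].lower()
        presence[row["engine"]][1] += 1
        if present:
            presence[row["engine"]][0] += 1
        else:
            # These are the third-party sources winning the answer instead.
            for url in row["citations"]:
                gap_domains[urlparse(url).netloc] += 1
    rates = {engine: hit / total for engine, (hit, total) in presence.items()}
    return rates, gap_domains


if __name__ == "__main__":
    rows = [
        {"engine": "perplexity", "response": "Acme is a solid choice.",
         "citations": ["https://acme.example/"]},
        {"engine": "perplexity", "response": "Globex is recommended.",
         "citations": ["https://reviews.example/top-10"]},
        {"engine": "chatgpt", "response": "Consider Globex.",
         "citations": ["https://reviews.example/top-10", "https://globex.example/"]},
    ]
    rates, gaps = summarize_audit(rows, "Acme")
    print(rates)
    print(gaps.most_common(3))
```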

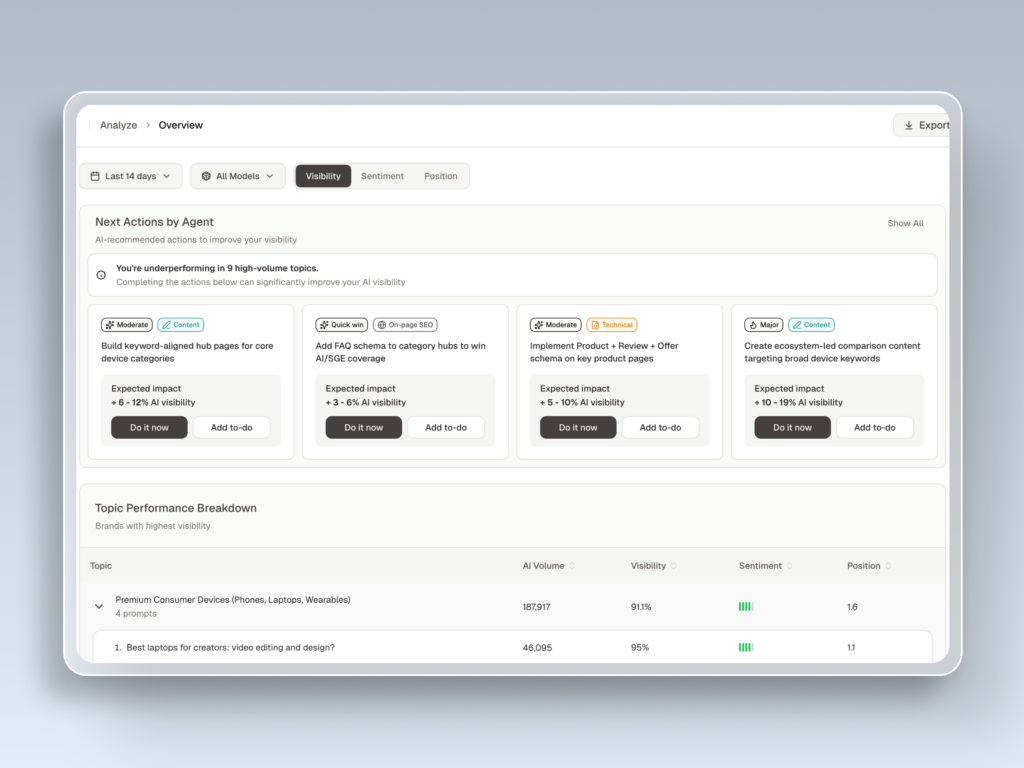

Step 3: Choose the right tool. For teams that need monitoring, analysis, and execution in one place, Topify consolidates the entire workflow. It tracks visibility and sentiment across ChatGPT, Gemini, Perplexity, DeepSeek, Doubao, Qwen, and other major engines. Its Source Analysis feature reverse-engineers the exact domains AI platforms cite, so you can see where your competitors are getting picked up and where you’re not. When the data reveals a gap, Topify’s one-click agent deploys optimized content directly to your CMS.

That combination of tracking and execution is what separates a monitoring tool from an analytics platform. Most alternatives stop at the dashboard. Topify connects the data to action.

Step 4: Set a tracking cadence. High-volume consumer brands typically need daily scanning. B2B companies can run weekly cycles to separate real visibility shifts from minor model fluctuations.

Step 5: Turn insights into optimizations. When the dashboard flags a citation gap on a high-value prompt, your content team should place a concise direct-answer block in the first 200 words of the target page, integrate verified statistics, and update the dateModified schema to signal recency to AI crawlers.
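For the recency signal, `dateModified` is a standard schema.org property on `Article` markup. The sketch below generates a JSON-LD payload with it; the helper name, headline, and URL are placeholders, and how much weight any given AI crawler puts on this field is not something the markup itself guarantees.

```python
import json
from datetime import date


def article_jsonld(headline: str, url: str, published: date, modified: date) -> str:
    """Build schema.org Article JSON-LD with datePublished and dateModified."""
    payload = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "url": url,
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),
    }
    return json.dumps(payload, indent=2)


if __name__ == "__main__":
    # Placeholder values; embed the output in a
    # <script type="application/ld+json"> tag on the target page.
    print(article_jsonld(
        "How to choose a project management tool",
        "https://acme.example/guides/choosing-a-tool",
        published=date(2025, 6, 1),
        modified=date(2026, 1, 15),
    ))
```

Only bump `dateModified` when the page content has actually changed, so the markup stays consistent with what a crawler sees.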

| Feature | Topify | Profound | Writesonic GEO | Otterly AI |
|---|---|---|---|---|
| Supported engines | ChatGPT, Gemini, Claude, Perplexity, DeepSeek, Doubao, Qwen | 10+ engines including Grok, Meta AI | ChatGPT, Perplexity, Gemini, Claude, AIO | ChatGPT, Perplexity, AI Overviews, Copilot |
| Citation source analysis | URL-level | Partial, high-level | Basic tracking | Basic alerts |
| Sentiment analysis | Proprietary NLP scoring | Deep sentiment + compliance | Basic content sentiment | Standard keyword sentiment |
| Optimization integration | One-click CMS publishing | Manual recommendation reports | In-platform content suggestions | Structured data guidelines |
| Workflow automation | Autonomous agent execution | Static dashboard reports | Semi-automated editing | Alert-triggered emails |

What AI Visibility Analytics Costs in 2026

The market breaks into three tiers based on tracking depth and automation.

Entry tier ($20–$99/month): Platforms like Otterly AI (starting at $29/month) or AI Peekaboo ($50/month) support basic mention alerts across core models. They work for startups establishing a baseline but lack URL-level citation parsing, regional model tracking, and API integrations.

Mid-market tier ($99–$300/month): This is where most growing brands and agencies land. Topify’s Basic and Pro plans sit in this range while delivering enterprise-grade capabilities:

| Plan | Price | Prompts | AI Answer Analyses | Projects | Seats |
|---|---|---|---|---|---|
| Basic | $99/mo | 100 | 9,000 | 4 | 4 |
| Pro | $199/mo | 250 | 22,500 | 8 | 10 |
| Enterprise | From $499/mo | Custom | Custom | Unlimited | Custom |

For current details, check Topify’s pricing page.

Premium tier ($300–$700+/month): Platforms like Profound (from $499/month) target Fortune 500 companies with SOC 2 Type II compliance, HIPAA readiness, and advanced brand safety alerts. Custom platforms like seoClarity ArcAI can reach $3,000/month for high-volume API integrations.

The ROI math favors tracking. Standard organic search traffic converts at roughly 2.8%, whereas pre-qualified users arriving via generative citations convert at 14.2%. Marketing teams that don’t track these patterns risk cutting budgets for high-value informational content because GA4 misclassifies this converting traffic as anonymous “Direct” sessions.
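To put those two conversion rates side by side, here is the arithmetic as a short Python sketch. The rates are the ones quoted above; the 1,000-session volume is an arbitrary illustration, not a benchmark.

```python
ORGANIC_CVR = 0.028       # standard organic search, as quoted above
AI_CITATION_CVR = 0.142   # visitors arriving via generative citations, as quoted above


def expected_conversions(sessions: int, cvr: float) -> float:
    """Expected conversions for a given session count and conversion rate."""
    return sessions * cvr


# Relative lift per session: 0.142 / 0.028, roughly 5x.
lift = AI_CITATION_CVR / ORGANIC_CVR

if __name__ == "__main__":
    sessions = 1_000  # illustrative volume
    print(f"Organic: {expected_conversions(sessions, ORGANIC_CVR):.0f} conversions")
    print(f"AI-cited: {expected_conversions(sessions, AI_CITATION_CVR):.0f} conversions")
    print(f"Lift: {lift:.2f}x")
```

At those rates, 1,000 AI-referred sessions are worth about as many conversions as 5,000 organic ones, which is why misfiling them under “Direct” distorts budget decisions.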

Conclusion

The analytics infrastructure most marketing teams rely on was built for a search experience that’s disappearing. AI visibility analytics isn’t a niche add-on. It’s the measurement layer that connects your brand to where discovery is actually happening: inside synthesized AI responses across ChatGPT, Perplexity, Gemini, and beyond.

The brands that move first will have a compounding advantage. They’ll know which prompts matter, which sources get cited, where competitors are winning, and what to fix. The brands that wait will keep watching stable dashboards while their AI visibility erodes.

Start by auditing your brand across one AI platform. Then scale the tracking. Get started with Topify to turn that data into action.

FAQ

Q: What is AI visibility analytics?

A: AI visibility analytics is the systematic process of tracking, measuring, and analyzing how your brand appears in AI-generated responses across platforms like ChatGPT, Perplexity, and Gemini. Unlike traditional SEO analytics that focuses on keyword rankings and backlink profiles, it measures extraction probabilities, contextual sentiment, citation frequency, and competitor co-occurrences within LLM outputs.

Q: How does AI visibility analytics work?

A: It works through programmatic API simulations that run natural language queries across multiple AI platforms and search configurations to capture real-time model outputs. The analytics platform then uses NLP to extract brand mentions, score contextual sentiment, map citation sources, and track positioning relative to competitors.

Q: What are the best tools for AI visibility analytics?

A: Topify offers integrated multi-engine tracking with automated content optimization. Profound focuses on enterprise-grade compliance and risk monitoring. Writesonic GEO serves content-focused teams, and Otterly AI provides cost-effective baseline tracking. The right choice depends on your tracking scale, budget, and whether you need automated execution capabilities.

Q: How do you measure AI visibility?

A: By tracking seven core dimensions: visibility presence, NLP sentiment, positioning order, prompt search volume, mention density, source citation attribution, and Conversion Visibility Rate (CVR). These should be paired with custom GA4 channel groups using regex filters to isolate generative referral traffic from anonymous direct sessions.
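As a starting point for that regex filter, the sketch below classifies referrer URLs in Python. The hostname list is illustrative and not exhaustive, the channel labels are made up for this example, and the pattern would need adapting before use in a GA4 custom channel group condition.

```python
import re
from urllib.parse import urlparse

# Illustrative, non-exhaustive list of AI assistant hostnames.
AI_REFERRER_PATTERN = re.compile(
    r"(^|\.)(chatgpt\.com|chat\.openai\.com|perplexity\.ai|"
    r"gemini\.google\.com|copilot\.microsoft\.com|claude\.ai)$"
)


def classify_referrer(referrer: str) -> str:
    """Label a session by its referrer URL (hypothetical channel names)."""
    if not referrer:
        return "Direct"
    host = urlparse(referrer).netloc.lower()
    if AI_REFERRER_PATTERN.search(host):
        return "AI referral"
    return "Other referral"


if __name__ == "__main__":
    for ref in ["https://www.perplexity.ai/search?q=crm",
                "https://chatgpt.com/",
                "https://www.google.com/",
                ""]:
        print(repr(ref), "->", classify_referrer(ref))
```

Sessions where the AI app strips the referrer entirely will still land in “Direct”, so this kind of filter recovers only part of the misclassified traffic.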