Most brands now know they need to show up in AI answers. So they run a GEO (generative engine optimization) audit, fix their schema markup, tighten their headings, and call it done.

That’s half the work. And it’s the easier half.

A GEO Score tells you whether your content is readable to an AI. An AI Visibility Score tells you whether AI actually recommends you. Those are two very different things, and confusing them is one of the most expensive mistakes you can make right now.

Your GEO Score Is a Readiness Snapshot, Not a Performance Report

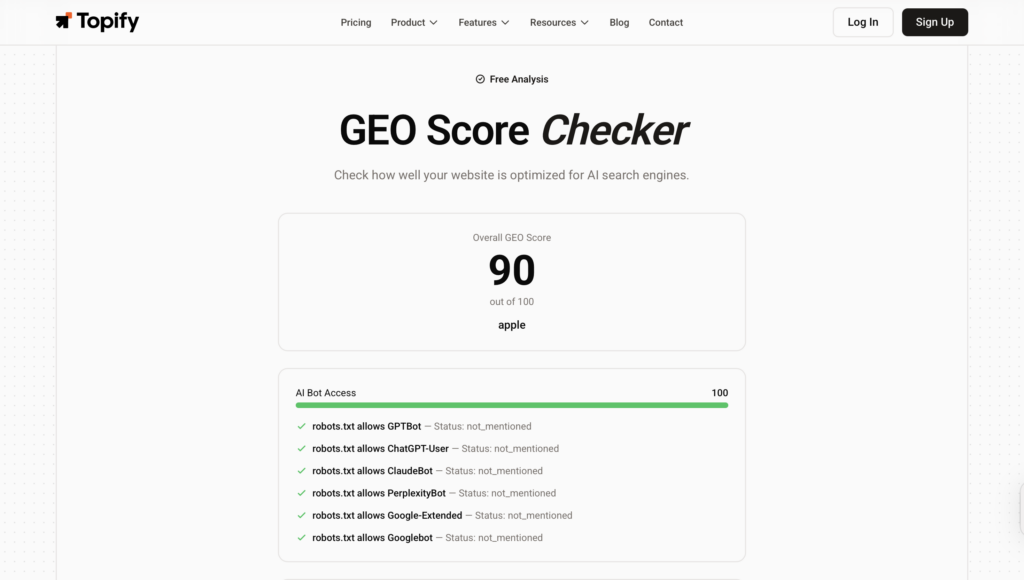

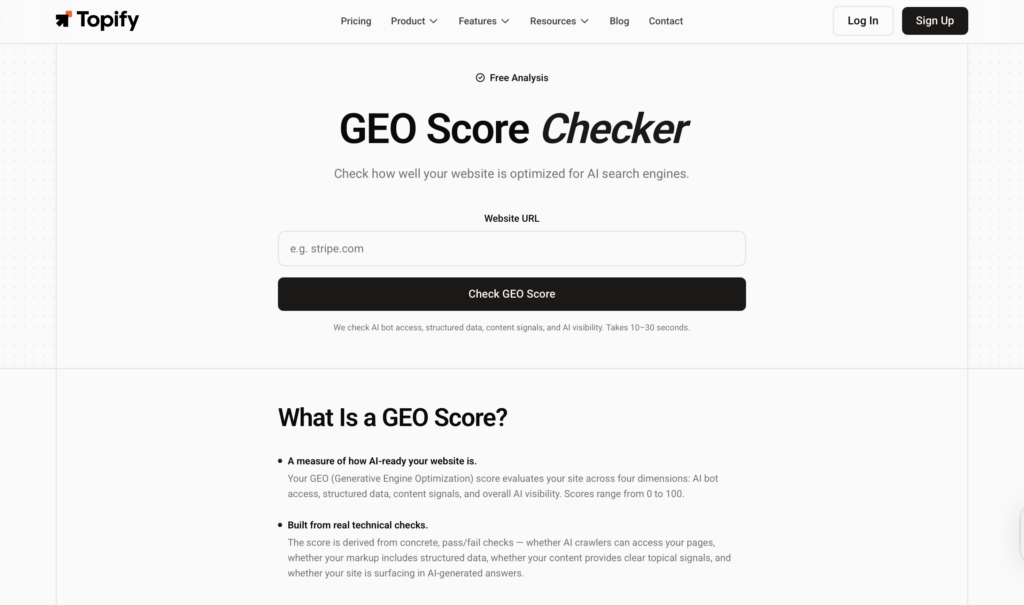

A GEO Score measures how well your content is structured for AI retrieval. Think of it as a technical audit: can a model’s crawler access your site, parse your headings, extract discrete fact units, and trust what it finds?

The evaluation typically covers five areas: crawler accessibility, semantic structure, entity declaration via Schema markup, factual density, and metadata freshness. Each one maps to a specific stage of how Retrieval-Augmented Generation (RAG) systems process a page before deciding whether to cite it.
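Crawler accessibility is the easiest of those five areas to spot-check yourself. Here’s a minimal sketch using Python’s standard-library robots.txt parser. The robots.txt content, the example URL, and the crawler names in `AI_CRAWLERS` are assumptions for illustration; confirm the user-agent tokens against each vendor’s current documentation before relying on them.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; swap in your own site's file.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

# Assumed AI crawler user-agent tokens; verify with each vendor's docs.
AI_CRAWLERS = ["GPTBot", "PerplexityBot"]


def crawler_access(robots_txt: str, url: str, agents: list[str]) -> dict[str, bool]:
    """Return, per crawler, whether robots.txt permits fetching the URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


access = crawler_access(ROBOTS_TXT, "https://example.com/pricing", AI_CRAWLERS)
for agent, allowed in access.items():
    print(f"{agent}: {'allowed' if allowed else 'blocked'}")
```

In this made-up file, GPTBot is blocked site-wide while any crawler without its own rule falls through to the wildcard entry. That is one way a site can end up unreadable to a single platform without anyone noticing.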

This is genuinely useful. Content that fails these checks is unlikely to get picked up, no matter how authoritative the brand is. The foundational Princeton GEO study found that adding verifiable statistics to content can lift citation rates by 31% to 37%, and that expert quotations can push the figure higher.

But here’s the thing: passing these checks doesn’t mean you get cited. It means you’re eligible to be cited.

You can check where your content stands right now with Topify’s GEO Score Checker, which runs 22 technical checks across content quality, AI readiness, technical structure, and authority signals.

What AI Visibility Score Actually Captures

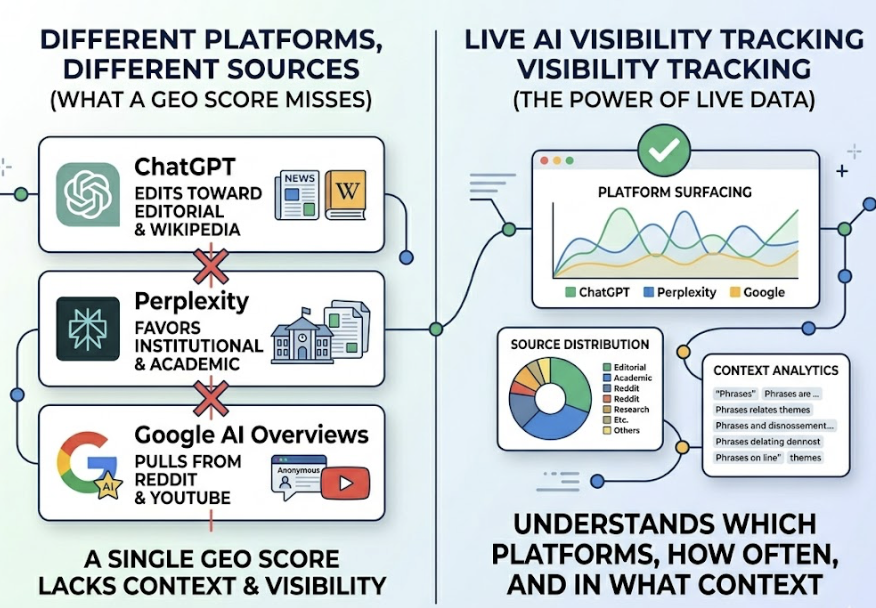

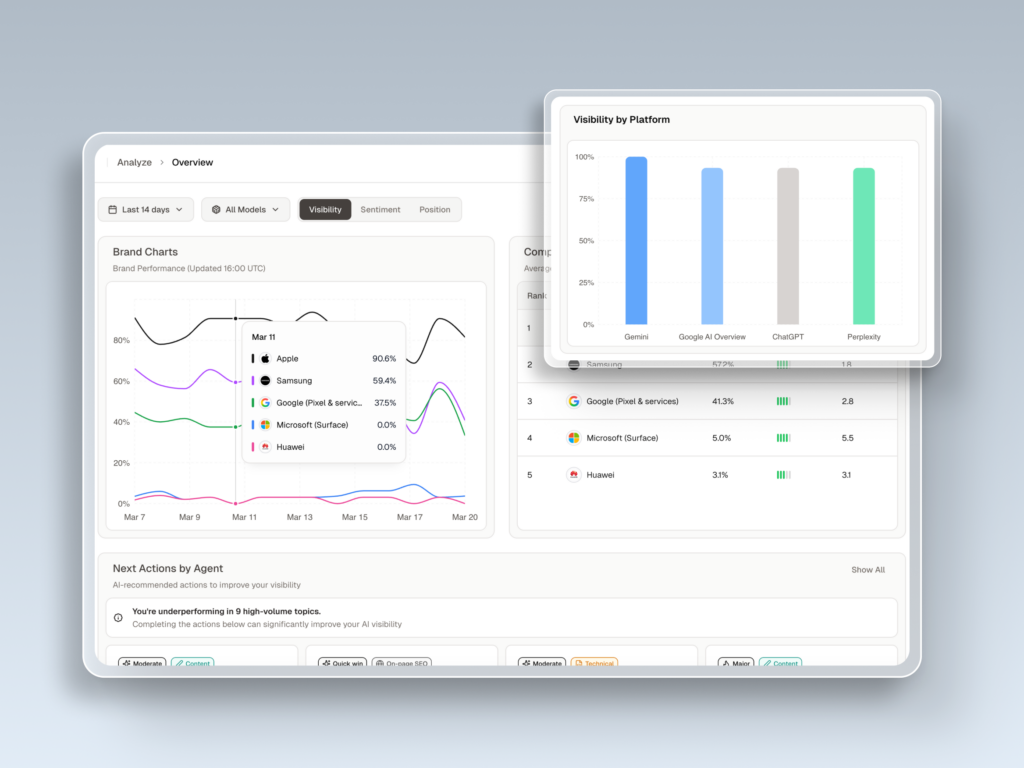

AI Visibility Score measures something else entirely: how often your brand actually appears in AI-generated answers across real platforms like ChatGPT, Gemini, and Perplexity.

It’s expressed as a share of citations for a defined set of category prompts. And the numbers are stark. Industry tracking data from 2026 shows the median brand sits at roughly 0.3% AI Visibility. Top performers in competitive categories reach 12% to 30%. That gap isn’t a rounding error; it’s the difference between being part of the AI conversation and being invisible to it.

AI Visibility also captures dimensions a GEO Score can’t touch: where you rank within an answer (first mention vs. buried alternative), how AI describes your brand over time, and which specific user intents cause you to appear or disappear.
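To make the share-of-citations idea concrete, here’s a rough sketch of how it could be computed from logged answers, along with a simple first-mention rate. The `Answer` record, the brand names, and the prompts are all hypothetical, and real trackers (Topify’s included) may define these metrics differently.

```python
from dataclasses import dataclass


@dataclass
class Answer:
    platform: str
    prompt: str
    brands_cited: list[str]  # brands in the order the answer mentions them


def citation_share(answers: list[Answer], brand: str) -> float:
    """Brand citations divided by all citations across the prompt set."""
    total = sum(len(a.brands_cited) for a in answers)
    ours = sum(a.brands_cited.count(brand) for a in answers)
    return ours / total if total else 0.0


def first_mention_rate(answers: list[Answer], brand: str) -> float:
    """Of the answers that cite the brand, the fraction that cite it first."""
    appearances = [a for a in answers if brand in a.brands_cited]
    if not appearances:
        return 0.0
    firsts = sum(1 for a in appearances if a.brands_cited[0] == brand)
    return firsts / len(appearances)


# Hypothetical logged answers for a small set of category prompts.
answers = [
    Answer("chatgpt", "best crm for startups", ["Acme", "Globex", "Initech"]),
    Answer("gemini", "best crm for startups", ["Globex", "Acme"]),
    Answer("perplexity", "best crm for startups", ["Initech", "Globex"]),
    Answer("chatgpt", "crm with best reporting", ["Globex"]),
]

share = citation_share(answers, "Acme")
first_rate = first_mention_rate(answers, "Acme")
print(f"Citation share: {share:.1%}")          # 25.0%
print(f"First-mention rate: {first_rate:.1%}")  # 50.0%
```

Filtering `answers` by `platform` or `prompt` before calling either function gives a per-platform or per-intent view of the same numbers.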

The Gap Most Teams Don’t See Coming

A 2026 analysis of 1,528 company reports found that the correlation between technical GEO Score and real-world AI Visibility was just 0.080. The correlation between brand authority (presence across high-credibility third-party sources) and AI Visibility was 0.386, nearly five times as strong.

That’s not a small gap. That’s a different variable entirely.

The reason comes down to how LLMs actually select sources. They don’t just retrieve the cleanest content; they cross-reference claims across multiple independent sources before committing to a citation. If your brand makes a strong claim on your own domain but that claim isn’t corroborated by analyst reports, review platforms, or editorial coverage, the model often discards it.

In that same study, brands with high authority and a mediocre GEO Score averaged 0.651 on the study’s AI Visibility measure. Brands with a high GEO Score but low authority averaged 0.548. Technical readiness without off-page trust consistently underperforms.

Why the Slot Competition Makes This Urgent

Traditional search gives you ten blue links. AI answers give you two to five citation slots, sometimes fewer.

That scarcity changes the stakes. Ranking first on Google for a query doesn’t protect you if the LLM synthesizing that same query picks three other sources instead. You can be a market leader in traditional search and functionally invisible in AI answers simultaneously.

This isn’t a hypothetical edge case. It’s what the data shows for most brands right now.

The other complication: different AI platforms cite differently. ChatGPT skews toward editorial sources and Wikipedia. Perplexity heavily favors institutional and academic sources. Google AI Overviews pulls heavily from Reddit and YouTube. A GEO Score doesn’t tell you which platforms are surfacing your brand, how often, or in what context. Only live AI Visibility tracking does.

How to Use Both Together

The two metrics work as a sequence, not a choice.

Start with your GEO Score. It tells you whether the technical foundation is in place: whether bots can crawl your pages, whether your content is chunked in a way that RAG systems can extract, whether your schema markup helps the model understand what your brand actually does. Fixing GEO Score gaps is table stakes; it’s what gets you into the retrieval pool.
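The schema piece of that foundation can be sketched in a few lines. This is a minimal example that builds a schema.org `Organization` declaration as JSON-LD; the brand name, URLs, and description are placeholders, and which properties actually help a given model is an open question rather than something this sketch settles.

```python
import json

# Placeholder brand details; replace every value with your own.
organization = {
    "@context": "https://schema.org",
    "@type": "Organization",
    "name": "Example Brand",
    "url": "https://example.com",
    "description": "Example Brand makes invoicing software for freelancers.",
    # Profiles on third-party sites that refer to the same entity.
    "sameAs": [
        "https://www.linkedin.com/company/example-brand",
        "https://en.wikipedia.org/wiki/Example_Brand",
    ],
}

json_ld = json.dumps(organization, indent=2)

# JSON-LD is embedded in a page inside a script tag of this type.
script_tag = f'<script type="application/ld+json">\n{json_ld}\n</script>'
print(script_tag)
```

The `description` field is the plain statement of what the brand does, and `sameAs` ties the on-site entity to its third-party profiles.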

Then track your AI Visibility. This tells you what’s actually happening once you’re in that pool. Are you being selected? In which contexts? For which intents? Against which competitors? These questions can’t be answered by a technical audit.

| | GEO Score | AI Visibility Score |
|---|---|---|
| What it measures | Content readiness for AI retrieval | Actual citation frequency in AI answers |
| Type of metric | Static snapshot | Dynamic, ongoing |
| Update frequency | On-demand audit | Continuous tracking |
| Primary action | Fix technical and content gaps | Optimize off-page authority and citation share |
| Tells you | Whether AI can cite you | Whether AI does cite you |

Use Topify’s GEO Score Checker to run the technical diagnostic. Then use Topify’s AI Visibility Checker to track how your brand is actually showing up across ChatGPT, Gemini, Perplexity, and other major platforms in real time.

The path from readiness to actual visibility becomes a lot clearer when you can see both numbers side by side.

Conclusion

A good GEO Score and strong AI Visibility aren’t the same thing, but you need both. The GEO Score tells you whether AI can pick up your content. The AI Visibility Score tells you whether it does. Most brands invest in readiness and stop there, which helps explain why the median brand’s AI Visibility sits at just 0.3% while top performers reach 12% to 30%.

Start with the technical foundation. Then track the real-world results. The gap between those two numbers is where your actual opportunity lives.

FAQ

Is GEO Score the same as AI Visibility?

No. GEO Score measures whether your content is technically structured for AI retrieval. AI Visibility Score measures whether AI systems actually cite your brand in their answers. A brand can score well on the former and still have near-zero performance on the latter.

How often should I check each metric?

Run a GEO audit when you publish or significantly update content, and after major site changes. AI Visibility needs continuous tracking because AI citation behavior shifts as models update, competitors publish new content, and platform sourcing patterns evolve.

What’s a realistic AI Visibility benchmark to aim for?

The median brand sits around 0.3%. Reaching 2% to 10% puts you in the category presence tier; you’re appearing in comparison contexts and long-tail queries. Top-tier performers consistently hit 12% and above. The right benchmark depends on your vertical and competitive set.
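As a rough sketch, those bands can be turned into a lookup for reporting. The 2% to 10% and 12%-and-above thresholds come from the figures above; the labels for the ranges the benchmarks leave undefined (below 2%, and between 10% and 12%) are assumptions added here only so the function always returns something.

```python
def benchmark_tier(visibility_pct: float) -> str:
    """Map an AI Visibility percentage to a benchmark band."""
    if visibility_pct >= 12:
        return "top tier"
    if 2 <= visibility_pct <= 10:
        return "category presence"
    if visibility_pct < 2:
        # Assumed label: the benchmarks only say the median sits near 0.3%.
        return "below category presence"
    # Assumed label: the benchmarks do not name the 10% to 12% range.
    return "between tiers"


for pct in (0.3, 5.0, 11.0, 15.0):
    print(f"{pct}% -> {benchmark_tier(pct)}")
```

Treat the output as a conversation starter, not a verdict, since the right target still depends on your vertical and competitive set.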

Can I improve AI Visibility without improving my GEO Score first?

Partially. Off-page authority signals (analyst coverage, editorial mentions, review platform presence) drive visibility independently of on-page technical quality. But brands with both tend to outperform brands that focus on only one. Technical readiness removes friction; authority creates preference.

Why do different AI platforms show different visibility results?

Each platform has distinct sourcing behavior. ChatGPT skews toward editorial sources and Wikipedia; Perplexity favors institutional and academic sources; Google AI Overviews pulls heavily from Reddit and YouTube. A single GEO audit can’t account for these platform-specific citation patterns. Cross-platform tracking is the only way to see where you actually stand.