March 2025: seven products listed in a brand-new G2 category called Answer Engine Optimization. January 2026: more than 150. That’s a 2,000%+ expansion in roughly ten months, faster than almost any software category G2 has tracked in its AI parent group.

The vendor side of this story is easy to explain. The buyer side is why it matters to your brand.

7 Products in March 2025. 150+ G2 AEO Tools by Early 2026.

G2’s AEO category launched quietly. At the time, it met the platform’s minimum listing threshold — six products with ten or more reviews each, plus at least 150 total reviews across the category — but barely. A handful of early tools were targeting a problem most marketing teams hadn’t put on their annual planning radar.
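To make the threshold concrete, here is a minimal Python sketch of the rule as this article describes it. The function name, parameters, and review counts are hypothetical illustrations, not G2’s API or data, and the sketch assumes "six products" means at least six and that the 150-review total counts every product in the category.

```python
def meets_listing_threshold(review_counts, min_products=6,
                            min_reviews_each=10, min_total_reviews=150):
    """Check the category threshold as described in the article.

    review_counts: one review count per product in the category.
    Assumption: the total-review requirement sums over all products,
    including those below the per-product minimum.
    """
    qualifying = [n for n in review_counts if n >= min_reviews_each]
    enough_products = len(qualifying) >= min_products
    enough_reviews = sum(review_counts) >= min_total_reviews
    return enough_products and enough_reviews


# Hypothetical review counts for a seven-product category.
barely_passing = [40, 35, 30, 20, 12, 10, 5]   # six products at 10+, 152 total
just_short = [40, 35, 30, 20, 12, 9, 5]        # only five products at 10+

print(meets_listing_threshold(barely_passing))  # True
print(meets_listing_threshold(just_short))      # False
```

A category can clear the total-review bar and still fail if too few individual products have ten or more reviews, which is why both conditions are checked separately.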

Ten months later, the category had over 150 products. For context: most enterprise software categories need three to five years to reach that kind of density on G2.
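If you want to check the headline growth figure yourself, the arithmetic is short. The sketch below uses the two product counts cited in this article; since the later figure is "150+", treat the result as a lower bound. The helper name is just an illustration.

```python
def percent_growth(start, end):
    """Percentage increase from start to end."""
    return (end - start) / start * 100


# Product counts cited in the article: 7 at launch, 150+ ten months later.
growth = percent_growth(7, 150)
print(f"{growth:.0f}%")  # 2043%
```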

That growth carried enough momentum to generate a G2 Grid report by Winter 2026, followed by a Spring 2026 Grid shortly after. In G2’s system, a Grid report is a credibility signal — it means the category has passed the threshold from experimental to benchmarkable.

The page view data reinforces it. According to G2 Data Solutions, the AEO category’s page views ranked first among all AI parent categories on G2 in Q4 2025, climbing 62% compared to the previous quarter. That means buyer interest isn’t slowing after the initial spike. It’s still accelerating.

The Real Signal Isn’t Vendor Count — It’s How Buyers Changed

Software categories don’t grow 2,000% because vendors decided to build new products. They grow because buyers started spending money.

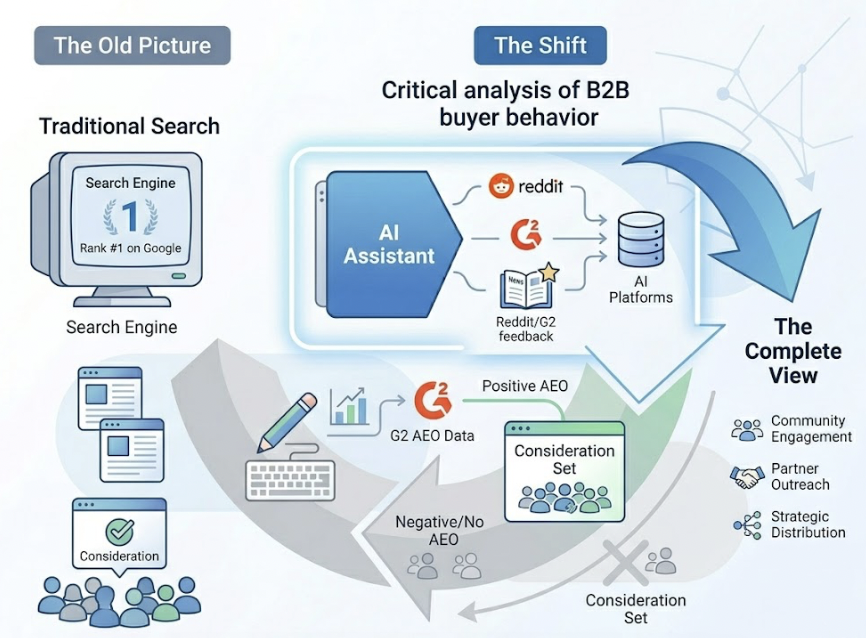

According to G2’s research, 50% of B2B software buyers now start their purchasing process with an AI chatbot rather than a traditional Google search. Among those, 74% prefer ChatGPT as their primary research tool.

That behavior shift is the underlying driver behind every number in the G2 AEO category. When half your potential buyers are opening an AI assistant instead of a search engine, your ranking on Google’s page one stops being the whole picture. What the AI says about you — or doesn’t say — determines whether you enter the consideration set at all.

Emily Greathouse, G2’s Director of Market Research, framed the stakes precisely: the modern buyer’s decision journey is being compressed by AI, and winning the AI’s answer matters more than winning the click.

That’s not a prediction. It’s already reflected in how buyers report their research behavior.

AEO Went From “Watch List” to “Budget Line” in Under a Year

Twelve months ago, most marketing teams listed AEO as something to observe. Now it’s appearing in Q1 planning decks as a distinct budget category.

The shift happened because the data became concrete enough to justify spend. B2B buyers consume an average of 13.4 pieces of content before they contact a sales rep. Two-thirds of that decision journey is self-directed, with AI assistants increasingly acting as the primary research layer. The brand that appears consistently and accurately in AI answers has already shaped the buyer’s shortlist before a single discovery call takes place.

One benchmark gaining traction in enterprise marketing circles is the “15% rule” — allocating at least 15% of total search budget to AEO. Research suggests that investments below this threshold typically don’t generate sustained citation growth. Teams that have crossed it report a 38% reduction in cost per lead and a 2.4x increase in meeting bookings, according to Salesforce’s 2026 State of Marketing report. In a year when overall marketing budgets tightened, those efficiency numbers drove AI tool spend up nearly threefold in 18 months.
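As a rough illustration of how the 15% floor translates into a planning number, here is a small Python sketch. The budget figures and function names are hypothetical, and the 15% default simply mirrors the benchmark described above rather than any guaranteed threshold.

```python
def minimum_aeo_budget(total_search_budget, floor_share=0.15):
    """Smallest AEO allocation that satisfies the floor (15% by default)."""
    return total_search_budget * floor_share


def meets_floor(aeo_budget, total_search_budget, floor_share=0.15):
    """True if the planned AEO spend is at or above the floor."""
    return aeo_budget >= minimum_aeo_budget(total_search_budget, floor_share)


search_budget = 200_000  # hypothetical annual search budget, in dollars

print(f"Minimum AEO allocation: ${minimum_aeo_budget(search_budget):,.0f}")
print(meets_floor(20_000, search_budget))  # False: 10% of search budget
print(meets_floor(35_000, search_budget))  # True: 17.5% of search budget
```

Keeping the share as a parameter makes it easy to test other floors if your own data suggests a different cutoff.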

Budget decisions are lagging indicators. The fact that AEO is appearing as a budget line now tells you the underlying behavior shift happened earlier.

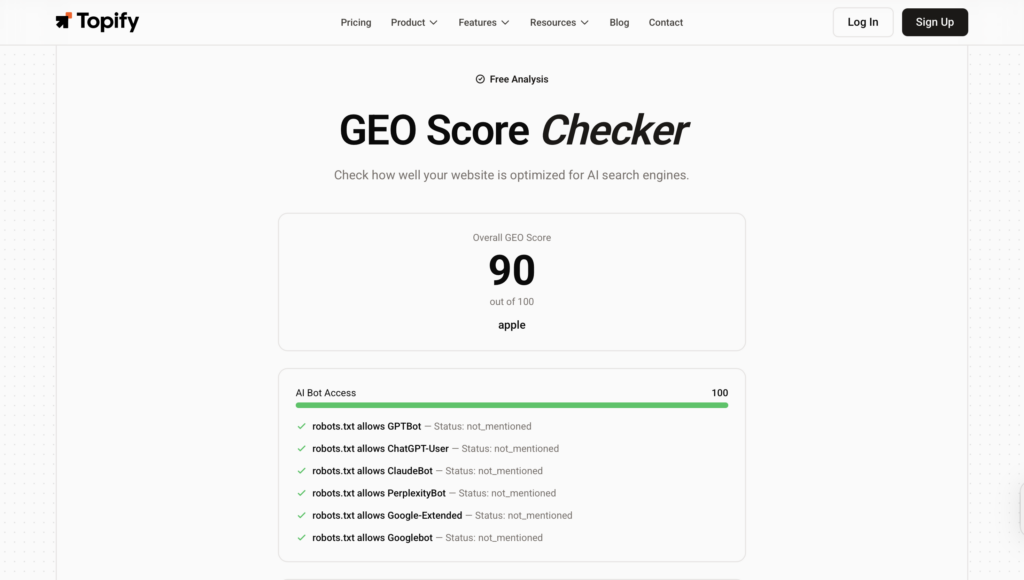

Before you benchmark your spend, it’s worth establishing where your brand currently stands in AI answers. Topify’s GEO Score Checker gives you a baseline read across major AI platforms in minutes — useful data before any planning conversation.

What the G2 Grid Reveals About the AEO Tool Market Right Now

Most buyers use the G2 Grid to find “who’s established” in a category. That’s useful. But in a category this young, the Grid reveals something more specific: which tools close the loop between data and action, and which ones stop at reporting.

The AEO category has split into two product types. First: monitoring tools that surface AI visibility metrics but leave the fix to your team. Second: full-stack platforms that connect the insight to the execution by identifying why your brand is missing from AI answers and deploying content to address it.

That distinction doesn’t show up in feature lists. It shows up in satisfaction scores and user retention. On the G2 Spring 2026 AEO Grid, the platforms with the highest satisfaction ratings are consistently the ones in the second category.

G2 also functions as a data source for the AI systems themselves. The platform holds roughly 22.4% share of voice across AI-generated software recommendations, and on Perplexity specifically, G2 accounts for 75% of citations from review-type platforms. AI systems treat G2 profiles as structured, machine-readable evidence — not just user testimonials. Reviews that include specific use cases, measurable outcomes, and technical detail carry more citation weight than general sentiment.

That means a brand’s G2 presence isn’t just a social proof asset anymore. It’s part of the AI visibility stack.

Topify on G2 Spring 2026: What the Data Backs Up

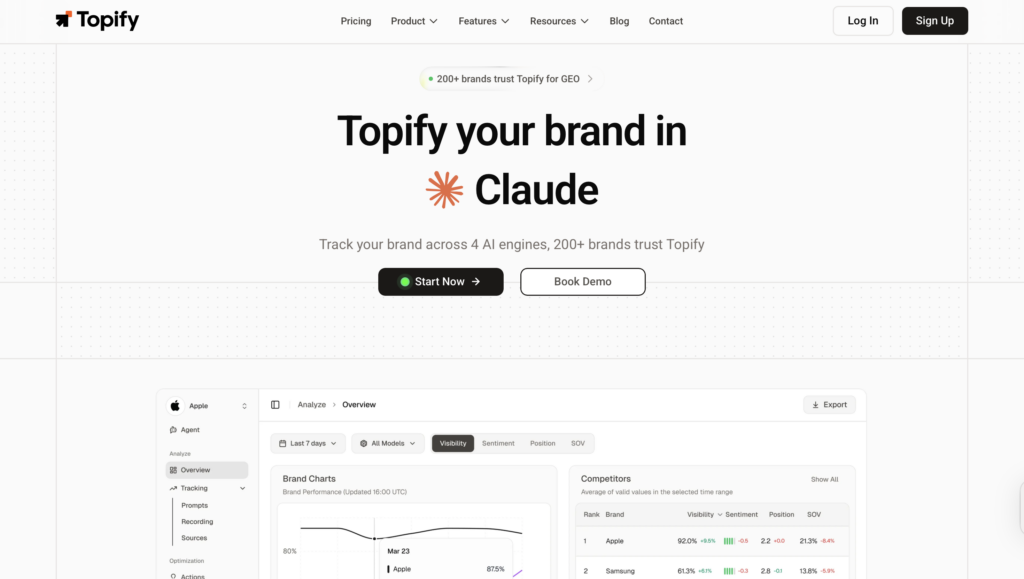

Topify appears in the G2 Spring 2026 AEO Grid as one of the category’s emerging benchmarks. Built by researchers with LLM development and enterprise SEO backgrounds, it’s positioned among the platforms that combine measurement with execution rather than treating them as separate workflows.

The coverage layer is broader than most tools in the category. Topify tracks brand visibility across ChatGPT, Gemini, Perplexity, Claude, and other major AI platforms — which matters because your buyers aren’t all using the same AI assistant, and gaps in coverage become blind spots in your data.

The analytics framework tracks seven KPIs: visibility, sentiment, position, volume, mentions, intent, and CVR (Conversion Visibility Rate). That last metric estimates the probability that an AI answer is actually directing a buyer toward your brand — which is the number most marketing dashboards are currently missing. Visibility tells you if you’re being mentioned. CVR starts to tell you whether it’s converting.

Topify’s source analysis feature works backward from the answers themselves: it reverse-engineers the exact URLs that AI platforms cite when recommending brands in your category. For content teams, that data directly answers the question of what to build next. You’re not guessing which content fills the citation gap; you’re seeing which domains are being pulled and why yours isn’t among them.

You can review Topify’s verified G2 profile and user ratings on G2’s Topify page, or run a 7-day free trial to pull your own brand’s AI visibility baseline before your next planning cycle.

How to Pick a G2 AEO Tool Before the Category Gets Noisier

With 150+ products in the category and new entrants arriving monthly, selection pressure is real. Most tools share surface-level feature parity — dashboards, visibility scores, platform mentions. The differences that actually matter show up in three areas.

Platform coverage. Single-platform tools are common and inexpensive. But if your buyers are distributed across ChatGPT, Gemini, and Perplexity, a tool that only monitors one of them isn’t showing you a complete picture. Coverage gaps become strategy gaps.

Metric depth. Visibility percentage is a floor, not a ceiling. Look for tools that track position relative to competitors, sentiment accuracy over time, and source citation analysis. These are the signals that connect AI mentions to actual pipeline behavior.

Execution capability. Data without a clear path to action is a reporting exercise. The platforms with the strongest G2 satisfaction scores in the AEO category are consistently the ones that help you act on what you find — not just document it.
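One way to keep a shortlist honest is to score every candidate on the same three criteria. The Python sketch below is only an illustration: the tool profiles, platform list, metric names, and equal-weight scoring are assumptions made for the example, not G2 data or any vendor’s actual feature set.

```python
from dataclasses import dataclass

# Assumed baseline: the three platforms named in this article.
REQUIRED_PLATFORMS = {"ChatGPT", "Gemini", "Perplexity"}
# Assumed depth signals beyond a raw visibility percentage.
DEPTH_METRICS = {"competitive position", "sentiment", "source citations"}


@dataclass
class ToolProfile:
    name: str
    platforms_tracked: set
    metrics: set
    can_execute: bool  # does the tool help act on findings, not just report?


def evaluate(tool):
    """Score a tool from 0.0 to 1.0 on each of the three criteria."""
    return {
        "platform_coverage": len(tool.platforms_tracked & REQUIRED_PLATFORMS)
        / len(REQUIRED_PLATFORMS),
        "metric_depth": len(tool.metrics & DEPTH_METRICS) / len(DEPTH_METRICS),
        "execution": 1.0 if tool.can_execute else 0.0,
    }


# Hypothetical candidates for illustration only.
monitor_only = ToolProfile("Tool A", {"ChatGPT"}, {"sentiment"}, False)
full_stack = ToolProfile(
    "Tool B",
    {"ChatGPT", "Gemini", "Perplexity", "Claude"},
    {"competitive position", "sentiment", "source citations"},
    True,
)

for tool in (monitor_only, full_stack):
    print(tool.name, evaluate(tool))
```

Scoring each criterion separately, rather than collapsing them into one number, makes it obvious when a tool is strong on dashboards but has no path to action.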

Here’s how the two main tool types stack up across these dimensions:

| Capability | Monitoring-only tools | Full-stack AEO platforms |
|---|---|---|
| Multi-platform AI tracking | Often single-platform | ChatGPT, Gemini, Perplexity + others |
| Competitor benchmarking | Limited | Real-time, multi-platform |
| Source citation analysis | Rarely included | Yes |
| Content gap identification | No | Yes |
| Agent-based execution | No | Yes (e.g. Topify) |
| Entry price | $49–$79/mo | $99–$499/mo |

One practical filter: use the High Performer quadrant on the G2 Spring 2026 Grid as your starting point, not just the Leaders. In a category this young, satisfaction scores are a more reliable signal than market presence scores. High Performers have strong user validation but may not have scaled distribution yet — for a newer category, that’s often where the better product lives.

Conclusion

The 2,000% growth in G2’s AEO category isn’t a forecast about where marketing is heading. It’s a data point about where buyers already are.

When half of B2B software buyers start their research with an AI chatbot, your position in those AI answers directly affects whether you make the initial shortlist. The G2 Spring 2026 AEO Grid gives you a user-review-based starting point for evaluating which platforms are worth testing. Use it alongside a current baseline of your own AI visibility. You need both to make an informed decision.

The brands that ran this audit twelve months ago are already ahead. The window before it becomes table stakes is closing.

FAQ

What is the G2 AEO tool category?

The Answer Engine Optimization (AEO) category on G2 groups tools designed to help brands improve their visibility in AI-generated answers across platforms like ChatGPT, Gemini, and Perplexity. The category launched in March 2025 with 7 products and grew to 150+ by early 2026, making it one of the fastest-growing software categories on the platform.

How is AEO different from SEO?

SEO optimizes for search engine rankings — getting your pages to rank in Google’s results. AEO optimizes for AI-generated answers, which means ensuring that large language models cite, reference, and recommend your brand when buyers ask research questions. The mechanics differ: AI systems prioritize structured content, semantic consistency across third-party sources, and citation density over traditional backlink signals and keyword density.

What should I look for when evaluating a G2 AEO tool?

Prioritize platform coverage (does it track ChatGPT, Gemini, and Perplexity, or just one?), metric depth (visibility percentage is only a starting point, so look for sentiment, competitive position, and source citation analysis), and execution capability (can it help you act on the data, or does it only report it?). On the G2 Spring 2026 Grid, start with the High Performer quadrant for the strongest satisfaction signals in an early-stage category.

Why is G2 specifically important for AEO strategy?

G2 holds approximately 22.4% share of voice in AI-generated software recommendations and accounts for 75% of review-platform citations on Perplexity. AI systems treat structured G2 profiles as credible, machine-readable evidence. That means reviews containing specific use cases and measurable outcomes carry citation weight in AI answers — making G2 presence a direct input to AEO performance, not just a social proof channel.

Is Topify listed on G2?

Yes. Topify appears in the G2 Spring 2026 AEO Grid with verified user reviews. You can view its profile and satisfaction ratings on G2’s Topify page.