Everyone on your team has heard the phrase “AI Agent” at least once in the last six months. Most people nod along. Few can actually explain what makes an agent different from the ChatGPT tab they already have open.

That confusion isn’t just a vocabulary problem. It’s a strategic one. Companies are reorganizing workflows, reallocating budgets, and making hiring decisions based on what AI agents can supposedly do, while the underlying technology remains poorly understood at the team level. The gap between assumption and reality is wide enough to stall real adoption.

Most People Think AI Agents Are Just Smarter Chatbots. Here’s the Actual Difference.

The standard chatbot operates on a simple logic: one input, one output, and no memory beyond the current conversation. Every new conversation starts from zero. You write the prompt, it writes the response. The system doesn’t carry context across sessions, doesn’t track goals, and doesn’t do anything you didn’t explicitly ask for.

An AI agent works differently. You give it a goal, not a prompt. The agent plans the steps, calls external tools, checks its own work, adjusts when something goes wrong, and keeps going until the task is done.

Here’s a concrete example. Ask ChatGPT to “schedule next week’s meetings” and it’ll draft a polite email you can send yourself. Give the same instruction to an AI agent and it reads your calendar, checks attendee availability via API, sends the invites, and updates the reminders when someone responds. Same words. Completely different result.

That’s not a performance upgrade. That’s a different category of tool.
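To make the contrast concrete: a chatbot is a single call (prompt in, text out), while the agent behavior described above is a loop. What follows is a minimal sketch, not any product’s implementation. It assumes a hard-coded `plan_steps` function standing in for the LLM planner, plus hypothetical tools (`read_calendar`, `check_availability`, `send_invites`) in place of real calendar and email APIs. One tool fails on its first call to show the agent retrying instead of moving on with a bad result.

```python
from typing import Callable

# A tool takes keyword arguments and returns a result dict with an "ok" flag.
Tool = Callable[..., dict]


def plan_steps(goal: str) -> list[tuple[str, dict]]:
    """Stand-in for the LLM planner: turn a goal into an ordered list of tool calls."""
    if "schedule" in goal:
        return [
            ("read_calendar", {}),
            ("check_availability", {"attendees": ["ana", "ben"]}),
            ("send_invites", {"slot": "Tue 10:00"}),
        ]
    return []


def run_agent(goal: str, tools: dict[str, Tool], max_retries: int = 2) -> list[tuple[str, int]]:
    """Execute the plan, checking each result and retrying a failed step before moving on."""
    history = []  # short-term memory: which steps ran and how many attempts each took
    for name, args in plan_steps(goal):
        for attempt in range(1, max_retries + 2):
            result = tools[name](**args)
            if result.get("ok"):  # self-check: only advance when the step succeeded
                history.append((name, attempt))
                break
        else:  # every attempt failed, so stop instead of continuing on bad state
            raise RuntimeError(f"{name} failed after {max_retries + 1} attempts")
    return history


class FlakyAvailabilityAPI:
    """Hypothetical availability API that fails once to simulate a transient error."""

    def __init__(self) -> None:
        self.calls = 0

    def __call__(self, attendees: list[str]) -> dict:
        self.calls += 1
        return {"ok": self.calls > 1, "free": list(attendees)}


tools = {
    "read_calendar": lambda: {"ok": True, "events": []},
    "check_availability": FlakyAvailabilityAPI(),
    "send_invites": lambda slot: {"ok": True, "sent_for": slot},
}
history = run_agent("schedule next week's meetings", tools)
print(history)  # [('read_calendar', 1), ('check_availability', 2), ('send_invites', 1)]
```

In a real agent the plan itself would come from the model and could be revised after a failure; here only the retry-and-check part of the loop is shown.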

| | Traditional Chatbot | AI Agent |
|---|---|---|
| Interaction model | One prompt, one response | Goal-driven, self-directed execution |
| Task complexity | Single-step Q&A | Multi-step, cross-system workflows |
| Memory | No memory across sessions | Short-term context + long-term knowledge |
| Tool use | Text generation only | APIs, browsers, databases, code execution |
| Error handling | Generates a response regardless | Self-evaluates and corrects course |

How an LLM Agent Actually Works: The 4-Layer Structure

The autonomy of an AI agent isn’t arbitrary. It comes from a specific four-layer architecture that extends what a language model can do on its own.

Perception is the entry point. The agent reads its environment: your instructions, API responses, document contents, web pages, even sensor data. In a competitive research task, this layer pulls raw information from industry databases and competitor websites before the agent writes a single word.

Reasoning is where the real work happens. A high-performance LLM, like GPT-4o or Claude 3.5 Sonnet, breaks down a vague goal into a concrete sequence of steps using chain-of-thought logic. It also checks itself: “Did step two give me what I needed to do step three?”

Action is the execution layer. The agent calls APIs, runs scripts, navigates browsers through automation tools like Playwright, and writes to databases. This takes it out of the chat window and into your actual business systems, updating a CRM record, committing code, or publishing an article, all without a human confirming each move.

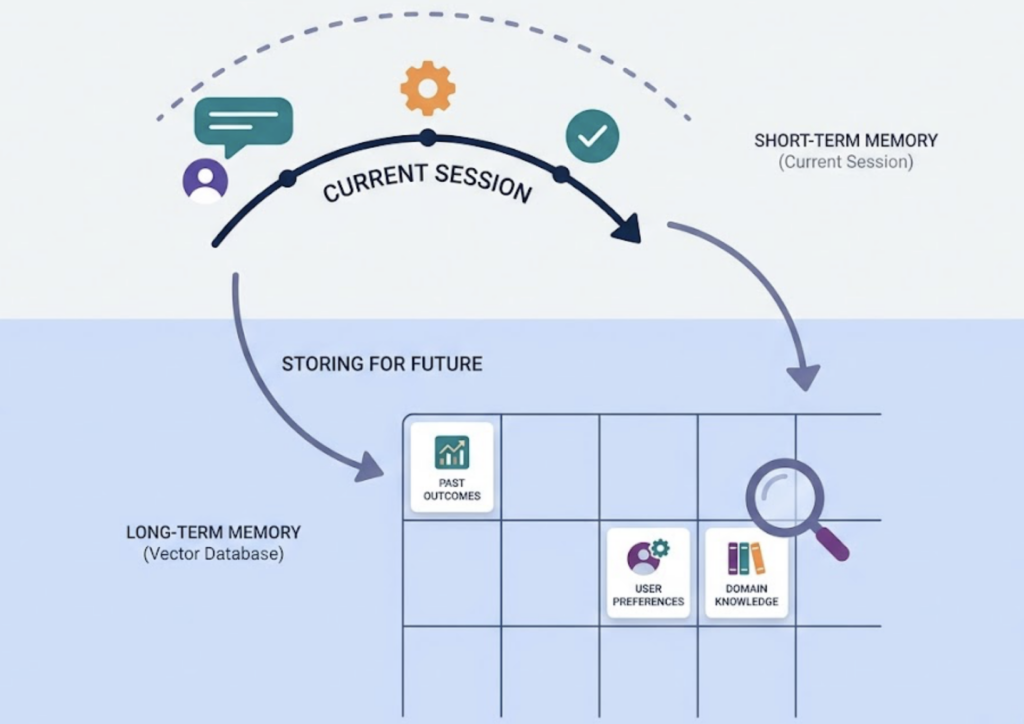

Memory is what makes it coherent over time. Short-term memory tracks what happened in the current session. Long-term memory, often powered by vector databases, stores past task outcomes, user preferences, and domain knowledge so the agent doesn’t start from scratch every time.
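The long-term memory layer boils down to “store past items as vectors, then recall the ones most similar to the current query.” The following is a minimal sketch under loud assumptions: toy word-count vectors replace a learned embedding model, and an in-memory list replaces a real vector database. The `LongTermMemory` class and the stored texts are hypothetical.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy 'embedding': lowercase word counts. Real systems use a learned embedding model."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(count * b[word] for word, count in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class LongTermMemory:
    """In-memory stand-in for a vector database: store texts, recall the most similar ones."""

    def __init__(self) -> None:
        self.items: list[tuple[str, Counter]] = []

    def store(self, text: str) -> None:
        self.items.append((text, embed(text)))

    def recall(self, query: str, k: int = 1) -> list[str]:
        q = embed(query)
        ranked = sorted(self.items, key=lambda item: cosine(q, item[1]), reverse=True)
        return [text for text, _ in ranked[:k]]


memory = LongTermMemory()
memory.store("user prefers meetings after 10am")
memory.store("q3 competitor pricing research finished")
memory.store("weekly invoice export completed")
print(memory.recall("when does the user prefer meetings"))  # ['user prefers meetings after 10am']
```

Swapping `embed` for a real embedding model and the list for a vector database changes the quality and scale of recall, not the shape of the store/recall interface.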

Put the four layers together and you get what researchers call “agentic AI”: a system that perceives, reasons, acts, and remembers, rather than one that simply responds.

One Intelligent Agent Is Fine. Multiple Agents Working Together Is a Different Game.

Single agents handle focused, linear tasks well. Scale to complex workflows across multiple systems, though, and a single agent starts to strain: one context has to hold every instruction, tool result, and intermediate step, and error rates climb.

That’s the logic behind multi-agent systems (MAS). Instead of one agent doing everything, you have multiple specialized agents working together: one searches, one drafts, one fact-checks, one publishes. Each handles what it’s built for.

The output quality improves because agents can challenge each other’s work, catch errors the original agent missed, and run subtasks simultaneously instead of in sequence.
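The division of labor can be sketched with plain functions standing in for LLM-backed agents. This is an illustration, not how CrewAI, LangGraph, or AutoGen structure it. The sources and claims are hypothetical, the drafter deliberately adds an unsupported sentence, and the fact-checker’s “keep only sentences found verbatim in the sources” rule is intentionally crude. The independent search subtasks run concurrently through the standard library’s `ThreadPoolExecutor`.

```python
from concurrent.futures import ThreadPoolExecutor

# Hypothetical source documents the search agent can reach.
SOURCES = {
    "pricing": "Plan A costs $10 per seat.",
    "features": "Plan A includes SSO.",
}


def search_agent(topic: str) -> str:
    """Retrieve the source text for one topic."""
    return SOURCES.get(topic, "")


def draft_agent(facts: list[str]) -> list[str]:
    """Write a draft; it adds an unsupported claim so the checker has something to catch."""
    return facts + ["Plan A is the market leader."]


def fact_check_agent(draft: list[str], facts: list[str]) -> list[str]:
    """Keep only sentences that appear verbatim in the retrieved sources (a deliberately crude check)."""
    return [sentence for sentence in draft if sentence in facts]


def publish_agent(approved: list[str]) -> str:
    """Assemble the approved sentences into the final text."""
    return " ".join(approved)


# Independent searches run concurrently; map() still returns results in input order.
with ThreadPoolExecutor(max_workers=2) as pool:
    facts = list(pool.map(search_agent, ["pricing", "features"]))

draft = draft_agent(facts)
article = publish_agent(fact_check_agent(draft, facts))
print(article)  # Plan A costs $10 per seat. Plan A includes SSO.
```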

Three frameworks dominate how enterprises are building these systems right now:

| Framework | Core philosophy | Best for |
|---|---|---|
| LangGraph | Graph-based orchestration (nodes and edges) | Finance, healthcare: deterministic logic and compliance |
| CrewAI | Role-based team collaboration | Marketing automation, content pipelines, customer support |
| AutoGen | Conversation-centric collaboration | Software engineering, iterative research and code tasks |

CrewAI has the shortest ramp-up time for non-technical teams. LangGraph wins on stability and auditability when the task can’t tolerate a wrong output. The right choice depends on how much determinism your workflow requires.
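To show what “graph-based orchestration (nodes and edges)” means in practice, here is a plain-Python sketch of the idea. It is not LangGraph’s API, which differs. Nodes are functions that transform a shared state dict, edges are routing functions that name the next node, and the node names and the 10,000 threshold are hypothetical.

```python
from typing import Callable, Optional

State = dict
Node = Callable[[State], State]
Router = Callable[[State], Optional[str]]


def extract(state: State) -> State:
    # Parse the amount out of a raw record such as "amount=25000".
    return {**state, "amount": int(state["raw"].split("=")[1])}


def compliance_check(state: State) -> State:
    # Hypothetical rule: anything above 10,000 needs a human reviewer.
    return {**state, "flagged": state["amount"] > 10_000}


def auto_approve(state: State) -> State:
    return {**state, "status": "approved"}


def human_review(state: State) -> State:
    return {**state, "status": "pending_review"}


NODES: dict[str, Node] = {
    "extract": extract,
    "compliance_check": compliance_check,
    "auto_approve": auto_approve,
    "human_review": human_review,
}

# Edges: each router looks at the state and names the next node (None ends the run).
EDGES: dict[str, Router] = {
    "extract": lambda s: "compliance_check",
    "compliance_check": lambda s: "human_review" if s["flagged"] else "auto_approve",
    "auto_approve": lambda s: None,
    "human_review": lambda s: None,
}


def run_graph(start: str, state: State) -> tuple[State, list[str]]:
    """Walk the graph from `start`, recording every node visited for auditing."""
    trace = []
    node: Optional[str] = start
    while node is not None:
        state = NODES[node](state)
        trace.append(node)
        node = EDGES[node](state)
    return state, trace


final, trace = run_graph("extract", {"raw": "amount=25000"})
print(final["status"], trace)
# pending_review ['extract', 'compliance_check', 'human_review']
```

Because every transition is an explicit function and the trace records the path taken, a reviewer can see exactly why an item went to human review. That is the determinism and auditability the table associates with graph-based orchestration.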

What AI Agents Can Actually Do in a Business Right Now

This isn’t speculative. According to a 2025 PwC survey (as reported by Multimodal, 2025), 79% of organizations have adopted AI agents in some form, and 43% are allocating more than half of their AI budget to agent systems. Gartner projects that by the end of 2026, 40% of enterprise software will integrate task-specific AI agents.

Here’s where the actual work is happening today.

Sales teams are using AI SDR agents that research leads, personalize outreach, and update CRM records around the clock. Companies using AI agents for lead nurturing report 4 to 7 times higher conversion rates compared to traditional methods, according to Landbase data.

Customer service is one of the highest-ROI deployments. Gartner projects AI agents will autonomously resolve 80% of routine support tickets by 2029, cutting operational costs by 30%. Reddit’s internal deployment cut support case resolution time by 84%.

Content and marketing teams are using agents to automate research, draft SEO-optimized copy, and schedule publishing. At Seattle Children’s Hospital, agents helped the content team improve editing speed by 32% and overall creation speed by 46%.

Security and DevOps teams run agents for round-the-clock threat monitoring and auto-remediation. In documented deployments, vulnerability risk dropped by 70% and average incident response time was cut by half.

The global average ROI across enterprise AI agent deployments sits at 171%. For U.S. companies specifically, that number reaches 192% (Landbase, 2025).
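Landbase’s methodology isn’t described here. If you assume the conventional definition, ROI = (gain - cost) / cost, a 171% ROI means each dollar spent came back as $2.71 of measured benefit, $1.71 of it net. The figures below are hypothetical and only illustrate that arithmetic.

```python
def roi(gain: float, cost: float) -> float:
    """Conventional ROI: net return divided by cost (assumed definition)."""
    return (gain - cost) / cost


# Hypothetical deployment: $100,000 spent, $271,000 of measured benefit.
print(f"{roi(271_000, 100_000):.0%}")  # 171%
```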

AI Agent vs. Copilot: Two Very Different Philosophies

Both use large language models. Both are sold as productivity accelerators. The similarity ends there.

A Copilot lives in your sidebar. It waits for you to trigger it, generates a suggestion, and waits for you to decide what to do with it. Every step requires your involvement. Microsoft 365 Copilot and GitHub Copilot are the most common examples: useful, widely deployed, and fundamentally dependent on a human in the loop.

An agent doesn’t wait. You set the goal and the guardrails, then step back. Salesforce’s Agentforce, for instance, doesn’t just suggest a follow-up email. It executes the entire follow-up workflow inside the CRM without you touching it.

| | AI Agent | Copilot |
|---|---|---|
| Trigger mechanism | Goal/event-driven | Step-by-step human trigger |
| Autonomy level | High, self-planning | Low, requires human confirmation per step |
| Memory | Long-term, cross-session | Current document or conversation window |
| Representative tools | Agentforce, AutoGPT, Devin | Microsoft 365 Copilot, GitHub Copilot |
| Core value | Replaces repetitive multi-step workflows | Accelerates individual task completion |

The practical question isn’t which is better. It’s which one your workflow actually needs. Copilots are the right tool for augmenting creative or high-judgment tasks. Agents are the right tool for automating structured processes that don’t need a human confirming every step.
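The difference in trigger and autonomy comes down to where the human sits in the loop. In this minimal sketch, the same workflow runs two ways. Copilot-style, an `approve` callback (a simulated human) is consulted before every step. Agent-style, only guardrails set up front apply. The step names are hypothetical, and this is not how Agentforce or any Copilot product is implemented.

```python
from typing import Callable, Optional

# Hypothetical follow-up workflow; "issue_refund" is outside the guardrails.
FOLLOW_UP = ["draft_email", "send_email", "log_activity_in_crm", "issue_refund"]
ALLOWED = {"draft_email", "send_email", "log_activity_in_crm"}


def run(steps: list[str], allowed: set[str],
        approve: Optional[Callable[[str], bool]] = None) -> tuple[list[str], list[str]]:
    """Execute steps that pass the guardrail; if `approve` is given, ask it before each one."""
    executed, skipped = [], []
    for step in steps:
        if step not in allowed:  # guardrail defined once, up front
            skipped.append(step)
        elif approve is not None and not approve(step):  # copilot: human confirms each step
            skipped.append(step)
        else:
            executed.append(step)
    return executed, skipped


# Copilot-style: a (simulated) human reviews every step and declines to send the email.
copilot_done, _ = run(FOLLOW_UP, ALLOWED, approve=lambda step: step != "send_email")
# Agent-style: no per-step confirmation; only the up-front guardrail applies.
agent_done, agent_skipped = run(FOLLOW_UP, ALLOWED)
print(copilot_done)   # ['draft_email', 'log_activity_in_crm']
print(agent_done)     # ['draft_email', 'send_email', 'log_activity_in_crm']
print(agent_skipped)  # ['issue_refund']
```

The design choice is where control lives. In copilot mode it is spread across every step. In agent mode it is concentrated in the `allowed` set, which is why defining guardrails carefully matters more once you step back.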

Will AI Agents Replace Human Workers? A More Precise Question to Ask.

The honest answer: agents are replacing tasks, not jobs.

High-repetition, rule-based work is already being taken over. Data entry, lead qualification, report generation, routine code changes. These don’t require judgment. They require execution at scale, and agents do that better.

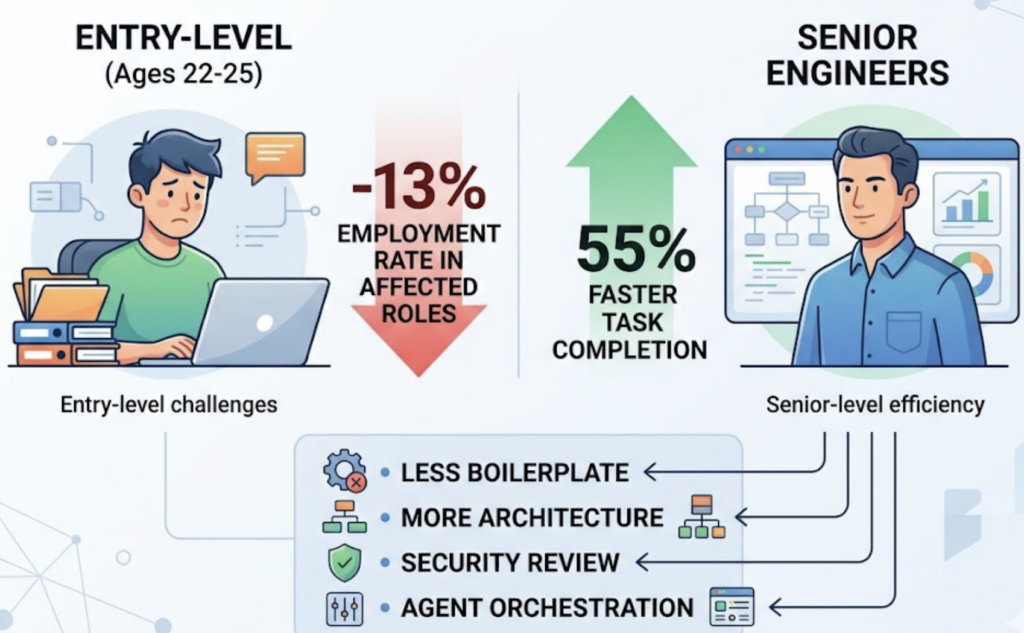

What’s actually happening to people is more nuanced. GitHub research found that developers using AI tools complete tasks 55% faster. But that efficiency gain isn’t evenly distributed. Entry-level workers in high-AI-exposure roles, specifically ages 22 to 25, saw employment rates drop by 13% in affected categories. Senior engineers, by contrast, are spending less time on boilerplate and more time on architecture, security review, and agent orchestration.

That’s the consistent pattern. Agents raise the floor on what competent execution looks like, which raises the baseline skill requirement for human contributors.

The Stanford AI Index reported that engineers with demonstrated AI tool proficiency earn $20,000 to $50,000 more per year than peers without it. The labor market is already pricing in the skill premium.

AI agents won’t replace software developers. They will make developers who can’t use them uncompetitive.

AI Agents Are Changing How Brands Get Discovered. Most Marketing Teams Haven’t Priced This In.

When a user types a query into traditional search, SEO determines whether your brand appears on page one. That logic still holds for a shrinking share of search behavior.

Increasingly, users are asking AI systems directly: “What’s the best project management tool for a 10-person remote team?” The AI responds with a short list. If your brand isn’t cited, the user moves on. You didn’t rank lower. You didn’t exist.

When AI Overviews appear in search results, organic click-through rates drop by an average of 61%. As AI agents become the primary interface for research, shopping, and software selection, the question shifts from “do we rank?” to “does AI recommend us?”

This is the core problem that Generative Engine Optimization (GEO) addresses. It’s also the reason platforms like Topify exist. Topify tracks how often your brand is cited across ChatGPT, Gemini, Perplexity, DeepSeek, and other major AI platforms, measuring not just whether you appear, but where you rank relative to competitors, how AI describes your brand, and which sources AI is pulling from when it does.

Built by founding researchers from OpenAI and champion Google SEO practitioners, Topify turns AI visibility into a structured, measurable growth channel, much as analytics platforms did for web traffic a decade ago.

For marketing teams operating now, AI visibility isn’t optional. It’s the new SEO.
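Two of the metrics mentioned above are how often a brand is cited and where it ranks when cited. Once you have collected AI answers for a set of prompts, both reduce to simple counting. This is an illustrative sketch, not Topify’s methodology. The brand names and answers are hypothetical, and collecting the answers from each AI platform is out of scope.

```python
from typing import Optional

# Hypothetical sample: brands cited, in order, by an AI assistant across four runs of one prompt.
answers = [
    ["CompetitorA", "YourBrand", "CompetitorB"],
    ["YourBrand", "CompetitorA"],
    ["CompetitorB", "CompetitorC"],
    ["CompetitorA", "CompetitorC", "YourBrand"],
]


def visibility(answers: list[list[str]], brand: str) -> tuple[float, Optional[float]]:
    """Return (citation rate, average position when cited) for one brand."""
    positions = [answer.index(brand) + 1 for answer in answers if brand in answer]
    rate = len(positions) / len(answers) if answers else 0.0
    avg_position = sum(positions) / len(positions) if positions else None
    return rate, avg_position


for brand in ["YourBrand", "CompetitorA", "CompetitorB", "CompetitorC"]:
    rate, position = visibility(answers, brand)
    print(f"{brand}: cited in {rate:.0%} of answers, average position {position:.2f}")
```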

Conclusion

AI agents aren’t a smarter version of the chatbot you already use. They’re a distinct category of software: autonomous systems that perceive context, reason through multi-step problems, execute across real business tools, and retain memory between sessions.

The teams that understand this early have a real operational advantage. Start by mapping the high-repetition, multi-step processes in your workflow that don’t require human judgment at each step. Those are your first agent candidates. Then ask a harder question: when your customers use AI agents to find tools, vendors, or services in your category, is your brand in the answer? Get started with Topify to find out exactly where you stand.

FAQ

Q: What does “Agentic AI” mean?

A: Agentic AI refers to AI systems that don’t just generate content but independently plan and execute complex tasks. “Agentic” describes the degree of autonomy: the system selects its own tools, manages multi-step processes, and adjusts based on feedback without human intervention at each step. It’s less about the model’s raw capability and more about how that capability is deployed.

Q: How do you build your own AI Agent?

A: Building an agent typically involves four steps: selecting a core LLM (GPT-4o, Claude, or similar), defining the perception and memory layers through RAG and a vector database, configuring the action tools the agent can call (APIs, scripts, internal systems), and using an orchestration framework like CrewAI or LangGraph to manage the logic and guardrails. Most teams start with CrewAI for its lower barrier to entry, then migrate to LangGraph as workflow complexity increases.
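The third step, configuring the action tools, usually means describing each tool to the model and validating the calls it makes. This sketch uses a hypothetical decorator-based registry built on Python’s standard `inspect` module. It is not CrewAI’s or LangGraph’s tool API, and `lookup_customer` is a stub in place of a real CRM call.

```python
import inspect
from typing import Callable

TOOLS: dict[str, Callable] = {}


def tool(fn: Callable) -> Callable:
    """Register a function as a tool the agent may call (hypothetical registry)."""
    TOOLS[fn.__name__] = fn
    return fn


@tool
def lookup_customer(email: str) -> dict:
    """Fetch a customer record from the CRM (stubbed)."""
    return {"email": email, "tier": "pro"}


def describe_tools() -> list[str]:
    # The text a planner LLM would be shown: each tool's name, signature, and docstring.
    return [f"{name}{inspect.signature(fn)}: {inspect.getdoc(fn)}" for name, fn in TOOLS.items()]


def call_tool(name: str, args: dict) -> dict:
    """Dispatch a model-requested call, rejecting unknown tools and malformed arguments."""
    if name not in TOOLS:
        raise ValueError(f"unknown tool: {name}")
    inspect.signature(TOOLS[name]).bind(**args)  # raises TypeError on wrong arguments
    return TOOLS[name](**args)


print(describe_tools())
# ['lookup_customer(email: str) -> dict: Fetch a customer record from the CRM (stubbed).']
print(call_tool("lookup_customer", {"email": "ana@example.com"}))
```

Validating arguments before execution matters because the model, not a human, chooses which tool to call and with what inputs.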

Q: What are the best AI Agent tools and platforms in 2025?

A: For developers building custom agents, LangGraph, CrewAI, and AutoGen are the dominant frameworks. For enterprise deployment, Salesforce Agentforce leads in CRM workflows and Devin has become the benchmark for AI software engineering. For teams that need to monitor whether their brand is being recommended by AI agents in customer-facing contexts, Topify tracks brand visibility and citation patterns across the major AI platforms your buyers are already using.

Q: What are the biggest AI Agent trends heading into 2025 and 2026?

A: The clearest shift is from single-agent pilots to multi-agent systems deployed at workflow scale. Enterprises that ran isolated experiments in 2024 are now building full agentic pipelines across sales, support, and content operations. Alongside this, GEO (Generative Engine Optimization) is emerging as a distinct marketing discipline focused on brand visibility inside AI-generated answers rather than traditional search rankings. Agent cost optimization and safety guardrails are becoming critical infrastructure as deployments mature and regulatory scrutiny increases.