Your brand ranks #1 for a high-intent keyword. But when someone searches that query, Google summarizes an answer at the top of the page and your brand isn’t in it.

That’s the visibility gap. And most teams don’t catch it until traffic has already dropped.

Organic click-through rates have fallen 61% for queries where an AI Overview is present, according to longitudinal data tracking the shift from 2024 to 2026. Meanwhile, 69% of Google searches now end without a single click to any external site. The implication is clear: if you’re not being cited in the summary, you’re largely invisible.

This guide walks through how to track where your brand stands in Google AI Overviews today, diagnose why you might be missing, and execute the optimizations that actually move the needle.

Rankings Alone Won’t Tell You If You’re in AI Overviews

This is the single most important thing to internalize before you do anything else.

Traditional rank tracking tools tell you where your page sits in the blue-link results. They don’t tell you whether your brand appears in the synthesized answer above those results. And as of 2026, that synthesized answer is often the only part of the SERP a user actually reads.

The overlap between top-10 organic rankings and AI Overview citations has collapsed. In 2024, roughly 76% of AI Overview citations were pulled from the top-10 organic results. That figure now sits somewhere between 17% and 38%, meaning the majority of citations are coming from pages outside the traditional first page.

Pages ranking in positions 11 to 100 capture approximately 31% of AI Overview citation slots, and pages outside the top 100 account for another 31%. That leaves roughly 38% for the top 10, the upper end of the range above.

That’s a completely different game. And playing it requires a completely different set of metrics.

The 5 Signals That Actually Measure AI Overviews Performance

Before tracking, you need to know what to track. These are the metrics that matter for AI Overviews optimization specifically.

Visibility Rate (Inclusion Rate): The percentage of relevant prompts where your brand appears in the AI-generated answer. AI outputs are probabilistic, not fixed. Only 30% of brands maintain consistent visibility across multiple regenerations of the same query. That means visibility must be measured as a probability score across multiple runs, not a single manual check.
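Here is one way to turn repeated runs into a probability score. This is a minimal Python sketch, not any tool's method. It makes three assumptions:

- You have already collected the answer text for each prompt across several regenerations. Collection is not shown.
- A case-insensitive substring match is an acceptable stand-in for "the brand appears."
- `inclusion_rates` is a hypothetical helper name.

```python
from collections import defaultdict

def inclusion_rates(runs, brand):
    """Return (overall_rate, per_prompt_rates) for `brand`.

    `runs` is a list of (prompt, answer_text) pairs in which each prompt
    appears several times (one entry per regeneration). A run counts as
    an inclusion if the brand name occurs anywhere in the answer text,
    using a simple case-insensitive substring match (an assumption).
    """
    hits = defaultdict(int)
    totals = defaultdict(int)
    for prompt, answer in runs:
        totals[prompt] += 1
        if brand.lower() in answer.lower():
            hits[prompt] += 1
    # Per-prompt inclusion probability, plus the pooled rate across all runs.
    per_prompt = {p: hits[p] / totals[p] for p in totals}
    overall = sum(hits.values()) / sum(totals.values()) if totals else 0.0
    return overall, per_prompt
```

Per-prompt rates show which prompts are unstable. A prompt that includes you in two of three runs behaves very differently from one that includes you in none.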

Sentiment Score: How the AI characterizes your brand when it does mention you. Is it framing you as a leading option, a budget alternative, or a niche pick? The framing matters almost as much as the inclusion.

Position Index: Where in the narrative your brand appears. First recommendation correlates with meaningfully stronger downstream engagement than being mentioned third or fourth.
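A Position Index can be approximated from the order in which brands are first mentioned in each answer. The Python sketch below is illustrative only, and its rules are assumptions:

- Position means first-mention rank among a candidate list you supply.
- Matching is case-insensitive substring matching.
- `position_index` and `mean_position` are hypothetical names.

```python
def position_index(answer, brand, candidates):
    """1-based first-mention rank of `brand` among `candidates`, or None.

    `brand` should itself be in `candidates`. Matching is a plain
    case-insensitive substring search (an assumed simplification).
    """
    text = answer.lower()
    first_seen = {}
    for name in candidates:
        idx = text.find(name.lower())
        if idx != -1:
            first_seen[name] = idx
    # Order mentioned brands by where they first appear in the answer.
    ordered = sorted(first_seen, key=first_seen.get)
    return ordered.index(brand) + 1 if brand in first_seen else None

def mean_position(answers, brand, candidates):
    """Average rank across the answers where the brand is mentioned at all."""
    ranks = [position_index(a, brand, candidates) for a in answers]
    ranks = [r for r in ranks if r is not None]
    return sum(ranks) / len(ranks) if ranks else None
```

Averaging only over answers that mention the brand keeps position separate from inclusion. Track the two side by side rather than folding them into one number.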

Citation Source Attribution: Whether AI is linking to your own content or citing third-party platforms like Reddit or Wikipedia to validate your existence. High mentions with low direct citations signal a content-authority mismatch.
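To make "high mentions, low direct citations" measurable, you can compute the share of citations that point at domains you own. The Python sketch below assumes:

- You already have the list of cited URLs for your tracked prompts.
- `owned_domains` lists your root domains.
- The `mention_floor` and `owned_ceiling` thresholds in `authority_mismatch` are arbitrary, hypothetical values for you to tune.

```python
from urllib.parse import urlparse

def owned_citation_share(cited_urls, owned_domains):
    """Fraction of cited URLs whose host is an owned domain or its subdomain."""
    if not cited_urls:
        return 0.0
    owned = 0
    for url in cited_urls:
        host = (urlparse(url).hostname or "").lower()
        if any(host == d.lower() or host.endswith("." + d.lower())
               for d in owned_domains):
            owned += 1
    return owned / len(cited_urls)

def authority_mismatch(mention_rate, owned_share,
                       mention_floor=0.5, owned_ceiling=0.2):
    """Flag frequent mentions paired with few citations of your own content.

    Both thresholds are hypothetical defaults, not published benchmarks.
    """
    return mention_rate >= mention_floor and owned_share <= owned_ceiling
```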

CVR (Conversion Visibility Rate): The downstream metric, measuring how often AI recommendations translate into actual site visits and, ultimately, conversions. It matters because visitors arriving from AI citations convert at much higher rates than traditional organic traffic; one Ahrefs study found AI search visitors convert at 23x the rate of standard organic visitors.
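You can sanity-check the conversion gap on your own data by comparing conversion rates per traffic channel. This Python sketch assumes each session is already labeled with a channel and a converted flag. Deciding which sessions count as AI-referred is left open here. The channel names `"ai_referral"` and `"organic"` are hypothetical labels.

```python
from collections import Counter

def conversion_rates(sessions):
    """Map channel -> conversion rate from (channel, converted) pairs."""
    visits = Counter()
    conversions = Counter()
    for channel, converted in sessions:
        visits[channel] += 1
        if converted:
            conversions[channel] += 1
    return {ch: conversions[ch] / visits[ch] for ch in visits}

def conversion_lift(sessions, ai_channel="ai_referral", baseline_channel="organic"):
    """Ratio of the AI-referred conversion rate to the baseline rate (None if no baseline)."""
    rates = conversion_rates(sessions)
    base = rates.get(baseline_channel, 0.0)
    return rates.get(ai_channel, 0.0) / base if base else None
```

With small AI-referred volumes, the ratio swings widely from week to week. Read it alongside the raw visit counts.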

Step 1: Audit Your Current AI Overviews Visibility

Start with a baseline. You can’t track improvement without knowing where you stand.

The manual method: Open Google in an incognito window. Run 15 to 20 conversational queries that represent how your target customers would describe their problems, not keyword-style queries. Note whether an AI Overview appears, and whether your brand or your content is cited in it.

Question-format queries trigger AI Overviews at an 88% to 91% rate. Long-tail queries of seven or more words trigger them at 46.4%. Short navigational queries, on the other hand, trigger them less than 10% of the time. Your audit prompts should skew toward the first category.
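To check that an audit list actually skews toward question-format prompts, you can bucket each prompt with a rough heuristic. This Python sketch is only a heuristic, with these assumptions:

- The question-word list is assumed and not exhaustive.
- The seven-word cutoff mirrors the long-tail figure above.
- Treating three words or fewer as "short" is an assumption; word count alone cannot tell whether a query is navigational.

```python
from collections import Counter

# Leading words treated as signalling a question (an assumed, non-exhaustive list).
QUESTION_WORDS = {"how", "what", "which", "why", "when", "where", "who",
                  "can", "should", "is", "are", "does", "do"}

def classify_query(query):
    """Bucket a query as 'question', 'long_tail', 'short', or 'other'."""
    stripped = query.strip()
    words = stripped.lower().rstrip("?").split()
    if not words:
        return "other"
    if stripped.endswith("?") or words[0] in QUESTION_WORDS:
        return "question"
    if len(words) >= 7:
        return "long_tail"
    if len(words) <= 3:  # assumed cutoff for short, often navigational queries
        return "short"
    return "other"

def audit_mix(queries):
    """Share of each bucket in an audit prompt list."""
    counts = Counter(classify_query(q) for q in queries)
    return {bucket: n / len(queries) for bucket, n in counts.items()}
```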

The limitation of manual audits is significant: you can only check a handful of prompts, and AI outputs are non-deterministic, meaning the same query can produce different citations across runs.

The tool-based method: Platforms like Topify automate this at scale. Topify runs hundreds of prompt variations across ChatGPT, Gemini, Perplexity, and Google AI Overviews, giving you a statistical baseline of your inclusion rate rather than a snapshot. The Visibility Tracking feature shows you where your brand appears, how consistently, and which prompts are driving or missing citations.

That probabilistic view is what makes tool-based tracking meaningful for ongoing measurement.

Step 2: Map the Prompts That Trigger AI Overview Citations in Your Category

Most brands optimize for the wrong inputs. They focus on high-volume keywords when over 80% of AI prompts are phrased as context-rich, conversational queries.

A traditional search might be “email marketing tool.” A generative search prompt is more likely “which email marketing tool is best for a 50-person SaaS team with a $500 monthly budget.” Those are completely different inputs, and they surface different citations.

Prompt mapping means identifying the specific conversational questions that trigger AI Overviews in your category, and then checking whether your brand, your competitors, or third-party sites are appearing in those answers.

For B2B tech brands, this matters more than in almost any other sector. AI Overviews appear for over 70% of technology queries in the US, and the buyer journeys they support are exactly the high-intent, research-heavy paths where brand positioning is decisive.

The goal is to build a prompt matrix: a structured set of 40 to 100 prompts covering the key questions your buyers ask at each stage of evaluation. Topify’s High-Value Prompt Discovery feature surfaces these automatically, flagging prompts in your category that trigger AI Overviews where your brand isn’t cited.
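If you are assembling a prompt matrix by hand, expanding a few stage templates over buyer attributes gets you to 40 to 100 prompts quickly. This Python sketch makes several assumptions:

- The stage names, template wording, and slot names (`persona`, `pain`, `category`) are hypothetical examples.
- `build_prompt_matrix` is not a feature of any product.
- The output still needs manual review for phrasing.

```python
from itertools import product

def build_prompt_matrix(templates, **slots):
    """Expand stage templates over every combination of slot values.

    `templates` maps a stage name to a list of str.format templates;
    each keyword argument maps a slot name to its list of values.
    """
    keys = list(slots)
    rows, seen = [], set()
    for stage, stage_templates in templates.items():
        for values in product(*(slots[k] for k in keys)):
            fill = dict(zip(keys, values))
            for template in stage_templates:
                prompt = template.format(**fill)  # unused slots are ignored
                # Templates that skip a slot produce duplicates; keep the first.
                if (stage, prompt) not in seen:
                    seen.add((stage, prompt))
                    rows.append({"stage": stage, "prompt": prompt})
    return rows
```

Adding one more value to a slot multiplies the prompt count. It is usually easier to hit the 40 to 100 range by trimming templates than by trimming the generated list.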

Step 3: Diagnose Why You’re Not Being Cited

If you’re ranking well but absent from AI Overviews, you’re dealing with a citation source gap. Here’s how to diagnose it.

Check which domains are being cited instead of you. AI platforms have a clear hierarchy of trust. Google AI Overviews frequently cite Reddit (2.2% of citations), YouTube (1.9%), and Quora (1.5%), alongside brand-owned content. If competitors are being cited via these platforms and you’re not present there, the gap is one of authority distribution, not just content quality.

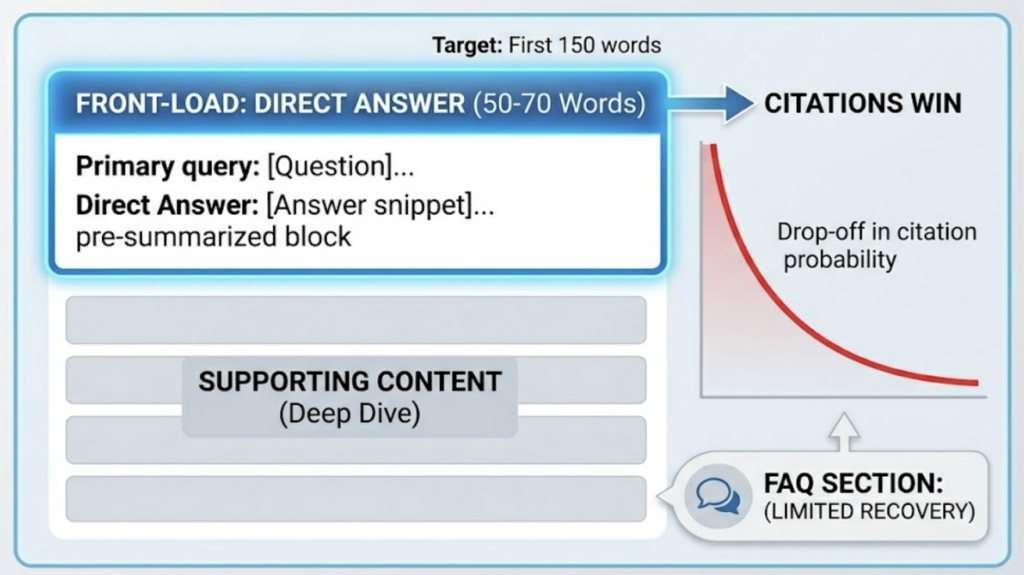
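To see which domains fill the gap, you can tally the sources cited in answers that mention a competitor but not you. This Python sketch assumes:

- Each run is stored as a dict with `answer` text and a `citations` list of URLs. This record shape is hypothetical.
- Brand detection is a case-insensitive substring match.
- A leading `www.` is stripped so that variants of the same domain are grouped together.

```python
from collections import Counter
from urllib.parse import urlparse

def gap_domains(runs, brand, competitors):
    """Count cited domains in runs where a competitor appears and `brand` does not."""
    tally = Counter()
    for run in runs:
        text = run["answer"].lower()
        if brand.lower() in text:
            continue  # you were included; not a gap
        if not any(c.lower() in text for c in competitors):
            continue  # no competitor either; not a competitive gap
        for url in run["citations"]:
            host = (urlparse(url).hostname or "").lower()
            if host.startswith("www."):
                host = host[4:]
            tally[host] += 1
    return tally
```

`gap_domains(...).most_common(10)` gives a short list of platforms where a competitor's presence is being validated and yours is not.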

Evaluate your content’s extractability. Research shows that 55% of AI Overview citations come from the first 30% of a document, with the highest citation probability (27%) concentrated between the 10% and 20% mark. If your primary answer to a query is buried in paragraph six, the AI’s retrieval system is likely skipping past it.
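A quick way to check extractability is to measure how far into a page your primary answer begins. This Python sketch makes three assumptions:

- Position is measured in characters. The research above does not say how "first 30%" is measured.
- The answer passage appears verbatim in the page text.
- The 0.30 cutoff mirrors the figure above.

```python
def relative_position(document, passage):
    """Start offset of `passage` as a fraction of document length (0.0 = top)."""
    idx = document.find(passage)
    if idx == -1:
        raise ValueError("passage not found in document")
    return idx / len(document)

def in_citation_zone(document, passage, cutoff=0.30):
    """True if the passage starts within the first `cutoff` share of the page."""
    return relative_position(document, passage) < cutoff
```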

Assess your entity authority. AI models evaluate content through semantic understanding of who a brand is and what it’s authoritative on. If your brand has an inconsistent presence across structured data, third-party domains, and the Google Knowledge Graph, the AI system has lower confidence in citing you. A gap in citations often reflects a gap in corroboration, not a gap in expertise.

Topify’s Source Analysis feature shows you exactly which domains AI is pulling from for the prompts in your category. That’s the diagnostic layer most manual workflows can’t replicate.

Step 4: Close the Gaps with a Targeted Optimization Playbook

Once you know what’s missing, there’s a specific set of actions that move the needle.

Front-load your answers. Every high-priority page should open with a 50- to 70-word direct answer to its primary query, placed within the first 150 words. This is not about keywords; it’s about giving the AI a clean, pre-summarized block it can extract. Citation probability drops steeply after the first third of a page, and content further down rarely recovers unless it sits in an FAQ section.
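The 50-to-70-word rule is easy to lint automatically. This Python sketch assumes:

- Paragraphs are separated by blank lines.
- Words are whitespace-separated tokens.
- A qualifying lead answer must end within the first 150 words.

`find_lead_answer` is a hypothetical helper.

```python
def find_lead_answer(page_text, min_words=50, max_words=70, window=150):
    """Return (paragraph_index, word_count) of the first paragraph of
    min_words..max_words words that ends within the first `window` words,
    or None if the page has no such opening answer."""
    offset = 0  # words consumed by earlier paragraphs
    paragraphs = [p for p in page_text.split("\n\n") if p.strip()]
    for i, para in enumerate(paragraphs):
        n = len(para.split())
        if offset + n > window:
            break  # anything later ends outside the window
        if min_words <= n <= max_words:
            return i, n
        offset += n
    return None
```

Running this over a sitemap's worth of page text gives you a list of high-priority pages that bury their answer.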

Implement schema markup. Basic schema is a minimum, not a differentiator. FAQ page schema increases citation likelihood by 60%, according to available benchmarks. Schema markup overall has been associated with a 73% boost in AI Overview selection probability. For brands in B2B SaaS or tech, this is one of the fastest wins available.
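FAQ schema is usually shipped as JSON-LD using the schema.org `FAQPage`, `Question`, and `Answer` types. The Python sketch below generates that markup from question/answer pairs. The helper name `faq_page_jsonld` and the sample Q&A are hypothetical. The citation-lift figures above are the benchmarks quoted here; this code does not verify them.

```python
import json

def faq_page_jsonld(pairs):
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)

# Hypothetical sample content.
print(faq_page_jsonld([
    ("Does Acme integrate with Salesforce?", "Yes. Acme syncs contacts and deals both ways."),
]))
```

The output goes inside a `<script type="application/ld+json">` tag on the page. The questions and answers in the markup should match the FAQ text visible to readers.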

Build entity authority through third-party platforms. If AI systems are citing Reddit and YouTube to validate brand claims in your category, your presence on those platforms isn’t a social media strategy; it’s an AI visibility strategy. Unlinked mentions and community-level engagement on high-authority domains signal to AI models that a brand exists and is trusted beyond its own site.

Use entity linking. Connecting on-page mentions to external knowledge bases like Wikidata has been shown to increase AI Overview visibility by 19.72%. It reduces the semantic ambiguity that causes AI systems to skip over content they’d otherwise trust.
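One common way to link on-page mentions to a knowledge base is structured data. An `Article`'s schema.org `mentions` property can list entities, and each entity's `sameAs` URLs point to external records such as a Wikidata item. This Python sketch is illustrative only:

- The brand, headline, and URLs are hypothetical.
- The Wikidata ID is a placeholder, not a real item.
- This is one possible approach; the study above does not say which linking method it measured.

```python
import json

def article_entity_jsonld(headline, entities):
    """Build Article JSON-LD whose `mentions` link entities to external records.

    `entities` is a list of (name, schema_type, same_as_urls) tuples.
    """
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "mentions": [
            {"@type": schema_type, "name": name, "sameAs": list(urls)}
            for name, schema_type, urls in entities
        ],
    }
    return json.dumps(data, indent=2)

# Hypothetical example: Q_PLACEHOLDER stands in for your real Wikidata item ID.
print(article_entity_jsonld(
    "How to choose a CRM for a 50-person SaaS team",
    [("Acme Analytics", "Organization",
      ["https://www.wikidata.org/wiki/Q_PLACEHOLDER", "https://acme.example"])],
))
```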

Produce original data. Content that aggregates existing information is increasingly deprioritized. Original research and proprietary data show a 40% higher citability rate than generic content. Even small-scale original surveys or internal data publications help here.

| Optimization Tactic | Reported Impact | Typical Timeline |
|---|---|---|
| Schema Markup Implementation | +73% selection boost | 1–4 weeks |
| Direct Answer Lead (50–70 words, within first 150 words) | 55% of citations come from the top 30% of a page | 4–8 weeks |
| FAQ Page Schema | +60% citation likelihood | 1–4 weeks |
| Entity Linking | +19.72% visibility lift | 4–8 weeks |
| Original Research / Data | +40% citability rate | 3–4 months |

Step 5: Measure Whether It’s Working

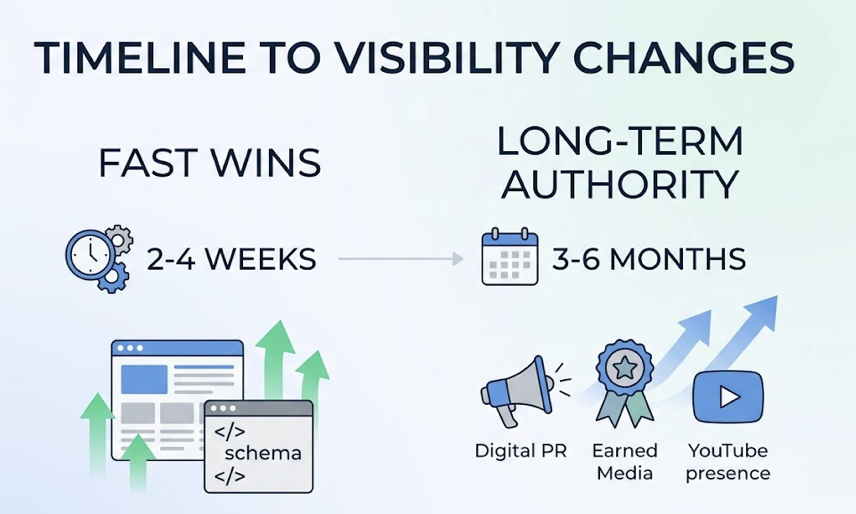

The timeline for AI Overviews optimization is faster than traditional SEO, but the measurement approach has to be different.

Initial visibility changes can appear within 2 to 4 weeks following a structural content update or schema implementation. This is meaningfully faster than the 3 to 6 months typically associated with standard organic ranking shifts. That said, authority-building tactics like digital PR, earned media, and YouTube presence take 3 to 6 months to translate into sustained citation improvement.

What to measure week-over-week:

Your Visibility Rate should be tracked across a fixed set of 40 to 100 representative prompts, run multiple times each to account for the probabilistic nature of AI outputs. A single run per query will give you noisy data. The baseline you need is a statistical inclusion rate, not a snapshot.
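To tell real movement from run-to-run noise, you can attach a confidence interval to each week's inclusion rate. The Python sketch below uses the Wilson score interval, a standard interval for proportions. The method is a choice made for this example, not something the tracking data prescribes. Treating non-overlapping 95% intervals as a real change is a deliberately conservative rule of thumb. The helper names are hypothetical.

```python
import math

def wilson_interval(hits, runs, z=1.96):
    """Wilson score interval (95% by default) for an inclusion rate hits/runs."""
    if runs == 0:
        return (0.0, 1.0)
    p = hits / runs
    denom = 1 + z**2 / runs
    center = (p + z**2 / (2 * runs)) / denom
    half = z * math.sqrt(p * (1 - p) / runs + z**2 / (4 * runs**2)) / denom
    return (max(0.0, center - half), min(1.0, center + half))

def significant_change(prev, curr, z=1.96):
    """True if the intervals for two weeks' (hits, runs) do not overlap."""
    lo1, hi1 = wilson_interval(*prev, z=z)
    lo2, hi2 = wilson_interval(*curr, z=z)
    return hi1 < lo2 or hi2 < lo1
```

For example, going from 12 inclusions in 100 runs to 45 in 100 is a clear shift. Going from 40 to 45 is within the noise at this sample size.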

Track your Sentiment Score alongside visibility. A brand can appear in AI Overviews while being characterized unfavorably, and that framing affects downstream behavior in ways that standard analytics won’t surface.

Monitor citation source shifts. If the AI starts pulling from your own content rather than third-party domains to validate your brand, that’s a signal that entity authority is building.

And watch the conversion data. Because AI Overview visitors are highly pre-qualified, a relatively small increase in citation frequency can produce an outsized revenue impact. The 23x conversion lift makes even modest visibility gains worth tracking precisely.

Conclusion

Google AI Overviews have broken the historical relationship between ranking and visibility. A first-position ranking no longer guarantees inclusion in the synthesized answer that most users actually read.

The brands that close this gap are the ones that treat AI Overviews as a distinct measurement and optimization challenge, not a side effect of traditional SEO. That means tracking inclusion probability across prompt sets, diagnosing citation source gaps, restructuring content for extractability, and building entity authority across both owned and third-party channels.

The citation premium is real: brands cited in AI Overviews earn 35% more organic clicks and 91% more paid clicks than non-cited competitors. And the downstream traffic quality is fundamentally different.

Start with your baseline. Run your audit. Then fix what’s actually missing.

FAQ

How often does Google change its AI Overview citations?

Volatility varies significantly by industry. Finance queries can see weekly citation turnover as Google tightens quality filters. Healthcare and B2B tech tend to be more stable. This is why ongoing measurement matters more than periodic audits. A citation you earned this month may not persist next month without continued optimization.
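If you store cited URLs per prompt each week, you can quantify citation turnover directly. This Python sketch defines turnover as one minus the Jaccard similarity of two weeks' citation sets. That definition is an assumption made for this example. The data layout, a dict of prompt to URL list, is hypothetical.

```python
def citation_turnover(week_a, week_b):
    """1 - Jaccard similarity of two weeks' cited-URL sets for one prompt."""
    a, b = set(week_a), set(week_b)
    if not a and not b:
        return 0.0
    return 1 - len(a & b) / len(a | b)

def mean_turnover(prev, curr):
    """Average turnover across all prompts seen in either week."""
    prompts = set(prev) | set(curr)
    if not prompts:
        return 0.0
    return sum(citation_turnover(prev.get(p, []), curr.get(p, []))
               for p in prompts) / len(prompts)
```

A turnover near 0 means the same sources keep getting cited; a value near 1 means the citation set is largely replaced each week.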

Can I optimize for AI Overviews without changing my core SEO strategy?

You can do both in parallel, but they’re not the same. Traditional SEO focuses on PageRank signals and keyword density. AI Overviews optimization focuses on content extractability, entity authority, and schema implementation. Tactics like front-loading answers and FAQ schema support both goals. Tactics like entity linking are specific to the AI layer.

How do I identify which competitors are winning AI Overview placements in my category?

Manual competitive checks give you partial visibility but won’t scale. A structured approach runs the same prompt set against your competitor brand names and compares inclusion rates, position, and sentiment scores. Topify’s Competitor Monitoring feature automates this comparison, showing you how your visibility rate, position index, and sentiment score stack up against specific competitors across the same prompt matrix.
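The structured approach described here can be sketched as a scoreboard built over one shared set of answers. This Python example is a simplified stand-in, not Topify's method:

- Inclusion is a case-insensitive substring match.
- Position is first-mention rank within each answer.
- Sentiment is left out because it needs a separate classifier.

`competitor_scoreboard` is a hypothetical helper.

```python
def competitor_scoreboard(answers, brands):
    """Return (brand, inclusion_rate, mean_position) rows, best rate first."""
    stats = {b: {"hits": 0, "positions": []} for b in brands}
    for answer in answers:
        text = answer.lower()
        found = {b: text.find(b.lower()) for b in brands}
        # Rank the brands mentioned in this answer by first appearance.
        mentioned = sorted((idx, b) for b, idx in found.items() if idx != -1)
        for rank, (_, b) in enumerate(mentioned, start=1):
            stats[b]["hits"] += 1
            stats[b]["positions"].append(rank)
    board = []
    for b, s in stats.items():
        rate = s["hits"] / len(answers) if answers else 0.0
        pos = sum(s["positions"]) / len(s["positions"]) if s["positions"] else None
        board.append((b, rate, pos))
    board.sort(key=lambda row: row[1], reverse=True)
    return board
```

Because every brand is scored against the same answers, differences in rate or position reflect how the AI treats each brand, not differences in which prompts were checked.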

Is AI Overviews optimization different from GEO (Generative Engine Optimization)?

Related but not identical. AI Overviews optimization refers specifically to Google’s AI-generated summary feature at the top of search results. GEO is a broader discipline covering how brands appear across all generative AI systems, including ChatGPT, Perplexity, and Gemini. The content and technical principles overlap significantly, but GEO involves prompt tracking across multiple platforms, not just Google.