Your content might be getting cited by ChatGPT, Perplexity, or Google AI Overviews right now. You’d have no idea.

That’s not a hypothetical. Zero-click searches already account for 69% of all queries, up from 56% just a year ago. When Google triggers an AI Overview, the click-through rate for the top organic result drops by 58% to 61%. The traffic didn’t disappear. It got redirected to whoever AI decided to cite.

The brands winning in this shift aren’t the ones with the highest rankings. They’re the ones who know exactly when and where they’re being cited, and why.

Here’s how to build that visibility across all three major AI platforms.

AI Citations Are Now a Traffic Source. Most Brands Still Don’t Track Them.

Being cited by an AI platform isn’t just a credibility signal. It’s a revenue driver.

Sources cited in Google AI Overviews earn 35% more organic clicks and 91% more paid clicks than non-cited competitors on the same query. And users arriving from AI platforms aren’t casual browsers: they generate 23x more signups relative to their traffic share compared to traditional organic visitors.
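One way to read "relative to their traffic share" is as a ratio: the channel's share of signups divided by its share of sessions. The sketch below works through that reading with made-up numbers. The function name and the 0.5% / 11.5% inputs are illustrative assumptions, not figures from any study.

```python
def relative_conversion_index(traffic_share, signup_share):
    """Ratio of a channel's share of signups to its share of traffic.

    Both arguments are fractions between 0 and 1. A result of 1.0 means the
    channel converts exactly in proportion to the traffic it sends.
    """
    if traffic_share <= 0:
        raise ValueError("traffic_share must be positive")
    return signup_share / traffic_share


# Hypothetical inputs: AI referrals are 0.5% of sessions but 11.5% of signups.
index = relative_conversion_index(traffic_share=0.005, signup_share=0.115)
print(f"AI referrals convert at {index:.0f}x their traffic share")
```

Running the same calculation on your own analytics export is a quick way to see whether AI-referred visitors over-index for your site.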

Legacy SEO tools can’t see any of this. Rank trackers check where a URL sits in a list. They can’t detect when AI uses your content to build a synthesized answer without linking to you, or when a competitor is getting cited for every prompt in your core category.

That’s the gap. And it’s widening.

What “AI Citation” Actually Means on Each Platform

The phrase “AI citation” covers three distinct architectures. Getting them confused leads to the wrong tracking approach.

| Feature | ChatGPT (Browsing) | Perplexity AI | Google AI Overviews |
|---|---|---|---|
| Retrieval Type | Bing Search API | Hybrid (Bing + Cache) | Google Search Index |
| Citation Style | Footnotes / Icons | Numbered Inline Links | Carousel / Source Links |
| Sources Per Answer | 3 to 6 | 3 to 4 | 6.8 to 13.3 |
| Update Speed | Real-time | Real-time | Moderate (indexed) |
| Selection Focus | Authority & readability | Entity clarity & BLUF | E-E-A-T & extractability |

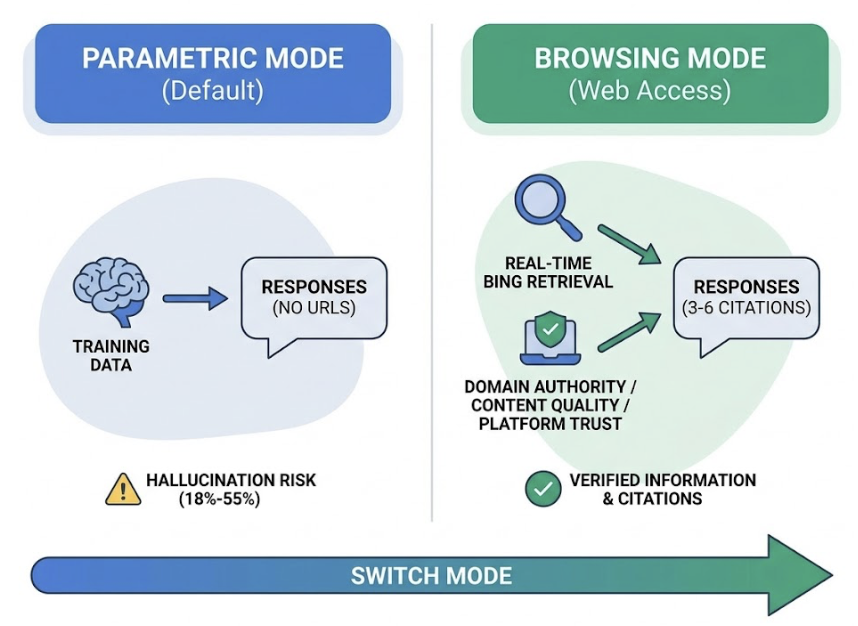

ChatGPT operates in two modes. Its default “parametric” mode draws from training data and doesn’t cite real URLs, with hallucination rates between 18% and 55%. Switch to Browsing Mode and the architecture changes entirely: real-time retrieval from Bing, 3 to 6 clickable citations per response, and a selection process weighted toward domain authority (40%), content quality (35%), and platform trust (25%).

Perplexity is RAG-native (retrieval-augmented generation). Every answer carries citations. That makes it structurally more transparent than standard LLMs, but also more selective: a single query might retrieve 60+ sources, yet only 3 to 4 make the final answer.

Google AI Overviews sits inside the search index itself, using Gemini to synthesize multiple sources simultaneously. It cites more sources per answer than either ChatGPT or Perplexity, but the selection logic is built around extractability, not just rank.

How to Check If ChatGPT Is Citing Your Content

The manual approach is straightforward: open a ChatGPT session with Browsing enabled, run a prompt your target customer would ask, and check the Sources panel. If your domain appears, you’re cited.

The problem isn’t the method. It’s the math.

ChatGPT’s responses are non-deterministic. The same prompt generates different sources across different sessions. A single check is a snapshot of one instance, not a reliable indicator of your actual inclusion probability across hundreds of regenerations.
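To make "inclusion probability" concrete, here is a minimal sketch that turns a log of repeated checks into an estimate with an uncertainty range. It assumes you have already recorded, for each run of the same prompt, whether your domain appeared in the sources. The function name and the 12-of-40 sample are hypothetical, and the Wilson score interval is just one reasonable choice for small samples.

```python
import math


def inclusion_probability(cited_flags, z=1.96):
    """Estimate how often a domain is cited across repeated runs of one prompt.

    cited_flags: list of booleans, one per run (True = domain was cited).
    Returns (point_estimate, lower_bound, upper_bound), where the bounds
    come from a Wilson score interval (z=1.96 is roughly 95% confidence).
    """
    n = len(cited_flags)
    if n == 0:
        raise ValueError("need at least one run")
    p = sum(cited_flags) / n
    denom = 1 + z**2 / n
    centre = (p + z**2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return p, max(0.0, centre - half), min(1.0, centre + half)


# Hypothetical log: the domain was cited in 12 of 40 regenerations.
runs = [True] * 12 + [False] * 28
p, lo, hi = inclusion_probability(runs)
print(f"cited in {p:.0%} of runs (interval {lo:.0%} to {hi:.0%})")
```

The width of the interval is the point: with only a handful of runs it stays wide, which is why a single check says so little.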

Content updated within the past 30 days gets 3.2x more citations in Browsing Mode. Which means stale content that showed up last month might already be gone.

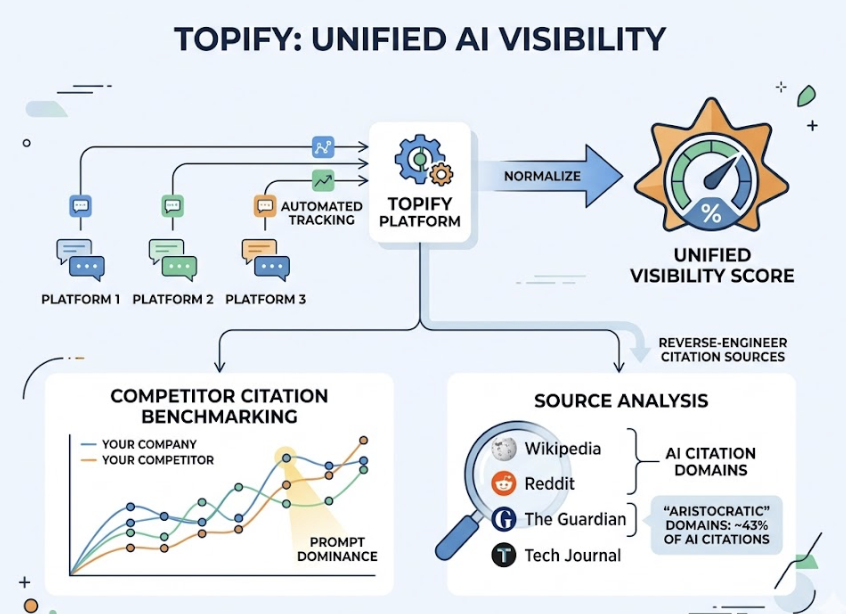

This is where Topify’s Source Analysis changes the math. Instead of running one test prompt, Topify runs thousands of relevant prompts across ChatGPT automatically, logs every citation event, and surfaces your domain’s inclusion probability over time. It’s the difference between checking the weather once and reading a 30-day forecast.

Tracking Citations in Perplexity: What the Numbers Actually Tell You

Perplexity handles far less volume than ChatGPT (roughly 780 million queries per month vs. 2.5 billion prompts per day), but its audience skews heavily toward research-oriented, high-intent buyers. Being cited there carries real commercial weight.

The platform uses an L3 XGBoost reranker to decide which sources earn a spot in the final answer. Two signals matter most:

BLUF (bottom line up front) rule: 90% of top citations come from content that answers the query directly within the first 100 words. Perplexity’s model doesn’t have patience for slow-building articles.

Schema markup: Pages with FAQ or Article JSON-LD schema see a 47% top-3 citation rate, compared to 28% for pages without it. That’s not a marginal difference.
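For the schema signal, a minimal sketch of FAQ markup generated from question and answer pairs might look like this. It follows the schema.org `FAQPage` vocabulary (`mainEntity`, `Question`, `acceptedAnswer`). The helper name and the sample Q&A are placeholders, and nothing here guarantees a citation.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


# Placeholder Q&A; swap in the questions your page actually answers.
markup = faq_jsonld([
    ("Can I track AI citations for free?",
     "Manual tracking is free, but a single check is not a reliable sample."),
])

# Wrap the JSON in the script tag that goes into the page's HTML.
script_tag = (
    '<script type="application/ld+json">\n'
    + json.dumps(markup, indent=2)
    + "\n</script>"
)
print(script_tag)
```

Keep the markup in sync with the visible page copy; the questions and answers in the JSON should match what a reader sees.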

The difference between being cited #1 vs. #5 in Perplexity

Perplexity doesn’t display citations as a ranked list, but position still matters. “Primary Sources” appear in the opening paragraph of the synthesized answer. “Supporting Citations” appear later and attract significantly less attention. Moving from a supporting slot to a primary slot is the difference between being a reference and being the answer.

Topify’s Visibility Tracking shows where your citations appear within Perplexity responses, not just whether they appear. That position data is what turns tracking into optimization.

Google AI Overviews Citations Are Different. Here’s Why That Matters.

Google AI Overviews now appear on 13.14% of all U.S. desktop searches, and for informational queries that number reaches 80% to 88%. When an AIO triggers, the average zero-click rate for that query hits 83%.

Here’s what most brands get wrong about AIO: they assume it favors top-ranked pages.

It doesn’t.

Analysis of over 4 million AIO citations shows that only 38% of cited pages come from the top 10 search results for that query. More than 60% come from pages ranking at position 40 or lower. This happens because of “Query Fan-Out”: Google’s AI expands your original question into multiple related sub-queries, pulling from a much wider pool of content than standard ranking would reach.

For AIO, the winning factor is extractability. Content needs to be structured as standalone blocks of 40 to 60 words that lead with a direct answer, include a concrete data point, and can be parsed without context from the surrounding page. Pages that combine text with original images and video see a 156% higher selection rate in AIO.
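A rough way to audit existing pages against that format is a small script that checks each block's length and whether it contains a number. This is a sketch under simple assumptions: the function name is hypothetical, "data point" is approximated as "contains a digit", and it cannot tell whether the first sentence actually answers the query.

```python
import re


def check_block(text, min_words=40, max_words=60):
    """Rough extractability check for one content block.

    Flags whether the block fits the word range and contains at least one
    digit. Whether the opening sentence answers the query still needs a
    human read.
    """
    words = text.split()
    return {
        "word_count": len(words),
        "length_ok": min_words <= len(words) <= max_words,
        "has_data_point": bool(re.search(r"\d", text)),
    }


# Sample block written for this example.
block = (
    "Google AI Overviews cite pages from well outside the top 10. "
    "In one analysis of over 4 million citations, only 38% of cited pages "
    "ranked in the top 10 for the query. Structuring content as short, "
    "self-contained blocks gives each passage a chance to be pulled into "
    "an answer on its own."
)
print(check_block(block))
```

Run it over each paragraph under a heading and rewrite the ones that fail both checks first.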

Topify’s Visibility Tracking monitors your brand’s appearance in AI Overview responses across the queries that matter to your category, including prompts where you’re not showing up but competitors are.

Stop Tracking 3 Platforms Separately. Use One AI Citation Tracker.

Managing citations manually across ChatGPT, Perplexity, and Google AIO creates three separate data silos and burns team hours that don’t compound into results.

The efficiency gap is hard to ignore:

| Metric | Manual Tracking | Topify Automation |
|---|---|---|
| Audit Speed | 5 to 10 minutes per prompt | Under 1 second per prompt |
| Error Rate | High (human error) | Under 1% |
| Update Cadence | Monthly (at best) | Daily or hourly |
| Statistical Power | Single result snapshot | Inclusion probability across sessions |
| Actionability | Qualitative notes | One-click optimization |

Citation sources churn at 40% to 60% monthly. A brand cited reliably in October might be completely displaced by November. Monthly manual audits can’t catch drift at that speed.
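If you keep a list of cited domains per period, churn is easy to measure yourself. The sketch below assumes one definition of churn (the share of last period's cited domains that are missing this period), which may differ from how any given study computes it. The domain names are placeholders.

```python
def citation_churn(previous, current):
    """Compare the cited domains from two periods for the same prompt set.

    Returns (churn_rate, dropped, added), where churn_rate is the share of
    the previous period's domains that no longer appear.
    """
    previous, current = set(previous), set(current)
    if not previous:
        raise ValueError("previous period has no cited domains")
    dropped = previous - current
    added = current - previous
    return len(dropped) / len(previous), sorted(dropped), sorted(added)


# Placeholder data for two monthly snapshots.
october = {"example.com", "wikipedia.org", "reddit.com",
           "vendor-a.example", "journal.example"}
november = {"wikipedia.org", "reddit.com", "vendor-b.example",
            "review-site.example", "journal.example"}

rate, dropped, added = citation_churn(october, november)
print(f"churn: {rate:.0%}, dropped: {dropped}, added: {added}")
```

The `dropped` and `added` lists matter as much as the rate: they show who was displaced and who took the slot.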

Topify runs automated prompt tracking across all three platforms, normalizes the results into a unified Visibility Score, and surfaces Competitor Citation Benchmarking so you can see exactly which prompts a competitor dominates and what’s driving their edge. The Source Analysis feature reverse-engineers the specific third-party domains driving competitor citations, including the “aristocratic” domains like Wikipedia, Reddit, and industry journals that account for 43% of all AI citations.

That’s not a report you read once. It’s a live signal you act on weekly.

What to Do With Citation Data After You Have It

Citation data is only useful if it changes what your team produces. Take these three actions immediately after your first audit.

Identify which content types are getting cited, then double down. If your pricing pages are getting cited but your blog posts aren’t, that’s not a content quality problem. It’s a format signal. AI models are 6.5x more likely to cite a brand through external authoritative sources than through its own website, which means investing in third-party placements on Reddit, review sites, and industry publications often outperforms publishing more owned content.

Close the gaps where competitors win. Use Citation Gap Analysis to find prompts where competitors show up and you don’t. If competitors are winning because they have original research or proprietary statistics, that’s the content gap to close. Content that contains 32% more explicit concepts than average is significantly more likely to earn a citation.

Restructure high-performing pages into extractable chunks. The BLUF rule applies across all three platforms: answer the question directly in the first 100 words, include a specific data point, and wrap the block in schema markup. That format change alone can move a page from a supporting citation to a primary one.
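The gap analysis in the second action comes down to a set comparison: for each prompt, is a competitor cited while you are not? Here is a minimal sketch, assuming you have a mapping of prompts to cited domains from your own logs. It is not Topify's implementation, and every name and domain in it is a placeholder.

```python
def citation_gaps(citations_by_prompt, own_domain, competitor_domains):
    """Return prompts where a competitor is cited and own_domain is not.

    citations_by_prompt: dict mapping prompt text to a list of cited domains.
    The result maps each gap prompt to the competitors cited for it.
    """
    competitors = set(competitor_domains)
    gaps = {}
    for prompt, cited in citations_by_prompt.items():
        cited = set(cited)
        winners = cited & competitors
        if winners and own_domain not in cited:
            gaps[prompt] = sorted(winners)
    return gaps


# Placeholder audit data.
citations = {
    "best crm for startups": ["competitor-a.example", "reddit.com"],
    "crm pricing comparison": ["yourbrand.example", "competitor-a.example"],
    "how to migrate crm data": ["wikipedia.org"],
}
gaps = citation_gaps(
    citations,
    own_domain="yourbrand.example",
    competitor_domains=["competitor-a.example", "competitor-b.example"],
)
print(gaps)
```

Prompts where nobody in your category is cited are a separate opportunity; this sketch only surfaces the ones a competitor already owns.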

3 actions to take after your first citation audit

- Pull your top 10 cited pages and identify their shared format (length, structure, data density)

- Run a Competitor Citation report for your top 5 category prompts and map the gap

- Pick your 3 most-visited pages and restructure the opening 100 words to answer the primary query directly

Conclusion

AI citations are no longer a bonus visibility play. They’re a core traffic and conversion channel, one where the gap between tracked brands and untracked ones is widening every month.

The mechanics differ across ChatGPT, Perplexity, and Google AI Overviews, but the underlying principle is the same: the brands that understand where they appear, where they don’t, and why are the ones building compounding authority. The brands that find out six months later are the ones trying to catch up.

Start with an audit. Know your inclusion probability. Then build from there.

FAQ

Can I track AI citations for free?

Manual tracking is technically free, but it’s not reliable. Running tests manually across three platforms costs 5 to 10 minutes per prompt, and without statistical sampling across multiple sessions, a single result tells you little about actual inclusion probability. Paid platforms like Topify start at $99/month and automate what would otherwise take hundreds of hours monthly.

How often should I audit my AI citations?

Citation sources churn at 40% to 60% per month, and the primary narrative inside Google AI Overviews shifts roughly every 90 days. Monthly audits can’t catch that rate of change. High-performing brands have moved to weekly or daily monitoring to catch competitive displacements before they compound.

Does getting cited by AI improve my website traffic?

The impact is non-linear. Overall click volume may drop due to zero-click results, but the traffic that does come through AI citations is substantially higher quality. AI-referred users generate 23x more signups relative to their traffic share, view 50% more pages per session, and have lower bounce rates than traditional organic visitors.

What’s the difference between AI visibility and AI citations?

AI visibility includes both Brand Mentions (your name appears in the AI’s text) and Website Citations (the AI links directly to your URL). Mentions build awareness. Citations drive referral traffic and validate authority. Only 28% of brands achieve both in AI-generated answers.