Your keyword rankings look fine. Traffic is holding steady. The dashboard is green.

And yet, you’re invisible to a growing share of the people searching for exactly what you offer.

That’s the gap most SEO teams haven’t closed. Traditional rank tracking tells you where you stand in Google’s list of blue links. It doesn’t tell you whether you’re showing up in the AI-generated summary sitting above those links, or in the ChatGPT response a prospect pulled up at 11pm before they ever opened a browser tab.

In 2026, that gap is the difference between a brand that wins search and one that just monitors it.

What “SEO Tracking” Actually Means Now (It’s Not Just Keywords Anymore)

For most of the past decade, SEO tracking meant one thing: keyword position. You picked a list of terms, plugged them into a rank tracker, and watched the numbers move.

That model had a clean logic to it. Higher position meant more clicks, which meant more leads. The math was simple.

The math no longer works.

Search in 2026 isn’t a list you climb. It’s a conversation happening across Google AI Overviews, ChatGPT Search, Perplexity, and Gemini. The system answering in that conversation synthesizes information from multiple sources, and whether your brand gets included, cited, or described accurately depends on a different set of signals than traditional rankings do.

Tracking has followed the same split. Teams getting the clearest picture of their search performance now measure two parallel worlds: the traditional SERP and the AI answer layer. Teams that don’t are working from incomplete data.

The metrics that defined SEO tracking for the last decade

Before 2024, the core tracking stack was built around average position, organic CTR, domain authority, and crawl coverage.

These metrics were built around Googlebot and the assumption that ranking high meant getting clicked. Keyword tracking was a forecasting tool: move from position 4 to position 2, and traffic projections followed a predictable curve.

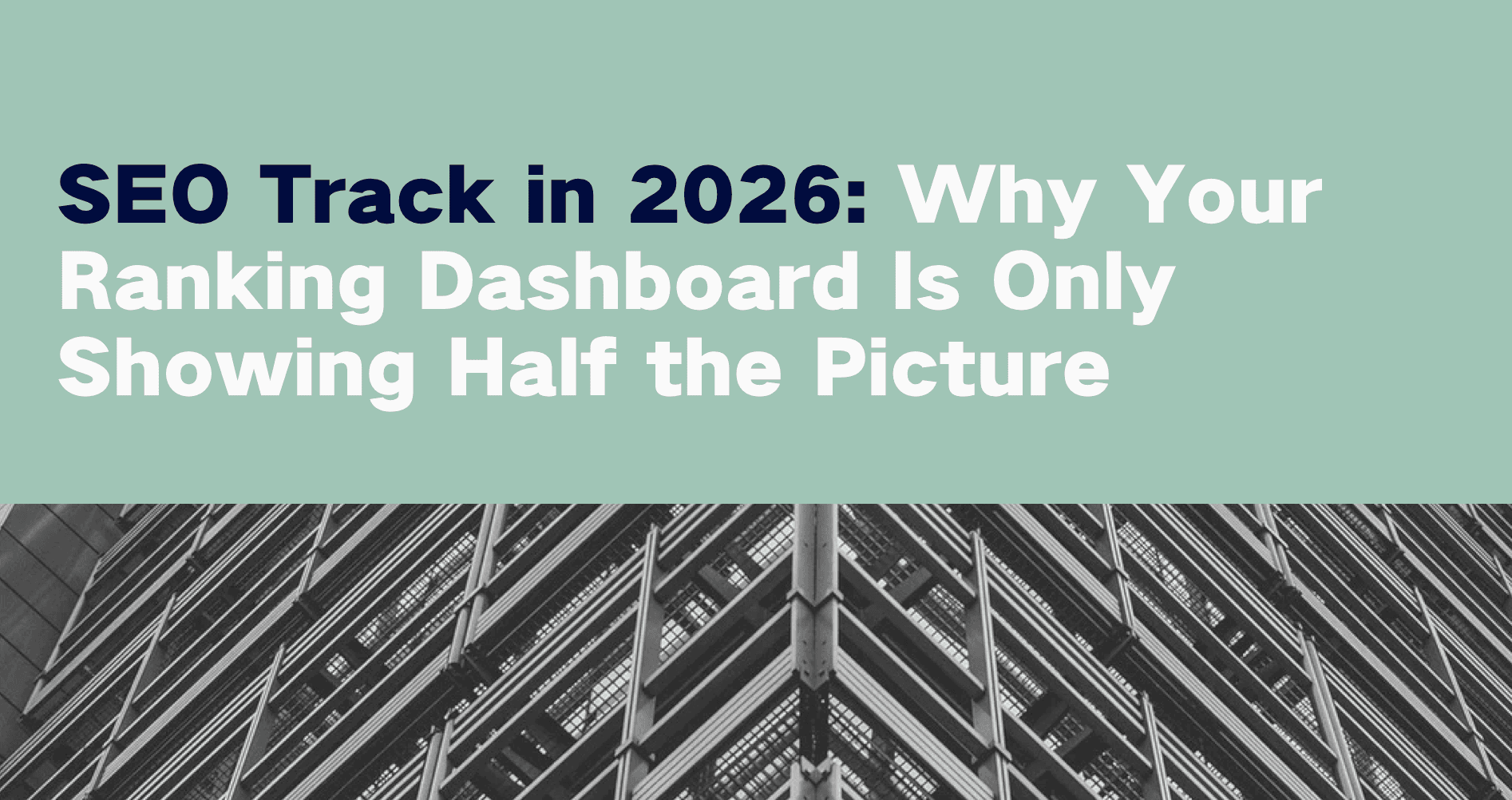

Technical SEO tracking was equally deterministic. You checked indexation rates, fixed crawl errors, confirmed robots.txt wasn’t blocking key pages. Success was measurable and largely platform-agnostic. The whole system assumed Google was the search engine, and a blue link was the destination.

What changed when AI search entered the picture

Zero-click search now accounts for over 65% of all Google searches. On mobile, that figure reaches 77%.

In practice: a user asking for the best project management tool for remote teams doesn’t click through ten results and compare. They read a synthesized paragraph from an AI Overview and, in many cases, stop there. The traditional organic result still exists, but its CTR has collapsed. Where AI Overviews are present, organic click-through rate has dropped by more than 60%, from 1.76% to just 0.61%.

The consequence for SEO tracking is structural. A brand can rank #1 for a high-volume keyword and still capture a fraction of the attention that keyword used to deliver. The impression now lives inside the AI’s answer. Measuring that requires a different approach entirely.

| Measurement Category | Traditional SEO (2015–2023) | AI-Driven SEO (2026) |
|---|---|---|
| Primary Goal | Ranking in the top 10 blue links | Inclusion and citation in synthesized answers |
| Search Intent | Keyword-based, fragmented | Conversational, long-tail, and complex |
| Visibility Surface | List-based SERPs | Multi-surface: AI summaries, social, links |
| Success Metric | Raw traffic and average position | Brand citation share and sentiment accuracy |

The 6 Metrics That Actually Define SEO Performance in 2026

Keyword ranking and position tracking

Rankings still matter. For high-intent commercial queries, like “buy accounting software for startups” or “best HVAC company near me,” traditional results continue to drive strong CTRs.

The approach has changed. Tracking isolated keywords is being replaced by cluster-based tracking, where teams measure visibility across semantically related topic groups tied to specific products or revenue lines. Share of voice within a theme has become more useful than the position of a single term.
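
As a rough illustration of cluster-based tracking, here is a minimal Python sketch of share of voice within a topic cluster. The sample rows, the domain names, and the position weighting are all assumptions made for the example; the article does not prescribe a formula, and a real rank tracker’s export will look different.

```python
from collections import defaultdict

# Hypothetical rank observations: (cluster, keyword, domain, position).
observations = [
    ("payroll", "payroll software for startups", "ours.example", 2),
    ("payroll", "payroll software for startups", "rival.example", 1),
    ("payroll", "best payroll tool", "ours.example", 5),
    ("payroll", "best payroll tool", "rival.example", 3),
    ("invoicing", "invoice automation", "rival.example", 4),
]


def position_weight(position: int) -> float:
    """Assumed weighting: position 1 scores 1.0, position 10 scores 0.1, lower ranks score 0."""
    return max(0.0, (11 - position) / 10)


def share_of_voice(rows):
    """Return {cluster: {domain: share}}; shares within a cluster sum to 1."""
    totals = defaultdict(lambda: defaultdict(float))
    for cluster, _keyword, domain, position in rows:
        totals[cluster][domain] += position_weight(position)

    shares = {}
    for cluster, by_domain in totals.items():
        cluster_total = sum(by_domain.values())
        if cluster_total == 0:
            continue  # nobody ranks in the top 10 for this cluster
        shares[cluster] = {d: w / cluster_total for d, w in by_domain.items()}
    return shares


sov = share_of_voice(observations)
for cluster, by_domain in sov.items():
    print(cluster, {d: round(s, 2) for d, s in by_domain.items()})
```

The point of grouping by cluster is that a missing domain shows up as an absence: in the sample data, `ours.example` has no share at all in the invoicing cluster, which a keyword-by-keyword report can hide.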

The real benchmark: ranking in traditional results while also appearing as a cited source in the AI Overview above them. Brands cited in AI Overviews earn 35% higher CTR than those appearing only in the traditional results below.

Brand visibility rate across search platforms

Search in 2026 is multi-surface. Featured snippets, People Also Ask boxes, knowledge panels, local packs, video carousels, and AI Overviews all carry visibility value independent of whether a user clicks.

Only 360 out of every 1,000 U.S. searches result in a click to the open web. That means the impression itself has become a KPI. Repeated brand exposure in authoritative snippets builds the kind of recognition that drives branded searches later, and branded searches convert at the highest rate.

Visibility rate tracks the percentage of target SERPs where a brand appears in at least one high-impact feature. It’s a more honest measure of actual search presence than keyword position alone.
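
The definition above translates almost directly into code. This is a small sketch, assuming you already have, for each tracked query, the set of SERP features your brand appeared in; the feature labels and sample queries are made up for the example.

```python
# Feature labels are hypothetical; use whatever names your SERP data source emits.
HIGH_IMPACT_FEATURES = {
    "featured_snippet",
    "people_also_ask",
    "knowledge_panel",
    "local_pack",
    "video_carousel",
    "ai_overview",
}


def visibility_rate(brand_features_by_query: dict[str, set[str]]) -> float:
    """Share of tracked SERPs where the brand appears in at least one high-impact feature."""
    if not brand_features_by_query:
        return 0.0
    visible = sum(
        1
        for features in brand_features_by_query.values()
        if features & HIGH_IMPACT_FEATURES
    )
    return visible / len(brand_features_by_query)


tracked = {
    "best hvac company near me": {"local_pack", "organic"},
    "hvac maintenance checklist": {"featured_snippet"},
    "how often to service a furnace": {"organic"},  # blue link only
    "heat pump vs furnace": set(),                   # brand absent
}

rate = visibility_rate(tracked)
print(f"Visibility rate: {rate:.0%}")
```

A plain organic listing does not count toward the rate here, which is what separates it from average position.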

AI citation and source tracking

This is the most significant new metric in 2026. Citation tracking measures how often, and in what context, a brand is referenced in generative AI responses across platforms like ChatGPT, Perplexity, Gemini, and Grok.

Citations are the new backlinks. They represent a retrieval system’s vote of confidence in your content’s authority. Citation frequency varies meaningfully by platform: Grok cites at a rate of 27.01%, driven by social signal velocity; Perplexity at 13.05%, weighted toward recency and structured data; Google AI Mode at 9.09%, shaped by semantic completeness and E-E-A-T signals.

Tracking requires knowing which URLs and domains AI systems are pulling from for your category. Topify’s Source Analysis surfaces exactly which domains AI engines cite when answering prompts relevant to your brand, and which ones are being attributed to competitors instead.
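
For teams that want to see the underlying calculation, here is a hedged sketch of a per-platform citation rate. It assumes you have logged AI responses together with the URLs each one cited; the log format, platform labels, and domains are hypothetical, and this is not a description of how Topify or any other tool computes its numbers.

```python
from collections import defaultdict
from urllib.parse import urlparse

# Hypothetical log of AI responses: the platform queried and the URLs it cited.
responses = [
    {"platform": "perplexity", "cited_urls": ["https://www.ours.example/guide",
                                              "https://en.wikipedia.org/wiki/Payroll"]},
    {"platform": "perplexity", "cited_urls": ["https://rival.example/blog"]},
    {"platform": "chatgpt", "cited_urls": ["https://rival.example/pricing"]},
    {"platform": "chatgpt", "cited_urls": []},
]


def domain_of(url: str) -> str:
    """Extract the hostname and drop a leading 'www.' so variants compare equal."""
    host = urlparse(url).hostname or ""
    return host[4:] if host.startswith("www.") else host


def citation_rate(rows, domain: str) -> dict[str, float]:
    """Per platform: fraction of responses that cite the given domain at least once."""
    cited = defaultdict(int)
    total = defaultdict(int)
    for row in rows:
        total[row["platform"]] += 1
        if any(domain_of(u) == domain for u in row["cited_urls"]):
            cited[row["platform"]] += 1
    return {platform: cited[platform] / total[platform] for platform in total}


rates = citation_rate(responses, "ours.example")
print(rates)
```

Running the same function with a competitor’s domain gives the side-by-side comparison per platform.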

Competitor position benchmarking

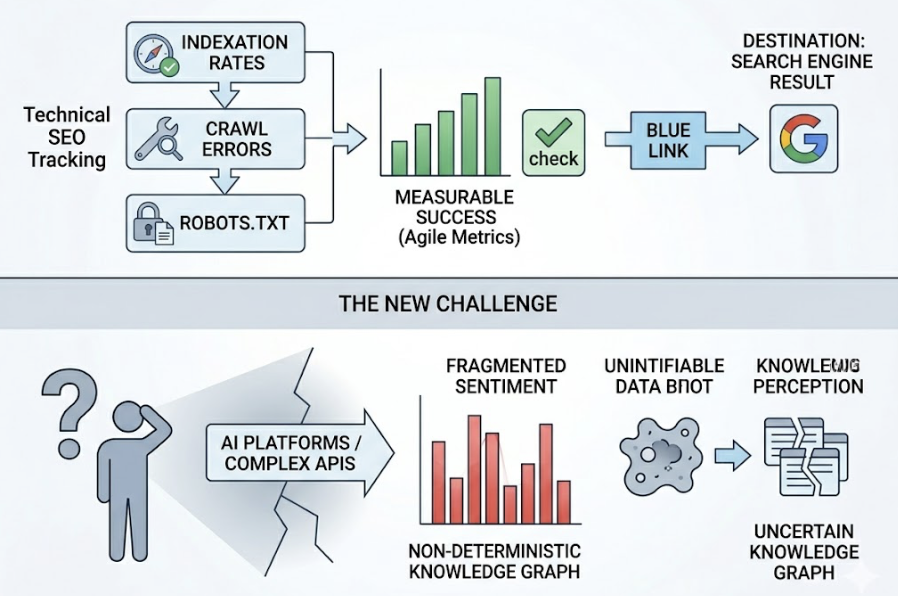

Traditional SEO benchmarking compared keyword rankings side by side. In 2026, the more important comparison is AI citation share by topic cluster.

If a competitor dominates citations for “enterprise cybersecurity trends,” it signals stronger topical authority in the eyes of the LLM. That’s not a backlink gap — it’s a content and credibility gap that plays out inside the model’s internal representation of the category.

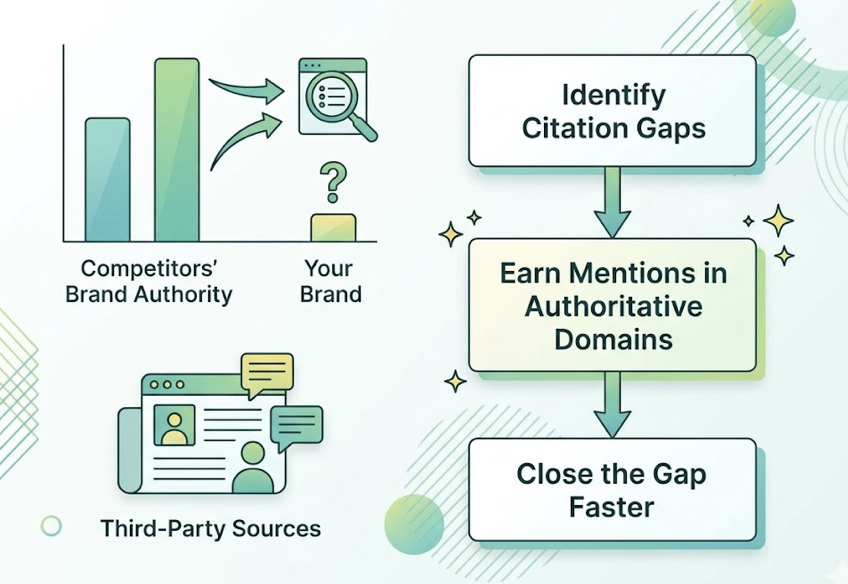

Topify’s Competitor Monitoring tracks this in real time, showing not just where competitors appear relative to your brand, but which third-party sources are validating them. Those sources become targets. Citation gaps often close faster than backlink gaps because the pipeline is shorter: earn a mention in the right authoritative domain, and the model’s next retrieval cycle picks it up.

Sentiment in AI-generated answers

AI systems don’t just mention brands. They describe them. The tone of those descriptions, positive, neutral, or negative, accumulates into something like a reputation layer across the models.

Teams in 2026 track what’s called perception drift: the gradual shift in how AI describes a brand’s quality, pricing, or market positioning. If Perplexity starts describing a SaaS tool as having a “steep learning curve” or “outdated pricing,” that framing can persist and spread before any internal team flags it.

Topify’s Sentiment Analysis assigns a 0-100 sentiment score across AI platforms, giving teams an early warning system before perception drift compounds. Positive sentiment is equally useful — it surfaces what the market is already validating about a brand, often before the internal team notices.
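
One simple way to turn a 0-100 score series into a drift signal is to compare a recent window against an earlier baseline. The sketch below assumes weekly scores exported from whatever tool you use; the window sizes, the sample scores, and the alert threshold are illustrative choices, not Topify’s method.

```python
from statistics import mean


def perception_drift(scores: list[float], baseline_n: int = 4, recent_n: int = 4) -> float:
    """Mean of the most recent window minus mean of the earliest window.

    Negative values mean the AI's description of the brand is trending less favorable.
    """
    if len(scores) < baseline_n + recent_n:
        raise ValueError("not enough observations to compare two windows")
    return mean(scores[-recent_n:]) - mean(scores[:baseline_n])


# Hypothetical weekly sentiment scores (0-100) for one brand on one platform.
weekly_scores = [72, 74, 71, 73, 70, 66, 63, 61, 60, 58]

drift = perception_drift(weekly_scores)
ALERT_THRESHOLD = -5  # assumed tolerance; tune to how noisy your scores are

if drift <= ALERT_THRESHOLD:
    print(f"Perception drift alert: {drift:+.1f} points versus baseline")
```

Comparing windows rather than single readings keeps one unusual week from triggering an alert.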

Conversion visibility rate

Visibility metrics only matter if they connect to revenue. Conversion Visibility Rate (CVR) focuses tracking on the queries that actually drive leads and pipeline, not just impressions.

The data here is direct: AI-referred visitors deliver 4.4x higher conversion value than general organic traffic. These are users who’ve already received a vetted recommendation from a system they trust. They arrive pre-qualified.

Topify’s CVR metric maps this path, showing which AI-referred sessions are generating commercial outcomes and attributing visibility effort to business results. It’s the metric that makes the investment defensible when total click volume is compressing.

Why Google Rank Alone No Longer Tells You If You’re Winning

A #1 ranking in 2026 is still worth having. It’s just not the signal it used to be.

AI Overviews now routinely exceed 1,200 pixels in height. On a desktop viewport 900 pixels tall, that pushes the traditional #1 result below the fold before a user scrolls. On mobile, it’s further down still.

This is visual displacement. The brand cited inside the AI Overview earns the 35% CTR lift. The #1 organic result, uncited and sitting below the fold, earns significantly less than its position suggests.

The numbers confirm it. Nearly 60% of all searches now end without a click to any destination site. Organic CTR collapses by 61% where AI Overviews appear. On mobile, the zero-click rate hits 77%. Tracking only rank misses all of this. It reports the position of a blue link without measuring whether the AI is citing the brand, describing it accurately, or mentioning it at all.

Bottom line: rank tells you where your link lives. It doesn’t tell you whether the AI trusts you enough to quote you.

The Tools That Cover Both Traditional and AI SEO Tracking

Traditional platforms like Semrush and Ahrefs remain useful for technical audits, backlink intelligence, and keyword gap analysis. In 2026, they’ve integrated basic AI visibility toolkits, but these generally cover AI Overview presence without the citation depth or sentiment accuracy that full GEO monitoring requires.

Site speed matters more than most teams realize. A Largest Contentful Paint (LCP) under 0.4 seconds correlates with 3x more AI citations, which suggests technical performance is relevant to AI visibility, not just user experience.

The more complete picture comes from purpose-built AI visibility platforms. Topify is built specifically for this layer, tracking brand performance across ChatGPT, Gemini, Perplexity, DeepSeek, and other major AI engines through seven metrics: visibility, sentiment, position, volume, mentions, intent, and CVR.

One operational challenge worth flagging: AI responses for the same prompt change up to 70% of the time. A single snapshot doesn’t tell you much. Systematic prompt execution, running the same queries repeatedly to establish trend data rather than point-in-time readings, is what separates reliable SEO tracking from guesswork.
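
Here is a minimal sketch of what systematic prompt execution can look like: run one prompt many times and report a mention rate with a rough standard error instead of a single yes/no. The `ask` callable is a placeholder for whichever AI client you use. No real API is called here, and the canned answers and brand names exist only to make the example runnable.

```python
import math
from itertools import cycle
from typing import Callable


def mention_rate(ask: Callable[[str], str], prompt: str, brand: str,
                 runs: int = 20) -> tuple[float, float]:
    """Run the same prompt `runs` times; return (mention rate, standard error)."""
    hits = sum(brand.lower() in ask(prompt).lower() for _ in range(runs))
    rate = hits / runs
    stderr = math.sqrt(rate * (1 - rate) / runs)  # binomial standard error
    return rate, stderr


# Stand-in for a real AI client: cycles through canned answers to mimic variation.
_canned = cycle([
    "Top picks: Acme, Globex.",
    "Consider Globex or Initech.",
    "Acme is a solid choice.",
    "Many teams use Initech.",
])


def fake_ask(prompt: str) -> str:
    return next(_canned)


rate, stderr = mention_rate(
    fake_ask, "best project management tool for remote teams", "Acme", runs=20
)
print(f"Mention rate: {rate:.0%} ± {stderr:.0%}")
```

With responses this variable, the standard error is a reminder of how little a handful of runs can tell you; logging the rate on a fixed schedule is what turns it into trend data.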

How to Build an SEO Tracking System That Won’t Be Outdated Next Year

Step 1 – Define your tracking scope (Google + AI)

Start by auditing what your current reports actually measure. Separate vanity metrics from business drivers.

Then expand the scope to include AI-specific visibility. Identify your true SEO competitors, which may include publishers like Reddit or Wikipedia that dominate AI citation slots for your category, not just direct business rivals. Technical readiness matters here too: confirm your robots.txt isn’t blocking AI crawlers like ChatGPT-User, and that key pages use server-side rendering rather than JavaScript-only builds so AI systems can actually parse the content.
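
The robots.txt check is easy to automate with Python’s standard library. In this sketch, the robots.txt content and the URL are invented for the example. ChatGPT-User comes from the text above; GPTBot and PerplexityBot are additional user-agent tokens added here as assumptions, so confirm the current names in each vendor’s documentation before relying on them.

```python
from urllib.robotparser import RobotFileParser

# Example robots.txt content; in practice, fetch your site's live file instead.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/

User-agent: ChatGPT-User
Disallow: /
"""

# ChatGPT-User is named in the article; the other two are assumed additions.
AI_AGENTS = ["ChatGPT-User", "GPTBot", "PerplexityBot"]


def blocked_agents(robots_txt: str, url: str, agents: list[str]) -> list[str]:
    """Return the user agents that robots.txt forbids from fetching `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, url)]


blocked = blocked_agents(ROBOTS_TXT, "https://www.example.com/pricing", AI_AGENTS)
print("Blocked AI crawlers:", blocked or "none")
```

This only checks robots.txt rules. It says nothing about whether a page renders without JavaScript, which needs a separate check.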

Step 2 – Set benchmark metrics before you optimize

Before any optimization work starts, establish a baseline across 50-100 high-intent prompts on ChatGPT, Perplexity, Gemini, and Google AI Mode.

Track citation rate, attribution frequency, and sentiment baseline. Also track the verification tax: the industry average is 4.3 hours per week spent by team members checking AI-generated content for brand accuracy, at an annual cost of roughly $14,200 per employee. That number quantifies exactly how much manual oversight a solid tracking system needs to reduce.

Content freshness is a baseline variable too. Citations drop sharply for content older than three months. The refresh cadence needs to be built into the plan before optimization begins.
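
A refresh cadence is easier to keep when stale pages are flagged automatically. The sketch below assumes you can export a last-updated date per URL (from a CMS or sitemap, for example) and treats “three months” as 90 days; the paths and dates are made up.

```python
from datetime import date, timedelta


def stale_pages(last_updated: dict[str, date], today: date,
                max_age_days: int = 90) -> list[str]:
    """Return URLs whose last update is older than `max_age_days`, sorted by URL."""
    cutoff = today - timedelta(days=max_age_days)
    return sorted(url for url, updated in last_updated.items() if updated < cutoff)


# Hypothetical export of page paths and their last-updated dates.
pages = {
    "/pricing": date(2026, 2, 10),
    "/guide": date(2025, 10, 15),
    "/compare": date(2025, 12, 20),
}

to_refresh = stale_pages(pages, today=date(2026, 3, 1))
print("Needs refresh:", to_refresh)
```

Passing `today` explicitly keeps the check reproducible, so the same report can be rerun for any baseline date.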

Step 3 – Monitor competitor positions in both channels

Ongoing monitoring should focus on fan-out queries. When an AI receives a complex prompt, it breaks it into smaller sub-queries before synthesizing an answer. Tracking which competitors rank for those fragments gives a clear map of where authority is being lost, and where content gaps can be closed.

Track citation gaps alongside rank gaps. These are the authoritative third-party domains — industry journals, analyst reports, community platforms — that AI systems rely on and that don’t yet mention your brand. Closing those gaps is often faster than closing traditional link gaps, and the downstream effect on AI citation frequency is direct.
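
At its simplest, a citation gap list is a set difference: domains AI systems cite for your category, minus domains that already mention your brand. The sketch below assumes both inputs come from your own monitoring exports; the domains, counts, and minimum-citation cutoff are hypothetical.

```python
def citation_gaps(cited_counts: dict[str, int], mentions_us: set[str],
                  min_citations: int = 2) -> list[tuple[str, int]]:
    """Domains AI systems cite for the category that do not yet mention the brand.

    Sorted by citation count (highest first), then by domain name.
    """
    gaps = [
        (domain, count)
        for domain, count in cited_counts.items()
        if domain not in mentions_us and count >= min_citations
    ]
    return sorted(gaps, key=lambda item: (-item[1], item[0]))


# Hypothetical counts of how often each domain was cited across tracked prompts.
cited_for_category = {
    "reddit.com": 14,
    "wikipedia.org": 9,
    "industryjournal.example": 6,
    "smallblog.example": 1,
}
domains_mentioning_us = {"wikipedia.org"}

targets = citation_gaps(cited_for_category, domains_mentioning_us)
print(targets)
```

The minimum-citation cutoff keeps one-off sources out of the outreach list, so effort goes to the domains AI systems lean on most.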

Conclusion

The ranking dashboard isn’t obsolete. It’s incomplete.

It captures the visible layer of search: the traditional links that users are increasingly bypassing. What it doesn’t capture is whether your brand is the source the AI trusts to answer a user’s question.

In 2026, that’s where discovery happens. A user asks a complex question. An AI synthesizes an answer. The brand cited inside that answer earns the trust transfer. Tracking that process requires measuring citation share, sentiment accuracy, and AI position alongside traditional rank data.

The teams building that tracking system today won’t be scrambling to rebuild it next year. Search volume is fragmenting across ChatGPT, Perplexity, and YouTube. The window to establish AI citation authority before competitors do is narrowing. Brands that treat AI visibility as a measurable, manageable channel now are the ones that will own it.

FAQ

What’s the minimum prompt set needed to establish a reliable AI citation baseline?

50-100 high-intent prompts across your primary platforms is a workable starting point. The goal is enough volume to surface trends rather than reading too much into individual responses, which can change up to 70% of the time for the same prompt.

Does content length affect AI citation rates?

Structure matters more than length. AI systems cite content that directly answers a specific question in a retrievable format — a clearly labeled definition, a step-by-step process, a structured comparison. Long content that buries the answer doesn’t outperform a well-structured 600-word page.

How often should content be refreshed to maintain AI visibility?

Quarterly at minimum. AI models show strong recency bias, and citations drop sharply for content older than three months. High-priority topics warrant monthly audits.

Is zero-click search always bad for ROI?

Not necessarily. AI citations function like brand placements: users who see a brand described as the top recommendation for a category often conduct a branded search later. Those visits convert at a significantly higher rate, which typically offsets the reduction in raw click volume.

What is “perception drift” and how do you reverse it?

Perception drift is the gradual shift in how AI systems describe a brand’s quality, pricing, or positioning. Reversing it involves publishing updated content that reframes the relevant narrative, earning mentions in high-trust third-party sources carrying the corrected framing, and monitoring sentiment scores to confirm the shift is registering across platforms.

Why do AI systems cite Reddit and Wikipedia so frequently?

AI models prioritize sources with deep community validation and structured information. Wikipedia provides a high-trust entity database. Reddit offers first-hand human experience and reviews, which are core signals within the E-E-A-T framework that modern search algorithms prioritize.