Your client ranks #2 on Google. Traffic is stable. The report looks clean.

Then the client asks: “Why aren’t we showing up when people ask ChatGPT for a recommendation?”

You don’t have an answer. Your rank tracker doesn’t either.

That’s the blind spot. And it’s getting harder to ignore.

Rank Trackers Were Built for a Different Internet

For two decades, rank tracking worked because search had one logic: type a query, get a list of links, click the most relevant one. Position 1 meant visibility. Position 10 meant you’d better optimize.

That logic was built for Google’s “ten blue links” architecture, and it still holds there. The problem is that architecture now represents a shrinking share of where your clients’ audiences actually search.

AI search doesn’t return a list. It synthesizes a single answer. There’s no Position 1 to chase, no CTR to optimize for. The brand either gets mentioned or it doesn’t.

Traditional rank trackers measure the battle for the link. AI search is a battle for the mention. Research shows only 12% of sources cited by ChatGPT overlap with Google’s top 10 results, meaning strong organic rankings offer almost no guarantee of AI visibility. These are two separate competitions, and most agency reports only cover one.

Your Clients Are Searching on AI More Than You Think

This isn’t early adopter behavior anymore.

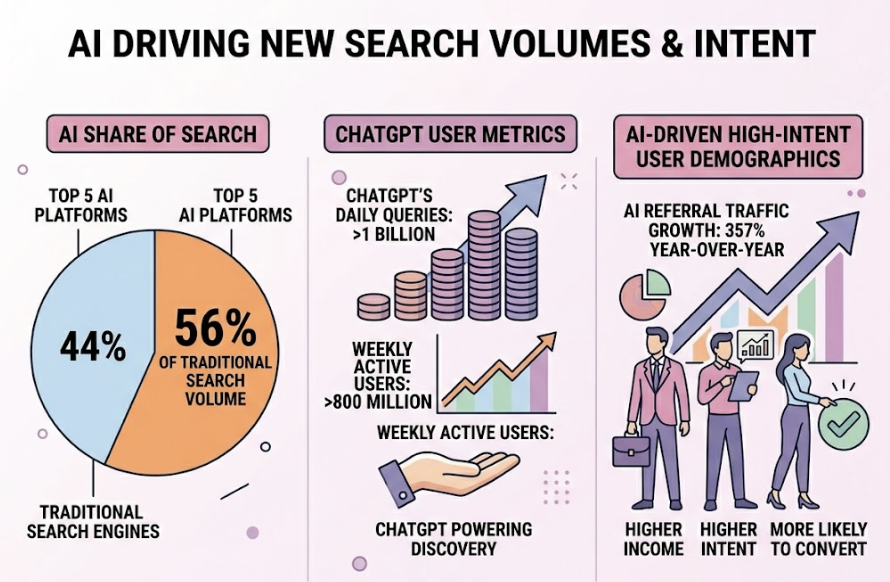

The top 5 AI platforms now account for 56% of traditional search engine volume. ChatGPT alone processes over 1 billion queries daily across 800 million weekly active users. AI referral traffic grew 357% year-over-year, and the users driving that growth skew toward exactly the demographics your clients want to reach: higher income, higher intent, more likely to convert.

Here’s what makes this commercially urgent for agencies: AI search visitors convert at 4.4 times the rate of traditional organic traffic. The conversational format pre-qualifies buyers before they ever reach a website.

Your clients are losing high-converting traffic to a channel you’re not reporting on. That’s not a data gap. That’s a revenue gap.

“Ranking” Means Something Different in AI Search

In Google, rank is a position: 1 through 100, and largely stable from one session to the next.

In AI search, “rank” is a probability. A brand might appear in 40% of responses to a prompt one week and 60% the next, depending on how the model’s retrieval weights shift. There’s no fixed list. There’s a constantly recalculating likelihood of being mentioned.

This changes what agencies need to measure. Visibility in AI search exists across four distinct forms:

- Direct mention: the brand name appears in the synthesized response

- Recommended inclusion: the brand is listed as a top solution for a specific problem

- Citation attribution: the brand’s URL is referenced as a source of authoritative data

- Sentiment framing: the tone the AI uses when describing the brand

Each carries different strategic value. Being recommended first is not the same as being cited as a source, which is not the same as being mentioned neutrally alongside five competitors. Treating all mentions as equal is the same mistake as treating Position 3 and Position 9 as equivalent on Google.

5 Metrics Your Client Reports Are Missing

These aren’t nice-to-have additions. They’re the data your clients need to understand whether their brand exists in the channels shaping purchase decisions.

1. AI Visibility Score

The foundational metric. It measures the percentage of relevant queries where the brand appears in an AI response. A brand with a 10% visibility score is effectively absent for 90% of the audience using AI for research. The calculation: responses mentioning the brand ÷ total tracked responses × 100.
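As a rough illustration, the calculation above can be sketched in a few lines of Python. The brand names and sample responses are invented for the example, and the plain substring match is a simplifying assumption: a production tracker would also need to handle aliases, misspellings, and product names.

```python
def visibility_score(responses: list[str], brand: str) -> float:
    """Percentage of tracked responses that mention the brand (case-insensitive)."""
    if not responses:
        return 0.0
    # Naive substring match; an assumption made for this sketch only.
    mentions = sum(1 for text in responses if brand.lower() in text.lower())
    return mentions / len(responses) * 100


# Hypothetical AI responses collected for one tracked prompt set.
sample_responses = [
    "For small teams, Acme and Globex are both solid options.",
    "Most reviewers recommend Globex for this use case.",
    "Initech is popular, and Acme is a good budget choice.",
    "Globex and Initech lead this category.",
]

print(visibility_score(sample_responses, "Acme"))  # 50.0
```

Here "Acme" appears in two of four responses, so the score is 50%.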

2. Position (Prominence)

Getting mentioned and getting mentioned first are very different outcomes. Position tracks whether the brand appears as the lead recommendation or fifth in a comparison list. It also measures word count share: how much of the AI’s response is actually about the client versus competitors.
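One way to approximate both ideas is sketched below. It is only one possible definition, not how any specific tool computes prominence: order is taken from the first character offset of each brand name, and "word count share" is assumed to mean the share of words in sentences that name the brand. The brands and response text are made up.

```python
import re


def mention_order(response: str, brands: list[str]) -> list[str]:
    """Brands that appear in the response, ordered by first mention."""
    lowered = response.lower()
    found = [(lowered.find(b.lower()), b) for b in brands]
    # find() returns -1 when the brand is absent, so those are dropped.
    return [b for idx, b in sorted(found) if idx >= 0]


def word_share(response: str, brand: str) -> float:
    """Percentage of words that sit in sentences mentioning the brand."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", response.strip()) if s]
    total = sum(len(s.split()) for s in sentences)
    if total == 0:
        return 0.0
    about = sum(len(s.split()) for s in sentences if brand.lower() in s.lower())
    return about / total * 100


# Hypothetical AI response to a discovery prompt.
response = (
    "Globex is the strongest pick for agencies. "
    "Acme is a cheaper alternative. "
    "Initech also works."
)

print(mention_order(response, ["Acme", "Globex", "Initech", "Umbrella"]))
# ['Globex', 'Acme', 'Initech']
print(round(word_share(response, "Acme"), 1))  # 33.3
```

In this sample the client ("Acme") is mentioned second and gets 5 of the 15 words, which tells a very different story than a bare "mentioned: yes".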

3. Sentiment Score

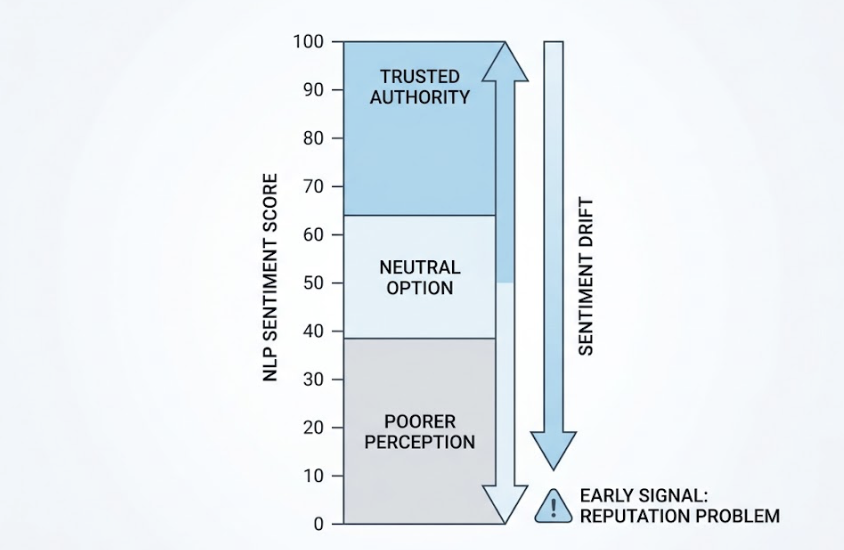

AI platforms describe brands in natural language, which means they assign perception. A 0-100 NLP sentiment score reveals whether the AI characterizes a brand as a trusted authority (85-100), a neutral option (40-64), or something worse. Sentiment drift over time is often the earliest signal of a reputation problem forming in the AI knowledge graph, before it surfaces anywhere a traditional tool would catch it.

4. Conversion Visibility Rate (CVR)

Up to 70.6% of AI referral traffic shows up as “Direct” in Google Analytics because AI platforms often strip referrer headers. That “dark” traffic isn’t random: it converts at 10.21%, compared to 2.46% for standard direct traffic. CVR connects AI mentions to downstream conversion activity, giving clients an ROI case for visibility investment.

5. Source Coverage

AI models ground their answers in sources they trust. Source coverage reveals which domains get cited when an AI discusses the client’s category. If the client is mentioned but the citation points to a competitor’s comparison page or a Reddit thread, the agency knows exactly which content gap to close. JSON-LD structured data implementation increases the likelihood of AI citation by 2.5x, making this an actionable technical lever, not just a reporting metric.
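A minimal sketch of the tallying step, assuming the citation URLs have already been extracted from the AI responses (how that extraction happens depends on the platform and is not shown). All URLs are placeholders.

```python
from collections import Counter
from urllib.parse import urlparse


def source_coverage(cited_urls: list[str]) -> Counter:
    """Count how often each domain is cited, ignoring a leading 'www.'."""
    domains = []
    for url in cited_urls:
        host = urlparse(url).netloc.lower()
        if host.startswith("www."):
            host = host[4:]
        if host:
            domains.append(host)
    return Counter(domains)


# Hypothetical citations collected for prompts about the client's category.
cited_urls = [
    "https://client.example/pricing",
    "https://www.competitor.example/compare",
    "https://www.reddit.com/r/saas/comments/abc",
    "https://competitor.example/blog/best-tools",
]

coverage = source_coverage(cited_urls)
for domain, count in coverage.most_common():
    print(f"{domain}: {count}")
```

If the competitor's domain tops this list while the client's own site barely appears, that is the content gap to prioritize.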

Managing AI Tracking Across 10+ Clients Without Drowning

Tracking one brand across four AI platforms is manageable. Tracking 15 clients across ChatGPT, Gemini, Perplexity, DeepSeek, and AI Overviews simultaneously is a different operational challenge.

The starting point is prompt taxonomy: standardized sets of queries mapped to each client’s category, use case, and buyer stage. A discovery prompt (“What are the best [category] tools for [use case]?”) measures inclusion in the initial consideration set. A comparison prompt (“[Client] vs [Competitor] for [persona]”) tracks relative positioning and sentiment. These templates can be customized per account and run in parallel across platforms.
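The two templates above could be expanded per client along these lines. This is a sketch: the `ClientProfile` fields and the sample client are assumptions made up for illustration, and a real taxonomy would likely add more buyer-stage templates.

```python
from dataclasses import dataclass

# The two prompt templates described above, with named placeholders.
TEMPLATES = {
    "discovery": "What are the best {category} tools for {use_case}?",
    "comparison": "{client} vs {competitor} for {persona}",
}


@dataclass(frozen=True)
class ClientProfile:
    """Hypothetical per-account settings used to fill the templates."""
    client: str
    category: str
    use_cases: tuple[str, ...]
    competitors: tuple[str, ...]
    personas: tuple[str, ...]


def build_prompts(profile: ClientProfile) -> list[tuple[str, str]]:
    """Return (prompt_type, prompt_text) pairs for one client."""
    prompts = []
    for use_case in profile.use_cases:
        text = TEMPLATES["discovery"].format(
            category=profile.category, use_case=use_case
        )
        prompts.append(("discovery", text))
    for competitor in profile.competitors:
        for persona in profile.personas:
            text = TEMPLATES["comparison"].format(
                client=profile.client, competitor=competitor, persona=persona
            )
            prompts.append(("comparison", text))
    return prompts


profile = ClientProfile(
    client="Acme CRM",
    category="CRM",
    use_cases=("small agencies",),
    competitors=("Globex CRM", "Initech CRM"),
    personas=("agency owners",),
)

for kind, text in build_prompts(profile):
    print(f"{kind}: {text}")
```

Keeping the templates in one place means every client is measured against the same question shapes, which makes scores comparable across accounts.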

Running prompts across multiple LLMs simultaneously rather than sequentially reduces report generation time by 60%. That’s the difference between AI tracking being a manual research project and a scalable agency service.
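The parallel pattern might look something like the sketch below, using Python's standard thread pool. `query_platform` is a placeholder, not a real API: an actual version would call each platform's own client or a tracking tool, and the time saved will depend on rate limits and response latency.

```python
from concurrent.futures import ThreadPoolExecutor

PLATFORMS = ["ChatGPT", "Perplexity", "AI Overviews"]


def query_platform(platform: str, prompt: str) -> str:
    """Placeholder for a real platform call (hypothetical stub)."""
    return f"[{platform}] stub answer to: {prompt}"


def run_parallel(prompts, platforms=PLATFORMS, max_workers=8):
    """Send every prompt to every platform concurrently.

    Returns a dict keyed by (platform, prompt).
    """
    jobs = [(platform, prompt) for prompt in prompts for platform in platforms]
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # pool.map preserves the order of jobs, so zip lines results up.
        answers = pool.map(lambda job: query_platform(*job), jobs)
        return dict(zip(jobs, answers))


results = run_parallel(["best CRM tools for small agencies"])
for (platform, prompt), answer in results.items():
    print(platform, "->", answer)
```

Threads suit this workload because each call mostly waits on the network, so the slowest platform, not the sum of all platforms, sets the pace.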

Topify is built for this architecture. Its multi-project dashboard handles parallel tracking across platforms, aggregates visibility, position, sentiment, and CVR data per client, and surfaces competitor movement in real time. The Basic plan ($99/month) covers up to 4 projects and 100 prompts across ChatGPT, Perplexity, and AI Overviews. The Pro plan ($199/month) scales to 8 projects and 250 prompts for agencies managing larger portfolios.

How to Add AI Rankings to Client Reports Without Starting Over

The goal isn’t to replace what’s working. It’s to add a layer that answers the question traditional reports can’t.

The simplest approach: add an “AI Visibility” column alongside existing keyword rank data. The client sees that they rank #2 on Google and hold an 85% mention rate on ChatGPT for the same intent. Or they see that they rank #1 on Google but have 0% AI visibility, meaning the top organic spot offers no leverage in the channel where high-intent buyers are researching.
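In data terms, that extra column is a simple join on keyword or intent. The sketch below assumes rank data arrives as a list of rows and AI mention rates as a keyword-to-percentage mapping; the field names and keywords are invented, and the figures mirror the two scenarios just described.

```python
# Existing rank-tracker export (hypothetical rows).
rank_rows = [
    {"keyword": "best crm for agencies", "google_position": 2},
    {"keyword": "crm pricing comparison", "google_position": 1},
    {"keyword": "crm onboarding checklist", "google_position": 7},
]

# AI mention rate per keyword/intent, in percent (hypothetical values).
ai_visibility = {
    "best crm for agencies": 85.0,
    "crm pricing comparison": 0.0,
}


def add_ai_column(rows, visibility):
    """Return new rows with an 'ai_visibility_pct' field (None if untracked)."""
    return [
        {**row, "ai_visibility_pct": visibility.get(row["keyword"])}
        for row in rows
    ]


for row in add_ai_column(rank_rows, ai_visibility):
    pct = row["ai_visibility_pct"]
    shown = "not tracked" if pct is None else f"{pct:.0f}%"
    print(f'{row["keyword"]:<28} #{row["google_position"]:<3} {shown}')
```

Showing untracked keywords as "not tracked" rather than 0% keeps the report honest about what has and hasn't been measured.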

That’s a conversation starter, not just a data point.

Topify’s seven core indicators map directly to the KPIs clients already track: AI Visibility Score maps to brand market share, Competitor Share of Voice maps to competitive intelligence, and CVR maps to revenue impact. The transition from “here are your Google rankings” to “here’s your complete search presence” doesn’t require a new reporting format. It requires adding a generative layer to the one you already use.

Agencies that package this as a standalone offering can white-label AI visibility management at $300 to $1,000 per client per month, creating a recurring revenue stream built on data that competitors aren’t providing yet.

Conclusion

The blind spot in agency rank tracking isn’t a flaw in the tools. It’s a lag between how search works now and how agencies are still measuring it.

Traditional rank trackers will keep doing what they were built to do. The question is whether that’s still enough to explain what’s happening to a client’s brand in the channels that are shaping their buyers’ decisions.

Adding AI visibility data doesn’t require rebuilding the agency workflow. It requires a parallel measurement layer and the willingness to show clients a more complete picture of their search presence.

The agencies that close this gap first won’t just retain clients longer. They’ll have a service that competitors can’t replicate with existing tools.

FAQ

Does AI rank tracking replace SEO rank tracking?

No. Google still processes the majority of searches, and organic rankings remain a core performance indicator. AI tracking fills the measurement gap for the growing share of research and purchase decisions happening in conversational interfaces. The two reports work together.

How accurate is AI visibility data?

AI responses are probabilistic, so visibility scores reflect sampling across multiple prompt runs rather than a single definitive result. Higher prompt volumes produce more reliable scores. Tools like Topify run queries at scale to stabilize the data before surfacing it in dashboards.
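To see why volume matters, it helps to put an error bar on a sampled mention rate. The sketch below uses the Wilson score interval, a standard statistics technique chosen here for illustration; it is not a description of how Topify or any other tool stabilizes its data, and the run counts are invented.

```python
import math


def wilson_interval(mentions: int, runs: int, z: float = 1.96) -> tuple[float, float]:
    """Approximate 95% interval (for z=1.96) on the true mention rate."""
    if runs == 0:
        return (0.0, 1.0)
    p = mentions / runs
    denom = 1 + z**2 / runs
    centre = (p + z**2 / (2 * runs)) / denom
    half = z * math.sqrt(p * (1 - p) / runs + z**2 / (4 * runs**2)) / denom
    return (max(0.0, centre - half), min(1.0, centre + half))


# Same observed 40% mention rate, at two different sample sizes.
for mentions, runs in [(4, 10), (40, 100)]:
    low, high = wilson_interval(mentions, runs)
    print(f"{mentions}/{runs} runs: {low:.0%} to {high:.0%}")
```

The observed rate is 40% in both cases, but the plausible range is much narrower at 100 runs than at 10, which is why a score built on a handful of prompts should be read with caution.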

How many AI platforms should agencies track?

For most agency clients, starting with ChatGPT, Perplexity, and Google AI Overviews covers the majority of AI search volume. Expanding to Gemini and DeepSeek makes sense for clients with international audiences or enterprise buyers who index toward Google Workspace.

What’s a realistic budget for agency-level AI tracking?

The market has segmented into three tiers: entry-level for 1-5 clients runs $99-$150/month, professional agency-scale for 10-50 clients runs $250-$750/month, and enterprise deployments covering 50+ clients typically start at $1,500/month. Most agencies find the professional tier sufficient to cover a standard client portfolio.