Your domain authority is 70. Your top pages rank in Google’s top three. But when a potential customer opens Perplexity and asks “What’s the best tool for [your category],” your brand isn’t in the answer. Not mentioned once. None of your traditional SEO metrics can tell you why, because none of them were built to measure this.

That’s the gap a Perplexity SEO tracker is designed to close.

Why Perplexity Doesn’t Play by Google’s SEO Rules

Google functions as a pointer. It matches a query to a ranked list of documents based on authority signals, then hands the user a link to click. Perplexity works differently: it reads the web in real time, pulls factual chunks from multiple sources, and synthesizes a direct answer. No ranked links. No click required.

This distinction matters because the inputs that determine visibility differ substantially. Research shows only a moderate correlation between traditional domain authority and the probability of being cited by an AI engine. What Perplexity actually favors are “answer capsules” (concise passages that front-load a direct answer within the first 40 to 60 words), along with high factual density and source transparency.

Ranking number one on Google doesn’t guarantee a place in Perplexity’s answer. That’s a separate game, with separate rules.

The scale of this shift is significant. Perplexity’s monthly query volume more than tripled, from 230 million in mid-2024 to over 780 million by 2025, with monthly active users reaching between 33 and 45 million. A substantial slice of high-intent search traffic now flows through a channel your current toolkit can’t see.

What a Perplexity SEO Tracker Actually Measures

A Perplexity SEO tracker is a monitoring system that automates conversational prompts to Perplexity AI and measures how a brand appears, or doesn’t appear, in the generated answers.

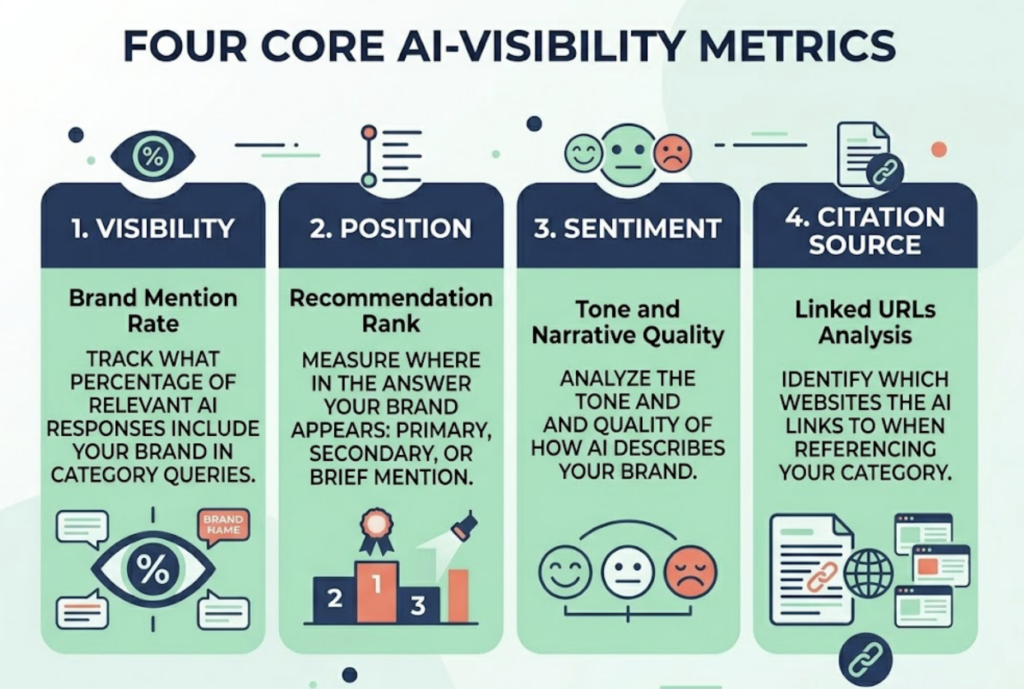

The core metrics cover four dimensions. Visibility, or Brand Mention Rate, tracks what percentage of relevant AI-generated responses include your brand when category or commercial intent questions are asked. Position measures where in the answer your brand appears: as the primary recommendation, a secondary alternative, or a brief background mention. Sentiment analyzes the tone and narrative quality of how the AI describes your brand. Citation Source identifies which URLs the AI is actually linking to when it references your category.

Each of these matters independently. A brand can have high visibility but poor sentiment, consistently appearing as “a reliable option for smaller teams” when the target audience is enterprise. That’s not a win. It’s a positioning problem that only shows up when you track narrative quality, not just presence.
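A rough way to catch this kind of persona mismatch automatically is sketched below. The cue lists, persona names, and function names are hypothetical, chosen only for illustration. Keyword matching is a deliberately crude stand-in for the LLM- or classifier-based narrative analysis a real tracker would more likely use.

```python
import re

# Hypothetical cue lists for illustration only; a production tracker would more
# likely classify narrative with an LLM or a trained model.
PERSONA_CUES = {
    "smb": ["small teams", "smaller teams", "small businesses", "startups", "budget-friendly"],
    "enterprise": ["enterprise", "large organizations", "compliance", "at scale"],
}


def persona_signals(answer: str) -> dict[str, int]:
    """Count whole-phrase persona cues in an AI answer (case-insensitive)."""
    text = answer.lower()
    return {
        persona: sum(len(re.findall(rf"\b{re.escape(cue)}\b", text)) for cue in cues)
        for persona, cues in PERSONA_CUES.items()
    }


def narrative_mismatch(answer: str, target_persona: str) -> bool:
    """True when the persona the answer emphasizes most is not the one you sell to.

    Ties resolve to whichever persona is listed first in PERSONA_CUES.
    """
    signals = persona_signals(answer)
    dominant = max(signals, key=signals.get)
    return signals[dominant] > 0 and dominant != target_persona


if __name__ == "__main__":
    print(narrative_mismatch("Acme is a reliable option for smaller teams.", "enterprise"))
```

The example answer is favorable in tone, yet it is flagged because its only persona cue points at small teams rather than enterprise buyers.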

| Metric | What It Measures | Why It Matters |
|---|---|---|
| Visibility (Mention Rate) | % of responses that include the brand | Are you in the conversation at all? |
| Position (LCRS) | Primary vs. secondary placement in the answer | First mention vs. footnote |
| Sentiment Score | Tone and favorability of the description | Is the AI helping or hurting your positioning? |
| Citation Source | Which domains the AI cites | Where to build content authority |
| Share of Voice (SoV) | Your mentions vs. competitors’ | Relative standing in your category |

How Does a Perplexity SEO Tracker Work?

The technical architecture follows a five-step pipeline. The tracker first dispatches a pre-defined library of conversational prompts, covering category queries, comparison queries, and brand-specific queries, to Perplexity at regular intervals. It captures the full generated response, including inline citations and related questions. Then it runs semantic enrichment to identify brand and competitor mentions and assesses sentiment. Citation mapping extracts the specific URLs cited, linking them back to the claims they support. Finally, the data flows into a dashboard showing visibility trends, sentiment shifts, and Share of Voice over time.
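The five steps can be sketched in Python under several stated assumptions, and the sketch runs offline. `fetch_answer` is a hypothetical stub standing in for the real dispatch-and-capture step, which would be an API call or a browser session. The `Capture` record, the prompts, and the brand names are illustrative. The sentiment scoring from step 3 is omitted for brevity.

```python
import re
from collections import Counter
from dataclasses import dataclass, field
from datetime import date
from urllib.parse import urlparse


@dataclass
class Capture:
    """One captured answer for one prompt on one run (hypothetical schema)."""
    prompt: str
    intent: str  # "category", "comparison", or "branded"
    answer: str
    citations: list[str] = field(default_factory=list)
    run_date: date = field(default_factory=date.today)  # enables historical logging


def fetch_answer(prompt: str) -> tuple[str, list[str]]:
    """Hypothetical stub for steps 1-2 (dispatch + capture); returns canned data."""
    canned = {
        "best tool for enterprise marketing automation": (
            "Acme is the top pick for enterprise teams; Globex is a cheaper alternative.",
            ["https://www.reddit.com/r/marketing/abc", "https://acme.example/pricing"],
        ),
    }
    return canned.get(prompt, ("No clear recommendation.", []))


def mentions(answer: str, brands: list[str]) -> list[str]:
    """Step 3 (enrichment): brands found, ordered by first appearance in the answer."""
    hits = []
    for brand in brands:
        m = re.search(rf"\b{re.escape(brand)}\b", answer, flags=re.IGNORECASE)
        if m:
            hits.append((m.start(), brand))
    return [brand for _, brand in sorted(hits)]


def cited_domains(citations: list[str]) -> Counter:
    """Step 4 (citation mapping), simplified to a count of cited domains."""
    return Counter(urlparse(u).netloc.removeprefix("www.") for u in citations)


def run_pipeline(prompts: dict[str, str], brands: list[str]) -> dict:
    """Step 5: aggregate captures into dashboard-style numbers."""
    captures = [Capture(p, intent, *fetch_answer(p)) for p, intent in prompts.items()]
    any_mention, first_place, domains = Counter(), Counter(), Counter()
    for c in captures:
        found = mentions(c.answer, brands)
        any_mention.update(found)
        if found:
            first_place[found[0]] += 1  # first brand named = primary recommendation
        domains += cited_domains(c.citations)
    n = len(captures)
    return {
        "mention_rate": {b: any_mention[b] / n for b in brands},
        "primary_rate": {b: first_place[b] / n for b in brands},
        "top_domains": domains.most_common(3),
    }


if __name__ == "__main__":
    print(run_pipeline(
        {"best tool for enterprise marketing automation": "category", "Is Acme good?": "branded"},
        ["Acme", "Globex", "Initech"],
    ))
```

Treating the first brand named as the primary recommendation is a simplification. Distinguishing "primary" from "secondary" placement in real answers usually needs more context than word order.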

One technical detail worth knowing: there’s a meaningful difference between API-based monitoring and browser-based scraping. Research found only a 4% overlap in citation sources between API and scraped results for the same prompt. Browser-based scanners capture the experience a real user sees, including citations and Related Questions, which account for 40% of platform queries. If your tracker is only hitting the API, it may be measuring a different experience than what your audience actually gets.
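The research figure above doesn’t say exactly how “overlap” was defined. One plausible definition, assumed here, is the Jaccard similarity of the domains cited in an API capture and a browser capture of the same prompt. The function names and URLs below are hypothetical.

```python
from urllib.parse import urlparse


def domain_set(urls: list[str]) -> set[str]:
    """Normalize citation URLs to bare hostnames so path differences don't count."""
    return {urlparse(u).netloc.lower().removeprefix("www.") for u in urls}


def citation_overlap(api_urls: list[str], scraped_urls: list[str]) -> float:
    """Jaccard overlap (0.0-1.0) of cited domains between two captures of one prompt."""
    a, b = domain_set(api_urls), domain_set(scraped_urls)
    if not a and not b:
        return 1.0  # both captures cited nothing: treat them as identical
    return len(a & b) / len(a | b)


if __name__ == "__main__":
    api = ["https://en.wikipedia.org/wiki/CRM", "https://www.g2.com/x"]
    scraped = ["https://www.reddit.com/r/sales/1", "https://g2.com/y", "https://blog.example.com/z"]
    print(citation_overlap(api, scraped))  # one shared domain out of four
```

Comparing at the domain level is a generous choice. Comparing full URLs would usually produce an even lower overlap.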

Prompt quality also directly affects data quality. Tracking branded queries alone, like “Is [Brand] good?”, only measures reputation for users who already know you exist. The more valuable signal comes from category prompts: “What’s the best tool for enterprise marketing automation?” That’s where the AI acts as a first-touch discovery layer for users who’ve never heard of you.
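A prompt library that covers all three intents can be generated from templates, as in the minimal sketch below. The template wording, brand names, and parameters are hypothetical. A real library would use the category’s own vocabulary and would typically weight category prompts most heavily.

```python
from collections import Counter
from itertools import product

# Hypothetical templates; a real library would use the category's own vocabulary.
TEMPLATES = {
    "category": [
        "What's the best tool for {need}?",
        "Top {category} platforms for {segment}",
    ],
    "comparison": ["{brand} vs {competitor}: which is better for {segment}?"],
    "branded": ["Is {brand} good?", "What do users say about {brand}?"],
}


def build_prompt_library(brand, competitors, category, needs, segments):
    """Expand every template over all needs/segments/competitors as (intent, prompt) pairs.

    str.format ignores unused keyword arguments, so each template fills only the
    slots it names; duplicates are dropped while keeping first-seen order.
    """
    library = []
    for intent, templates in TEMPLATES.items():
        for template in templates:
            for need, segment, competitor in product(needs, segments, competitors):
                prompt = template.format(
                    brand=brand, competitor=competitor, category=category,
                    need=need, segment=segment,
                )
                library.append((intent, prompt))
    return list(dict.fromkeys(library))


if __name__ == "__main__":
    lib = build_prompt_library(
        "Acme", ["Globex", "Initech"], "marketing automation",
        ["enterprise marketing automation"], ["enterprise teams"],
    )
    print(Counter(intent for intent, _ in lib))
```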

5 Metrics Every Perplexity SEO Tracker Must Include

Most brands start by checking whether they appear at all. That’s necessary but not sufficient. Here are the five metrics that separate a functional Perplexity tracking setup from one that actually drives decisions.

1. Brand Mention Rate across category prompts. Tracking branded queries tells you about reputation management. Tracking category prompts tells you whether you’re making the AI’s shortlist during the discovery phase, where most purchase decisions start.

2. Position and consistency. In traditional SEO, top-three matters. In Perplexity, the goal is to be the primary recommendation. Consistency tracking is equally important: does your brand appear reliably across semantically similar prompts, like “best CRM for startups” and “top CRM alternatives for small businesses”? Inconsistent appearances suggest weak authority on that topic.

3. Sentiment and narrative quality. A 0-100 sentiment score isn’t just a vanity metric. If Perplexity consistently describes your product as “budget-friendly” and your positioning is premium, the AI is actively working against your sales motion. Tracking narrative quality lets you identify and correct these gaps through targeted PR and content.

4. Citation source analysis. This is the most actionable metric on the list. If Perplexity is citing Reddit threads or third-party review sites instead of your own domain, you know exactly where the gap is. It identifies what practitioners call “inclusion targets”: the sites the AI already trusts, where your brand needs to earn a presence.

5. Competitive Share of Voice. In a zero-click environment, visibility is relative. An 80% mention rate sounds strong until you discover your main competitor has 95%. SoV shows you the real competitive picture across all the prompts that matter for your category.
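Once answers are captured, most of these metrics reduce to simple counting. The sketch below assumes each record is a `(prompt_cluster, brands_in_order_of_appearance, cited_domains)` tuple. The clustering of semantically similar prompts is assumed to happen upstream. All names here are hypothetical, and sentiment is left out.

```python
from collections import Counter


def mention_rate(records, brand):
    """Share of answers that mention the brand at all."""
    return sum(brand in brands for _, brands, _ in records) / len(records)


def primary_rate(records, brand):
    """Share of answers where the brand is named first (a proxy for primary placement)."""
    return sum(bool(brands) and brands[0] == brand for _, brands, _ in records) / len(records)


def consistency(records, brand):
    """Per prompt cluster, the fraction of prompts mentioning the brand."""
    totals, hits = Counter(), Counter()
    for cluster, brands, _ in records:
        totals[cluster] += 1
        hits[cluster] += brand in brands
    return {c: hits[c] / totals[c] for c in totals}


def share_of_voice(records, brands):
    """Each tracked brand's share of all tracked-brand mentions."""
    counts = Counter(b for _, found, _ in records for b in found if b in brands)
    total = sum(counts.values())
    return {b: counts[b] / total if total else 0.0 for b in brands}


def inclusion_targets(records, own_domain, top_n=3):
    """Most-cited third-party domains: sites the AI already trusts."""
    counts = Counter(d for _, _, domains in records for d in domains if d != own_domain)
    return [d for d, _ in counts.most_common(top_n)]


if __name__ == "__main__":
    records = [
        ("crm-startups", ["Acme", "Globex"], ["reddit.com", "acme.example"]),
        ("crm-startups", ["Globex", "Initech"], ["reddit.com", "g2.com"]),
        ("crm-enterprise", ["Acme"], ["g2.com"]),
        ("crm-enterprise", ["Globex", "Acme", "Initech"], ["reddit.com"]),
    ]
    print(consistency(records, "Acme"), share_of_voice(records, ["Acme", "Globex", "Initech"]))
```

In this toy data Acme has a 75% mention rate, but its Share of Voice is only 37.5%, tied with Globex. It also appears in just half of the startup-cluster prompts. That is the kind of gap a single headline number hides.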

Topify organizes these into a seven-metric system covering Visibility, Sentiment, Position, AI Volume, Mentions, Intent, and CVR, giving teams a single view of AI search performance rather than isolated data points.

Common Mistakes That Make Perplexity Tracking Useless

Mistake 1: Only tracking branded prompts. This is the most common error. Queries like “Is [Brand] good?” only measure reputation among users who already know you. Research indicates that category and discovery prompts represent the majority of high-value AI search behavior. If you’re not tracking these, you’re blind to your most important exposure opportunities.

Mistake 2: Single-platform tracking. A brand might have 80% visibility on Perplexity but near zero on ChatGPT. Because different LLMs use different retrieval architectures (Perplexity favors Reddit and real-time web sources, while ChatGPT leans on Wikipedia and established news), a strong score on one platform doesn’t generalize. Cross-platform tracking reveals the full picture.

Mistake 3: Treating sentiment as a binary positive/negative label. Sentiment in AI search is contextual. Being described positively but for the wrong persona (“great for small teams” when you’re targeting enterprise) is a strategic problem that a binary sentiment label won’t surface. Narrative-level analysis is what catches these mismatches.

Mistake 4: Manual pulse-checking without historical data. Typing a prompt into Perplexity and noting the result isn’t tracking. It’s a snapshot. AI answers drift with model updates and refreshed web data. Without automated historical logging, you can’t tell whether your visibility is improving, declining, or whether that PR campaign last quarter actually moved the needle.

That last point matters more than most teams realize. Trending direction is often more informative than the absolute number.

From Perplexity to Claude and Beyond: Why You Need an LLM SEO Tracker Strategy

Perplexity has captured nearly 20% of AI-driven web traffic in the United States, making it, by referral traffic rather than overall market share, the second-most important AI platform for US-focused brands. But your audience doesn’t use a single AI.

ChatGPT accounts for roughly 60-68% of AI search market share and skews toward general consumers. Perplexity at 5.8-8.2% attracts B2B researchers and analysts who favor real-time, citation-heavy answers. Claude pulls developers and technical writers. Gemini captures Google-ecosystem users. Microsoft Copilot dominates enterprise workflows.

The same brand can be the top recommendation on Perplexity and invisible on ChatGPT. That gap isn’t random. It reflects the difference between “real-time web” signals, which Perplexity optimizes for, and “training-set” signals, which drive ChatGPT’s outputs.

| Platform | Market Share (2026) | Primary User Persona | Citation Preference |
|---|---|---|---|
| ChatGPT | 60-68% | General consumers | Wikipedia, established news, Bing |
| Perplexity | 5.8-8.2% | B2B researchers, analysts | Reddit, niche blogs, real-time web |
| Gemini | 15-21% | Google ecosystem users | YouTube, LinkedIn, Google properties |
| Claude | ~2% | Developers, writers | Tech documentation, clean prose |
| Microsoft Copilot | 4.5-12.9% | Enterprise workers | Microsoft Docs, LinkedIn, Bing |

A best-in-class LLM SEO tracker strategy runs the same prompt library across all relevant platforms, generating what practitioners call a “Model Consensus Score.” If your brand is recommended by Perplexity and Claude but not ChatGPT, the data tells you exactly where to focus your content and citation-building efforts. Without that cross-platform view, you’re optimizing for one slice of the audience and calling it done.
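The text doesn’t define how a Model Consensus Score is calculated. The sketch below assumes one simple version. A platform counts as “recommending” the brand when the brand’s mention rate there meets a threshold, and the score is the fraction of platforms that do. The 0.5 threshold and all names are hypothetical.

```python
def model_consensus(rates_by_platform: dict[str, float], threshold: float = 0.5):
    """Hypothetical score: share of platforms whose mention rate meets the threshold.

    Also returns the platforms that fall short, i.e. where to focus next.
    """
    recommended = {p for p, rate in rates_by_platform.items() if rate >= threshold}
    gaps = sorted(set(rates_by_platform) - recommended)
    score = len(recommended) / len(rates_by_platform)
    return score, gaps


if __name__ == "__main__":
    rates = {"Perplexity": 0.8, "Claude": 0.6, "ChatGPT": 0.1, "Gemini": 0.3}
    print(model_consensus(rates))  # recommended on 2 of 4 platforms
```

A weighted variant could multiply each platform by its share of your audience, though the market-share ranges in the table are too wide to make such weights precise.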

How Topify Tracks Your Brand Across Perplexity, Claude, ChatGPT, and More

Topify approaches Perplexity tracking as one layer of a broader AI search measurement system. The platform supports ChatGPT, Gemini, Perplexity, DeepSeek, and regional platforms including Doubao and Qwen, covering many of the places where B2B and B2C audiences are searching.

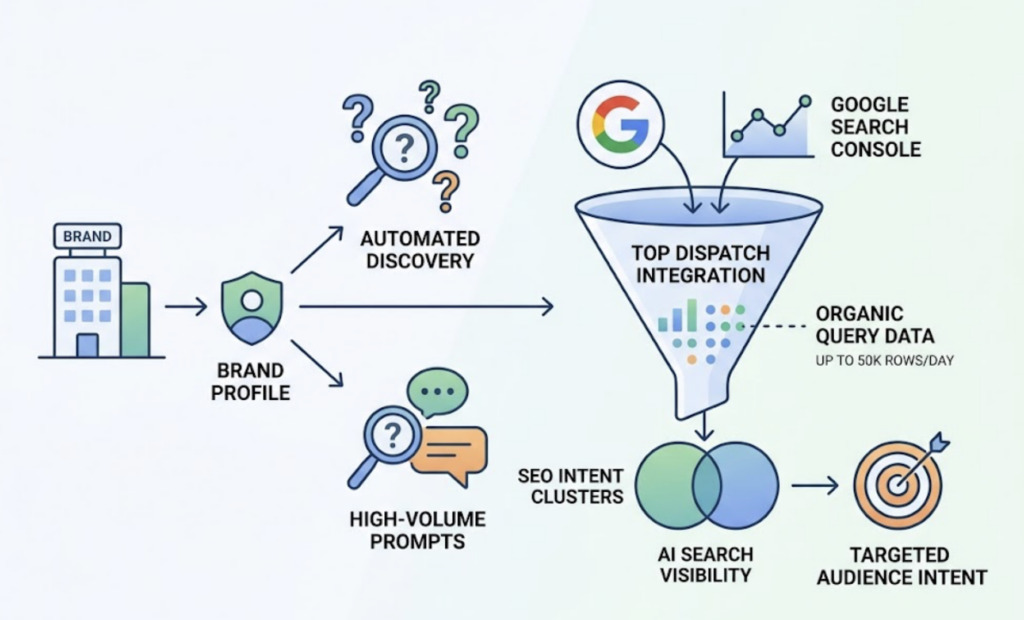

On setup, Topify initializes a brand profile by name and URL, then triggers automated discovery of high-volume prompts relevant to the business. A notable differentiator: Topify integrates directly with Google Search Console to import up to 50,000 rows of organic query data per day. This lets teams correlate their traditional SEO intent clusters with AI search visibility, so they’re not optimizing for AI in a vacuum but targeting the same audience intent that already drives organic traffic.
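The general idea of correlating organic intent with AI visibility can be sketched independently of any vendor. The sketch assumes the query rows have already been exported from Search Console as `(query, clicks)` pairs and that AI mention rates are already known per intent cluster. The keyword-based clustering, the priority formula, and all names are hypothetical. This is not how Topify implements the feature.

```python
from collections import defaultdict

# Hypothetical keyword map: intent cluster -> keyword that marks it.
CLUSTERS = {"startup-crm": "startup", "enterprise-crm": "enterprise"}


def cluster_of(query, clusters=CLUSTERS):
    """First cluster whose keyword appears in the query, or None."""
    q = query.lower()
    return next((name for name, kw in clusters.items() if kw in q), None)


def ai_gap_report(gsc_rows, ai_mention_rate, clusters=CLUSTERS):
    """Rank clusters by organic clicks not yet matched by AI visibility.

    gsc_rows: (query, clicks) pairs from a Search Console export.
    ai_mention_rate: cluster -> mention rate (0.0-1.0) from the AI tracker.
    Priority = clicks * (1 - AI mention rate); higher means a bigger gap.
    """
    clicks = defaultdict(int)
    for query, n in gsc_rows:
        cluster = cluster_of(query, clusters)
        if cluster is not None:  # unclustered queries (e.g. navigational) are skipped
            clicks[cluster] += n
    report = [(c, clicks[c] * (1 - ai_mention_rate.get(c, 0.0))) for c in clicks]
    return sorted(report, key=lambda row: row[1], reverse=True)


if __name__ == "__main__":
    rows = [("crm for startups", 1200), ("best startup crm", 800),
            ("enterprise crm pricing", 500), ("acme login", 3000)]
    print(ai_gap_report(rows, {"startup-crm": 0.2, "enterprise-crm": 0.9}))
```

Here the startup cluster tops the list: it drives most organic clicks but appears in only 20% of AI answers.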

The dashboard surfaces the seven-metric system covering Visibility, Sentiment Score (0-100), Position, AI Volume, Mentions, Intent, and CVR in a single view. The Basic plan starts at $99/month, which includes 100 prompts and approximately 9,000 AI answer analyses per month, covering four projects and four seats. The Pro plan at $199/month scales to 250 prompts and 22,500 analyses. Enterprise plans start at $499/month with dedicated account management and custom configurations.
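Dividing the quoted analysis allowances by the prompt allowances shows both self-serve tiers budget the same depth per prompt:

```latex
\frac{9{,}000 \text{ analyses}}{100 \text{ prompts}} = 90,
\qquad
\frac{22{,}500 \text{ analyses}}{250 \text{ prompts}} = 90
```

That works out to roughly three analyses per prompt per day over an assumed 30-day month. How those analyses are split across platforms and repeat runs isn’t stated.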

For teams managing multiple brands or client accounts, the competitor monitoring layer automatically detects emerging rivals in AI answers and tracks relative position over time. That matters in categories where AI recommendations are shifting quickly and where last quarter’s data is already stale.

Conclusion

The SEO function is shifting from “rank a page” to “shape a narrative.” Perplexity and the broader class of AI search engines are now making first-touch recommendations for millions of B2B and consumer decisions, and traditional ranking metrics have no visibility into that process.

A Perplexity SEO tracker doesn’t replace your existing SEO stack. It fills the blind spot that stack has always had. Start with the five core metrics: mention rate, position consistency, sentiment, citation sources, and Share of Voice. Then expand to a multi-platform strategy that includes a Claude SEO tracker and broader LLM SEO tracker coverage. The brands building this measurement infrastructure now will have compounding data advantages that are difficult to replicate later.

FAQ

Q: What is a Perplexity SEO tracker? A: It’s a specialized monitoring tool that automates conversational prompts to Perplexity AI to measure how often a brand appears in generated answers, the sentiment and narrative quality of those mentions, the position of the brand relative to competitors, and which domains the AI is citing as its sources.

Q: How do I improve my visibility in Perplexity AI answers? A: Focus on structuring content into “answer capsules” that front-load direct answers within the first 40-60 words, increase factual density with data points every 150-200 words, use FAQ schema markup, and earn mentions on third-party sites like Reddit and niche authority blogs that Perplexity tends to cite heavily.
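Two of these tactics can be partly automated. The first helper checks the opening-passage length against the 40-60 word guideline, using the first paragraph as a rough, hypothetical proxy for the “answer capsule.” The second emits schema.org `FAQPage` JSON-LD from question/answer pairs. Both function names are illustrative. Neither check guarantees citation.

```python
import json
import re


def opening_word_count(text: str) -> int:
    """Words before the first blank line: a rough proxy for the answer capsule."""
    first_block = text.strip().split("\n\n", 1)[0]
    return len(re.findall(r"\S+", first_block))


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    return json.dumps(
        {
            "@context": "https://schema.org",
            "@type": "FAQPage",
            "mainEntity": [
                {
                    "@type": "Question",
                    "name": question,
                    "acceptedAnswer": {"@type": "Answer", "text": answer},
                }
                for question, answer in pairs
            ],
        },
        indent=2,
    )


if __name__ == "__main__":
    print(faq_jsonld([("What is a Perplexity SEO tracker?",
                       "A tool that measures how a brand appears in Perplexity answers.")]))
```

The JSON output would typically be embedded in the page inside a `<script type="application/ld+json">` tag.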

Q: How is tracking Perplexity different from tracking Claude or ChatGPT? A: The underlying logic is the same, but citation bias varies significantly by platform. Perplexity is citation-first and favors real-time web sources. ChatGPT leans on its training data and Wikipedia. Claude emphasizes technical documentation and clean prose. A full LLM SEO tracker strategy runs the same prompt set across all three to identify where gaps are platform-specific versus category-wide.

Q: What’s the pricing for a Perplexity SEO tracker tool? A: Entry-level tools start around $25/month for basic mention tracking. Mid-tier platforms like Topify start at $99/month for 100 prompts and 9,000 AI answer analyses. Enterprise-grade configurations with custom prompt libraries and dedicated account support typically start at $499/month and scale based on volume and platform coverage.