You could rank #1 on Google and still be completely invisible to the 900 million weekly users asking ChatGPT for recommendations.

That’s not a hypothetical. That’s how AI search works in 2026. Users ask a question, an AI synthesizes an answer from dozens of sources, and your brand either appears in that answer or it doesn’t. No click. No impression. No signal in your analytics. Just silence.

An AI answer tracking dashboard changes that. It’s the infrastructure that makes the “answer layer” visible, measurable, and actionable.

What an AI Answer Tracking Dashboard Actually Does

An AI answer tracking dashboard is not an SEO rank tracker with a new coat of paint.

Traditional SEO tools measure where your webpage sits on a results page. An AI answer tracking dashboard measures something fundamentally different: how large language models (LLMs) understand, extract, summarize, and recommend your brand when users ask questions in natural language.

The distinction matters. A brand can hold the top organic spot on Google and still be absent from every ChatGPT, Perplexity, or Gemini answer about its own category. That’s the gap most teams still haven’t measured.

A mature dashboard monitors your brand across the major AI platforms: ChatGPT (900M+ weekly users), Perplexity (which functions as an AI-native search engine with real-time citations), Google Gemini and AI Overviews, and emerging platforms like Claude and Grok. Each platform uses different training data and retrieval-augmented generation (RAG) mechanisms, which means your brand’s visibility can vary significantly from one platform to another. Coverage across all of them isn’t optional — it’s the baseline.

The Metrics That Actually Matter (and the Ones That Mislead You)

Most teams start by asking one question: “Is our brand showing up in AI answers?” That’s the wrong question.

Presence alone tells you almost nothing. If your brand appears in an AI answer about why customers should avoid you, that’s worse than not appearing at all. The real value of a dashboard is in the depth of what it measures.

There are seven metrics worth tracking:

Visibility Rate measures the percentage of tracked prompts where your brand appears. It’s your baseline share of the AI answer ecosystem.

Sentiment Score uses NLP to assess whether AI describes your brand positively, neutrally, or negatively. A brand mentioned in “avoid these companies” lists has a high visibility rate and a terrible sentiment score.

Position Rank tracks where your brand appears within multi-brand recommendation lists. First position signals stronger algorithmic trust. Fifth position, even in a positive list, gets a fraction of the attention.

Mention Volume captures total mentions across the AI ecosystem, giving you a broad-awareness baseline.

Intent Alignment measures whether your brand shows up at the right stages of the buyer journey: research, comparison, and evaluation prompts. Showing up only in awareness-stage answers while competitors dominate “best option” queries is a conversion problem hiding inside a visibility metric.

Conversion Rate (CVR) matters more than most teams expect. Research indicates that users arriving from AI recommendations convert at roughly 4.4x the rate of traditional search visitors. They’ve been pre-sold by the AI before they click anything.

Source Citations tracks whether AI platforms are linking to your domain when generating answers. These citations build the long-term traffic bridge between AI recommendations and your site.

That’s the full picture. Teams that only track presence are flying with one instrument.
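To make a few of these metrics concrete, here is a minimal Python sketch of how they could be computed from parsed answers. It assumes one record per tracked prompt and a sentiment score already scaled from -1.0 to 1.0. The `AnswerRecord` fields and the `summarize` helper are hypothetical names for illustration, not any vendor's schema.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AnswerRecord:
    """One parsed AI answer for one tracked prompt (hypothetical schema)."""
    prompt: str
    mentioned: bool              # did the brand appear in the answer?
    position: Optional[int]      # 1-based rank in a recommendation list, if any
    sentiment: Optional[float]   # -1.0 (negative) to 1.0 (positive), if mentioned
    cited: bool                  # did the answer link to the brand's domain?


def summarize(records: list[AnswerRecord]) -> dict:
    """Roll a batch of records up into headline metrics."""
    total = len(records)
    if total == 0:
        raise ValueError("need at least one record")
    mentions = [r for r in records if r.mentioned]
    positions = [r.position for r in mentions if r.position is not None]
    sentiments = [r.sentiment for r in mentions if r.sentiment is not None]
    return {
        # Percentage of tracked prompts where the brand appears.
        "visibility_rate": 100.0 * len(mentions) / total,
        "mention_volume": len(mentions),
        "avg_position": sum(positions) / len(positions) if positions else None,
        "avg_sentiment": sum(sentiments) / len(sentiments) if sentiments else None,
        # Percentage of tracked prompts whose answer links to the brand's domain.
        "citation_rate": 100.0 * sum(r.cited for r in records) / total,
    }
```

Reading the averages next to the visibility rate is the point: a high visibility rate paired with a negative average sentiment is exactly the "avoid these companies" case described above.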

How an AI Answer Tracking Dashboard Works Under the Hood

The technical challenge is real, and understanding it helps you evaluate tools more accurately.

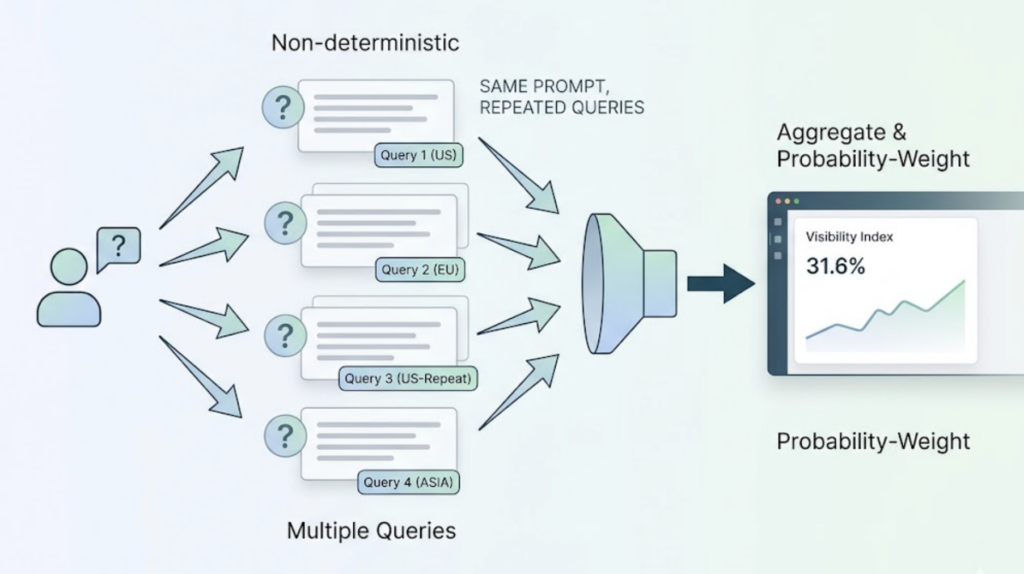

LLMs are non-deterministic. Ask the same question twice and you’ll often get a different answer. This means a dashboard can’t just run a query once and call it data. It needs to run the same prompt dozens of times, across multiple geographic locations (some platforms surface different answers based on region), and aggregate the results into a probability-weighted visibility index.

The workflow looks like this: a high-quality prompt corpus is built first, not keyword lists but context-rich questions that simulate actual user behavior. Those prompts are then executed through real browser environments simulating queries on each AI platform. The generated answers are parsed for entity names, citation links, position data, and sentiment signals. Finally, results across multiple runs are aggregated into a baseline metric.
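The parsing step in that workflow can be sketched in a few lines of Python. This is a simplified illustration, not how any particular tool does it: it assumes the answer is available as plain text, treats order of first mention as a stand-in for list position, and uses a hypothetical `parse_answer` helper. Real entity extraction and sentiment analysis need more than string matching.

```python
import re
from urllib.parse import urlparse


def parse_answer(answer: str, brands: list[str]) -> dict:
    """Extract brand order and cited domains from one AI answer (rough sketch)."""
    lowered = answer.lower()

    # Record where each brand is first mentioned, matching whole words only.
    first_seen = {}
    for brand in brands:
        match = re.search(r"\b" + re.escape(brand.lower()) + r"\b", lowered)
        if match:
            first_seen[brand] = match.start()

    # Assumption: earlier first mention approximates a higher list position.
    ranked = sorted(first_seen, key=first_seen.get)
    positions = {brand: rank + 1 for rank, brand in enumerate(ranked)}

    # Pull out URLs, trim trailing punctuation, and reduce them to domains.
    urls = re.findall(r"https?://[^\s)\]>\"']+", answer)
    domains = sorted(
        {urlparse(u.rstrip(".,;:")).netloc.lower().removeprefix("www.") for u in urls}
    )
    return {"positions": positions, "cited_domains": domains}
```

Brands that never appear are simply absent from `positions`, which is what feeds a visibility calculation further down the pipeline.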

The key word is “aggregated.” Any single snapshot of an AI answer is noise. Only patterns across multiple samples reveal the true signal. Platforms like Topify handle this through trend-based analysis rather than point-in-time queries, which is how you get data you can actually make decisions with.
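A small Python sketch shows why one snapshot is noise. It treats each run of a prompt as a yes/no observation (brand appeared or not) and reports the observed rate with a 95% Wilson score interval. The `visibility_index` name and the choice of a Wilson interval are assumptions made for illustration, not a description of how Topify or any other platform aggregates its data.

```python
import math


def visibility_index(run_results: list[bool], z: float = 1.96) -> tuple[float, float, float]:
    """Return (observed rate, lower bound, upper bound) for repeated runs of one prompt.

    run_results holds one boolean per run: True if the brand appeared.
    The bounds are a Wilson score interval (z = 1.96 gives roughly 95%).
    """
    n = len(run_results)
    if n == 0:
        raise ValueError("need at least one run")
    p = sum(run_results) / n
    denom = 1 + z * z / n
    center = (p + z * z / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    return p, max(0.0, center - half), min(1.0, center + half)


# One run where the brand appeared: the rate reads 100%, but the interval is very wide.
single = visibility_index([True])

# Thirty runs with twelve appearances: the estimate is far tighter.
repeated = visibility_index([True] * 12 + [False] * 18)
```

With a single run the lower bound sits near 20%, so "we appeared" says very little. With thirty runs the interval narrows to roughly 25% to 58%, which is still wide but is something you can track as a trend over time.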

5 Things That Tank Your Brand’s AI Visibility (and How to Spot Them)

Teams deploying AI tracking dashboards for the first time tend to carry over SEO habits that don’t translate. Here are the five mistakes that show up most often.

Treating prompts like keywords. A short keyword like “email marketing software” triggers broad, category-level AI answers that rarely include specific brand mentions. Effective tracking requires long-tail, context-rich prompts that simulate real buyer intent. “What’s the best email marketing tool for a 10-person SaaS team with under $500/month budget?” is a trackable prompt. “Email marketing software” is not.

Ignoring machine-readable structure. AI systems don’t read content the way humans do. They extract from patterns, hierarchies, and structured signals. If your site lacks Schema markup or has broken heading hierarchies, even excellent content won’t register cleanly in AI citations, and your dashboard will show a gap that looks like a content problem when it’s actually a technical one.

Skipping trust signals. AI models increasingly favor sources they “trust,” which in practice means sources with clear author credentials, consistent external references, and cross-domain validation. Teams focused purely on content volume and not on E-E-A-T signals tend to see their AI dashboard weights erode over time.

Scaling low-quality AI-generated content. Mass-producing AI-written content that lacks original data, expert perspective, or genuine insight is what researchers call “slopware.” In the short term, it may increase raw mention volume. In the medium term, sentiment scores drop and AI platforms start treating your domain as a low-signal source.

Running AI tracking in isolation. Visibility data that doesn’t connect to your PR pipeline, content calendar, and traditional SEO reporting is expensive noise. The teams getting real value from their dashboards are the ones who’ve integrated AI visibility as a channel alongside existing performance data.

Turn Dashboard Data Into Growth: A 3-Step Strategy

Data without action is just a report. Here’s how to build a closed-loop optimization process around your dashboard.

Step 1: Identify the gaps. Audit which high-value prompt categories your brand is missing from entirely. Tools like Topify offer deep integration with Google Search Console, which makes it faster to surface prompts where you have near-top-5 organic potential but zero AI presence. That overlap is where the highest-ROI content investments live.

Step 2: Benchmark against competitors. Use your dashboard’s Share of Voice (SOV) feature to understand why specific competitors are being cited over you. If a competitor keeps appearing because they have a detailed pricing comparison page or an original dataset, that’s a direct content brief. SOV gives you a number:

SOV = (Brand Mentions / Total Category Mentions) × 100

A 12% SOV in a category where the top competitor holds 38% is a gap you can close with targeted content.
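As a quick worked example, the formula translates directly into Python. The mention counts below are made up to reproduce the 12% and 38% figures. They are not real data.

```python
def share_of_voice(brand_mentions: int, total_category_mentions: int) -> float:
    """SOV = (Brand Mentions / Total Category Mentions) x 100."""
    if total_category_mentions <= 0:
        raise ValueError("total_category_mentions must be positive")
    return 100.0 * brand_mentions / total_category_mentions


# Hypothetical counts: 500 brand mentions observed across a category's tracked prompts.
total = 500
your_sov = share_of_voice(60, total)         # 12% of category mentions
competitor_sov = share_of_voice(190, total)  # 38% of category mentions
gap_points = competitor_sov - your_sov       # 26 percentage points to close
```

Tracking the gap in percentage points, rather than each number on its own, makes it easier to see whether new content is actually moving you toward the leader.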

Step 3: Execute and close the loop. Once you know the gaps and the content playbook, execution speed matters. Topify’s one-click execution lets teams translate visibility gaps directly into technical and content adjustments without manual workflow overhead. The faster you deploy, the sooner you capture data from the next AI crawl cycle.

Identify. Benchmark. Optimize. Repeat.

Best Tools for AI Answer Tracking in 2026

The market has matured significantly. Here’s a practical comparison of the tools worth evaluating.

| Tool | Core Strength | Best For | Notable Limitation |
|---|---|---|---|
| Topify | GSC integration, 7-metric GEO analytics, one-click execution | Growth-stage brands and e-commerce teams | Newer entrant vs. enterprise players |
| Omnia | Auto-generates content briefs from gap data | Mid-size teams focused on execution speed | Less depth on raw visibility data |
| Peec AI | Clean UX, daily multi-engine monitoring | Small startups with limited budget | Limited strategic layer |
| SE Visible | Combined SEO + GEO view | Existing SE Ranking users | Narrower AI engine coverage |

One benchmark is worth noting: Perplexity.ai holds a G2 rating of 4.5/5, with users rating its ease of use at 95% and setup speed at 97%. That’s relevant because Perplexity represents high-intent, research-mode users, the exact audience that converts. Any tracking dashboard that can’t accurately capture brand performance on Perplexity is missing data from one of the highest-value AI audiences available.

Topify covers Perplexity alongside ChatGPT, Gemini, DeepSeek, and others, which is what full-spectrum monitoring actually requires.

What AI Answer Tracking Dashboards Actually Cost

The pricing landscape is clearer than most teams expect.

Topify’s plans are structured around how teams actually scale into the channel:

- Basic at $99/month: 100 prompts, 9,000 AI answer analyses, coverage across ChatGPT, Perplexity, and AI Overviews. Practical for startups establishing baseline visibility.

- Pro at $199/month: 250 prompts, 22,500 analyses, 10 seats. Built for teams running active competitive monitoring and content optimization cycles.

- Enterprise from $499/month: Custom prompt volumes, API access, dedicated account management.
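To compare the two fixed-price tiers on a like-for-like basis, a few lines of Python can turn the list prices above into unit costs. The figures come straight from the plan descriptions. The `unit_costs` helper is just an illustrative name, and Enterprise is left out because its volumes are custom.

```python
# Plan figures as listed above (USD per month).
PLANS = {
    "Basic": {"price": 99, "prompts": 100, "analyses": 9_000},
    "Pro": {"price": 199, "prompts": 250, "analyses": 22_500},
}


def unit_costs(plan: dict) -> dict:
    """Break a plan's monthly price down per prompt and per answer analysis."""
    return {
        "per_prompt": plan["price"] / plan["prompts"],
        "per_analysis": plan["price"] / plan["analyses"],
        "analyses_per_prompt": plan["analyses"] / plan["prompts"],
    }


for name, plan in PLANS.items():
    costs = unit_costs(plan)
    print(
        f"{name}: ${costs['per_prompt']:.2f} per prompt, "
        f"${costs['per_analysis']:.4f} per analysis, "
        f"{costs['analyses_per_prompt']:.0f} analyses per prompt"
    )
```

Both tiers work out to 90 analyses per prompt each month, so the difference between them is prompt count, seats, and a lower cost per prompt on Pro rather than deeper sampling of each prompt.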

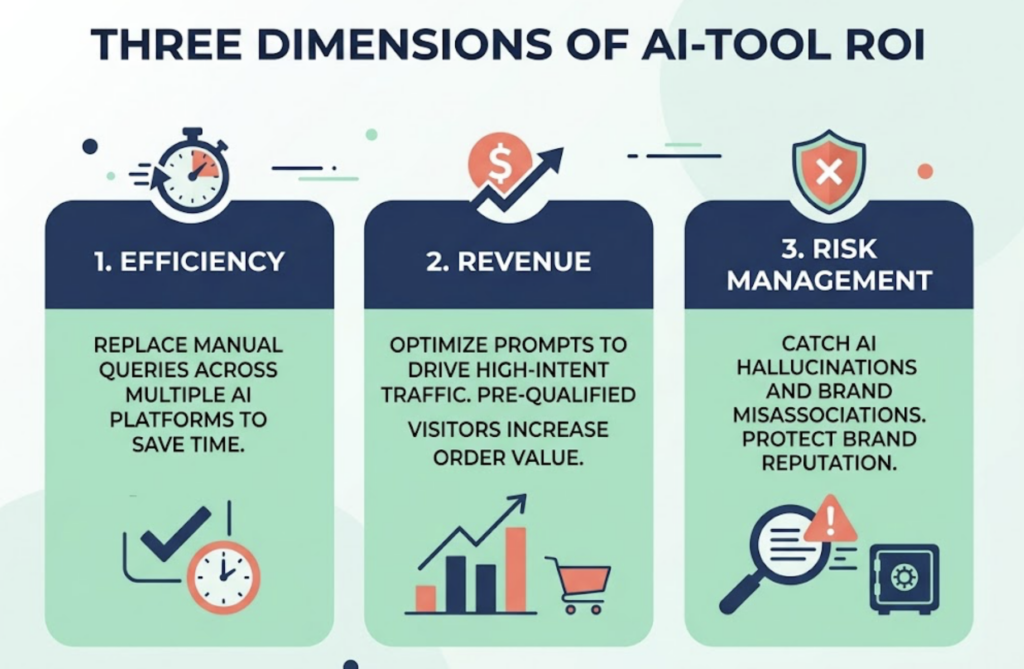

The ROI question has three dimensions. First, efficiency: automated tracking replaces hours of weekly manual queries across multiple AI platforms. Second, revenue: optimizing for high-intent prompts drives more AI-referred traffic, and those visitors arrive pre-qualified, which tends to push average order value up. Third, risk: catching a case where AI is hallucinating a negative description of your brand or incorrectly associating your product with a competitor’s pricing tier is worth significantly more than the cost of the tool that flagged it.

Bottom line: at $99/month, the question isn’t whether the ROI math works. It’s whether your team is ready to act on the data.

Conclusion

In 2026, an AI answer tracking dashboard isn’t an advanced capability reserved for enterprise marketing teams. It’s table stakes.

The brands that treat AI visibility as a structured, measurable channel are already pulling ahead in prompts that drive real purchase intent. The ones still relying on traditional rank tracking and wondering where their organic traffic went are operating on incomplete information.

A good dashboard doesn’t just show you where you stand. It tells you exactly what to fix, which competitors to study, and which prompts represent your highest-value content opportunities. Pair that with the right execution infrastructure, and AI search stops being a black box.

It becomes your next growth channel.