You rank #1 on Google. A potential buyer opens ChatGPT, types the same question, and your brand isn’t in the answer.

That’s not a hypothetical. A study by Chatoptic found that brands on Google’s first page appeared in ChatGPT responses just 62% of the time. Only 12% of AI citations overlap with Google’s top 10. In other words, dominating traditional search no longer means you exist in the place where buyers increasingly go to make decisions.

This is the invisibility paradox — and most marketing teams don’t know it’s happening to them.

Google Rankings Don’t Follow You Into AI Search

For two decades, position one was the finish line. Get there, and you get the traffic.

That assumption no longer holds.

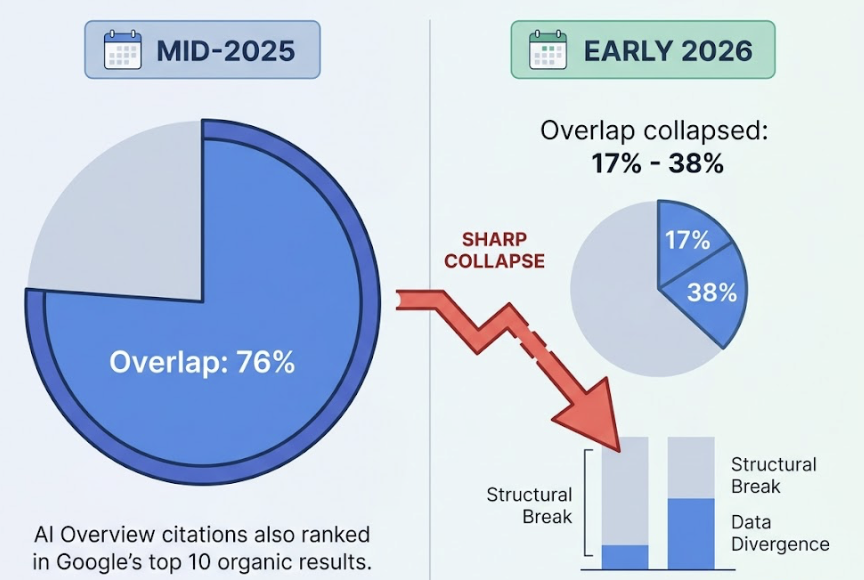

Data from Ahrefs and BrightEdge shows a sharp structural break between traditional SEO performance and AI citation frequency. In mid-2025, around 76% of AI Overview citations also ranked in Google’s top 10 organic results. By early 2026, that overlap had collapsed to between 17% and 38%.

Where are the remaining citations coming from? Pages ranked between positions 11 and 100 now account for roughly 31% of citations. Pages outside the top 100 entirely account for another 31% to 37%.

AI engines aren’t just summarizing your Google results. They’re running what researchers call “Deep Retrieval” — bypassing the traditional hierarchy to find content that fits the specific informational needs of a synthesized answer.

The commercial implication is uncomfortable. A brand can hold position one for its primary keyword while being absent from every AI-mediated shortlist in its category. And because organic traffic and rankings may stay steady throughout, traditional analytics won’t flag the problem.

How AI Search Engines Actually Work

The divergence makes sense once you understand the mechanics.

Traditional search is deterministic. A keyword goes in, an algorithm evaluates relevance and authority, a ranked list comes out. AI search is probabilistic. The same query can produce different outputs each time, drawn from a much wider range of sources.

When a user enters a prompt into ChatGPT Search or Perplexity, the system doesn’t look for an exact match. It runs a process called query fan-out: decomposing the original prompt into multiple sub-queries, each targeting a different facet of the question. A query like “best CRM for enterprise” might fan out into separate searches for scalability, integration, pricing at 500+ users, and security certifications — simultaneously.
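A minimal Python sketch makes fan-out concrete. It illustrates the idea only and is not how ChatGPT Search or Perplexity implement it. The facets are supplied by hand (real systems generate sub-queries with a language model), and `search` is a hypothetical stub standing in for a retrieval backend. The sub-queries run concurrently on a thread pool, mirroring the “simultaneously” in the CRM example.

```python
from concurrent.futures import ThreadPoolExecutor


def fan_out(prompt: str, facets: list[str]) -> list[str]:
    """Decompose one prompt into one sub-query per facet.

    Facets are supplied by hand here; real engines generate them with a model.
    """
    return [f"{prompt} {facet}" for facet in facets]


def search(sub_query: str) -> list[str]:
    """Hypothetical retrieval stub: returns a fake source ID per sub-query."""
    return [f"source-for:{sub_query}"]


def run_fan_out(prompt: str, facets: list[str]) -> dict[str, list[str]]:
    """Run every sub-query concurrently and map each one to its sources."""
    sub_queries = fan_out(prompt, facets)
    with ThreadPoolExecutor() as pool:
        # pool.map preserves input order, so results line up with sub_queries.
        results = list(pool.map(search, sub_queries))
    return dict(zip(sub_queries, results))
```

Calling `run_fan_out("best CRM for enterprise", ["scalability", "integrations", "pricing at 500+ users", "security certifications"])` returns four sub-queries, each mapped to whatever its search returned. A real system would then merge those sources into one synthesized answer.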

The system then pulls from more than 60 sources to build a single synthesized response.

Query length points to the same shift. The average traditional Google search runs about 3.4 words; the average AI prompt runs 23 to 60 words. Users aren’t looking for links to research. They’re outsourcing the research itself to the AI and asking for a recommendation.

To decide what gets cited, AI models don’t count backlinks. They look for a consensus layer: multiple independent, authoritative sources describing a brand consistently, in the same category, for the same use case. Content that wins citations tends to be clean, structured, table-friendly, and factually dense. Fluff-heavy pages get skipped.
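No engine publishes how it scores consensus, so what follows is only a toy heuristic under stated assumptions. Each mention is a hypothetical `(source_domain, category, use_case)` tuple, each domain counts once, and the score is the share of distinct domains that agree on the most common description. It shows why consistency across independent sources, rather than volume from a single site, is what moves the number.

```python
from collections import Counter


def consensus_score(
    mentions: list[tuple[str, str, str]],
) -> tuple[tuple[str, str], float]:
    """Toy consensus heuristic (an assumption, not any engine's real logic).

    mentions: (source_domain, category, use_case) tuples describing one brand.
    Returns the most common (category, use_case) pair and the share of
    distinct domains that describe the brand that way.
    """
    # Keep the first description per domain so one site can't dominate.
    per_domain: dict[str, tuple[str, str]] = {}
    for domain, category, use_case in mentions:
        per_domain.setdefault(domain, (category.lower(), use_case.lower()))
    if not per_domain:
        return ("", ""), 0.0
    counts = Counter(per_domain.values())
    top_pair, top_count = counts.most_common(1)[0]
    return top_pair, top_count / len(per_domain)
```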

ChatGPT, Perplexity, Gemini: Not the Same Animal

Not all AI search engines behave the same way — and that matters for how brands approach visibility.

Estimates vary by source, but as of early 2026 ChatGPT holds 60% to 73% of the AI search market. Google Gemini sits at 15.3%, Microsoft Copilot at around 13%, and Perplexity at 5.5% to 5.8%. Claude holds roughly 5%, growing at 14% quarter-over-quarter.

But market share doesn’t tell the full story. Citation logic differs significantly by platform:

| Platform | Search Index | Citation Style | Primary Strength |
|---|---|---|---|
| ChatGPT | Bing | Selective, conversational | Reasoning, multi-turn dialogue |
| Perplexity | Multi-index | Numbered inline citations | Live web accuracy, research |
| Google Gemini | Google | Less transparent | Ecosystem data, local/real-time |
| DeepSeek / Qwen | Multi-source | Structured, logical | Technical queries, multilingual |

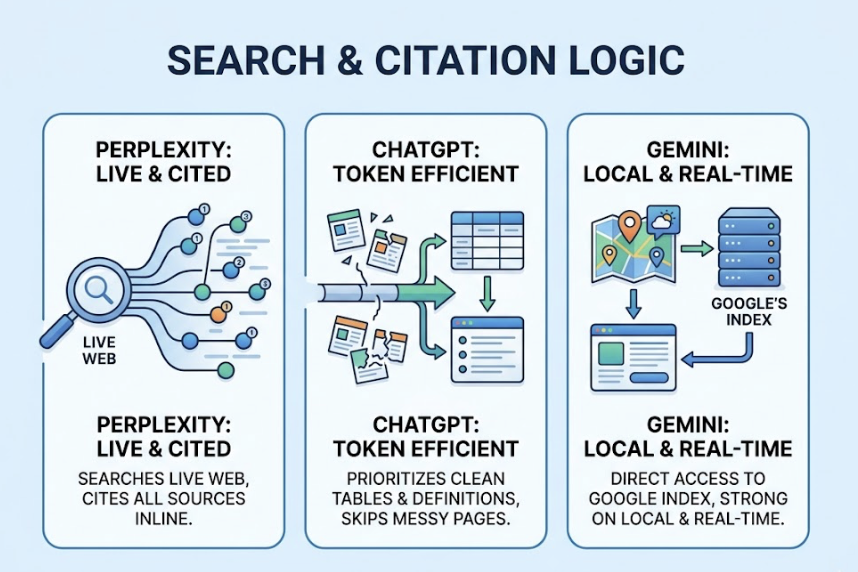

Perplexity searches the live web for every query and cites its sources inline — making it the most auditable of the major platforms. ChatGPT prioritizes “token efficiency,” skipping pages that are hard to parse in favor of clean tables and clear definitions. Gemini has direct access to Google’s index, which gives it an advantage on local and real-time queries but makes its citation logic harder to reverse-engineer.

A brand might be cited consistently in Perplexity and almost never in ChatGPT. That discrepancy is worth knowing before you optimize blindly.

What “Visibility” Means in AI Search

Being visible in AI search isn’t about holding a slot in a list. It’s about three things: how often you’re mentioned, how you’re described, and where in the answer you appear.

Frequency (Visibility Score): Because AI responses are non-deterministic, a single test tells you nothing. A brand that appears in 8 out of 10 ChatGPT responses for a relevant prompt has high visibility — regardless of its Google ranking. Measuring this requires repeated sampling across platforms and prompt types.
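The visibility score is simple arithmetic over repeated samples: responses that mention the brand divided by total responses, so 8 of 10 gives 0.8. A minimal sketch, assuming the response texts are already collected and that a case-insensitive substring match is good enough to detect a mention (it will miss abbreviations and misspellings):

```python
def visibility_score(responses: list[str], brand: str) -> float:
    """Share of sampled responses that mention the brand.

    Uses a case-insensitive substring match, an assumption that misses
    abbreviations and misspellings of the brand name.
    """
    if not responses:
        return 0.0
    hits = sum(1 for text in responses if brand.lower() in text.lower())
    return hits / len(responses)
```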

Sentiment: AI responses aren’t neutral. A brand might be described as “reliable but expensive” in Gemini and “the most innovative in its class” in Perplexity. That framing shapes buyer perception before they ever visit your site. Managing sentiment across platforms is as important as achieving the mention.

Position: Where you appear in the answer matters. Research shows that 44.2% of AI citations are pulled from the first 30% of source content. Brands mentioned early — or highlighted as the top choice — carry more weight than those buried in paragraph four.
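Position within an answer can be approximated as the character offset of the brand’s first mention divided by the response length. The sketch assumes that proxy, and the 0.3 cutoff in the hypothetical `mentioned_early` helper simply borrows the 30% figure as an arbitrary threshold. Neither is a standard metric.

```python
from typing import Optional


def first_mention_position(response: str, brand: str) -> Optional[float]:
    """Relative offset of the brand's first mention (0.0 = very start), or None."""
    idx = response.lower().find(brand.lower())
    if idx == -1:
        return None
    return idx / max(len(response), 1)


def mentioned_early(response: str, brand: str, cutoff: float = 0.3) -> bool:
    """True if the first mention falls in the first `cutoff` share of the text.

    The 0.3 default is an arbitrary threshold borrowed from the 30% figure.
    """
    pos = first_mention_position(response, brand)
    return pos is not None and pos < cutoff
```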

These three dimensions together define what researchers now call AI Share of Voice (SoV): a metric that has no equivalent in traditional SEO, and one that most brands aren’t tracking at all.
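There’s no single agreed formula for AI Share of Voice. One simple, frequency-only version is each brand’s share of all tracked-brand mentions across the sampled responses. The sketch assumes that definition; folding in sentiment and position would require weights you’d have to choose yourself.

```python
from collections import Counter


def share_of_voice(responses: list[str], brands: list[str]) -> dict[str, float]:
    """Frequency-only SoV: each brand's share of all tracked-brand mentions.

    Counts at most one mention per brand per response (case-insensitive).
    """
    counts: Counter = Counter()
    for text in responses:
        lowered = text.lower()
        for brand in brands:
            if brand.lower() in lowered:
                counts[brand] += 1
    total = sum(counts.values())
    return {b: (counts[b] / total if total else 0.0) for b in brands}
```

With responses `["Acme and Globex", "Acme", "Initech"]`, Acme gets 2 of 4 tracked mentions (0.5) and the other two brands get 0.25 each.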

SEO Got You Here. GEO Gets You There.

Generative Engine Optimization (GEO) is the discipline that’s emerged to solve the visibility problem. Formalized by researchers at Princeton, Georgia Tech, and partner institutions, GEO involves structuring content specifically so AI engines can discover, extract, and cite it.

The difference from traditional SEO is structural:

| Dimension | Traditional SEO | GEO |
|---|---|---|
| Optimization target | Entire web pages | Discrete information units |
| Success metric | Rankings, traffic, CTR | Citations, mentions, SoV |
| Content strategy | Keywords and backlinks | Data, entities, structure |
| Competition | 10 blue links | 2 to 7 cited sources |

Data from the 2026 GEO Benchmark Study makes the levers concrete. Pages with more than 20,000 characters receive 4.3x more citations than thin content. Adding 3 to 5 original statistics boosts citation probability by up to 40%. Including expert quotations lifts visibility by as much as 41%. And leading with the answer — front-loading the key claim in the first third of the content — doubles citation frequency.

Structured heading hierarchies matter too. Of all ChatGPT citations, 68.7% come from pages that follow a strict H1→H2→H3 structure. AI models parse content the way a researcher skims an article: they follow the structure, extract the data, and move on.
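The source doesn’t define “strict” precisely. Assuming it means exactly one H1 and no skipped levels (an H2 followed directly by an H4, for example), a page can be checked with Python’s standard-library `html.parser`. This is a sketch of one reasonable interpretation, not a rule any AI engine has published.

```python
from html.parser import HTMLParser


class HeadingCollector(HTMLParser):
    """Collects heading levels (1-6) in document order."""

    def __init__(self) -> None:
        super().__init__()
        self.levels: list[int] = []

    def handle_starttag(self, tag, attrs):
        # HTMLParser lowercases tag names; match h1..h6 but not <hr> or <head>.
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def heading_problems(html: str) -> list[str]:
    """Flag a missing or duplicate H1 and any skipped heading level."""
    parser = HeadingCollector()
    parser.feed(html)
    levels = parser.levels
    problems = []
    if levels.count(1) != 1:
        problems.append(f"expected exactly one h1, found {levels.count(1)}")
    for prev, cur in zip(levels, levels[1:]):
        if cur > prev + 1:  # going deeper by more than one level
            problems.append(f"skipped level: h{prev} -> h{cur}")
    return problems
```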

The other lever is off-page. AI agents evaluate consensus across the web, not just a brand’s own site. The more consistently a brand is described — same name, same category, same use case — across diverse credible sources, the more trustworthy it appears to AI models. This is why digital PR, review platforms, and knowledge panel management are now core GEO tactics, not optional extras.

How to Find Out If AI Search Engines Recommend You

The audit starts with a shift in mindset: from rank tracking to presence monitoring.

Step 1: Build a prompt set. Identify 20 to 50 prompts that reflect how buyers actually search — branded queries, category queries, and comparative queries. “Who are the leading [category] platforms?” is more useful than testing your exact brand name.
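A prompt set is easiest to keep consistent when it’s generated from templates. The templates, brand names, and the `build_prompt_set` helper here are hypothetical placeholders. What matters is the structure: category, comparative, and branded prompts, each expanded only over the variables it uses.

```python
from itertools import product

# Hypothetical templates; replace with phrasings your buyers actually use.
TEMPLATES: dict[str, list[str]] = {
    "category": [
        "Who are the leading {category} platforms?",
        "What is the best {category} for {use_case}?",
    ],
    "comparative": ["{brand} vs {competitor}: which is better for {use_case}?"],
    "branded": ["Is {brand} a good choice for {use_case}?"],
}


def build_prompt_set(
    brand: str, competitors: list[str], category: str, use_cases: list[str]
) -> list[tuple[str, str]]:
    """Expand each template into (prompt_type, prompt_text) pairs."""
    prompts = []
    for kind, templates in TEMPLATES.items():
        for template in templates:
            # Expand only over the variables a template actually uses,
            # so templates without {competitor} aren't duplicated.
            rivals = competitors if "{competitor}" in template else [None]
            uses = use_cases if "{use_case}" in template else [None]
            for competitor, use_case in product(rivals, uses):
                text = template.format(
                    brand=brand, category=category,
                    competitor=competitor, use_case=use_case,
                )
                prompts.append((kind, text))
    return prompts
```

For one brand, two competitors, and two use cases, these templates yield 9 prompts; adding templates or use cases scales the set toward the 20 to 50 range.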

Step 2: Test across platforms, repeatedly. A one-off screenshot is useless in a probabilistic environment. Sample each prompt 3 to 5 times per engine across ChatGPT, Gemini, Perplexity, and any emerging models relevant to your market (DeepSeek, Qwen, Doubao). Note how often your brand appears, how it’s described, and where in the response it lands.
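The repeated-sampling loop can be sketched as follows. `ask_engine` is a hypothetical stub that returns canned answers at random to mimic non-deterministic output; swapping in real API clients is left to you, and this sketch assumes nothing about their names or signatures. The result is a mention rate for each engine and prompt pair, with the 5-run upper end of the suggested range as the default.

```python
import random
from collections import defaultdict

# Engine labels only; this sketch makes no real API calls.
ENGINES = ["chatgpt", "gemini", "perplexity"]


def ask_engine(engine: str, prompt: str, rng: random.Random) -> str:
    """Hypothetical stub: replace with a real client per engine.

    Picks a canned answer at random to mimic non-deterministic output.
    """
    return rng.choice([
        "Acme and Globex lead the market.",
        "Globex is the usual pick.",
    ])


def sample_visibility(
    prompts: list[str], brand: str, runs: int = 5, seed: int = 0
) -> dict[tuple[str, str], float]:
    """Mention rate of `brand` for every (engine, prompt) pair over `runs` samples."""
    if runs < 1:
        raise ValueError("runs must be at least 1")
    rng = random.Random(seed)  # seeded so the stub's output is reproducible
    hits: dict[tuple[str, str], list[bool]] = defaultdict(list)
    for engine in ENGINES:
        for prompt in prompts:
            for _ in range(runs):
                answer = ask_engine(engine, prompt, rng)
                hits[(engine, prompt)].append(brand.lower() in answer.lower())
    return {key: sum(flags) / len(flags) for key, flags in hits.items()}
```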

Step 3: Analyze citations. Look at the URLs AI engines are actually citing. Are they your owned assets? Competitor content from page two of Google? Third-party reviews you’ve never seen? The answers show where your content is falling short of the AI’s retrieval logic.
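Bucketing cited URLs by domain is a quick first pass at this analysis. A minimal sketch using the standard-library `urllib.parse`: the owned and competitor domain lists are inputs you supply, and anything else is treated as third-party. Counting third-party hosts surfaces the domains that feed AI answers about your category.

```python
from collections import Counter
from urllib.parse import urlparse


def classify_citations(
    urls: list[str], owned_domains: list[str], competitor_domains: list[str]
) -> tuple[Counter, Counter]:
    """Bucket cited URLs as owned, competitor, or third-party by hostname."""

    def matches(host: str, domains: list[str]) -> bool:
        # Match the domain itself or any subdomain (blog.acme.com -> acme.com).
        return any(host == d or host.endswith("." + d) for d in domains)

    buckets: Counter = Counter()
    third_party_hosts: Counter = Counter()
    for url in urls:
        host = (urlparse(url).hostname or "").lower()
        if host.startswith("www."):
            host = host[4:]
        if matches(host, owned_domains):
            buckets["owned"] += 1
        elif matches(host, competitor_domains):
            buckets["competitor"] += 1
        else:
            buckets["third_party"] += 1
            third_party_hosts[host] += 1
    return buckets, third_party_hosts
```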

For teams running this at scale, Topify automates the process across all major platforms — ChatGPT, Gemini, Perplexity, DeepSeek, Doubao, and Qwen. Its Visibility Tracking measures mention frequency against competitors in real time. Source Analysis identifies which third-party domains are feeding AI knowledge of your brand, surfacing gaps for targeted digital PR. Sentiment Analysis monitors how each platform frames your brand, so you’re not guessing at the narrative AI is building on your behalf.

The manual process works for a one-time audit. The automated process is what makes ongoing optimization possible.

Conclusion

The research is unambiguous. AI search engines don’t inherit your Google rankings. They build their own picture of which brands are trustworthy, relevant, and worth recommending — and they do it using signals most marketing teams aren’t optimizing for.

Ranking #1 on Google while being invisible to ChatGPT isn’t a theoretical risk. It’s the current reality for a significant share of brands.

The fix isn’t to abandon SEO. It’s to recognize that GEO is now a parallel discipline — one with different content requirements, different success metrics, and a different competitive set. The brands that establish their AI Share of Voice in 2026 will be the ones that show up in the recommendations their buyers are already relying on.

FAQ

What is an AI search engine?

An AI search engine uses large language models to understand natural language queries, retrieve data from the live web or training data, and generate synthesized answers with citations. Unlike traditional engines that return links, AI engines return direct recommendations.

How is an AI search engine different from Google?

Google uses a deterministic algorithm to rank pages and return a list of links. AI search engines are probabilistic — they decompose queries, retrieve from dozens of sources, and synthesize a single response. The output is a recommendation, not a list.

How do brands get mentioned in AI search results?

Through a combination of entity clarity, third-party consensus, and content that’s easy for AI to parse. Structured headings, original data, expert quotations, and consistent mentions across credible third-party sources all improve citation frequency.

What is AI search engine optimization?

Often called GEO (Generative Engine Optimization) or AEO (Answer Engine Optimization), it’s the practice of structuring content so AI platforms can discover, extract, and cite it. Key tactics include front-loading answers, strict heading hierarchies, adding original statistics, and managing third-party trust signals.

How can I check if my brand appears in AI search?

Run a standardized prompt set across ChatGPT, Gemini, and Perplexity, sampling each prompt 3 to 5 times. Tools like Topify automate cross-platform tracking of mention frequency, sentiment, and citation sources.