AI is answering your customers’ questions right now. The part most brands haven’t figured out yet: they have no idea what it’s saying.

That gap is wider than it looks. According to recent research, 75% of AI search sessions end without a single click to an external site. Users get their answer, make a judgment, and move on. Your brand either shaped that judgment, or it didn’t.

The tools most teams are using weren’t built for this. And the ones marketed as “AI trackers” often stop at the most surface-level metric available: whether your brand name showed up somewhere in the answer.

That’s not enough. Here’s the checklist that actually matters.

Most AI Trackers Stop at Mentions. That’s Where the Problem Starts.

Showing up in an AI answer and being recommended by an AI answer are two completely different outcomes.

A mention with a caveat (“some users report issues with…”) can actively undermine a purchase decision before the buyer ever lands on your site. Meanwhile, a brand named first with a clear endorsement captures the majority of user trust in that interaction.

Traditional SEO tools weren’t built to tell the difference. They track blue links and static rankings. Generative engines don’t work that way: they produce synthesized, conversational responses where position, tone, and source all shape the outcome. Research shows that queries with AI features present have already seen a 61% drop in traditional organic CTR. What happens inside that AI answer has real revenue consequences.

The five metrics below are what a real AI tracker needs to measure.

#1 — Visibility Rate: Is Your Brand Actually Showing Up?

The first thing to track isn’t whether you appear in AI. It’s where, how often, and across which platforms.

One platform is not a data point. It’s a blind spot.

ChatGPT, Gemini, Perplexity, and Claude each use fundamentally different retrieval mechanisms and training datasets. Perplexity prioritizes real-time data and forum discussions. Gemini leans into Google’s established trust graph. A brand that appears consistently in ChatGPT responses may be completely absent from Perplexity, and vice versa.

There’s a compounding challenge here: AI models are non-deterministic. Analysis of 10,000 keywords found that only 9.2% of cited URLs remained consistent when the same query was run just three times in a single day. Visibility isn’t a fixed number. It’s a probability, and it needs to be tracked accordingly through repeat sampling across engines.
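To make “visibility as a probability” concrete, here is a minimal sketch of how repeat sampling could be summarized. It assumes you have already run one prompt several times on one engine and recorded whether the brand appeared in each run. The function name `visibility_rate` and the sample data are hypothetical, and the Wilson interval is just one reasonable way to show how uncertain a small sample is.

```python
import math

def visibility_rate(appearances: list[bool], z: float = 1.96) -> tuple[float, float, float]:
    """Return (rate, low, high): the share of runs that mention the brand,
    plus a Wilson score interval (z = 1.96 gives roughly 95% coverage)."""
    n = len(appearances)
    if n == 0:
        raise ValueError("need at least one sampled run")
    p = sum(appearances) / n
    denom = 1 + z**2 / n
    centre = (p + z**2 / (2 * n)) / denom
    margin = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return p, max(0.0, centre - margin), min(1.0, centre + margin)

# Hypothetical data: 10 runs of the same prompt; True means the brand appeared.
runs = [True, False, True, True, False, True, False, True, True, False]
rate, low, high = visibility_rate(runs)
print(f"visibility {rate:.0%} (interval {low:.0%} to {high:.0%})")
```

With only ten runs the interval is wide, which is the practical argument for sampling each prompt many times before reading anything into a change.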

For e-commerce brands specifically, there’s only a 22.9% overlap between traditional organic rankings and AI citations. Ranking #1 in Google does not mean you’re showing up in AI answers. Most brands haven’t checked.

Topify tracks brand visibility across ChatGPT, Gemini, Perplexity, DeepSeek, and other major AI platforms simultaneously, running prompts multiple times per session to build a statistically reliable visibility trend rather than a one-off snapshot.

#2 — Sentiment Score: Being Named Isn’t the Same as Being Recommended

Once you know you’re appearing in AI answers, the next question is: what exactly is the AI saying about you?

According to Gartner research from 2025, 73% of B2B buyers now trust AI product recommendations over traditional advertisements. That makes the quality of the AI’s mention more influential than most brands realize.

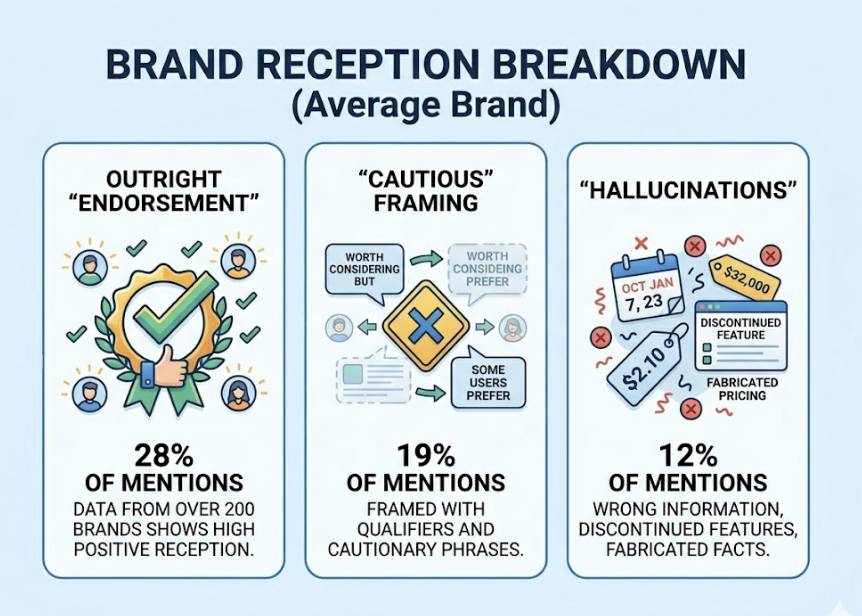

Data from over 200 brands shows the average brand receives an outright “Endorsement” rate of only 28% across category prompts where it appears. Many of the other mentions are weaker or worse: 19% of mentions are “Cautious” (framed with phrases like “some users prefer” or “worth considering but”), and 12% are outright hallucinations: fabricated pricing, discontinued features, wrong information presented as fact.

A hallucination doesn’t just confuse potential customers. It can spread. As AI models pull from web content to train and update, incorrect information can get absorbed and repeated across platforms, creating a cycle that’s difficult to reverse without active monitoring.

The sentiment spectrum runs from explicit endorsement all the way down to negative mention and hallucination. A good AI tracker scores each mention on this spectrum and flags anomalies before they cause downstream damage.
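As a rough illustration of scoring mentions on that spectrum, the sketch below assumes each mention has already been labeled (by a reviewer or a classifier) and simply reports the share of each label, then flags a high hallucination share. The label names come from the categories in this article. The functions, the 5% threshold, and the sample data are hypothetical and do not represent any vendor’s scoring method.

```python
from collections import Counter

# Hypothetical label set based on the categories named in this article.
LABELS = ("endorsement", "neutral", "cautious", "negative", "hallucination")

def sentiment_breakdown(labels: list[str]) -> dict[str, float]:
    """Return the share of mentions carrying each label."""
    if not labels:
        raise ValueError("need at least one labeled mention")
    unknown = set(labels) - set(LABELS)
    if unknown:
        raise ValueError(f"unknown labels: {sorted(unknown)}")
    counts = Counter(labels)
    return {label: counts[label] / len(labels) for label in LABELS}

def needs_review(breakdown: dict[str, float], max_hallucination: float = 0.05) -> bool:
    """Flag the brand when the hallucination share exceeds an assumed threshold."""
    return breakdown["hallucination"] > max_hallucination

# Hypothetical labeled mentions collected for one brand.
mentions = ["endorsement", "cautious", "neutral", "endorsement", "hallucination",
            "neutral", "cautious", "endorsement", "neutral", "neutral"]
breakdown = sentiment_breakdown(mentions)
flagged = needs_review(breakdown)
print(breakdown, flagged)
```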

Topify’s Sentiment Analysis assigns a 0-100 score to brand mentions across AI platforms, tracking whether the AI is recommending you, mentioning you neutrally, qualifying you with caveats, or actively misrepresenting your brand.

#3 — Competitive Position: Where You Land Relative to Everyone Else

You could be in the answer and still be losing.

In a synthesized AI response, order matters. A brand named first in a recommendation list captures disproportionate attention and trust. A brand mentioned third, after two competitors, often functions as an afterthought regardless of its actual quality.
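One simple way to approximate “named first” is to order brands by where they are first mentioned in the answer text. The sketch below does only that. The brand names and the answer are made up, and `mention_order` is a hypothetical helper. It ignores tone and list formatting, so treat it as a starting point rather than a full position metric.

```python
import re

def mention_order(answer: str, brands: list[str]) -> list[str]:
    """Return the brands found in the answer, ordered by first mention.
    Matching is case-insensitive on whole words; brand names that end in
    punctuation would need a different boundary rule."""
    positions = {}
    for brand in brands:
        match = re.search(rf"\b{re.escape(brand)}\b", answer, flags=re.IGNORECASE)
        if match:
            positions[brand] = match.start()
    return sorted(positions, key=positions.get)

# Hypothetical AI answer and brand list.
answer = ("For a small sales team, Northwind CRM is the usual first pick. "
          "Acme CRM is also solid, and Globex is worth a look.")
order = mention_order(answer, ["Acme CRM", "Globex", "Northwind CRM", "Initech"])
print(order)
```

Brands missing from the answer are left out of the result, so a brand’s position is its index in the returned list plus one.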

The data on this is hard to ignore. Brands cited in AI Overviews earn a 35% higher organic CTR compared to uncited brands in the same query. AI-referred visitors convert at rates 4.4 times higher than traditional organic visitors according to Semrush, and as high as 23 times higher according to Ahrefs analysis.

Position inside the AI answer is a direct revenue variable.

AI citation probability also follows a clear decay curve. A brand ranking #1 in Google has a 33.07% probability of being cited in AI results. By positions #6-10, that probability drops to the 13-17% range. Below #11, it falls under 5%. Overall, 76.1% of URLs cited in AI Overviews come from Google’s top 10 results.

What this means: your AI visibility strategy and your SEO strategy are more connected than they look, but they aren’t the same. Tracking where you rank relative to competitors inside AI answers is a distinct data layer that requires its own tool.

Topify’s Competitor Monitoring tracks your position relative to competitors across AI platforms in real time, so you can see exactly when a rival moves ahead of you in AI recommendations and understand why.

#4 — Source Attribution: Which URLs Is AI Actually Pulling From?

AI doesn’t generate information out of thin air. It pulls from specific sources to ground its answers.

Knowing which URLs an AI engine is citing is one of the most actionable data points available to a content team. If a competitor’s blog post is being referenced every time someone asks a category question in your space, that URL is part of the trust graph your brand needs to influence.
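If you already collect the URLs cited in AI answers for your category prompts, a first pass at source attribution can be as simple as counting citations per domain. The sketch below assumes a plain list of cited URLs. `cited_domains` and the example URLs are hypothetical, and it needs Python 3.9 or later for `str.removeprefix`.

```python
from collections import Counter
from urllib.parse import urlparse

def cited_domains(urls: list[str]) -> list[tuple[str, int]]:
    """Count citations per domain, most cited first. Entries without a
    parseable hostname are skipped."""
    counts = Counter()
    for url in urls:
        host = urlparse(url).hostname
        if host:
            counts[host.removeprefix("www.")] += 1
    return counts.most_common()

# Hypothetical citations gathered from AI answers to category prompts.
citations = [
    "https://www.example.com/blog/crm-guide",
    "https://example.com/pricing",
    "https://reviews.example.org/top-crm",
    "not a url",
]
ranking = cited_domains(citations)
print(ranking)
```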

Here’s a counterintuitive finding from GEO research: adding credible external citations to your own content can increase your AI visibility by 115%. In traditional SEO, linking out to other sites was something to minimize. In the AI era, fact density and external credibility markers are exactly what makes content more citable.

The structural point matters too. Research shows that 44.2% of all LLM citations come from the first 30% of the text. If your answer to a common industry question is buried in paragraph eight, AI engines often won’t find it.
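A quick way to audit your own pages against that pattern is to check how far into the text the key answer first appears. The sketch below measures position by character offset, which is an assumption, since the research cited above does not say how the 30% was measured. `answer_position` and the sample page are hypothetical.

```python
from typing import Optional

def answer_position(text: str, key_phrase: str) -> Optional[float]:
    """Return how far into the text (0.0 to 1.0, by character offset) the
    key phrase first appears, or None if it is missing."""
    index = text.lower().find(key_phrase.lower())
    if index == -1:
        return None
    return index / len(text)

# Hypothetical page: the answer comes first, background detail follows.
page = ("A CRM with automated follow-up reminders fixes most lost deals. "
        + "Background detail. " * 20)
position = answer_position(page, "automated follow-up reminders")
in_first_30_percent = position is not None and position <= 0.30
print(position, in_first_30_percent)
```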

Knowing which sources AI is currently citing gives you a direct map for content investment. Topify’s Source Analysis tracks the exact domains and URLs that AI platforms pull from when answering prompts in your category, showing you where authority is concentrated and where the gaps are.

#5 — Prompt Coverage: Are You Tracking the Questions That Actually Matter?

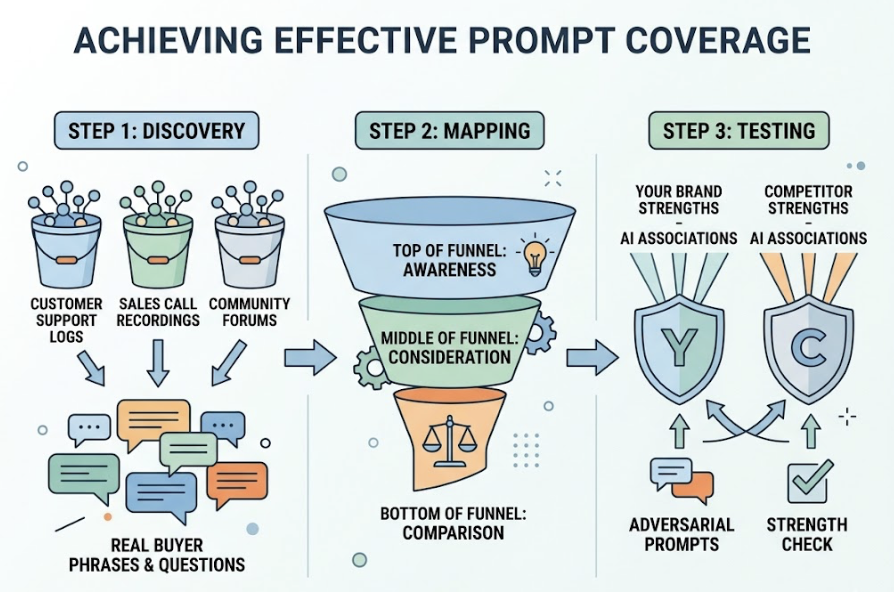

An AI tracker is only as good as the prompts it monitors. And most tools let you set prompts without helping you figure out which prompts to set.

This is a bigger problem than it sounds.

An estimated 70% of AI prompts are invisible to traditional SEO tools because they’re long-form, conversational, and multi-step in ways that keyword tools weren’t designed to capture. Users don’t type “best CRM software” into ChatGPT. They ask “I’m running a 12-person sales team and we keep losing deals in the follow-up stage, what CRM would actually fix that?” The brand that shows up in that answer wins. The brand that’s only tracking short-tail keywords never sees it coming.

The data gets sharper in B2B. In SaaS specifically, there’s a 40-60% disconnect between Google search ranking and AI citation share. Brands that rank #1 organically can have near-zero presence in AI recommendations, simply because they don’t surface in the prompts that matter to their buyers.

Effective prompt coverage requires discovery, not just monitoring. That means pulling from customer support logs, sales call recordings, and community forums to find how real buyers actually phrase their questions. It means mapping prompts across intent levels from top-of-funnel awareness to bottom-of-funnel comparison. And it means testing “adversarial prompts” to check whether AI engines associate specific strengths with your brand or your competitors.
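Mapping prompts across intent levels can start with a simple gap check: tag each tracked prompt with a funnel stage and list the stages that have no prompts at all. In the sketch below, the stage names are an assumption (the middle “consideration” stage in particular is not from this article), and `coverage_gaps` and the sample prompts are hypothetical.

```python
from collections import defaultdict

# Assumed funnel stages, ordered from top of funnel to bottom.
STAGES = ("awareness", "consideration", "comparison")

def coverage_gaps(tracked: list[tuple[str, str]]) -> list[str]:
    """Given (prompt, stage) pairs, return the stages with no tracked prompt."""
    by_stage = defaultdict(list)
    for prompt, stage in tracked:
        if stage not in STAGES:
            raise ValueError(f"unknown stage: {stage}")
        by_stage[stage].append(prompt)
    return [stage for stage in STAGES if not by_stage[stage]]

# Hypothetical tracked prompts, phrased the way buyers actually ask.
tracked_prompts = [
    ("why do sales teams lose deals in the follow-up stage?", "awareness"),
    ("what CRM would fix follow-up for a 12-person sales team?", "consideration"),
]
gaps = coverage_gaps(tracked_prompts)
print(gaps)
```

Here the output shows that no bottom-of-funnel comparison prompt is being tracked, which is the kind of blind spot a static prompt list tends to hide.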

Topify continuously surfaces new high-value prompts as AI recommendations evolve, rather than locking you into a static list that gets stale as user behavior shifts.

When All 5 Work Together, You Stop Guessing and Start Acting

Each of these metrics has standalone value. Visibility tells you if you’re in the room. Sentiment tells you if the room is listening. Position tells you where you’re standing relative to competitors. Source attribution tells you which doors to walk through. Prompt coverage tells you which conversations to show up for.

But the real advantage comes from running all five as a connected loop: analyze where AI authority is concentrated, create content built to be cited, distribute through sources AI engines already trust, and measure the impact continuously.

That’s the difference between hoping your brand appears in AI answers and engineering it.

Topify is built around this five-pillar framework, combining visibility tracking, sentiment scoring, competitive position monitoring, source attribution, and prompt discovery in a single platform. It’s used by 50+ enterprises and startups to turn AI visibility from an unknown into a measurable growth channel.

Conclusion

The brands that build an early advantage in AI search won’t do it by accident. They’ll do it by measuring what actually matters: not whether they showed up, but how they showed up, where they ranked, what the AI said about them, which sources drove the mention, and whether they’re tracking the prompts that buyers are actually using.

The five-pillar checklist above is the starting point. The brands ignoring it are leaving their AI narrative to chance.

Frequently Asked Questions

What is an AI tracker?

An AI tracker is a tool that monitors how your brand appears in AI-generated responses across platforms like ChatGPT, Gemini, and Perplexity. Beyond simple mention detection, a comprehensive AI tracker measures visibility rate, sentiment, competitive position, source attribution, and prompt coverage.

Why isn’t Google Analytics enough for tracking AI visibility?

Google Analytics tracks behavior after someone clicks to your site. It can’t tell you what happened inside the AI answer: whether you were mentioned, how you were framed, or where you ranked relative to competitors. AI visibility requires a separate tracking layer entirely.

How often should I run AI tracking reports?

Because AI responses are non-deterministic (in one analysis, only 9.2% of cited URLs stayed consistent when the same query was run three times in a single day), single snapshots aren’t reliable. Tracking should run continuously, with prompts sampled multiple times per session across platforms to build statistically meaningful trend data.

What’s the difference between an AI mention and an AI endorsement?

A mention means your brand name appeared in an AI response. An endorsement means the AI actively recommended your brand using language that signals trust and preference. Research shows brands receive outright endorsements only 28% of the time they’re mentioned, making sentiment tracking essential.

Do traditional SEO rankings affect AI visibility?

Yes, but the relationship isn’t 1:1. Around 76.1% of AI-cited URLs come from Google’s top 10 results, so SEO matters. That said, there’s only a 22.9% overlap between traditional rankings and AI citations in e-commerce, and a 40-60% disconnect in SaaS. High organic rank does not guarantee AI visibility.