Your competitor’s URL is being recommended in ChatGPT right now. Not mentioned. Cited. That means a user searching for your category just received a synthesized answer that named another brand as the source of truth, and you weren’t even in the room.

That’s the gap most marketing teams still can’t see.

Organic click-through rates have dropped roughly 61% for queries that trigger an AI Overview, yet being cited in an AI answer now drives approximately 35% more organic clicks and 91% more paid clicks for the brands that make the cut. The math is simple: AI visibility is becoming winner-take-most. And the brands that are winning built a system to track and optimize their citations.

This article breaks down what an LLM citation tracking system is, how it works technically, which metrics actually matter, and how to build a strategy that earns you more of those citation slots.

LLM Citation Tracking Is Not the Same as Brand Monitoring

Most teams assume their social listening stack already covers AI visibility. It doesn’t.

Traditional monitoring tools track “mentions” — your brand name appearing somewhere in text. An LLM citation is structurally different. It’s when a model identifies a specific URL or domain as a verified source for a specific claim it’s making. One is passive recognition. The other is active endorsement.

The data is stark: only 6% to 27% of the most frequently mentioned brands actually function as trusted citation sources in AI responses. You can be talked about constantly and still be invisible where it counts.

The underlying mechanism explains why. Mentions typically come from the model’s parametric memory — information baked in during training. Citations come from Retrieval-Augmented Generation (RAG), where the model fetches live web content to ground its answers in verifiable sources. RAG-driven citations carry significantly higher authority and referral potential than anything pulled from training memory.

Traditional SEO tools track keyword rankings on stable results pages. An LLM citation tracking system is built for a completely different environment: session-based, dynamic, operating at the URL level, not the keyword level.

How an LLM Citation Tracking System Actually Works

The core workflow moves through four sequential phases.

Phase 1: Prompt Engineering and Intent Mapping. The system starts with a library of 20–50 natural language queries that reflect real buyer behavior across the full funnel — discovery, comparison, and problem-solving. This isn’t keyword research. It’s simulating how actual users talk to AI. Phrasing matters here: ChatGPT runs 3.5 times more sub-searches than Perplexity for the same query, so prompt variation is essential to capture the full citation picture.

Phase 2: Multi-Platform Extraction. The system queries each AI engine simultaneously — ChatGPT, Perplexity, Gemini, and Google AI Overviews. This step is non-negotiable. Only 11% of domains earn citations from both ChatGPT and Perplexity for the same query. What works on one platform is often invisible on another.

Phase 3: Source Domain Identification. Every URL in every response gets extracted and classified into three buckets: owned domains, competitor domains, and third-party authorities like Reddit, Wikipedia, or industry publications. This mapping tells you exactly who the AI trusts when it answers questions about your category.

Phase 4: Brand Annotation and Sentiment Scoring. The system scores whether your brand was cited, mentioned, or omitted — and evaluates the sentiment context. Being cited as a cautionary example is categorically different from being cited as a recommended solution. Both need to be tracked.

Why Reliable AI Search Brands Dominate the Citation Landscape

Top AI search brands don’t just show up more often. They’ve engineered their content to match how LLMs retrieve and reproduce information.

Brand search volume shows a 0.334 correlation with AI visibility — the strongest single predictor identified in recent research. Backlinks, by contrast, show a weak or neutral relationship. LLMs are trained to favor brands that users are already searching for directly. The more people look you up, the more the model treats you as a default authority.

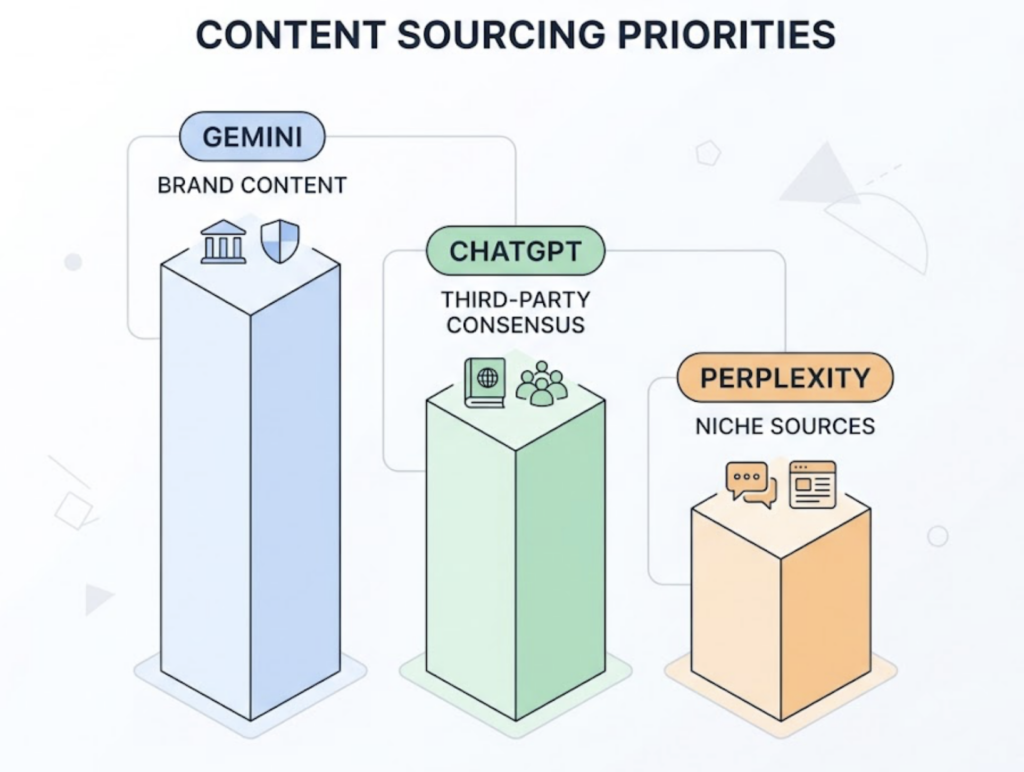

Platform sourcing behavior also varies more than most teams realize. Gemini prioritizes brand-owned content, drawing 52.15% of its citations from official websites. ChatGPT skews toward third-party consensus at 48.73%, favoring directories, Wikipedia, and user-generated platforms. Perplexity draws 46.7% of its top citations from Reddit and specialized industry blogs.

There’s no universal citation strategy. There’s a platform-specific one.

Once a brand crosses the initial citation threshold, a compounding effect takes hold. Citations drive 35% more organic clicks and 91% more paid clicks in Google AI Overviews. That engagement signals content quality back to the model, which increases the probability of being cited again. The first citation slot is the hardest to earn. After that, it tends to reinforce itself.

5 Metrics That Define a Working LLM Citation Tracking System

Meaningful tracking goes beyond counting brand appearances. Here are the five metrics that map to actual business outcomes.

1. Citation Frequency. How often your brand is explicitly cited across your tracked prompt set. Aim for at least 30% on core commercial queries to maintain category relevance. Anything below that, and you’re ceding the narrative to whoever shows up instead.

2. Citation Share of Voice (SOV). Your citations as a percentage of total citations across your competitive set. Because AI answers are often zero-sum — only 1–3 sources cited per claim — SOV is the most direct signal of competitive position. A weighted formula, where first-position citations score higher, gives the most accurate read.

3. Source Domain Coverage. Which external domains is the AI citing when it doesn’t cite you? If ChatGPT is pulling a competitor’s comparison page to answer questions about your category, you’ve found a distribution gap. 100% coverage on your own trademark terms is a floor, not a ceiling.

4. Citation Position. Users typically verify only the first two cited sources in an AI response. Being third or fourth is close to being omitted. Tracking average position — not just presence — is what separates tracking programs that generate action from ones that generate reports nobody reads.

5. Sentiment Context. In health-related queries alone, only 40.4% of AI responses have complete citation support, which means hallucinated or misattributed claims are common across categories. Sentiment and faithfulness tracking catches cases where the AI is citing you inaccurately or in a negative context before those representations compound over time.

| Metric | Business Signal | Target Benchmark |

|---|---|---|

| Citation Frequency | Presence in AI answer set | >30% for core queries |

| Share of Voice | Competitive dominance | >20% within category |

| Source Domain Coverage | Content gap identification | 100% on trademark terms |

| Citation Position | Visibility and click-through | Top 2 citation slots |

| Sentiment Context | Brand trust and accuracy | >0.7 positive score |

4 Mistakes That Quietly Kill Your LLM Citation Strategy

Most citation programs fail not from lack of effort, but from structural errors in how they’re built.

Mistake 1: Tracking mentions instead of sources. Knowing your brand was mentioned doesn’t tell you which URL the AI retrieved. Without source-level data, you can’t identify what content to improve or where to distribute more. Tracking must happen at the domain and URL level to produce anything actionable.

Mistake 2: Only tracking ChatGPT. It’s the most recognizable platform, so teams default to it. But ChatGPT and Perplexity agree on citations only 11% of the time. A strategy calibrated for ChatGPT — which relies heavily on Wikipedia — will consistently miss Perplexity, which skews toward Reddit and niche blogs. Minimum viable coverage is four platforms.

Mistake 3: Ignoring competitor citation patterns. A 30% citation frequency looks strong in isolation. If your primary competitor sits at 70%, you’re losing the category narrative by a wide margin. Competitive benchmarking turns a vanity metric into a real strategic signal.

Mistake 4: Running monthly reports. Citation patterns are volatile. ChatGPT’s reliance on Reddit and Wikipedia shifted significantly in a single week in September 2025. By the time a monthly report reaches someone’s inbox, the sourcing landscape may have already moved. Weekly is the working standard. Daily is better for high-stakes categories.

Build a Citation Strategy That Actually Moves the Numbers

Once your tracking infrastructure is in place, the optimization layer breaks down into four steps.

Step 1: Audit the citation landscape. Identify the top 10 domains being cited for your core product categories using your tracking tool. If you’re not among them, diagnose whether the gap is technical (AI crawlers blocked, JS rendering issues) or content-based (no structured formats, missing schema). Research shows that seeding content on Reddit and industry wikis delivers 2.8x higher citation likelihood compared to owned-media-only strategies.

Step 2: Optimize for machine extractability. LLMs retrieve information in small chunks — sometimes a single sentence or table row. Lead with a 40–60 word direct answer to each core question. Break content into 50–150 word self-contained blocks. Add FAQPage and Product schema in JSON-LD. Use comparison tables wherever you’re contrasting options: tables increase citation rates by 2.5x. Listicle formats account for 50% of top AI citations.

Step 3: Distribute beyond owned media. Your website alone isn’t enough. Active presence on high-authority third-party platforms is how AI models validate consensus. That means structured Reddit contributions, updates to relevant Wikipedia entries where appropriate, and placement in industry publications with established citation authority in your category.

Step 4: Automate monitoring and close the feedback loop. Manual checking doesn’t scale. Platforms like Topifyautomate the discovery of citation gaps, competitive shifts, and source domain mapping across ChatGPT, Gemini, Perplexity, and others. The data feeds back into content and distribution decisions in real time, so your strategy adjusts as citation patterns shift — not 30 days after the fact.

| Strategy Layer | Tactical Action | Measured Impact |

|---|---|---|

| Audit | Identify top 10 citing domains per category | Baseline for citation gaps |

| Distribution | Seed content on Reddit and industry wikis | 2.8x higher citation likelihood |

| Formatting | Add comparison tables and FAQ schema | 2.5x increase in citation rate |

| Monitoring | Weekly SOV and sentiment tracking | Early detection of citation drift |

The Best Tools for LLM Citation Tracking

Choosing a tool comes down to four variables: how many platforms it covers, how frequently data refreshes, whether it includes competitor benchmarking, and how granular the source-level analysis gets. That last point is where most tools fall short.

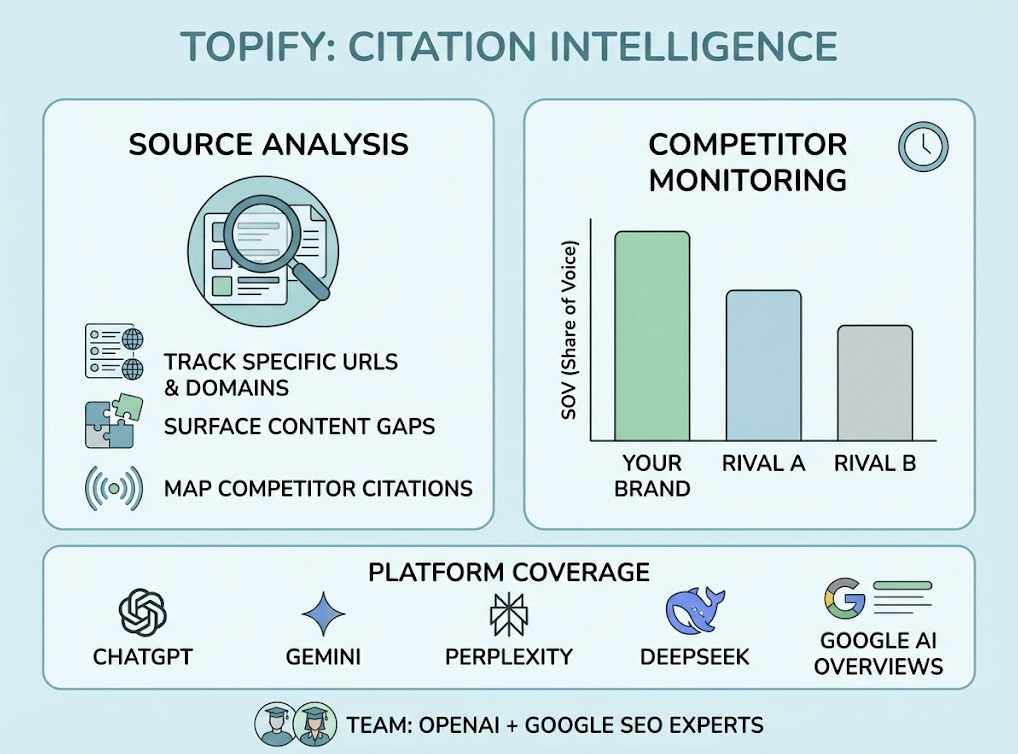

Topify is purpose-built for source-level citation intelligence. Its Source Analysis feature tracks the specific URLs and domains AI platforms are citing, surfaces content gaps, and maps which competitor pages are capturing citations your brand isn’t. Competitor Monitoring provides real-time SOV comparisons across your direct rivals. Platform coverage spans ChatGPT, Gemini, Perplexity, DeepSeek, and Google AI Overviews — built by a team including founding researchers from OpenAI and Google SEO practitioners.

| Plan | Price | What You Get |

|---|---|---|

| Basic | $99/mo | Core platform tracking, 100 prompts, citation frequency metrics, 4 projects, 4 seats |

| Pro | $199/mo | Competitor benchmarking, sentiment analysis, 250 prompts, 22,500 AI answer analyses, 10 seats |

| Enterprise | From $499/mo | API access, multi-brand support, dedicated account manager, custom prompt volume |

For teams just entering AI visibility, the Basic plan covers the core tracking use case. For marketing teams actively managing competitive categories, Pro adds the competitor benchmarking layer that turns citation data into a strategic advantage.

Other tools serve adjacent needs. Otterly AI is an accessible entry point for budget-constrained teams doing basic monitoring. Ahrefs has added AI visibility features useful for teams already embedded in their SEO stack. Enterprise buyers focused on board-ready SOV dashboards will typically evaluate Topify alongside a few larger-scale platforms.

Your LLM Citation Tracking Checklist for the First 30 Days

Tracking Setup

- [ ] Check server logs to confirm GPTBot, ClaudeBot, and PerplexityBot aren’t blocked by your robots.txt or firewall

- [ ] Audit critical pages for JavaScript dependency — core brand and product content must be available in raw HTML

- [ ] Build a prompt library of 20–30 high-intent queries mapped to the top, middle, and bottom of your funnel

- [ ] Set up UTM parameters on all owned URLs to capture and attribute agentic referral traffic from AI responses

Content Audit

- [ ] Identify core pages that could be converted to listicle format or enriched with comparison tables

- [ ] Flag authoritative content not updated in the past six months — recency is a direct citation signal

- [ ] Implement JSON-LD FAQPage and Product schema across all relevant landing and product pages

Competitive Benchmarking

- [ ] Use your tracking tool to identify the top 3 competitors currently cited for your core commercial terms

- [ ] Map whether those competitors are cited from platforms where you’re absent — specific subreddits, industry wikis, niche directories

- [ ] Calculate your baseline weighted SOV across ChatGPT and Perplexity to establish a KPI for the next quarter

Conclusion

The shift from navigational search to generative attribution has changed what “visible” means for a brand. A citation is no longer just a backlink or a passing mention. It’s the AI saying: this source is what I trust when answering this question.

LLM citation tracking systems give marketing teams the data infrastructure to operate in that environment intentionally, not reactively. The brands building these systems now — auditing source domains, closing content gaps, benchmarking competitor SOV in real time — are the ones that will hold the citation slots driving the next wave of high-intent traffic.

The tools exist. The playbook is clear.

The only variable is when you start.

FAQ

What is an LLM citation tracking system? An LLM citation tracking system is a monitoring platform that queries generative AI models like ChatGPT and Perplexity to identify when and where a brand’s URL or domain is cited as a source of truth. Unlike traditional brand monitoring, it operates at the source domain level, tracking the specific links AI engines use to ground their answers.

How does an LLM citation tracking system work? These systems automate the process of querying multiple AI engines with a library of intent-mapped prompts. They extract cited URLs from each response, classify the source domains, measure citation frequency and position, and score the sentiment context of each brand appearance.

What are the best tools for LLM citation tracking? Topify is highly regarded for its source-level detail, competitor monitoring, and broad platform coverage across ChatGPT, Gemini, Perplexity, and DeepSeek. For teams needing a lighter entry point, Otterly AI offers more accessible pricing. Enterprise-scale citation tracking typically requires platforms with API access and multi-brand support.

How do I improve my LLM citation rate? Start with extractable content formats: 40–60 word direct answers, comparison tables, and FAQ schema. Then expand distribution to high-authority third-party platforms. Research shows that structured content with tables delivers 2.5x higher citation rates, and distribution on platforms like Reddit drives 2.8x higher citation likelihood compared to owned-media-only approaches.

How much does an LLM citation tracking system cost? Entry-level monitoring tools start around $29–$99/month. Specialist platforms with source-level analysis and competitor benchmarking, like Topify, range from $99 to $499+/month depending on prompt volume and team size. Enterprise deployments with custom configurations and dedicated support typically start at $500/month.