Your domain authority is 72. Your keyword rankings are solid across three dozen commercial terms. But when someone types “best tool for [your category]” into ChatGPT or Gemini, your brand doesn’t appear once. A competitor with half your backlink profile does.

This isn’t a fluke. It’s what happens when traditional SEO metrics stop explaining AI search behavior. Understanding the gap requires a different framework entirely: AI citation tracking.

What AI Citation Tracking Actually Measures (And Why It’s Not SEO)

AI citation tracking is the practice of monitoring which specific domains and URLs are referenced by generative AI platforms when answering user queries. It measures source selection, not link hierarchy.

That distinction matters more than it sounds. In traditional search, a high-DA site might rank first because of historical backlink volume. But the same site can be invisible in an AI answer if its content is buried behind a paywall, lacks structural clarity, or doesn’t provide a direct, extractable answer to the question the model is trying to resolve.

The correlation data makes this concrete. Backlinks have a strong correlation with Google rankings (r > 0.70) but show low correlation with AI citation rates (r = 0.218). By contrast, topical authority depth correlates at r = 0.41 with AI visibility, and roughly 65% of AI citations go to content published within the past year. Structure and freshness beat popularity.
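If you want to check whether the same pattern holds on your own site, you can compute a Pearson correlation between a per-page authority signal and a per-page citation rate from your tracking data. The sketch below assumes you already have both numbers for each page; the values are invented for illustration and are not the data behind the figures above.

```python
from math import sqrt


def pearson_r(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation coefficient between two equal-length samples."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two samples of equal length, at least 2 points each")
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    # Covariance and variances left unnormalized: the 1/n factors cancel in r.
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when a sample has zero variance")
    return cov / sqrt(var_x * var_y)


# Hypothetical per-page data: referring domains vs. share of tracked prompts citing the page.
referring_domains = [120, 45, 300, 80, 15, 210]
citation_rate = [0.10, 0.30, 0.05, 0.25, 0.20, 0.15]
print(f"r = {pearson_r(referring_domains, citation_rate):.3f}")
```

A handful of pages won’t give you a stable estimate, so treat any r you get from a small sample as directional rather than conclusive.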

There’s also a critical distinction between brand mentions and website citations. A brand mention occurs when an AI names your company in its response. A citation is when it links to your URL as a source of evidence. The gap between these two states, often called the Mention-Citation Gap, is where most brands quietly lose. You’re recognized, but not trusted as a source.
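To see the Mention-Citation Gap in your own data, each answer needs to be labeled as a citation, a mention only, or neither. Here is a minimal sketch, assuming you have the answer text and its list of source URLs for each prompt; the brand and domain names are placeholders.

```python
import re
from urllib.parse import urlparse


def classify_presence(answer_text: str, cited_urls: list[str],
                      brand_name: str, brand_domain: str) -> str:
    """Label one AI answer as 'cited', 'mentioned_only', or 'absent' for a brand."""
    brand_domain = brand_domain.lower()

    def on_brand_domain(url: str) -> bool:
        host = (urlparse(url).hostname or "").lower()
        # Count the bare domain and any subdomain (www., blog., docs.) as owned.
        return host == brand_domain or host.endswith("." + brand_domain)

    if any(on_brand_domain(url) for url in cited_urls):
        return "cited"
    # Whole-word, case-insensitive match of the brand name in the answer body.
    if re.search(rf"\b{re.escape(brand_name)}\b", answer_text, re.IGNORECASE):
        return "mentioned_only"
    return "absent"


answer = "Acme and Globex are both popular choices for remote teams."
sources = ["https://www.globex.example/features", "https://reviews.example.org/pm-tools"]
print(classify_presence(answer, sources, "Acme", "acme.example"))  # mentioned_only
```

The share of prompts labeled `mentioned_only` is one simple way to size your gap: those are the answers where the AI knows you but sources someone else.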

Closing that gap is the actual goal of any serious AI citation tracking service.

How an AI Citation Tracking Service Works: The Mechanics Behind the Data

Most AI citation tracking services work by reverse-engineering the retrieval-augmented generation (RAG) pipeline that modern AI platforms use to generate answers.

When a user submits a prompt, the AI doesn’t just pull from memory. It runs a multi-step process: expanding the query into search terms, retrieving candidate documents from an index (Bing for ChatGPT, Google Search for Gemini), re-ranking those candidates by relevance and clarity, then synthesizing an answer while citing the specific chunks it used.

An AI citation tracking service replicates this process systematically. Step one: submit a library of representative prompts across the buyer journey, from “what is X” to “best X for Y.” Step two: parse the responses to identify every cited URL and brand mention. Step three: aggregate which domains are winning share of voice across your prompt set. Step four: compare your citation footprint against competitors to find the specific source gaps where you should have been cited but weren’t.
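Steps two through four can be sketched in a few functions. This assumes step one is already done and each answer has been saved as plain text keyed by its prompt; real platforms often return citations in a separate structured field, so the URL regex here is a simplification. The domains and prompts are hypothetical.

```python
import re
from collections import Counter
from urllib.parse import urlparse

# Rough URL matcher for answers stored as plain text; stops at whitespace and closing brackets/quotes.
URL_PATTERN = re.compile(r"https?://[^\s)\]>\"']+")


def domain_of(url: str) -> str:
    host = (urlparse(url).hostname or "").lower().rstrip(".")
    return host[4:] if host.startswith("www.") else host


def cited_domains(answer: str) -> set[str]:
    """Step 2: extract every URL in one answer and reduce each to its domain."""
    return {domain_of(url) for url in URL_PATTERN.findall(answer)}


def share_of_voice(responses: dict[str, str]) -> Counter:
    """Step 3: number of prompts in which each domain is cited (one vote per prompt)."""
    counts: Counter = Counter()
    for answer in responses.values():
        counts.update(cited_domains(answer))
    return counts


def source_gaps(responses: dict[str, str], my_domain: str,
                competitors: set[str]) -> dict[str, set[str]]:
    """Step 4: prompts where at least one competitor is cited and you are not."""
    gaps: dict[str, set[str]] = {}
    for prompt, answer in responses.items():
        domains = cited_domains(answer)
        rivals = domains & competitors
        if rivals and my_domain not in domains:
            gaps[prompt] = rivals
    return gaps


# Hypothetical answers already collected in step 1, keyed by prompt.
responses = {
    "best project management tool for remote teams":
        "Globex leads for async work. Sources: https://www.globex.example/compare "
        "https://reviews.example.org/pm-tools",
    "what is a gantt chart":
        "A Gantt chart maps tasks against time (https://acme.example/guides/gantt).",
}
print(share_of_voice(responses).most_common())
print(source_gaps(responses, "acme.example", {"globex.example"}))
```

The gap report is the actionable output: each entry names a prompt and the competitor domain the AI chose instead of yours.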

Platform-specific behavior adds another layer of complexity. Gemini tends to prioritize brand-owned websites and official domains. ChatGPT leans toward directories and third-party consensus sources like Yelp or industry listings. Perplexity favors niche expertise and specialist publications. Claude relies heavily on community and user-generated content like Reddit and forums.

This means a brand that tracks only one platform is likely misreading its actual AI visibility position.
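Breaking citation frequency out by platform makes this visible. The sketch below assumes each observation is a hypothetical `(platform, prompt, cited)` tuple from your tracking runs.

```python
from collections import defaultdict

# Hypothetical observation shape: (platform, prompt, was_my_domain_cited).
Observation = tuple[str, str, bool]


def frequency_by_platform(observations: list[Observation]) -> dict[str, float]:
    """Share of tracked prompts, per platform, in which your domain was cited."""
    totals: dict[str, int] = defaultdict(int)
    hits: dict[str, int] = defaultdict(int)
    for platform, _prompt, cited in observations:
        totals[platform] += 1
        hits[platform] += int(cited)
    return {platform: hits[platform] / totals[platform] for platform in totals}


observations = [
    ("chatgpt", "q1", False), ("chatgpt", "q2", False),
    ("gemini", "q1", True), ("gemini", "q2", True),
    ("perplexity", "q1", False), ("perplexity", "q2", True),
]
print(frequency_by_platform(observations))
```

In this made-up example the pooled rate is 50%, but a Gemini-only view would report 100% and a ChatGPT-only view 0%. Either single-platform reading would be misleading.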

5 Signals That Your AI Citation Strategy Needs a Fix

For most teams, AI citation problems don’t show up in dashboards. They show up as quiet losses you can’t explain.

Signal 1: The competitive frequency imbalance. Your competitors appear in AI answers 3x more often than your brand across the same set of industry prompts, even when your organic rankings are comparable. The AI has decided their content is more citable, typically because it’s better structured for extraction by a RAG system.

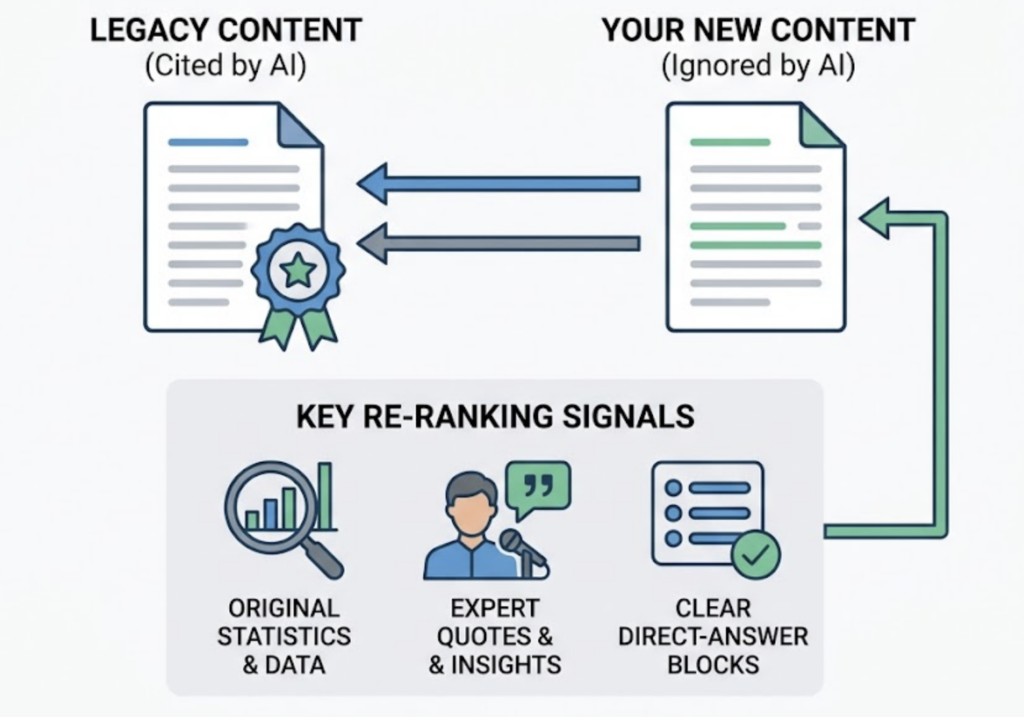

Signal 2: Older competitor content beats your new content. The AI keeps citing a competitor’s three-year-old article while ignoring your comprehensive, recently updated piece. This usually means your new content lacks the signals that help AI re-rankers treat it as an authoritative source, such as original statistics, expert quotes, or clear direct-answer blocks.

Signal 3: High-traffic pages with zero citations. Your top organic articles are pulling solid traffic but have a citation rate near zero. The content is “AI-opaque”: it may be too long-winded, structured for human reading rather than chunk extraction, or missing the direct-answer format that AI retrieval systems prioritize.

Signal 4: Third parties describing your product better than you do. Gemini is citing Reddit threads and directories to explain your own product while your official website gets ignored. This usually points to missing or weak schema markup, or a lack of verifiable, structured data on your owned content.

Signal 5: No change after optimization. You restructured content with FAQ blocks and direct answers, but AI citation rates haven’t moved after 60 days. Your tracking is likely too coarse to show the effect: you’re either monitoring the wrong prompts, not covering enough platforms, or running tracking cycles too short to detect slow re-ranking shifts.

That last one is worth sitting with.

How to Measure AI Citations: Metrics That Actually Matter

A single snapshot of AI visibility is nearly meaningless. The value is in tracking trends and competitive share over time.

Citation Frequency is the baseline: the percentage of tracked prompts where your domain is cited. Think of it as your “at-bat rate” in AI answers.

Citation Share of Voice (C-SOV) is more diagnostic. It’s your brand’s citation count divided by all citations in a given prompt set. A C-SOV of 5-10% is healthy in general categories. In highly competitive niches, 1-5% is realistic. This is the metric that tells you whether you’re growing relative to the category, not just in isolation.
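Citation Frequency and C-SOV come from the same data but answer different questions. A minimal sketch, assuming you store the list of cited domains for each tracked prompt (an empty list means the answer cited nothing):

```python
def citation_frequency(cited_flags: list[bool]) -> float:
    """Share of tracked prompts where your domain was cited at least once."""
    return sum(cited_flags) / len(cited_flags) if cited_flags else 0.0


def citation_share_of_voice(citations_per_prompt: list[list[str]], my_domain: str) -> float:
    """Your citation count divided by all citations across the prompt set."""
    all_citations = [d for prompt_domains in citations_per_prompt for d in prompt_domains]
    if not all_citations:
        return 0.0
    return all_citations.count(my_domain) / len(all_citations)


# Hypothetical cited domains for four tracked prompts.
citations = [
    ["acme.example", "globex.example", "wiki.example.org"],
    ["globex.example", "reviews.example.org"],
    ["globex.example"],
    [],
]
flags = ["acme.example" in prompt_domains for prompt_domains in citations]
print(citation_frequency(flags))                            # 0.25
print(citation_share_of_voice(citations, "acme.example"))   # 1 of 6 citations
```

Because C-SOV counts every citation, prompts whose answers list many sources carry more weight than prompts with a single source. That is one reason it can move independently of Citation Frequency.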

Citation Prominence adds depth. A citation placed in the first paragraph of an AI response or at the top of a sources list drives meaningfully higher click-through than one buried at the end. Some tracking platforms now apply prominence scoring to weight these positions.
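Tools differ in how they weight position, and the article doesn’t specify a formula. One simple hypothetical scheme is geometric decay by rank in the source list: rank 1 scores 1.0, and each later rank is halved. The `decay` value below is an assumption, not any vendor’s scoring.

```python
from typing import Optional


def prominence_weight(position: Optional[int], decay: float = 0.5) -> float:
    """Weight one citation by its 1-based rank in the answer's source list.

    Rank 1 scores 1.0 and each later rank is multiplied by `decay`; no citation scores 0.
    """
    if position is None:
        return 0.0
    if position < 1:
        raise ValueError("positions are 1-based")
    return decay ** (position - 1)


def prominence_score(positions: list[Optional[int]], decay: float = 0.5) -> float:
    """Average prominence weight across all tracked prompts."""
    if not positions:
        return 0.0
    return sum(prominence_weight(p, decay) for p in positions) / len(positions)


# Your citation rank in four hypothetical answers (None = not cited).
print(prominence_score([1, 3, None, 2]))  # 0.4375
```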

Citation Velocity measures the change in citation frequency over 30-day cycles. Citation losses tend to be binary: for a given prompt, either you’re cited or you’re not. A sudden drop in velocity is an early warning that the AI has re-ranked away from your content, often triggered by a competitor’s recent optimization or a freshness issue on your side.
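Velocity is just the cycle-over-cycle difference in frequency, so an alert is a threshold on that difference. The 10-point threshold below is a hypothetical starting point to tune against your own volatility.

```python
def citation_velocity(frequencies: list[float]) -> list[float]:
    """Change in citation frequency between consecutive 30-day cycles."""
    return [curr - prev for prev, curr in zip(frequencies, frequencies[1:])]


def velocity_alerts(frequencies: list[float], threshold: float = -0.10) -> list[int]:
    """Indices (into `frequencies`) of cycles that fell by at least |threshold|."""
    return [i + 1 for i, delta in enumerate(citation_velocity(frequencies)) if delta <= threshold]


# Hypothetical citation frequency per 30-day cycle (share of tracked prompts citing you).
cycles = [0.30, 0.32, 0.18, 0.20]
print(velocity_alerts(cycles))  # [2]: the third cycle dropped about 14 percentage points
```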

The CTR stakes are real. When a brand is cited in an AI Overview, its organic CTR holds relatively stable. When an AI Overview appears for a query but doesn’t cite the brand, the brand’s organic CTR drops by roughly 46%. That’s not a soft signal. That’s a measurable revenue gap.

A Practical Checklist for AI Citation Tracking Setup

Getting the infrastructure right before you start tracking saves a lot of false signal interpretation later.

- Define a core prompt set of at least 50 questions spanning informational, comparative, and transactional intents

- Cover ChatGPT, Gemini, and Perplexity at minimum (each uses different retrieval logic)

- Establish a citation baseline for at least 3 direct competitors before you start optimizing

- Verify that AI crawlers like OAI-SearchBot and GPTBot are not blocked in your robots.txt

- Set tracking frequency to weekly or bi-weekly (daily is too volatile; monthly is too slow)

- Map citations back to specific URLs and content formats to identify what’s actually working
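The robots.txt item is easy to automate. Python’s standard-library `urllib.robotparser` can answer “may this user agent fetch this URL?” for a given robots.txt. The sketch checks the two crawlers named above against a sample file; the URL and file contents are placeholders, and you can extend the agent list with any other crawlers you care about.

```python
from urllib.robotparser import RobotFileParser

AI_CRAWLERS = ["OAI-SearchBot", "GPTBot"]  # the two crawlers named in the checklist


def blocked_crawlers(robots_txt: str, url: str, agents: list[str] = AI_CRAWLERS) -> list[str]:
    """Return the user agents that this robots.txt forbids from fetching `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, url)]


sample_robots = """User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""
print(blocked_crawlers(sample_robots, "https://www.example.com/pricing"))  # ['GPTBot']
```

`urllib.robotparser` applies the first matching rule in file order and doesn’t implement every extension that individual crawlers honor, so treat it as a first-pass check rather than a guarantee of how each bot interprets your file.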

The Best AI Citation Tracking Tools in 2026: What to Look for Before You Commit

Not every tool marketed as an “AI visibility tracker” actually gives you the source-level data you need to act. Before committing, look for three things: multi-platform coverage (at least ChatGPT, Gemini, Perplexity), competitor source benchmarking, and the ability to trace citations back to specific URLs rather than just brand mentions.

Here’s how the main options compare:

| Tool | Starting Price | Key Advantage | Best For |
|---|---|---|---|
| Topify | $99/mo | Source Analysis + 7-platform coverage | Marketing teams, agencies |
| ZipTie | $69/mo | Real screenshots + CTR analysis | SEO teams focused on AIO |
| Profound | $399/mo | 10+ engines + enterprise compliance | Large enterprises |
| Otterly AI | $29/mo | Affordable multi-platform entry | Small teams, early-stage |

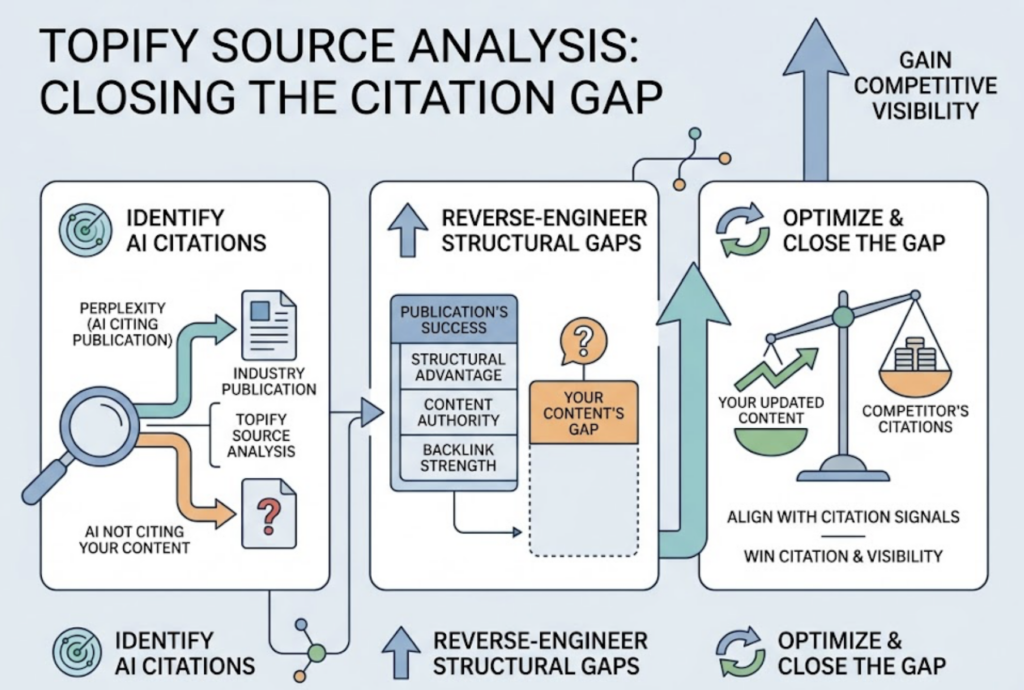

For teams that need an AI visibility tracker with genuine depth, Topify tends to stand out for a specific reason: its Source Analysis function lets you reverse-engineer exactly which domains and URLs the AI is citing in your category, including which sources are driving your competitors’ visibility. In practice, this means you can see that Perplexity is consistently citing a specific industry publication instead of your content, identify what that publication is doing structurally, and close the gap.

Topify covers ChatGPT, Gemini, Perplexity, DeepSeek, and several other major platforms, which matters if your audience is global. The platform tracks seven core metrics: visibility, sentiment, position, volume, mentions, intent, and CVR. For teams managing multiple brands or clients, it also includes one-click competitor monitoring and prompt discovery.

The Basic plan starts at $99/month and includes 100 tracked prompts and 9,000 AI answer analyses across 4 projects. The Pro plan at $199/month scales to 250 prompts and 22,500 analyses. For enterprise needs with dedicated support, pricing starts at $499/month. Full details are on the Topify pricing page.

On the flip side, if your primary need is tracking Gemini visibility specifically, a Gemini visibility tracker with screenshot capture (like ZipTie) may be useful for visual documentation. But if your goal is understanding why the AI cites what it cites and how to change it, source-level analysis is non-negotiable.

A 90-Day Strategy to Improve Your AI Citation Rates

The brands improving their AI citation rates aren’t publishing more content. They’re publishing content that AI systems can actually extract from.

Days 1-30: Establish the baseline. Run your core prompt set across at least three platforms. Identify the top 5 domains that are being cited instead of you. Audit their content structure, not their DA. Note whether they use direct-answer introductions, comparison tables, original statistics, or FAQ blocks. That’s your optimization target, not their backlink profile.
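A rough structural audit of a competitor page can be scripted with the standard-library HTML parser. The proxies below are assumptions, not an established scoring method: `<blockquote>` stands in for expert quotes, percentage figures in visible text stand in for original statistics, and “FAQPage” inside a JSON-LD block flags FAQ schema.

```python
import re
from html.parser import HTMLParser

PERCENT = re.compile(r"\d+(?:\.\d+)?%")


class StructureAudit(HTMLParser):
    """Tally rough structural signals in one page's HTML."""

    TRACKED_TAGS = ("h2", "table", "ol", "ul", "blockquote")

    def __init__(self) -> None:
        super().__init__()
        self.counts = {tag: 0 for tag in self.TRACKED_TAGS}
        self.percent_figures = 0   # proxy for "original statistics"
        self.faq_schema = False    # FAQPage found inside a JSON-LD block
        self._in_code = False      # inside <script> or <style>
        self._in_jsonld = False

    def handle_starttag(self, tag, attrs):
        if tag in self.counts:
            self.counts[tag] += 1
        if tag in ("script", "style"):
            self._in_code = True
            self._in_jsonld = tag == "script" and ("type", "application/ld+json") in attrs

    def handle_endtag(self, tag):
        if tag in ("script", "style"):
            self._in_code = self._in_jsonld = False

    def handle_data(self, data):
        if self._in_jsonld:
            self.faq_schema = self.faq_schema or "FAQPage" in data
        elif not self._in_code:
            # Only count percentages in visible text, not in scripts or styles.
            self.percent_figures += len(PERCENT.findall(data))


def audit_html(html: str) -> dict:
    parser = StructureAudit()
    parser.feed(html)
    parser.close()
    return {**parser.counts, "percent_figures": parser.percent_figures,
            "faq_schema": parser.faq_schema}
```

Run it over the top cited pages and over your own equivalents, then compare the counts side by side. The differences are your structural targets.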

Also do the technical basics: verify crawler access, implement Organization and FAQPage schema, and check whether your brand is properly represented in knowledge bases that AI engines use for parametric grounding.
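Organization and FAQPage are standard schema.org types, usually embedded as JSON-LD. The sketch generates both from Python so the markup stays in sync with your content; the company name, URLs, and Q&A text are placeholders.

```python
import json


def organization_jsonld(name: str, url: str, same_as: list[str]) -> dict:
    """schema.org Organization: who you are and which profiles are officially yours."""
    return {"@context": "https://schema.org", "@type": "Organization",
            "name": name, "url": url, "sameAs": same_as}


def faq_jsonld(pairs: list[tuple[str, str]]) -> dict:
    """schema.org FAQPage built from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {"@type": "Question", "name": question,
             "acceptedAnswer": {"@type": "Answer", "text": answer}}
            for question, answer in pairs
        ],
    }


def script_tag(data: dict) -> str:
    """Wrap JSON-LD in a script tag; escaping '</' keeps answer text from closing the tag early."""
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'


org = organization_jsonld("Example Co", "https://www.example.com",
                          ["https://www.linkedin.com/company/example-co"])
faq = faq_jsonld([("What is Example Co?", "Example Co is a hypothetical project management tool.")])
print(script_tag(org))
print(script_tag(faq))
```

Keep the FAQ answers identical to the visible text on the page; markup that says something different from the page is the opposite of the verifiable, structured data you’re aiming for.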

Days 31-60: Run content experiments. Research from the Generative Engine Optimization (GEO) study points to three high-impact interventions. Adding expert quotes to content correlates with roughly a 41% visibility increase. Including clear statistics correlates with a 22% lift. Converting paragraphs into comparison tables and numbered lists increases the likelihood of AI Overview citation by around 47%.

Rewrite your top 20 pages to open with a 40-60 word direct answer. Match H2 headers to the exact phrasing of user prompts, not your internal topic taxonomy.
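Both rules are easy to lint before publishing. A minimal sketch, assuming you can pull each page’s first paragraph and its H2 headers; the normalization (lowercase, punctuation stripped) is a deliberate simplification of “exact phrasing”.

```python
import re


def normalize(text: str) -> str:
    """Lowercase, drop punctuation, and collapse whitespace."""
    return " ".join(re.sub(r"[^\w\s]", "", text.lower()).split())


def check_page(first_paragraph: str, h2_headers: list[str], target_prompts: list[str],
               min_words: int = 40, max_words: int = 60) -> dict:
    """Check the opening answer length and which target prompts lack a matching H2."""
    words = len(first_paragraph.split())
    headers = {normalize(h) for h in h2_headers}
    return {
        "opening_words": words,
        "opening_ok": min_words <= words <= max_words,
        "prompts_without_h2": [p for p in target_prompts if normalize(p) not in headers],
    }


report = check_page(
    first_paragraph=" ".join(["word"] * 45),  # stand-in for a 45-word direct answer
    h2_headers=["What Is a Gantt Chart?", "Pricing"],
    target_prompts=["what is a gantt chart", "best gantt chart software"],
)
print(report)
```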

Days 61-90: Validate and scale. Compare your current citation rates against the Days 1-30 baseline. Identify which content formats drove the biggest lift. Look for any “binary losses”: prompts where you were previously cited but have since been dropped. These usually indicate a freshness gap or a competitor who recently optimized for that specific prompt.
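The baseline comparison and the binary-loss check fall out of the same per-prompt data. This sketch assumes you saved, for each tracked prompt, whether you were cited in the baseline run and in the current run; prompts missing from either run are ignored.

```python
def compare_to_baseline(baseline: dict[str, bool], current: dict[str, bool]) -> dict:
    """Compare per-prompt citation status between two tracking runs."""
    shared = baseline.keys() & current.keys()

    def rate(run: dict[str, bool]) -> float:
        return sum(run[p] for p in shared) / len(shared) if shared else 0.0

    return {
        "baseline_rate": rate(baseline),
        "current_rate": rate(current),
        # Cited before, not cited now: the "binary losses" to investigate first.
        "binary_losses": sorted(p for p in shared if baseline[p] and not current[p]),
        "new_wins": sorted(p for p in shared if not baseline[p] and current[p]),
    }


baseline = {"a": True, "b": True, "c": False, "d": False}
current = {"a": True, "b": False, "c": True, "d": True}
print(compare_to_baseline(baseline, current))
```

Here the overall rate rises from 50% to 75%, yet prompt “b” was still lost. An aggregate gain can hide exactly the kind of pruning this phase is meant to catch.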

Then replicate what worked. The goal is a documented content structure that consistently earns citations, not a one-time spike.

Conclusion

AI citation tracking isn’t a niche analytics exercise. It’s the mechanism that determines whether your brand shows up in the answers your buyers are reading right now.

Traditional SEO tells you how you rank in a list. AI citation tracking tells you whether you’re the evidence behind an answer. As generative discovery continues to reshape how buyers research decisions, with some projections pointing to 50% of search interactions running through AI engines by 2028, the brands that built systematic tracking infrastructure early will have a compounding advantage. Get started with Topify to see where your brand stands in AI answers today.

FAQ

Q: What is an AI citation tracking service? A: It’s a platform that systematically queries AI tools like ChatGPT, Gemini, and Perplexity to monitor which brands and URLs are referenced as sources. Unlike traditional SEO tools, it measures your presence inside synthesized AI answers, not just link rankings.

Q: How much does AI citation tracking cost? A: Pricing varies widely by use case. Entry-level tools start around $29/month for basic multi-platform tracking. Mid-market platforms with source analysis and competitor benchmarking typically run $99-$250/month. Enterprise solutions with custom prompt volumes and dedicated support start at $399-$499/month and scale from there.

Q: Can you give examples of AI citation tracking in practice? A: A SaaS brand tracking the prompt “best project management tool for remote teams” across three months might find that ChatGPT consistently cites a competitor’s comparison article rather than their own product page. The source analysis reveals the competitor’s article uses a structured feature table and a direct 50-word summary at the top, neither of which the brand’s page has. That’s a specific, actionable content gap, not a vague “we need better content” conclusion.

Q: How is AI citation tracking different from traditional backlink monitoring? A: Backlink monitoring tracks persistent HTML links between sites as a signal of SEO authority. AI citation tracking monitors real-time, algorithmically generated references that an AI uses to verify a specific claim. Backlinks correlate strongly with Google rankings. They show much weaker correlation with AI citation rates, where content structure and data clarity are the primary drivers.