Your Google rankings are holding. Your content is ranking on page one. But organic traffic is quietly falling — and no one can explain why.

That’s not a mystery anymore. It’s a structural shift. Nearly 69% of all global searches now end without a single click, as users increasingly get their answers directly on the results page, often from AI-generated summaries. The traffic didn’t disappear. It got intercepted.

The problem isn’t your content. It’s your dashboard. The KPIs you’ve been reporting for years were built for a world where people clicked. That world is shrinking fast.

Your Rankings Are Still Going Up. Your Traffic Isn’t.

Traditional SEO metrics assume a simple chain: high rank → impression → click → visit. Break any link in that chain and the whole model falls apart.

Statista and Similarweb data now shows 68.7% of global searches resolve entirely within the search ecosystem, never generating an outbound click. That’s roughly 14 billion daily search sessions that produce zero traffic for any external site. On mobile, the zero-click rate hits 77.2%.

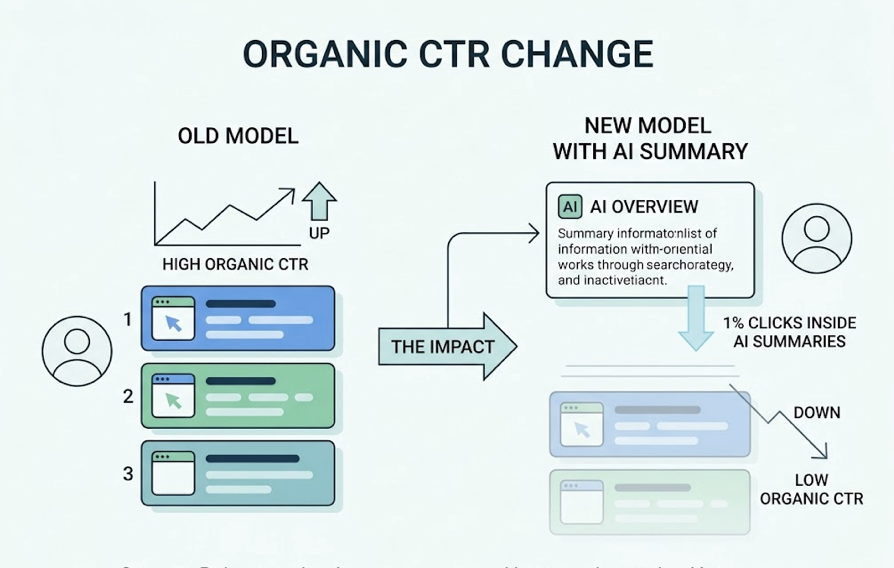

The data from Seer Interactive and Authoritas makes the CTR impact concrete: when an AI Overview is present, organic CTR drops 61% — from 1.76% down to 0.61%. Paid CTR fares worse, collapsing 68% from 19.7% to just 6.34%.

Ranking #1 still matters. But it no longer guarantees visits the way it used to.

SEO, AEO, and GEO Don’t Just Differ in Name

Before rebuilding your KPI framework, it helps to be precise about what you’re actually optimizing for. These three disciplines have different mechanics, different targets, and different definitions of “winning.”

| Dimension | SEO | AEO | GEO |
|---|---|---|---|
| Core goal | Rank in blue-link results | Be the direct answer (snippets, voice) | Get cited and recommended by LLMs |
| Primary target | Google SERP | Featured snippets, Alexa, Siri | ChatGPT, Gemini, Perplexity |
| Success signal | Rank position, CTR, traffic | Inclusion rate in direct answers | AI visibility, sentiment, position |
| Key technical signal | Backlinks, relevance | Schema markup, FAQ structure | Entity authority, third-party mentions |

SEO handles the bottom of the funnel, where users still click to transact. AEO wins featured extractions for factual, question-based queries. GEO earns the brand a seat in synthesized, conversational responses.

You need all three. But you can’t track all three with the same scorecard.

The 3 SEO KPIs You Can’t Rely on Anymore

This isn’t about abandoning what worked. It’s about knowing where the blind spots are.

Organic CTR used to be a reliable proxy for content relevance. Now it increasingly reflects something else: how many of your indexed queries don’t trigger an AI answer. Pew Research and Semrush data shows only 1% of users click links embedded inside AI summaries. If your highest-traffic informational queries now trigger AI Overviews, CTR will drop even when your content is performing well.

Rank position is still useful for commercial queries. But for informational queries, ranking #1 organically is now mainly a prerequisite for citation, not a traffic driver in its own right. It gets you in the room. It doesn’t guarantee the result.

Bounce rate and time-on-page are losing meaning for informational content. When users increasingly resolve their question before reaching your site, the visitors who do arrive skew toward high-intent, late-funnel behavior. Your averages shift because the audience mix changed, not because the content did.

These metrics didn’t stop working. They stopped telling the whole story.

The KPI Framework Built for AEO and GEO

Here’s what the new scorecard looks like in practice. These are the metrics that actually reflect how your brand performs in a world where AI answers first.

AI Visibility Rate (Share of Model): This is the new Share of Voice. It measures how often your brand appears in AI-generated responses for high-intent category prompts. If you query ChatGPT with 100 variations of “best CRM for SaaS teams” and your brand appears in 48 of those responses, your Share of Model is 48%.
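
As a rough sketch of that arithmetic, assume you have already collected one AI response per prompt variation (the collection step, the brand name `AcmeCRM`, and the toy responses are hypothetical). Share of Model is then the fraction of responses that name the brand:

```python
def share_of_model(responses: list[str], brand: str) -> float:
    """Percentage of responses that mention `brand` (case-insensitive substring match)."""
    if not responses:
        return 0.0
    hits = sum(1 for text in responses if brand.lower() in text.lower())
    return 100.0 * hits / len(responses)


# Toy data mirroring the example: 48 of 100 responses name the (hypothetical) brand.
responses = ["Top picks: AcmeCRM, OtherCRM"] * 48 + ["Top picks: OtherCRM"] * 52
print(share_of_model(responses, "AcmeCRM"))  # 48.0
```

A plain substring match is the simplest possible detector. It misses misspellings and can over-count when one brand name contains another, so real tracking usually needs an alias list per brand.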

Citation Frequency: How often AI platforms cite your content or mention your brand name in a response. This is the AEO equivalent of backlink count, an authority signal in generative results. Research from the Princeton GEO study reports that brand search volume carries a 0.334 correlation with model confidence, making it the strongest single predictor of AI recommendation in that analysis.
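
A minimal sketch of the citation side of this metric, assuming each AI response comes with a list of the source URLs it cited (the data shape and the `example.com` domain are hypothetical). It counts responses whose sources include your domain or one of its subdomains; unlinked brand-name mentions would need a separate text match.

```python
from urllib.parse import urlparse


def citation_frequency(cited_urls_per_response: list[list[str]], domain: str) -> int:
    """Count responses whose cited sources include `domain` or a subdomain of it."""
    count = 0
    for urls in cited_urls_per_response:
        # hostname is lowercased by urlparse; None for malformed URLs
        hosts = {urlparse(u).hostname or "" for u in urls}
        if any(h == domain or h.endswith("." + domain) for h in hosts):
            count += 1
    return count


# Hypothetical citation lists, one per AI response.
sample = [
    ["https://www.example.com/pricing", "https://g2.com/products/x"],
    ["https://reddit.com/r/sales/comments/abc"],
    ["https://blog.example.com/guide"],
]
print(citation_frequency(sample, "example.com"))  # 2
```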

Sentiment Score: AI doesn’t just list brands. It characterizes them. Being described as “reliable but expensive” or “good for small teams” shapes whether you appear in “best” or “affordable” category prompts. Sentiment Score measures the polarity of how AI engines describe your brand, and it directly filters which prompts you’re eligible to win.
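
One simple way to turn that polarity into a trackable number, assuming each response that mentions the brand has already been labeled positive, neutral, or negative (by a reviewer or a classifier; both the labeling step and the -1/0/+1 scale are assumptions here), is to average the labels:

```python
# Assumed scale: each labeled response contributes -1, 0, or +1.
POLARITY = {"positive": 1, "neutral": 0, "negative": -1}


def sentiment_score(labels: list[str]) -> float:
    """Mean polarity in [-1, 1] across responses that mention the brand."""
    if not labels:
        return 0.0
    return sum(POLARITY[label] for label in labels) / len(labels)


labels = ["positive", "positive", "neutral", "negative"]
print(sentiment_score(labels))  # 0.25
```

Tracking this per platform and per prompt cluster, rather than as one global figure, makes it easier to see which descriptors (“expensive”, “dated”) are pulling the score down and where.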

Position in AI Answer: Not all mentions are equal. Being named first in a ChatGPT recommendation carries more weight than appearing fifth. Position tracking measures your relative rank within AI-generated responses compared to competitors.
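
A sketch of position tracking, assuming “position” means the order in which tracked brands are first named in the response text (the brand names and sample answer are hypothetical; parsing an explicit numbered list would be an alternative definition):

```python
import re
from typing import Optional


def mention_order(response: str, brands: list[str]) -> list[str]:
    """Brands that appear in the response, ordered by their first mention."""
    first_seen = {}
    for brand in brands:
        match = re.search(re.escape(brand), response, flags=re.IGNORECASE)
        if match:
            first_seen[brand] = match.start()
    return sorted(first_seen, key=first_seen.get)


def position_of(response: str, brand: str, brands: list[str]) -> Optional[int]:
    """1-based position of `brand` among the brands mentioned, or None if absent."""
    order = mention_order(response, brands)
    return order.index(brand) + 1 if brand in order else None


answer = ("For SaaS teams, BetaCRM is the strongest option, "
          "followed by AcmeCRM and GammaCRM.")
brands = ["AcmeCRM", "BetaCRM", "GammaCRM"]
print(mention_order(answer, brands))           # ['BetaCRM', 'AcmeCRM', 'GammaCRM']
print(position_of(answer, "AcmeCRM", brands))  # 2
```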

Recommendation Rate vs. Mention Rate: There’s a critical gap between being listed and being recommended. A brand can appear in an AI response as a neutral option or as the explicit top pick. Recommendation Rate captures how often the AI actively steers users toward your brand, not just mentions it.
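
Both rates can come from the same response log. This sketch assumes each response has been annotated with the brands it mentions and the brand it explicitly recommends, if any (the `Response` record, the brand names, and the sample log are hypothetical):

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Response:
    mentioned: set[str]             # every brand named in the answer
    top_pick: Optional[str] = None  # brand the answer explicitly recommends, if any


def mention_rate(responses: list[Response], brand: str) -> float:
    """Percentage of responses that name `brand` at all."""
    if not responses:
        return 0.0
    return 100.0 * sum(brand in r.mentioned for r in responses) / len(responses)


def recommendation_rate(responses: list[Response], brand: str) -> float:
    """Percentage of responses where `brand` is the explicit top pick."""
    if not responses:
        return 0.0
    return 100.0 * sum(r.top_pick == brand for r in responses) / len(responses)


log = [
    Response({"AcmeCRM", "BetaCRM"}, top_pick="BetaCRM"),
    Response({"AcmeCRM", "BetaCRM"}, top_pick="AcmeCRM"),
    Response({"AcmeCRM"}),
    Response({"BetaCRM"}, top_pick="BetaCRM"),
]
for brand in ("AcmeCRM", "BetaCRM"):
    print(brand, mention_rate(log, brand), recommendation_rate(log, brand))
```

Here both brands are mentioned in 75% of answers, but BetaCRM is the explicit pick twice as often (50% vs. 25%), which is exactly the gap a mention-only metric hides.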

These five metrics, tracked consistently, give you a diagnostic view of your AEO and GEO performance that rank tracking simply can’t provide.

One Metric Most Brands Miss: Conversion Visibility Rate

Most teams stop at visibility. That’s a mistake.

High visibility with weak sentiment or poor positioning may generate brand impressions that never translate into intent. The gap between “being mentioned by ChatGPT” and “driving a user to search your brand name or visit your site” is where most AEO strategies leak.

CVR — Conversion Visibility Rate — estimates the likelihood that an AI-generated mention is actually moving users toward a conversion action, even when no click is recorded. It bridges the technical visibility metrics and real business outcomes.

The commercial case for closing that gap is real. Adobe’s 2026 analysis found AI-driven traffic to retail sites converts 42% better than traditional search traffic. In B2B SaaS, the gap is even wider: AI search visitors convert at 23x the rate of traditional organic visitors, likely because they’ve already completed a deep evaluation session inside the AI interface before ever clicking.

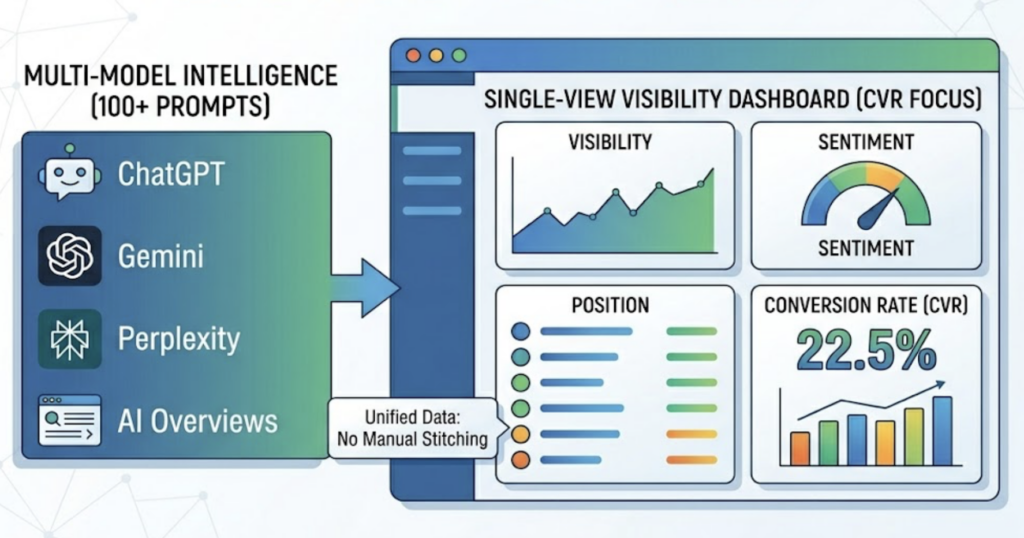

Topify includes CVR as one of its seven core tracking metrics, alongside visibility, sentiment, position, volume, mentions, and intent. The combined view is what makes it possible to correlate AI surface performance with downstream revenue signal — instead of guessing.

How to Start Tracking These KPIs Without Building from Scratch

The practical challenge is setup. Most teams default to Google Search Console and GA4, which have real blind spots in generative environments.

GSC’s new AI Mode filter tracks impressions and clicks, but it doesn’t capture pure citations: cases where a user saw your brand in an AI Overview and didn’t click anything. For queries with six or more words (the long-tail conversational format most likely to trigger AI Overviews), you’re essentially flying blind without a dedicated tracking layer.
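
To at least isolate that long-tail segment in the data GSC does report, you can filter exported query rows by word count. This sketch assumes rows exported with `query`, `impressions`, and `clicks` fields (the field names and sample rows are assumptions). It only segments reported clicks and impressions; it cannot surface citations GSC never records.

```python
def long_tail_rows(rows: list[dict], min_words: int = 6) -> list[dict]:
    """Keep query rows with at least `min_words` whitespace-separated words."""
    return [row for row in rows if len(row["query"].split()) >= min_words]


def segment_ctr(rows: list[dict]) -> float:
    """Aggregate CTR (%) for a set of rows: total clicks / total impressions."""
    impressions = sum(row["impressions"] for row in rows)
    clicks = sum(row["clicks"] for row in rows)
    return 100.0 * clicks / impressions if impressions else 0.0


# Hypothetical export rows.
rows = [
    {"query": "crm software", "impressions": 1000, "clicks": 40},
    {"query": "what is the best crm for a small saas team", "impressions": 300, "clicks": 3},
]
long_tail = long_tail_rows(rows)
print(len(long_tail), segment_ctr(long_tail))  # 1 1.0
```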

GA4 is more useful for measuring quality once traffic arrives. Teams are building custom “AI Search” channel groups using regex to capture referral traffic from sources like chatgpt.com, perplexity.ai, and gemini.google.com. That data consistently shows AI traffic is a small slice of total volume (roughly 1% in 2026) but converts at a disproportionately high rate.
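
A sketch of such a regex, covering only the three sources named above (extend the alternation as new AI referrers appear). It is anchored at both ends, so it should behave the same whether a tool treats it as a full or a partial match, and it avoids lookarounds, which keeps it compatible with RE2-style engines. The Python wrapper is only a way to test the pattern before pasting it into a channel-group condition; the GA4 setup itself is not shown.

```python
import re

# Matches the bare domain or any subdomain (e.g. www.perplexity.ai).
AI_SOURCE_REGEX = r"^([a-z0-9-]+\.)*(chatgpt\.com|perplexity\.ai|gemini\.google\.com)$"
_AI_SOURCE = re.compile(AI_SOURCE_REGEX, re.IGNORECASE)


def is_ai_source(source: str) -> bool:
    """True if a session source string belongs to one of the listed AI platforms."""
    return _AI_SOURCE.match(source.strip()) is not None


for src in ["chatgpt.com", "www.perplexity.ai", "gemini.google.com",
            "google.com", "notchatgpt.com"]:
    print(src, is_ai_source(src))
```

The leading `([a-z0-9-]+\.)*` group is what rejects look-alike hosts such as `notchatgpt.com` while still accepting real subdomains.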

For actual AI visibility tracking, the practical path is to:

- Define the 30-50 core prompts buyers in your category actually use across AI platforms

- Establish a baseline Share of Model for each platform separately

- Track weekly — AI recommendations shift faster than SERP rankings

- Monitor competitor positions in the same tracking runs, not as a separate exercise
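
The routine above boils down to one log and one aggregation. A minimal sketch, assuming each tracking run records the week, platform, prompt, and set of brands mentioned (all names and sample rows are hypothetical), computes Share of Model per platform per week for your brand and your competitors from the same observations:

```python
from collections import defaultdict


def weekly_share(observations, brands):
    """Share of Model (%) per (week, platform) for every tracked brand.

    observations: iterable of (week, platform, prompt, mentioned_brands) tuples.
    """
    runs = defaultdict(int)                       # responses per (week, platform)
    hits = defaultdict(lambda: defaultdict(int))  # mentions per (week, platform) and brand
    for week, platform, _prompt, mentioned in observations:
        key = (week, platform)
        runs[key] += 1
        for brand in brands:
            if brand in mentioned:
                hits[key][brand] += 1
    return {
        key: {brand: 100.0 * hits[key][brand] / runs[key] for brand in brands}
        for key in runs
    }


observations = [
    ("2026-W01", "chatgpt", "best crm for saas", {"AcmeCRM", "BetaCRM"}),
    ("2026-W01", "chatgpt", "crm for startups", {"BetaCRM"}),
    ("2026-W01", "perplexity", "best crm for saas", {"AcmeCRM"}),
]
for key, shares in weekly_share(observations, ["AcmeCRM", "BetaCRM"]).items():
    print(key, shares)
```

Keeping platforms as separate keys, instead of pooling them, is what preserves the per-platform baseline; comparing the same key across weeks gives the trend.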

Topify’s Basic Plan at $99/month handles this across ChatGPT, Gemini, Perplexity, and AI Overviews with 100 prompts out of the box. The seven-metric dashboard gives you visibility, sentiment, position, and CVR in a single view, so you’re not manually stitching together data from multiple tools.

One thing to flag: don’t assume a strategy optimized for Google’s AI Overviews transfers directly to ChatGPT. Research shows only an 11% overlap between domains cited by ChatGPT and those cited by Perplexity. Platform-specific optimization is table stakes in 2026.
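
To check the overlap for your own category, compare the sets of domains each platform cited across your prompt set. The source doesn’t say how the 11% figure was defined; this sketch assumes Jaccard overlap (shared domains divided by all distinct domains), and the sample domain sets are made up:

```python
def domain_overlap(domains_a: set[str], domains_b: set[str]) -> float:
    """Jaccard overlap (%) between two sets of cited domains."""
    union = domains_a | domains_b
    if not union:
        return 0.0
    return 100.0 * len(domains_a & domains_b) / len(union)


# Hypothetical cited-domain sets collected over the same prompts.
chatgpt_domains = {"g2.com", "reddit.com", "example.com", "wikipedia.org"}
perplexity_domains = {"reddit.com", "youtube.com", "news.example.org", "capterra.com"}
print(round(domain_overlap(chatgpt_domains, perplexity_domains), 1))  # 14.3
```

Other overlap definitions (for example, shared domains as a share of one platform’s list) give different numbers, so pick one and keep it fixed across reporting periods.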

Your Reporting Template Needs to Change, Too

The metrics upgrade only matters if the reporting structure changes with it. A slide deck built around “organic traffic up 12% month-over-month” doesn’t capture whether your brand is gaining or losing ground in generative search.

A practical AEO/GEO reporting template covers four layers:

AI Visibility Score — Share of Model across your core prompt set, broken out by platform. This is your headline metric, equivalent to the old “rankings summary.”

Competitor Gap — Where competitors appear in prompts where you don’t, and where you’ve closed or widened the gap month-over-month.

Sentiment Trend — Whether AI characterizations of your brand are moving in a commercially favorable direction (more “recommended,” fewer “expensive,” etc.).

CVR Estimate — Correlation between AI visibility changes and branded search volume or direct traffic, as a proxy for downstream commercial impact.
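
A minimal sketch of that proxy, assuming weekly Share of Model and weekly branded search volume are already collected (the numbers below are invented). It correlates week-over-week changes rather than raw levels, so two series that merely trend upward together don’t look related by default. With only a handful of points, treat the result as a directional signal, not proof that AI visibility caused the lift.

```python
from math import sqrt
from statistics import mean


def pearson(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation coefficient of two equal-length series."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length series with at least two points")
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = sqrt(sum((x - mx) ** 2 for x in xs))
    sy = sqrt(sum((y - my) ** 2 for y in ys))
    if sx == 0 or sy == 0:
        raise ValueError("a series with zero variance has no defined correlation")
    return cov / (sx * sy)


def diffs(series: list[float]) -> list[float]:
    """Week-over-week changes."""
    return [b - a for a, b in zip(series, series[1:])]


share_of_model = [22.0, 25.0, 24.0, 30.0, 33.0]     # % per week (invented)
branded_searches = [1200, 1260, 1250, 1400, 1450]  # queries per week (invented)
r = pearson(diffs(share_of_model), diffs(branded_searches))
print(round(r, 2))  # 0.97
```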

The goal isn’t just to rank. It’s to be the brand AI recommends.

That distinction shapes every decision downstream: what content you build, which third-party platforms you prioritize, how you brief your PR team. With 82-85% of AI citations coming from third-party sources (media coverage, Reddit threads, G2 reviews), your off-site presence is now a direct AEO/GEO input, not just a brand exercise.

Conclusion

The KPI migration from SEO to AEO/GEO isn’t a future problem. For most brands, the gap between what their dashboard shows and what’s actually happening in AI search is already widening.

The good news: the new framework isn’t complicated. Share of Model, Citation Frequency, Sentiment Score, Position, Recommendation Rate, and CVR replace the old rank-and-click model with metrics that map directly to how AI engines make recommendations.

Start with a prompt baseline. Build the tracking layer. Then run SEO and AEO/GEO in parallel — they serve different funnel stages now, and collapsing them into one scorecard is how teams end up optimizing for the wrong signal.

FAQ

What’s the difference between AEO KPIs and GEO KPIs?

AEO KPIs focus on inclusion rate in direct-answer formats — featured snippets, voice search, factual extractions. GEO KPIs measure brand performance in synthesized, conversational AI responses from LLMs like ChatGPT or Gemini. In practice, you’ll want both: AEO metrics for structured content performance, GEO metrics for brand narrative and recommendation positioning.

Can I use Google Search Console to track AEO performance?

Partially. GSC’s AI Mode filter captures clicks and impressions on generative features, but it doesn’t record citations where a user read your brand name in an AI Overview without clicking. For full AEO visibility, you’ll need a dedicated multi-engine tracking tool alongside GSC.

How often should I review my AEO metrics?

Weekly at minimum. AI recommendations shift significantly faster than SERP rankings, and competitor positioning can change after a single news cycle or third-party content spike. Monthly review cycles that work for traditional SEO will miss meaningful movement in generative results.

What’s a good AI visibility score benchmark?

This varies by category and competitive density, but a Share of Model above 30% for your core category prompts is generally considered a strong position. More important than the absolute number is the trend and the competitor gap — are you appearing in prompts where your top competitors appear? That comparison is typically more actionable than a standalone score.

Do I need separate KPIs for different AI platforms like ChatGPT vs. Perplexity?

Yes. Research shows only an 11% overlap between domains cited by ChatGPT and those cited by Perplexity. A brand can rank well on one platform and be nearly invisible on another. Platform-specific tracking is essential, especially if your target audience is concentrated on particular AI interfaces.