Your brand ranks #1 on Google. Traffic looks stable. The dashboard is green.

And somewhere right now, a high-intent buyer just asked ChatGPT which tool to use in your category. Your competitor got recommended. You weren’t mentioned.

Your KPIs didn’t catch it.

That’s not a data gap. That’s a measurement system built for a world that no longer exists.

Ranking #1 on Google Doesn’t Mean You Exist in AI Search

Google’s dominance is cracking. Its global search market share has dropped below 90% for the first time since 2015, sitting at 89.56% as of early 2025. Meanwhile, ChatGPT now handles roughly 2.5 billion prompts per day, with about a third of those being direct information queries.

The shift isn’t just about volume. It’s about how answers get built.

AI platforms like ChatGPT, Perplexity, and Gemini increasingly use Retrieval-Augmented Generation (RAG): they retrieve relevant web sources at query time and synthesize an answer from them. They don’t serve a list of links. They make a judgment call about which brands to name, which to skip, and what to say about each one.

One industry study found that only 12% of AI-cited sources overlap with Google’s top 10 organic results.

That’s the gap most SEO teams still can’t see.

Why Traditional SEO KPIs Break Down for AEO

The entire logic of SEO measurement rests on a single assumption: users click links, and clicks are trackable.

AI search breaks that assumption completely.

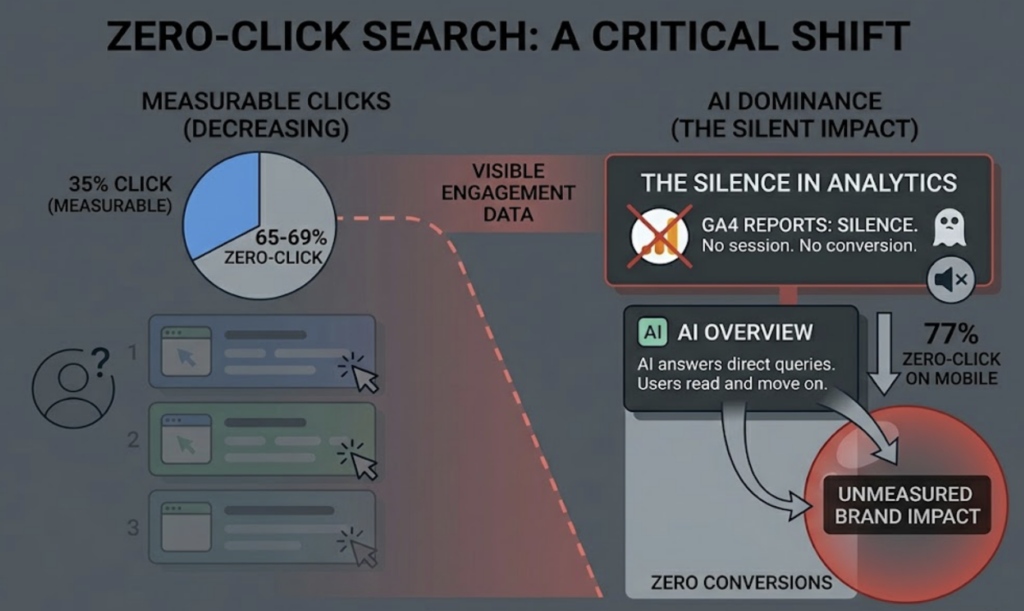

Zero-click search now accounts for 65–69% of all Google queries, and 77% on mobile. When AI Overviews answer a question directly, users read the summary and move on. No click. No session. No conversion event in GA4. Your analytics report shows silence while your brand’s narrative is actively being shaped in AI-generated text.

There are three specific failure modes worth understanding.

The invisible mention. A user asks an AI which software to use for your exact use case. Your brand gets described positively. They internalize the recommendation. GA4 shows zero traffic from the interaction.

The competitor blind spot. AI platforms often present competitors in a synthesized narrative, not as a list of domain names. Without dedicated monitoring, you have no way of knowing whether your share of voice in AI answers is eroding week by week.

The sentiment drift. AI pulls from third-party sources like Reddit, G2, and Wikipedia when forming its descriptions of brands. If your reputation is slipping in those channels, AI starts adding qualifiers. “While [Brand] is well-known, recent user feedback suggests…” That kind of framing does damage that never shows up in a keyword ranking report.

Gartner predicts that traditional search engine volume will drop 25% by 2026 as users shift to AI chatbots and other virtual agents. The measurement gap isn’t theoretical. It’s already costing brands visibility they can’t currently quantify.

The 5 KPIs That Actually Measure AEO Performance

These aren’t replacements for your existing SEO stack. They’re the metrics your current stack was never designed to capture.

1. AI Visibility Rate

This is the foundational AEO metric, and the closest equivalent to keyword ranking in traditional SEO.

It measures the percentage of prompts in a defined test set where your brand gets mentioned or cited by an AI model. If you run 100 industry-relevant queries and your brand appears in 18 of them, your AI Visibility Rate is 18%.

For market leaders, this number typically needs to exceed 30% to reflect genuine category authority. Most brands tracking this for the first time discover they’re well below that threshold, even when their Google rankings look healthy.
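As a minimal sketch of that arithmetic in Python, assume each test prompt is logged with two hypothetical fields, `brand_mentioned` and `brand_cited`; how those fields get filled in (by hand or by a tool) is left out.

```python
from dataclasses import dataclass


@dataclass
class PromptResult:
    """One logged AI answer for one test prompt (hypothetical log format)."""
    prompt: str
    brand_mentioned: bool  # brand named in the answer text
    brand_cited: bool      # brand's domain listed among the cited sources


def ai_visibility_rate(results: list[PromptResult]) -> float:
    """Percentage of prompts in which the brand was mentioned or cited."""
    if not results:
        return 0.0
    hits = sum(1 for r in results if r.brand_mentioned or r.brand_cited)
    return 100.0 * hits / len(results)


# Worked example from the text: 100 prompts, brand appears in 18 of them.
results = [PromptResult(f"prompt {i}", brand_mentioned=i < 18, brand_cited=False)
           for i in range(100)]
print(ai_visibility_rate(results))  # 18.0
```

Counting a prompt once whether the brand is mentioned, cited, or both keeps the rate bounded at 100% and matches the “mentioned or cited” definition above.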

2. Brand Mention Frequency by Platform

Not all AI platforms recommend the same brands. ChatGPT leans on Bing-indexed content and high-authority encyclopedia-style sources. Perplexity is a pure RAG engine that heavily weights Reddit discussions and real-time news. Gemini integrates Google’s Knowledge Graph and YouTube signals.

A brand that dominates on Perplexity can be nearly invisible on ChatGPT, and vice versa.

Tracking mention frequency across platforms separately gives you an accurate picture of where your AI presence is strong and where the gaps are. Averaging across platforms produces a number that’s accurate nowhere.
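A small sketch with invented log rows shows how a blended figure hides the split. Here the brand appears in 3 of 4 Perplexity answers and 1 of 4 ChatGPT answers; the row format `(platform, prompt_id, brand_mentioned)` is an assumption.

```python
from collections import defaultdict

# Invented log rows for illustration: (platform, prompt_id, brand_mentioned)
LOG = [
    ("perplexity", 1, True), ("perplexity", 2, True),
    ("perplexity", 3, True), ("perplexity", 4, False),
    ("chatgpt", 1, False), ("chatgpt", 2, False),
    ("chatgpt", 3, False), ("chatgpt", 4, True),
]


def mention_rate_by_platform(rows):
    """Mention rate (%) computed separately for each platform."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for platform, _prompt_id, mentioned in rows:
        totals[platform] += 1
        hits[platform] += int(mentioned)
    return {p: 100.0 * hits[p] / totals[p] for p in totals}


rates = mention_rate_by_platform(LOG)
blended = sum(rates.values()) / len(rates)
print(rates)    # {'perplexity': 75.0, 'chatgpt': 25.0}
print(blended)  # 50.0, a number that matches neither platform
```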

3. AI Sentiment Score

Visibility without sentiment context is incomplete data.

This metric tracks the tone AI uses when mentioning your brand, expressed as a score (typically on a 0–100 or -100 to +100 scale). The calculation weighs positive recommendations and neutral mentions against negative descriptions and factual errors that AI generates about your brand.

Being mentioned with the wrong framing compounds over time. AI systems aren’t static. They update their descriptions of brands as new content gets crawled. A negative sentiment score is a leading indicator that needs to be addressed at the source: the third-party content AI is pulling from.

High visibility with a low sentiment score isn’t a win.
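One simple way to turn labeled mentions into such a score is a net-sentiment average, sketched below. It assumes each mention has already been labeled by a reviewer or classifier, and the weights (+1 positive, 0 neutral, -1 negative or factually wrong) are illustrative assumptions rather than a standard.

```python
# Assumed per-mention weights; real tools may weight these differently.
WEIGHTS = {"positive": 1.0, "neutral": 0.0, "negative": -1.0, "factual_error": -1.0}


def sentiment_score(labels: list[str]) -> float:
    """Net sentiment on a -100..+100 scale (mean weight times 100)."""
    if not labels:
        return 0.0
    return 100.0 * sum(WEIGHTS[label] for label in labels) / len(labels)


def to_0_100(score: float) -> float:
    """Rescale a -100..+100 score onto 0..100."""
    return (score + 100.0) / 2.0


# 10 labeled mentions: 6 positive, 2 neutral, 1 negative, 1 factual error.
labels = ["positive"] * 6 + ["neutral"] * 2 + ["negative", "factual_error"]
s = sentiment_score(labels)
print(s, to_0_100(s))  # 40.0 70.0
```

Neutral mentions count toward the denominator, so a brand that is mostly mentioned in passing drifts toward zero rather than scoring as strongly positive.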

4. Source Citation Share

Roughly 85% of AI citations come from third-party sources, not brand-owned domains. That means the content shaping how AI describes your brand is largely outside your direct control.

Source Citation Share measures what percentage of AI-referenced domains in your category belong to you versus competitors and third parties. It’s the most direct signal of how much your content ecosystem is influencing AI output.

If a competitor consistently shows up in AI answers because three key industry blogs cite them heavily, that’s actionable intelligence. It points directly to where your PR and content partnerships strategy needs to go.
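The calculation can be sketched as below, assuming you have collected the URLs cited across your prompt set. The brand and competitor domains are hypothetical placeholders, and the second return value counts third-party domains so the most-cited outside sources surface first.

```python
from collections import Counter
from urllib.parse import urlparse

# Hypothetical domains for illustration only.
OWN_DOMAINS = {"example.com"}
COMPETITOR_DOMAINS = {"rival-a.com", "rival-b.com"}


def normalize_domain(url: str) -> str:
    """Lowercase host with a leading 'www.' stripped."""
    host = urlparse(url).netloc.lower()
    return host[4:] if host.startswith("www.") else host


def source_citation_share(cited_urls: list[str]) -> tuple[dict[str, float], Counter]:
    """Return % of citations per bucket plus a count of third-party domains."""
    buckets: Counter = Counter()
    third_party: Counter = Counter()
    for url in cited_urls:
        d = normalize_domain(url)
        if d in OWN_DOMAINS:
            buckets["own"] += 1
        elif d in COMPETITOR_DOMAINS:
            buckets["competitor"] += 1
        else:
            buckets["third_party"] += 1
            third_party[d] += 1
    total = sum(buckets.values())
    if total == 0:
        return {}, third_party
    shares = {k: 100.0 * buckets[k] / total for k in ("own", "competitor", "third_party")}
    return shares, third_party


cited = [
    "https://www.example.com/guide",
    "https://rival-a.com/pricing",
    "https://www.g2.com/products/rival-a",
    "https://www.reddit.com/r/saas/comments/abc",
    "https://www.g2.com/compare/x-vs-y",
    "https://industry-blog.example.net/top-tools",
    "https://rival-b.com/blog",
    "https://www.g2.com/categories/z",
]
shares, third = source_citation_share(cited)
print(shares)                # {'own': 12.5, 'competitor': 25.0, 'third_party': 62.5}
print(third.most_common(1))  # [('g2.com', 3)]
```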

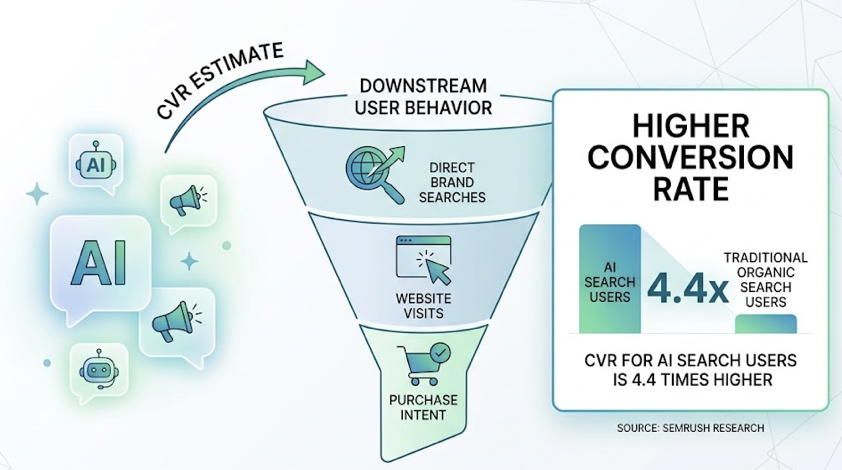

5. Conversion Visibility Rate (CVR)

This is the AEO metric that ties most directly to business outcomes.

CVR estimates the likelihood that AI-generated mentions of your brand lead to downstream user behavior: direct brand searches, website visits, or purchase intent. Research from Semrush indicates that users arriving from AI search convert at 4.4 times the rate of traditional organic search users.

The practical measurement approach is correlation analysis: track how changes in your AI Visibility Rate correlate with movement in branded search volume. The relationship is real, but it’s not immediate. AI visibility improvements typically take 60–90 days to surface in branded search data.
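The correlation step can be sketched as a lagged Pearson correlation: compare weekly AI Visibility Rate in week t with branded search volume in week t + k, and scan k across roughly 9–13 weeks (about 63–91 days) to match the 60–90 day delay described above. The series below are synthetic, built so that branded search follows visibility by exactly 10 weeks; real data will be noisier, and correlation alone does not establish causation.

```python
from math import sqrt


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sqrt(sum((a - mx) ** 2 for a in x))
    sy = sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def lagged_correlation(visibility, branded_search, lag_weeks):
    """Correlate visibility in week t with branded search in week t + lag_weeks."""
    if lag_weeks == 0:
        return pearson(visibility, branded_search)
    return pearson(visibility[:-lag_weeks], branded_search[lag_weeks:])


def best_lag(visibility, branded_search, lags):
    """Lag (in weeks) with the highest correlation."""
    return max(lags, key=lambda k: lagged_correlation(visibility, branded_search, k))


# Synthetic weekly series (30 weeks): branded search echoes visibility 10 weeks later.
visibility = [float((i * 7) % 11) for i in range(30)]
branded_search = [100.0] * 10 + [2 * v + 100 for v in visibility[:-10]]

lag = best_lag(visibility, branded_search, range(9, 14))
print(lag, round(lagged_correlation(visibility, branded_search, lag), 3))  # 10 1.0
```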

Position in AI Answers Isn’t One Number

In traditional SEO, Position 1 is straightforwardly better than Position 3.

AI answers don’t work that way.

An AI response might mention your brand as the first recommendation in a long-form answer, or as a brief comparison point near the end, or as a cited source in the footnotes without naming you in the main text. Each of these carries a fundamentally different weight.

One emerging way to standardize this is a Citation Placement Index (CPI) that assigns weighted scores to different mention types: a primary recommendation scores 10 points, a top-3 placement scores 7, a lower-list appearance scores 4, and a passing mention scores 2.

That scoring structure matters because eight passing mentions are not equivalent to eight primary recommendations, even though the raw mention count is identical: under these weights, the first scores 16 points and the second scores 80.
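Applying the CPI weights above per prompt makes the difference visible. In this sketch, two hypothetical brands are each mentioned in 8 of 10 prompts, one only in passing and the other as the primary recommendation; scoring prompts with no mention as 0 is an assumption about how to treat absences.

```python
from typing import Optional

# Weights from the CPI scheme described above.
CPI_WEIGHTS = {"primary": 10, "top3": 7, "lower_list": 4, "passing": 2}


def average_cpi(placements: list[Optional[str]]) -> float:
    """Mean CPI per prompt; None means the brand was not mentioned (0 points)."""
    if not placements:
        return 0.0
    return sum(CPI_WEIGHTS[p] if p else 0 for p in placements) / len(placements)


# Two brands, each mentioned in 8 of 10 prompts.
brand_a = ["passing"] * 8 + [None] * 2
brand_b = ["primary"] * 8 + [None] * 2
print(average_cpi(brand_a), average_cpi(brand_b))  # 1.6 8.0
```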

The other thing to stop tracking: average ranking across platforms. If ChatGPT puts you third and Perplexity puts you first, the average (Position 2) tells you nothing useful. The right question is why your authority signals are stronger in Perplexity’s crawl path than in ChatGPT’s. That answer points to a specific content and distribution strategy.

How to Build an AEO KPI Dashboard That Works

Start with 30–50 core prompts that cover your target user’s decision journey: awareness-stage questions (“What is [category]?”), consideration-stage questions (“What are the top options for [use case]?”), and comparison-stage questions (“[Brand A] vs. [Brand B]?”).

Track those prompts weekly, not monthly. AI models, particularly RAG-based systems, update their recommended sources continuously. Studies suggest 40–60% of citation sources change within any given month. Monthly reporting lags too far behind to be useful for optimization decisions.
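A minimal sketch of both ideas follows: a stage-tagged prompt set built from templates, and a simple churn measure for cited sources. The template wording, placeholder values, and the churn definition (share of previously cited domains that no longer appear) are assumptions for illustration.

```python
# Hypothetical template set covering the three decision stages in the text.
PROMPT_TEMPLATES = {
    "awareness": ["What is {category}?"],
    "consideration": ["What are the top options for {use_case}?"],
    "comparison": ["{brand_a} vs. {brand_b}?"],
}


def build_prompt_set(**values: str) -> list[tuple[str, str]]:
    """Expand each template into a (stage, prompt) pair."""
    return [
        (stage, template.format(**values))
        for stage, templates in PROMPT_TEMPLATES.items()
        for template in templates
    ]


def source_churn(previous: set[str], current: set[str]) -> float:
    """Share (%) of previously cited domains that no longer appear."""
    if not previous:
        return 0.0
    return 100.0 * len(previous - current) / len(previous)


prompts = build_prompt_set(category="task management software",
                           use_case="remote team task tracking",
                           brand_a="Acme", brand_b="Globex")
for stage, prompt in prompts:
    print(stage, "|", prompt)

four_weeks_ago = {"g2.com", "reddit.com", "wikipedia.org", "capterra.com", "techblog.example"}
this_week = {"g2.com", "reddit.com", "capterra.com", "news.example", "forum.example"}
print(source_churn(four_weeks_ago, this_week))  # 40.0
```

Expanding 30–50 prompts this way keeps each one tagged with its decision stage, so weekly results can be broken down by awareness, consideration, and comparison.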

This is where a platform like Topify changes what’s operationally possible. Topify tracks brand performance across ChatGPT, Gemini, Perplexity, DeepSeek, and other major AI platforms against seven core metrics: visibility, sentiment, position, volume, mentions, intent, and CVR. The Source Analysis module reverse-engineers the exact domains AI platforms are citing, so if a competitor is dominating AI recommendations because of three specific industry publications, you can see that directly and adjust your content and PR strategy accordingly.

The visibility radar view makes cross-platform gaps immediately obvious. A significant drop on one platform alone usually points to a technical issue in that platform’s crawl path rather than a content quality problem, since a content problem would tend to show up across every platform at once.
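That reasoning can be encoded as a rough week-over-week check. This is a hypothetical sketch, not how Topify or any other tool implements its radar view; the 10-percentage-point threshold and the diagnosis labels are assumptions.

```python
def diagnose_drops(last_week: dict[str, float], this_week: dict[str, float],
                   threshold: float = 10.0) -> str:
    """Classify week-over-week visibility drops (in percentage points)."""
    drops = sorted(p for p in last_week
                   if last_week[p] - this_week.get(p, 0.0) >= threshold)
    if not drops:
        return "no significant drop"
    if len(drops) == len(last_week):
        return "cross-platform drop: review content and third-party sources"
    return "platform-specific drop on " + ", ".join(drops) + ": check that platform's crawl path"


last_week = {"chatgpt": 22.0, "perplexity": 35.0, "gemini": 18.0}
this_week = {"chatgpt": 21.0, "perplexity": 19.0, "gemini": 17.5}
print(diagnose_drops(last_week, this_week))
```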

One integration note for teams running both AEO and traditional SEO metrics: in GA4, AI-referred traffic frequently gets miscategorized as Direct or Referral. Set up a custom channel grouping to isolate traffic from AI sources like perplexity.ai. Then run correlation analysis between your AI Visibility Rate and branded search trends over 90-day windows. That’s the most reliable way to demonstrate AEO’s contribution to business outcomes in terms your leadership team already understands.
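A source-matching regex like the one below can be tested locally before you adapt it for a custom channel group’s source condition in GA4. The hostname list is an assumption and will go stale as platforms change domains, and how GA4 applies the pattern should be checked in your own property. No pattern can recover sessions whose referrer was stripped: those arrive with a source of `(direct)` and stay in Direct.

```python
import re

# Assumed AI referrer hostnames; check them against the sources GA4 actually records.
AI_SOURCE_REGEX = (
    r"^(.*\.)?(chatgpt\.com|chat\.openai\.com|perplexity\.ai"
    r"|gemini\.google\.com|copilot\.microsoft\.com|claude\.ai)$"
)
_AI_SOURCE = re.compile(AI_SOURCE_REGEX, re.IGNORECASE)


def classify_source(source: str) -> str:
    """Map a GA4-style session source string to a channel label."""
    s = source.strip()
    if _AI_SOURCE.match(s):
        return "AI Referral"
    if s == "(direct)":
        return "Direct"
    return "Other"


for src in ["perplexity.ai", "www.perplexity.ai", "chatgpt.com", "(direct)", "notperplexity.ai"]:
    print(src, "->", classify_source(src))
```

Anchoring on a leading dot (or the start of the string) keeps look-alike hosts such as `notperplexity.ai` out of the AI channel.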

AEO isn’t a replacement for your existing SEO stack. It’s the layer your current stack was built without.

Conclusion

Rankings and organic traffic aren’t going to zero. But they’re no longer telling you the full story of where your brand stands in the minds of high-intent buyers.

The search session that doesn’t generate a click, the AI recommendation that shapes a purchasing decision before a user ever visits your site, the competitor quietly accumulating authority in AI answer systems while your dashboard stays green: none of that is visible in a traditional KPI report.

AI Visibility Rate, Brand Mention Frequency, Sentiment Score, Source Citation Share, and CVR aren’t abstract metrics for an abstract future. They’re the signals that reflect what’s already happening to your brand in AI search, whether you’re measuring it or not.

Start measuring it.

FAQ

What’s the difference between SEO KPIs and AEO KPIs? SEO KPIs track user pathways: how did someone get to your site? AEO KPIs track cognitive influence: what did AI tell someone about your brand before they made a decision? SEO pushes traffic. AEO shapes authority.

How often should I check my AEO metrics? Weekly is the minimum. AI citation sources change at a rate of 40–60% per month, so monthly reporting is too slow to catch meaningful shifts before they compound.

Can I track AEO KPIs without a dedicated tool? At small scale, yes. You can manually submit prompts to each AI platform and log mention frequency, sentiment, and cited domains in a spreadsheet. It’s not scalable and it won’t give you competitive benchmarks, but it’s a reasonable starting point for understanding your baseline.
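For the spreadsheet route, a CSV file works as well as any sheet. The column layout below is a hypothetical suggestion and the example rows are invented; the sketch writes to an in-memory buffer so it runs anywhere, but the same function works with a file opened in append mode.

```python
import csv
import io

# Hypothetical column layout for a manual tracking sheet.
FIELDS = ["date", "platform", "prompt", "mentioned", "sentiment", "cited_domains"]


def append_rows(fh, rows):
    """Append log rows, writing the header only if the file is empty."""
    writer = csv.DictWriter(fh, fieldnames=FIELDS)
    if fh.tell() == 0:
        writer.writeheader()
    writer.writerows(rows)


rows = [
    {"date": "2025-06-02", "platform": "chatgpt",
     "prompt": "What are the top options for remote team task tracking?",
     "mentioned": "yes", "sentiment": "positive",
     "cited_domains": "g2.com;reddit.com"},
    {"date": "2025-06-02", "platform": "perplexity",
     "prompt": "What are the top options for remote team task tracking?",
     "mentioned": "no", "sentiment": "", "cited_domains": "capterra.com"},
]

buf = io.StringIO()  # stands in for open("aeo_log.csv", "a", newline="")
append_rows(buf, rows)
parsed = list(csv.DictReader(io.StringIO(buf.getvalue())))
visibility = 100.0 * sum(r["mentioned"] == "yes" for r in parsed) / len(parsed)
print(visibility)  # 50.0
```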

Which AI platform should I prioritize? It depends on your audience. B2B brands should prioritize Perplexity (more precise academic and real-time sourcing) and ChatGPT (largest user base). E-commerce and local service brands should prioritize Google AI Overviews, which integrate directly with Shopping and Maps data.

How do I benchmark my AEO performance against competitors? Build an AEO Readiness Score for each competitor across three dimensions: content structure and schema markup, third-party entity authority (number and quality of external sources citing them), and raw citation frequency in your core prompt set. Score each on a 1–5 scale, for a maximum total of 15. Any competitor scoring above 10 total has already established algorithmic trust that you’ll need a deliberate strategy to close.
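The scoring rule is simple enough to sketch directly. Dimension names follow the answer above; the competitor names and scores are invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class ReadinessScore:
    """AEO Readiness inputs for one competitor, each scored 1-5."""
    content_structure: int   # content structure and schema markup
    entity_authority: int    # number and quality of external sources citing them
    citation_frequency: int  # raw citation frequency in your core prompt set

    def total(self) -> int:
        parts = (self.content_structure, self.entity_authority, self.citation_frequency)
        if any(not 1 <= p <= 5 for p in parts):
            raise ValueError("each dimension must be scored 1-5")
        return sum(parts)


def established_competitors(scores: dict[str, ReadinessScore], threshold: int = 10) -> list[str]:
    """Competitors whose total exceeds the threshold (maximum possible is 15)."""
    return sorted(name for name, s in scores.items() if s.total() > threshold)


scores = {
    "Rival A": ReadinessScore(4, 4, 3),  # total 11
    "Rival B": ReadinessScore(3, 2, 4),  # total 9
    "Rival C": ReadinessScore(5, 3, 2),  # total 10, not above the threshold
}
print(established_competitors(scores))  # ['Rival A']
```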