Your domain authority is 72. You’re ranking top-three for a dozen high-intent keywords. Then your CMO pulls out her phone, types your category into ChatGPT, and asks why your brand isn’t in the response. You don’t have an answer, because nothing in your current report was built to track that.

Traditional keyword rankings measure a world that’s changing fast. The KPIs replacing them are already in use by GEO teams that figured this out the hard way.

Your Keyword Report Doesn’t Know What AI Sees

The premise of a “ranking” assumes a fixed list. For two decades, SEO worked because Google returned ten links in a deterministic order, and position one meant something concrete. Generative engines don’t work that way.

AI platforms return a recommendation, not a list. A brand is either cited in the synthesized answer or it isn’t. That binary outcome has no equivalent in traditional rank tracking.

The data behind this shift is hard to ignore. Gartner projects that traditional search engine volume will drop 25% by 2026 as users move toward AI-powered answer tools. When Google’s AI Overviews appear in results, organic click-through rates for the first traditional result drop by as much as 61%. In Google’s full AI Mode environment, the zero-click rate climbs to an estimated 93%.

What’s more unsettling for SEO teams: the overlap between AI Overview citations and top-10 organic rankings has dropped from 76% to roughly 38%, with some analyses putting it as low as 17%. And 80% of LLM citations now come from pages that don’t rank in Google’s top 100 for the original query.

That’s not a gap. That’s a different game entirely.

What “Answer Share” Means (and Why It’s Now the Core AEO KPI)

Answer Share is the percentage of relevant AI-generated responses that mention or cite your brand across a defined set of prompts. The formula is straightforward:

Answer Share = (# of responses mentioning brand ÷ total prompts tested) × 100

If you test 50 prompts relevant to your category and your brand appears in 18 of the AI responses, your Answer Share is 36%.
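The formula translates directly into a few lines of Python. This is a minimal sketch: the function name `answer_share` and its arguments are illustrative, not part of any tool's API.

```python
def answer_share(mentions: int, prompts_tested: int) -> float:
    """Return Answer Share as a percentage of tested prompts that mention the brand."""
    if prompts_tested <= 0:
        raise ValueError("prompts_tested must be positive")
    if not 0 <= mentions <= prompts_tested:
        raise ValueError("mentions must be between 0 and prompts_tested")
    return 100 * mentions / prompts_tested


# The worked example above: the brand appears in 18 of 50 responses.
print(answer_share(18, 50))  # 36.0
```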

Where Search Share of Voice tracked link appearances on a page, Answer Share tracks how often your brand is part of the actual “truth” the AI constructs for a user. When someone asks ChatGPT “What’s the best quiet cordless vacuum for a home with pets?”, the AI synthesizes a buyer’s guide. If your brand isn’t in that guide, it doesn’t exist for that buyer at that moment.

The quality of traffic this generates is worth noting. AI search visitors are estimated to be 4.4 times more valuable than traditional organic visitors due to their high conversion intent. Separately, AI-referred traffic converts at a 31% higher rate than non-AI sources. These aren’t huge volumes yet, but the intent density is meaningfully higher.

Answer Share is the new North Star metric. The other four KPIs exist to explain and improve it.

The 5 KPIs for AEO That GEO Teams Are Actually Using in 2026

| KPI | What It Measures | Why It Matters |
|---|---|---|
| Answer Share | % of relevant AI responses mentioning your brand | Primary visibility indicator |
| Citation Rate | How often the AI links to your domain vs. just naming you | Distinguishes “known” from “trusted” |
| Prompt Coverage | # of distinct topic clusters where you have any visibility | Maps content gaps across the buyer journey |
| Sentiment Score | Qualitative framing (positive / neutral / negative) of mentions | High visibility with bad framing is a liability |
| Position in Answer | Your numerical order in multi-brand recommendation lists | Captures primacy bias: 1st position drives 32% higher purchase intent |

Answer Share: The Visibility Baseline

This tells you whether the AI retrieves your brand at all. For new brands in competitive categories, any Answer Share above 0% in a contested niche is a meaningful starting point. Established brands typically target 20-40% share across their core prompt clusters.

Citation Rate: The Trust Metric

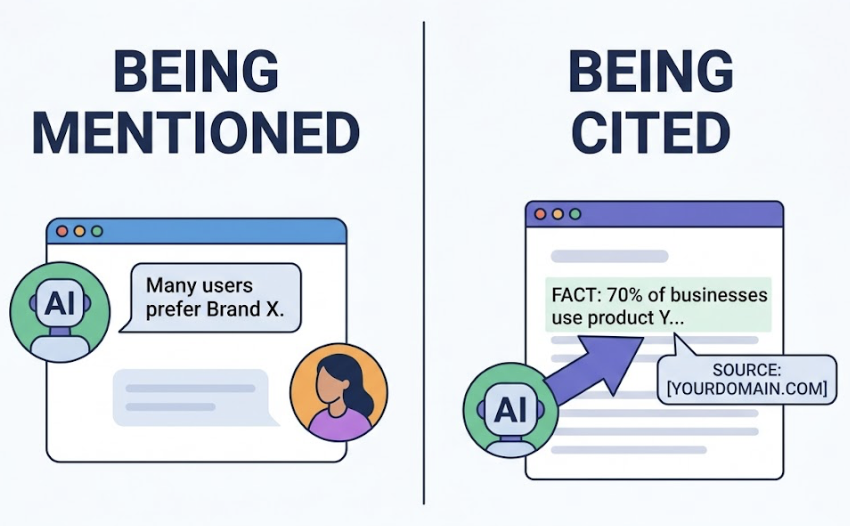

Being mentioned and being cited are different things. An AI might say “many users prefer Brand X” without linking anywhere. A citation happens when the AI explicitly references your domain as the source of a specific fact. High Citation Rates come from publishing original research and proprietary data — content that LLMs need to ground their answers. Studies show that including statistics and authoritative quotes can improve AI visibility by 30-40%.
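One way to separate mentions from citations in logged responses is sketched below. It rests on assumptions: each response is a dict with hypothetical `mentions_brand` and `cited_urls` fields, and Citation Rate is taken as the share of brand-mentioning responses that also link to your domain. Other denominators are equally defensible.

```python
from urllib.parse import urlparse


def cites_domain(cited_urls, domain):
    """True if any cited URL is hosted on `domain` or one of its subdomains."""
    for url in cited_urls:
        host = (urlparse(url).hostname or "").lower()
        if host == domain or host.endswith("." + domain):
            return True
    return False


def citation_rate(responses, domain):
    """Percentage of brand-mentioning responses that also cite `domain` (assumed definition)."""
    mentioned = [r for r in responses if r["mentions_brand"]]
    if not mentioned:
        return 0.0
    cited = sum(1 for r in mentioned if cites_domain(r["cited_urls"], domain))
    return 100 * cited / len(mentioned)


# Illustrative data only; example.com stands in for your own domain.
responses = [
    {"mentions_brand": True, "cited_urls": ["https://www.example.com/research"]},
    {"mentions_brand": True, "cited_urls": ["https://reddit.com/r/some-thread"]},
    {"mentions_brand": True, "cited_urls": []},
    {"mentions_brand": True, "cited_urls": ["https://notexample.com/"]},
    {"mentions_brand": False, "cited_urls": []},
]
print(citation_rate(responses, "example.com"))  # 25.0
```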

Prompt Coverage: The Journey Metric

Traditional SEO focuses on head terms. Prompt Coverage looks at whether your brand is visible across the full arc of how users actually ask AI questions. Those prompts average 23 words, compared to 4 for a traditional search query. You need coverage at the discovery phase (“What is…”), the evaluation phase (“Best for…”), and the comparison phase (“X vs Y”).
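A rough sketch of how Prompt Coverage could be tallied from test results. The cluster labels and the `(cluster, mentioned)` tuple shape are assumptions chosen for illustration.

```python
def prompt_coverage(results):
    """results: iterable of (cluster, brand_was_mentioned) pairs, one per tested prompt.

    Returns (covered_clusters, gap_clusters), both sorted alphabetically.
    """
    results = list(results)
    all_clusters = {cluster for cluster, _ in results}
    covered = {cluster for cluster, mentioned in results if mentioned}
    return sorted(covered), sorted(all_clusters - covered)


# Illustrative results: no visibility yet on discovery-phase prompts.
results = [
    ("discovery", False), ("discovery", False),
    ("evaluation", True), ("evaluation", False),
    ("comparison", True),
]
covered, gaps = prompt_coverage(results)
print(f"Covered {len(covered)} of {len(covered) + len(gaps)} clusters; gaps: {gaps}")
```

A cluster counts as covered here if the brand shows up in at least one of its prompts, which matches the "any visibility" wording in the table.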

Sentiment Score: The Qualitative Check

AI synthesizes consensus from across the web. That means it can inherit negative sentiment from Reddit threads or review sites. A brand mentioned as “the most expensive but least reliable option” has high Answer Share and a disastrous Sentiment Score. Positively framed LLM summaries increase purchase likelihood by 32% — so visibility without sentiment monitoring is only half the picture.
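If each mention is labeled positive, neutral, or negative (by a reviewer or a classifier), a simple net score makes the trend reportable. The +1 / 0 / -1 weighting below is an assumption for illustration, not an established standard.

```python
from collections import Counter

# Assumed weights: the score ranges from -1 (all negative) to +1 (all positive).
WEIGHTS = {"positive": 1, "neutral": 0, "negative": -1}


def sentiment_score(labels):
    """Average weight of the sentiment labels, or None if there are no mentions."""
    counts = Counter(labels)
    unknown = set(counts) - set(WEIGHTS)
    if unknown:
        raise ValueError(f"unknown sentiment labels: {sorted(unknown)}")
    total = sum(counts.values())
    if total == 0:
        return None
    return sum(WEIGHTS[label] * n for label, n in counts.items()) / total


# Four mentions: one positive, one neutral, two negative.
print(sentiment_score(["positive", "neutral", "negative", "negative"]))  # -0.25
```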

Position in Answer: The Prominence Signal

Primacy bias is real in LLM interactions. The first brand mentioned in a multi-brand list carries 32% higher purchase intent than those listed later. Tracking whether you’re the primary recommendation or the afterthought in a list is the AEO equivalent of position 1 vs. position 7 in legacy SEO.
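Position can be logged as the brand's 1-based rank in each recommendation list, then summarized. This is a sketch under the assumption that you have already extracted the ordered brand names from each answer; the brand names are placeholders.

```python
from statistics import mean


def position_of(brand, brands_in_order):
    """1-based position of `brand` in an ordered list of names, or None if absent."""
    target = brand.casefold()
    for position, name in enumerate(brands_in_order, start=1):
        if name.casefold() == target:
            return position
    return None


def position_summary(brand, answers):
    """Summarize how often and how early `brand` appears across answers."""
    positions = [position_of(brand, answer) for answer in answers]
    positions = [p for p in positions if p is not None]
    if not positions:
        return None
    return {
        "appearances": len(positions),
        "first_position_rate": 100 * positions.count(1) / len(positions),
        "mean_position": mean(positions),
    }


answers = [
    ["Acme", "Beta", "Gamma"],
    ["Beta", "Acme"],
    ["Gamma", "Beta"],  # Acme absent, so this answer is skipped
    ["acme"],
]
summary = position_summary("Acme", answers)
print(summary["appearances"], round(summary["first_position_rate"], 1))
```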

Why Most Teams Only Track One of These Five

The honest answer: manual tracking doesn’t scale.

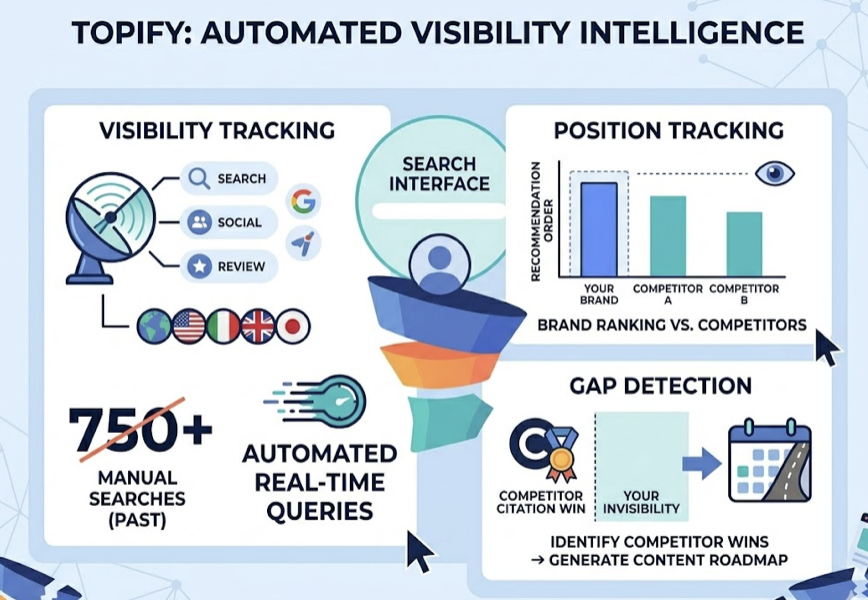

Testing 50 prompts across three platforms (ChatGPT, Perplexity, Gemini) in five markets equals 750 individual manual searches per month. Most teams run 10 prompts on ChatGPT once a month and call it “AI search monitoring.” That anecdotal approach produces directional guesses, not KPIs.
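The arithmetic is easy to verify by enumerating the test matrix. The prompt texts and market codes below are placeholders, not recommendations.

```python
from itertools import product

prompts = [f"prompt {i}" for i in range(1, 51)]  # 50 placeholder prompts
platforms = ["ChatGPT", "Perplexity", "Gemini"]
markets = ["US", "UK", "DE", "FR", "JP"]  # hypothetical set of five markets

# Every (prompt, platform, market) combination is one search to run.
test_matrix = list(product(prompts, platforms, markets))
print(len(test_matrix))  # 750
```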

There’s also a structural problem: each platform retrieves information differently. ChatGPT relies heavily on training data plus real-time search, with a strong bias toward Reddit for professional services. Perplexity is search-first and favors recency and source diversity, making it the platform of choice for high-intent shoppers close to a decision. Google Gemini correlates strongly with E-E-A-T signals and organic rankings. A brand might have 40% Answer Share on Perplexity and 5% on ChatGPT for the same query set — and without cross-platform tracking, the team has no idea.
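Breaking Answer Share out by platform surfaces exactly this kind of split. A minimal sketch, assuming results are logged as `(platform, mentioned)` pairs; the data is made up to mirror the 40% vs. 5% scenario.

```python
from collections import defaultdict


def answer_share_by_platform(records):
    """records: iterable of (platform, brand_was_mentioned) pairs."""
    tested = defaultdict(int)
    mentioned = defaultdict(int)
    for platform, was_mentioned in records:
        tested[platform] += 1
        mentioned[platform] += int(was_mentioned)
    return {platform: 100 * mentioned[platform] / tested[platform] for platform in tested}


# Same 20 prompts on each platform: 8 mentions on Perplexity, 1 on ChatGPT.
records = [("Perplexity", i < 8) for i in range(20)]
records += [("ChatGPT", i < 1) for i in range(20)]
print(answer_share_by_platform(records))  # {'Perplexity': 40.0, 'ChatGPT': 5.0}
```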

The other trap is the exposure-sentiment mismatch. A team successfully optimizes for Answer Share, watches their visibility climb, and misses the fact that the AI is describing their product in a neutral or negative tone. High visibility, wrong story.

One more reason not to check monthly and move on: AI visibility is volatile. Research shows a brand’s AI visibility can decline by 36% in as little as five weeks without any change in traditional organic rankings. Regular monitoring isn’t optional — it’s the baseline for any credible AEO program.

How to Set Your First AEO KPI Baselines

The prerequisite for any AEO strategy is a structured prompt set and a clean initial measurement.

Step 1: Build a Master Prompt List (30-50 prompts)

Start with 30 to 50 intentionally unbranded prompts that reflect how your target audience actually asks AI questions. Mix informational queries (“What’s the best way to solve X?”), evaluation queries (“Top tools for Y use case”), and direct comparisons (“Brand A vs Brand B for Z”). Sources for these: your Google Search Console long-tail data, “People Also Ask” boxes, and your sales team, who hears the literal questions prospects ask.

Enterprise teams managing multiple product lines typically track 100+ prompts per cluster. For context, Topify’s Basic plan supports 100 prompts with 9,000 AI answer analyses per month across ChatGPT, Gemini, Perplexity, and other major platforms, enough to get statistically meaningful data at a $99/month entry point.

Step 2: Test Across Platforms in Private Mode

Run each prompt across your target platforms using private/ephemeral chat to avoid personalization bias. Record your Answer Share, note whether citations link to your domain, log your position in any multi-brand recommendation list, and flag the sentiment framing. This is your baseline.
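A consistent record format keeps the baseline comparable month to month. The sketch below is one possible layout; the `Observation` class and its field names are assumptions, and the sample rows are invented.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields
from typing import Optional


@dataclass
class Observation:
    run_date: str                # ISO date of the test run
    platform: str                # e.g. "ChatGPT", "Perplexity", "Gemini"
    prompt: str
    mentioned: bool              # feeds Answer Share
    cited_own_domain: bool       # feeds Citation Rate
    position: Optional[int]      # 1-based rank in a multi-brand list, if any
    sentiment: Optional[str]     # "positive", "neutral", "negative", or None


def to_csv(observations):
    """Serialize observations to CSV text with a header row."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=[f.name for f in fields(Observation)])
    writer.writeheader()
    for obs in observations:
        writer.writerow(asdict(obs))
    return buffer.getvalue()


baseline = [
    Observation("2026-01-05", "Perplexity", "best quiet cordless vacuum for pets",
                True, True, 2, "positive"),
    Observation("2026-01-05", "ChatGPT", "best quiet cordless vacuum for pets",
                False, False, None, None),
]
print(to_csv(baseline))
```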

For global brands: AI visibility can vary by as much as 2.8x depending on geography, so testing across key markets is necessary for an accurate picture.

Step 3: Track Monthly and Watch for Movement

Baselines are only useful if you track against them. Monthly cadence is the industry standard for most brands. If your Answer Share drops while organic rankings hold steady, it’s a signal that the AI’s “consensus engine” has shifted toward a competitor’s content or a new third-party source. That’s an actionable content gap, not a mystery.
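That signal can be turned into a simple monthly check. The thresholds here (a 5-point drop, a rank shift of at most 1 position) are arbitrary placeholders to tune for your own data, not industry benchmarks.

```python
def ai_visibility_alert(baseline_share, current_share,
                        baseline_avg_rank, current_avg_rank,
                        min_drop_points=5.0, max_rank_shift=1.0):
    """Flag an Answer Share drop that organic rankings do not explain.

    Shares are percentages; ranks are average organic positions.
    Both thresholds are assumed values, chosen only for illustration.
    """
    share_drop = baseline_share - current_share
    rankings_stable = abs(current_avg_rank - baseline_avg_rank) <= max_rank_shift
    return share_drop >= min_drop_points and rankings_stable


# Answer Share fell from 36% to 24% while average organic rank barely moved.
print(ai_visibility_alert(36.0, 24.0, 3.2, 3.4))  # True
```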

This is where automated platforms like Topify move from nice-to-have to necessary. Manually checking 50 prompts across three platforms and five countries every month is a 750-search operation. Topify’s Visibility Tracking automates that cross-platform query process in real time, while its Position Tracking monitors where your brand sits in the recommendation order relative to competitors. The Gap Detection feature identifies the specific prompts where a competitor is winning citations and you’re invisible — turning a data problem into a direct content roadmap.

Reporting These KPIs to a CMO Who Still Thinks in Rankings

The final hurdle isn’t measurement. It’s translation.

Most CMOs and executive stakeholders still think in terms of “Page 1 of Google.” The fastest way to reframe this: position Answer Share as digital market share. A 28% Answer Share in your core category means the AI recommends your brand in roughly 1 in 4 relevant conversations. That framing lands differently than “we tracked 50 prompts across three platforms.”

A practical AEO dashboard for monthly executive reporting:

- AI Visibility Index (Answer Share %): The primary metric. Show MoM trend, not just the number.

- Sentiment Score Trend: Qualitative brand health signal. Is the AI describing you more or less favorably than 90 days ago?

- Top Cited Sources: Which third-party domains (Reddit, G2, Forbes, niche publications) are driving AI citations. This justifies PR and community budget.

- Competitive Answer Share: Your share vs. top 3 competitors, side by side. This “Share of Voice” view is often the most persuasive element for leadership.

- CVR (Conversion Visibility Rate): The downstream impact estimate — how AI citations translate into predicted lead volume or revenue.
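Two of the dashboard rows, the month-over-month trend and the competitive view, reduce to small calculations. A sketch with invented numbers; the function names and brand labels are illustrative.

```python
def month_over_month(series):
    """Point changes between consecutive monthly Answer Share values."""
    return [round(curr - prev, 1) for prev, curr in zip(series, series[1:])]


def competitive_answer_share(mentions_by_brand, prompts_tested):
    """Answer Share per brand over the same prompt set, highest first."""
    shares = {brand: 100 * n / prompts_tested for brand, n in mentions_by_brand.items()}
    return dict(sorted(shares.items(), key=lambda item: item[1], reverse=True))


print(month_over_month([22.0, 25.0, 28.0]))  # [3.0, 3.0]

ranking = competitive_answer_share(
    {"Your brand": 14, "Competitor A": 20, "Competitor B": 9}, prompts_tested=50
)
print(ranking)
```

Reporting the change in percentage points, rather than as a relative percentage, avoids overstating movement when the starting share is small.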

For SaaS brands specifically, a well-run AEO program typically targets a 15-20% lift in the AI Visibility Index within the first two quarters of active optimization. That’s a concrete, reportable number. Topify’s Competitor Monitoring surfaces the side-by-side benchmarking data needed to build that narrative, tracking Visibility, Sentiment, and Position relative to named competitors across all major AI platforms.

Conclusion

Keyword rankings aren’t going to zero. But their value as the primary performance signal is already compromised for any category where AI platforms are part of how buyers research decisions.

The new KPI stack — Answer Share, Citation Rate, Prompt Coverage, Sentiment Score, and Position in Answer — isn’t a theoretical framework. It’s what GEO teams that report accurately to leadership are already using. The core shift is simple but consequential: stop measuring where you appear in a list, and start measuring how often you’re part of the answer.

FAQ

Is “Answer Share” the same as “Brand Mention Rate”?

Not quite. Brand Mention Rate is a raw count of how many times your name appears. Answer Share is a percentage measured against a defined set of intent-based prompts, so it shows which buyer questions surface your brand, not just that you were mentioned.

How many prompts do I need for reliable AEO KPIs?

30 to 50 prompts is the industry standard for a focused brand or product line. Enterprise teams managing multiple categories typically use 100+ prompts per cluster to ensure statistical confidence.

Can I track AEO KPIs without a dedicated tool?

A one-time audit, yes. Ongoing performance monitoring, no. Account personalization in AI platforms introduces bias, and the volume required for accurate cross-platform tracking makes purely manual approaches unreliable at scale.

How often should I report on AEO performance?

Monthly rollups for brand health. For high-competition categories, weekly monitoring of Share of Voice and Citation Gaps is recommended — AI visibility is volatile enough that monthly-only tracking can leave you 4 weeks behind a competitor shift.

What’s a good Answer Share benchmark for a new brand?

For a new brand, any Answer Share above 5-10% in a niche category is a strong starting point. The first goal is “Entity Recognition” — getting the AI to reliably associate your brand with the relevant category at all.