We ran Claude Code through 6 real junior-level tasks. Here’s exactly where it delivered, where it broke down, and what that means for engineering teams in 2026.

Something’s shifting inside engineering orgs right now. Hiring committees are quietly asking whether they should greenlight that junior developer requisition or just expand the AI tooling budget instead. Claude Code is sitting on the table. The conversation is uncomfortable, and most teams don’t have a framework for it yet.

Here’s the honest answer: Claude Code is genuinely impressive, genuinely limited, and genuinely changing what junior developers are supposed to do. Not replacing them. Reshaping them.

That distinction matters enormously if you’re making headcount decisions in 2026.

What Junior Developers Actually Do All Day

Before you can evaluate any AI coding tool against a junior dev, you need to stop thinking of the role as “writes code.” That’s like saying a sous-chef “chops vegetables.”

Junior developers carry two very different kinds of work. The first kind is visible. The second kind is what keeps projects from quietly falling apart.

The Tasks That Look Like AI Territory

Boilerplate generation. Unit test coverage. Fixing regression bugs. Writing documentation stubs. Migrating deprecated API calls. These are the tasks that show up in sprint backlogs and get counted in velocity metrics.

They’re also the tasks where AI is eating the most ground. Industry estimates from 2025 put AI-generated code at roughly 41% of all code produced globally, a share that is still climbing. For these deterministic, well-defined tasks, Claude Code doesn’t just match junior developer output. It often exceeds it in speed and consistency.

The Tasks That Don’t Show Up in Job Postings

Context-gathering. Translating a product manager’s offhand Slack comment into a technically coherent spec. Sitting in a retro and absorbing institutional knowledge that’s never been documented. Building enough trust with a senior engineer to ask the “dumb questions” that surface the critical constraints nobody wrote down.

These tasks don’t appear in job descriptions. They don’t have story points. But they’re the connective tissue that keeps software projects coherent. And in 2026, no AI agent has learned how to attend a meeting and read the room.

6 Tasks We Tested with Claude Code

To move past speculation, we mapped Claude Code’s performance against six real junior-level engineering scenarios. Here’s what the data shows.

| Task | Claude Code performance | Junior Dev Advantage |
|---|---|---|
| Write unit tests for existing function | Strong | Minimal: AI is more thorough on edge cases |
| Fix regression bug in legacy codebase | Partial | Understands implicit side-effects, tribal knowledge |
| Implement feature from vague spec | Weak | Communication, clarification, product instinct |
| Review PR and leave comments | Strong | Minimal: AI coverage is broader |
| Onboard into unfamiliar repo | Partial | Builds social network, mental model beyond docs |
| Coordinate fix across 2 services | Weak | Cross-team sync, dependency negotiation |

Unit tests and PR review: Claude Code is faster, more consistent, and doesn’t experience the fatigue that makes humans skim. Its 1M-token context window lets it hold an entire microservice in working memory while identifying edge cases a junior dev would need hours to find manually.

Legacy bug fixes and onboarding: Mixed results. Claude Code can search a 400,000-file repository far faster than any person, but it can’t explain why a particular architectural decision was made three years ago under deadline pressure. It also can’t ask a colleague over lunch. Teams report that the AI produces patches that are “locally correct but globally breaking”: fixes that pass unit tests while silently introducing concurrency issues downstream.
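To make “locally correct but globally breaking” concrete, here is a minimal sketch of the pattern. Everything in it is hypothetical: the `Inventory` class, the `reserve` method, and the `pause` hook are invented for illustration and do not come from any real codebase or from our test runs. The hook exists only so the example can force two callers to interleave in a repeatable way.

```python
import threading


class Inventory:
    """Hypothetical stock counter shared by several callers."""

    def __init__(self, stock):
        self.stock = stock

    def reserve(self, qty, pause=None):
        # Check-then-write logic: correct when callers arrive one at a time.
        current = self.stock
        if current < qty:
            return False
        if pause is not None:
            pause()  # illustration-only hook: hold here until the other caller arrives
        self.stock = current - qty
        return True


def unit_test_passes():
    # The kind of single-threaded test a patch would be checked against.
    inv = Inventory(stock=1)
    return inv.reserve(1) is True and inv.reserve(1) is False and inv.stock == 0


def concurrent_oversell():
    # Two callers read the stock before either one writes it back.
    inv = Inventory(stock=1)
    barrier = threading.Barrier(2, timeout=5)
    results = []

    def worker():
        results.append(inv.reserve(1, pause=barrier.wait))

    threads = [threading.Thread(target=worker) for _ in range(2)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return results, inv.stock


print(unit_test_passes())
print(concurrent_oversell())
```

The single-threaded check returns `True`, yet the concurrent run returns `([True, True], 0)`: both callers are told they reserved the last unit. Nothing in the unit test would flag that, which is why this class of bug tends to surface downstream rather than in review.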

Vague specs and cross-service coordination: This is where Claude Code reliably struggles. Faced with an instruction like “improve the checkout experience on mobile,” it produces code. Technically valid code. Code that completely misses what the product lead actually meant. The gap isn’t technical ability — it’s the absence of any mechanism for asking a follow-up question in a human context.

Where Claude Code Is Genuinely Faster

Let’s give credit where it’s due, because underselling this tool doesn’t help anyone plan accurately.

For deterministic tasks at scale, Claude Code is a different category of productive. Teams using it for framework upgrades involving 50 or more files are reporting 60 to 70% time reductions compared to manual junior dev work. That’s not marginal. That’s a structural shift in how long a certain class of work takes.

Anthropic’s own internal teams have run five or more AI agents simultaneously, producing 300 merged pull requests in a single month. That figure would require a sizable junior engineering cohort under traditional workflows.

For solo founders and small startups, Claude Code effectively fills the role of an entry-level engineering bench. Feed it an architecture diagram. Get back a functional backend service skeleton. Let the senior engineer focus on the decisions that actually require judgment.

The tool also provides something human reviewers can’t: 24/7 code review with no degradation. It catches known security vulnerabilities, style violations, and architectural anti-patterns at the same quality level at 2 AM on a Sunday as it does at 10 AM on a Tuesday. For teams trying to reduce technical debt accumulation, that’s a meaningful capability.

The Gap Nobody’s Talking About: Ambiguity

Here’s the thing that gets lost in the “AI will take all the jobs” discourse.

Ambiguity doesn’t live in the code. It lives in the meeting before the code.

Real software engineering isn’t a series of well-defined tasks waiting to be executed. It’s a continuous process of converting fuzzy human intentions into precise logical instructions. A product manager says “make the onboarding feel lighter.” A stakeholder says “we need to move faster on this.” A business requirement says “optimize for retention” without specifying which cohort, over what time window, at what acceptable cost to conversion.
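One way to picture that conversion is to treat a fuzzy request as a record with blanks in it. The sketch below is an assumption-laden illustration, not a tool we used: `RetentionSpec`, its field names, and the sample values are all invented here to mirror the three gaps in the “optimize for retention” example.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class RetentionSpec:
    """Hypothetical checklist for an 'optimize for retention' request."""

    goal: str
    cohort: Optional[str] = None                      # which users?
    window_days: Optional[int] = None                 # over what time window?
    max_conversion_drop_pct: Optional[float] = None   # acceptable cost to conversion


def open_questions(spec):
    """Return the names of the fields nobody has pinned down yet."""
    return [f.name for f in fields(spec) if getattr(spec, f.name) is None]


# The request as it arrives from a stakeholder.
vague = RetentionSpec(goal="optimize for retention")

# The same request after the follow-up conversations (values are made up).
precise = RetentionSpec(
    goal="optimize for retention",
    cohort="users in their first 30 days",
    window_days=90,
    max_conversion_drop_pct=1.0,
)

print(open_questions(vague))
print(open_questions(precise))
```

The first call lists `cohort`, `window_days`, and `max_conversion_drop_pct` as unanswered; the second returns an empty list. The code is trivial on purpose. The work is in the conversations that fill in the blanks.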

Claude Code takes instructions literally. That’s not a bug — it’s an architectural constraint of how large language models work in 2026. When the input is precise, the output is excellent. When the input is ambiguous, the output is confidently wrong.

Junior developers who understand this gap and lean into it are building the most durable career moat available right now. The skill of converting organizational ambiguity into executable specifications — through conversation, observation, and judgment — is what the research literature is starting to call “intent architecture.” It’s less about writing code and more about being fluent in two languages: human and machine.

That skill is not going to be automated in the near term. Possibly not for a long time after that.

What This Means for Engineering Teams in 2026

The strategic question isn’t “should we replace junior developers with Claude Code?” The right question is “what should junior developers be doing now that Claude Code exists?”

For engineering managers: The case for continuing to hire junior talent isn’t weakened by AI tools. It’s restructured. If you stop building an entry-level pipeline today, you’ll face a senior engineer shortage in five years. There’s no accelerated path to staff-level engineering that skips the foundational learning entirely. The industry term for what happens when you stop hiring juniors is “leadership vacuum,” and it tends to go unnoticed until it’s expensive.

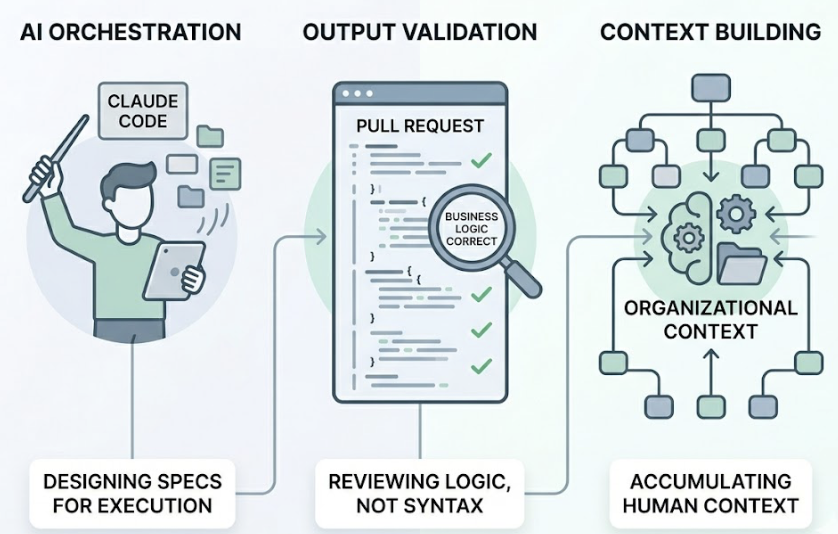

What changes is the job description. Junior developers in high-performing 2026 teams are increasingly functioning as AI orchestrators and output validators. They’re writing specs that Claude Code can execute cleanly. They’re reviewing AI-generated PRs for business logic correctness, not syntax. They’re building the organizational context that no AI agent can accumulate.

For junior developers: The career move here is toward the parts of the job that feel most like communication and least like typing. Distributed systems architecture. Security fundamentals. The ability to walk into a room where nobody agrees on requirements and come out with a document that an AI agent can actually use. These capabilities compound over time in a way that syntax memorization never did.

The employment data is sobering but not fatal. Employment rates for developers aged 22 to 25 are down roughly 20% from the 2022 peak. That contraction is real. But the market is correcting toward a different skill model, not toward zero. AI is projected to create approximately 2.3 million new jobs globally, significantly more than it displaces, as industries that previously couldn’t afford to build software start finding uses for it.

The developers who navigate this well are the ones treating Claude Code as a force multiplier and positioning themselves as the judgment layer it can’t replace.

Conclusion

Claude Code is not the end of junior developers. It’s the end of junior developers whose value is primarily measured in lines of code produced per week.

One junior developer with Claude Code, a clear spec, and a solid understanding of the codebase they’re working in can now produce output that would have required a small team two years ago. That’s an extraordinary amount of leverage. But it requires a different kind of junior developer — one who’s fluent in ambiguity, comfortable with system-level thinking, and willing to spend time on the unglamorous work of building organizational context.

The teams that figure this out first are going to have a meaningful structural advantage. Not because they replaced their junior developers. Because they retrained them for the work AI can’t do.

That window won’t stay open forever. As multi-agent systems continue to mature past 2027, the coordination tasks that currently require human involvement will start to compress too. The strongest move, for both managers and early-career developers, is to stay ahead of where that line is moving.

FAQ

Is Claude Code reliable enough to use without a senior developer on the team?

Not yet. Claude Code performs well on isolated, well-scoped tasks. In complex multi-service architectures, it can introduce subtle concurrency errors or make locally correct changes that break behavior elsewhere in the system. Without a senior engineer doing architectural oversight and final risk validation, AI-generated code can accumulate technical debt that costs far more to unwind than the time it saved upfront.

Will AI replace software developers entirely in the next five years?

Not entirely. The more useful framing is that the role is shifting from manual code production toward decision-making and specification. The entry-level “coding as typing” subskill is increasingly automated. But software engineering as a discipline (understanding systems, managing tradeoffs, communicating intent across teams) is expanding into industries that previously had no software at all. The net employment picture over five years is likely positive, but the transition is real and uneven.

What’s the practical difference between Claude Code and GitHub Copilot for a dev team?

Copilot operates inside the IDE, providing inline suggestions that accelerate moment-to-moment coding. It’s optimized for keeping you in flow while you work. Claude Code operates at the terminal and task level — you give it a goal, it reads the relevant code, plans a solution, and executes across multiple files. The most effective teams use both: Copilot for daily coding velocity, Claude Code for larger delegated tasks like refactors, migrations, and test generation.