Harness publishes more engineering content than most DevOps companies combined. Detailed breakdowns of canary deployments, AI-driven testing, pipeline governance — the blog runs deep.

And yet, when buyers ask ChatGPT or Perplexity to recommend a CI/CD platform, Harness often shows up third. Sometimes not at all.

That’s not a content quality problem. It’s a structural one.

There’s a growing divergence between what a brand publishes and what AI engines actually retrieve, cite, and surface in answers. For Harness, that divergence is measurable — and it reveals a pattern that applies to almost every technical brand still running a 2022-era content strategy in 2026.

Harness Publishes a Lot. That Doesn’t Mean AI Listens.

Harness’s engineering blog covers topics from branch-scoped build IDs to intelligent workload modeling. The production cadence is aggressive, the depth is genuine, and the technical quality is hard to fault.

But AI retrieval doesn’t work like Google PageRank.

Generative engines use Retrieval-Augmented Generation (RAG): they break a query into sub-queries, pull fragments from dozens of sources, and synthesize a final answer. What gets cited isn’t the most comprehensive piece — it’s the most extractable one. Content that leads with a direct answer in its first 40–60 words has a measurably higher chance of appearing in AI responses. Content that builds context before reaching its main point often doesn’t make the cut at all.

Harness’s blog, while rich in detail, tends to follow a narrative structure where critical data lands after introductory context. In RAG terms, that’s a structural disadvantage.
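One rough way to audit a section against that opening-window heuristic is to count how many words pass before the first concrete figure shows up. The sketch below is illustrative only: the function names, the 60-word default, and the use of "first token containing a digit" as a stand-in for "critical data" are all assumptions, not a description of how any AI engine scores content.

```python
import re
from typing import Optional


def first_figure_offset(section_text: str) -> Optional[int]:
    """Return the 1-based word position of the first token containing a digit,
    or None if the section contains no figures at all."""
    for position, word in enumerate(section_text.split(), start=1):
        if re.search(r"\d", word):
            return position
    return None


def leads_with_data(section_text: str, window: int = 60) -> bool:
    """True if a figure appears within the first `window` words.
    The 60-word default is an assumed cutoff taken from the 40-60 word range."""
    offset = first_figure_offset(section_text)
    return offset is not None and offset <= window


# Hypothetical sample sections for comparison.
answer_first = "Occasional users average 6.3 hours of incident recovery time."
narrative = " ".join(["context"] * 80) + " 6.3 hours"

print(first_figure_offset(answer_first))
print(leads_with_data(answer_first), leads_with_data(narrative))
```

A digit check is a crude proxy. It is useful for triaging a large content inventory, not for judging an individual article.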

The result: a brand with a 76/100 AI Visibility Score that earns high sentiment (85–92/100 across major platforms) but consistently occupies secondary or tertiary positions behind GitHub Actions and GitLab in general category queries.

High quality. Lower citation. That’s the gap.

What AI Actually Says When Users Ask About Harness

Run a prompt like “What’s the best CI/CD platform for enterprise deployments?” across ChatGPT, Gemini, and Perplexity. You’ll get a clear pattern.

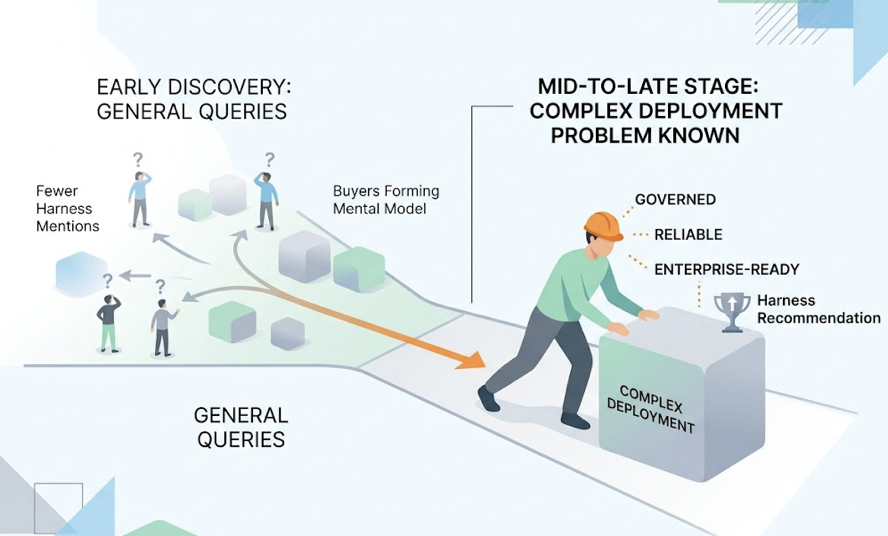

GitHub Actions gets framed as “The Default Engine” — easy to start, massive ecosystem, low friction. GitLab shows up as “The Integrated Suite” — unified DevSecOps, strong policy enforcement. Harness lands as “The Smart Orchestrator” — specifically recommended for mid-to-large organizations with complex, multi-environment deployment strategies.

That’s actually a strong position. The problem is trigger rate.

Harness earns the recommendation when users already know they have a complex deployment problem. For general discovery queries — the earlier-stage prompts where buyers are still forming their mental model of the category — Harness gets fewer mentions. AI models describe it with terms like “governed,” “reliable,” and “enterprise-ready.” Authoritative, yes. But not the first name that comes up.

The Prompts AI Gets Asked Most

The prompts that drive AI category recommendations aren’t the technical deep-dives. They’re conversational: “How do I reduce deployment failures?” or “What tools do DevOps teams use for AI-assisted pipelines?” These top-of-funnel (TOFU) queries shape brand perception before a buyer ever reaches a comparison page.

For these prompts, Harness’s entity salience — the AI’s confidence in associating the brand with a specific problem — is weaker than its technical reputation would suggest.

The Sources AI Actually Cites

Here’s what makes this structural rather than accidental: AI models don’t primarily cite vendor blogs. Roughly 43% of all AI citations come from what researchers call “aristocratic domains” — Wikipedia, Reddit, YouTube, LinkedIn. News outlets account for another ~27%. Owned vendor content? Around 3%.

Harness’s content investment is heavily concentrated in that 3% bucket.

The 3 Gaps Hidden in Harness’s Content Strategy

Gap 1: Topic Priority Mismatch

Harness publishes what engineers find interesting. AI retrieves what buyers are asking. Those two lists overlap, but they’re not identical.

Security is a clear example. Harness has a Security Testing Orchestration (STO) module, but when users run security-specific queries — SAST/DAST, AI-enhanced scanning, vulnerability remediation — Snyk and GitHub Advanced Security surface first. The content exists; the external validation connecting Harness to the security category doesn’t.

AI models rely on what’s called “neighborhood of trust” logic: they look for multiple independent sources connecting the same brand to the same problem using consistent terminology. If only Harness is saying Harness solves AI security, the model treats it as a vendor claim. If G2, a Reddit thread, and a Gartner brief all say the same thing, it becomes a consensus fact.

Gap 2: Format Mismatch

FAQ sections generate 3.2x higher citation rates than standard narrative content. Original research and proprietary data increase citation likelihood by around 30%. TL;DR summaries aligned with AI’s opening-content bias consistently outperform long-form narrative for retrieval purposes.

Harness’s blog is mostly long-form narrative. The format is built for human comprehension, not modular extraction.

Every H2 or H3 in an AI-optimized article needs to function as a standalone unit: a complete thought that can be independently cited without surrounding context. A section headed “Branch-Scoped Build IDs Explained” often depends on the preceding paragraphs to make sense, so an AI chunking that section for RAG gets an ambiguous fragment. A competitor’s structured FAQ that leads with “Branch-scoped IDs reduce pipeline conflicts by isolating build state per branch” gets the citation.
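To see what a retriever might see, it helps to split an article by heading and read each section's first line in isolation. This is a minimal sketch under stated assumptions: it assumes markdown-style `## ` and `### ` headings, and real RAG pipelines chunk in many different ways (token windows, semantic splitting). The function name and the sample document are hypothetical.

```python
from typing import Dict, List


def chunk_by_heading(markdown_text: str) -> List[Dict[str, str]]:
    """Split on '## ' and '### ' headings, keeping each heading
    together with the first non-empty line that follows it."""
    chunks: List[Dict[str, str]] = []
    for line in markdown_text.splitlines():
        stripped = line.strip()
        if stripped.startswith(("## ", "### ")):
            chunks.append({"heading": stripped.lstrip("#").strip(), "lead": ""})
        elif stripped and chunks and not chunks[-1]["lead"]:
            chunks[-1]["lead"] = stripped
    return chunks


# Hypothetical two-section document: one context-dependent, one answer-first.
doc = """## Branch-Scoped Build IDs Explained
As covered earlier, this approach builds on the previous setup.

## What do branch-scoped build IDs do?
Branch-scoped IDs reduce pipeline conflicts by isolating build state per branch.
"""

for chunk in chunk_by_heading(doc):
    print(chunk["heading"], "->", chunk["lead"])
```

Reading the output line by line is the test: if a lead sentence only makes sense with the previous section in view, that chunk is unlikely to stand alone.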

Gap 3: Source Authority Mismatch

This is the most significant gap. Brands are 6.5 times more likely to be cited by AI through a third-party source than through their own website.

Harness has a strong G2 presence (4.6/5 stars), but review volume and recency matter too. AI crawlers and retrieval systems weight recent, frequently updated sources. ChatGPT, in particular, shows a strong preference for content updated within the last 90 days. A blog post from 18 months ago, no matter how authoritative, may be deprioritized regardless of its historical SEO performance.

Active community presence in places like r/devops, structured Wikipedia entity maintenance, and regular LinkedIn editorial content are what move a brand into the “aristocratic domain” tier. These aren’t soft PR activities. They’re the primary inputs to AI citation logic.

Why This Gap Exists (It’s Not Just Harness)

The AI narrative gap is a systemic byproduct of running a content strategy built for Google in an era when buyers route through AI.

By 2025, roughly 60% of searches in the US and Europe had become zero-click experiences, where the AI answer is the touchpoint and the user never reaches the brand’s website. More striking: over 60% of AI Overview citations come from URLs that rank outside the top 20 of traditional search results. A page at position #40 can become the primary evidence for an AI summary if it’s factually dense and structurally extractable.

Technical brands like Harness are especially vulnerable to this shift. Engineering culture values depth and precision — exactly the qualities that make content compelling to human readers but difficult for RAG systems to parse quickly.

Most engineering brands don’t know what AI is saying about them.

There’s also the “AI Velocity Paradox” to consider. Organizations adopting AI coding tools without modernizing their delivery infrastructure see downstream friction increase, not decrease. Data shows that heavy AI coding tool users average 7.6-hour incident recovery times versus 6.3 hours for occasional users. The content parallel holds: more output without structural optimization creates more noise, not more signal.

How to Spot Your Own AI Narrative Gap

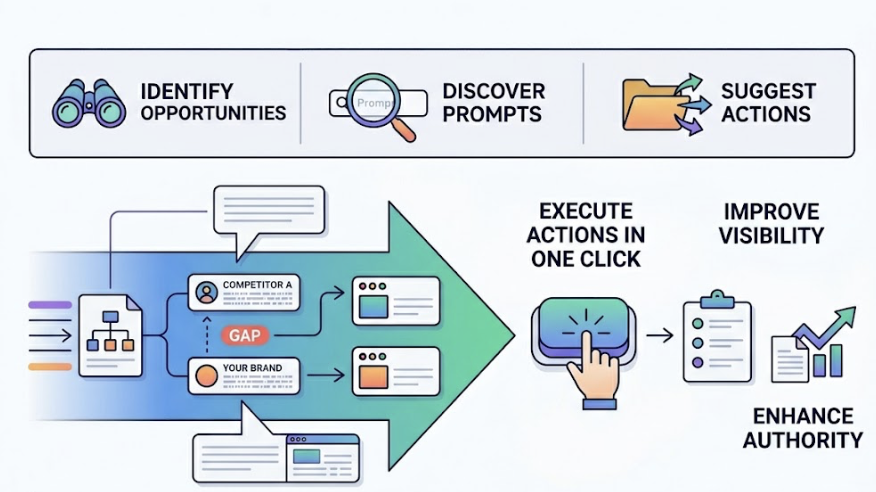

The monitoring framework for AI visibility is different from traditional SEO analytics. CTR and keyword rankings don’t capture influence at the answer level. It takes three steps.

Step 1: Run the Prompts Your Buyers Actually Ask

Build a Prompt Matrix — 25 to 100 conversational queries that simulate real buyer journeys across awareness, consideration, and decision stages. Not “Harness CI/CD” (branded). More like “How do DevOps teams handle multi-environment deployments?” or “What’s the difference between Harness and ArgoCD for Kubernetes?”

Run these across ChatGPT, Gemini, and Perplexity. Document where your brand appears, what language surrounds it, and what sources AI cites when it mentions you.
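A Prompt Matrix can start as a simple table: one row per prompt per platform, with empty fields for what you observe. The sketch below shows one possible shape. The stage templates, topic list, and field names are assumptions for illustration, and it deliberately makes no API calls; filling in the observations is left to whatever process you use to run the prompts.

```python
from itertools import product
from typing import Dict, List

# Hypothetical templates per buyer-journey stage.
STAGE_TEMPLATES: Dict[str, List[str]] = {
    "awareness": ["How do DevOps teams handle {topic}?"],
    "consideration": ["What tools do DevOps teams use for {topic}?"],
    "decision": ["What is the best CI/CD platform for {topic}?"],
}
TOPICS = ["multi-environment deployments", "AI-assisted pipelines"]
PLATFORMS = ["ChatGPT", "Gemini", "Perplexity"]


def build_prompt_matrix(stage_templates, topics, platforms) -> List[dict]:
    """Expand stage templates x topics x platforms into rows to fill in by hand."""
    rows = []
    for (stage, templates), topic, platform in product(
        stage_templates.items(), topics, platforms
    ):
        for template in templates:
            rows.append({
                "stage": stage,
                "platform": platform,
                "prompt": template.format(topic=topic),
                "brand_mentioned": None,   # recorded after running the prompt
                "position": None,          # 1 = first brand named in the answer
                "cited_sources": [],       # URLs the answer cites
            })
    return rows


matrix = build_prompt_matrix(STAGE_TEMPLATES, TOPICS, PLATFORMS)
print(len(matrix), "rows;", len({row["prompt"] for row in matrix}), "unique prompts")
```

This toy version yields only six unique prompts. Reaching the 25 to 100 range means adding templates and topics, not changing the structure.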

Step 2: Compare AI Citations Against Your Published Content

Map the sources AI actually cites against your content inventory. You’ll typically find a mismatch: AI is pulling from a Reddit thread, a G2 review, or a third-party comparison post — not your blog.

That mismatch is your action list. The sources AI trusts for your category are exactly where you need to build presence.
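Once the cited URLs are collected, the mapping is mostly a counting exercise. The following is a minimal sketch, assuming you have a flat list of cited URLs and a set of domains you own. The function names and the sample URLs are placeholders, not real citation data.

```python
from collections import Counter
from typing import Iterable, List, Set, Tuple
from urllib.parse import urlparse


def cited_domain_counts(cited_urls: Iterable[str]) -> Counter:
    """Count citations per domain, ignoring a leading 'www.'."""
    counts: Counter = Counter()
    for url in cited_urls:
        host = urlparse(url).netloc.lower()
        if host.startswith("www."):
            host = host[4:]
        counts[host] += 1
    return counts


def presence_gaps(cited_urls: Iterable[str], owned_domains: Set[str]) -> List[Tuple[str, int]]:
    """Domains AI cites that you do not own, most-cited first."""
    counts = cited_domain_counts(cited_urls)
    return [(domain, n) for domain, n in counts.most_common() if domain not in owned_domains]


# Placeholder URLs standing in for sources recorded in the Prompt Matrix.
cited = [
    "https://www.reddit.com/r/devops/",
    "https://www.g2.com/",
    "https://www.reddit.com/r/devops/",
    "https://www.harness.io/blog/",
]
print(presence_gaps(cited, owned_domains={"harness.io"}))
```

The output is the action list in ranked form: the domains at the top are where presence matters most for your category.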

Step 3: Measure the Gap, Not Just the Traffic

AI Share of Voice (AI SoV) is the core metric: the percentage of category AI responses that include your brand as a cited or recommended source. Benchmarks suggest 40–70% SoV signals primary category authority; below 20% indicates a significant visibility problem.
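As a calculation, AI SoV is just mentions divided by responses. Here is a hedged sketch: the response format (a dict with a `brands` list) and the function names are assumptions, and the band labels only encode the benchmarks quoted above. The source gives no label for the 20 to 40% range or for values above 70%, so the sketch treats anything at or above 40% as the top band.

```python
from typing import Dict, List


def ai_share_of_voice(responses: List[Dict], brand: str) -> float:
    """Percentage of responses that cite or recommend `brand`."""
    if not responses:
        return 0.0
    hits = sum(1 for response in responses if brand in response["brands"])
    return 100.0 * hits / len(responses)


def sov_band(sov: float) -> str:
    """Map a SoV percentage onto the benchmark bands (thresholds from the text)."""
    if sov >= 40:
        return "primary category authority"
    if sov < 20:
        return "significant visibility problem"
    return "between benchmarks"


# Hypothetical results from five category prompts.
responses = [
    {"brands": ["GitHub Actions", "GitLab", "Harness"]},
    {"brands": ["GitHub Actions", "GitLab"]},
    {"brands": ["Harness", "GitLab"]},
    {"brands": ["GitHub Actions"]},
    {"brands": ["GitLab"]},
]
sov = ai_share_of_voice(responses, "Harness")
print(sov, sov_band(sov))
```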

Pair this with a Sentiment Score (0–100) and Position Index (where in a response list your brand appears). First-position mentions drive 1.5 to 2x more clicks and trust than third-position mentions — and AI referral traffic converts at around 14.2%, roughly five times higher than Google organic traffic. Position matters more than presence.
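Position Index can be tracked from the same prompt runs. This sketch assumes each response has already been reduced to an ordered list of brand names, which is the hard part and is not shown. The function name and the summary fields are hypothetical, and Sentiment Score is left out because it needs a separate scoring method.

```python
from typing import Dict, List, Optional


def position_index(ranked_responses: List[List[str]], brand: str) -> Optional[Dict[str, float]]:
    """Summarize where `brand` appears across responses (1 = named first).
    Returns None if the brand is never mentioned."""
    positions = [
        ranking.index(brand) + 1
        for ranking in ranked_responses
        if brand in ranking
    ]
    if not positions:
        return None
    return {
        "mentions": len(positions),
        "mean_position": sum(positions) / len(positions),
        "first_position_rate": positions.count(1) / len(positions),
    }


# Hypothetical brand orderings extracted from three AI answers.
rankings = [
    ["GitHub Actions", "GitLab", "Harness"],
    ["Harness", "GitLab"],
    ["GitHub Actions", "GitLab"],
]
print(position_index(rankings, "Harness"))
```

Tracking the first-position rate separately from the mean keeps the two questions apart: how often you appear, and how often you lead.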

Topify automates this process through its AI Volume Analytics and Source Analysis modules: tracking which domains AI platforms actually cite in your category, mapping your brand’s position across platforms like ChatGPT, Gemini, and Perplexity, and surfacing the prompt clusters where competitors are gaining ground. Instead of manually running 100 prompts on each platform, you get a structured view of where your entity salience is strong and where it’s collapsing.

What Closing the Gap Actually Looks Like

The fix isn’t more content. It’s structurally different content distributed to structurally different places.

Restructure for modular extraction. Every major section needs an answer-first structure: direct response in the opening sentence, supporting data in the following two to three sentences, context last. Implement FAQ schema markup — research shows a 40–42% increase in citation likelihood for pages using it correctly.
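For the FAQ markup itself, the schema.org vocabulary defines a `FAQPage` type whose `mainEntity` holds `Question` items, each with an `acceptedAnswer`. The sketch below generates that JSON-LD from question and answer pairs. The helper name is hypothetical, the sample pair is adapted from this article's own FAQ, and the output is meant to be embedded in a `<script type="application/ld+json">` tag. Valid markup is no guarantee of a citation.

```python
import json
from typing import List, Tuple


def faq_jsonld(pairs: List[Tuple[str, str]]) -> str:
    """Render (question, answer) pairs as schema.org FAQPage JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


print(faq_jsonld([
    (
        "What is an AI narrative gap in content strategy?",
        "The misalignment between what a brand publishes and what AI engines "
        "actually retrieve and cite in generated answers.",
    ),
]))
```

Keep each answer text self-contained and answer-first, since the markup only labels the content and does not fix its structure.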

Seed the aristocratic domains. If Source Analysis shows AI citing G2 and Reddit for your category, those channels need active investment. For Harness, that means structured G2 review campaigns to build recency, regular participation in r/devops threads on relevant topics, and Wikipedia entity updates that connect the brand to current AI-native DevOps terminology.

Establish original data as a recurring asset. Harness’s security analysis of the McKinsey AI incident — where an AI agent discovered over 200 API endpoints and identified 22 unauthenticated ones within minutes — is exactly the kind of factually dense, procedurally clear content that earns AI citations. It provides a specific statistic, a named organization, and a clear cause-effect chain. That’s the template. Original research with hard numbers, published consistently, builds the kind of entity authority that AI models treat as a consensus reference.

Maintain content freshness on cornerstone pages. ChatGPT’s recency bias toward content updated within 90 days means that evergreen content needs a refresh cycle, not just a publication date. High-priority category pages should be reviewed and updated quarterly.
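A refresh cycle is easier to keep when stale pages are flagged automatically. This is a minimal sketch, assuming you can export each cornerstone page's last-updated date. The paths and dates are made up, and the 90-day threshold simply mirrors the recency window mentioned above.

```python
from datetime import date, timedelta
from typing import Dict, List


def stale_pages(last_updated: Dict[str, date], today: date, max_age_days: int = 90) -> List[str]:
    """Return pages whose last update is older than `max_age_days`, sorted by path."""
    cutoff = today - timedelta(days=max_age_days)
    return sorted(path for path, updated in last_updated.items() if updated < cutoff)


# Hypothetical cornerstone pages and their last-updated dates.
pages = {
    "/platform/continuous-delivery": date(2025, 12, 1),
    "/blog/canary-deployments": date(2024, 7, 1),
}
print(stale_pages(pages, today=date(2026, 1, 1)))
```

Passing `today` explicitly keeps the check reproducible; in a scheduled job it would typically be `date.today()`.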

Topify’s Competitor Monitoring and Visibility Tracking modules follow these shifts in real time, so you can see when a competitor’s citation rate is climbing in a prompt cluster where you’ve historically led, and respond before the gap compounds.

Conclusion

Harness’s AI narrative gap isn’t a sign of weak content. It’s a sign of content built for a channel that’s no longer the primary one.

The buyers using generative AI to research DevOps platforms aren’t reading blog posts; they’re asking questions and acting on the synthesized answers they get back. If a brand isn’t present in those answers, its content investment effectively doesn’t exist for that buyer.

The shift from SEO to generative engine optimization (GEO) isn’t about abandoning what works. It’s about extending it: restructuring content for modular extraction, seeding third-party platforms AI actually trusts, and tracking visibility at the answer level rather than the ranking level.

The brands that figure this out first will occupy the first-position recommendation slots that drive 1.5 to 2x more referral trust. In a zero-click world, that position is the only one that pays.

FAQ

What is an AI narrative gap in content strategy?

An AI narrative gap is the misalignment between what a brand publishes and what AI engines actually retrieve and cite in generated answers. A brand can have extensive, high-quality content and still have low AI visibility if that content isn’t structured for modular extraction or distributed across the third-party sources AI models trust most.

How do I find out what AI says about my brand?

Build a Prompt Matrix of 25–100 conversational queries that reflect real buyer journeys in your category. Run them across ChatGPT, Gemini, and Perplexity, and document where your brand appears, what language surrounds it, and which external sources get cited. Platforms like Topify automate this process and track AI Share of Voice over time.

Does blog content influence AI search results?

Indirectly. AI retrieval systems weight third-party sources — G2, Reddit, Wikipedia, industry publications — more heavily than owned vendor content. Your blog can support AI visibility, but only if its content is also reflected in those external sources. Owned content accounts for roughly 3% of AI citations; third-party sources account for the rest.

Is this problem specific to engineering or DevOps brands?

No, but technical brands are particularly exposed. Engineering content tends to be deep, narrative-driven, and context-dependent — qualities that work well for human readers but reduce extractability for RAG systems. The structural mismatch between how engineers write and how AI retrieves is more pronounced in technical verticals than in, say, e-commerce or consumer brands.