A senior DevOps engineer needs a new deployment platform. She doesn’t open Google.

She opens ChatGPT and types: “Best enterprise CI/CD tool for a 500-microservice setup on multi-cloud with strict compliance requirements.”

In under 10 seconds, she gets a ranked list, a comparison table, and a recommendation tailored to her stack. She picks the top two, sends them to her team lead, and the evaluation begins.

If Harness isn’t in that list, it doesn’t get evaluated. It’s that simple.

This isn’t a hypothetical. It’s how engineering teams are making tool decisions in 2026 — and the brands that don’t show up in AI answers are quietly losing the decision before they ever knew there was one.

Engineers Have Quietly Stopped Googling for Tools

The numbers tell the story clearly. ChatGPT now handles 17.6% of global search queries, making it the biggest threat to traditional search engines in two decades. On desktop, where professionals do their deep research, that share jumps to 62%.

In software development specifically, about 63% of engineers already use ChatGPT as a primary platform for debugging, code generation, and tool research. For high-complexity queries — the kind that involve architecture decisions and vendor evaluation — AI isn’t a shortcut. It’s the first stop.

That’s a structural shift, not a trend.

Google still dominates navigational searches. But when an engineer needs to understand tradeoffs between enterprise deployment platforms? They’re asking an AI. The average ChatGPT session runs over 13 minutes — nearly three times longer than a Google session. These aren’t quick lookups. They’re consultations.

What Engineers Actually Ask ChatGPT About DevOps Tools

The prompts engineers use aren’t simple keywords. They’re scenario-driven questions with real context:

- “Best CI/CD tools that support GitOps and manual approval gates”

- “Compare Harness vs GitHub Actions for large-scale monorepo builds”

- “Which platforms reduce build time without sacrificing rollback reliability?”

AI responds with structured outputs: a ranked list of tools, a feature comparison table, and scenario-specific recommendations. The whole thing takes seconds.

Here’s what matters for brands: AI’s ranking logic doesn’t simply mirror your Google SEO position. Google AI Overviews do lean on search rankings (about 76.1% of their citations come from top-10 results), but standalone LLMs like ChatGPT weight things differently. They surface tools that appear consistently across independent, authoritative sources: GitHub Discussions, Stack Overflow, G2 reviews, technical media, and Reddit.

Reddit, for instance, is currently Perplexity’s single largest citation source.

If your product is mentioned across multiple credible communities with consistent associations — say, “Harness” linked to “automated rollback” or “OPA policy enforcement” — AI learns that connection and reproduces it. If those associations don’t exist in the right places, neither does your brand in the answer.

Where Harness Shows Up — and Where It Doesn’t

Harness has a real visibility problem. Not a universal one — a highly specific one.

On complex enterprise prompts — multi-cloud compliance, automated rollback, deployment governance — Harness performs well. AI systems consistently position it as a full-lifecycle platform for teams moving away from fragmented toolchains.

On lightweight queries — “easiest CI/CD to set up,” “open source alternatives to Jenkins,” “best tool for solo developers” — Harness disappears. GitHub Actions and GitLab CI absorb those searches entirely.

| Dimension | Harness | GitHub Actions | CircleCI | Argo CD |
|---|---|---|---|---|
| AI Recommendation Frequency | Medium-High (enterprise queries) | Very High (all scales) | Medium | High (K8s-specific) |
| Core AI Recommendation Reason | Test Intelligence, rollback, OPA | Ease of use, GitHub integration | Reliability, reusable Orbs | Pure GitOps, declarative config |
| AI-Identified Weakness | High cost/complexity for small teams | Limited native CD for enterprise | Pricing at scale | K8s-only, no CI |
| Primary Citation Sources | Official docs, enterprise case studies | Community, Reddit, Marketplace | Dev blogs, tutorials | CNCF reports, open-source community |

The problem isn’t the product. The problem is the footprint.

Harness’s technical strengths — Continuous Verification, Test Intelligence, AIDA — are genuinely differentiated. But if those capabilities aren’t documented in places AI can find and trust, they don’t exist from the AI’s perspective.

Why AI Skips Certain Tools (It’s Not About Product Quality)

This is the part most marketing teams get wrong.

AI doesn’t skip Harness because engineers don’t like it. It skips Harness because its content doesn’t match how AI engines extract information.

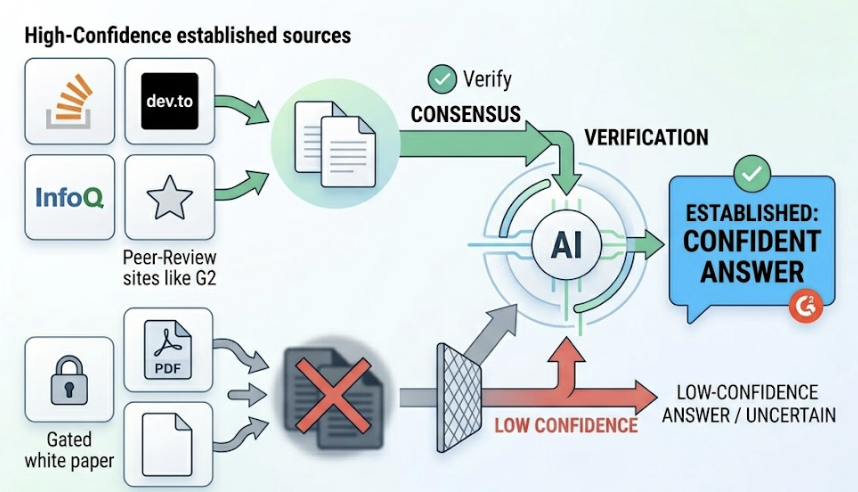

LLMs operate on a principle of multi-source verification. When an AI is building its answer about CI/CD tools, it’s not reading one blog post. It’s scanning for consensus across independent sources. A tool mentioned consistently on Stack Overflow, dev.to, InfoQ, and peer-review sites like G2 gets treated as established. A tool mostly documented in gated white papers or unstructured PDFs? It gets treated as low-confidence.

Documents that use a clear H1–H3 heading hierarchy, FAQ schema, and structured Q&A blocks are 40%+ more likely to be extracted as usable fact units than traditional long-form content. And content that includes specific, verifiable claims, like “RisingWave reduced build times by 50% after adopting Harness,” gets cited far more often than vague benefit statements.
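“FAQ schema” usually means schema.org `FAQPage` structured data, embedded in a page as JSON-LD. Below is a minimal sketch, assuming you generate that markup from question/answer pairs in Python; the helper name `faq_jsonld` is hypothetical, and the sample pair is adapted from this article’s own FAQ. The markup makes a page’s Q&A structure explicit to crawlers; it does not by itself guarantee that any AI engine will cite the page.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build a schema.org FAQPage JSON-LD string from (question, answer) pairs.

    Hypothetical helper for illustration; the @type and property names
    (FAQPage, mainEntity, Question, acceptedAnswer, Answer) are schema.org's.
    """
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    # ensure_ascii=False keeps curly quotes and other non-ASCII text readable.
    return json.dumps(data, indent=2, ensure_ascii=False)


if __name__ == "__main__":
    pairs = [
        (
            "How is AI tool visibility different from Google SEO rankings?",
            "SEO gets your page into a list of links. "
            "GEO gets your brand into an AI-generated answer.",
        ),
    ]
    # Wrap the JSON in a script tag so it can be pasted into a page's HTML.
    print('<script type="application/ld+json">')
    print(faq_jsonld(pairs))
    print("</script>")
```

Generating the markup from the same source as the visible FAQ text keeps the two from drifting apart, which matters if the goal is consistent, verifiable fact units.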

It’s not about having a great product. It’s about being cited by the right sources, in the right formats.

Harness’s content assets have historically leaned toward enterprise documentation and gated case studies. Those assets serve sales cycles well. They don’t feed AI engines.

4 Signals That Tell You If Harness Is Winning AI Visibility

Traditional SEO rankings won’t tell you how visible Harness is in AI answers. You need different metrics entirely.

Visibility Rate measures what percentage of relevant prompts include Harness in the AI’s answer. If you test 100 CI/CD-related prompts and Harness appears in 35 responses, its visibility rate is 35%. This tells you how strongly AI associates your brand with your category.

Position tracks where Harness appears in the ranked list when AI does mention it. First-position citations carry substantially higher trust and click potential, the same way top-3 Google results dominate organic traffic. Being mentioned fifth in a ChatGPT list is very different from being mentioned first.

Source Coverage measures how many independent domains are driving AI’s mentions of Harness. If AI only pulls from harness.io, your credibility footprint is narrow. If it’s also pulling from Stack Overflow, G2, and engineering blogs, your brand has achieved multi-source verification, the signal AI trusts most.

Sentiment Score captures the tone AI uses when describing Harness. Even a first-position mention can work against you if the answer frames Harness mainly as costly or complex for small teams, because that framing becomes the evaluating engineer’s first impression.

| Signal | What It Measures | Why It Matters for Harness |
|---|---|---|
| Visibility Rate | Frequency of appearance across prompt set | Shows AI category association strength |
| Position | Rank order in AI recommendations | Indicates how AI “grades” Harness vs. competitors |
| Source Coverage | Number of independent citation domains | Measures credibility depth across the web |
| Sentiment Score | Tone AI uses when describing Harness | Shapes first impressions for evaluating engineers |

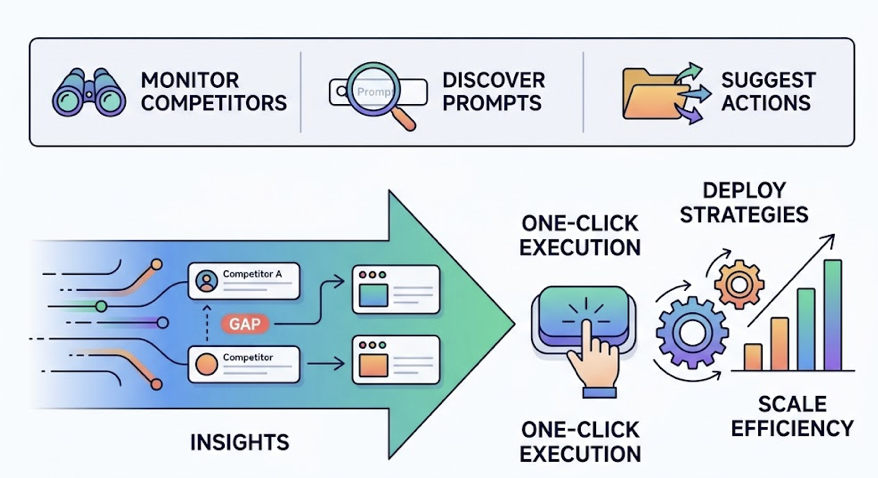
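Visibility Rate, Position, and Source Coverage reduce to simple arithmetic over an audit log of AI answers. The sketch below assumes each test prompt’s answer has already been captured as an ordered list of brand names plus the domains the answer cites. The `PromptResult` record and the function names are hypothetical, not Topify’s or any vendor’s API, and crediting every cited domain to every brand in that answer is a simplifying assumption. Sentiment Score is left out because it needs a tone classifier rather than counting.

```python
from dataclasses import dataclass, field
from statistics import mean
from typing import Optional


@dataclass
class PromptResult:
    """One AI answer to one test prompt (hypothetical record format)."""
    prompt: str
    ranked_brands: list[str]  # brands in the order the answer lists them
    cited_domains: list[str] = field(default_factory=list)  # domains the answer cites


def visibility_rate(results: list[PromptResult], brand: str) -> float:
    """Share of prompts whose answer mentions the brand at all."""
    if not results:
        return 0.0
    hits = sum(1 for r in results if brand in r.ranked_brands)
    return hits / len(results)


def average_position(results: list[PromptResult], brand: str) -> Optional[float]:
    """Mean 1-based rank of the brand, counting only answers that mention it."""
    positions = [
        r.ranked_brands.index(brand) + 1
        for r in results
        if brand in r.ranked_brands
    ]
    return mean(positions) if positions else None


def source_coverage(results: list[PromptResult], brand: str) -> int:
    """Distinct domains cited in answers that mention the brand.

    Assumption: every domain cited in an answer is credited to every
    brand that answer mentions.
    """
    domains = {
        d.lower()
        for r in results
        if brand in r.ranked_brands
        for d in r.cited_domains
    }
    return len(domains)


if __name__ == "__main__":
    # Toy data standing in for a real prompt audit.
    results = [
        PromptResult("enterprise CI/CD with OPA policies",
                     ["Harness", "GitLab CI"], ["harness.io", "stackoverflow.com"]),
        PromptResult("easiest CI/CD to set up",
                     ["GitHub Actions", "GitLab CI"], ["reddit.com"]),
        PromptResult("automated rollback for multi-cloud",
                     ["Argo CD", "Harness"], ["harness.io", "g2.com"]),
        PromptResult("open source alternatives to Jenkins",
                     ["GitLab CI", "Argo CD"], ["github.com"]),
    ]
    print(visibility_rate(results, "Harness"))   # 2 of 4 prompts -> 0.5
    print(average_position(results, "Harness"))  # ranks 1 and 2 -> 1.5
    print(source_coverage(results, "Harness"))   # harness.io, stackoverflow.com, g2.com -> 3
```

Running the same prompt set on a schedule and comparing these three numbers over time is what turns them into a trend rather than a snapshot.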

Topify tracks all four dimensions across ChatGPT, Perplexity, and Gemini simultaneously — so Harness’s marketing team can see exactly where the gaps are, and which competitor is filling them.

What Harness’s Marketing Team Can Actually Do About It

The strategic shift is from keyword optimization to citation engineering.

The goal isn’t to rank on page one of Google. It’s to become the source that AI quotes when engineers ask about enterprise-grade deployment platforms.

Build citation-bait content. This means original research, benchmark reports, and technically specific comparisons: the kind of content that third-party media and communities want to cite. A well-distributed report like a “2026 Enterprise Deployment Frequency Benchmark” gets picked up, linked to, and discussed. Every one of those citations becomes a data point AI trusts.

Shift distribution toward AI-indexed communities. Reddit, Stack Overflow, dev.to, GitHub Discussions — these aren’t just community channels. They’re the sources AI engines pull from most heavily. Harness’s technical content needs to live there, not just behind a login wall or inside a PDF.

Close the prompt gaps. There are high-intent queries where engineers are actively researching — and Harness isn’t appearing in the answers. Those are recoverable. Identifying them is step one.
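Finding those gaps can start as a simple comparison over captured answers: keep the prompts where Harness is absent but a tracked competitor appears. A minimal sketch, assuming each prompt’s AI answer has already been reduced to the list of brands it mentions; `find_prompt_gaps` and the sample data (built from the lightweight queries described earlier) are hypothetical.

```python
def find_prompt_gaps(
    answers: dict[str, list[str]], brand: str, competitors: set[str]
) -> dict[str, list[str]]:
    """Return {prompt: competitors mentioned} for prompts where `brand` is
    absent but at least one tracked competitor appears.

    Competitors are kept in the order the answer mentioned them.
    """
    gaps: dict[str, list[str]] = {}
    for prompt, mentioned in answers.items():
        if brand in mentioned:
            continue  # brand is already visible for this prompt
        rivals = [b for b in mentioned if b in competitors]
        if rivals:
            gaps[prompt] = rivals
    return gaps


if __name__ == "__main__":
    answers = {
        "best CI/CD for multi-cloud compliance": ["Harness", "GitLab CI"],
        "easiest CI/CD to set up": ["GitHub Actions", "CircleCI"],
        "open source alternatives to Jenkins": ["GitLab CI", "Argo CD"],
        "best tool for solo developers": ["GitHub Actions"],
    }
    competitors = {"GitHub Actions", "GitLab CI", "CircleCI", "Argo CD"}
    for prompt, rivals in find_prompt_gaps(answers, "Harness", competitors).items():
        print(f"{prompt!r}: cited instead -> {', '.join(rivals)}")
```

The output is a worklist: each gap names the prompt to target and the competitors whose citation sources are worth studying first.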

That’s where Topify’s Competitor Monitoring and Prompt Discovery become practical. The platform continuously surfaces new prompts your target engineers are using, flags where competitors are being cited instead of you, and suggests the specific content moves needed to close each gap. Its One-Click Execution feature turns those insights into deployable strategies without manual coordination across teams.

The cycle is: track where Harness is invisible → identify which sources are being cited instead → create content that earns those citations → measure the shift.

Straightforward in theory. Hard to do without the right infrastructure.

Conclusion

The tool selection process has already changed. Engineering teams are consulting AI before they consult a sales rep, a review site, or a colleague. And AI’s recommendations are shaped by a content ecosystem that most B2B marketing teams weren’t built to optimize for.

For Harness, the product quality isn’t the gap. The citation infrastructure is.

Winning in this environment means becoming the brand that high-authority sources naturally reference when they discuss enterprise deployment, automated rollback, and cloud-native CI/CD. It means documenting real outcomes in structured, crawlable formats. And it means tracking AI visibility the same way you’d track organic search — with specific metrics, competitive benchmarks, and a feedback loop that translates data into action.

The teams that build that infrastructure now will own the recommendation layer for the next decade. The ones that don’t will keep losing deals before the conversation even starts.

Start by auditing where Harness stands today — which prompts trigger a recommendation, which ones don’t, and who’s filling the gap. Topify can run that audit across every major AI platform in one place.

FAQ

Does Harness currently appear in ChatGPT’s tool recommendations?

Yes — but selectively. Harness performs well in complex, enterprise-specific queries involving multi-cloud compliance, automated rollback, and deployment governance. In lighter-weight queries around ease of use or open-source options, it typically gets outranked by GitHub Actions or GitLab CI.

How is AI tool visibility different from Google SEO rankings?

SEO gets your page into a list of links. GEO (generative engine optimization) gets your brand into an AI-generated answer. The mechanisms are different: SEO depends on backlinks and keyword relevance; GEO depends on structured content, factual density, and multi-source citation across communities AI actually trusts. SEO is about being seen. GEO is about being cited as the authoritative answer.

How long does it take to improve AI search visibility for a DevOps tool?

Based on documented cases, targeted GEO work (adjusting document structure, building third-party citation coverage) typically produces measurable improvements in AI citation frequency and position within 4 to 8 weeks.