Most CI/CD platforms don’t show up in AI answers. Not because they’re bad products, but because AI doesn’t know how to talk about them.

Harness Engineering is different. Ask ChatGPT about reducing Kubernetes deployment failures, and Harness comes up. Ask Perplexity about progressive delivery tools, and it’s usually in the top two. That kind of consistent presence isn’t luck. It’s the result of a specific set of decisions that most SaaS dev tools haven’t made yet.

Here’s what’s actually driving it.

The Generative Filter Most Dev Tools Don’t Know Exists

When an engineer asks an AI platform for “the best CI/CD tools,” the result isn’t a list of every product in the category. It’s a synthesized answer drawn from a hierarchy of trusted sources, filtered through layers of algorithmic selection.

The research calls this the “Generative Filter.” And most dev tools never pass it.

There are three layers of selection working against visibility. First, training data: if a tool wasn’t prominently discussed in the web crawls that trained the underlying model, it has no foundational “memory” in the system. Second, real-time retrieval: engines like Perplexity run live lookups to supplement training data. Websites blocked by robots.txt or lacking machine-readable structure are invisible during this phase. Third, authority signals: AI models cross-reference claims with third-party sources. A tool with no presence on G2, Stack Overflow, or GitHub has no way for the model to verify it’s trustworthy.

That last layer is where most products fail. Not because their claims are wrong, but because there’s no external consensus to confirm them.

How Harness Engineering Shows Up in AI Answers, Measured

Harness doesn’t perform uniformly across all prompt types. Its visibility pattern reveals something more strategic than broad name recognition.

On category-led prompts like “best CI/CD platforms for enterprises,” Harness typically lands in the third or fourth position, trailing GitHub Actions and GitLab. That’s expected. Those platforms have a decade of training-data advantage.

But on problem-led and solution-led prompts, Harness punches well above its weight. For “how do I reduce deployment failures in Kubernetes,” it frequently surfaces as a top-two recommendation, cited specifically for its Continuous Verification feature. For “what tool offers automated rollbacks and canary releases,” it often leads.

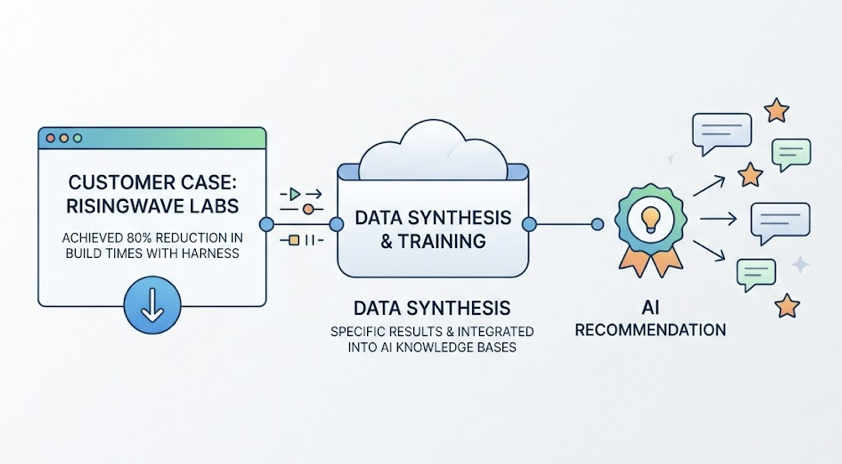

That specificity matters. AI models aren’t just listing Harness by name. They’re explaining why they’re recommending it, often citing specific customer outcomes. RisingWave Labs’ reported 80% reduction in build times using Harness Test Intelligence is the kind of concrete, verifiable data point that gets embedded in training data and referenced repeatedly.

Metric-led prompts also perform well. When engineers ask which CI/CD tool helps reduce cloud costs, the Harness Cloud Cost Management module gets cited at a notably high rate. AI systems reward that kind of modular specificity.

Harness vs. GitLab and Jenkins in AI Answers

Jenkins stays present in AI answers because of its historical footprint, but the sentiment that follows it is usually “high-maintenance” or “legacy.” GitLab gets recommended as an all-in-one path for teams that want less complexity.

Harness occupies a different niche. AI models consistently position it for organizations that have outgrown Jenkins but need more specialized automation and governance than GitLab provides. The “AI-native” framing, built around the Harness AI DevOps Agent and AIDA, reinforces a “modern enterprise” positioning that competitors don’t hold as clearly.

That’s a niche AI models have learned to recognize. Which means it’s a niche that can be studied and replicated.

Three Reasons AI Trusts Harness Engineering

Harness’s consistent AI citations trace back to three specific content and authority patterns. None of them are accidental.

Machine-readable documentation. Harness documentation is structured around distinct modules with clear value propositions and explicit technical schemas. The hierarchy follows H1 → H2 → H3, which has been shown to improve AI citation rates. Sections run roughly 120 to 180 words between headings, a length AI models find optimal for text extraction. Specific benchmarks are embedded throughout, giving the model citation-worthy snippets rather than marketing prose.

High-authority third-party validation. AI models have a “verification problem.” They solve it by cross-referencing brand claims with signals from sources they trust. Harness maintains a strong presence across what researchers call the “Source Stack.” G2 and Capterra provide verified sentiment and category rankings. Stack Overflow establishes real-world utility. GitHub validates relevance to the developer toolchain. Gartner MQ reinforces enterprise positioning for procurement queries.

The correlation is specific enough to quantify: a 10% increase in verified G2 reviews correlates with roughly a 2% increase in AI citations. G2’s standardized schema makes it one of the primary “ground truth” sources LLMs use to assess software quality.

Linguistic alignment with buyer intent. Harness has aligned its product language with how modern buyers phrase their prompts. “AI-Native DevOps Platform,” “Developer Productivity,” “SDLC Automation” aren’t just positioning words. They’re semantic matches to the vectors AI models weight when ranking relevance to high-intent queries. The Harness AI DevOps Agent reinforces this further: users interact with it using conversational language, which creates a feedback loop that associates conversational DevOps prompts with the Harness brand over time.

All three pillars work together. Documentation alone doesn’t create AI presence. Third-party signals alone don’t create it either. The combination is what builds what researchers call “Algorithmic Trust.”

The Visibility Gap Most SaaS Dev Tools Still Have

Harness built this presence deliberately. Most competitors haven’t started.

There are three blind spots that keep technically capable tools invisible to AI systems.

The first is not knowing your recommendation status. Most companies track keyword rankings on Google. They don’t track which prompts surface their product in ChatGPT, or what context accompanies those mentions.

The second is no prominence tracking. Traditional SEO measures whether you’re on page one. In AI-generated answers, being the fifth tool in a five-tool list is a different outcome than being the primary recommendation. That distinction is currently invisible to most analytics setups.

The third is source influence anonymity. AI sentiment about a product is shaped by the sources it’s been trained on. A negative Reddit thread from three years ago might be the primary driver of how ChatGPT characterizes your product’s reliability. Without dedicated source analysis, there’s no way to know.

That’s the gap.

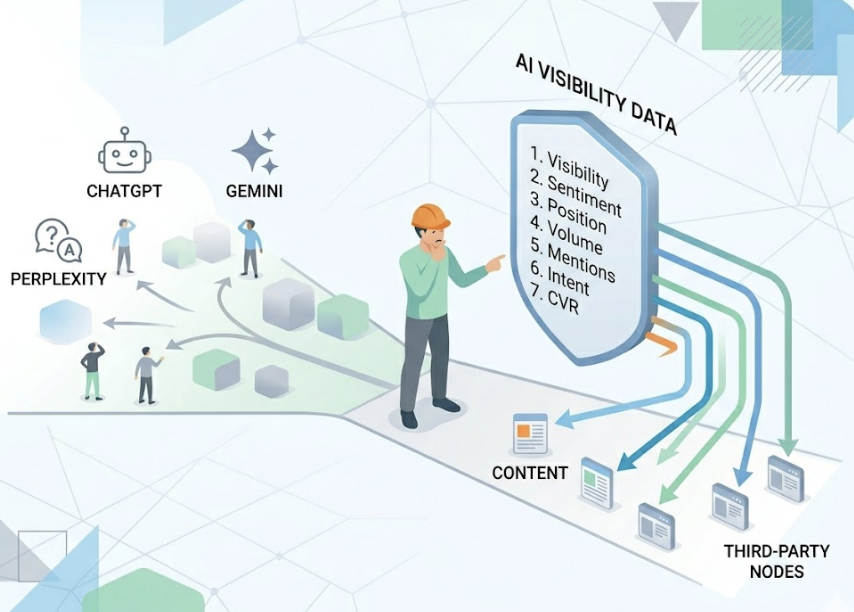

Tools like Topify exist to close it. Topify’s platform tracks AI visibility across ChatGPT, Gemini, Perplexity, and other major engines, monitoring seven key metrics: visibility, sentiment, position, volume, mentions, intent, and CVR. It also traces AI citations back to their source, which is how teams identify which content is driving recommendations and which third-party nodes need more attention.

How to Build Your Own AI Recommendation Presence

The Harness playbook isn’t proprietary. It’s replicable with the right measurement foundation.

Step 1: Find Out Where You Actually Stand in AI Answers

Start by defining 20 to 30 high-intent prompts that represent how your buyers actually research. Include category-led prompts (“best DevOps platforms for mid-market teams”), problem-led prompts (“how to reduce CI pipeline failures”), and branded comparison prompts (“Harness vs. competitor X”).

Then track your share of voice across those prompts on multiple AI platforms simultaneously. If a competitor owns the majority of citations for your primary use case, you’ve identified the exact gap to close.

Topify’s Visibility Tracking and Competitor Monitoring do this systematically, running prompt sets across engines and returning structured data on mention frequency, position, and sentiment, without manual sampling.

Step 2: Reverse-Engineer What Sources AI Is Citing About Your Category

Once you know where you stand, the next question is why.

Identify which third-party platforms are being cited most often for your category. Determine whether your brand appears in those sources at all. If a Stack Overflow thread or a G2 category page is the primary driver of AI answers about your product segment, that’s where your authority-building effort should go first.

Topify’s Source Analysis maps AI citations back to their origin domains, surfacing exactly which content is shaping your current AI presence. Most teams find they have significant coverage gaps on the platforms that matter most to LLM reasoning.

Step 3: Structure Content for AI Extraction, Not Just Human Reading

This is where execution diverges from intent. Most content teams write for engagement metrics. AI-optimized content is written for extractability.

That means question-based H2 and H3 headings, short lead paragraphs in the 40 to 60-word range, Markdown tables for data comparisons, and specific metrics in every major section. It also means implementing the llms.txt standard, a curated file that helps AI agents navigate your most authoritative content without crawling the entire site.

Perplexity is the most tractable starting point for this work. Its citation system is transparent, and referral traffic from perplexity.ai is measurable directly in GA4. Success there builds the cross-platform authority signals that eventually shift training-data-heavy models like ChatGPT.

Topify’s content generation and CVR tracking close the loop, connecting content optimizations to measurable changes in AI recommendation rates over time.

What the Harness Case Tells Us About AI Recommendation Logic

Three conclusions hold across every data point in this analysis.

Structure matters more than volume. A well-documented product with clear module hierarchies and machine-readable schemas will outperform a competitor with ten times the blog output but no structural clarity. AI models optimize for parse-ability, not prose.

Third-party signals are now table stakes. The era of brand-owned content as the primary authority signal is over. AI models treat brand content as inherently biased. External validation from platforms like G2, Stack Overflow, and GitHub acts as the verification layer that determines whether a model trusts what a brand says about itself.

The “Dark Funnel” is now conversational. B2B buyers are forming shortlists inside AI prompts before they ever land on a vendor website. If a brand isn’t cited in the initial discovery prompt, it often doesn’t enter the consideration set at all. Ignoring “Share of LLM” means opting out of the first step of the modern buyer journey.

Conclusion

Harness Engineering isn’t the biggest name in DevOps. But it’s consistently one of the most recommended by AI. That gap between market position and AI presence is the most important insight from this case study.

The mechanics behind it aren’t mysterious. Machine-readable documentation, high-trust external signals, and linguistically aligned content, built systematically over time, compound into Algorithmic Trust. And Algorithmic Trust is what puts a brand in the answer instead of a competitor.

If you don’t know where your product stands in AI answers today, that’s the place to start. Topify tracks AI visibility across all major platforms, maps the sources driving your current presence, and surfaces the exact gaps between where you are and where Harness is.

The buyer’s shortlist is being built in AI right now. The question is whether your brand is on it.

FAQ

Is Harness Engineering recommended by ChatGPT?

Yes, particularly for enterprise DevOps queries. It ranks behind GitHub Actions in broad “best tools” lists, but leads in problem-led prompts around Kubernetes automation, continuous verification, and cloud cost management.

How do SaaS dev tools improve AI search visibility?

Through Generative Engine Optimization. This includes structuring technical documentation with clear H1→H2→H3 hierarchies, maintaining a presence on high-authority review platforms like G2, and implementing AI crawler standards like llms.txt.

What metrics matter most for AI recommendation tracking?

AI Visibility Score (mention frequency and position across platforms), Share of LLM (brand citations vs. competitors in generated answers), and Citation Rate (how often the brand appears as a footnoted or primary recommendation).

Can smaller dev tool companies compete with Harness in AI answers?

Yes. Smaller tools can win on long-tail problem-led prompts where their specialization is sharper than a general platform. High-quality structured content combined with niche presence on Stack Overflow and Reddit can bypass the authority bias that favors incumbents.