Most sites score between 40 and 60. Here’s what separates average from AI-ready.

You ran a GEO score check. You got a number. Now what?

Based on analysis of over 770 audits, the average GEO score sits at 57.4 out of 100. That means if you scored somewhere in the 50s, you’re in the majority, not an outlier. But majority doesn’t mean safe. In AI search, “average” often means you’re getting mentioned but not recommended.

Here’s the baseline you need: scores of 70 and above cross into genuinely good territory. Scores of 85 and above are where AI starts treating your content as a primary source. Below 70, you’re visible but unstable: you can show up one day and disappear the next.

That number needs context before it means anything.

The Score on Your Screen Doesn’t Come With a Legend

Most marketing teams encounter GEO scores the same way: you run a check through a tool like the GEO Score Checker, get a number, and immediately want to know if it’s good or bad.

The problem is that “good” is relative to your industry, your competitor set, and what AI platforms are actually rewarding right now.

A 62 in the SaaS space might put you in the bottom 40% of your category. That same 62 in a local home services market could make you the most AI-visible provider in your region. The number is identical. The competitive reality is completely different.

This is why benchmarks exist. Not to judge the score, but to place it.

The GEO Score Scale, Explained in Plain Terms

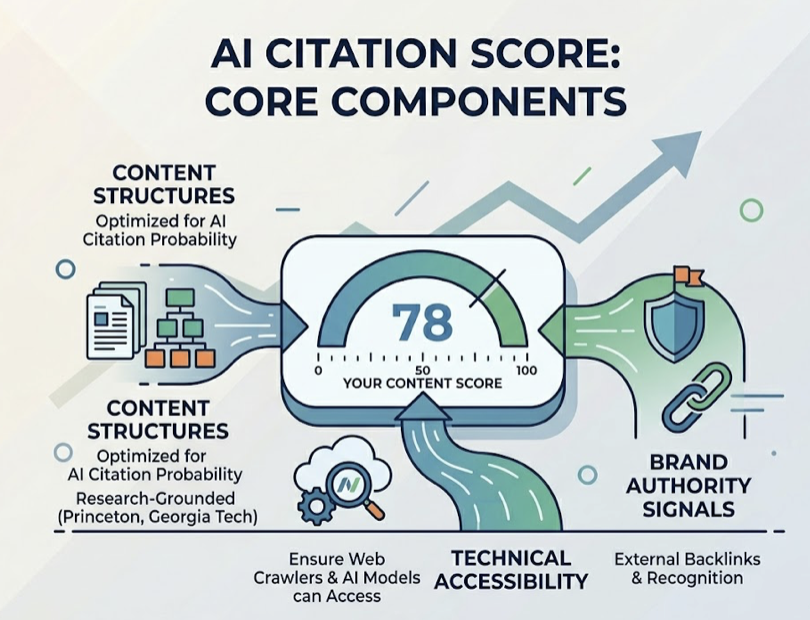

The 0-100 scale is grounded in research from Princeton and Georgia Tech, which identified specific content structures that significantly increase the probability of AI citation. Think of the score as a weighted measurement of how many of those structures your content actually has, combined with technical accessibility and brand authority signals.

Here’s how the tiers break down in practice:

| Score Range | Label | AI Citation Behavior | What It Typically Means |
|---|---|---|---|
| 85-100 | Leader | Primary recommendation | Original data, expert quotes, deep schema, entity authority |
| 75-84 | Ready | Stable and reliable | Clear structure, specific schema, topical authority clusters |
| 61-74 | Competition Zone | In the pool, not preferred | Question-based headers, some schema, inconsistent authority |
| 40-60 | At Risk | Mentioned, not recommended | SEO-optimized but not AI-optimized, missing answer capsules |
| 0-39 | Exposed | Rarely cited | Technical blockers, unstructured text, crawler access issues |

The jump from “At Risk” to “Competition Zone” is largely structural. The jump from “Competition Zone” to “Leader” is about authority, specifically whether the AI sees external corroboration of your claims.
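
If you track scores for many pages, it can help to label them automatically. Here is a minimal sketch that maps a score to the tier labels in the table above. The function name `geo_tier` is made up for illustration, and sending fractional scores such as 60.5 to the lower tier is an assumption, since the table only lists whole numbers.

```python
from bisect import bisect_right

# Lower bound of each tier, copied from the tier table.
TIER_FLOORS = [0, 40, 61, 75, 85]
TIER_LABELS = ["Exposed", "At Risk", "Competition Zone", "Ready", "Leader"]


def geo_tier(score: float) -> str:
    """Return the tier label for a 0-100 GEO score."""
    if not 0 <= score <= 100:
        raise ValueError(f"score must be between 0 and 100, got {score}")
    # bisect_right counts how many tier floors are <= score.
    return TIER_LABELS[bisect_right(TIER_FLOORS, score) - 1]


print(geo_tier(57.4))  # the average score cited in this article
print(geo_tier(62))
```

With these boundaries, the 57.4 average lands in “At Risk” and a 62 lands in “Competition Zone”.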

Most brands underestimate how different those two transitions feel in execution.

Industry Benchmarks: Your Score in Context

A 60 isn’t universally average. Depending on your vertical, it could be a strong position or a signal that you’re falling behind fast.

Here’s a breakdown of estimated GEO score ranges by industry, based on patterns across the available audit data:

| Industry | Avg. Score Range | Good (70th pct.) | Leader (90th pct.) |
|---|---|---|---|
| Finance & Banking | 65-72 | 82+ | 92+ |
| Healthcare / Medical | 68-75 | 85+ | 95+ |
| B2B SaaS | 62-68 | 78+ | 88+ |
| Technology / IT Services | 55-63 | 75+ | 85+ |
| E-commerce / Retail | 50-58 | 70+ | 82+ |
| Local Services (HVAC, etc.) | 35-45 | 60+ | 75+ |

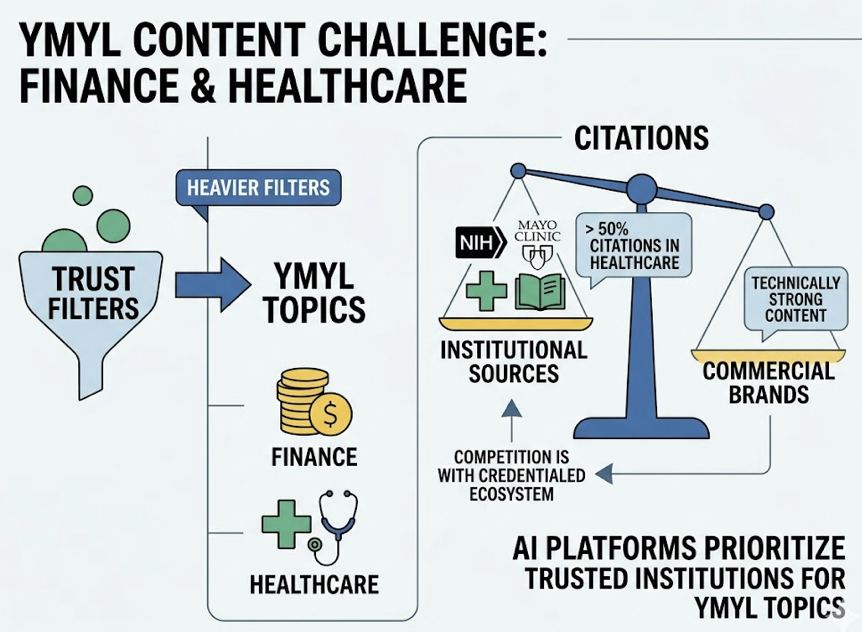

Finance and healthcare sit at the top of the difficulty curve. AI platforms apply heavier trust filters on YMYL (Your Money or Your Life) content, which means even technically strong content from commercial sites can be outranked by institutional sources. In healthcare specifically, the NIH and Mayo Clinic account for over 50% of citations in AI responses, regardless of how well other sites score. For brands in those verticals, the competition isn’t just other companies. It’s the entire credentialed institutional ecosystem.

On the other end, local service businesses are playing a different game entirely. Because GEO adoption is still early in those markets, a business that implements even basic answer-engine optimization can leapfrog competitors with far more resources. In local services, a 60 is often a leadership position.

B2B SaaS sits in the high-intensity middle ground. With close to 80% of companies expected to deploy AI-enabled applications by 2026, AI-readiness is increasingly table stakes. Competitors are implementing advanced tactics aggressively. A score of 60 in this vertical can easily put you in the bottom half of your category.
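
To see how the same number reads in different verticals, you can encode the benchmark table and look a score up against it. This is only a sketch: the thresholds are the estimated ranges from the table above, while the `place_score` function and its position labels (“below average”, “above average”, and so on) are hypothetical names chosen for illustration.

```python
from typing import NamedTuple


class Benchmark(NamedTuple):
    avg_low: int   # bottom of the average score range
    avg_high: int  # top of the average score range
    good: int      # "Good" threshold (70th percentile)
    leader: int    # "Leader" threshold (90th percentile)


# Estimated values copied from the industry benchmark table.
BENCHMARKS = {
    "Finance & Banking": Benchmark(65, 72, 82, 92),
    "Healthcare / Medical": Benchmark(68, 75, 85, 95),
    "B2B SaaS": Benchmark(62, 68, 78, 88),
    "Technology / IT Services": Benchmark(55, 63, 75, 85),
    "E-commerce / Retail": Benchmark(50, 58, 70, 82),
    "Local Services (HVAC, etc.)": Benchmark(35, 45, 60, 75),
}


def place_score(score: float, industry: str) -> str:
    """Describe where a score sits relative to one industry's benchmarks."""
    b = BENCHMARKS[industry]
    if score >= b.leader:
        return "leader"
    if score >= b.good:
        return "good"
    if score > b.avg_high:
        return "above average"
    if score >= b.avg_low:
        return "average"
    return "below average"


for industry in ("B2B SaaS", "Local Services (HVAC, etc.)"):
    print(industry, "->", place_score(62, industry))
```

Under these thresholds, a 62 is merely average in B2B SaaS but already clears the “Good” bar in local services.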

What’s Actually Holding Your Score Below 70

This is the part most audits miss.

In a study of over 1,500 company reports, the correlation between AI visibility and brand authority was 0.386. The correlation between visibility and a technical GEO score alone was only 0.080.

That’s nearly a fivefold gap. Brand authority tracks AI visibility far more closely than technical readiness does.

A score stuck in the high 50s or low 60s usually isn’t a content volume problem. It’s a structure-and-signal problem.

The most common technical deductions:

Missing FAQ schema. This is how AI identifies question-and-answer relationships. Without it, the model has to infer the connection, and inference means inconsistency.

Weak information gain. Recycling the same claims and statistics already found in the top Google results. AI engines, including Google’s AI Overviews, explicitly prioritize content that adds unique, proprietary data. Research shows unique content can boost AI visibility by up to 41%.

Vague header structure. A header like “Our Process” tells an AI almost nothing. A header like “How do we implement managed IT services for mid-market teams?” gives the model a clear question it can match against user prompts and extract an answer to.
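
For the first item, FAQ schema is usually expressed as schema.org `FAQPage` markup in JSON-LD. Here is a minimal sketch that builds that markup from question-and-answer pairs. The helper name `faq_jsonld` is hypothetical, and the answer text is a placeholder you would replace with your page’s real copy.

```python
import json


def faq_jsonld(pairs):
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


markup = faq_jsonld([
    (
        "How do we implement managed IT services for mid-market teams?",
        "Placeholder: a direct answer of roughly 50 words goes here.",
    ),
])
print(markup)
```

The resulting JSON is typically embedded in the page inside a `<script type="application/ld+json">` tag, and the questions should mirror the visible headers on that page.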

Beyond structure, there’s the authority gap. If your site claims to offer something but no third-party sources, forums, or industry publications echo that claim, the AI registers a lack of consensus and hedges. That hedging shows up as unstable citation.

A score of 58 usually isn’t a content problem. It’s a structure-and-signal problem.

A High Score Still Doesn’t Tell You If You’re Winning

This is the part that gets missed in most score-focused conversations.

GEO Score measures whether your content is capable of being recommended. It doesn’t measure whether you’re actually getting recommended more than your competitors.

That distinction matters more than most teams realize.

AI search is closer to zero-sum than traditional search. Most AI platforms mention between 2 and 7 brands per session. A brand with a GEO score of 72 can easily be losing ground to a competitor scoring 68, if that competitor has stronger Share of Answer in the prompts that matter.

Topify tracks exactly this gap. While the GEO Score Checker gives you a snapshot of content readiness, Topify’s Competitor Monitoring shows you citation frequency, sentiment, and position relative to specific competitors across ChatGPT, Gemini, and Perplexity.

In practice, that means you might discover you’re outscoring a rival on every technical dimension, but they hold “Category Authority” because the AI consistently associates them with a label like “best for enterprise teams” or “most reliable option.” A score doesn’t capture that. Competitive position tracking does.
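
To make the idea concrete, here is a rough sketch of a Share of Answer calculation. This article doesn’t spell out a formula, so the definition used here (the fraction of sampled AI answers that mention a brand) is an assumption, not Topify’s actual method. The brand names and sample answers are invented for illustration.

```python
from collections import Counter


def share_of_answer(responses):
    """Return, per brand, the fraction of answers that mention it.

    `responses` holds one list of mentioned brand names per AI answer.
    """
    if not responses:
        return {}
    # set() ensures a brand is counted once per answer, even if repeated.
    counts = Counter(brand for brands in responses for brand in set(brands))
    return {brand: n / len(responses) for brand, n in counts.items()}


# Hypothetical mentions collected from four answers to the same prompt set.
sample = [
    ["brand_a", "brand_b"],
    ["brand_b"],
    ["brand_b", "brand_c"],
    ["brand_a"],
]
print(share_of_answer(sample))
```

In this made-up sample, `brand_b` appears in three of four answers, so it holds the larger share no matter how the brands’ GEO scores compare.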

The GEO score tells you if you’re ready. Topify tells you if you’re winning.

How to Read Your Score and Actually Do Something With It

Different score ranges call for different priorities.

If you’re in the 40-60 range: The work is structural. Add FAQ schema to core service pages. Rewrite introductions to include a direct, 50-word answer to the primary user question. Fix any technical blockers that prevent AI crawlers from accessing your content. You’re not losing because your ideas are bad. You’re losing because the AI can’t reliably extract them.

If you’re in the 61-74 range: You have a foundation. Now the priority is competitive intelligence. Use tools to identify specific prompts where competitors are getting cited and your brand isn’t. Build content that addresses adjacent questions and follow-up concerns that surface in AI conversations. This is where Share of Answer analysis becomes essential.

If you’re at 75 or above: The goal is authority consolidation. Focus on digital PR: earning mentions in industry reports and third-party publications that feed LLM training data. Monitor sentiment around your brand to make sure that when AI does recommend you, the context aligns with how you actually want to be positioned.

Each stage requires different inputs. All three stages benefit from knowing where you stand relative to competitors, not just relative to a score scale.
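
One of the structural fixes above, checking for crawler blockers, is easy to script. Below is a minimal sketch that uses Python’s standard `urllib.robotparser` to test whether a robots.txt file blocks common AI crawlers. The list of user-agent tokens is an assumption that changes over time, so confirm it against each vendor’s documentation. The sample robots.txt and the `blocked_crawlers` helper are invented for illustration.

```python
from urllib.robotparser import RobotFileParser

# Assumed AI crawler tokens; verify the current names with each vendor.
AI_CRAWLERS = ("GPTBot", "ClaudeBot", "PerplexityBot", "Google-Extended")


def blocked_crawlers(robots_txt: str, url: str, agents=AI_CRAWLERS) -> list[str]:
    """Return the agents that robots.txt disallows from fetching `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, url)]


# Hypothetical robots.txt that blocks one AI crawler and allows the rest.
SAMPLE_ROBOTS = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

print(blocked_crawlers(SAMPLE_ROBOTS, "https://example.com/services"))
```

For the sample file, only `GPTBot` is reported as blocked. To check a live site, you would pass in the text of its real robots.txt instead of the sample.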

Conclusion

A GEO score is a diagnostic tool, not a finish line.

70 is a meaningful threshold. 85 marks genuinely exceptional content. But both numbers need to be placed inside an industry context before they tell you anything useful. A 62 can mean you’re leading your market or trailing your category, depending on where you compete.

Start with the GEO Score Checker to get your baseline. Then use that number as a starting point, not a verdict. The real question isn’t “is my score good?” It’s “am I getting cited more than my competitors on the prompts that drive revenue?”

That’s a different question, and it needs a different tool to answer.

FAQ

What is the average GEO score for most websites?

Based on analysis of over 770 audits, the current average sits at 57.4/100. Most websites are readable by AI but not optimized for it. They get mentioned occasionally but rarely receive a primary recommendation.

Is a GEO score of 70 good?

Yes, with a caveat. 70 is the threshold where content becomes genuinely good, but on the tier scale it still sits near the top of the “Competition Zone” (61-74). The “Ready” tier, where citation becomes stable and reliable, starts at 75. Whether 70 is competitive also depends heavily on your industry. In healthcare or finance, you’d want to push well above 80 to hold a stable position.

How often should I check my GEO score?

For competitive categories, weekly tracking is the minimum that catches meaningful shifts. AI platforms update frequently and generate non-deterministic outputs. Monthly checks are too slow to detect when a competitor surges or a platform’s behavior changes.

Does a high GEO score guarantee AI visibility?

No. GEO score measures readiness, not actual performance. Visibility is driven by Entity Authority, which is how often third-party sources mention your brand, combined with the competitive intensity of your category. A site with a lower score but stronger external authority often wins the citation.

How is a GEO score different from an SEO score?

SEO scoring focuses on ranking factors: keywords, backlinks, page speed. GEO scoring focuses on citation factors: content extractability, information density, structured data, and expert attribution. The goal of SEO is a click. The goal of GEO is a recommendation.