Your brand monitoring dashboard shows solid numbers. Mentions are up. Sentiment is mostly positive. Reach looks healthy.

Then someone on your team asks ChatGPT for the top tools in your category, and your brand doesn’t appear once.

That’s not a monitoring failure. That’s a monitoring blind spot. The two systems track fundamentally different things, and conflating them is quietly costing brands their position in the one channel that’s growing fastest.

Your Brand Monitor Can’t Read ChatGPT’s Mind

Traditional brand monitoring runs on a simple logic: crawl the web, look for strings that match your brand name, count them up.

That works fine when information lives on static pages. It fails completely when information is synthesized on the fly.

When a user asks ChatGPT “what’s the best CRM for a 50-person manufacturing company,” no webpage is returned. Instead, the model draws on patterns learned from its training data and, when retrieval is enabled, on live sources fetched via RAG (Retrieval-Augmented Generation), then generates a response that never existed as a webpage to begin with. Your crawler has nothing to crawl. Your keyword alert has nothing to trigger.

That’s the gap.

And it’s not a technical edge case. It’s the default experience for millions of users making purchase decisions right now.

What an AI Citation Actually Is

In the AI context, “citation” means something more specific than “your brand got mentioned.”

A citation has two components: the source domain or URL that the AI pulled from, and the position of that reference inside the answer. Both matter. Neither shows up in your brand monitoring report.

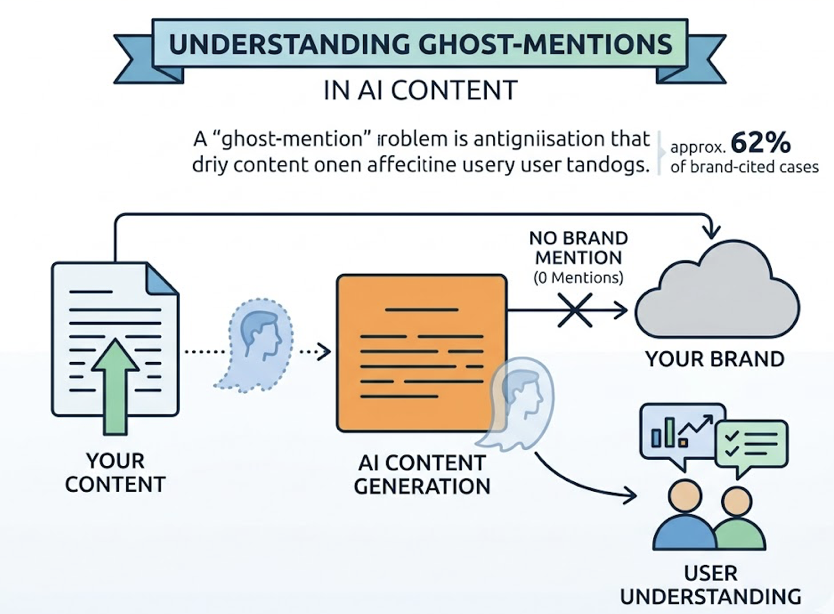

What makes this genuinely tricky is that citations and brand mentions can be completely decoupled. Two scenarios illustrate this well.

The first is what researchers call a ghost-mention: the AI draws on your content and links back to your domain but never names your brand in the generated text. Studies suggest this happens in roughly 62% of cases where brand content is cited. Your monitoring report shows zero mentions. Meanwhile, your content is actively shaping how users understand the market.

The second is the inverse: AI mentions your brand name, but the source it’s actually citing is a competitor’s review or a third-party blog. You got the mention. Someone else framed the narrative.

Neither of these dynamics is visible to traditional monitoring tools.
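To make the two decoupled cases concrete, here is a minimal Python sketch that labels a single AI answer by how your brand name and your domain show up in it. It assumes you already have the answer text and the list of source URLs the engine displayed. The function name `classify_citation`, the label strings, and the example brand `Acme` / `acme.com` are hypothetical, and the case-insensitive substring match is a deliberate simplification.

```python
from urllib.parse import urlparse


def _domain(url: str) -> str:
    # Normalize a URL to its bare hostname, dropping a leading "www."
    host = (urlparse(url).hostname or "").lower()
    return host[4:] if host.startswith("www.") else host


def classify_citation(answer: str, cited_urls: list[str],
                      brand: str, brand_domain: str) -> str:
    """Label one AI answer (hypothetical labels):

    "full"             - brand named AND your domain among the cited sources
    "ghost_mention"    - your domain cited, brand never named in the text
    "borrowed_mention" - brand named, but your domain not among the sources
    "absent"           - neither
    """
    named = brand.lower() in answer.lower()
    brand_domain = brand_domain.lower()
    # Count subdomains (e.g. docs.acme.com) as your own domain
    cited = any(
        d == brand_domain or d.endswith("." + brand_domain)
        for d in map(_domain, cited_urls)
    )
    if named and cited:
        return "full"
    if cited:
        return "ghost_mention"
    if named:
        return "borrowed_mention"
    return "absent"


# An answer built on your guide that never says your name:
print(classify_citation(
    "Most teams start with a lightweight CRM and add integrations later.",
    ["https://www.acme.com/blog/crm-guide"],
    brand="Acme", brand_domain="acme.com"))  # prints "ghost_mention"
```

Plain substring matching will miscount brands whose names are common words or appear inside other words; a production version would need word-boundary matching and brand aliases.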

Position Inside the Answer Matters More Than Presence

Not all citations carry equal weight. Being named first in a direct recommendation is categorically different from appearing in a list of “also worth considering” options at the end of an AI response.

A brand recommended in the opening paragraph carries high conversion potential. AI is treating it as the default answer. A brand mentioned in a footnote sits at the opposite end: technically present, functionally invisible. And a brand cited as a cautionary example or outdated alternative is actively damaging.

Position tracking, then, isn’t a nice-to-have metric. It determines whether your AI presence is building pipeline or eroding perception.
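One crude way to turn “position” into something measurable is to record where your brand first appears relative to the length of the answer. The sketch below assumes that a character offset is an acceptable proxy for placement; the `position_tier` name and the 20% / 70% thresholds are illustrative assumptions, not an industry standard. It does not detect cautionary or “outdated alternative” framing, which needs a separate check.

```python
def position_tier(answer: str, brand: str) -> str:
    """Bucket a brand's first appearance in an AI answer by relative position.

    Illustrative thresholds: first 20% of the text -> "lead",
    up to 70% -> "body", anything later -> "tail".
    """
    idx = answer.lower().find(brand.lower())
    if idx == -1:
        return "absent"
    relative = idx / max(len(answer), 1)
    if relative < 0.2:
        return "lead"
    if relative < 0.7:
        return "body"
    return "tail"
```

Splitting the answer into paragraphs or list items and recording the index of the first one that names you is a reasonable alternative when responses are heavily formatted.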

Brand Mention Tracking: Where It Still Works (And Where It Stops)

Traditional monitoring tools aren’t obsolete. They’re just scoped to a different information ecosystem.

For social listening, news monitoring, and historical sentiment analysis, platforms like Semrush and social intelligence tools still do the job well. If your brand runs into a crisis on X or Reddit, those tools surface it fast. If you need to understand how brand perception has shifted over a decade, the data depth is there.

The ceiling arrives the moment a user opens a chat interface.

| Dimension | Brand Mention Tracking | AI Citation Tracking |

|---|---|---|

| Data source | Social, news, static web | LLM-generated responses, RAG sources |

| What’s tracked | Brand name strings | Prompt results, source domains, citation weight |

| Core metric | Mentions, share of voice | Citation rate, answer position |

| SEO linkage | Measures traditional SERP ranking | Measures GEO (Generative Engine Optimization) effectiveness |

| Platform coverage | Twitter/X, Reddit, news sites | ChatGPT, Gemini, Perplexity, AI Overviews |

| Ranking insight | ❌ | ✅ |

| Content gap discovery | ❌ | ✅ |

| Blind spot | Closed AI conversations entirely | Non-retrieval model training data |

The gap isn’t about which tool is better. It’s about which channel your customer is using.

5 Questions Your Brand Monitor Can’t Answer

These aren’t hypothetical gaps. They’re active intelligence failures happening inside most marketing orgs right now.

Who does ChatGPT recommend first when someone asks about your category? If a competitor consistently occupies the primary recommendation slot while you appear in the “honorable mention” section, your brand premium is eroding with every AI conversation. Traditional monitoring won’t show that.

Which domains does Perplexity pull from most often in your niche? Different AI engines have different source preferences. Perplexity tends to favor dense technical documents and PDFs. ChatGPT often leans toward Wikipedia and Reddit consensus. Knowing which domains hold “privileged” status in your category tells you where to build content authority. Brand monitoring only tells you which domains have high traffic.

Is your brand being framed as a leader or as the legacy option? AI doesn’t just mention brands; it assigns them roles. If you are Brand X, a response that says “Brand X has been around longer, but Brand Y is leading on AI-native features” is a citation that actively positions you as behind the curve. That kind of semantic framing is nearly impossible to quantify with traditional sentiment tools.

Which competitor content is AI pulling from more than yours? This is the content gap made visible. If a competitor’s blog post on “industry standards” is being cited repeatedly across AI engines, their content structure is better matched to what AI extracts: clear H2s, tables, direct answers. You can reverse-engineer their strategy by analyzing what’s being cited and why.

Are your AI mentions actually converting? Referral traffic from ChatGPT converts at 15.9%, roughly 9x the rate of traditional search traffic. That number is significant, but only if you can trace which citation paths are driving it. Brand monitoring shows traffic volume. AI citation tracking shows the recommendation chain that created intent.

Brand monitoring answers yesterday’s questions.
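The first of those questions, who gets recommended first, can be approximated from a batch of sampled answers to the same prompt. This minimal sketch assumes that the brand named earliest in an answer is the one being recommended first, which is a simplification; the function name and the brand list are hypothetical.

```python
from collections import Counter


def first_recommendation_share(answers: list[str],
                               brands: list[str]) -> dict[str, float]:
    """Share of sampled answers in which each brand is the earliest-named candidate.

    Answers that name none of the brands count toward the denominator only,
    so the shares can sum to less than 1.
    """
    firsts = Counter()
    for answer in answers:
        text = answer.lower()
        # Character offset of each brand's first appearance (-1 if missing)
        positions = {b: text.find(b.lower()) for b in brands}
        named = {b: p for b, p in positions.items() if p != -1}
        if named:
            firsts[min(named, key=named.get)] += 1
    total = len(answers) or 1
    return {b: firsts[b] / total for b in brands}
```

Brand names that are prefixes of each other (for example “Acme” and “Acme Cloud”) would need disambiguation before this count is trustworthy.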

Do You Actually Need Both? Honest Answer

It depends on where your customers are making decisions, not on what tools your team is already comfortable with.

If your audience is primarily discovering brands through social content, industry newsletters, and live events, traditional monitoring still carries most of the weight. AI citation tracking becomes a secondary layer for building long-term authority.

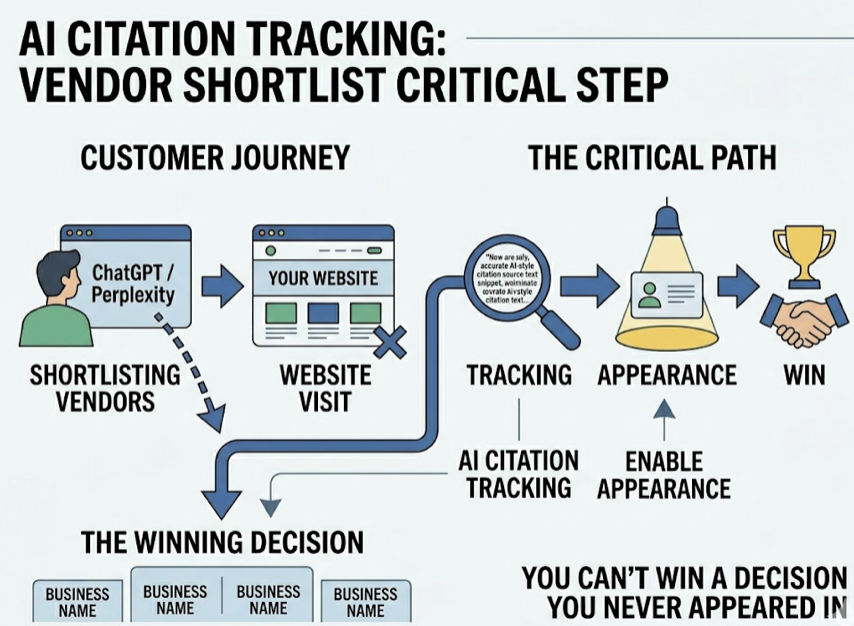

If your audience is using ChatGPT or Perplexity to shortlist vendors before they ever visit your website, which is increasingly true in B2B software, professional services, and high-consideration consumer categories, AI citation tracking is no longer optional. You can’t win a decision you never appeared in.

The practical test: check whether your website analytics show meaningful referral traffic from AI platforms. If it’s there and growing, you’re already in the game. If it’s absent, you may be invisible in conversations where competitors are being recommended daily.
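If your analytics tool lets you export raw referrer URLs, a first pass at this test can be scripted. The host-to-platform mapping below is an assumption for illustration and is likely incomplete: verify it against the referrers that actually appear in your own data. Some AI apps strip referrers entirely, and AI Overviews clicks arrive from ordinary Google hosts, so they cannot be separated this way.

```python
from collections import Counter
from urllib.parse import urlparse

# Assumed, non-exhaustive mapping of referrer hosts to AI platforms.
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
}


def ai_referral_counts(referrers: list[str]) -> Counter:
    """Count sessions per AI platform from a list of raw referrer URLs."""
    counts = Counter()
    for ref in referrers:
        host = (urlparse(ref).hostname or "").lower()
        if host.startswith("www."):
            host = host[4:]
        platform = AI_REFERRER_HOSTS.get(host)
        if platform:  # ignore non-AI and empty referrers
            counts[platform] += 1
    return counts
```

Comparing these counts month over month is enough to tell whether AI referrals are present and growing.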

Start by auditing how your customers actually describe their research process, not how you assume they do.

How to Start Tracking AI Citations Without Rebuilding Your Stack

The barrier to entry is lower than most teams assume. You don’t need to replace existing tools. You need to add a layer that sees what they can’t.

Step 1: Shift from keywords to prompts. Stop tracking brand name strings. Start tracking the questions your customers are actually asking AI. “CRM software” is a keyword. “What CRM is best for a 50-person manufacturing company?” is the prompt your buyer typed last Tuesday. That shift in framing changes everything about what you measure.

Step 2: Run cross-platform tests. A single manual check of ChatGPT tells you almost nothing. AI responses vary by account, region, and session. What matters is statistical visibility across thousands of automated queries run through clean synthetic accounts, spanning ChatGPT, Gemini, Perplexity, and AI Overviews. Manual spot-checks introduce too much variance to be actionable.
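Why are spot-checks too noisy? A small calculation makes it concrete. The sketch below computes a citation rate with a 95% Wilson score interval, a standard confidence interval for a proportion. It assumes each sampled answer is an independent trial, which automated runs only approximate; the function name is hypothetical.

```python
import math


def citation_rate_with_ci(cited: int, total: int,
                          z: float = 1.96) -> tuple[float, float, float]:
    """Citation rate plus an approximate 95% Wilson score interval.

    cited: number of sampled answers that cited your brand
    total: number of sampled answers for one prompt set on one platform
    """
    if total <= 0:
        raise ValueError("total must be positive")
    p = cited / total
    denom = 1 + z**2 / total
    center = (p + z**2 / (2 * total)) / denom
    half = z * math.sqrt(p * (1 - p) / total + z**2 / (4 * total**2)) / denom
    return p, max(0.0, center - half), min(1.0, center + half)
```

One manual check that happens to cite you (`citation_rate_with_ci(1, 1)`) gives an interval of roughly 0.21 to 1.0, which is compatible with almost any true rate. 300 citations across 1,000 runs narrows it to roughly 0.27 to 0.33.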

Step 3: Analyze the sources, not just the results. This is where the real intelligence lives. When AI cites a competitor’s page over yours, what does that page have that yours doesn’t? Schema markup? A comparison table? A direct FAQ block? Topify’s Source Analysis feature surfaces exactly this: which domains AI is pulling from, why they’re being preferred, and what structural gaps in your content are costing you citations. The output isn’t a report; it’s a specific GEO action item.

One-Click GEO Execution then takes that intelligence and generates the missing content elements (FAQ blocks, data tables, structured H2s) optimized for AI extractability. It closes the loop between insight and action without requiring a full content overhaul.
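The source-frequency part of Step 3 can be approximated with a few lines of Python if you collect the cited URLs from each sampled answer. This is a generic sketch, not how Topify or any other product implements it; the function names are hypothetical. It only tells you *which* domains are cited more often than yours, and inspecting those pages for tables, FAQ blocks, or schema is still a manual step.

```python
from collections import Counter
from urllib.parse import urlparse


def domain_citation_counts(citations_per_answer: list[list[str]]) -> Counter:
    """Count in how many sampled answers each domain was cited (once per answer)."""
    counts = Counter()
    for urls in citations_per_answer:
        domains = set()
        for url in urls:
            host = (urlparse(url).hostname or "").lower()
            if host.startswith("www."):
                host = host[4:]
            if host:
                domains.add(host)
        counts.update(domains)  # a set, so each domain counts once per answer
    return counts


def domains_outranking(counts: Counter, your_domain: str) -> list[tuple[str, int]]:
    """Domains cited in more answers than yours, most-cited first."""
    yours = counts.get(your_domain, 0)
    return [(d, n) for d, n in counts.most_common() if n > yours]
```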

One more thing worth knowing: 76.4% of pages that appear in top ChatGPT citations were updated within the past 30 days. AI citation patterns shift fast. Quarterly audits won’t cut it. This is a continuous monitoring problem, not a one-time analysis.
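A simple freshness check turns that statistic into a recurring task. The sketch assumes you can get a last-updated date per page (for example from your CMS or a sitemap’s `lastmod` field). The 30-day default mirrors the figure above but is a heuristic threshold, and the function name is hypothetical.

```python
from datetime import date, timedelta


def stale_pages(last_updated: dict[str, date], today: date,
                max_age_days: int = 30) -> list[str]:
    """Return URLs last updated more than `max_age_days` ago, oldest first."""
    cutoff = today - timedelta(days=max_age_days)
    stale = [(updated, url) for url, updated in last_updated.items()
             if updated < cutoff]
    return [url for updated, url in sorted(stale)]
```

Running it on a schedule against your highest-value pages gives a short refresh queue instead of a quarterly audit.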

Conclusion

Brand monitoring and AI citation tracking aren’t competitors. They’re instruments calibrated for different channels, and the channel split between traditional web and AI conversation is only widening.

The strategic question isn’t which tool to keep. It’s whether your current intelligence setup can tell you what AI says about your brand when a buyer asks, and whether you’re in the recommendation or invisible to it.

If you don’t know the answer to that, the gap is already costing you.

FAQ

Is AI citation the same as a backlink?

No. A backlink is a hyperlink from one webpage to another, which Google’s ranking algorithm uses as an authority signal. An AI citation is a model’s acknowledgment of a source during response generation. AI can cite a brand-new page with zero backlinks if that page answers a prompt clearly and directly. The selection criteria are different: authority versus answerability.

Can I track AI citations manually?

You can run spot checks, but they won’t be reliable. AI responses vary by account, geography, session, and the randomness of sampling (the model’s temperature setting). What you see from your laptop doesn’t represent the average experience across millions of users. Professional tracking uses large-scale synthetic probing: thousands of automated queries through randomized clean accounts to produce statistically meaningful visibility scores.

Does being cited by AI always mean more traffic?

Not always. AI is increasingly delivering “zero-click” answers. But a citation still builds brand authority and mindshare. If AI consistently names your brand as the primary recommendation in a category, that recognition influences decisions even when users don’t click through. The brand impression compounds over time.

How quickly do AI citation patterns change?

Very quickly. Model weight updates and RAG index refreshes can shift citation patterns within days. That 76.4% figure for recently updated pages isn’t a coincidence. AI engines tend to favor fresh, well-structured content. This means citation tracking needs to be a continuous process, not a quarterly reporting exercise.