Your keyword rankings are holding steady. Organic traffic is down for the third quarter in a row. And the most common explanation you’ve heard is “AI Overviews.” The problem is, that’s only part of the picture. Google’s AI snippets are one thing. ChatGPT recommending a competitor instead of you is something else entirely. These are two separate measurement problems, and most teams are still treating them as one.

Three channels. Three different scorecards. Here’s how they actually work.

Three Disciplines, Three Different Scorecards

Search has moved through three distinct phases: keyword matching, featured snippet extraction, and now synthetic AI recommendation. In 2026, each phase has its own measurement logic, and they don’t overlap as much as marketers assume.

SEO answers the question: where do I rank? Its domain is Google and Bing, and its metrics are positions, organic traffic, and backlink authority. It’s the most mature measurement system of the three.

AEO asks: am I being extracted? It focuses on whether Google AI Overviews or Bing Copilot pulls your content into a generated answer block, regardless of where your page ranks. You could be #4 and still get cited in an AI Overview. You could be #1 and get ignored.

GEO asks a different question entirely: does AI recommend me? Its targets are ChatGPT, Perplexity, Gemini, and DeepSeek. These platforms don’t index your page the way Google does. There’s no SERP. There’s no rank. There’s only whether the model includes you in a synthesized response, in what context, and ahead of or behind your competitors.

| Dimension | SEO | AEO | GEO |
|---|---|---|---|
| Target Platform | Google, Bing | Google AIO, Voice Assistants | ChatGPT, Perplexity, DeepSeek |
| Core Question | Where do I rank? | Am I being cited? | Does AI recommend me? |
| Key Metrics | Rank, Organic Traffic | Snippet rate, AIO trigger rate | Visibility Rate, Mention Rate, Sentiment |
| Primary Tools | Ahrefs, SEMrush | Search Console, SERP monitors | Topify |

These three don’t replace each other. But they measure fundamentally different things, and conflating them is how brands end up with reporting gaps they can’t explain.

What SEO Measurement Actually Looks Like

SEO tracking is well understood: keyword rankings, organic traffic, CTR, domain authority, and backlink growth. Most teams have this covered.

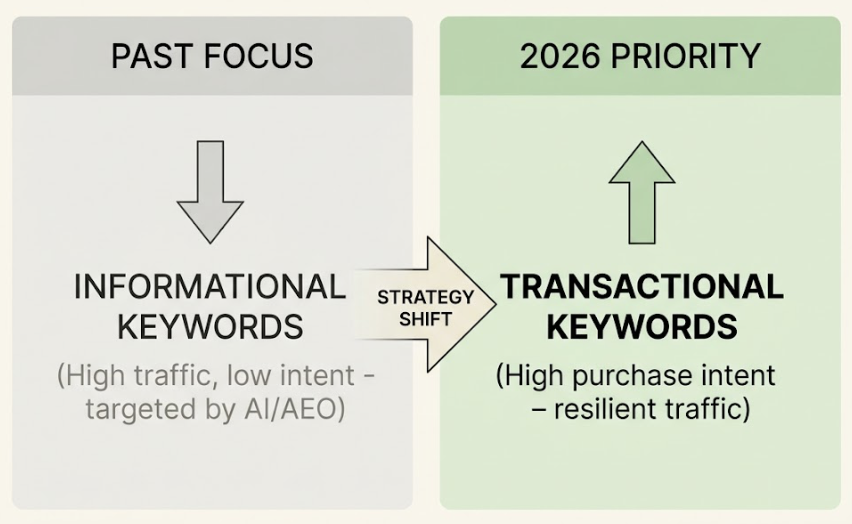

What’s less understood is the ceiling SEO measurement has hit. Over 60% of Google searches now end without a click. When an AI Overview appears in the SERP, the #1 organic result sees its CTR drop from roughly 10.67% to below 7%. In some informational queries, the drop exceeds 60%.
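To see what that CTR shift means in practice, it helps to separate the absolute drop (percentage points) from the relative drop (share of clicks lost). The sketch below uses the 10.67% and 7% figures from the paragraph above; the function name `relative_drop` is illustrative, and since the source says "below 7%", the result is a lower bound.

```python
def relative_drop(before: float, after: float) -> float:
    """Return the fractional decline from `before` to `after`."""
    if before <= 0:
        raise ValueError("before must be positive")
    return (before - after) / before


# Figures quoted above: CTR of the #1 organic result with and without an AI Overview.
ctr_before = 10.67
ctr_after = 7.0  # the text says "below 7%", so treat this as the best case

absolute_points = ctr_before - ctr_after          # percentage points lost
relative = relative_drop(ctr_before, ctr_after)   # share of clicks lost

print(f"Absolute drop: {absolute_points:.2f} points")
print(f"Relative drop: {relative:.1%}")
```

A loss of under four percentage points sounds small, but it is roughly a third of the clicks the top result used to receive.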

The impact is uneven across categories. Consumer electronics brands saw organic click share fall from 23% to 11% year-over-year. Online gaming dropped from 88% to 75%. Retail apparel, which still drives comparison and transactional searches, barely moved. The pattern is consistent: the more informational the query, the worse the click erosion.

That said, SEO still matters. It’s the technical foundation that determines whether AI systems can crawl and understand your content in the first place. You don’t abandon it. You just stop expecting it to tell you the full story.

AEO: Tracking the Answers AI Overviews Steal from You

AEO measurement centers on Google AI Overviews and Bing Copilot. The question isn’t your page position. It’s whether AI selects your content as a source.

Google now deploys AI-generated answers in 20% to 50% of searches, rising to over 60% in information-dense categories like health and science. Being included in those answers carries brand value even without a direct click, since only about 1% of users actually click the source links inside AIO results.

The core AEO metrics to track:

- AIO trigger rate: How often does your target query surface an AI Overview at all?

- AIO citation overlap: How frequently does your content get pulled into those answers? Research puts this rate between 14% and 38% for pages that already rank in the traditional top 10.

- Featured snippet hold rate: Are you maintaining your answer position as AI rewrites the SERP?

- Structured data validation rate: Can AI crawlers parse your entities cleanly? A target above 80% is the benchmark.
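The first two metrics in the list above reduce to simple ratios once you have per-query observations. Here is a minimal sketch, assuming you already collect, for each target query, whether an AI Overview appeared and whether your domain was among its sources. The `QueryCheck` structure and the sample queries are hypothetical, not the output format of any specific SERP monitor.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class QueryCheck:
    query: str
    aio_shown: bool  # did an AI Overview appear for this query?
    cited: bool      # was our domain among the AI Overview's sources?


def aio_trigger_rate(checks: List[QueryCheck]) -> float:
    """Share of tracked queries that surfaced an AI Overview."""
    if not checks:
        return 0.0
    return sum(c.aio_shown for c in checks) / len(checks)


def aio_citation_rate(checks: List[QueryCheck]) -> float:
    """Share of AI Overviews (not of all queries) that cite our content."""
    shown = [c for c in checks if c.aio_shown]
    if not shown:
        return 0.0
    return sum(c.cited for c in shown) / len(shown)


# Hypothetical observations for four target queries.
checks = [
    QueryCheck("what is zero-click search", aio_shown=True, cited=True),
    QueryCheck("how do ai overviews work", aio_shown=True, cited=False),
    QueryCheck("seo vs aeo", aio_shown=True, cited=False),
    QueryCheck("buy seo software", aio_shown=False, cited=False),
]

print(f"AIO trigger rate:  {aio_trigger_rate(checks):.0%}")
print(f"AIO citation rate: {aio_citation_rate(checks):.0%}")
```

Note the denominator choice: citation rate is computed only over queries where an AI Overview actually appeared, so a low trigger rate does not drag it down.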

AEO success is better understood as brand asset accumulation than traffic generation. A citation in an AI Overview often leads to downstream branded search growth, even when the user never clicks through. You’re building recognition inside a zero-click environment.

GEO Measurement Is a Different Animal Entirely

GEO doesn’t map to any existing analytics framework. ChatGPT, Perplexity, Gemini, and DeepSeek don’t expose public APIs for rank tracking. Their answers are probabilistic, not deterministic. Ask the same question twice and you may get different results.

That’s why GEO tracking requires what researchers call synthetic probing: running large volumes of carefully designed prompts across AI platforms, then analyzing the output to calculate how often your brand appears, in what position, and with what sentiment. This can’t be done manually at any meaningful scale.
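The core loop of synthetic probing can be sketched in a few lines. This is an illustration under stated assumptions, not how any particular tool implements it: `ask_model` is a placeholder for whatever client you use to query an AI platform, and `fake_model`, the brand names, and the prompts are invented so the example runs offline.

```python
import random
import re
from typing import Callable, Iterable, Tuple


def probe_brand(
    prompts: Iterable[str],
    ask_model: Callable[[str], str],
    brand: str,
    runs_per_prompt: int = 5,
) -> Tuple[int, int]:
    """Ask every prompt several times and count answers that mention `brand`.

    Returns (answers_mentioning_brand, total_answers).
    """
    # Whole-word, case-insensitive match so "Acme" does not match "Acmeify".
    pattern = re.compile(rf"\b{re.escape(brand)}\b", re.IGNORECASE)
    hits = 0
    total = 0
    for prompt in prompts:
        # Repeat each prompt because answers vary from run to run.
        for _ in range(runs_per_prompt):
            answer = ask_model(prompt)
            total += 1
            if pattern.search(answer):
                hits += 1
    return hits, total


# Hypothetical stand-in for a real platform call, so the sketch runs offline.
_rng = random.Random(42)
_CANNED = [
    "Popular options include Acme and Globex.",
    "Many teams choose Globex for this.",
    "Initech and Globex are common picks.",
]


def fake_model(prompt: str) -> str:
    return _rng.choice(_CANNED)


prompts = ["best crm for small teams", "top crm tools 2026"]
hits, total = probe_brand(prompts, fake_model, brand="Acme")
print(f"Acme mentioned in {hits} of {total} answers")
```

Repeating each prompt is the important design choice: a single run per prompt would treat one probabilistic answer as if it were a stable ranking.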

DeepSeek alone now draws close to 300 million monthly visits, with daily active users exceeding 22 million. Its user base skews young, with 40% in the 18-24 age bracket. If your brand isn’t in GEO monitoring across DeepSeek alongside ChatGPT and Gemini, you’re operating with blind spots in a fast-growing segment of AI traffic.

Topify uses a seven-dimensional framework to make GEO results reportable:

- Visibility: What percentage of relevant AI responses mention your brand? If you appear in 30 out of 100 probed prompts, your Visibility Rate is 30%.

- Sentiment: How does AI describe your brand? High visibility paired with phrases like “expensive and difficult to set up” is a sentiment problem hiding behind a healthy visibility number.

- Position: Where do you appear in an AI recommendation list relative to competitors? First position in an AI recommendation carries the same strategic weight as a #1 Google ranking.

- Volume: How many prompt analyses back the data? GEO results are probabilistic, so statistical confidence requires thousands of samples, not dozens.

- Mentions: Total brand references across responses, including text-only mentions without linked citations.

- Intent: Are you being recommended at the right funnel stage? Appearing in “what is” queries when your goal is “best option for” queries is a misalignment.

- CVR (Conversion Visibility Rate): What’s the predicted downstream impact of your AI citations on traffic or leads?
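The Visibility and Volume dimensions above are linked: the same 30% Visibility Rate is far less trustworthy from 100 probes than from 10,000. The sketch below computes the rate and a 95% Wilson score interval around it. The Wilson interval is a standard statistical choice made here for illustration; the source does not say which method Topify or any other tool uses.

```python
import math
from typing import Tuple


def visibility_rate(mentions: int, probes: int) -> float:
    """Share of probed responses that mention the brand."""
    if probes <= 0:
        raise ValueError("probes must be positive")
    return mentions / probes


def wilson_interval(mentions: int, probes: int, z: float = 1.96) -> Tuple[float, float]:
    """Wilson score interval for a proportion (z=1.96 gives about 95%)."""
    p = visibility_rate(mentions, probes)
    denom = 1 + z**2 / probes
    centre = (p + z**2 / (2 * probes)) / denom
    half = z * math.sqrt(p * (1 - p) / probes + z**2 / (4 * probes**2)) / denom
    return centre - half, centre + half


# Same 30% rate, two very different sample sizes.
for mentions, probes in [(30, 100), (3000, 10000)]:
    low, high = wilson_interval(mentions, probes)
    rate = visibility_rate(mentions, probes)
    print(f"{probes:>6} probes: rate {rate:.0%}, 95% interval {low:.1%} to {high:.1%}")
```

With 100 probes the interval spans well over ten percentage points, which is wide enough to hide a real change between two reporting periods. At 10,000 probes it narrows to about two points.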

This framework is now standard in CMO reporting at brands that take GEO seriously. It’s not a nice-to-have dashboard. It’s the only structured way to treat AI recommendation as a measurable channel.

Why Your Current Reporting Mix Doesn’t Add Up

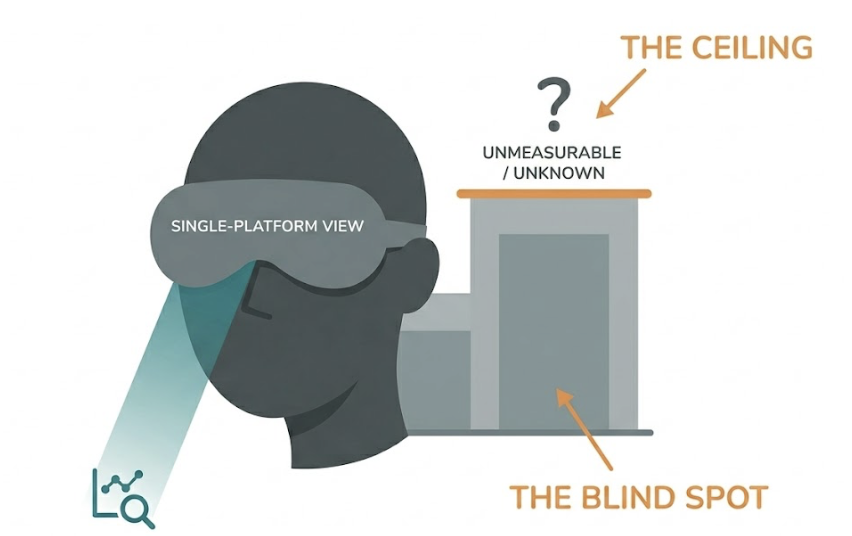

The most common setup in 2026: a detailed SEO dashboard showing keyword rankings trending up, paired with a few screenshots of manual ChatGPT searches someone ran last quarter.

That’s not a measurement system. It’s a gap with a thin layer of data on top.

User behavior is now distributed across three distinct discovery channels: traditional search still accounts for roughly 40-50% of search activity, AI answer layers (AEO) capture 25-35%, and generative AI platforms (GEO) handle 20-30%. If your reporting only covers SEO, you’re measuring about half the market at best.

You can’t optimize what you don’t measure.

The practical consequence is that brands with solid SEO scores are losing recommendation share to competitors who’ve been building GEO authority quietly. By the time it shows up in revenue data, the gap is already six to twelve months wide.

A Unified Tracking Framework for SEO, AEO, and GEO

The goal isn’t to run three separate reporting systems. It’s to build one framework with three layers, each feeding into the next.

Layer 1: SEO Foundation

Use Ahrefs or SEMrush for weekly rank tracking and traffic reporting. In 2026, the priority shift here is toward transactional keywords, the queries that still drive clicks despite AI interference. Informational head terms are increasingly AEO and GEO territory.

Core metrics: target keyword rankings, organic traffic by intent segment, domain authority trends, and conversion rates from organic.

Layer 2: AEO Synthesis

Combine Google Search Console data with a third-party SERP monitor to track AI Overview trigger rates across your target query set. Tools like Authoritas can map AIO coverage at scale.

Core metrics: AIO trigger rate per query cluster, featured snippet hold rate, People Also Ask coverage, structured data health score.

Layer 3: GEO Influence

This is where Topify fills a gap that no traditional SEO tool can. Topify runs prompt matrix analysis simultaneously across ChatGPT, Perplexity, Gemini, and DeepSeek, returning real-time competitive comparisons across all seven GEO dimensions.

The practical setup: establish a 30-day baseline using 200 or more core prompts mapped to your product category. Track brand position relative to two or three key competitors. Topify’s Basic plan supports 100 prompts per cycle at $99/month; the Pro plan covers 250 at $199/month, which is closer to the minimum for statistically reliable GEO reporting in competitive categories.
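Tracking position relative to competitors comes down to two numbers per brand: how much of the total mention volume it captures, and where it tends to sit when it does appear. A rough sketch, assuming each AI response has already been reduced to the ordered list of brands it recommends; the brand names and sample data are invented, and extracting that list from raw text is outside the scope of this example.

```python
from collections import Counter
from typing import Dict, List, Optional


def share_of_voice(responses: List[List[str]], brands: List[str]) -> Dict[str, float]:
    """Fraction of all tracked-brand mentions captured by each brand."""
    counts: Counter = Counter()
    for ranked in responses:
        for name in ranked:
            if name in brands:
                counts[name] += 1
    total = sum(counts.values())
    return {b: (counts[b] / total if total else 0.0) for b in brands}


def average_position(responses: List[List[str]], brand: str) -> Optional[float]:
    """Mean 1-based position of `brand` across responses that include it."""
    positions = [r.index(brand) + 1 for r in responses if brand in r]
    return sum(positions) / len(positions) if positions else None


# Hypothetical: brands in the order each AI response recommended them.
responses = [
    ["Acme", "Globex"],
    ["Globex", "Acme", "Initech"],
    ["Globex"],
]
brands = ["Acme", "Globex", "Initech"]

for brand, share in share_of_voice(responses, brands).items():
    print(f"{brand}: share of voice {share:.0%}, avg position {average_position(responses, brand):.2f}")
```

Reading the two numbers together matters: a brand can hold a respectable share of voice while consistently appearing second or third behind the same competitor.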

These three layers compound. SEO health determines whether AI crawlers can access and parse your content. AEO structure improves the probability that AI selects your content as a source. GEO authority, built through high-quality citations, industry publications, and entity recognition, determines whether a large language model treats your brand as a trusted recommendation. Each layer reinforces the next.
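One way to keep the three layers in a single report rather than three is a shared record with a slot per layer, plus a check that flags any layer with no data at all. This is a hypothetical structure for illustration; the field names are assumptions and would map to whatever your rank tracker, SERP monitor, and GEO tool actually export.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class VisibilityReport:
    # Layer 1: SEO foundation
    avg_rank: Optional[float] = None
    organic_sessions: Optional[int] = None
    # Layer 2: AEO synthesis
    aio_trigger_rate: Optional[float] = None
    snippet_hold_rate: Optional[float] = None
    # Layer 3: GEO influence
    visibility_rate: Optional[float] = None
    sentiment_score: Optional[float] = None

    def missing_layers(self) -> List[str]:
        """Return the layers for which no metric has been recorded."""
        layers = {
            "SEO": (self.avg_rank, self.organic_sessions),
            "AEO": (self.aio_trigger_rate, self.snippet_hold_rate),
            "GEO": (self.visibility_rate, self.sentiment_score),
        }
        return [name for name, values in layers.items() if all(v is None for v in values)]


# A report that only has SEO data filled in.
report = VisibilityReport(avg_rank=4.2, organic_sessions=18_500)
print(report.missing_layers())
```

Making the empty layers explicit in the report itself is the point: a dashboard that silently omits AEO and GEO looks complete when it is not.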

Conclusion

SEO, AEO, and GEO aren’t competing priorities. They’re sequential layers of a single visibility stack.

SEO answers whether you exist in the digital record. AEO answers whether you get extracted from it. GEO answers whether AI recommends you over everyone else.

The biggest risk in 2026 isn’t choosing the wrong tool. It’s tracking only one layer and assuming you have the full picture. A brand with strong SEO but no GEO monitoring is flying with two instruments covered. It will be the last to know that a competitor has been the first recommendation in ChatGPT for the past six months.

Start with the three-layer framework. Fill in Layer 3 with a dedicated GEO monitoring tool. Then run a 30-day baseline before drawing any conclusions. The data will do the rest.

FAQ

What’s the difference between AEO and GEO?

AEO targets Google’s AI Overviews and Bing Copilot, both of which operate within a traditional search engine’s retrieval system. GEO targets standalone LLM platforms like ChatGPT and Perplexity, where answers are generated from model weights and retrieval-augmented sources rather than a standard index. Different systems, different optimization strategies, different metrics.

Can I use SEO tools to track GEO performance?

No. SEO tools work by scraping static HTML from search engine results pages. GEO responses are generated probabilistically in real time and vary by prompt, context, and platform. Tracking GEO requires synthetic probing across AI platforms at scale, which tools like Topify are built specifically to do.

How do I know if my brand appears in ChatGPT answers?

The most reliable method is using an AI visibility monitoring platform. Topify runs thousands of industry-relevant prompts on your behalf and scans the generated outputs for brand mentions, citation links, and description tone. Manual searches give you anecdotal data. Systematic prompt matrix analysis gives you a statistically valid Visibility Rate.

What GEO metrics should I report to my CMO?

Prioritize three: Visibility Rate (how often you appear in relevant AI responses), Sentiment Score (how AI describes your brand), and Competitive Share of Voice (your recommended position relative to competitors). These three give executives a clear picture of AI market standing without requiring them to understand the technical methodology.

How often should I run GEO measurement?

A weekly light pass combined with a monthly deep audit is the standard cadence. In competitive verticals, real-time monitoring is worth the investment, particularly when AI models update their citation behavior or start surfacing inaccurate brand descriptions that need content-level correction.