You’ve been tracking keyword rankings for years. You know your position, your impressions, your CTR. But here’s what those numbers don’t tell you: whether ChatGPT mentioned your brand this week, what it said, and whether it recommended you first or last.

That’s the measurement gap answer engine optimization (AEO) creates. And it’s growing fast.

Gartner projects that traditional search volume will drop 25% by 2026 as users shift to AI-generated answers. In environments where AI Overviews are active, zero-click searches already reach 83%. When an AI engine summarizes the answer for the user, there’s no SERP to rank on. There’s only the response.

Three metrics have emerged as the AEO equivalent of rank, impressions, and CTR: Share of Voice, Position, and Sentiment. Each one measures something the others can’t. Miss any of them, and you’re making decisions on incomplete data.

Why Your Current SEO Dashboard Misses AEO Visibility

Traditional SEO operates on a simple assumption: surface a URL, earn a click. The metrics built around that assumption, such as organic sessions, keyword position, and domain authority, are designed to measure how well you compete for those clicks.

AEO breaks that assumption entirely.

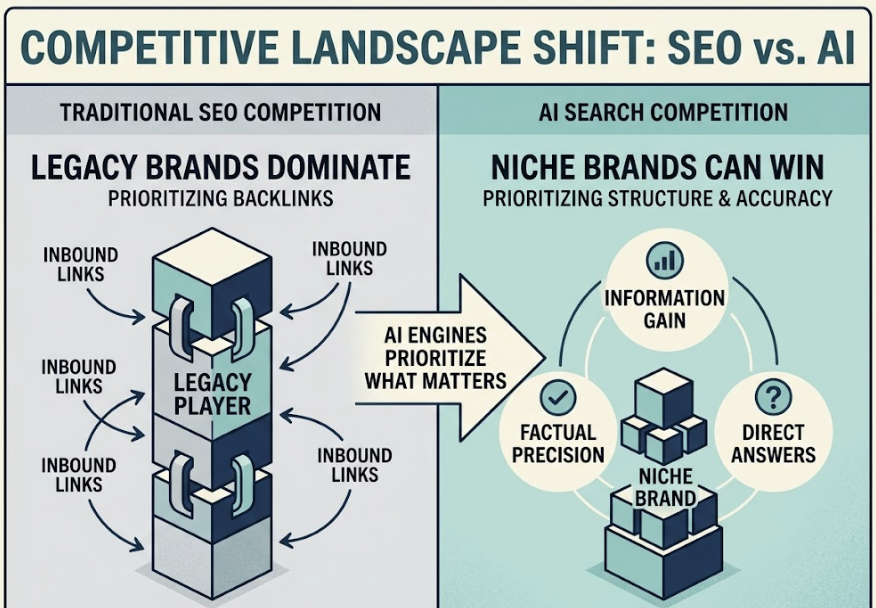

Research shows that only 12% of ChatGPT citations overlap with Google’s top 10 organic results. A brand holding the #1 organic position for a query can be completely absent from the AI answer for the exact same query. The ranking signals that got you to page one don’t predict whether an LLM will include you in its response.

The divergence goes deeper than just different platforms. AI engines don’t prioritize backlink profiles. They prioritize what researchers call “Information Gain,” factual precision, and whether your content answers the question directly. The result is a fundamentally different competitive landscape, one where a niche brand with structured, accurate content can outperform a legacy player with thousands of inbound links.

That’s why AEO measurement and monitoring needs its own metric framework.

Share of Voice: Are You Even in the Conversation?

AI Share of Voice (AI SoV) measures how often AI-generated responses mention or recommend your brand, expressed as a share of all mentions of the tracked brands (yours plus competitors) across a defined set of prompts.

Unlike traditional SoV, which counts media spend or ad impressions, AI SoV is built on prompt-based analysis. You define a “Master Prompt List” of 50-100 queries that reflect how your target audience actually searches using AI. Discovery questions, comparison queries, and purchase-intent prompts all belong in that list. For each prompt, you record which tracked brands appear, then divide your total mentions by the combined mentions of all tracked brands.

The baseline formula is clean:

AI SoV = (Your Brand Mentions / Total Mentions Across All Tracked Brands) × 100
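To make the formula concrete, here is a minimal Python sketch. It assumes each AI response has already been reduced to the list of tracked brands it mentions (extracting those names from raw text is out of scope), and the brand names and responses are invented for illustration.

```python
from collections import Counter


def ai_share_of_voice(responses: list[list[str]], brand: str) -> float:
    """AI SoV = (brand mentions / mentions of all tracked brands) * 100.

    Each response is the list of tracked brands it mentions; a brand named
    several times in one response is counted once for that response.
    """
    counts = Counter(b for mentioned in responses for b in set(mentioned))
    total = sum(counts.values())
    if total == 0:
        return 0.0
    return 100 * counts[brand] / total


# Invented data: three prompts, brands already extracted from each response.
responses = [
    ["YourBrand", "CompetitorA"],
    ["CompetitorA", "CompetitorB"],
    ["YourBrand", "CompetitorA", "CompetitorB"],
]
print(f"AI SoV: {ai_share_of_voice(responses, 'YourBrand'):.1f}%")  # AI SoV: 28.6%
```

Counting each brand once per response keeps one verbose answer from inflating the total; whether to count repeat mentions is a design choice worth fixing before you collect a baseline.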

But raw mention rate only tells you part of the story. A more useful variant is Prompt Coverage Rate, which measures the percentage of total prompts where your brand appears at all:

Prompt Coverage Rate = (Prompts Including Your Brand / Total Prompts Tested) × 100

This metric surfaces “blind spots,” entire query clusters where your brand is completely invisible. If your Prompt Coverage Rate is 60%, you’re absent from 40% of the conversations your potential customers are having with AI.
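A minimal sketch of Prompt Coverage Rate plus a blind-spot check. It assumes you log a simple yes/no per prompt and assign each prompt to a query cluster yourself; the CRM prompts and cluster names below are hypothetical.

```python
from collections import defaultdict


def prompt_coverage_rate(results: dict[str, bool]) -> float:
    """(Prompts including your brand / total prompts tested) * 100."""
    if not results:
        return 0.0
    return 100 * sum(results.values()) / len(results)


def blind_spots(results: dict[str, bool], cluster_of: dict[str, str]) -> list[str]:
    """Clusters in which the brand appeared in none of the tested prompts."""
    covered: dict[str, bool] = defaultdict(bool)
    for prompt, appeared in results.items():
        cluster = cluster_of[prompt]
        covered[cluster] = covered[cluster] or appeared
    return sorted(cluster for cluster, hit in covered.items() if not hit)


# Hypothetical prompts for a CRM brand, grouped into query clusters.
results = {
    "what is a CRM": True,
    "best CRM for startups": True,
    "best CRM for nonprofits": True,
    "CRM pricing comparison": False,
    "cheapest CRM for small teams": False,
}
cluster_of = {
    "what is a CRM": "basics",
    "best CRM for startups": "recommendations",
    "best CRM for nonprofits": "recommendations",
    "CRM pricing comparison": "pricing",
    "cheapest CRM for small teams": "pricing",
}
print(prompt_coverage_rate(results))     # 60.0
print(blind_spots(results, cluster_of))  # ['pricing']
```

Here a 60% coverage rate hides the fact that the whole pricing cluster is a blind spot, which is exactly the gap the aggregate number can’t show on its own.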

Prompt Set Design Determines What SoV Actually Measures

The prompts you choose define the market you’re measuring. A set built entirely on awareness-stage questions will show different SoV than one built on “best [category] for [use case]” queries.

In practice, you want prompts across three intent layers: discovery (“what is [category]”), evaluation (“what’s the best [product type]”), and comparison (“[your brand] vs [competitor]”). Each layer reveals a different dimension of your AI presence. A brand that dominates awareness queries but disappears at the comparison stage has a different problem than one that’s invisible at the top of the funnel but shows up at the point of decision.
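One way to see those layers side by side is to compute Prompt Coverage Rate separately per intent layer. This sketch assumes each prompt in the Master Prompt List is tagged with exactly one layer; the prompts and results are hypothetical.

```python
from collections import defaultdict

# Hypothetical Master Prompt List rows: (intent layer, prompt, did the brand appear?)
observations = [
    ("discovery", "what is a CRM", True),
    ("discovery", "how does a CRM work", True),
    ("evaluation", "what's the best CRM for startups", True),
    ("evaluation", "what's the best CRM for agencies", False),
    ("comparison", "CompetitorA vs CompetitorB", False),
    ("comparison", "CompetitorA alternatives", False),
]


def coverage_by_layer(rows):
    """Prompt Coverage Rate computed separately for each intent layer."""
    hits: dict[str, int] = defaultdict(int)
    totals: dict[str, int] = defaultdict(int)
    for layer, _prompt, appeared in rows:
        totals[layer] += 1
        hits[layer] += int(appeared)
    return {layer: 100 * hits[layer] / totals[layer] for layer in totals}


print(coverage_by_layer(observations))
# {'discovery': 100.0, 'evaluation': 50.0, 'comparison': 0.0}
```

A profile like this one, strong at discovery and absent at comparison, is the “dominates awareness, disappears at decision” pattern, and it calls for a different fix than uniformly low coverage.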

Position: Mentioned Isn’t the Same as Recommended

Being in the conversation is the floor. Being the first name the AI says is the ceiling. The gap between those two points determines how much commercial value your AI visibility actually generates.

Research on LLM behavior confirms a consistent primacy bias: items mentioned first in a response are more likely to be selected or remembered by the user. More directly, studies show that positively framed LLM summaries increase purchase likelihood by 32% compared to the original review text. When the AI introduces your brand as a “widely recommended option” in the opening line, that framing follows the reader into their evaluation process.

The Citation Placement Index (CPI) turns placement into a score you can average across every response that mentions your brand:

| Mention Depth | Score | Strategic Meaning |
|---|---|---|
| Primary Recommendation | 10 | The AI treats your brand as the default choice |
| Top 3 Placement | 7 | You’re in the consideration set |
| Lower List Placement | 4 | Recognized, not prioritized |
| Passing Mention | 2 | You exist, but lack topical authority |

A brand averaging 4.2 on this scale has a meaningfully different market position than one averaging 8.1, even if both appear in 65% of responses.
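A minimal sketch of averaging CPI scores. It assumes each response that mentions the brand has been labeled with one of the four placement tiers from the table (the short label strings are our own), and it excludes responses where the brand is absent, since presence is already captured by SoV. The placement data is invented.

```python
# CPI scores from the table; the short keys are hypothetical labels.
CPI_SCORES = {
    "primary": 10,  # Primary Recommendation
    "top3": 7,      # Top 3 Placement
    "lower": 4,     # Lower List Placement
    "passing": 2,   # Passing Mention
}


def average_cpi(placements: list[str]) -> float:
    """Average CPI over responses that mention the brand."""
    if not placements:
        return 0.0
    return sum(CPI_SCORES[p] for p in placements) / len(placements)


# Invented placements for two brands with the same number of mentions.
brand_x = ["lower", "lower", "top3", "passing", "lower"]
brand_y = ["primary", "primary", "top3", "primary", "top3"]
print(average_cpi(brand_x), average_cpi(brand_y))  # 4.2 8.8
```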

First Mention vs. Top Recommendation: A Real Distinction

Here’s a scenario worth sitting with. Brand A appears in 70% of AI responses, but 80% of those mentions come after a competitor is introduced. Brand B appears in only 45% of responses but holds the first-mention position in 75% of those cases.

Brand B’s Position score is stronger. And depending on the query intent, Brand B is likely generating more qualified consideration from that smaller slice of visibility.

That’s the core insight: Position-Weighted SoV, where each appearance is scored by where in the response it occurs, is a more reliable predictor of downstream conversion than raw mention rate. Standard measurement & monitoring setups that only track presence miss this entirely.
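Here is the Brand A vs. Brand B scenario worked through as a hypothetical sketch. It assumes the 20% of Brand A mentions that don’t follow a competitor are first mentions, and it borrows weights from the CPI table: 10 for a first mention (Primary Recommendation) and 4 for any later mention (Lower List Placement). Those weights are our assumption, not a standard.

```python
def expected_score_per_prompt(appear_rate: float, first_share: float,
                              first_weight: float, later_weight: float) -> float:
    """Expected position-weighted score per prompt tested.

    appear_rate: share of prompts where the brand appears at all.
    first_share: share of those appearances that are first mentions.
    The weights are hypothetical and should be chosen deliberately.
    """
    per_appearance = first_share * first_weight + (1 - first_share) * later_weight
    return appear_rate * per_appearance


brand_a = expected_score_per_prompt(0.70, 0.20, 10, 4)
brand_b = expected_score_per_prompt(0.45, 0.75, 10, 4)
print(round(brand_a, 3), round(brand_b, 3))  # 3.64 3.825
```

Under these weights Brand B comes out ahead despite appearing far less often. With a flatter weighting such as 10 vs. 7, Brand A’s extra visibility wins the lead back (5.32 vs. 4.1625), so the weights deserve as much scrutiny as the prompt set.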

Sentiment: What AI Actually Says About Your Brand

High Share of Voice with weak Sentiment is worse than low visibility. If an AI engine consistently describes your product as “a budget option with known reliability issues,” every mention is doing negative work.

AI Brand Sentiment tracks the qualitative framing of how your brand is characterized in AI responses. It’s distinct from social listening, which tracks human-generated opinion. AI sentiment reflects the “view” the model has constructed from its training data (reviews, forum posts, news coverage, structured data on your site, and third-party citations) and, for engines that search the web before answering, from the pages they retrieve at query time.

That characterization typically falls into five categories:

Endorsement: The AI actively recommends the brand. “Widely recommended,” “top choice for.” High entity authority required.

Neutral Mention: Factual description without comparative framing. You’re known, not differentiated.

Cautious Mention: Inclusion with hedging. “Worth considering, but…” Often caused by conflicting data in the training corpus.

Negative Mention: Unfavorable framing or warnings. Requires active remediation of source content.

Hallucination: Incorrect facts, fabricated features, wrong pricing. Highest reputational risk because users treat AI output as objective.

The Net Sentiment Score (NSS) converts these qualitative signals into a trackable number:

NSS = [(Endorsements + Neutrals) – (Negatives + Hallucinations)] / Total Mentions × 100

An NSS above +60 indicates strong competitive positioning. Below -20 means your digital footprint is actively working against your sales process.
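A minimal sketch of the NSS formula, assuming each mention has already been labeled with one of the five categories (by a human reviewer or a classifier; labeling itself is out of scope). Note that Cautious Mentions count toward the total but sit on neither side of the subtraction. The label strings and sample data are hypothetical.

```python
from collections import Counter

CATEGORIES = {"endorsement", "neutral", "cautious", "negative", "hallucination"}


def net_sentiment_score(labels: list[str]) -> float:
    """NSS = [(endorsements + neutrals) - (negatives + hallucinations)] / total * 100."""
    unknown = set(labels) - CATEGORIES
    if unknown:
        raise ValueError(f"unknown sentiment labels: {sorted(unknown)}")
    if not labels:
        return 0.0
    c = Counter(labels)
    favorable = c["endorsement"] + c["neutral"]
    unfavorable = c["negative"] + c["hallucination"]
    return 100 * (favorable - unfavorable) / len(labels)


def interpret(nss: float) -> str:
    """Apply the two thresholds from the text; the middle-band label is our own."""
    if nss > 60:
        return "strong competitive positioning"
    if nss < -20:
        return "footprint working against sales"
    return "between thresholds"


# Invented sample: 10 labeled mentions.
labels = ["endorsement"] * 6 + ["neutral"] * 2 + ["cautious", "negative"]
score = net_sentiment_score(labels)
print(score, interpret(score))  # 70.0 strong competitive positioning
```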

Sentiment Drifts. That’s the Part Most Teams Miss.

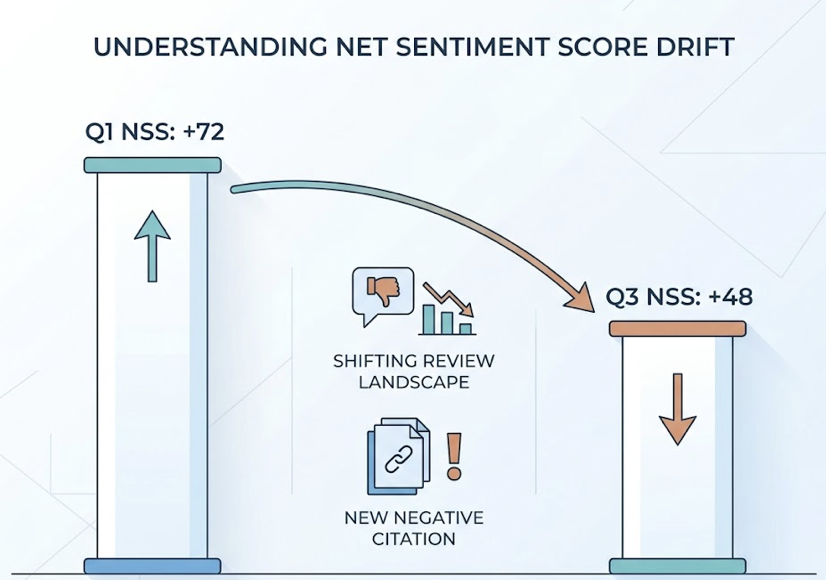

AI models don’t form a fixed opinion and hold it. Citation Drift, the rate at which the sources AI platforms cite for the same prompt change over time, currently runs between 40% and 60% per month. That means the content shaping your Sentiment Score is rotating at a high rate.
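The text doesn’t give an exact formula for Citation Drift, so here is one possible operationalization as a sketch: for a single prompt, the percentage of previously cited sources that are no longer cited in a later snapshot. The snapshot URLs use the reserved `.example` domain and are invented.

```python
def citation_drift(previous: set[str], current: set[str]) -> float:
    """Share (in %) of previously cited sources that are no longer cited.

    One hypothetical definition; other teams measure set overlap differently.
    """
    if not previous:
        return 0.0
    dropped = previous - current
    return 100 * len(dropped) / len(previous)


previous_snapshot = {"reviews.example/crm", "forum.example/thread-1",
                     "news.example/story", "vendor.example/faq"}
current_snapshot = {"reviews.example/crm", "vendor.example/faq",
                    "blog.example/post", "forum.example/thread-9"}
print(citation_drift(previous_snapshot, current_snapshot))  # 50.0
```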

A brand that earns an NSS of +72 in Q1 can drift to +48 by Q3 without any intentional action, simply because the review landscape shifted or a new piece of negative content got picked up as a citation source.

This is why Sentiment can’t be a quarterly audit. It needs continuous tracking within your measurement and monitoring stack, not a one-time health check.
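A sketch of what ongoing monitoring can look like, using a hypothetical alert rule: flag any week where NSS sits more than 10 points below its best score so far. The threshold and the weekly history (which traces a +72 to +48 slide) are invented for illustration.

```python
def drift_alerts(weekly_nss: list[float], max_drop: float = 10.0) -> list[int]:
    """Indices of weeks where NSS sits more than `max_drop` points below its running peak."""
    alerts = []
    peak = float("-inf")
    for week, score in enumerate(weekly_nss):
        peak = max(peak, score)
        if peak - score > max_drop:
            alerts.append(week)
    return alerts


# Hypothetical weekly NSS readings sliding from +72 toward +48.
history = [72, 71, 69, 66, 61, 58, 52, 48]
print(drift_alerts(history))  # [4, 5, 6, 7]
```

A quarterly check would only compare the first and last readings. The weekly rule flags the slide at week 4, while the score is still just above the +60 line and the cause is easier to trace.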

Reading All Three Together: The AEO Diagnostic Framework

Each metric isolates one dimension of AI visibility. The real diagnostic power comes from how they interact.

A brand with high SoV, low Position, and neutral Sentiment is visible but not preferred. The fix isn’t content volume; it’s building the kind of cross-domain consensus (consistent mentions across authoritative sources such as Wikipedia, major publications, and niche industry blogs) that signals to the model which brand to surface first.

A brand with high Position and Endorsement-level Sentiment but low SoV is a niche authority. It’s winning specific query clusters hard, but hasn’t expanded its prompt coverage to adjacent topics where buyers are also searching.

| Scenario | SoV | Position | Sentiment | Diagnosis | Next Move |
|---|---|---|---|---|---|
| Visible But Not Trusted | High | Low | Negative | High exposure, poor framing | Remediate source content driving negative signal |
| Category Leader | High | High | Positive | Strong across all dimensions | Expand into adjacent topic clusters |
| Reputation Crisis | High | Mid | Negative | Mentions are doing damage | Identify and correct citation sources |
| Niche Authority | Low | High | Positive | Winning specific clusters | Increase Brand Signal Density via PR |
| New Entrant | Low | Low | Neutral | Starting position | Build entity authority through structured data and FAQs |

The diagnostic matrix lets you skip the generic “improve AI visibility” advice and go directly to the specific lever that changes your situation.
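The matrix can be encoded as a lookup table. This sketch makes several assumptions that are ours, not the text’s: SoV is measured as Prompt Coverage Rate, Position as average CPI, the low/high cut-offs (30%/50% coverage, 5/7 CPI) are placeholders to tune, and the NSS band between -20 and +60 is treated as “neutral.” The extra “Visible But Not Preferred” row comes from the prose above the table.

```python
# Diagnostic matrix keyed by (SoV, Position, Sentiment) level.
MATRIX = {
    ("high", "low", "negative"): ("Visible But Not Trusted", "Remediate source content driving negative signal"),
    ("high", "high", "positive"): ("Category Leader", "Expand into adjacent topic clusters"),
    ("high", "mid", "negative"): ("Reputation Crisis", "Identify and correct citation sources"),
    ("low", "high", "positive"): ("Niche Authority", "Increase Brand Signal Density via PR"),
    ("low", "low", "neutral"): ("New Entrant", "Build entity authority through structured data and FAQs"),
    # From the prose rather than the table.
    ("high", "low", "neutral"): ("Visible But Not Preferred", "Build cross-domain consensus"),
}


def bucket(value: float, low_below: float, high_from: float) -> str:
    """Map a metric onto low / mid / high using caller-supplied cut-offs."""
    if value < low_below:
        return "low"
    if value >= high_from:
        return "high"
    return "mid"


def sentiment_level(nss: float) -> str:
    """Above +60 positive, below -20 negative, otherwise neutral (assumed)."""
    if nss > 60:
        return "positive"
    if nss < -20:
        return "negative"
    return "neutral"


def diagnose(coverage_rate: float, avg_cpi: float, nss: float) -> tuple[str, str]:
    # Hypothetical cut-offs: coverage <30% low, >=50% high; CPI <5 low, >=7 high.
    key = (bucket(coverage_rate, 30, 50), bucket(avg_cpi, 5, 7), sentiment_level(nss))
    return MATRIX.get(key, ("Unmapped", "Inspect the three metrics individually"))


print(diagnose(coverage_rate=70, avg_cpi=4.2, nss=-35))
# ('Visible But Not Trusted', 'Remediate source content driving negative signal')
```

Returning an explicit “Unmapped” result keeps combinations the matrix doesn’t cover from being silently forced into the nearest scenario.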

How to Start Tracking Without Building from Scratch

Most teams don’t need a six-figure enterprise setup to get started. The implementation follows a natural progression from manual audit to automated monitoring.

Days 0-30: Manual baseline. Query a set of 25-50 prompts weekly across ChatGPT, Gemini, and Perplexity. For each response, record whether your brand appears (SoV), where it appears (Position), and what the framing says (Sentiment). This establishes your baseline before you optimize anything.
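A baseline is easier to compare week over week if every observation lands in the same structure. Here is a minimal sketch of such a log written as CSV with the standard library; the field names, week labels, and placement/sentiment label strings are hypothetical choices, not a required schema.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class Observation:
    week: str       # any consistent label, e.g. "week-01"
    platform: str   # "ChatGPT", "Gemini" or "Perplexity"
    prompt: str
    appears: bool   # feeds Share of Voice
    placement: str  # feeds Position: "primary", "top3", "lower", "passing", or "" if absent
    sentiment: str  # feeds Sentiment: "endorsement", "neutral", "cautious", "negative", "hallucination", or ""


def write_log(rows: list[Observation], stream) -> None:
    """Write observations as CSV with a header row."""
    writer = csv.DictWriter(stream, fieldnames=[f.name for f in fields(Observation)])
    writer.writeheader()
    for row in rows:
        writer.writerow(asdict(row))


rows = [
    Observation("week-01", "ChatGPT", "best CRM for startups", True, "top3", "endorsement"),
    Observation("week-01", "Perplexity", "best CRM for startups", False, "", ""),
]
buf = io.StringIO()
write_log(rows, buf)
print(buf.getvalue())
```

When writing to a real file instead of `io.StringIO`, open it with `newline=""` as the `csv` module documentation recommends.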

Days 31-60: Technical signals. Deploy schema.org markup for Product, Organization, and FAQPage, and mark up authors as Person entities through the author property. Restructure key content pages so the direct answer appears in the first 150 words. Research shows this answer-first formatting increases citation rates by 40%.
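As a sketch of both steps, the snippet below builds schema.org FAQPage JSON-LD (the `@type`, `mainEntity`, `Question`, `acceptedAnswer`, and `Answer` structure is standard schema.org vocabulary) and runs a rough check that an answer appears entirely within a page’s first 150 words. The function names and sample text are hypothetical, and the word count is a simple whitespace split, not how any AI engine tokenizes a page.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build FAQPage JSON-LD for a <script type="application/ld+json"> tag."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


def answer_within_first_words(page_text: str, answer: str, limit: int = 150) -> bool:
    """Rough check that the whole answer sits inside the first `limit` words."""
    opening = " ".join(page_text.split()[:limit])
    return " ".join(answer.split()) in opening


print(faq_jsonld([("What is AI Share of Voice?",
                   "The share of tracked-brand mentions in AI answers that name your brand.")]))
```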

Days 61-90: Automated monitoring. Manual tracking at 25 prompts is manageable. At 100+ prompts across five AI platforms, it breaks down fast.

Topify tracks all three AEO visibility metrics, Share of Voice, Position, and Sentiment, in a single dashboard, across ChatGPT, Gemini, Perplexity, DeepSeek, and other major platforms. The Basic plan ($99/mo) covers 100 prompts and 9,000 AI answer analyses per month across 4 projects. The Pro plan ($199/mo) expands to 250 prompts and 22,500 analyses for teams managing multiple brands or competitive categories.

The measurement value isn’t just in the data. It’s in the speed of detection. When Citation Drift is running at 40-60% monthly, a team doing manual audits every two weeks is always looking at stale data. Automated monitoring catches Sentiment shifts and Position changes in near-real time, which is when they’re still correctable.
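To put rough numbers on “stale,” here is a simple model. It assumes Citation Drift compounds at a constant daily rate, which is a simplification (real turnover is likely lumpier). Under that assumption, the share of a prompt’s cited sources that has changed after a given number of days is 1 − (1 − d)^(days / 30) for a monthly drift d.

```python
def expected_turnover(monthly_drift: float, days: float, month_days: float = 30.0) -> float:
    """Fraction of cited sources expected to have changed after `days`,
    assuming a constant daily rate that compounds to `monthly_drift`."""
    retained_per_month = 1.0 - monthly_drift
    return 1.0 - retained_per_month ** (days / month_days)


for drift in (0.40, 0.60):
    for days in (7, 14):
        print(f"drift {drift:.0%}/month, {days} days since audit: "
              f"{expected_turnover(drift, days):.0%} of sources changed")
```

Under this model, a two-week audit gap means roughly 21% to 35% of the cited sources have already changed by the time the next check runs.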

Conclusion

The three AEO visibility metrics each solve a different blind spot. Share of Voice tells you whether you’re in the conversation at all. Position tells you where you land when you are. Sentiment tells you what the AI says about you when it gets there.

None of them are sufficient on their own. A brand can have strong SoV but weak Position and lose the recommendation to a less visible competitor. A brand can hold first-mention Position but carry negative Sentiment that cancels out the advantage. The diagnostic value only appears when you read all three together.

AI referral traffic currently accounts for roughly 1% of total web visits. But it converts at 4.4x to 23x the rate of traditional organic traffic, because users arrive having already been “recommended” by the model. That conversion premium is the business case for taking measurement & monitoring seriously, and for building the baseline now before the channel grows more competitive.
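A quick piece of arithmetic shows why a 1% traffic share can still matter. The sketch assumes every non-AI visit converts at the traditional organic baseline (a simplification, since other channels convert differently) and plugs in the 4.4x and 23x multipliers quoted above.

```python
def ai_share_of_conversions(traffic_share: float, multiplier: float) -> float:
    """Share of conversions from AI referrals, assuming all other visits
    convert at the organic baseline rate (normalized to 1.0)."""
    ai = traffic_share * multiplier
    other = (1 - traffic_share) * 1.0
    return ai / (ai + other)


for m in (4.4, 23):
    print(f"{m}x -> {ai_share_of_conversions(0.01, m):.1%} of conversions")
```

Under that assumption, AI referrals would account for roughly 4.3% to 18.9% of conversions while delivering about 1% of visits.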

Start with 25 prompts. Track all three dimensions. Then build from there.

FAQ

How often should I check AEO visibility metrics?

Weekly is the practical minimum given Citation Drift rates of 40-60% per month. For brands in fast-moving categories or those actively running remediation campaigns, checking twice a week gives you faster feedback loops.

Can I track AEO metrics without a paid tool?

Yes, at small scale. A manual audit of 25-50 prompts across two or three AI platforms is viable for establishing a baseline. The limitation is volume and frequency: manual tracking doesn’t scale past ~50 prompts without significant time cost, and it can’t catch rapid Sentiment shifts between audit cycles.

What’s a realistic Share of Voice benchmark for AEO?

Benchmarks vary significantly by industry. Healthcare and Financial Services see AI Overviews in nearly half of queries, making those categories highly competitive for AI SoV. Less transactional categories like Real Estate see much lower AI answer rates. In most B2B categories, a Prompt Coverage Rate above 40% with consistent top-3 Position represents a strong starting position.