You spent two years shaping your brand’s positioning. Premium. Enterprise-grade. Category leader. Then someone on your team types your brand name into ChatGPT and finds it described as “a budget-friendly option for smaller teams.” No one approved that description. No one updated the AI. It just… is.

That’s the gap most marketing teams still haven’t started measuring.

Generative Engines Don’t Search. They Answer.

Google shows you ten links and lets you decide. A generative engine reads the question, synthesizes information from across the web, and hands you a single paragraph with a verdict already baked in.

That’s not a subtle difference. It rewrites how brands get discovered.

Platforms like ChatGPT, Google Gemini, Perplexity, and Microsoft Copilot don’t rank content. They select it, compress it, and present it as a confident recommendation — sometimes with citations, sometimes without. By early 2026, ChatGPT had reached 900 million weekly active users, doubling in a single year. Google’s AI Overviews now appear in more than 50% of all search queries. The question is no longer whether your audience uses these platforms. It’s whether your brand shows up when they do.

What Is a Generative Engine, Exactly?

A generative engine is an AI system built on large language models (LLMs) that synthesizes information into direct, conversational answers rather than returning a list of links. Think of it less as a search engine and more as a research assistant that’s already done the reading.

Here’s the mechanism: the engine receives a user query, retrieves relevant documents in real time using a technique called Retrieval-Augmented Generation (RAG), then uses an LLM to weave those fragments into a coherent response. The output reads like a recommendation from a knowledgeable person, not a results page. It’s why users trust it — and why brands can’t afford to ignore what it says.
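To make the retrieve-then-generate flow concrete, here is a toy sketch in Python. Everything in it is an assumption made for illustration: the three documents, the word-overlap scoring, and the `generate_answer` stub that stands in for an LLM call. Real engines use far more sophisticated retrieval and ranking, so treat this as a picture of the shape of the pipeline, not of any platform's actual implementation.

```python
import re

# Hypothetical mini-corpus standing in for pages an engine might retrieve.
DOCUMENTS = {
    "https://example.com/review-2022": "Acme is a budget tool for small teams",
    "https://example.com/acme-enterprise": "Acme offers enterprise security and audit logs",
    "https://example.com/gardening": "Tomatoes need full sun and regular watering",
}


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, documents: dict[str, str], top_k: int = 2) -> list[str]:
    """Return the URLs of the top_k documents sharing the most words with the query."""
    query_words = tokenize(query)
    scored = [
        (len(query_words & tokenize(text)), url)
        for url, text in documents.items()
    ]
    # Keep only documents with at least one shared word, best score first.
    ranked = sorted((s for s in scored if s[0] > 0), key=lambda s: (-s[0], s[1]))
    return [url for _, url in ranked[:top_k]]


def generate_answer(query: str, sources: list[str], documents: dict[str, str]) -> str:
    """Stand-in for the LLM step: it only stitches the retrieved text together."""
    context = " ".join(documents[url] for url in sources)
    return f"Q: {query}\nBased on {len(sources)} source(s): {context}"


query = "Is Acme a good enterprise tool"
sources = retrieve(query, DOCUMENTS)
print(generate_answer(query, sources, DOCUMENTS))
```

In this toy run the older "budget" review outranks the enterprise page simply because it shares more words with the query. That is a crude version of how a stale source can end up shaping the answer.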

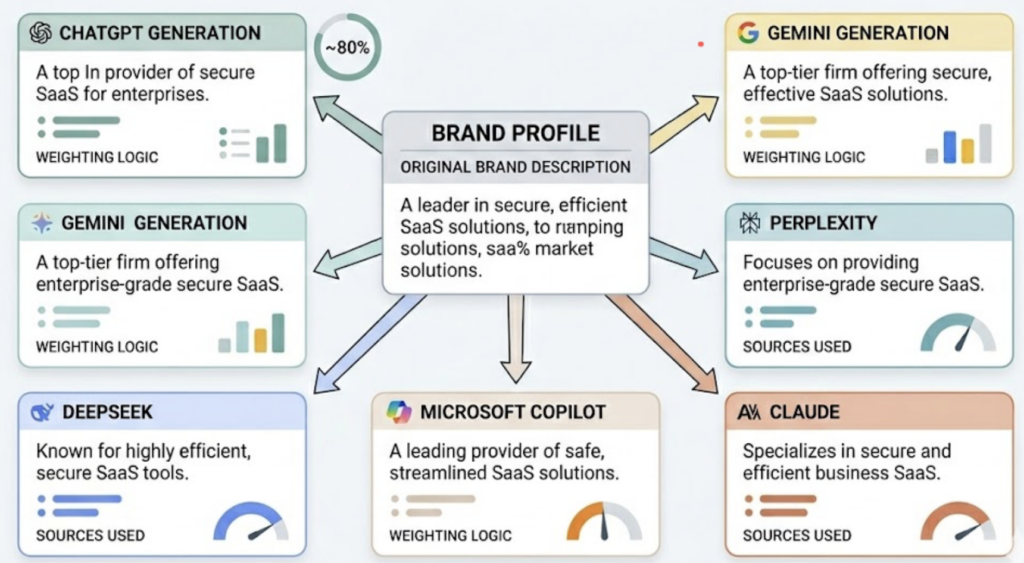

The major generative engines today include ChatGPT (which holds roughly 80% of the AI search market), Google Gemini, Perplexity, DeepSeek, Microsoft Copilot, and Claude. Each platform pulls from different sources, applies different weighting logic, and generates subtly different answers to the same question. Your brand’s description can vary significantly depending on which platform your audience happens to use.

Here’s what makes this especially hard to catch. Research suggests around 73% of AI citations are ghost citations — links embedded in a response without the brand name being explicitly mentioned. Your content might be feeding AI answers right now without your brand getting any visible credit for it.
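A ghost citation, as described above, can be flagged with a simple check: the answer links to your domain but never names your brand. The sketch below is one possible way to express that in Python. The function name, the `acme.example` domain, and the sample answers are all hypothetical, and plain substring matching is a deliberate simplification.

```python
from urllib.parse import urlparse


def is_ghost_citation(
    answer_text: str, cited_urls: list[str], brand: str, brand_domain: str
) -> bool:
    """True when the answer cites the brand's domain but never names the brand."""
    cites_brand = any(
        (urlparse(url).hostname or "").removeprefix("www.") == brand_domain
        for url in cited_urls
    )
    names_brand = brand.lower() in answer_text.lower()
    return cites_brand and not names_brand


# Hypothetical example: the brand's page is cited, but the brand is not named.
ghost = is_ghost_citation(
    "The best tools offer audit logs and SSO.",
    ["https://www.acme.example/security"],
    brand="Acme",
    brand_domain="acme.example",
)

# Same citation, but this time the answer names the brand.
credited = is_ghost_citation(
    "Acme offers audit logs and SSO.",
    ["https://www.acme.example/security"],
    brand="Acme",
    brand_domain="acme.example",
)
print(ghost, credited)
```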

Why Google Analytics Can’t Tell You What Generative Engines Are Saying About You

Traditional SEO tools measure clicks, rankings, and traffic. Generative engines don’t produce clicks in the same way.

When a user asks Perplexity “what’s the best project management tool for remote teams,” the AI synthesizes an answer and the user acts on it — often without ever visiting your website. Your analytics shows nothing unusual. Your keyword rankings haven’t moved. But your brand wasn’t in the answer, and a competitor was.

This is the core of what researchers call the “great decoupling.” Overall AI search volume keeps growing, but website traffic is falling. Zero-click search rates in the US hit 58.5% in 2024 and are still climbing. When an AI summary appears in results, click-through rates drop from around 15% to roughly 8%. Forbes saw year-over-year traffic fall 50% in July 2025. HubSpot lost an estimated 70–80% of its organic traffic between 2024 and 2025.
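The click-through figures are easier to feel as a relative change. Going from 15% to 8% is a seven-point drop, which works out to roughly a 47% relative decline. The short calculation below shows the arithmetic; the 10,000-impression figure is a made-up volume chosen only to turn the rates into click counts.

```python
def relative_drop(before: float, after: float) -> float:
    """Fractional decline from `before` to `after`."""
    return (before - after) / before


ctr_without_summary = 0.15  # approximate rate quoted above
ctr_with_summary = 0.08     # approximate rate quoted above
impressions = 10_000        # hypothetical volume, for illustration only

drop = relative_drop(ctr_without_summary, ctr_with_summary)
clicks_lost = impressions * (ctr_without_summary - ctr_with_summary)

print(f"Relative CTR drop: {drop:.0%}")
print(f"Clicks lost per {impressions:,} impressions: {clicks_lost:.0f}")
```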

The damage doesn’t show up in your existing reports.

That’s exactly why you need a dedicated AI answer monitoring service.

What an AI Answer Monitoring Service Actually Tracks

An AI answer monitoring service doesn’t replace your SEO stack. It fills the measurement gap that your existing tools can’t reach.

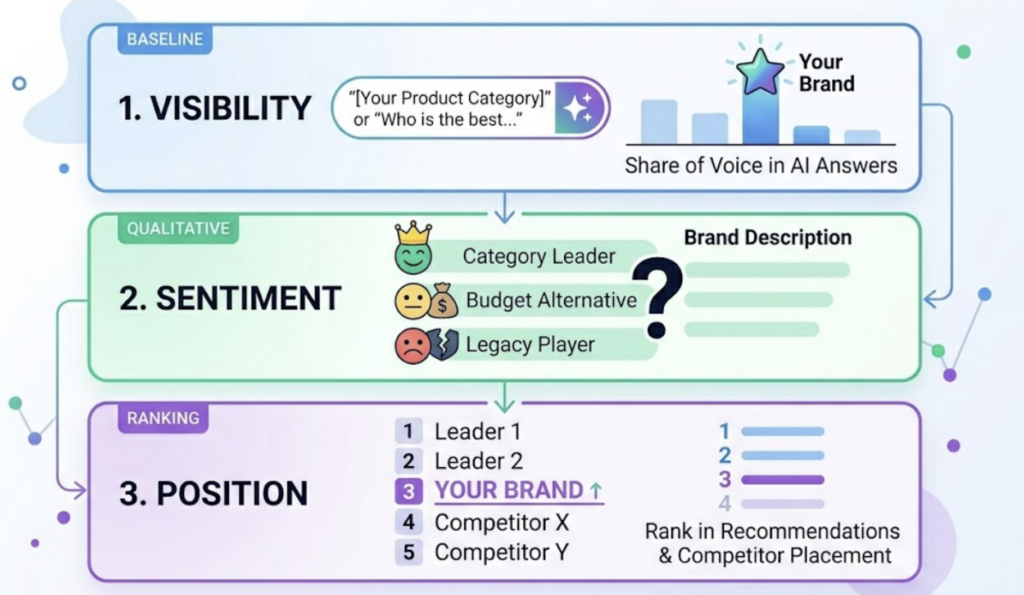

Visibility is the baseline: how often does your brand appear in AI-generated answers for the prompts your potential customers are actually using? It’s the closest equivalent to “share of shelf,” but inside a generative engine rather than a retail aisle. Sentiment goes a layer deeper — not just whether you’re mentioned, but how you’re described. Are you framed as a category leader, a budget alternative, or an outdated legacy player? Position tracks where your brand appears in ranked AI recommendations and whether competitors are consistently listed above you.

Source Analysis is where things get actionable. It identifies which specific URLs and domains the AI is citing when it describes your brand, giving you a direct line of sight into why the AI says what it says. Competitor Monitoring runs the same analysis across your competitive set — so you can see not just where you stand, but who’s outranking you and what content is driving their AI visibility.
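As a rough illustration of how Visibility, Position, and Source Analysis could be computed from a set of captured answers, here is a minimal Python sketch. The record layout, the brand names, and the URLs are assumptions invented for the example. It is not a description of how any particular monitoring product calculates these numbers.

```python
from collections import Counter
from urllib.parse import urlparse

# Hypothetical captured answers: brands in the order each answer listed them,
# plus the URLs that answer cited.
ANSWERS = [
    {"brands": ["Rival", "Acme"], "cited_urls": ["https://reviews.example/top-tools"]},
    {"brands": ["Rival"], "cited_urls": ["https://rival.example/pricing"]},
    {"brands": ["Acme", "Rival"], "cited_urls": ["https://reviews.example/acme-vs-rival"]},
    {"brands": [], "cited_urls": []},
]


def visibility(answers, brand):
    """Share of answers that mention the brand at all."""
    hits = sum(1 for a in answers if brand in a["brands"])
    return hits / len(answers)


def average_position(answers, brand):
    """Mean 1-based list position across answers that mention the brand, or None."""
    positions = [a["brands"].index(brand) + 1 for a in answers if brand in a["brands"]]
    return sum(positions) / len(positions) if positions else None


def cited_domains(answers):
    """Count how often each domain is cited across all answers."""
    return Counter(urlparse(url).hostname for a in answers for url in a["cited_urls"])


for brand in ("Acme", "Rival"):
    print(brand, visibility(ANSWERS, brand), average_position(ANSWERS, brand))
print(cited_domains(ANSWERS).most_common())
```

Running the same functions for each competitor gives a like-for-like comparison, which is the idea behind Competitor Monitoring.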

Topify measures seven dimensions (Visibility, Sentiment, Position, Volume, Mentions, Intent, and CVR) across ChatGPT, Gemini, Perplexity, DeepSeek, Doubao, Qwen, and other major AI platforms. The Basic plan starts at $99/month, covering 9,000 AI answer analyses per month across 100 tracked prompts.

That coverage breadth matters more than it might seem. Perplexity alone has an audience where 30% of users hold senior leadership roles and 65% are high-income professionals. That’s not a secondary channel to skip.

The Gap Between What AI Says and What Your Brand Actually Means

Getting mentioned isn’t enough. The description matters as much as the presence.

Generative engines synthesize answers from whatever sources they can find — including outdated blog posts, old forum threads, and third-party comparison sites you’ve never touched. The result is what’s been called a “narrative gap”: a growing disconnect between what your brand stands for and what AI systems have concluded about it.

Army Surplus World discovered ChatGPT was describing their products as “outdated technology.” After restructuring their site content to be explicit and direct about what they actually sold — and syncing entity data across all platforms — they saw a 429% increase in AI referral traffic. The fix wasn’t paid advertising or link building. It was source-level clarity.

Simple positive/negative sentiment scoring doesn’t catch this kind of problem. What’s needed is attribute-level analysis: is the AI getting your pricing perception wrong? Mischaracterizing your target customer? Attributing feature limitations from a 2022 review that no longer applies? Each of those is a different problem with a different fix — and none of them are visible in a dashboard that only shows you “overall sentiment: positive.”
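One way to picture attribute-level analysis is as a comparison between the labels an AI answer implies and the positioning you intend. The Python sketch below does this with hand-written phrase lists. The attributes, phrases, and intended values are all hypothetical, and a real system would need something more robust than substring matching.

```python
# Hypothetical phrase lists mapping wording in an answer to an attribute label.
ATTRIBUTE_PHRASES = {
    "pricing": {
        "budget": ["budget-friendly", "cheap", "low-cost"],
        "premium": ["premium", "high-end"],
    },
    "audience": {
        "small_teams": ["smaller teams", "small teams", "startups"],
        "enterprise": ["enterprise", "large organizations"],
    },
}

# Hypothetical intended positioning for the brand.
INTENDED = {"pricing": "premium", "audience": "enterprise"}


def detect_attributes(answer: str) -> dict[str, set[str]]:
    """Return, per attribute, the labels whose phrases appear in the answer."""
    text = answer.lower()
    return {
        attribute: {
            label
            for label, phrases in labels.items()
            if any(phrase in text for phrase in phrases)
        }
        for attribute, labels in ATTRIBUTE_PHRASES.items()
    }


def mismatches(answer: str, intended: dict[str, str]) -> dict[str, list[str]]:
    """Attributes where the answer implies something, but not the intended label."""
    detected = detect_attributes(answer)
    return {
        attribute: sorted(labels)
        for attribute, labels in detected.items()
        if labels and intended[attribute] not in labels
    }


result = mismatches("A budget-friendly option for smaller teams.", INTENDED)
print(result)
```

The sample sentence would likely score as positive in a simple sentiment tool, yet it is flagged here on both pricing and audience.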

How to Start Monitoring Your Brand Across Generative Engine AI Platforms

The starting point isn’t your homepage. It’s the prompts your potential customers are actually typing.

Step one: identify the high-value queries where your brand should appear. These are the questions users ask when they’re evaluating options in your category: not your branded terms, but the category-level and comparison-level prompts where AI recommendations shape first impressions.

Step two: run a baseline across the major generative engine AI platforms and record exactly how your brand is described, what position it holds, and which competitors appear in the same answers.

Step three: map your citations. Which URLs is the AI actually citing when it talks about your brand? Are those pages current and representative of your positioning?

Step four: set a monitoring cadence. AI answers shift as platforms update their models and citation sources, which means a monthly audit isn’t enough for competitive categories.
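The baseline and cadence steps above come down to recording a snapshot and comparing it with the next one. Here is a minimal Python sketch of that idea. The `Observation` schema, the platform and prompt strings, and the sample descriptions are assumptions for illustration, and how the answers get collected in the first place is left out.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Observation:
    """One recorded answer for one prompt on one platform (hypothetical schema)."""
    description: str
    position: Optional[int]  # 1-based rank in the answer, None if the brand is absent
    competitors: tuple[str, ...]


def diff_snapshots(baseline: dict, current: dict) -> list[str]:
    """List readable changes between two snapshots keyed by (platform, prompt)."""
    changes = []
    for key in sorted(baseline.keys() & current.keys()):
        old, new = baseline[key], current[key]
        if old.description != new.description:
            changes.append(f"{key}: description changed")
        if old.position != new.position:
            changes.append(f"{key}: position {old.position} -> {new.position}")
        added = set(new.competitors) - set(old.competitors)
        if added:
            changes.append(f"{key}: new competitors {sorted(added)}")
    return changes


key = ("chatgpt", "best project management tool for remote teams")
baseline = {key: Observation("premium enterprise platform", 1, ("Rival",))}
current = {key: Observation("budget-friendly option", 2, ("Rival", "Newcomer"))}

changes = diff_snapshots(baseline, current)
for change in changes:
    print(change)
```

Running a comparison like this on a schedule is one simple way to turn a one-off audit into ongoing monitoring.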

Topify automates all four steps. Its High-Value Prompt Discovery continuously surfaces the prompts that matter most in your category. Visibility Tracking gives you a real-time baseline across platforms. Competitor Monitoring flags when a rival’s AI presence shifts. Source Analysis maps the exact URLs feeding the AI’s perception of your brand — so you know precisely where to focus content updates rather than guessing.

You can get started with a 30-day trial on the Basic plan, which covers four AI platforms and up to 100 tracked prompts.

Conclusion

Generative engines are already your brand’s most influential reviewers. They synthesize a verdict from thousands of sources, present it to users as a confident recommendation, and most brands have zero visibility into what that verdict actually says.

37% of active AI users now start their digital searches on ChatGPT or Gemini rather than Google — and that share is growing every quarter. An AI answer monitoring service gives you the data layer you need to understand, manage, and improve how generative engines represent your brand. The brands that build that capability now will have a measurable head start. The ones that wait will spend the next two years catching up to a description they never approved.

FAQ

Q: What is a generative engine in simple terms?

A: A generative engine is an AI system — like ChatGPT, Gemini, or Perplexity — that answers questions directly by synthesizing information from multiple sources, rather than returning a list of links. It generates a natural-language response and often names specific brands, products, or services as part of its recommendation.

Q: How is an AI answer monitoring service different from traditional SEO tools?

A: Traditional SEO tools measure keyword rankings, backlinks, and organic traffic. An AI answer monitoring service measures what’s happening inside AI-generated responses — whether your brand is mentioned, how it’s described, what position it holds relative to competitors, and which sources the AI is drawing from. These two things don’t overlap, which is why you need both.

Q: Which generative engines should I prioritize monitoring?

A: Start with ChatGPT (around 80% market share), Google Gemini (deeply integrated with Google Search), and Perplexity (popular with high-income, senior-level professionals). If your audience skews international or technical, DeepSeek and Qwen are also worth including. The right coverage depends on where your specific customers are actually searching.

Q: How often do AI answers change, and how frequently should I monitor?

A: AI platforms update their models and citation sources continuously, which means answers can shift in days, not months. For competitive categories, weekly monitoring is practical. At minimum, set up alerts for significant changes in how your brand is described, and run a full competitive audit at least once a month.