Your keyword rankings are solid. Your domain authority is climbing. But someone on your target buyer’s team just asked ChatGPT, “What’s the best tool for [your category]?” and got a list of five brands. Yours wasn’t one of them.

Traditional analytics can’t show you this. Google Search Console doesn’t track it. GA4 has no channel for it. And yet, AI-driven search now accounts for 30% of total interactions, up from less than 10% in 2023. The gap between what SEO dashboards report and where your buyers are actually discovering brands has never been wider.

That gap is exactly what AI answer tracking is built to close.

What Is AI Answer Tracking (And Why Your Current Analytics Miss It)

AI answer tracking is the practice of systematically monitoring whether, how, and where your brand appears in responses generated by AI platforms: ChatGPT, Perplexity, Gemini, Google AI Overviews, DeepSeek, and others.

It’s different from rank tracking. Rank tracking tells you where your URL sits on a Google SERP. AI answer tracking tells you whether an AI model names your brand when someone asks a question your product should answer, who else it names alongside you, and whether what it says is accurate.

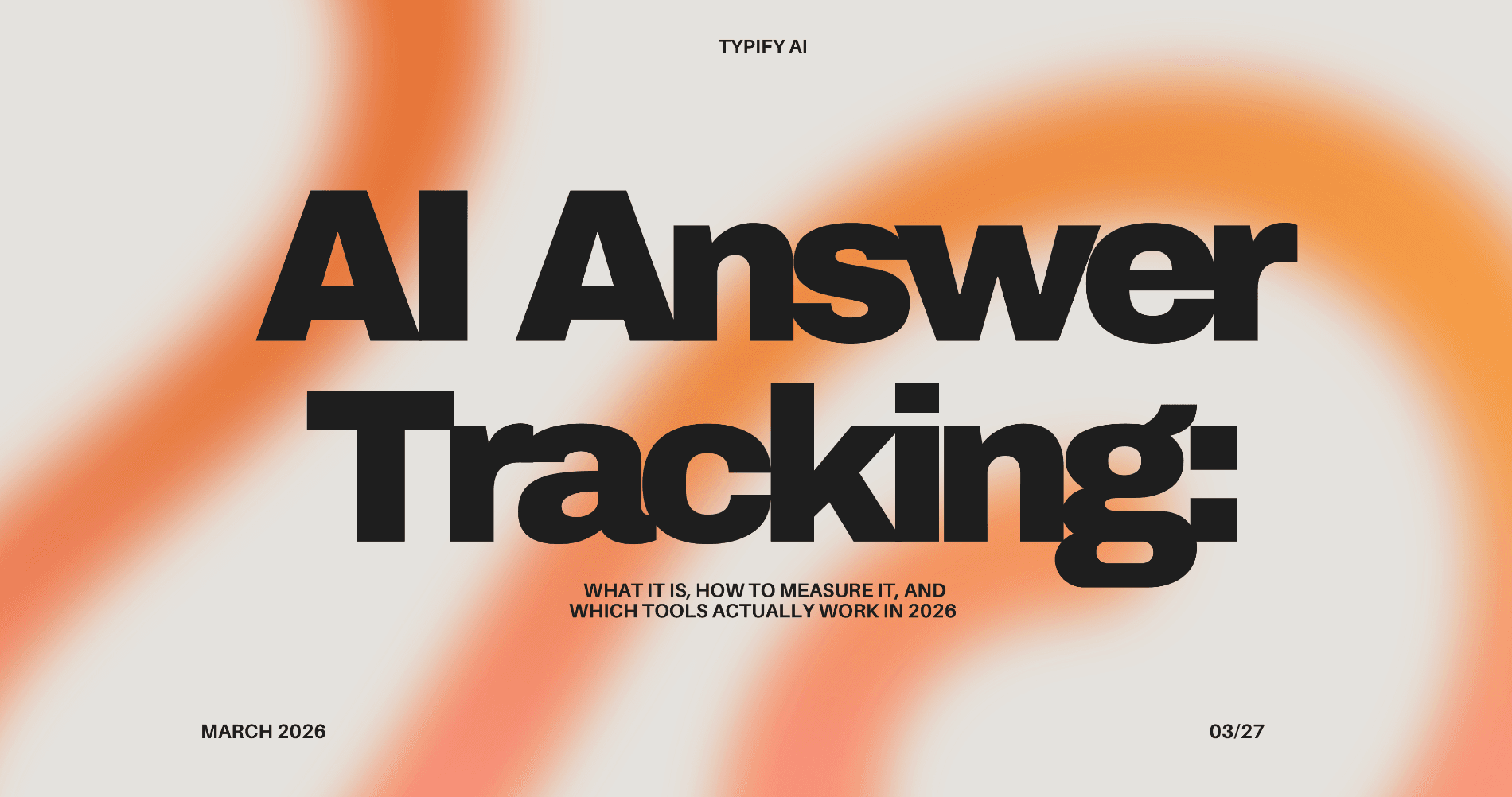

The distinction matters because the mechanisms are entirely different. Traditional search is deterministic: the same query, same location, same device produces a predictable result set. AI answers are probabilistic. There’s less than a 1-in-100 chance that ChatGPT or Google’s AI will surface the identical brand list if asked the same question 100 times. AI Overview content changes 70% of the time for the same query, and 45.5% of citations are replaced whenever a new answer is generated.

You can’t snapshot your way through that kind of volatility. You need ongoing tracking.

The analytics blind spot goes deeper than most teams realize. When a user discovers your brand through an AI assistant and then visits your site directly, that session typically registers as “Direct” traffic in GA4. The AI’s role disappears entirely. You can’t optimize a channel you can’t see.

The 5 Signals That Tell You If AI Is Recommending Your Brand or Someone Else

Measuring AI answer tracking means turning probabilistic, text-based outputs into quantitative data your team can act on. In practice, that comes down to five signals.

Visibility Rate is the percentage of relevant prompts where your brand appears at all. If you’re tracking 100 prompts across your category and your brand shows up in 22 of them, your visibility rate is 22%. Your competitor’s might be 67%. That’s the number your content strategy should be trying to close.

Position tracks where your brand lands within an AI response. Being mentioned fifth in a list of “top tools” carries different conversion weight than being the first recommendation. For generative search optimization, first mention typically functions as the AI equivalent of a first-page ranking.

Sentiment Score captures how the AI describes your brand when it does mention you. High visibility with negative framing is a failure state. An AI calling your enterprise software “a budget option for small teams” will filter out exactly the buyers you’re targeting.

Source Coverage measures how often AI platforms cite your own domain as a reference. This matters because citations drive what researchers call “Dark AI Traffic”: high-intent visitors who arrive at your site pre-convinced, having already consumed an AI answer that named you as a credible source. Brands are 6.5x more likely to be cited through third-party sources like Reddit, G2, and Wikipedia than through their own domains, which tells you where off-site investment pays off in generative search.

Competitor Share of Voice tracks how your visibility stacks up against alternatives across the same prompt set. Without this benchmark, a 22% visibility rate has no context. With it, you know whether 22% represents a leadership position or a distant third.
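To make the arithmetic behind these signals concrete, here is a minimal sketch in Python. It assumes each AI answer has already been reduced to an ordered list of the brands it names; the brand names and data are hypothetical. Share of Voice is computed here as a brand's mentions divided by all tracked-brand mentions, which is one common definition and not the only one.

```python
from collections import Counter

def visibility_rate(responses, brand):
    """Fraction of responses that mention `brand` at least once."""
    if not responses:
        return 0.0
    hits = sum(1 for mentioned in responses if brand in mentioned)
    return hits / len(responses)

def mean_position(responses, brand):
    """Average 1-based position of `brand` in the responses that mention it."""
    positions = [m.index(brand) + 1 for m in responses if brand in m]
    return sum(positions) / len(positions) if positions else None

def share_of_voice(responses, brands):
    """Each brand's mentions as a fraction of all tracked-brand mentions."""
    counts = Counter(b for m in responses for b in m if b in brands)
    total = sum(counts.values())
    return {b: (counts[b] / total if total else 0.0) for b in brands}

# Hypothetical data: each inner list is the ordered brands named in one answer.
responses = [
    ["RivalOne", "YourBrand"],
    ["RivalOne"],
    ["RivalTwo", "RivalOne"],
    ["YourBrand", "RivalTwo"],
]
brands = ["YourBrand", "RivalOne", "RivalTwo"]

print(visibility_rate(responses, "YourBrand"))
print(mean_position(responses, "YourBrand"))
print(share_of_voice(responses, brands))
```

Sentiment and Source Coverage need text analysis and citation parsing, so they are left out of this sketch.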

How AI Answer Tracking Actually Works: The Technical Process Behind the Data

Understanding the mechanics helps you understand why manual spot-checking doesn’t work and why purpose-built tooling is necessary.

The process starts with a prompt library: a set of questions that represent how real users ask about your product category. These should span problem-first queries (“what helps with [pain point]”), comparison queries (“best [category] tools”), and recommendation queries (“which [tool type] should I use for [use case]”).
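One way to assemble such a library is to expand a small set of templates against lists of category-specific terms. The sketch below is illustrative only: the template wording follows the three query types above, while the placeholder values (an invoicing product) are made up for the example.

```python
from itertools import product
from string import Formatter

# Templates mirror the three query types; field names are assumptions.
TEMPLATES = {
    "problem": "what helps with {pain_point}",
    "comparison": "best {category} tools",
    "recommendation": "which {tool_type} should I use for {use_case}",
}

def expand(template, values):
    """Fill a template with every combination of its placeholder values."""
    fields = [name for _, name, _, _ in Formatter().parse(template) if name]
    combos = product(*(values[f] for f in fields))
    return [template.format(**dict(zip(fields, combo))) for combo in combos]

def build_prompt_library(templates, values):
    """Return a dict mapping query type to its list of expanded prompts."""
    return {kind: expand(t, values) for kind, t in templates.items()}

# Hypothetical values for an invoicing product.
values = {
    "pain_point": ["late invoices", "manual reconciliation"],
    "category": ["invoicing"],
    "tool_type": ["invoicing tool"],
    "use_case": ["freelancers", "agencies"],
}

library = build_prompt_library(TEMPLATES, values)
for kind, prompts in library.items():
    print(kind, prompts)
```

Templates keep the library consistent across topics, but real user phrasing is messier, so hand-written prompts from sales calls or support tickets are worth mixing in.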

Each prompt is sent to an AI platform, the response is retrieved, and natural language processing identifies brand mentions, their position, the sentiment surrounding them, and the source domains cited. That sequence runs across all platforms in your tracking set and repeats on whatever cadence your setup supports: daily, weekly, or real-time.
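The extraction step can be sketched with plain pattern matching. This is a simplified stand-in for the natural language processing described above, not how any particular tool works: it finds brand aliases by regex, ranks brands by first appearance, and pulls cited domains from URLs. Sentiment is omitted, and the brand aliases and sample response are hypothetical.

```python
import re

def extract_mentions(response_text, brand_aliases):
    """Return (brand, rank) pairs ordered by first appearance in the text."""
    first_seen = {}
    for brand, aliases in brand_aliases.items():
        for alias in aliases:
            pattern = r"\b" + re.escape(alias) + r"\b"
            m = re.search(pattern, response_text, re.IGNORECASE)
            if m and (brand not in first_seen or m.start() < first_seen[brand]):
                first_seen[brand] = m.start()
    ordered = sorted(first_seen, key=first_seen.get)
    return [(brand, rank) for rank, brand in enumerate(ordered, start=1)]

def extract_cited_domains(response_text):
    """Return the sorted, de-duplicated domains of URLs found in the text."""
    return sorted(set(re.findall(r"https?://(?:www\.)?([^/\s)]+)", response_text)))

# Hypothetical aliases and response text.
brand_aliases = {
    "YourBrand": ["YourBrand", "Your Brand"],
    "RivalOne": ["RivalOne"],
    "RivalTwo": ["RivalTwo"],
}
sample = (
    "For invoicing, many teams start with RivalOne. "
    "Your Brand is a strong option too. "
    "Sources: https://www.g2.com/products/x and https://example.com/review"
)

mentions = extract_mentions(sample, brand_aliases)
domains = extract_cited_domains(sample)
print(mentions)
print(domains)
```

Exact-match aliases will miss paraphrased references and can misfire on brand names that are common words, which is part of why production tooling relies on more than regex.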

The volume requirement is non-trivial. A single query tells you almost nothing given the non-determinism involved. Meaningful tracking requires running hundreds of prompts across multiple platforms to build a statistically representative picture. ChatGPT only performs a web search for approximately 31% of analyzed prompts, but that rate jumps to 53.5% for commercial intent queries, the exact queries where brand visibility matters most. Platform behavior differs enough that tracking only one AI engine consistently misrepresents your actual exposure.
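A rough way to see why small samples mislead is to put a confidence interval around an observed visibility rate. The sketch below uses the Wilson score interval for a proportion. It assumes each prompt run is an independent sample, which is a simplification for AI answers, so treat the output as a sanity check, not a guarantee.

```python
import math

def wilson_interval(hits, n, z=1.96):
    """Wilson score interval for a proportion (z=1.96 is roughly 95%)."""
    if n == 0:
        return (0.0, 1.0)
    p = hits / n
    denom = 1 + z**2 / n
    centre = (p + z**2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return (max(0.0, centre - half), min(1.0, centre + half))

# Roughly the same observed rate at three sample sizes.
for hits, n in [(1, 5), (22, 100), (220, 1000)]:
    low, high = wilson_interval(hits, n)
    print(f"{hits}/{n}: {low:.3f} to {high:.3f}")
```

The interval narrows as the number of runs grows, which is the statistical reason a five-prompt spot check cannot distinguish a real change from noise.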

There’s also a filter rate to understand. When an AI model does conduct a web search, it retrieves a set of pages but ultimately cites only about 15% of what it reads, discarding the rest as redundant or insufficiently extractable. And 44.2% of all citations come from the first 30% of a document. Your most citable content needs to front-load its key facts.

This is the technical reality behind generative search optimization: the path from “brand content exists” to “brand gets cited” has multiple filter stages, each with distinct optimization levers.

3 Strategies That Actually Move Your AI Answer Tracking Numbers

Tracking without a response strategy is just watching. Here’s where the data translates into action.

Strategy 1: Prompt-First Content Creation

Start with the prompts where your competitors show up and you don’t. That gap isn’t random. It typically means an AI platform found competitor content that answers those specific questions more directly than yours does.

Pull your Source Analysis data to identify which domains are getting cited in those answer spaces. If third-party review sites, trade publications, or specific forum threads are driving citations for competitor brands, that’s where your content team’s energy should go, not just on-site blog posts.

Strategy 2: Authority Signal Building

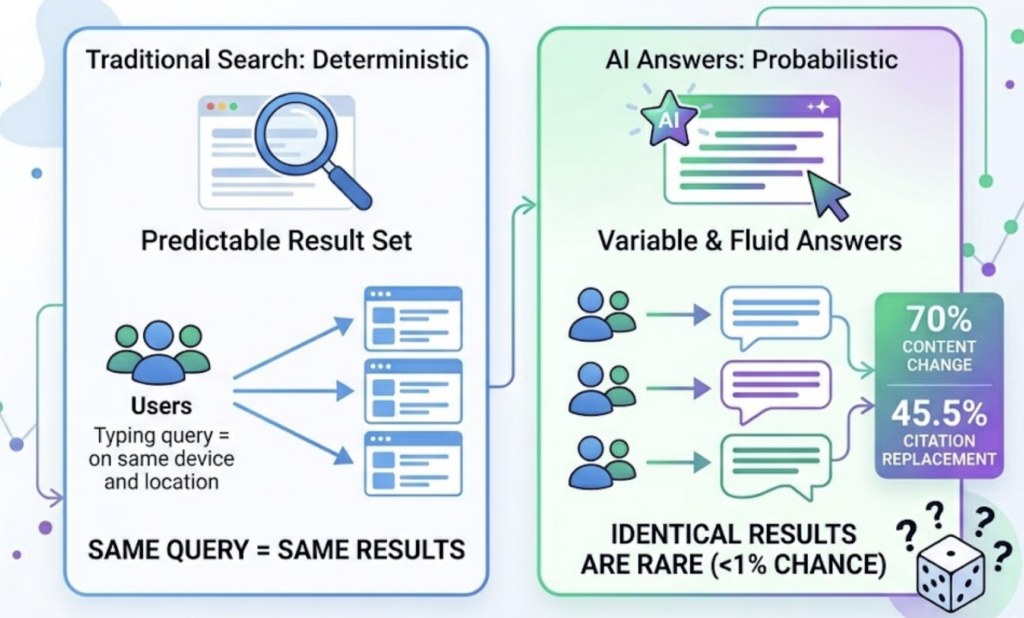

AI models weigh citation authority differently than Google’s PageRank system. Technical performance and presence on trusted third-party platforms both increase citation likelihood: pages loading in under 1.8 seconds are 3x more likely to be cited, and review platform presence also increases citation rates by 3x. In practice, an accurate, detailed listing on G2 or Trustpilot can carry more generative search weight than most brand-owned content.

Original research, expert commentary with named bylines, and cited statistics give AI models the kind of verifiable, attribution-ready content they prefer to extract. A study your team publishes is more likely to become a cited source than a product page.

Strategy 3: Competitor Benchmark-Driven Optimization

Don’t distribute your content effort evenly across all prompts. Use your competitor visibility data to prioritize the queries where their Share of Voice is high and yours is low. Those are the highest-ROI targets because the AI has already decided someone in your category is citable. The question is whether it’s them or you.
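As a sketch of that prioritization, the snippet below ranks prompts by the gap between a competitor's visibility and your own. The per-prompt figures are hypothetical, and the simple difference used as a score is an assumption; a team might reasonably weight by prompt volume or intent instead.

```python
def prioritize_gaps(prompt_stats, top_n=3):
    """Rank prompts by (competitor visibility - own visibility), largest first."""
    gaps = [
        (prompt, round(s["competitor"] - s["own"], 4))
        for prompt, s in prompt_stats.items()
        if s["competitor"] > s["own"]  # keep only prompts where you trail
    ]
    return sorted(gaps, key=lambda g: g[1], reverse=True)[:top_n]

# Hypothetical per-prompt visibility rates (0.0 to 1.0).
prompt_stats = {
    "best invoicing tools": {"own": 0.10, "competitor": 0.70},
    "what helps with late invoices": {"own": 0.40, "competitor": 0.45},
    "which invoicing tool should I use for agencies": {"own": 0.60, "competitor": 0.30},
}

targets = prioritize_gaps(prompt_stats)
for prompt, gap in targets:
    print(f"{gap:.2f}  {prompt}")
```

Prompts where you already lead are dropped from the list, so the output reads as a work queue for the content team.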

This is what generative search optimization looks like in execution: using measurement to find specific gaps, then closing them one content piece at a time.

The Checklist Teams Keep Skipping Before They Start AI Answer Tracking

Most brands begin tracking by typing their own name into ChatGPT. That’s not a tracking system. Before you run a single query, work through these steps.

- [ ] Define your brand terms: Brand name, product names, common misspellings, and category descriptors all need explicit tracking.

- [ ] Build a prompt library across three types: Problem-first queries, comparison queries, and direct recommendation queries. Aim for 20-30 unique prompts per core topic.

- [ ] Select your platform set: At minimum, ChatGPT, Perplexity, and Gemini. ChatGPT holds 60.7% of the AI search market, but different buyer segments use different platforms.

- [ ] Capture a baseline snapshot: Run your full prompt set before making any content changes. Without a pre-optimization baseline, you can’t prove improvement.

- [ ] Identify 3-5 core competitors: Your visibility data is only meaningful relative to who else is appearing in the same answer spaces.

- [ ] Set KPI targets: A specific Visibility Rate goal and Position benchmark, not “improve AI visibility.”

- [ ] Decide on tracking cadence: Weekly is a reasonable starting point for most teams. Daily for high-competition categories.

- [ ] Align with content team: Tracking without a feedback loop to whoever creates and publishes content produces data that sits in a dashboard and changes nothing.

- [ ] Configure GA4 for AI traffic: Create a custom channel group using regex to match source domains from major AI platforms, so traffic that does make it to your site is correctly attributed.

- [ ] Schedule a monthly review: AI platform behavior drifts. A brand that shows up consistently in Q1 can drop sharply in Q2 if a model update changes citation patterns.
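For the GA4 step in the checklist, it can help to test the source-matching regex offline before pasting it into a channel group condition. The sketch below does that in Python. The domain list is an assumption, not an official or complete list of AI referrers, and GA4's regex dialect may differ from Python's in edge cases, so verify the final pattern in the GA4 interface.

```python
import re

# Assumed referrer domains for major AI platforms; extend as needed.
AI_SOURCE_PATTERN = re.compile(
    r"(^|\.)(chatgpt\.com|chat\.openai\.com|perplexity\.ai"
    r"|gemini\.google\.com|copilot\.microsoft\.com|chat\.deepseek\.com)$",
    re.IGNORECASE,
)

def classify_source(source):
    """Label a session source as AI-assistant traffic or everything else."""
    return "AI Assistants" if AI_SOURCE_PATTERN.search(source.strip()) else "Other"

# Hypothetical session sources as they might appear in a report export.
for source in ["chatgpt.com", "www.perplexity.ai", "google.com", "notchatgpt.com"]:
    print(source, "->", classify_source(source))
```

Anchoring the pattern on a leading dot or the start of the string keeps lookalike domains from being counted as AI traffic.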

5 Common Mistakes That Make Your AI Answer Tracking Data Useless

Tracking too few prompts. A sample of five queries doesn’t represent anything. Given the probabilistic nature of AI answers, you need enough prompts across enough query types to build a statistically meaningful picture of your visibility. Spot checks give you anecdotes, not trends.

Only monitoring one AI platform. ChatGPT, Gemini, and Perplexity don’t agree on who to recommend. A brand that dominates ChatGPT responses may be nearly invisible in Perplexity’s citation-heavy answers. Your buyers use multiple platforms; your tracking should too.

Ignoring sentiment. Being mentioned negatively is worse than not being mentioned. An AI answer that describes your product as “better for budget-conscious buyers” when you’re targeting enterprise accounts is actively filtering out your ICP. Sentiment scoring isn’t optional.

Skipping competitor tracking. Visibility Rate without a competitive benchmark is a number with no direction. You need to know not just how often you appear, but how that compares to the alternatives AI is recommending in the same breath.

Treating it as a one-time audit. This is the most expensive mistake. AI models are retrained, updated, and fine-tuned continuously. A citation pattern that holds in January can shift significantly by March. AI answer tracking only produces ROI when it’s an ongoing system, not a quarterly project.

Best Tools for AI Answer Tracking in 2026: What to Look for Before You Commit

The tooling market has matured fast, but quality varies significantly. Before selecting a platform, evaluate on three dimensions: platform coverage breadth, metric depth, and whether the tool can help you act on data or only report it.

Platform coverage is the non-negotiable baseline. A tool that only tracks ChatGPT is missing 39.3% of the AI search market plus the behavior differences across platforms that matter for strategy.

Metric depth determines whether you get visibility counts or actionable intelligence. Visibility Rate alone doesn’t tell you why you’re invisible or what to do about it. Sentiment, Position, Source Analysis, and competitor benchmarking are the layers that turn raw data into strategy.

Execution capability is where most tools stop short. Tracking surfaces a gap; closing it requires content changes, source optimization, and structural improvements. A platform that connects measurement to execution workflow compresses the cycle significantly.

| Tool | Starting Price | Platform Coverage | Core Strengths |
|---|---|---|---|
| Topify | $99/mo | ChatGPT, Gemini, Perplexity, DeepSeek, Doubao, and more | 7-metric GEO analytics, one-click agent execution, source analysis |
| Profound | $99-$499/mo | 10+ engines | Enterprise scale; daily tracking in 18+ countries |
| Otterly.AI | From $29/mo | 6 platforms (standard plan) | Budget monitoring entry point; 100 prompts on standard |
| SE Ranking | From $189/mo | AI Overviews focus | Integrated with traditional SEO suite; source-level AIO insights |
| Ahrefs Brand Radar | From $129/mo | Multiple chatbots | Access to 250M prompt database |

For teams looking to build AI answer tracking as a growth channel rather than a reporting exercise, Topify covers the full stack. The platform tracks seven core metrics (visibility, sentiment, position, volume, mentions, intent, and CVR) across all major AI platforms, including ChatGPT, Gemini, Perplexity, DeepSeek, and Doubao.

What sets it apart from pure monitoring tools is the execution layer. Topify’s AI agent doesn’t just report what changed. It reasons about why, proposes a generative search optimization strategy based on your goals, and deploys it with a single click. For teams that don’t have a dedicated GEO specialist, that closes the gap between data and action.

Pricing starts at $99/month on the Basic plan (100 prompts, 9,000 AI answer analyses, 4 projects, 30-day trial) and $199/month on Pro (250 prompts, 22,500 analyses, 10 seats). See the full breakdown at Topify pricing.

Real-World Examples of AI Answer Tracking in Action

The business case for AI answer tracking isn’t theoretical anymore.

A B2B credit decisioning software brand ran a citation gap analysis in late 2025, identified that their technical documentation wasn’t front-loaded with extractable facts, and implemented structured schema changes. The result: a 36% improvement in overall AI visibility and the brand’s first-ever citations in ChatGPT and Perplexity, producing two qualified inbound leads per month from a channel that previously contributed nothing.

An e-commerce brand tracking AI channel behavior found that visitors arriving from AI platforms converted at 5% compared to 4% for traditional organic search. After optimizing product feeds for AI extractability, the brand saw 120% growth in AI-driven revenue and a 693% surge in AI channel visits. The conversion quality difference existed before the optimization; they just couldn’t see it without the tracking layer.

One pilot project tracked ChatGPT’s influence on signups over a seven-month period. Using citation-safety tactics to ensure all brand facts were verifiable by third-party sources, the team traced 549 referral sessions from chatgpt.com to 50 event signups. Traditional organic search contributed three sessions in the same period.

That last data point is the one that reframes the whole conversation. The AI channel wasn’t supplementing organic search. It was replacing it for this particular audience segment.

Conclusion

The measurement gap between what SEO tools report and where buyers actually discover brands is no longer a minor inconvenience. It’s a structural blind spot in how most marketing teams understand their own performance.

AI answer tracking is the infrastructure that closes it. Start with a prompt library that covers your category’s core questions. Choose a tool that tracks across multiple platforms, measures at least Visibility Rate, Sentiment, Position, and competitor Share of Voice, and connects data to content strategy. Set a baseline before you change anything, and build a monthly review cadence to catch model drift before it becomes a lost quarter.

The brands that get this right early won’t just show up in more AI answers. They’ll own the answers that matter most to their buyers. Get started with Topify to see where you stand today.

FAQ

Q: What is AI answer tracking? A: AI answer tracking is the systematic monitoring of how and whether a brand appears in AI-generated responses across platforms like ChatGPT, Perplexity, Gemini, and Google AI Overviews. It measures visibility rate, sentiment, position, citation frequency, and competitor share of voice within AI answers, as opposed to traditional search rankings.

Q: How do I measure AI answer tracking performance? A: The five core metrics are Visibility Rate (how often your brand appears across a set of tracked prompts), Position (where you rank within AI responses), Sentiment Score (whether the AI describes you positively or negatively), Source Coverage (how often your domain or references to your brand are cited), and Competitor Share of Voice (your visibility relative to alternatives appearing in the same answers).

Q: How much does AI answer tracking cost? A: Pricing ranges from around $29/month for basic monitoring tools with limited prompt coverage to $99-$499/month for mid-market platforms with multi-engine tracking and analytics. Enterprise platforms start higher and scale with prompt volume and seat count. Topify’s Basic plan starts at $99/month and includes a 30-day trial, covering ChatGPT, Perplexity, Google AI Overviews, and more.

Q: What are the most common mistakes in AI answer tracking? A: The five most costly mistakes are tracking too few prompts to get statistically meaningful data, monitoring only one AI platform, ignoring sentiment alongside mention frequency, skipping competitor benchmarking, and treating tracking as a one-time audit rather than a continuous monitoring system.