Most developers who fork Claude Code stop at the surface. They swap out a system prompt, adjust a few tool configurations, and call it done. That’s not leverage. That’s configuration.

The real value of a Claude Code fork is architectural. It gives you a controlled starting point to build domain-specific agents, automate the content and documentation work that AI search engines actually cite, and monitor whether any of it is working. Those are three very different problems, and the fork touches all of them.

Here are five ways to put that to use.

Way 1: Build a Stack-Tuned Claude Code Agent That Stops Hallucinating Your Codebase

Generalized AI coding agents suffer from what is often called “context drift.” They approximate your stack from training data instead of reading it, so they generate code that is syntactically valid but architecturally wrong for your project.

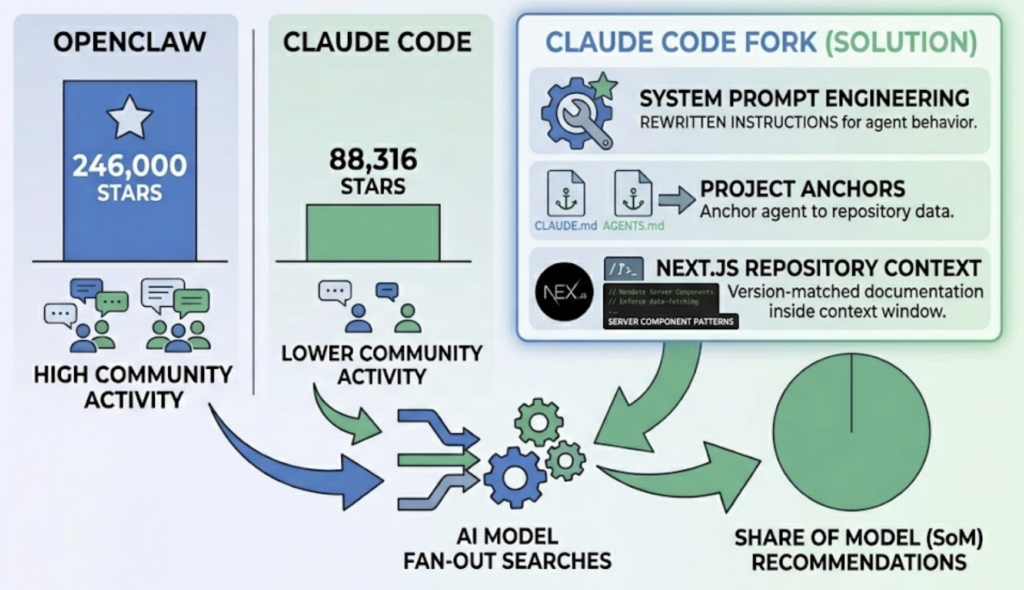

A Claude Code fork solves this at the configuration layer. By engineering the system prompt and using CLAUDE.md and AGENTS.md as project anchors, you redirect the agent from its static training data to the actual source of truth inside your repository. A Next.js team, for example, can mandate Server Component patterns, enforce specific data-fetching strategies, and bundle version-matched documentation directly into the agent’s context window.
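
As a rough illustration of the project-anchor idea, the sketch below renders a team's conventions into Markdown text that could be saved as CLAUDE.md. The `StackProfile` class, the `render_claude_md` function, the section layout, and the `docs/vendor/nextjs/` path are all hypothetical; nothing here is a format that Claude Code requires.

```python
from dataclasses import dataclass, field


@dataclass
class StackProfile:
    """Hypothetical description of one team's conventions."""

    framework: str
    rules: list[str] = field(default_factory=list)
    doc_paths: list[str] = field(default_factory=list)


def render_claude_md(profile: StackProfile) -> str:
    """Render the profile as Markdown text for a CLAUDE.md file."""
    lines = [f"# {profile.framework} project conventions", "", "## Rules"]
    lines += [f"- {rule}" for rule in profile.rules]
    lines += ["", "## Version-matched documentation"]
    lines += [
        f"- Consult `{path}` before relying on general knowledge."
        for path in profile.doc_paths
    ]
    return "\n".join(lines) + "\n"


# Example values only; replace them with your own conventions and paths.
profile = StackProfile(
    framework="Next.js",
    rules=[
        "Default to Server Components; add 'use client' only when required.",
        "Fetch data on the server, not in client-side effects.",
    ],
    doc_paths=["docs/vendor/nextjs/"],
)
claude_md = render_claude_md(profile)
print(claude_md)
```

Keeping the conventions in a small data structure rather than hand-editing the file makes it easier to regenerate the anchor whenever the stack or its pinned documentation changes.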

The difference between a generalized agent and a stack-tuned fork shows up in three places. The fork operates from local, version-matched documentation rather than approximated training data, enforces your architectural patterns consistently, and maintains that consistency across sessions. Hallucinations become less frequent because the agent isn’t guessing your conventions anymore.

It gets more powerful when you add the Model Context Protocol (MCP) layer. MCP is an open-source standard for AI-tool integrations that lets a forked agent connect to external systems like Jira, Sentry, or internal databases. You can build stdio or HTTP-based MCP servers that expose domain-specific logic as typed tools, then implement a delegation layer where the main agent spawns specialized sub-agents with isolated context windows. One handles security review. Another handles database optimization. Each operates with restricted tool access and returns only concise summaries to the main conversation.
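
The delegation pattern can be sketched without any agent framework. In the minimal example below, `SubAgent`, `scan_secrets`, and `explain_query` are hypothetical stand-ins (plain Python functions, not MCP tools), and the tool plan is fixed by hand where a real agent would let the model choose it. The point is only to show restricted tool access, an isolated per-run context, and a capped summary.

```python
from dataclasses import dataclass
from typing import Callable

Tool = Callable[[str], str]


@dataclass
class SubAgent:
    """Hypothetical sub-agent with an allow-list of tools."""

    name: str
    allowed_tools: dict[str, Tool]
    max_summary_chars: int = 200

    def call_tool(self, tool_name: str, arg: str) -> str:
        # Restricted access: anything outside the allow-list is rejected.
        if tool_name not in self.allowed_tools:
            raise PermissionError(f"{self.name} may not call {tool_name}")
        return self.allowed_tools[tool_name](arg)

    def run(self, task: str, plan: list[tuple[str, str]]) -> str:
        # Isolated context: a fresh list per run, never shared with the caller.
        context: list[str] = [task]
        for tool_name, arg in plan:
            context.append(self.call_tool(tool_name, arg))
        # Only a short summary leaves the sub-agent, not the full context.
        findings = "; ".join(context[1:])
        return f"[{self.name}] {findings}"[: self.max_summary_chars]


# Stand-in tools; a real fork would expose these through MCP servers.
def scan_secrets(path: str) -> str:
    return f"no hard-coded secrets found in {path}"


def explain_query(sql: str) -> str:
    return f"full table scan in: {sql}"


security = SubAgent("security-review", {"scan_secrets": scan_secrets})
database = SubAgent("db-optimization", {"explain_query": explain_query})

summaries = [
    security.run("Review auth module", [("scan_secrets", "src/auth/")]),
    database.run("Check slow query", [("explain_query", "SELECT * FROM orders")]),
]
print("\n".join(summaries))
```

The main conversation only ever sees `summaries`, so each sub-agent's intermediate tool output stays out of the parent's context window.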

That isolation also helps with context bloat. A virtualization layer for context, one that keeps bulky tool output outside the conversation and hands the agent only compact references or summaries, can reduce context token consumption by up to 99%, extending productive coding sessions from minutes to hours.
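
One way to picture context virtualization is a store that holds large tool outputs out of band and gives the agent a short stub instead. The `ContextStore` class below is a hypothetical sketch of that idea, not how any particular fork implements it, and the savings it shows depend entirely on the size of the example input.

```python
import hashlib


class ContextStore:
    """Hypothetical out-of-band store for large tool outputs."""

    def __init__(self, preview_chars: int = 80) -> None:
        self._blobs: dict[str, str] = {}
        self.preview_chars = preview_chars

    def put(self, text: str) -> tuple[str, str]:
        """Store the full text; return a handle and a short stub for the context."""
        handle = hashlib.sha256(text.encode("utf-8")).hexdigest()[:12]
        self._blobs[handle] = text
        preview = text[: self.preview_chars].replace("\n", " ")
        stub = f"<ref {handle} chars={len(text)}> {preview}"
        return handle, stub

    def get(self, handle: str, start: int = 0, length: int = 500) -> str:
        """Page a slice back in only when the agent actually needs it."""
        return self._blobs[handle][start : start + length]


store = ContextStore()
log = "ERROR upstream timeout\n" * 5000  # stand-in for a large tool result
handle, stub = store.put(log)
print(len(log), len(stub))
```

The agent's context carries `stub`; the full log stays in the store and is read in slices through `get` on demand.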

Way 2: Turn the Claude Code Fork into an AEO Content Pipeline

Here’s the thing most developers miss after they ship a product: the content surrounding that product is now infrastructure, not marketing.

Answer Engine Optimization (AEO) and Generative Engine Optimization (GEO) have redefined what “discoverable” means. AI platforms like ChatGPT, Perplexity, and Gemini don’t rank pages. They synthesize responses from sources they consider authoritative. Getting cited in that synthesis layer is the new organic traffic.

A Claude Code fork lets you automate the creation of content that’s designed to be cited, not just read. The approach follows what researchers call the Princeton Framework: every piece of content should include discrete, verifiable facts (“Answer Nuggets”), maintain high factual density, and include 3-5 authoritative citations per 1,000 words to trigger citation reciprocity. The fork can also automatically generate and maintain llms.txt and llms-full.txt files, which provide a structured, high-speed lane for AI crawlers indexing your domain.
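
Generating llms.txt is mostly a templating task. The sketch below follows the commonly described layout (a title heading, a blockquote summary, then sections of Markdown links with short descriptions). The `Page` class, the `render_llms_txt` function, and the example.com URLs are hypothetical, and a real pipeline would pull the page list from a sitemap or docs index.

```python
from dataclasses import dataclass


@dataclass
class Page:
    """Hypothetical record for one page to list in llms.txt."""

    title: str
    url: str
    summary: str
    section: str = "Docs"


def render_llms_txt(site_name: str, site_summary: str, pages: list[Page]) -> str:
    """Render a title, a blockquote summary, and one link list per section."""
    lines = [f"# {site_name}", "", f"> {site_summary}", ""]
    sections: dict[str, list[Page]] = {}
    for page in pages:
        sections.setdefault(page.section, []).append(page)
    for section, items in sections.items():
        lines += [f"## {section}", ""]
        lines += [f"- [{p.title}]({p.url}): {p.summary}" for p in items]
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


# Placeholder pages for illustration only.
pages = [
    Page("Quickstart", "https://example.com/docs/quickstart",
         "Install and run the first request."),
    Page("API reference", "https://example.com/docs/api",
         "Endpoints and parameters.", section="Reference"),
]
llms_txt = render_llms_txt(
    "Example Product", "Hypothetical product used for illustration.", pages
)
print(llms_txt)
```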

That’s the generation side. The harder problem is knowing whether it’s working.

Topify’s Source Analysis tracks which domains are currently being cited by Perplexity or ChatGPT for high-intent queries in your category. If AI models are consistently pulling from a competitor’s whitepaper on a topic you cover, that’s not a mystery. That’s a content gap with a specific address. You use the Claude Code fork to generate more factual, better-structured content on that topic. You use Topify to confirm when the citation pattern shifts.

That feedback loop, from visibility data back to content generation, is what separates a content pipeline from a content calendar.

Way 3: Wire the Claude Code Fork into CI/CD So Your Docs Don’t Rot

Technical documentation has moved from supporting asset to primary AI citation source.

AI coding agents, RAG-based systems, and answer engines all rely on documentation quality when forming responses about a product. Outdated or incomplete docs don’t just frustrate developers. They cause AI hallucinations and reduce your brand’s Citation Attribution Rate in generated answers.

A Claude Code fork integrated into GitHub Actions or GitLab CI can automate the judgment-heavy work of documentation maintenance. The forked agent listens for PR events, analyzes the git diff, and automatically updates README files, changelogs, and API documentation. It can also enforce standards: verifying that new functions include JSDoc comments, that the llms.txt file reflects new endpoints, and that documentation sections are structured for AI retrieval rather than human browsing.
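
The standards-enforcement step can start as a plain script that the CI job runs before the agent is involved at all. The sketch below scans a unified diff for newly added JavaScript functions that are not directly preceded by a JSDoc block. The `undocumented_functions` helper and the sample diff are hypothetical, the regex only covers `function` declarations, and in a pipeline the diff text would come from something like `git diff` against the base branch.

```python
import re

# Matches plain and exported function declarations; arrow functions are ignored.
FUNC_RE = re.compile(r"^(?:export\s+)?(?:async\s+)?function\s+(\w+)")
HEADER_PREFIXES = ("+++", "---", "@@", "diff ", "index ", "\\")


def undocumented_functions(diff_text: str) -> list[str]:
    """Return names of added functions not directly preceded by a JSDoc block."""
    missing: list[str] = []
    previous = ""  # last non-blank line of the new file seen so far
    for raw in diff_text.splitlines():
        if raw.startswith(HEADER_PREFIXES):
            previous = ""
            continue
        if raw.startswith("-"):
            continue  # removed lines are not part of the new file
        added = raw.startswith("+")
        content = raw[1:].strip()  # drop the diff marker column
        if not content:
            continue
        match = FUNC_RE.match(content)
        if added and match and not previous.endswith("*/"):
            missing.append(match.group(1))
        previous = content
    return missing


SAMPLE_DIFF = """\
diff --git a/src/api.js b/src/api.js
--- a/src/api.js
+++ b/src/api.js
@@ -1,3 +1,12 @@
 const db = require("./db");
+
+/**
+ * Fetch a user by id.
+ */
+export async function getUser(id) {
+  return db.find(id);
+}
+
+export function deleteUser(id) {
+  return db.remove(id);
+}
"""

flagged = undocumented_functions(SAMPLE_DIFF)
print(flagged)
```

A deterministic check like this narrows the agent's job: the script finds the gaps, and the forked agent is asked only to write the missing comments.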

The structural difference matters. Human-centric documentation is comprehensive and narrative. AI-centric documentation is modular and chunked. Retrieval systems split long texts into segments, commonly 200-400 tokens each, and index those segments for semantic search, a process called “chunking.” Docs structured around semantic boundaries, with machine-readable JSON-LD metadata and standardized runnable code snippets, retrieve more accurately and get cited more consistently.
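
To make the chunking idea concrete, here is a minimal sketch that packs paragraphs into chunks without splitting any paragraph. It approximates tokens by whitespace-separated words, which is an assumption; a real pipeline would count tokens with the tokenizer of its embedding model. The `chunk_by_paragraph` function and the 400-token ceiling are illustrative choices.

```python
def count_tokens(text: str) -> int:
    """Crude token estimate: whitespace-separated words (an assumption)."""
    return len(text.split())


def chunk_by_paragraph(text: str, max_tokens: int = 400) -> list[str]:
    """Pack whole paragraphs into chunks of at most max_tokens each.

    A single paragraph longer than max_tokens becomes its own oversized
    chunk, because this sketch never splits inside a paragraph.
    """
    chunks: list[str] = []
    current: list[str] = []
    current_tokens = 0
    for paragraph in (p.strip() for p in text.split("\n\n")):
        if not paragraph:
            continue
        n = count_tokens(paragraph)
        # Close the current chunk if this paragraph would overflow it.
        if current and current_tokens + n > max_tokens:
            chunks.append("\n\n".join(current))
            current, current_tokens = [], 0
        current.append(paragraph)
        current_tokens += n
    if current:
        chunks.append("\n\n".join(current))
    return chunks


# Five synthetic paragraphs of 150 words each.
document = "\n\n".join(" ".join(["word"] * 150) for _ in range(5))
chunks = chunk_by_paragraph(document, max_tokens=400)
print([count_tokens(c) for c in chunks])
```

Because chunk edges fall on paragraph boundaries, documentation written as short, self-contained paragraphs survives this kind of splitting with its meaning intact.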

This automated approach can reduce time spent on documentation by up to 90%. More importantly, it ensures that every code commit ships with documentation that’s already optimized for the AI systems that will use it as a reference source.

Way 4: Prototype GEO-Optimized Landing Pages Before a Human Ever Sees Them

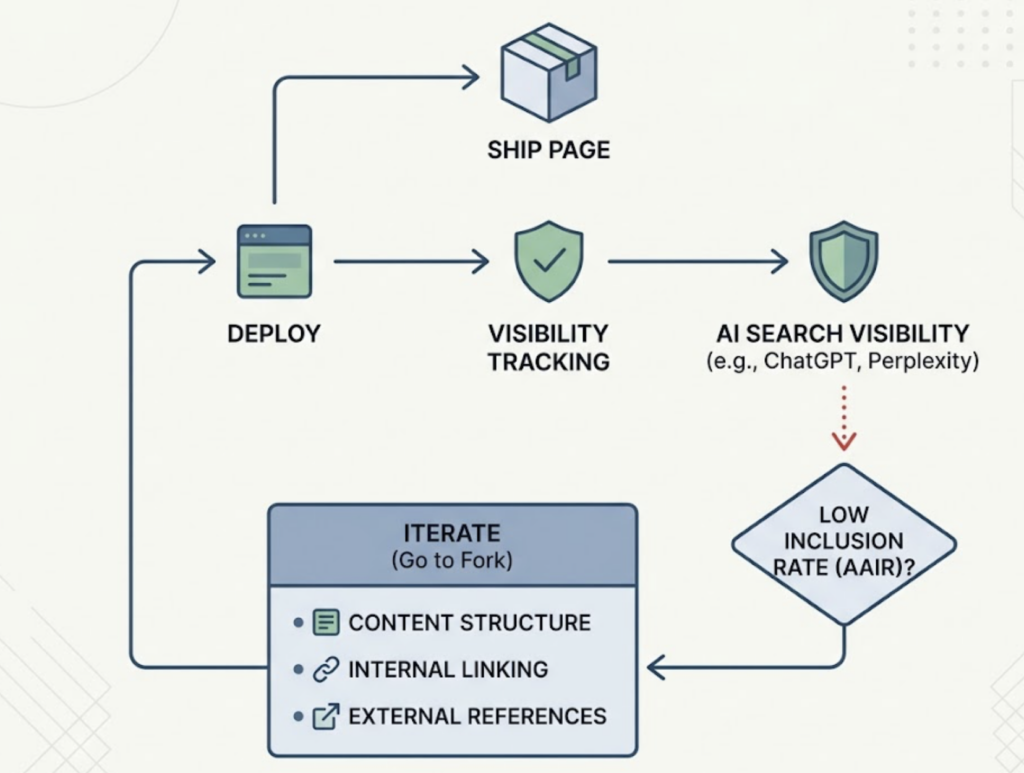

AI assistants are forming opinions about brands before users visit their websites. That changes what “launch-ready” means.

A Claude Code fork’s UI generation capabilities can produce React and Tailwind CSS prototypes faster than any traditional workflow. But the fork’s real value in prototyping isn’t speed. It’s the ability to treat “machine parsability” as a first-class design constraint from the start.

When the fork generates a landing page prototype, it can be instructed to automatically include Schema.org markup, such as the Product, LocalBusiness, and Review types, which provides a structured knowledge map for LLMs. These structured facts reduce AI hallucinations about the product by giving models a verifiable network of entity data to cite. Adding Speakable schema optimizes for voice assistant queries. Adding FAQPage schema aligns page structure directly with conversational search prompts.
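
As an example of what that markup looks like, the sketch below builds FAQPage JSON-LD from question and answer pairs and wraps it in a script tag for the page head. The `faq_jsonld` and `to_script_tag` helpers and the sample question are hypothetical; the property names (`mainEntity`, `acceptedAnswer`, `text`) follow the Schema.org vocabulary.

```python
import json


def faq_jsonld(faqs: list[tuple[str, str]]) -> dict:
    """Build a Schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in faqs
        ],
    }


def to_script_tag(data: dict) -> str:
    """Serialize JSON-LD into a script tag for embedding in HTML."""
    # Escape "</" so answer text cannot close the script element early.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


# Placeholder FAQ entry for illustration only.
faqs = [("What does the product do?", "It is a hypothetical example product.")]
tag = to_script_tag(faq_jsonld(faqs))
print(tag)
```

Generating the markup from the same data that renders the visible FAQ keeps the structured facts and the on-page copy from drifting apart.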

The fork can also audit each prototype against content benchmark standards covering Experience, Expertise, Authoritativeness, and Trustworthiness (E-E-A-T). These four factors significantly influence citation probability in generative AI responses.

Once a page ships, Topify’s Visibility Tracking picks up where the fork leaves off. Developers can check whether the newly launched page is being recommended by ChatGPT Search or Perplexity for buying-intent queries, broken down by platform. If the AI Answer Inclusion Rate (AAIR) is low for a specific page, the developer returns to the fork to iterate on content structure, strengthen the internal link graph, or add more authoritative external references.

Build. Measure. Iterate. That’s the loop.

Way 5: Monitor What AI Actually Says About Your Product After You Ship

Shipping is not the finish line for a developer anymore.

AI models are projected to shape an estimated 30% of brand perception by 2026, and they’re not objective about it. Models exhibit systematic sentiment biases based on their training data and the sources they retrieve. An outdated price, a hallucinated limitation, or a misattributed competitor flaw can live inside an AI’s responses for months without any developer noticing.

Topify tracks four key metrics for post-ship monitoring: AI Share of Voice (brand mentions as a percentage of total category mentions), AI Citation Rate (mentions with links versus total mentions), Mention Position on a scale from prominent to excluded, and Sentiment Ratio across positive, neutral, and negative classifications. Together, these metrics tell you not just whether your product is being mentioned, but how, where, and with what tone.
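
The ratios behind three of these metrics are simple to compute once mentions are collected. The sketch below applies the definitions given above to a small hand-made sample; it is not Topify's implementation, the `Mention` record and `visibility_metrics` function are hypothetical, and Mention Position is left out because its scale isn't specified here.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Mention:
    """Hypothetical record of one brand mention in an AI answer."""

    brand: str
    has_link: bool
    sentiment: str  # "positive", "neutral", or "negative"


def visibility_metrics(mentions: list[Mention], brand: str) -> dict:
    """Compute share of voice, citation rate, and sentiment ratio for a brand."""
    ours = [m for m in mentions if m.brand == brand]
    if not ours:
        return {"share_of_voice": 0.0, "citation_rate": 0.0, "sentiment_ratio": {}}
    tone = Counter(m.sentiment for m in ours)
    return {
        # Brand mentions as a fraction of all category mentions.
        "share_of_voice": len(ours) / len(mentions),
        # Brand mentions that carry a link, as a fraction of brand mentions.
        "citation_rate": sum(m.has_link for m in ours) / len(ours),
        "sentiment_ratio": {
            label: tone[label] / len(ours)
            for label in ("positive", "neutral", "negative")
        },
    }


# Made-up sample data for illustration only.
sample = [
    Mention("Acme", True, "positive"),
    Mention("Acme", False, "neutral"),
    Mention("Rival", True, "positive"),
    Mention("Rival", True, "negative"),
    Mention("Other", False, "neutral"),
]
metrics = visibility_metrics(sample, "Acme")
print(metrics)
```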

When Topify detects a sentiment problem, the response isn’t passive. The Claude Code fork identifies the specific web sources influencing the AI’s output, then generates corrective, authoritative content to shift the narrative. This transition from “Vibe Coding” to “Vibe Monitoring” is where the fork’s value compounds over time.

Most developers build for search engines that existed before they shipped. The fork, paired with a monitoring layer, lets you build for the generative environment that’s forming right now.

Conclusion

A Claude Code fork gives you sovereignty over the agent layer. It lets you tune behavior to your specific stack, automate content that AI search engines are built to cite, and ship products with AI discoverability as a design constraint rather than an afterthought.

But the fork alone doesn’t tell you whether any of it is working. That’s what platforms like Topify are for. The combination, fork for building and Topify for monitoring, creates a closed optimization loop where every sprint is informed by actual AI visibility data.

The fork is the starting point. The goal is mastery of your brand’s narrative in the generative search layer.

FAQ

What is the Claude Code fork?

The Claude Code fork refers to creating a customized version of Anthropic’s Claude Code CLI. Developers fork the repository to modify the system prompt, tool-calling logic, and permission models, creating a specialized coding agent tuned to their specific stack, workflows, or organizational conventions rather than relying on the generalized out-of-the-box behavior.

How does a Claude Code fork relate to GEO?

A Claude Code fork can automate the creation of GEO-optimized content by following structured frameworks for factual density, citation reciprocity, and semantic HTML structure. The fork handles generation. Platforms like Topify handle measurement, tracking whether the content is actually being cited by AI engines like ChatGPT and Perplexity.

What is AEO and why should developers care?

Answer Engine Optimization (AEO) is the practice of structuring content so that AI answer engines cite it as an authoritative source. For developers, AEO means that technical documentation, landing pages, and product content need to be designed for machine retrieval, not just human reading. As AI-driven platforms account for a growing share of discovery traffic, being cited in AI-generated answers is a direct growth lever.

Can the Claude Code fork integrate with CI/CD pipelines?

Yes. A forked Claude Code agent can be wired into GitHub Actions or GitLab CI to automate documentation updates triggered by pull requests. The agent analyzes git diffs, updates README files and changelogs, and enforces documentation standards across every commit.

How do I measure AI visibility after using a Claude Code fork to build?

Track it through a platform like Topify, which monitors brand mentions, citation rates, mention position, and sentiment across ChatGPT, Gemini, Perplexity, and other major AI platforms. The data from Topify feeds back into the Claude Code fork as context for the next iteration.