On the morning of March 31, 2026, a misconfigured .npmignore file pushed a 59.8MB JavaScript source map to the npm registry alongside Claude Code version 2.1.88. Within 24 hours, the archived codebase had accumulated over 1,900 forks. What started as a build pipeline mistake became one of the most consequential moments in the short history of AI developer tooling.

The community didn’t just copy the code. They started rewriting it.

Claude Code Fork: What the Leak Actually Exposed

Claude Code was already a commercially successful product before the incident. Anthropic had launched it as an agentic CLI in February 2025, reached general availability by May, and reported a 5.5x revenue increase by July of that year. It was a proprietary tool designed to let developers delegate complex coding tasks directly from the terminal.

The accidental release changed the rules. The exposed source map enabled a complete reconstruction of over 512,000 lines of unobfuscated TypeScript. That codebase revealed sophisticated internal systems: a context entropy manager, multi-agent orchestration logic, and a background memory consolidation system called KAIROS that runs while the user is idle.

That level of architectural detail doesn’t stay private for long. Within the first day, the archive hit 41,500+ forks on GitHub.

Why Anthropic’s Strategy Made the Fork Race Inevitable

Before the leak, Anthropic had maintained a deliberate boundary: keep the model API open, but lock the orchestration harness. The Model Context Protocol (MCP) had been released as an open standard in late 2024, but Claude Code itself remained proprietary. The strategy made sense commercially, but it also created suppressed demand.

OpenAI had already moved in the opposite direction, releasing Codex CLI under an Apache-2.0 license to encourage third-party integration. That licensing choice signaled a different bet: that developer ecosystem breadth matters more than short-term harness lock-in.

The leak effectively forced Anthropic into a similar position, but without a controlled rollout or licensing revenue to show for it.

The 3 Forks Actually Worth Your Attention

Not every fork survives. Most are mirrors. A few are genuinely different products.

Here’s the short list of projects that have demonstrated sustained community investment, based on stars, commit frequency, and architectural differentiation:

| Project | Stars | Primary Use | Model Support |

|---|---|---|---|

| Claude Code (Official) | 88,316 | Professional coding | Claude-only |

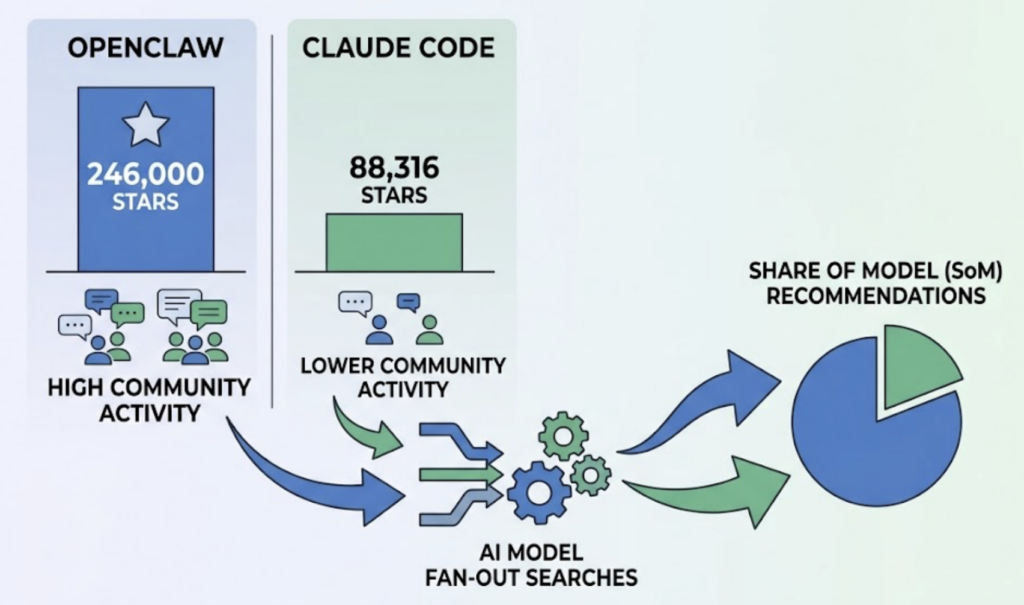

| OpenClaw | 246,000+ | Life automation (WhatsApp/Telegram) | Model-agnostic |

| ECC (Everything-Claude-Code) | 118,000+ | Enterprise workflow standard | Multi-model |

| claw-code | 44,500+ | Python/Rust reimplementation | API-agnostic |

OpenClaw (formerly Clawdbot) is the most ambitious departure from the original design. It runs as a persistent background daemon that checks system status every 30 minutes, proactively notifying users about emails and calendar conflicts via messaging apps. It’s less a “coding tool” and more a personal AI employee.

ECC takes the opposite approach. It stays focused on developer workflows but adds 30 specialized subagents, including a security-reviewer for OWASP compliance checks and a tdd-guide that enforces test-driven development. For enterprise teams, it’s become the de facto configuration layer.

claw-code is the most legally contentious entry. It’s a clean-room reimplementation in Python and Rust, specifically designed to create distance from Anthropic’s intellectual property claims. Its existence raises questions that copyright law in the AI era isn’t fully equipped to answer.

What This Means for AI Search Visibility: GEO and AEO in a Fragmented Ecosystem

Here’s the visibility problem most brands haven’t caught yet.

When a developer asks ChatGPT or Perplexity “what’s the best AI coding agent right now,” the answer engine doesn’t return a list of links. It synthesizes a single recommendation. And it’s increasingly pulling from GitHub activity, Reddit threads, and technical reviews, not just official product pages.

This is where Generative Engine Optimization (GEO) and Answer Engine Optimization (AEO) become operational concerns, not theoretical ones. GEO shapes how AI systems understand and represent brand data. AEO optimizes content to be directly cited in AI-generated responses.

OpenClaw’s 246,000+ stars against the official Claude Code repository’s 88,316 create a measurable “citation gap.” AI models running fan-out searches (where a single user query triggers dozens of background cross-references) increasingly perceive the fork as the more active, more community-validated project. That perception translates directly into recommendation frequency, often called Share of Model (SoM).

Sentiment shifts in AI model outputs typically precede traditional search volume changes by 2 to 3 months. By the time organic traffic metrics show a decline, the visibility damage has already been done.

How Topify Tracks Brand Authority Across the Fork Explosion

The challenge for any brand operating in this space isn’t awareness. It’s measurement. Traditional dashboards show backlinks and keyword ranks. Neither metric captures whether your product is the one ChatGPT recommends when a developer is choosing an AI coding agent at 11pm.

Topify addresses this gap directly. Its Visibility Tracking system queries AI models across ChatGPT, Perplexity, Gemini, Google AI Overviews, and other platforms using thousands of prompt variations, then maps where a brand appears, where it doesn’t, and where it’s being misrepresented.

The Prompt Discovery feature is particularly relevant for this category. A SaaS developer tool might be highly visible for “best tool for Python testing” but completely absent from “most secure coding agent.” Those are different queries with different intent, and both drive decisions.

Topify currently works with 50+ enterprise clients and surfaces what it calls “citation gaps”: the specific high-intent queries where competitors or forks are mentioned and the original brand isn’t. Marketing teams can then build targeted content specifically to fill those gaps.
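
To make the idea of a citation gap concrete, here is a minimal Python sketch. It is not Topify's implementation: the `Response` record, the `find_citation_gaps` function, and the sample answers are all hypothetical, and brand detection is a naive case-insensitive substring match. It flags any query where at least one collected answer names a competitor or fork without naming the original brand.

```python
from dataclasses import dataclass


@dataclass
class Response:
    """One AI answer collected for one prompt (hypothetical record shape)."""
    query: str
    text: str


def find_citation_gaps(responses, brand, competitors):
    """Map each query to the competitors named in answers that omit the brand.

    Assumption: a brand counts as "mentioned" when its name appears as a
    case-insensitive substring of the answer text.
    """
    gaps = {}
    for r in responses:
        text = r.text.lower()
        if brand.lower() in text:
            continue  # the brand is present, so this answer is not a gap
        named = [c for c in competitors if c.lower() in text]
        if named:
            gaps.setdefault(r.query, set()).update(named)
    return gaps


# Made-up sample answers, for illustration only.
sample = [
    Response("most secure coding agent", "Many teams pick OpenClaw for this."),
    Response("best tool for Python testing", "Claude Code and ECC both work well."),
    Response("most secure coding agent", "ECC ships a security-reviewer subagent."),
]

gaps = find_citation_gaps(sample, "Claude Code", ["OpenClaw", "ECC"])
for query, rivals in gaps.items():
    print(query, sorted(rivals))
```

With this sample, only “most secure coding agent” is reported as a gap, which mirrors the Prompt Discovery example above: visible for one intent, absent for another.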

| Metric | Formula | Why It Matters |
|---|---|---|
| AI Brand Mention Rate | (Brand mentions ÷ Total responses) × 100 | Raw visibility across generative outputs |
| AI Share of Voice | (Brand mentions ÷ Mentions of all brands in the category) × 100 | Competitive position benchmark |
| AI Citation Rate | (Mentions with citation link ÷ Total mentions) × 100 | Correlates with actual referral traffic |
| Answer Inclusion Rate (AIR) | (Queries where brand appears in the answer ÷ Total queries) × 100 | Core GEO performance indicator |
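
The four formulas in the table can be sketched as one small Python function. The function name, parameter names, and counts below are illustrative assumptions, not a real API or real data. The sketch also assumes that Share of Voice divides by mentions of every brand in the category, and that Citation Rate divides by the brand's own mentions.

```python
def pct(part, whole):
    """Return part/whole as a percentage, or 0.0 when the denominator is zero."""
    return 100.0 * part / whole if whole else 0.0


def visibility_metrics(total_responses, brand_mentions, all_brand_mentions,
                       mentions_with_link, queries_total, queries_with_brand):
    """Compute the four table metrics from raw counts (hypothetical inputs)."""
    return {
        "mention_rate": pct(brand_mentions, total_responses),
        "share_of_voice": pct(brand_mentions, all_brand_mentions),
        "citation_rate": pct(mentions_with_link, brand_mentions),
        "answer_inclusion_rate": pct(queries_with_brand, queries_total),
    }


# Made-up counts, for illustration only.
metrics = visibility_metrics(
    total_responses=200,
    brand_mentions=50,
    all_brand_mentions=250,
    mentions_with_link=10,
    queries_total=40,
    queries_with_brand=18,
)
print(metrics)
```

Mention rate and inclusion rate are counted at different levels (responses versus queries), so the two can diverge when each query is sampled many times.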

For a brand like Anthropic watching its community forks outpace it on GitHub, these metrics aren’t vanity numbers. They’re early warning signals.

Open Source Velocity Is a GEO Signal

The correlation between GitHub activity and AI citation rates isn’t theoretical. Research has reported a correlation coefficient of 0.925 between GitHub stars and a repository’s perceived authority in search engine algorithms. Because Google is the primary referrer to GitHub, repositories that dominate topic pages like “ai agents” or “python automation” become the default candidates for AI retrieval.

The mechanism follows a predictable pattern: constant commits signal freshness to web crawlers, topic dominance builds what researchers call “semantic density,” and as multiple AI models cross-reference the same high-authority sources (GitHub, Reddit, technical documentation), they converge on the same recommendation.

For brands, this means open-source strategy is now part of the GEO stack.

The financial math is direct. Moving from a 5% to a 25% AI citation rate in a market with 100,000 monthly AI queries generates 20,000 additional brand impressions monthly. At a Brand Awareness Value of $5 per impression in B2B SaaS, that’s $100,000 per month, or $1.2 million in annual brand value. Not potential value. Measurable value.
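
The arithmetic above can be checked with a few lines of Python. The function name and parameters are illustrative, and the $5 per impression figure is the article's assumption rather than a measured constant. Rates are passed as whole percentages so the calculation stays in integers.

```python
def annual_brand_value(monthly_queries, rate_before_pct, rate_after_pct,
                       value_per_impression):
    """Return (extra monthly impressions, monthly value, annual value)."""
    # Additional impressions gained from the citation-rate increase.
    extra_monthly = monthly_queries * (rate_after_pct - rate_before_pct) // 100
    monthly_value = extra_monthly * value_per_impression
    return extra_monthly, monthly_value, monthly_value * 12


extra, monthly, annual = annual_brand_value(
    monthly_queries=100_000,
    rate_before_pct=5,
    rate_after_pct=25,
    value_per_impression=5,  # USD per impression, as assumed in the text
)
print(extra, monthly, annual)  # 20000 100000 1200000
```

Every output scales linearly with the per-impression value, so the result is only as reliable as that one input.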

Three actions translate into citation authority:

Own the canonical version. If your project is being forked, the original must maintain the highest commit velocity and community engagement. Forks win when the original stagnates.

Build for machine consumption. Comparison matrices, structured FAQs, and entity-rich documentation aren’t just user-friendly. They’re the formats AI models parse most reliably when generating recommendations.

Treat community as infrastructure. GitHub discussions, Reddit threads, and technical reviews are the “Confirmation” signals that AI systems use to validate brand authority. A brand with no community presence has no GEO foundation.
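
As one illustration of the second action, building for machine consumption, a structured FAQ can be emitted as schema.org `FAQPage` JSON-LD. This is a sketch under an assumption the article does not state: that schema.org markup is one of the structured formats worth publishing. The helper name `faq_jsonld` is hypothetical, and the sample question and answer come from this article's own FAQ.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


faq = faq_jsonld([
    (
        "What is a Claude Code fork?",
        "A Claude Code fork is a derivative version of Anthropic's agentic "
        "coding tool, typically created to add features, reduce costs, or "
        "enable fully local execution.",
    ),
])

# The serialized object would typically sit inside a
# <script type="application/ld+json"> tag on the page.
print(json.dumps(faq, indent=2))
```

The same question-and-answer pairs can feed both the visible FAQ and the markup, which keeps the two from drifting apart.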

Conclusion

The Claude Code fork story isn’t really about a leaked file. It’s about what happens when a high-demand tool meets an ecosystem that wants to own its own stack.

OpenClaw and ECC didn’t emerge because developers wanted to copy Anthropic’s work. They emerged because developers needed customization, cost control, and data sovereignty that proprietary tools don’t offer. That demand was always there. The leak just gave it a codebase to work from.

For brands watching this play out, the practical lesson is about the shift from traditional SEO to GEO and AEO. In a world where AI assistants make category recommendations at scale, Share of Model is the metric that matters. And that metric is built through open-source velocity, structured content, and real-time visibility monitoring, not backlink counts.

The fork race isn’t slowing down. The question is whether your brand shows up as the answer when someone asks an AI which tool to use.

FAQ

What is a Claude Code fork?

A Claude Code fork is a derivative version of Anthropic’s agentic coding tool, typically created to add features, reduce costs, or enable fully local execution. Several major forks emerged following a source code exposure in March 2026.

How does Claude Code differ from its forks?

The official Claude Code is a proprietary, terminal-based tool focused strictly on professional coding tasks with enterprise-grade sandboxing. Forks like OpenClaw are model-agnostic and MIT-licensed, designed for broader life automation across messaging platforms.

What is GEO and AEO in the context of AI tools?

Generative Engine Optimization (GEO) and Answer Engine Optimization (AEO) are strategies to improve how a brand appears in AI-generated responses from tools like ChatGPT and Perplexity. In a fragmented tool ecosystem, GEO and AEO determine which product an AI recommends.

How can brands improve their visibility in AI coding tool recommendations?

Brands can improve visibility by increasing open-source activity on GitHub, structuring content in machine-readable formats (tables, FAQs, comparison matrices), and using monitoring platforms like Topify to identify and close citation gaps in AI responses.