Your Google rankings haven’t moved. Your content is still on page one. But somewhere in the last year, the traffic started quietly disappearing.

This isn’t a penalty. It’s not a core update. It’s something more structural: the way people search is changing, and Google rankings are no longer the only thing that decides whether your brand gets found.

Answer engines are now the first stop for millions of buyers. And most brands have no idea where they stand on them.

Search Engines Return Links. Answer Engines Return Decisions.

For two decades, search worked the same way. A user typed a query, Google returned ten links, and the user decided where to click. The search engine was a referee, not a participant.

Answer engines changed that contract entirely.

When someone asks ChatGPT “what’s the best project management software for a remote team,” they don’t get ten links. They get a recommendation, a rationale, and sometimes a comparison table. The AI has already done the research. The decision is largely made before the user visits any website.

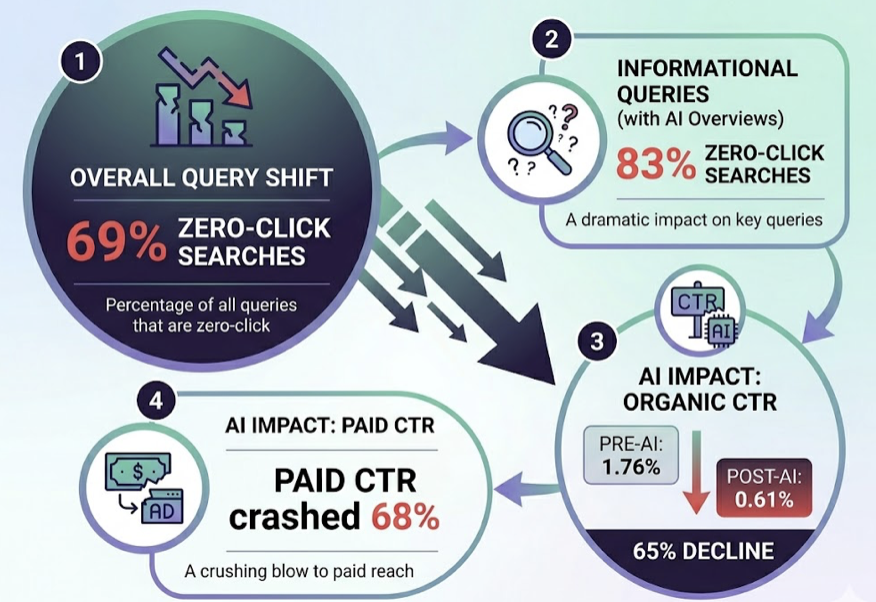

This shift has a measurable cost. Zero-click searches now account for 69% of all queries. For informational queries with Google AI Overviews, that number hits 83%. Organic click-through rates for queries where AI summaries appear have dropped from 1.76% to 0.61%, a 65% decline. Paid CTR in the same conditions has crashed by 68%.

The click-through economy isn’t dying slowly. It’s already in a different shape.

ChatGPT, Perplexity, Gemini: They’re All Answer Engines Now

The answer engine category isn’t one platform. It’s a fragmented ecosystem, and each player operates differently.

ChatGPT dominates B2B research. As of 2026, 47% of B2B buyers prefer it for vendor discovery. Its citations skew heavily toward high-authority domains: Wikipedia accounts for 47.9% of its top citations, and domains with trust scores between 97 and 100 average 8.4 citations versus just 1.6 for lower-trust domains. Authority is the primary gate.

Perplexity operates on freshness and community validation. Reddit accounts for 46.7% of its top citations, and Perplexity applies what researchers call “time decay” aggressively: content that hasn’t been updated in 30 days loses visibility rapidly. If you’re not refreshing your content, Perplexity is quietly deprioritizing it.

Google AI Overviews sits in its own category. It’s still tied to the Google index, but not in the way you’d expect. While 92% of AI Overviews link to at least one top-10 result, 43.5% of cited sources come from domains outside the top 100. E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness) functions as a binary gatekeeper here: 96% of citations come from sources with strong authority signals.

Three platforms. Three different selection logics. One shared outcome: your keyword ranking is not the deciding factor.

Why Your Google Rankings Don’t Carry Over

Here’s the number that reframes everything: only 12% of AI citations overlap with Google’s top 10 results.

That means 88% of the sources AI engines cite sit outside Google’s top 10, beyond what a traditional rank tracker is watching.

The reason is architectural. Traditional search engines use inverted indices and keyword matching. Answer engines use Retrieval-Augmented Generation (RAG) and semantic vector search. A user’s prompt gets converted into a numeric vector, which is then compared against indexed content in multi-dimensional semantic space. The engine isn’t looking for keyword matches. It’s looking for semantic proximity.
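To make “semantic proximity” concrete, here is a minimal Python sketch of the retrieval step under a toy assumption: three passages with hand-made three-dimensional vectors standing in for the output of a real embedding model. Production systems use learned embeddings with hundreds or thousands of dimensions and an approximate nearest-neighbor index. The passage names, vectors, and the `retrieve` helper are hypothetical, and cosine similarity is one common closeness measure, not necessarily the one any particular answer engine uses.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hypothetical passage embeddings. A real pipeline would get these from an
# embedding model; these toy 3-dimensional vectors are made up for illustration.
PASSAGES = {
    "remote-team PM tools compared": [0.9, 0.1, 0.2],
    "history of the Gantt chart": [0.2, 0.8, 0.1],
    "office coffee machine reviews": [0.1, 0.1, 0.9],
}


def retrieve(query_vector: list[float], k: int = 2) -> list[tuple[str, float]]:
    """Return the k passages whose vectors sit closest to the query vector."""
    scored = [(name, cosine_similarity(query_vector, vec)) for name, vec in PASSAGES.items()]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:k]


# Toy vector standing in for "best project management software for a remote team".
query = [0.85, 0.2, 0.15]
for name, score in retrieve(query):
    print(f"{score:.3f}  {name}")
```

Nothing in this sketch compares words. The top passage wins because its vector points in nearly the same direction as the query’s.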

What gets retrieved isn’t a page. It’s a passage.

A 3,000-word blog post optimized for dwell time may perform well on Google. But if the core answer is buried in paragraph twelve, a RAG system may never retrieve the passage that contains it. These systems favor extractable, fact-dense chunks, and placement matters: 44% of AI citations are pulled from the first third of a page.

| Ranking Signal | Traditional SEO | Answer Engine (AEO) |
|---|---|---|
| Backlink Volume | High weight | Moderate (entity mentions matter more) |
| Keyword Density | Moderate | Low (vector similarity, not word count) |
| Content Length | High (long-form) | Low (atomic passages preferred) |
| Schema Markup | Optional | Mission critical |
| Social Proof | Low | High (Reddit, G2 heavily cited) |

The optimization target has changed. SEO was about ranking pages. AEO is about being extractable.

What Actually Gets a Brand Cited in AI Answers

The content that earns AI citations shares a consistent set of structural properties. Research on high-performing AEO content points to what some practitioners call the CITABLE framework.

Answer first. Every piece of content should open with a 2-3 sentence summary that names the brand and states the core answer directly. This isn’t just a stylistic choice: 44% of citations are pulled from the first third of a page. If your answer isn’t there, it won’t be extracted.
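A quick way to audit the answer-first rule is to check where your core answer first appears on the page. The Python sketch below uses hypothetical helpers, not any tool’s API. It measures position by character offset as a rough proxy for “the first third of a page.” Real extraction pipelines work on parsed passages, not raw character counts.

```python
from typing import Optional


def answer_position(page_text: str, phrase: str) -> Optional[float]:
    """Where phrase first appears in page_text, as a fraction of the text's
    length (0.0 = very start). Case-insensitive; None if it never appears."""
    lowered = page_text.lower()
    index = lowered.find(phrase.lower())
    if index == -1 or not lowered:
        return None
    return index / len(lowered)


def answer_in_first_third(page_text: str, phrase: str) -> bool:
    """True if the phrase starts within the first third of the text."""
    position = answer_position(page_text, phrase)
    return position is not None and position < 1 / 3


# Hypothetical page copy: the brand's core answer opens the page.
page = "Acme is a project management tool built for remote teams. " + "Supporting detail. " * 40
print(answer_in_first_third(page, "Acme is a project management tool"))  # True
```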

Block-structured for RAG. Content broken into atomic chunks of 150-300 words, with clear H2/H3 headings, bulleted lists, and HTML tables, is significantly easier for RAG pipelines to ingest. Long narrative sections read well for humans but get skipped by machines looking for extractable facts.
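The sketch below shows one simple way to produce heading-scoped chunks like these. It assumes Markdown input where `##`/`###` headings and paragraphs are separated by blank lines. `chunk_by_heading` is a hypothetical helper, and counting words instead of model tokens is a simplification. It does not describe how any specific answer engine segments pages.

```python
import re


def chunk_by_heading(markdown: str, max_words: int = 300) -> list[str]:
    """Split Markdown into passages scoped to their nearest ## or ### heading.

    Paragraphs are packed into a passage until the next one would push the
    body past max_words; a single paragraph longer than the limit is kept
    whole rather than cut mid-sentence. The heading is repeated at the top
    of every passage so each chunk still makes sense on its own.
    """
    chunks: list[str] = []
    heading = ""
    buffer: list[str] = []

    def flush() -> None:
        # Emit the buffered paragraphs (if any) as one passage.
        if buffer:
            body = "\n\n".join(buffer)
            chunks.append(f"{heading}\n\n{body}" if heading else body)
            buffer.clear()

    # Blocks are separated by one or more blank lines.
    for block in re.split(r"\n\s*\n", markdown.strip()):
        block = block.strip()
        if not block:
            continue
        if re.match(r"#{2,3} ", block):
            flush()  # a new section starts, so close the previous passage
            heading = block
            continue
        buffered_words = sum(len(p.split()) for p in buffer)
        if buffer and buffered_words + len(block.split()) > max_words:
            flush()  # this paragraph would overflow the current passage
        buffer.append(block)
    flush()
    return chunks


sample = (
    "## Pricing\n\nPlans start at $10 per user per month.\n\n"
    "## Integrations\n\nWorks with Slack.\n\nWorks with GitHub."
)
for chunk in chunk_by_heading(sample):
    print(chunk, end="\n---\n")
```

Repeating the heading in every chunk is a deliberate design choice: a passage retrieved on its own still carries the context of the section it came from.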

Third-party validation. AI models often trust external platforms more than brand-owned sites. Presence on review platforms like G2 or Trustpilot correlates with 4.6-6.3 citations on average, compared to 1.8 for brands without that presence. Reddit, Wikipedia, and industry publications all function as trust signals.

Schema markup. Implementing Organization, Product, and FAQ schema is no longer optional. Schema creates the translation layer that helps AI systems identify entities and their relationships, increasing the chance of appearing in AI summaries by 36%.
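As a sketch of what that translation layer looks like, the Python below emits schema.org JSON-LD for an Organization and an FAQPage. The `@type` values and the properties (`name`, `url`, `sameAs`, `mainEntity`, `acceptedAnswer`, `text`) are standard schema.org vocabulary. The brand name, URLs, and helper functions are placeholders invented for illustration.

```python
import json


def organization_jsonld(name: str, url: str, same_as: list[str]) -> dict:
    """schema.org Organization entity. sameAs links the brand to its
    profiles on other sites so the entity can be matched across sources."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "sameAs": same_as,
    }


def faq_jsonld(pairs: list[tuple[str, str]]) -> dict:
    """schema.org FAQPage built from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def script_tag(data: dict) -> str:
    """Serialize JSON-LD into the <script> element embedded in page HTML.
    "</" is escaped as "<\\/" (still valid JSON) so text content can't
    close the script element early."""
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


# Placeholder brand details, invented for illustration.
org = organization_jsonld(
    "Example Co",
    "https://www.example.com",
    ["https://reviews.example.net/example-co", "https://wiki.example.org/Example_Co"],
)
faq = faq_jsonld([
    ("What is Example Co?", "Example Co is a project management tool for remote teams."),
])
print(script_tag(org))
print(script_tag(faq))
```

The printed `<script type="application/ld+json">` blocks go in the page’s HTML. The `sameAs` list is where third-party validation becomes machine-readable: it ties the brand entity to its profiles elsewhere.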

Fresh content. Perplexity’s time decay is aggressive, but freshness matters across all platforms. Content that hasn’t been updated loses ground, especially in fast-moving categories.
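A freshness audit can start as a simple date comparison. This Python sketch flags pages that haven’t been updated within a window, defaulting to the 30-day mark cited for Perplexity. The page paths, dates, and the `stale_pages` helper are hypothetical. In practice the dates would come from your CMS or your sitemap’s `lastmod` values.

```python
from datetime import date, timedelta


def stale_pages(last_updated: dict[str, date], today: date, max_age_days: int = 30) -> list[str]:
    """Return the pages last updated more than max_age_days before today, oldest first."""
    cutoff = today - timedelta(days=max_age_days)
    stale = [(updated, page) for page, updated in last_updated.items() if updated < cutoff]
    return [page for _, page in sorted(stale)]


# Hypothetical content inventory: page path -> date of last substantive update.
inventory = {
    "/pricing": date(2026, 1, 5),
    "/blog/aeo-guide": date(2025, 11, 20),
    "/features": date(2025, 12, 1),
}
print(stale_pages(inventory, today=date(2026, 1, 15)))  # ['/blog/aeo-guide', '/features']
```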

The underlying principle: write for a machine that’s looking for evidence, not a human that’s looking for a story.

Brands Already Winning in Answer Engines

Early AEO case studies share a common pattern: the brands winning in AI search treat it as a distinct channel with its own KPIs, not a byproduct of SEO.

Mentimeter, a B2B presentation SaaS, optimized for 555 informational keywords within AI Overviews and generated 124,000 ChatGPT sessions and 3,400 conversions in a single month. Their strategy focused on creating how-to content that AI could summarize cleanly, turning the brand into the go-to reference for collaborative software queries.

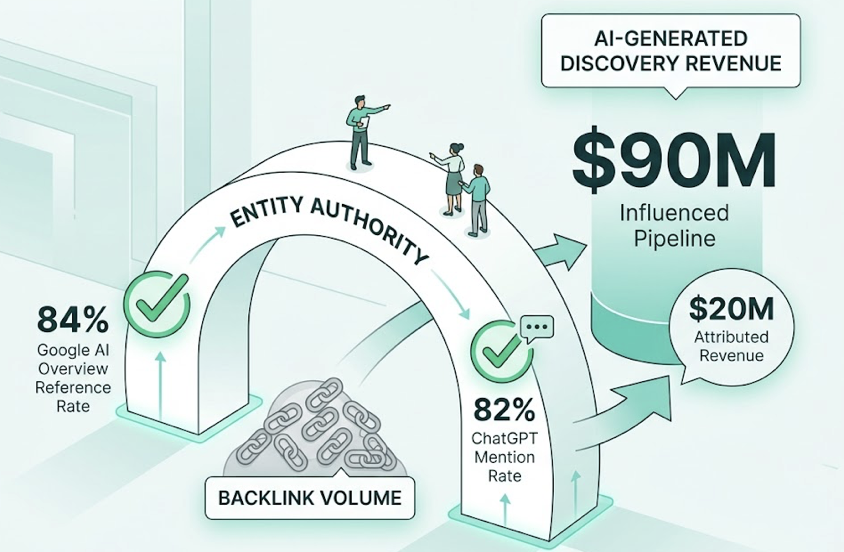

An industrial applications provider focused on entity authority over backlink volume, achieving an 84% reference rate in Google AI Overviews and an 82% mention rate in ChatGPT for its core product categories. The result: $90 million in influenced pipeline and $20 million in revenue directly attributed to AI-generated discovery.

A B2B SaaS firm grew AI-referred trials from 575 to over 3,500 per month, a 6x increase in seven weeks, by fixing broken schema, publishing 66 AEO-optimized articles, and seeding helpful comments on Reddit threads that Perplexity and ChatGPT already cited.

That last point is worth sitting with. Reddit comments became a growth lever. Not because Reddit is special, but because the AI platforms trusted it. AEO strategy follows the trust logic of the platform, not the assumptions of traditional marketing.

How to Measure Your Answer Engine Visibility

This is where most brands hit a wall.

Traditional SEO tools like Ahrefs and Google Search Console track rankings and clicks. They can’t tell you whether ChatGPT mentioned your brand when a buyer asked a comparison question yesterday. That data doesn’t exist in the standard analytics stack.

Answer engine visibility requires a different set of metrics entirely.

AI Mention Rate measures how often your brand appears across a tracked set of AI prompts. It’s the rough equivalent of impressions, but inside the model’s output rather than the SERP.

Share of Voice (AI SOV) measures your brand mentions as a percentage of total category mentions across all AI recommendations. It’s the clearest single read on your competitive position in the answer economy.

Sentiment Score tracks how AI systems describe your brand. Being mentioned as a “reliable option” is different from being mentioned with outdated pricing or incorrect feature claims. Hallucinations are a real risk.

Citation Rate measures how often AI answers include a link to your domain. A brand can be mentioned without being cited. Citations drive qualified referral traffic; mentions alone don’t.
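Once you have a log of tracked AI answers, the mention, share-of-voice, and citation metrics reduce to simple ratios. The Python sketch below assumes a hypothetical record format (`AIAnswer`) that lists which brands each answer mentioned and which domains it linked to. The brands, prompts, and domains are made up. Sentiment is left out because scoring it needs a classifier or human review.

```python
from dataclasses import dataclass, field


@dataclass
class AIAnswer:
    """One tracked prompt run on one answer engine (hypothetical record format)."""
    prompt: str
    platform: str
    brands_mentioned: list[str]
    cited_domains: list[str] = field(default_factory=list)


def visibility_metrics(answers: list[AIAnswer], brand: str, domain: str) -> dict[str, float]:
    """Mention rate, AI share of voice, and citation rate for one brand."""
    total = len(answers)
    # Answers that name the brand at least once.
    mentioned = sum(1 for a in answers if brand in a.brands_mentioned)
    # Answers that link to the brand's domain.
    cited = sum(1 for a in answers if domain in a.cited_domains)
    # Every distinct brand mention in every answer, competitors included.
    category_mentions = sum(len(set(a.brands_mentioned)) for a in answers)
    return {
        "mention_rate": mentioned / total if total else 0.0,
        "share_of_voice": mentioned / category_mentions if category_mentions else 0.0,
        "citation_rate": cited / total if total else 0.0,
    }


# Made-up tracking log for a fictional brand "Acme" and two competitors.
answers = [
    AIAnswer("best PM tool for remote teams", "ChatGPT", ["Acme", "RivalOne"], ["acme.example"]),
    AIAnswer("Acme vs RivalOne", "Perplexity", ["Acme", "RivalOne"], ["rivalone.example"]),
    AIAnswer("cheapest PM tool", "Gemini", ["RivalOne", "RivalTwo"]),
    AIAnswer("PM tool with Gantt charts", "ChatGPT", ["Acme", "RivalTwo"]),
]
print(visibility_metrics(answers, brand="Acme", domain="acme.example"))
# {'mention_rate': 0.75, 'share_of_voice': 0.375, 'citation_rate': 0.25}
```

In this made-up log, Acme appears in three of four answers but is linked in only one. That is the mention-versus-citation gap in miniature.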

Tools like Topify are built specifically for this tracking challenge, monitoring brand visibility across ChatGPT, Perplexity, Gemini, and other major AI platforms. One feature worth noting is the ability to surface “Dark Queries”: high-intent conversational prompts with zero search volume in Google but significant activity inside AI platforms. These are the questions your buyers are already asking that you can’t see in Search Console.

The brands that get ahead in answer engines aren’t necessarily the ones with the best content today. They’re the ones who know where they stand and can see where the gaps are.

Conclusion

The shift from search to answers isn’t about one algorithm update. It’s a structural change in how buyers start their research, how they form opinions, and how they make decisions.

Google rankings still matter. But they’re no longer the full picture. If an AI engine doesn’t include your brand when a buyer asks a category question, you’re invisible at the moment that matters most, regardless of where you rank on the SERP.

The brands closing that gap are the ones treating AI visibility as a measurable, manageable channel: tracking their mention rate, auditing their content structure, and building the kind of authoritative presence that machines trust.

The Invisibility Gap is real. The question is whether your brand is on the right side of it.

FAQ

What is answer engine optimization (AEO)?

AEO is the practice of structuring digital content to be extracted, cited, and recommended by AI-powered answer engines like ChatGPT, Perplexity, and Google AI Overviews. It prioritizes machine synthesizability, entity clarity, and passage-level extractability over traditional keyword ranking.

What’s the difference between AEO and SEO?

SEO focuses on ranking pages to drive clicks from search results. AEO focuses on being included in a synthesized AI answer, often in a zero-click environment. SEO relies on backlinks and keyword density; AEO relies on semantic structure, schema markup, and third-party validation. Both matter, but they require different content strategies.

How do I get cited in ChatGPT or Perplexity?

For ChatGPT, domain authority is the primary lever: more than 2,500 referring domains, presence on Wikipedia and other high-trust platforms, and an answer-first content structure. For Perplexity, freshness matters as much as authority: content should be updated at least every 30 days, and presence on Reddit and other community platforms significantly boosts citation probability.

Does Google ranking still matter?

Yes, but it’s no longer sufficient on its own. While 92% of AI Overviews cite at least one top-10 result, 43.5% of cited sources come from outside the top 100. A page can rank #1 on Google and still be bypassed by AI systems if it lacks E-E-A-T signals or machine-readable structure. The goal is to optimize for both.

How do I know if my brand is being recommended by AI?

Standard analytics tools don’t track AI mentions. You’ll need a dedicated GEO (generative engine optimization) monitoring platform to track your AI mention rate, share of voice, sentiment, and citation rate across platforms. This data is what separates brands that know their AI visibility from those that are guessing.