Your brand ranks #1 on Google. You’ve earned it. But when someone asks ChatGPT to recommend the best tool in your category, your name doesn’t come up once.

That’s not bad luck. It’s a measurement gap.

In 2026, brands operate in what researchers are calling the “synthesis economy,” where AI engines like ChatGPT, Gemini, and Perplexity don’t return a list of links. They return a synthesized answer, with a handful of cited brands, and everyone else is simply invisible. The question is no longer “where do we rank?” It’s “do we exist in the AI answer at all?”

That’s exactly what an AI visibility score solution is built to answer.

Most Brands Are Flying Blind on Their AI Visibility Score

ChatGPT now has 800 million weekly active users, doubling from 400 million in early 2025. Google Gemini logged 1.2 billion visits in October 2025 alone. Perplexity quietly crossed 60 million monthly active users. These aren’t niche tools anymore. They’re primary discovery channels.

And yet most brands have zero data on how they appear inside them.

The scale of the problem becomes clearer when you look at what’s happening to traditional search. AI Overviews now appear on over 50% of all Google queries, a 670% growth rate in under a year. Zero-click searches account for 58.5% of U.S. searches and 59.7% in the EU. When AI Overviews appear, position-one organic CTR drops by 58% to 79%.

Here’s the flip side: visitors arriving from AI platforms view 50% more pages per session and convert at rates 4 to 23 times higher than traditional organic traffic. The traffic is smaller. The intent is much higher.

That’s the gap most brands still can’t see, let alone measure.

What Actually Goes Into an AI Visibility Score Solution

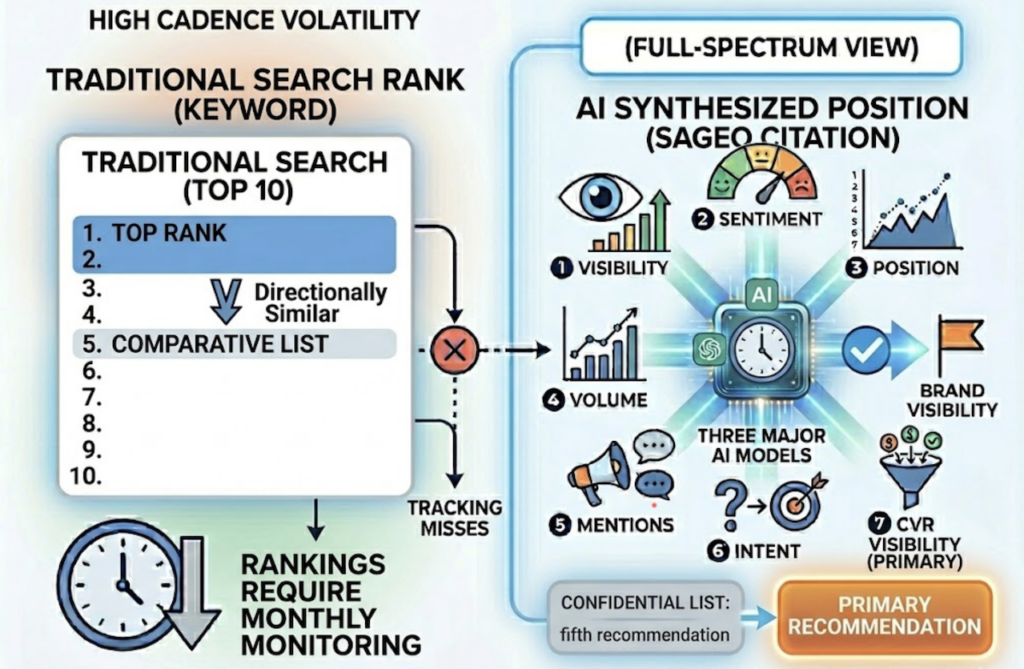

An AI visibility score (AVS) is a composite index, typically normalized from 0 to 100, that quantifies how often and how prominently a brand appears inside AI-generated answers.

It’s not a single number pulled from thin air. A professional AI visibility score solution aggregates multiple underlying signals:

Visibility (Mention Frequency): The raw percentage of prompts where your brand appears across a defined set of category-relevant queries. This is your baseline.

Position (Prominence): Where you appear within the response matters enormously. A mention in the opening paragraph as a primary recommendation carries far more weight than a footnote in a five-brand list.

Sentiment (Contextual Perception): AI platforms don’t just mention brands. They describe them. Being cited as “a trusted option” vs. “a legacy, expensive tool” is a meaningful difference that raw mention counts completely miss.

Source Citation: When an AI engine links directly to your domain as a reference, it signals higher trust than a mention alone. This is the citation layer, and it’s where authority compounds.

Volume (Share of Discovery): The estimated AI-driven impressions your brand receives for a given topic set. Think of it as share of voice, but measured in AI answers instead of ad placements.
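The first two dimensions can be approximated from raw response text. The sketch below is a rough illustration, not how any particular platform computes them: it assumes case-insensitive substring matching for mentions and uses the character offset of the first mention as a crude stand-in for prominence. The function names and the sample brands (Acme, Globex, Initech) are hypothetical.

```python
def mention_rate(responses: list[str], brand: str) -> float:
    """Share of responses (0.0 to 1.0) that mention the brand at least once."""
    if not responses:
        return 0.0
    hits = sum(1 for text in responses if brand.lower() in text.lower())
    return hits / len(responses)


def prominence(text: str, brand: str) -> float:
    """1.0 when the brand opens the answer, falling toward 0.0 for later mentions.

    Returns 0.0 when the brand is absent. Character offset is an assumed proxy.
    """
    index = text.lower().find(brand.lower())
    if index < 0 or not text:
        return 0.0
    return 1.0 - index / len(text)


# Hypothetical AI answers collected for one prompt set.
responses = [
    "Acme is the most common recommendation for small teams.",
    "Popular options include Globex, Initech, and Acme.",
    "Globex leads this category for enterprise buyers.",
]

rate = mention_rate(responses, "Acme")
scores = [prominence(text, "Acme") for text in responses]
```

Here the brand is mentioned in two of three answers, and the opening-sentence mention scores higher than the end-of-list mention. A production tracker would likely need entity matching that handles aliases and misspellings rather than plain substrings.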

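A widely used mathematical model combines four related signals as a weighted sum:

$$\mathrm{AVS} = (\mathrm{SIR} \times w_{\mathrm{SIR}}) + (\mathrm{AMV} \times w_{\mathrm{AMV}}) + (\mathrm{SOV} \times w_{\mathrm{SOV}}) + (S \times w_{S})$$

Here SIR is your summarization inclusion rate, AMV is mention velocity over time, SOV is share of voice against competitors, S is your normalized sentiment score, and each $w$ is the weight assigned to that signal.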

The score itself is just a dashboard reading. The dimensions underneath it are where the actual work happens.
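To make the weighted-sum formula concrete, here is a minimal sketch. It assumes each signal has already been normalized to the range 0 to 1 and rescales the result to 0 to 100. The weights and signal values are invented for illustration; the article does not prescribe specific weights.

```python
def ai_visibility_score(signals: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted sum of signals (each assumed to be in [0, 1]), scaled to 0-100."""
    if set(signals) != set(weights):
        raise ValueError("signals and weights must use the same keys")
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    weighted = sum(signals[name] * weights[name] for name in signals)
    # Dividing by the total weight keeps the score on 0-100 even if the
    # weights do not sum to exactly 1.
    return 100 * weighted / total_weight


# Hypothetical inputs: SIR, AMV, SOV, and S as defined in the formula above.
signals = {"SIR": 0.40, "AMV": 0.50, "SOV": 0.25, "S": 0.70}
weights = {"SIR": 0.40, "AMV": 0.20, "SOV": 0.25, "S": 0.15}

score = ai_visibility_score(signals, weights)
print(round(score, 2))  # 42.75
```

Changing the weights changes the score without any change in the underlying signals, which is why the dimensions matter more than the headline number.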

How to Measure Your AI Visibility Score: A Practical Framework

You don’t need a fully built AI visibility score platform to start. But you do need a structured approach, because unstructured sampling produces noise, not insight.

Step 1: Build a prompt library. A reliable measurement requires 50 to 150 prompts mapped to four categories: definitional (“What is the best [category] tool?”), comparative (“X vs Y alternatives”), use-case specific (“Best software for [workflow]”), and price-intent (“How much does [category] cost?”). These mirror how real users actually query AI systems.

Step 2: Cover multiple platforms. Data collected from a single AI engine is structurally misleading. A brand may score well on ChatGPT due to its training data presence but be invisible on Perplexity, which relies heavily on live web crawling. Research shows AI engines diverge on source selection in 38% to 42% of cases. You need at minimum ChatGPT, Gemini, and Perplexity.

Step 3: Establish a baseline and benchmark against competitors. Once you’ve run your first sampling round, normalize results and identify “shortlisting gaps,” the specific topics or categories where competitors appear consistently but your brand doesn’t. This tells you exactly where to focus.

Step 4: Monitor continuously, not periodically. AI model updates shift citation behavior quickly. Brands that review scores weekly or bi-weekly catch competitive shifts before they compound.
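The first three steps can be sketched in a few small functions. Everything here is an assumption made for illustration: the template wording is taken from Step 1, but the divergence metric (one minus the Jaccard overlap of cited domains), the gap thresholds, and all brand, topic, and domain names are hypothetical. This is not the method behind the 38% to 42% figure cited above.

```python
TEMPLATES = {
    "definitional": "What is the best {category} tool?",
    "comparative": "{brand} vs {competitor} alternatives",
    "use_case": "Best software for {workflow}",
    "price_intent": "How much does {category} cost?",
}


def build_prompt_library(category, brand, competitors, workflows):
    """Step 1: expand each template into intent-tagged prompts, skipping duplicates."""
    library, seen = [], set()
    for intent, template in TEMPLATES.items():
        for competitor in competitors:
            for workflow in workflows:
                prompt = template.format(
                    category=category, brand=brand,
                    competitor=competitor, workflow=workflow,
                )
                if prompt not in seen:
                    seen.add(prompt)
                    library.append({"intent": intent, "prompt": prompt})
    return library


def source_divergence(cited_a: set[str], cited_b: set[str]) -> float:
    """Step 2: 0.0 when two engines cite identical domains, 1.0 when none overlap."""
    union = cited_a | cited_b
    if not union:
        return 0.0
    return 1.0 - len(cited_a & cited_b) / len(union)


def shortlisting_gaps(mention_rates, brand, min_competitor_rate=0.5, max_brand_rate=0.1):
    """Step 3: topics where a competitor appears often and the brand rarely does.

    mention_rates maps topic -> {brand name -> mention rate in [0, 1]}.
    """
    gaps = {}
    for topic, rates in mention_rates.items():
        if rates.get(brand, 0.0) > max_brand_rate:
            continue
        leaders = sorted(
            name for name, rate in rates.items()
            if name != brand and rate >= min_competitor_rate
        )
        if leaders:
            gaps[topic] = leaders
    return gaps


library = build_prompt_library(
    "CRM", "Acme", ["Globex", "Initech"], ["lead scoring", "invoicing"]
)
divergence = source_divergence(
    {"g2.com", "acme.com", "wikipedia.org"},
    {"g2.com", "reddit.com", "wikipedia.org"},
)
gaps = shortlisting_gaps(
    {
        "pricing": {"Acme": 0.05, "Globex": 0.80, "Initech": 0.30},
        "integrations": {"Acme": 0.60, "Globex": 0.70},
    },
    brand="Acme",
)
```

With these inputs the library holds six prompts across the four intent categories, so reaching the 50 to 150 range would take more templates, competitors, and workflows. The gap report flags "pricing" as a topic where Globex is shortlisted and Acme is not.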

Here’s a counterintuitive finding worth understanding: research into AI citation behavior shows that AI engines frequently bypass top-ranked Google results if the content is poorly structured, instead citing sites from position 11 or lower that provide clear tables, lists, or direct definitions. This “Page 2 Anomaly” means smaller brands with well-structured content can outperform established players in AI visibility even without dominant backlink profiles.

That changes the optimization calculus significantly.

5 Signals That Your AI Visibility Score Solution Is Working

Structural content improvements take time to register in AI systems. Expect a 4- to 8-week window before changes in your AI visibility score reflect real optimizations. Here’s what you’re watching for:

1. Rising mention rate on target prompts. Your brand starts appearing in a higher percentage of the category queries you’re tracking. This is the most direct indicator.

2. Positional advancement. You move from appearing fifth in a comparative list to being introduced as a primary recommendation. Position matters inside synthesized answers in a way that’s directionally similar to, but mechanically different from, traditional keyword ranking.

3. Sentiment shift. The language AI engines use to describe your brand changes from generic or neutral to authority-signaling. Words like “trusted,” “widely used,” or “recommended for” indicate positive momentum in how LLMs classify your entity.

4. Citation ownership. AI platforms begin linking directly to your domain for specific claims, statistics, or definitions rather than routing through third-party review sites. This is the clearest signal that your content is now seen as a primary source.

5. Attributable referral traffic. While still a fraction of total traffic, inbound visits from Perplexity, ChatGPT, and Google AI Overviews trend upward with high engagement metrics. High pages-per-session from AI-referred visitors is a strong indicator of intent alignment.

None of these signals are meaningful in isolation. Tracked together on an AI visibility score dashboard, they tell a coherent story about brand trajectory in the generative discovery layer.

The Tools That Power a Real AI Visibility Score Dashboard

The market for AI visibility score software has sorted itself into three tiers.

Single-platform trackers give you one engine’s data. Lightweight and affordable, but structurally limited given the 38% to 42% cross-engine divergence rate.

Multi-dimensional analytics suites cover multiple platforms and track several dimensions simultaneously. This is where most serious marketing teams operate.

Full-stack solutions combine tracking, analysis, and execution into one workflow. These tools measure visibility and then act on the results.

Here’s how the leading options compare:

| Tool | Price/Month | Engine Coverage | Strongest Use Case |
|---|---|---|---|
| Topify | $99-$199 | 7+ Platforms | SaaS teams needing intent, citation, and sentiment analytics |
| BrightEdge Catalyst | Custom | AIO, ChatGPT, Perplexity | Fortune 500 teams on existing BrightEdge infrastructure |
| SE Ranking | $189 (Core) | ChatGPT, Gemini, Perplexity | All-in-one SEO teams adding AI visibility tracking |
| Peec AI | ~$105 | Multi-model | Startups focused on citation and sentiment monitoring |

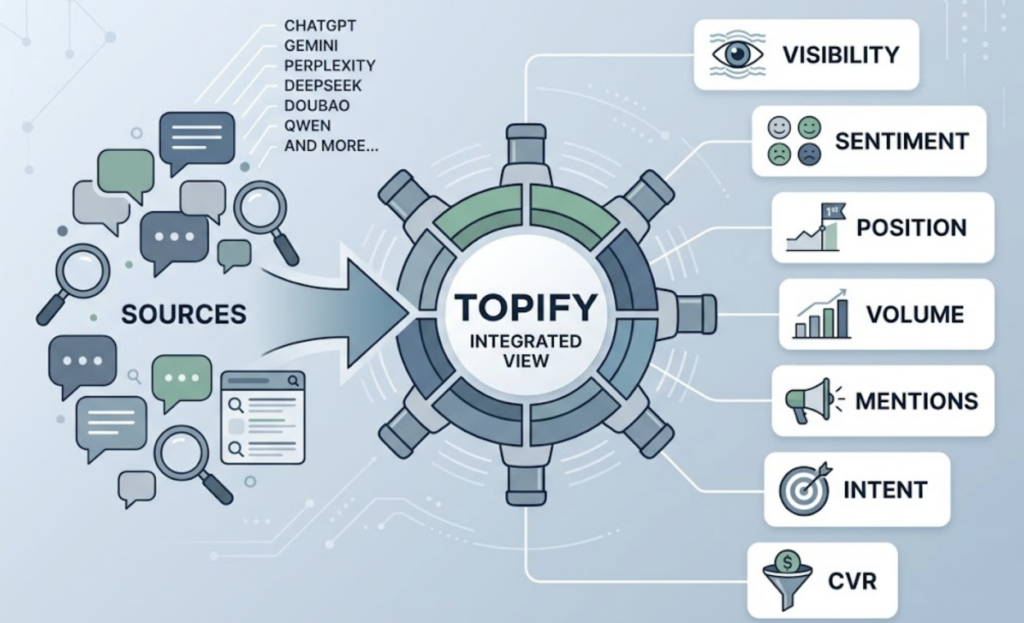

Topify runs its AI visibility score analytics across ChatGPT, Gemini, Perplexity, DeepSeek, Doubao, Qwen, and others, covering the major markets where enterprise and consumer discovery actually happens. Its seven-dimension tracking index measures visibility, sentiment, position, volume, mentions, intent, and CVR simultaneously, not as separate reports but as an integrated view.

Two features differentiate it at the platform level. The AI Volume Analytics module estimates conversational query volume based on actual AI platform usage patterns rather than traditional keyword search volume, which tends to significantly undercount AI-native intent. The Source Analysis feature tracks which third-party domains AI engines are citing for your category, making content gap identification systematic rather than guesswork.

For teams that need more than data, Topify’s one-click execution layer lets you define optimization goals in plain English and deploy the strategy without manual workflows. Pricing starts at $99/mo for the Basic plan (30-day trial, 100 prompts, 4 projects), $199/mo for Pro (250 prompts, 10 seats), and Enterprise from $499/mo with dedicated support.

5 Mistakes That Tank Your AI Visibility Score Before You Even Start

Most brands don’t fail at AI visibility optimization because of bad strategy. They fail because of structural errors in how they measure and approach the problem.

Tracking only one platform. Optimizing solely for ChatGPT creates a systematic blind spot. AI engines diverge on source selection 38% to 42% of the time. A brand invisible on Perplexity is missing a real audience, regardless of its ChatGPT score.

Running one-time audits instead of continuous monitoring. A single measurement tells you where you stood on a specific day. AI models update frequently, and competitive shifts happen between audits. Visibility only becomes strategically useful as a trendline.

Ignoring sentiment. A brand appearing in 70% of prompts with consistently negative framing (“the expensive legacy option”) has a high mention rate and a damaged position. The AI visibility score analytics layer must include sentiment as a core dimension, not an afterthought.

Assuming Google rank predicts AI rank. Research on 15 brands across competitive categories found that top-10 Google results appear in ChatGPT responses only 62% of the time. The correlation between Google position and AI mention position is essentially zero (0.034). These are separate signals requiring separate optimization strategies.

Blocking AI crawlers or using JavaScript-heavy rendering. If agents such as PerplexityBot or ChatGPT-User can’t fetch and read your pages, those engines have nothing of yours to retrieve or cite. Technical accessibility is table stakes for any AI visibility score solution to actually work.

That last one is the most common mistake, and the cheapest to fix.
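A quick way to check for accidental crawler blocks is to run your robots.txt through Python’s standard-library parser. The sketch below uses only the two user agents named above; the robots.txt content and the URL are made-up examples, and the check covers robots.txt rules only, not rendering or firewall issues.

```python
from urllib.robotparser import RobotFileParser

# User agents named in this article; extend the list for other engines.
AI_USER_AGENTS = ["PerplexityBot", "ChatGPT-User"]


def blocked_ai_agents(robots_txt: str, url: str) -> list[str]:
    """Return the AI user agents that robots.txt forbids from fetching the URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in AI_USER_AGENTS if not parser.can_fetch(agent, url)]


# Hypothetical robots.txt that blocks one AI crawler and allows everyone else.
robots_txt = """\
User-agent: PerplexityBot
Disallow: /

User-agent: *
Allow: /
"""

blocked = blocked_ai_agents(robots_txt, "https://example.com/pricing")
print(blocked)  # ['PerplexityBot']
```

An empty list means none of the listed agents is blocked by robots.txt for that URL. Removing or narrowing the offending `Disallow` rule is usually a one-line change.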

Conclusion

An AI visibility score solution isn’t a nice-to-have analytics feature. In 2026, it’s the measurement infrastructure for a traffic channel that’s growing faster than any brand’s current strategy accounts for.

The brands that will maintain relevance in the synthesis economy are the ones treating AI visibility as an operational function: measurable, tracked continuously, and tied to real business outcomes. That means a structured prompt library, multi-platform coverage, and a platform that tracks not just mentions but position, sentiment, citation, and intent together.

The goal isn’t to rank on a page. It’s to be the entity an AI cites when someone asks a question in your category.

FAQ

What is an AI visibility score solution? An AI visibility score solution is a combination of technology and methodology used to measure how often and how prominently a brand appears in generative AI answers across platforms like ChatGPT, Gemini, and Perplexity. It moves beyond traditional SEO to quantify a brand’s share of voice in the conversational discovery layer.

How is an AI visibility score calculated? It’s typically a weighted index from 0 to 100 that aggregates mention frequency, position within the AI response, sentiment, citation share, and estimated volume of AI queries for your category. Advanced systems apply different weights to each dimension based on strategic priorities.

How often should I check my AI visibility score? For stable industries, monthly monitoring is a reasonable floor. For competitive or fast-moving categories, weekly or bi-weekly reviews are recommended to detect model updates and competitive shifts before they compound.

What’s the difference between AI visibility score and SEO rank? SEO rank measures your URL’s position in a list of links for a keyword. AI visibility score measures how an LLM classifies and cites your brand within a synthesized prose answer. They use different signals and respond to different optimization levers.

How much does an AI visibility score solution cost? Entry-level tools start around $89 to $105 per month for basic tracking. Professional tiers range from $199 to $499 per month. Enterprise-grade solutions with custom data pipelines can exceed $1,500 per month. Topify starts at $99/mo with a 30-day trial.

What’s the fastest way to improve my AI visibility score? Add original statistics and unique data to your content, use clear heading hierarchies (H1 through H3), place direct answers in the first 100 words of key pages, and ensure your brand has a presence on authoritative third-party sites like industry review platforms and Wikipedia. Site speed matters too: pages with a First Contentful Paint under 0.4 seconds are cited at roughly 3 times the rate of slower pages.

Is there a checklist for AI visibility optimization? Yes. Optimize for FCP under 0.4 seconds, implement schema markup (Organization, Article, Person), update content at least every 90 days, structure pages with short sections of 100 to 150 words that lead with direct answers, and ensure AI crawlers like PerplexityBot are not blocked in your robots.txt.
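For the schema markup item on that checklist, a minimal sketch of generating Organization markup as JSON-LD might look like this. The `@context`, `@type`, `name`, `url`, and `sameAs` keys come from the schema.org vocabulary; the company name and URLs are placeholders, and the helper function name is hypothetical.

```python
import json


def organization_jsonld(name: str, url: str, same_as: list[str]) -> str:
    """Serialize a schema.org Organization description as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "sameAs": same_as,  # profiles on third-party sites that refer to the same entity
    }
    return json.dumps(data, indent=2)


# Placeholder values for illustration only.
markup = organization_jsonld(
    "Acme Analytics",
    "https://example.com",
    ["https://www.linkedin.com/company/example"],
)

# Wrap the JSON-LD in the script tag that goes in the page's HTML.
script_tag = f'<script type="application/ld+json">\n{markup}\n</script>'
```

The same pattern extends to Article and Person markup by changing `@type` and the properties supplied.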