You searched “best AI visibility tracking tool,” spent an hour reading landing pages, and ended up with five browser tabs open. Each tool promises a “score.” None of them explains what the score actually measures, how it’s calculated, or what you’re supposed to do when it drops.

That’s not a you problem. It’s a market problem. Most AI visibility score software was built to show data, not to diagnose visibility gaps. And in a landscape where McKinsey projects $750 billion in U.S. revenue will flow through AI-powered search by 2028, “showing data” isn’t enough.

Here’s how to tell the difference.

Most “AI Visibility” Dashboards Show Activity, Not a Score — Here’s the Difference

A mention count is not a score. Knowing your brand appeared in 12 out of 50 ChatGPT responses this week tells you something, but it doesn’t tell you whether that’s good, whether it’s improving, or what’s causing it.

Real AI visibility score software translates raw AI behavior into a weighted, multi-dimensional index. It tells you not just if you appeared, but where in the response, how you were described, and why a competitor consistently outranks you. That’s the gap most teams still can’t see.

The stakes are concrete. Only 16% of brands have implemented systematic tracking for AI search performance, even as consumer behavior has already shifted. Brands without a structured score aren’t flying blind by choice; they simply don’t know the instrument panel exists.

What Is AI Visibility Score Software, and How Does It Actually Work?

AI visibility score software measures how often, how prominently, and how favorably your brand appears in AI-generated answers across platforms like ChatGPT, Gemini, Perplexity, and others.

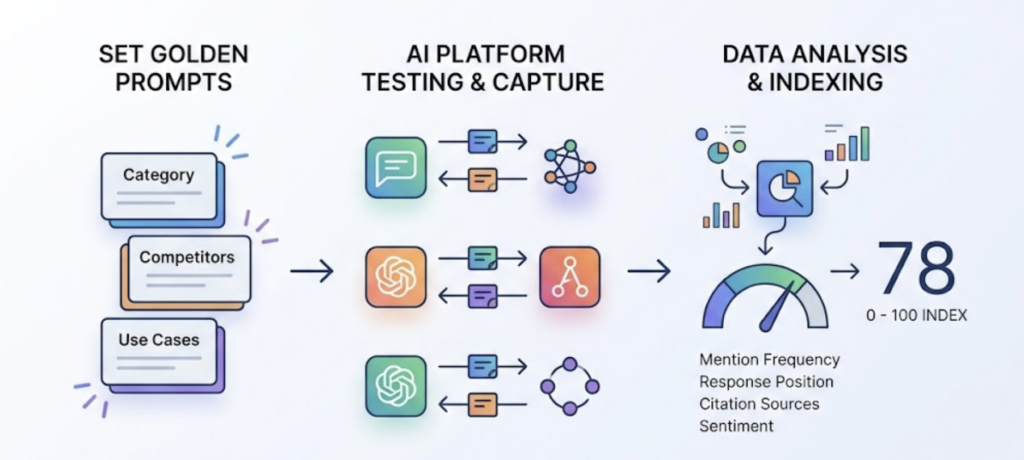

The mechanics work like this: the software defines a set of “golden prompts” tied to your category, competitor comparisons, and audience use cases. It then fires those prompts repeatedly across multiple AI platforms, captures the responses, and analyzes where your brand lands. That raw data gets normalized into a 0–100 index using a weighted formula that combines mention frequency, position in the response, citation sources, and sentiment.
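
To make the weighted formula concrete, here is a minimal sketch of how the four inputs named above could roll up into a 0–100 index. The weights, the `PromptRun` fields, and the reciprocal-rank position credit are all illustrative assumptions; no vendor's actual formula is implied.

```python
from dataclasses import dataclass
from typing import List, Optional

# Hypothetical weights for illustration only; they sum to 1.0.
WEIGHTS = {"frequency": 0.40, "position": 0.30, "citation": 0.15, "sentiment": 0.15}


@dataclass
class PromptRun:
    mentioned: bool            # did the brand appear in this answer?
    position: Optional[int]    # 1-based rank among brands mentioned, None if absent
    cited_own_site: bool       # did a citation point at the brand's own domain?
    sentiment: float           # -1.0 (negative) to 1.0 (positive)


def visibility_score(runs: List[PromptRun]) -> float:
    """Average each component over ALL runs (absent = 0), then weight to 0-100."""
    if not runs:
        return 0.0
    n = len(runs)
    hits = [r for r in runs if r.mentioned]
    parts = {
        "frequency": len(hits) / n,
        # Reciprocal rank: position 1 earns full credit, position 4 earns 0.25.
        "position": sum(1.0 / r.position for r in hits if r.position) / n,
        "citation": sum(1 for r in hits if r.cited_own_site) / n,
        # Rescale sentiment from [-1, 1] to [0, 1].
        "sentiment": sum((r.sentiment + 1) / 2 for r in hits) / n,
    }
    return round(100 * sum(WEIGHTS[k] * parts[k] for k in WEIGHTS), 1)
```

Averaging over every run, not just the runs where the brand appeared, keeps a single lucky first-place mention from inflating the score.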

What makes this technically different from SEO tracking is that AI responses are probabilistic, not static. The same prompt can produce a different answer on two consecutive runs. So the score is directional, not a hard count. Repeated sampling reveals stable patterns that tell you what the AI “believes” about your brand.
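
One way to see why the score is directional is to put an uncertainty range around a mention rate. The sketch below uses the Wilson score interval, a standard statistical method for proportions; choosing it here is an assumption, not something any particular tool is known to use.

```python
import math
from typing import Tuple


def wilson_interval(hits: int, runs: int, z: float = 1.96) -> Tuple[float, float]:
    """Approximate 95% interval (z = 1.96) for the true mention rate."""
    if runs <= 0:
        raise ValueError("runs must be positive")
    p = hits / runs
    denom = 1 + z * z / runs
    center = (p + z * z / (2 * runs)) / denom
    margin = (z / denom) * math.sqrt(p * (1 - p) / runs + z * z / (4 * runs * runs))
    return max(0.0, center - margin), min(1.0, center + margin)


# The earlier example: the brand appeared in 12 of 50 sampled responses.
low, high = wilson_interval(12, 50)
print(f"observed 24%, plausible range {low:.0%} to {high:.0%}")
```

With 50 samples, an observed 24% is compatible with a true rate anywhere from roughly 14% to 37%, which is why a single week's swing of a few points is rarely a signal on its own.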

ChatGPT alone processes over 2.5 billion prompts daily and holds an 80.49% share of the AI chatbot market. The volume of invisible brand-relevant conversations happening right now, without your knowledge, is the actual reason a score matters.

The 7 Metrics That Actually Matter in an AI Visibility Score

A well-built AI visibility score isn’t a single number. It’s a composite. Here are the seven dimensions that serious software should measure to give you an accurate picture of your brand visibility in AI-generated answers:

| Metric | What It Measures | Why It Matters |
|---|---|---|
| Visibility | % of prompts where your brand appears | Baseline presence across AI conversations |
| Sentiment | Positive vs. negative language used to describe you | AI may be recommending you with caveats you’ve never seen |
| Position | Where in the response your brand ranks | First-mentioned brands get “direct-answer” language; later mentions get “also consider” framing |
| Volume | Number of high-intent prompts relevant to your category | Determines the size of your opportunity, not just your current share |
| Mentions | Raw count of brand name appearances per response | Tracks frequency and co-occurrence with competitors |
| Intent | The user goal behind the prompt (informational, purchase, comparison) | High-intent mentions drive pipeline; informational mentions drive awareness |
| CVR (Conversion Visibility Rate) | Estimated likelihood an AI answer drives user action toward your brand | The bridge between AI mentions and business outcomes |

Topify is one of the few platforms that tracks all seven dimensions in a single dashboard, which matters because a brand can score high on visibility but low on sentiment, and the combined picture tells a very different story than either metric alone.

A Practical Checklist Before You Choose AI Visibility Score Software

Not all tools are built the same. Here’s what to verify before committing:

Platform coverage. Does it track ChatGPT, Gemini, Perplexity, and regional platforms like DeepSeek or Qwen? As of early 2026, the AI chatbot landscape is fragmented. A tool that only covers ChatGPT is missing a significant portion of AI-referred discovery.

Score transparency. Can you see how the score is calculated? A number without a methodology is a marketing claim, not a measurement.

Competitor benchmarking. Can you track your position relative to competitors, not just in absolute terms? AI responses are zero-sum at the top. Knowing you appeared in 40% of responses means nothing if your closest competitor appeared in 70%.

Prompt representativeness. Does the tool use prompts based on real user behavior, or canned queries written by the vendor? Tiny changes in phrasing produce different AI outputs. Scripted prompts can inflate scores that real-world searches won’t replicate.

Citation-level data. Does it show you which sources the AI is citing to support mentions of your brand? A brand can appear in an AI response but get zero traffic because the citation links to a scraper or an outdated third-party directory, not your site. This is called source hijacking, and most dashboards that rely on API-only data miss it entirely.

Update frequency. AI citation patterns shift. 76.4% of ChatGPT’s top-cited pages were updated within the last 30 days. A tool that refreshes monthly is reporting on a reality that has already changed.
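
The citation-level check in the list above is easy to prototype once you have the URLs an AI answer cited. This sketch splits citations into your own domains versus third parties; the function name and the `example.com` domains are hypothetical placeholders.

```python
from typing import Dict, Iterable, List
from urllib.parse import urlparse


def citation_breakdown(cited_urls: Iterable[str], own_domains: Iterable[str]) -> Dict[str, List[str]]:
    """Group cited URLs by whether they point at a domain the brand controls."""
    own = {d.lower() for d in own_domains}
    result: Dict[str, List[str]] = {"own": [], "third_party": []}
    for url in cited_urls:
        host = (urlparse(url).hostname or "").lower()
        if host.startswith("www."):
            host = host[4:]
        # Match the domain itself or any subdomain of it (docs.example.com).
        is_own = any(host == d or host.endswith("." + d) for d in own)
        result["own" if is_own else "third_party"].append(url)
    return result


# Hypothetical citations captured from one AI answer.
report = citation_breakdown(
    ["https://www.example.com/pricing", "https://scraper.example.net/copy"],
    own_domains=["example.com"],
)
print(len(report["own"]), "own,", len(report["third_party"]), "third-party")
```

A high third-party share on prompts where you are mentioned is the pattern to investigate first, since those mentions send the click somewhere else.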

How to Improve Your AI Visibility Score: 4 Levers That Actually Move the Number

Improving your score is an engineering problem, not a content volume problem. Here are the four levers that produce measurable changes:

1. Fix what the AI is citing about you. Research from NVIDIA shows that page-level content chunking achieves the highest AI retrieval accuracy (0.648), and roughly 90% of ChatGPT citations come from pages beyond the first two pages of traditional search results. That means your most AI-cited content may not be what you think. Use source analysis to find what the AI is actually pulling from, then optimize those specific pages, not your homepage.

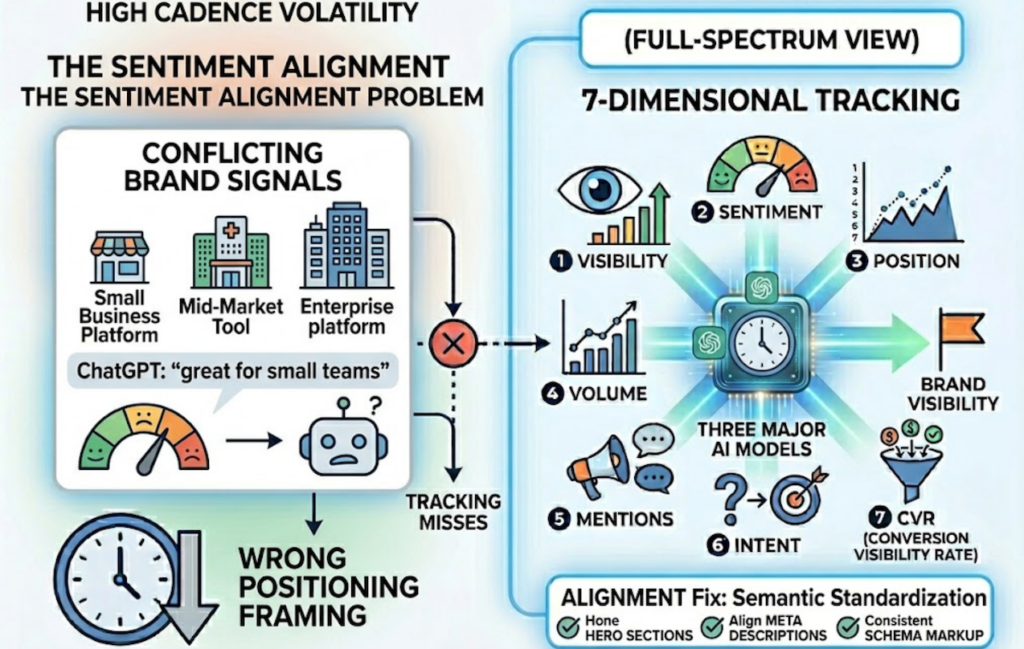

2. Correct how the AI describes you. Sentiment analysis often surfaces surprises. If ChatGPT describes your enterprise platform as “great for small teams,” that’s not a compliment. It’s a positioning signal that’s leaking into AI answers and reaching buyers with the wrong frame. The fix is semantic standardization: align your hero sections, meta descriptions, and schema markup around a single, consistent entity definition. AI models that encounter conflicting signals across your web presence default to a generalized description, which tends to favor whoever has cleaner, more consistent signals.

3. Target high-volume, high-intent prompts. Not all prompts are equal. A brand that appears in a high-intent comparison query (“best [category] for enterprise teams”) is generating pipeline. A brand that appears in a general informational query (“what is [category]”) is building awareness. Topify’s High-Value Prompt Discovery continuously surfaces the prompts driving the most AI search volume in your category, so you’re optimizing for questions that actually move the needle.

4. Track competitor position shifts weekly. AI recommendations aren’t static. A competitor can go from second-mention to first-mention in three weeks based on a content update or a new press mention that AI retrieval picks up. Dynamic competitor benchmarking lets you spot these shifts before they compound. One B2B SaaS team using a structured GEO framework increased their AI citation rate from 8% to 24% in 90 days, generating 47 qualified leads at 2.8x higher conversion than previous channels.
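
Weekly competitor tracking, the fourth lever, comes down to comparing first-mention rates between two sampling windows. Here is a rough sketch, assuming each captured answer has already been reduced to an ordered list of brand names; the function names and the 10-point threshold are illustrative choices.

```python
from collections import Counter
from typing import Dict, List


def first_mention_rate(responses: List[List[str]]) -> Dict[str, float]:
    """Share of answers in which each brand was the first one mentioned."""
    total = len(responses)
    if total == 0:
        return {}
    firsts = Counter(r[0] for r in responses if r)
    return {brand: count / total for brand, count in firsts.items()}


def position_shifts(last_week: List[List[str]], this_week: List[List[str]],
                    threshold: float = 0.10) -> Dict[str, float]:
    """Brands whose first-mention rate moved by at least `threshold`."""
    before = first_mention_rate(last_week)
    after = first_mention_rate(this_week)
    shifts = {}
    for brand in set(before) | set(after):
        delta = after.get(brand, 0.0) - before.get(brand, 0.0)
        if abs(delta) >= threshold:
            shifts[brand] = round(delta, 3)
    return shifts


# Hypothetical data: "Rival" overtakes "You" as the first mention.
last_week = [["You", "Rival"]] * 4
this_week = [["Rival", "You"]] * 3 + [["You", "Rival"]]
print(position_shifts(last_week, this_week))
```

Because responses are probabilistic, a threshold that is too tight will flag noise; widening it or increasing the sample per week are the two obvious knobs.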

3 Common Mistakes Brands Make When They First Start Tracking AI Visibility Scores

Mistake 1: Tracking only one platform. Teams default to ChatGPT because it’s the most visible. But Google AI Overviews reaches 2 billion monthly users, and Perplexity has become the default research tool for a significant segment of high-income professionals and senior decision-makers. A score that reflects only one platform is a partial view of a multi-platform reality.

Mistake 2: Treating “mentioned” as “recommended.” There’s a meaningful difference between being mentioned fifth in a list and being the first brand recommended with a direct-answer framing. AI visibility score software that only counts mentions without tracking position and sentiment is systematically under-reporting what matters. Position 1 in an AI response correlates directly with the “direct-answer language” that triggers user action. Position 4 and beyond gets “other options” framing.

Mistake 3: Setting and forgetting the prompt set. The prompts your audience uses to find brands in your category change. New use cases emerge. Competitor campaigns shift the vocabulary. AI citation patterns exhibit a strong freshness bias — AI-cited content is on average 368 days newer than traditionally ranked content. If you defined your tracking prompts six months ago and haven’t updated them, you’re measuring a market that has already evolved.

AI Visibility Score Software Pricing: What You Should Expect to Pay

Pricing in this category is typically structured around three variables: the number of prompts tracked, the number of AI platforms covered, and the number of seats or projects.

Entry-level tools start around $29 to $89 per month and generally cover one or two platforms with a limited prompt set. They’re useful for initial exploration but often lack the diagnostic depth to explain why your score is what it is.

Mid-tier platforms in the $99 to $399 range tend to offer multi-platform coverage and competitor benchmarking. This is where most in-house marketing teams and mid-sized agencies operate.

Topify’s pricing sits at this tier with more depth than most: the Basic plan starts at $99/month (annual) and includes 100 prompts, tracking across ChatGPT, Perplexity, and AI Overviews, 9,000 AI answer analyses, and 4 projects. The Pro plan at $199/month scales to 250 prompts, 22,500 analyses, and 10 seats. Enterprise plans start at $499/month and include dedicated account management and custom configurations.

The ROI math is worth running. AI-referred traffic converts at 14.2% versus 2.8% for organic search, with average engagement time nearly four times longer. For a B2B team generating even 10 AI-referred leads per month at a higher close rate, the math on a $199/month tool closes quickly.
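
Here is one way to run that math. The conversion rates and the $199 price come from the figures above; the close rate and average deal value are placeholder assumptions you should replace with your own numbers.

```python
def conversion_lift(ai_rate: float, organic_rate: float) -> float:
    """How many times better AI-referred traffic converts than organic."""
    return ai_rate / organic_rate


def monthly_roi(leads: int, close_rate: float, avg_deal_value: float, tool_cost: float) -> float:
    """Return on tool spend: (attributed revenue - cost) / cost."""
    revenue = leads * close_rate * avg_deal_value
    return (revenue - tool_cost) / tool_cost


# Rates quoted in the article: 14.2% (AI-referred) vs. 2.8% (organic).
print(f"conversion lift: {conversion_lift(0.142, 0.028):.1f}x")

# Assumed inputs: 10 leads/month, 10% close rate, $1,990 average deal value.
print(f"monthly ROI: {monthly_roi(10, 0.10, 1990, 199):.1f}x the tool cost")
```

Under those assumed inputs, a single closed deal per month covers the subscription several times over; the result is only as good as the attribution behind the lead count.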

Conclusion

Most brands don’t have an AI visibility problem. They have a measurement problem. Without a structured score tracking multiple dimensions across multiple platforms, you’re making optimization decisions based on incomplete information in a channel that’s already influencing purchase decisions at scale.

The shift from “ranking” to “being cited” requires different infrastructure. Real AI visibility score software doesn’t just tell you your number. It tells you why the number is what it is, which sources the AI trusts, how competitors are positioned relative to you, and which prompts are worth winning. That’s what separates a diagnostic tool from a dashboard.

Get started with Topify to see where your brand stands across the major AI platforms today.

FAQ

Q: What is AI visibility score software? A: It’s a category of tools that measures how often, how prominently, and how favorably your brand appears in AI-generated answers across platforms like ChatGPT, Gemini, and Perplexity. It works by firing a defined set of prompts across AI platforms, capturing the responses, and normalizing the data into a weighted score across dimensions like visibility, sentiment, position, and citation sources.

Q: How do I measure my brand’s AI visibility score? A: The most reliable method is using dedicated AI visibility score software that runs repeated sampling across multiple platforms. You define a prompt set tied to your category and use cases, the software executes those prompts, and the resulting data is aggregated into a score. Single-run checks in ChatGPT don’t produce reliable data because AI responses are probabilistic and vary across sessions.

Q: How often should I check my AI visibility score? A: Weekly tracking is the practical standard for most marketing teams. AI citation patterns can shift in two to three weeks based on content updates, new competitor press mentions, or changes in how platforms weight sources. Monthly reporting is a reasonable cadence for leadership summaries, but weekly data is necessary to catch early changes before they compound.

Q: What’s a good AI visibility score benchmark? A: Benchmarks vary by category competitiveness and platform. A general rule: appearing in more than 30% of relevant prompts is a solid baseline for established brands in low-to-mid competition categories. In highly competitive SaaS or B2B categories, top performers typically appear in 50 to 70% of prompts. More important than the absolute score is your position relative to direct competitors and your trend over the past 30 to 90 days.