You’re ranking on page one. Traffic looks stable. The quarterly report shows green.

But when a potential customer asks ChatGPT “what’s the best [your category] tool?”, your brand doesn’t appear. Not on the first response. Not on the third. Not at all.

That’s not a content problem. That’s a measurement problem, and AI visibility score analytics is how you start solving it.

What AI Visibility Score Analytics Actually Measures (And Why It’s Not a Single Number)

AI visibility score analytics is a multi-dimensional tracking system that measures how often your brand appears in AI-generated answers, how prominently it’s placed, and what the surrounding narrative says about you.

It’s not a single ranking. It’s a composite index built from several interconnected signals, each telling a different part of the story.

This distinction matters because the underlying mechanics of AI search are fundamentally different from traditional search. AI search tools captured between 12% and 15% of global search market share by end of 2025, up from roughly 5% at the start of that year. Google’s share dipped below 90% for the first time in a decade. The platforms driving this shift don’t work like search engines. They synthesize.

A traditional search engine points. A generative model answers.

That shift is why your Google rank stops being a reliable proxy for AI presence. Up to 80% of sources cited by ChatGPT don’t appear anywhere in Google’s top 100 results. The two ecosystems are running on different selection criteria.

The 7 Metrics Behind a Complete Brand Visibility Generative Search Score

Topify’s seven-metric framework gives a full picture of where a brand actually stands in the generative search landscape:

Visibility: The percentage of sampled AI queries that include your brand in the response. Industry analysts suggest investigating if this falls below 5% on your core queries.

Sentiment: The tone of AI language when it mentions you. A 0-100 score that tracks whether you’re being recommended, described neutrally, or quietly undermined. Remediation is typically needed if more than 20% of mentions carry negative framing.

Position: Where your brand lands within the response relative to competitors. First mention carries meaningfully more conversion weight.

Volume: The estimated density of AI search queries relevant to your brand category, based on actual AI search behavior rather than inferred keyword data.

Mentions: Raw frequency of brand references across platforms. Useful for trend-spotting even when Visibility is stable.

Intent: The type of prompt your brand is appearing in. Being cited in “I need to solve X” prompts is a different signal than appearing in “what is X” queries.

CVR (Conversion Visibility Rate): The estimated likelihood that an AI answer is directing users toward a branded interaction. AI referral visitors convert at 4.4x the rate of traditional organic search visitors, and in B2B SaaS contexts that multiplier can reach 23x.

No single metric tells the full story. A brand with high Visibility and poor Sentiment is getting mentioned and quietly buried.
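The two numeric thresholds quoted above (Visibility below 5%, negative framing above 20% of mentions) can be checked together in a few lines. This is a minimal sketch, not Topify’s scoring logic: the `MetricSnapshot` type, the `review_flags` function, and the flag names are hypothetical, and only the two threshold values come from the metric descriptions.

```python
from dataclasses import dataclass


@dataclass
class MetricSnapshot:
    """Hypothetical container for two of the seven metrics."""
    visibility_pct: float        # share of sampled prompts that mention the brand
    negative_mention_pct: float  # share of mentions that carry negative framing


# Threshold values taken from the Visibility and Sentiment descriptions above.
VISIBILITY_FLOOR_PCT = 5.0
NEGATIVE_MENTION_CEILING_PCT = 20.0


def review_flags(snapshot: MetricSnapshot) -> list[str]:
    """Return the follow-up actions a snapshot triggers (flag names are illustrative)."""
    flags = []
    if snapshot.visibility_pct < VISIBILITY_FLOOR_PCT:
        flags.append("investigate_visibility")
    if snapshot.negative_mention_pct > NEGATIVE_MENTION_CEILING_PCT:
        flags.append("remediate_sentiment")
    return flags


# High Visibility with poor Sentiment still raises a flag.
print(review_flags(MetricSnapshot(visibility_pct=70.0, negative_mention_pct=35.0)))
```

Checking the metrics side by side is the point: a snapshot that looks healthy on Visibility alone can still need attention.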

Most Brands Are Flying Blind on Generative Search Metrics

The standard approach is still a spot check. Someone on the team opens ChatGPT, types a competitor query, and reports back at the next standup.

That’s not analytics. It’s anecdote.

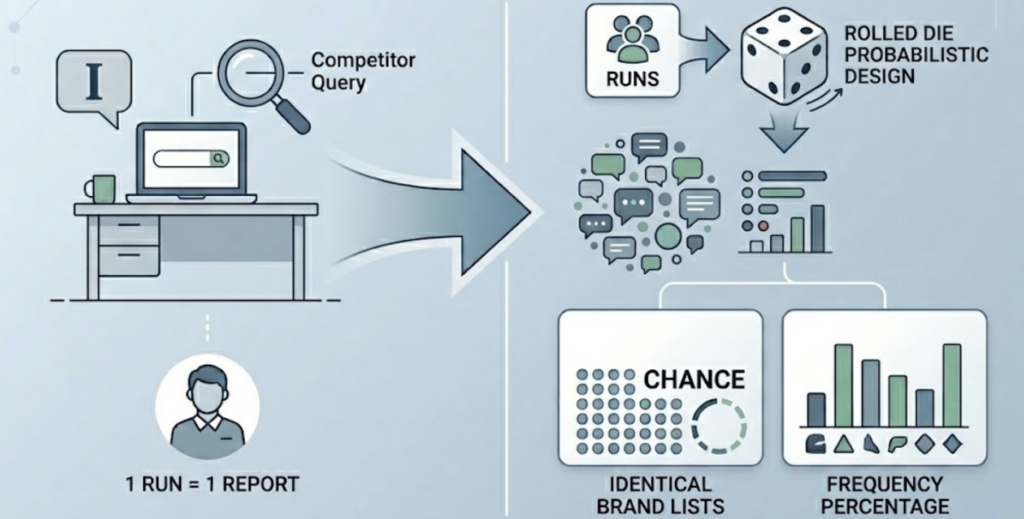

Here’s why it fails: AI responses are probabilistic by design. Research conducted across more than 2,900 AI runs found there is less than a 1-in-100 chance of receiving an identical list of brand recommendations in successive prompts. The model calculates the next token based on weighted probability, which means your brand’s “ranking” isn’t a fixed position. It’s a frequency percentage across a large sample.

If you appear in 45 out of 100 relevant prompts, your AI visibility is 45%. If you appear in 3, it’s 3%. You won’t know which one you are from a single query.
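To make the sampling point concrete, here is a small sketch that turns appearance counts into a visibility percentage and attaches a rough uncertainty range. The function names are illustrative, and the Wilson score interval is an assumption on my part: it is a standard way to put a range on a sampled proportion, not something the research cited above prescribes.

```python
import math


def visibility_rate(appearances: int, runs: int) -> float:
    """Visibility as a percentage of sampled prompt runs that mention the brand."""
    if runs <= 0:
        raise ValueError("runs must be positive")
    return 100.0 * appearances / runs


def wilson_interval(appearances: int, runs: int, z: float = 1.96) -> tuple[float, float]:
    """Approximate 95% Wilson score interval for the visibility rate, in percent."""
    if runs <= 0:
        raise ValueError("runs must be positive")
    p = appearances / runs
    denom = 1 + z * z / runs
    centre = (p + z * z / (2 * runs)) / denom
    half = z * math.sqrt(p * (1 - p) / runs + z * z / (4 * runs * runs)) / denom
    return 100.0 * max(0.0, centre - half), 100.0 * min(1.0, centre + half)


print(visibility_rate(45, 100))   # 45 appearances in 100 runs
print(wilson_interval(45, 100))   # range around that 45%
print(wilson_interval(1, 1))      # a single spot check: very wide range
```

With 100 runs the range is already fairly wide, and with a single query it spans most of the scale, which is why one spot check can’t tell a 45% brand from a 3% one.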

The second blind spot is attribution. GA4 typically categorizes AI referral traffic as generic “Referral,” mixing high-intent ChatGPT visitors with random forum links. Without custom channel configuration, you can’t isolate what AI is actually driving, which means you can’t measure the ROI of any GEO effort you make.
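A custom channel usually comes down to a regular expression over the referrer hostname. The sketch below tests such a pattern in Python before it goes into a channel-group rule. The hostname list is an assumption for illustration and should be checked against the referrers that actually show up in your own reports. The channel labels are hypothetical too.

```python
import re
from urllib.parse import urlparse

# Illustrative hostnames only; verify against your own referral data.
AI_REFERRER_PATTERN = re.compile(
    r"(^|\.)(chatgpt\.com|chat\.openai\.com|perplexity\.ai|"
    r"gemini\.google\.com|chat\.deepseek\.com)$"
)


def classify_referrer(referrer_url: str) -> str:
    """Label a referrer URL as 'AI Referral' or generic 'Referral'."""
    host = (urlparse(referrer_url).hostname or "").lower()
    return "AI Referral" if AI_REFERRER_PATTERN.search(host) else "Referral"


for url in (
    "https://chatgpt.com/",
    "https://www.perplexity.ai/search?q=best+crm",
    "https://forum.example.com/thread/1",
):
    print(url, "->", classify_referrer(url))
```

The `(^|\.)` prefix keeps lookalike domains from matching while still allowing subdomains such as `www`.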

How to Measure AI Visibility Score Analytics: A 4-Step Framework

Step 1: Define your core prompt set. These are the specific questions your ideal customer would ask an AI when looking for your solution. Not just “[brand name]” queries. Category queries: “best [category] tool for [use case],” “how do I solve [problem].” Start with 30 to 50 prompts.

Step 2: Run those prompts across multiple platforms. ChatGPT, Gemini, Perplexity, and DeepSeek each operate on different training data and weight different signals. A brand that dominates on Perplexity can be invisible on Gemini. Topify covers all major AI platforms including ChatGPT, Gemini, Perplexity, and DeepSeek, logging every response at scale.

Step 3: Establish your baseline and benchmark against competitors. Your raw visibility number is only useful relative to something. Topify’s competitor monitoring lets you track your position against rivals in real time, so you know whether a visibility dip is absolute or relative.

Step 4: Run the cycle continuously, not monthly. AI models update their weighting frequently. A content refresh from a competitor, a new Reddit thread gaining traction, a model retraining cycle: any of these can shift your score. AI Overviews usage grew 4x in under a year. The measurement cadence needs to keep pace.
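Steps 2 and 3 can be sketched as a simple aggregation over logged responses. The example assumes the responses have already been collected as `(platform, response_text)` pairs. It does not call any AI platform, and the brand names `Acme` and `Globex` are placeholders. Detecting a brand by case-insensitive substring match is a deliberate simplification; a production tracker would need something more robust.

```python
from collections import defaultdict


def visibility_by_platform(runs, brands):
    """Return {platform: {brand: visibility_pct}} from logged (platform, text) pairs."""
    totals = defaultdict(int)
    hits = defaultdict(lambda: defaultdict(int))
    for platform, text in runs:
        totals[platform] += 1
        lowered = text.lower()
        for brand in brands:
            # Naive detection: count a run if the brand name appears anywhere.
            if brand.lower() in lowered:
                hits[platform][brand] += 1
    return {
        platform: {brand: 100.0 * hits[platform][brand] / total for brand in brands}
        for platform, total in totals.items()
    }


# Placeholder log: your brand ("Acme") alongside one competitor ("Globex").
logged_runs = [
    ("perplexity", "Top picks: Acme and Globex."),
    ("perplexity", "Acme is a solid choice."),
    ("gemini", "Consider Globex."),
    ("gemini", "Globex or Initech."),
]

for platform, scores in visibility_by_platform(logged_runs, ["Acme", "Globex"]).items():
    print(platform, scores)
```

Reporting each platform separately, with competitors in the same table, shows whether a brand is strong on one engine and absent on another, and whether a dip is absolute or relative.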

5 Mistakes That Tank Your AI Visibility Score Analytics

Tracking only your brand name. Your brand name is the easiest query to win. It tells you almost nothing. The queries that matter are category-level: “project management tool for remote teams,” “affordable CRM for SMBs.” If you’re not appearing there, you’re losing buyers who’ve never heard of you.

Using one platform as a proxy for all. The correlation between branded web mentions and AI visibility is 0.664, compared to 0.218 for backlinks. But that relationship plays out differently across platforms. Don’t generalize from one AI’s behavior to others.

Treating AI visibility like keyword rank. Traditional rank is relatively stable. AI responses are stochastic. The list order alone has approximately a 1-in-1,000 chance of repeating across successive runs. Measuring visibility as a point-in-time rank is statistically invalid.

Monthly reporting cycles. In traditional SEO, a monthly report often captures enough signal. In generative search, where zero-click rates have climbed to 93% in Google’s AI Mode, the window between a model shift and a traffic change is measured in days, not weeks.

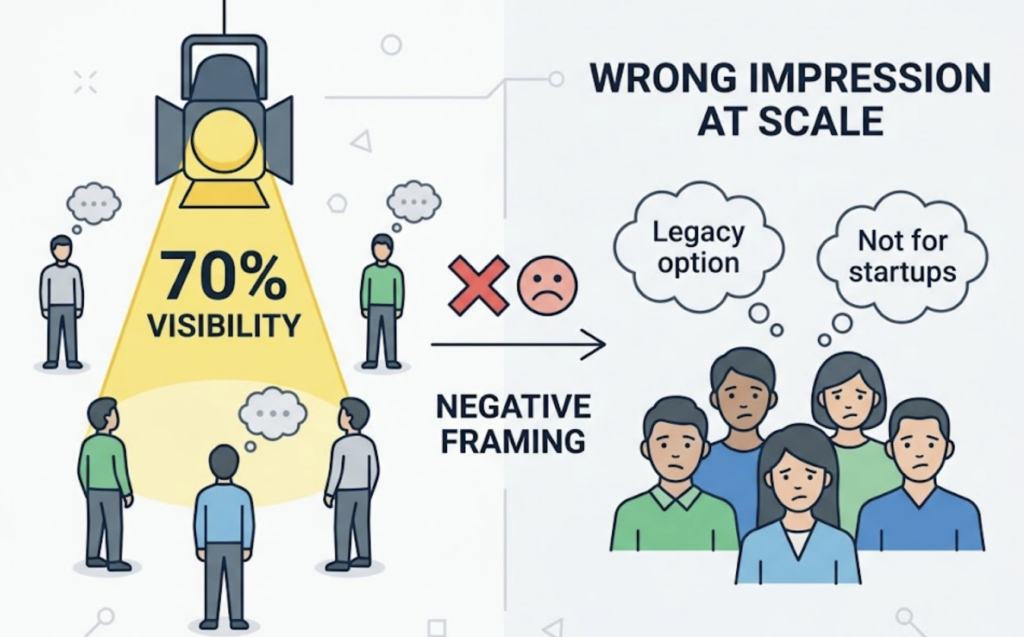

Ignoring Sentiment in favor of Visibility. Appearing in 70% of relevant prompts sounds strong. It’s actually a liability if the AI is consistently describing you as “the legacy option” or “better for enterprise, not startups.” High visibility with negative framing accelerates the wrong impression at scale.

The Tools That Actually Track AI Visibility Score Analytics in 2026

The AI visibility software market has seen over $120 million in investment as of 2026, producing a wide range of platforms built for different team sizes and use cases.

For teams that need comprehensive analytics with execution built in, Topify covers all seven core metrics (visibility, sentiment, position, volume, mentions, intent, CVR) across ChatGPT, Gemini, Perplexity, DeepSeek, and other major platforms. Its Source Analysis feature reverse-engineers the exact domains AI is citing so you can identify content gaps and act on them. The AI agent handles continuous monitoring and strategy execution from a single prompt, no manual workflows required.

Here’s how the current tooling landscape breaks down:

| Tool | Best For | Platform Coverage | Starting Price |
|---|---|---|---|
| Topify | Full-funnel analytics + execution | ChatGPT, Gemini, Perplexity, DeepSeek, and more | $99/mo |
| Profound | Enterprise compliance | 10+ engines | ~$4,000/mo |
| ZipTie | Content optimization workflows | AIO, ChatGPT, Perplexity | $69/mo |
| Otterly.ai | Startup baseline tracking | ChatGPT, Perplexity, AIO | $29/mo |
| SE Ranking | SEO + GEO blended | AIO, Perplexity, Gemini | $119/mo |
| Rankscale | Executive reporting | Multi-engine | $20/mo |

The biggest trap is selecting a tool based on price alone without checking query set stability (does it track the same prompts consistently?) and whether it captures citation-level data, not just mentions.

How to Improve Your AI Visibility Score: A Strategy Checklist

These are the levers that actually move the needle, in priority order:

Content architecture first. 44.2% of all LLM citations come from the first 30% of a document. Put your direct answer within the first 60 words of every page targeting AI visibility.

Build structured content assets. Tables, numbered lists, and comparison blocks are extracted significantly more often than dense prose. Format for machine comprehension, not just human readability.

Prioritize factual density. Specific data points and cited research make content “citable.” Vague benefit claims don’t survive the AI synthesis process.

Fix your E-E-A-T signals. E-E-A-T remains the primary positive ranking activity for 66.3% of search professionals. Clear author credentials, linked professional profiles, and cited external sources build trust with LLMs, especially in competitive categories.

Expand your third-party footprint. AI models aggregate consensus across the web. A mention in a reputable trade publication or an active Reddit discussion carries more AI visibility weight than a new landing page. Branded web mentions correlate with AI visibility at 0.664, roughly three times the 0.218 correlation for backlinks.

Audit your “dark prompts” weekly. These are category-level buyer questions your customers ask AI but never search on Google. Test them manually or use Topify’s prompt discovery to surface the ones worth tracking.

Track AI referral traffic separately in GA4. Use a regex channel group to isolate AI platforms from generic referral traffic. AI sessions run 68% longer and view 50% more pages per session than standard organic. Losing that signal in an aggregated bucket means losing your ROI story.

Run competitor benchmarking at least monthly. Visibility is relative. Topify’s competitor monitoring flags when a rival gains share so you can identify what changed in their content strategy.

Monitor Sentiment as a leading indicator. A dip in Sentiment often precedes a Visibility drop by several weeks. Catching it early gives you time to correct the narrative before the AI’s weighting shifts.

Set a visibility floor and alert on it. If your score drops more than 10% month-over-month, that’s typically a signal that a competitor has published more citable content or a model has retrained. Don’t wait for the monthly report to find out.
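The last checklist item can be expressed as a short check. This sketch reads “drops more than 10% month-over-month” as a relative change against the previous period, which is an assumption. If you track the drop in percentage points instead, the comparison changes. The function names are illustrative.

```python
def relative_change_pct(previous: float, current: float) -> float:
    """Relative change from the previous score to the current one, in percent."""
    if previous <= 0:
        raise ValueError("previous score must be positive")
    return 100.0 * (current - previous) / previous


def should_alert(previous: float, current: float, max_drop_pct: float = 10.0) -> bool:
    """True when the score fell by more than max_drop_pct relative to the previous period."""
    return relative_change_pct(previous, current) < -max_drop_pct


print(should_alert(45.0, 40.0))  # drop of about 11% relative to 45
print(should_alert(45.0, 41.0))  # drop of about 9% relative to 45
```

Running the same check on every measurement cycle, rather than once per report, is what turns the floor into an alert.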

Conclusion

AI visibility score analytics isn’t a replacement for SEO. It’s a parallel measurement system built for a different discovery environment.

The brands that will lead in the next two years aren’t necessarily the ones with the highest domain authority or the most backlinks. They’re the ones that figured out, early, that a different kind of authority was being built in the AI layer, and started measuring it before their competitors did.

Start with your prompt set. Pick a tool that tracks across platforms. Build the baseline. The data will tell you where to go next.

FAQ

What is AI visibility score analytics? AI visibility score analytics is a measurement framework that tracks how often and how well a brand appears in AI-generated responses across platforms like ChatGPT, Gemini, and Perplexity. It combines metrics like mention rate, sentiment, citation quality, and position into a composite view of brand presence in generative search.

How does AI visibility score analytics work? The system runs a predefined set of relevant prompts across multiple AI platforms, records whether and how the brand appears in each response, and aggregates those results into percentage-based scores over time. Because AI responses are probabilistic, a statistically valid score requires hundreds of prompt runs rather than a few spot checks.

How do I measure AI visibility score analytics? Define your core prompt library, run those prompts at consistent intervals across all major AI platforms, establish a competitor benchmark, and track score changes over time rather than snapshots. Tools like Topify automate this process at scale.

What are the best tools for AI visibility score analytics? The right tool depends on your scale and goals. Topify covers the broadest range of AI platforms with a full seven-metric analytics suite and built-in execution capabilities. For enterprise compliance needs, Profound offers deep multi-engine coverage. For early-stage monitoring, Otterly.ai provides a lower-cost entry point.

What does AI visibility score analytics cost? Pricing varies by tool and team size. Topify starts at $99/month for the Basic plan (100 prompts, 4 platforms, 4 seats) and scales to $199/month for the Pro plan (250 prompts, 10 seats). Enterprise plans start at $499/month with custom configuration and a dedicated account manager.