You spent two years building content authority, earning backlinks, and keeping your brand messaging tight across every channel. Then someone on your team asks ChatGPT for a vendor recommendation in your category. Your brand isn’t in the answer. Perplexity describes a competitor as “the leading solution.” Gemini mentions you once, in a comparison where you rank last.

Your monitoring dashboard shows no alerts. Your social listening tool has nothing. Nothing broke. No bad press, no negative reviews. The problem isn’t what someone said about you. It’s what AI decided to say, without anyone watching.

That gap has a name: the absence of an AI reputation monitoring system.

Why Your Current Brand Monitoring Can’t See What AI Says About You

Traditional reputation tools were built for a different world. They crawl text mentions across social platforms, news sites, and review directories, flagging whenever someone publishes content about your brand. That logic made sense when users clicked through to read sources and form their own opinions.

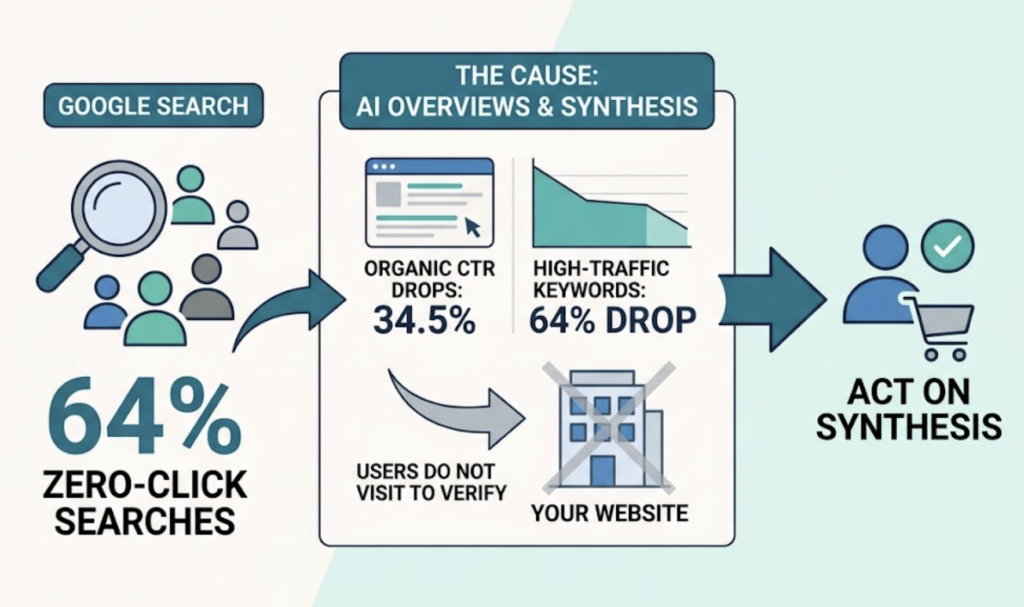

It doesn’t hold anymore. Research from early 2026 shows that nearly 64% of Google searches in the United States now end without a single click to an external website. When an AI Overview is present, average click-through rates for organic links drop by approximately 34.5%; for high-traffic keywords, the drop reaches 64%. Users aren’t visiting your site to verify what AI told them. They’re acting on the synthesis.

The deeper issue is structural. Traditional monitoring tools are built for deterministic environments: a keyword yields a predictable set of results. Generative AI doesn’t work that way. It synthesizes rather than retrieves. When ChatGPT or Perplexity answers “what’s the best tool for X,” it’s drawing from a reasoning process that weighs authority signals, source freshness, entity consistency, and citation patterns. None of that is visible to your current monitoring stack.

That’s not a gap you can patch with an extra alert. It requires a different kind of system entirely.

What an AI Reputation Monitoring System Actually Tracks

An AI reputation monitoring system is an integrated intelligence layer that tracks, analyzes, and evaluates how generative AI platforms describe your brand across their outputs.

The key distinction is the word “generative.” This isn’t about tracking what people write about you online. It’s about tracking what AI synthesizes about you, based on what it has learned, retrieved, and chosen to cite.

Three dimensions define the system. Tracking covers which AI platforms mention your brand, in response to which queries, and in what context. Analysis examines whether those descriptions are accurate, positive, or misaligned with your actual positioning. Benchmarking maps how your brand’s treatment compares to direct competitors across the same prompt set.

An AI reputation monitoring platform that covers all three gives brand teams something traditional tools never could: visibility into the zero-click layer where most modern purchase decisions are quietly forming.

How an AI Reputation Monitoring System Works, Step by Step

Understanding the mechanics matters. Most brands that try to build this capability get the first step right and miss the rest.

Step 1: Prompt Mapping. You don’t monitor your brand name. You monitor the queries where your brand should appear. A well-structured prompt portfolio covers commercial intent (“best [category] for [use case]”), comparison queries (“[brand] vs. [competitor]”), solution-fit searches (“what tool should I use to solve [problem]?”), and risk queries (“is [brand] compliant with [regulation]?”). A functional portfolio typically spans 20 to 100 prompts.
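To make the portfolio concrete, here is a minimal Python sketch that expands the four prompt types above into a prompt list. The template wording follows the examples in this step; the fill values (AcmeCRM, RivalOne, RivalTwo, GDPR) and the `build_portfolio` helper are hypothetical placeholders, not part of any tool’s API.

```python
import string
from itertools import product

# Templates modeled on the four prompt types in Step 1 (wording is illustrative).
TEMPLATES = {
    "commercial": "best {category} for {use_case}",
    "comparison": "{brand} vs. {competitor}",
    "solution_fit": "what tool should I use to solve {problem}?",
    "risk": "is {brand} compliant with {regulation}?",
}


def placeholders(template: str) -> list[str]:
    """Return the {field} names used in a template, in order of appearance."""
    return [name for _, name, _, _ in string.Formatter().parse(template) if name]


def build_portfolio(fills: dict[str, list[str]]) -> list[tuple[str, str]]:
    """Expand each template with every combination of its placeholder values.

    Returns (prompt_type, prompt_text) pairs; templates with a placeholder
    missing from `fills` are skipped.
    """
    portfolio = []
    for prompt_type, template in TEMPLATES.items():
        keys = placeholders(template)
        if not all(k in fills for k in keys):
            continue
        for combo in product(*(fills[k] for k in keys)):
            portfolio.append((prompt_type, template.format(**dict(zip(keys, combo)))))
    return portfolio


# Hypothetical inputs for a fictional CRM brand.
fills = {
    "category": ["CRM"],
    "use_case": ["small teams", "enterprise sales"],
    "brand": ["AcmeCRM"],
    "competitor": ["RivalOne", "RivalTwo"],
    "problem": ["lead tracking"],
    "regulation": ["GDPR"],
}
portfolio = build_portfolio(fills)
for prompt_type, prompt in portfolio:
    print(f"{prompt_type:>12}: {prompt}")
```

Each added category, use case, or competitor multiplies the count, which is how a few seed values grow toward the 20 to 100 prompts a functional portfolio typically spans.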

Step 2: Cross-Platform Answer Collection. Each prompt is run across the AI platforms your audience actually uses: ChatGPT, Gemini, Perplexity, and, for global brands, DeepSeek and Doubao. Answers are collected systematically, not spot-checked manually once a quarter.
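A systematic collection run can be sketched as a loop over platforms and prompts. This Python sketch assumes each platform is wrapped in a plain function that takes a prompt and returns answer text; the `collect` function, the `Answer` record, and the stub clients are hypothetical, and real clients would call whatever API or export each platform actually provides.

```python
import datetime as dt
from dataclasses import dataclass
from typing import Callable

AskFn = Callable[[str], str]  # hypothetical wrapper: prompt in, answer text out


@dataclass
class Answer:
    platform: str
    prompt: str
    text: str
    collected_at: dt.datetime


def collect(prompts: list[str], clients: dict[str, AskFn]):
    """Run every prompt on every platform; return (answers, failures)."""
    run_at = dt.datetime.now(dt.timezone.utc)  # one timestamp per run for easy comparison
    answers, failures = [], []
    for platform, ask in clients.items():
        for prompt in prompts:
            try:
                answers.append(Answer(platform, prompt, ask(prompt), run_at))
            except Exception as exc:  # sketch: record the failure and keep the run going
                failures.append((platform, prompt, repr(exc)))
    return answers, failures


# Stub clients standing in for real platform integrations.
def fake_chatgpt(prompt: str) -> str:
    return f"For '{prompt}', teams often pick RivalOne or AcmeCRM."


def fake_perplexity(prompt: str) -> str:
    raise TimeoutError("rate limited")


answers, failures = collect(
    ["best CRM for small teams", "AcmeCRM vs. RivalOne"],
    {"chatgpt": fake_chatgpt, "perplexity": fake_perplexity},
)
print(len(answers), "answers,", len(failures), "failures")
```

Recording failures instead of aborting matters on a schedule: a rate-limited platform should show up as a gap in the run, not silently as zero mentions.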

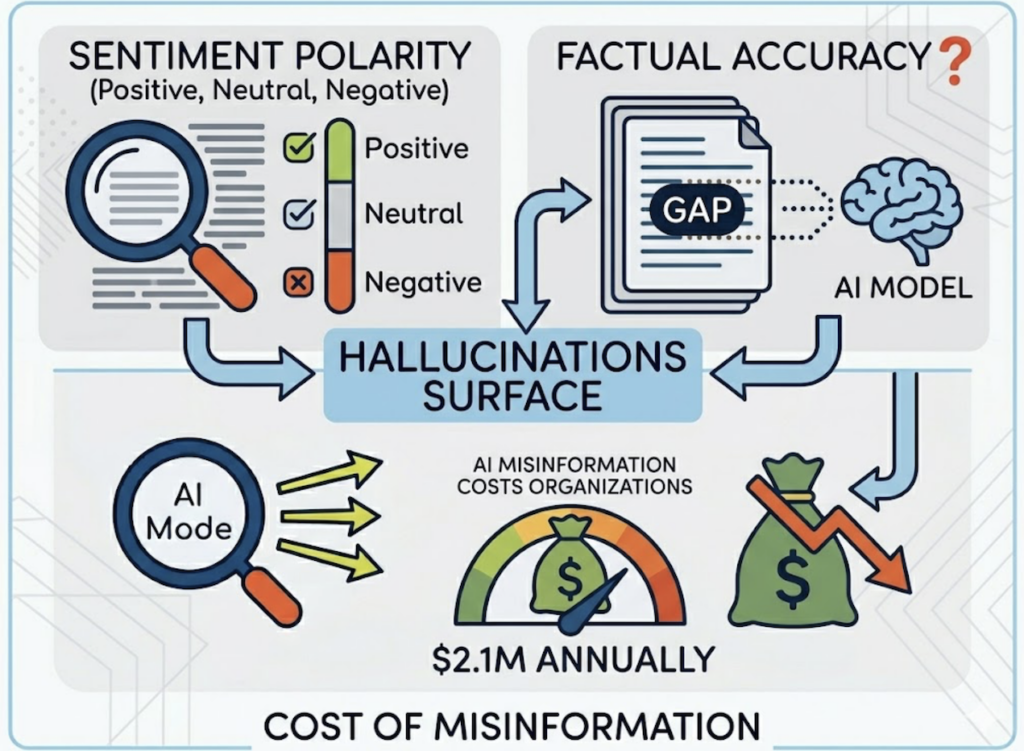

Step 3: Sentiment and Accuracy Analysis. Collected answers are evaluated for sentiment polarity (positive, neutral, negative) and factual accuracy. This is where hallucinations surface. AI models fill information gaps with probabilistic assumptions, so a brand that doesn’t explicitly state certain facts may find the model fabricating them. Research estimates that AI misinformation costs organizations an average of $2.1M annually in direct and indirect losses.
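Full accuracy checking usually needs human review or a model-based grader, but some fabrications can be caught mechanically. The Python sketch below flags one narrow class: dollar amounts in an answer that don’t match your documented price list. The fact sheet and prices are hypothetical, and a regex check like this will miss prices written out in words or in other currencies.

```python
import re

# Hypothetical fact sheet: the monthly prices your pricing page actually lists.
DOCUMENTED_PRICES = {29.0, 79.0}

# Matches "$29", "$1,299", "$49.99"; captures the number without the "$".
PRICE_PATTERN = re.compile(r"\$(\d+(?:,\d{3})*(?:\.\d{2})?)")


def unsupported_prices(answer_text: str, documented: set[float] = DOCUMENTED_PRICES) -> list[float]:
    """Return dollar amounts in the answer that are not in the documented set."""
    found = {float(m.replace(",", "")) for m in PRICE_PATTERN.findall(answer_text)}
    return sorted(found - documented)


answer = "AcmeCRM costs $29/month for Starter and $59/month for Pro."
print(unsupported_prices(answer))  # prints [59.0]
```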

Step 4: Competitor Benchmarking. The same prompt set reveals how competitors are described. Your own data only becomes meaningful in that context. Being mentioned positively tells you little if two competitors are consistently positioned as the clear first choice in the same answer.
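Benchmarking works because every brand is scored on the same answer set. Here is a minimal Python sketch that computes each brand’s inclusion rate and the gap to the strongest competitor. It assumes plain case-insensitive substring matching on brand names (AcmeCRM, RivalOne, and RivalTwo are hypothetical), which can miscount brands whose names are common words or overlap with each other.

```python
def inclusion_rates(answers: list[str], brands: list[str]) -> dict[str, float]:
    """Share of answers mentioning each brand (case-insensitive substring match)."""
    if not answers:
        return {brand: 0.0 for brand in brands}
    return {
        brand: sum(brand.lower() in a.lower() for a in answers) / len(answers)
        for brand in brands
    }


def gap_to_leader(rates: dict[str, float], you: str) -> tuple[str, float]:
    """Return the top competitor and how far its inclusion rate is ahead of yours."""
    leader = max((b for b in rates if b != you), key=lambda b: rates[b])
    return leader, rates[leader] - rates[you]


answers = [
    "Top picks: RivalOne, then AcmeCRM.",
    "RivalOne and RivalTwo lead this category.",
    "RivalOne is the usual choice.",
    "AcmeCRM and RivalTwo both fit small teams.",
]
rates = inclusion_rates(answers, ["AcmeCRM", "RivalOne", "RivalTwo"])
leader, gap = gap_to_leader(rates, "AcmeCRM")
print(rates, leader, gap)
```

A positive gap means the leader appears in more answers than you do; computing it per prompt type shows where the deficit is concentrated.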

Step 5: Source Tracing. This identifies which third-party domains the AI is citing when it generates answers about your brand or category. If a competitor’s blog post serves as the primary reference for your use case, that’s a content gap with a direct fix. Source tracing turns monitoring from observation into action.
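Source tracing can start as a simple tally. The Python sketch below assumes each collected answer comes with a list of cited URLs (not every platform exposes citations) and counts the domains cited in answers that mention a competitor but not you. The brand names and `.example` domains are hypothetical.

```python
from collections import Counter
from urllib.parse import urlparse


def domain(url: str) -> str:
    """Hostname of a URL, lowercased, with a leading 'www.' removed."""
    return urlparse(url).netloc.lower().removeprefix("www.")


def gap_sources(records: list[tuple[str, list[str]]], you: str, competitors: list[str]) -> list[tuple[str, int]]:
    """Count cited domains in answers that mention a competitor but not you.

    records: (answer_text, cited_urls) pairs. Each domain counts once per answer.
    """
    counts = Counter()
    for text, urls in records:
        lowered = text.lower()
        if you.lower() in lowered:
            continue  # you are already in this answer; not a gap
        if any(c.lower() in lowered for c in competitors):
            counts.update({domain(u) for u in urls})
    return counts.most_common()


records = [
    ("RivalOne is the leading solution.",
     ["https://www.reviewsite.example/crm-roundup", "https://rivalone.example/blog/remote-teams"]),
    ("RivalOne and RivalTwo are popular.", ["https://reviewsite.example/best-crm"]),
    ("AcmeCRM and RivalOne both work.", ["https://acmecrm.example/pricing"]),
]
gaps = gap_sources(records, "AcmeCRM", ["RivalOne", "RivalTwo"])
print(gaps)
```

The domains at the top of this list are the ones shaping answers you are missing from, which makes them natural first targets for outreach or new content.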

5 Metrics Your AI Reputation Monitoring Dashboard Can’t Skip

Not all data is equal. These are the metrics that drive real decisions.

1. Answer Inclusion Rate (Visibility): The percentage of tracked prompts where your brand appears in the AI-generated response. This is the new “ranking” metric. Being absent from 60% of relevant queries is a concrete, measurable problem. Unlike traditional rank tracking, this number reflects actual user exposure, not just algorithmic position.

2. Sentiment Score: A 0-100 scale measuring whether AI descriptions lean positive, neutral, or negative. Importantly, different AI models carry systematic tendencies: some skew toward positive framing, while others consistently trend neutral or slightly negative. Your AI reputation monitoring analytics need to account for each platform’s baseline, not just raw scores in isolation.

3. Position in Response: Being mentioned fourth in a list is not the same as being mentioned first. Research consistently shows that first-mentioned brands in AI-generated lists capture disproportionate trust and consideration from users, while brands appearing fourth or fifth correlate with significantly lower conversion intent. Position tracking tells you whether you’re being recommended or just included.

4. Source Coverage: Which domains is AI citing when it describes your brand or category? If high-authority third-party sites aren’t including your brand in their coverage, the AI won’t either. Source coverage maps the upstream problem that’s producing the downstream visibility gap.

5. CVR (Conversion Visibility Rate): An estimate of how likely an AI recommendation is to translate into user action. A mention in a “best tools for enterprise” response carries more conversion weight than a passing reference in a category overview. CVR puts commercial context behind visibility numbers so teams can prioritize the prompts that actually matter for pipeline.
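Several of these metrics can be computed from the same collected answers. This Python sketch assumes each answer is stored with its platform and prompt type, detects mentions by case-insensitive substring match on hypothetical brand names, and treats order of first appearance as list position. No formula for CVR is given above, so `intent_weighted_visibility` is only a hypothetical proxy with placeholder weights; sentiment scoring and source coverage are left out.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Record:
    platform: str
    prompt_type: str  # e.g. "commercial", "informational"
    text: str         # the AI-generated answer


# Hypothetical intent weights for a CVR-style proxy; not a documented formula.
INTENT_WEIGHTS = {"commercial": 1.0, "comparison": 0.75, "solution_fit": 0.5, "informational": 0.25}


def mention_order(text: str, brands: list[str]) -> list[str]:
    """Tracked brands in order of first appearance (case-insensitive substring match)."""
    lowered = text.lower()
    found = [(lowered.find(b.lower()), b) for b in brands]
    return [b for pos, b in sorted(found) if pos >= 0]


def dashboard(records: list[Record], brands: list[str], you: str) -> dict:
    per_platform = defaultdict(lambda: [0, 0])  # platform -> [answers with you, total answers]
    ranks = []
    weighted_hit = weighted_total = 0.0
    for r in records:
        order = mention_order(r.text, brands)
        included = you in order
        per_platform[r.platform][0] += included
        per_platform[r.platform][1] += 1
        if included:
            ranks.append(order.index(you) + 1)  # 1 = mentioned first
        weight = INTENT_WEIGHTS[r.prompt_type]
        weighted_total += weight
        weighted_hit += weight if included else 0.0
    return {
        "inclusion_by_platform": {p: hit / n for p, (hit, n) in per_platform.items()},
        "avg_position": sum(ranks) / len(ranks) if ranks else None,
        "intent_weighted_visibility": weighted_hit / weighted_total if weighted_total else 0.0,
    }


records = [
    Record("chatgpt", "commercial", "1. RivalOne 2. RivalTwo 3. AcmeCRM"),
    Record("chatgpt", "commercial", "RivalOne is the usual pick."),
    Record("perplexity", "informational", "AcmeCRM is one CRM option, alongside RivalTwo."),
    Record("perplexity", "informational", "RivalTwo and AcmeCRM both offer free tiers."),
]
metrics = dashboard(records, ["AcmeCRM", "RivalOne", "RivalTwo"], "AcmeCRM")
print(metrics)
```

Raw inclusion across these four answers is 75%, but because one of the two high-intent commercial answers omits the brand, the intent-weighted figure falls to 0.6.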

These five metrics, tracked consistently inside a structured AI reputation monitoring dashboard, give brand teams the signals they need to act rather than react.

4 Mistakes That Break Most AI Reputation Monitoring Systems

Most teams that try to build this capability make the same four errors.

Mistake 1: Monitoring only one AI platform. ChatGPT is only one AI platform. Depending on your audience, Perplexity may drive more research-phase queries, and Gemini may dominate among mobile and Google Workspace users. For APAC markets, DeepSeek prioritizes technical authority signals and chain-of-thought reasoning, while Doubao operates within ByteDance’s ecosystem, with distinct ranking logic tied to video content and user-generated reviews. An AI reputation monitoring system that covers one platform gives you only one slice of a fragmented picture.

Mistake 2: Treating mentions as endorsements. Your brand appearing in an AI answer doesn’t mean it’s being recommended. AI might reference you in a comparison that positions a competitor as the stronger choice, or use language that subtly frames your product as suited for smaller or less sophisticated use cases. Every mention needs sentiment and context analysis. A count means nothing without a read.

Mistake 3: Skipping competitor benchmarking. Your inclusion rate only means something relative to what competitors are getting. A 40% inclusion rate looks solid until you find out your closest competitors average 75%. Isolated metrics don’t tell you whether you have a problem. Benchmarked metrics do. This is the difference between a dashboard and an insight.

Mistake 4: Running periodic audits instead of continuous monitoring. AI models update their citation behavior, sometimes significantly, within short windows. Perplexity refreshes its index daily and applies time-decay mechanisms that reduce visibility of content that isn’t regularly updated. A monthly snapshot will miss drift entirely. Weekly monitoring cycles are the baseline for any brand operating in a competitive category.
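Continuous monitoring mostly comes down to comparing each weekly snapshot with the baseline and flagging large moves. A minimal Python sketch, assuming per-platform inclusion rates are already computed for each run; the `detect_drift` helper and the 10-point threshold are hypothetical starting values, not a recommendation from any tool.

```python
def detect_drift(baseline: dict[str, float], current: dict[str, float], threshold: float = 0.10) -> dict[str, float]:
    """Return metrics whose change from baseline exceeds `threshold` (absolute, 0-1 scale)."""
    return {
        key: round(current[key] - base, 4)
        for key, base in baseline.items()
        if key in current and abs(current[key] - base) > threshold
    }


# Hypothetical per-platform inclusion rates: baseline vs. this week's run.
baseline = {"chatgpt": 0.55, "perplexity": 0.60, "gemini": 0.40}
this_week = {"chatgpt": 0.52, "perplexity": 0.35, "gemini": 0.42}
print(detect_drift(baseline, this_week))  # prints {'perplexity': -0.25}
```

Small week-to-week wobble stays below the threshold, while a sharp drop on one platform surfaces immediately instead of waiting for a monthly review.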

How to Build an AI Reputation Monitoring Strategy That Holds

Strategy starts before the tools. Here’s what needs to be in place first.

Start with high-intent prompts, not brand name searches. The users who haven’t already found your brand are the highest-value audience to reach. “Best [category] tool for [use case]” queries are where purchase decisions form before anyone visits your website. Commercial intent prompts belong at the top of your monitoring priority list.

Establish a baseline before tracking trends. Data without a reference point is noise. You can’t identify improvement or degradation without a baseline snapshot across all tracked prompts, platforms, and competitors. Run the baseline before you change anything.

Connect source gap analysis to your content calendar. When AI cites a competitor’s content to describe your category, that’s a direct signal: publish something that fills that gap. AI reputation monitoring analytics become most actionable when mapped to a content response workflow. The monitoring tells you what to write. The content changes what the model cites.

Align monitoring cadence with your content publishing cycle. If your team publishes monthly, monitor weekly. Changes in AI citation behavior don’t align with editorial calendars. You need to catch shifts before they compound into a structural disadvantage.

Build a reporting rhythm that connects to decisions. Monitoring data that doesn’t influence content strategy, PR responses, or product positioning is overhead, not intelligence. Define in advance which metrics trigger which actions. That’s what makes an AI reputation monitoring solution a business tool rather than a reporting exercise.
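Defining triggers in advance can be as simple as a list of metric conditions paired with actions. This Python sketch uses hypothetical metric names, thresholds, and actions; the point is that the mapping is written down before the data arrives, not that these particular values are right for your category.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Rule:
    metric: str
    condition: Callable[[float], bool]
    action: str


# Hypothetical rules; metric names, thresholds, and actions are placeholders.
RULES = [
    Rule("inclusion_rate", lambda v: v < 0.30, "Brief content team on missing high-intent prompts"),
    Rule("sentiment_delta", lambda v: v < -10, "Escalate to PR for review"),
    Rule("avg_position", lambda v: v > 3, "Review comparison pages and positioning copy"),
]


def triggered_actions(metrics: dict[str, float], rules: list[Rule] = RULES) -> list[str]:
    """Return the action for every rule whose metric is present and whose condition holds."""
    return [r.action for r in rules if r.metric in metrics and r.condition(metrics[r.metric])]


this_week = {"inclusion_rate": 0.25, "sentiment_delta": -4.0, "avg_position": 3.5}
actions = triggered_actions(this_week)
for action in actions:
    print("-", action)
```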

The Right AI Reputation Monitoring Platform for 2026

Choosing a platform comes down to three questions: Which AI platforms does it cover? How deep are the analytics? And does it close the loop between insight and action?

Topify is built for exactly this use case. It tracks brand performance across ChatGPT, Gemini, Perplexity, DeepSeek, Doubao, Qwen, and other major AI platforms, covering both Western and APAC markets in a single AI reputation monitoring solution. That matters for any brand with a global audience or expansion plans.

The platform measures seven core metrics per query: Visibility, Sentiment, Position, Volume, Mentions, Intent, and CVR. Four of these (Visibility, Sentiment, Position, and CVR) map directly to the metrics outlined above, while Volume, Mentions, and Intent add strategic context beyond raw performance. Source Coverage is handled separately through Source Analysis.

Topify’s Source Analysis is where monitoring becomes a content strategy tool. It traces exactly which domains AI platforms cite when generating answers about your brand or category, so you can see the gap, identify what’s filling it, and produce content specifically designed to reclaim those citations.

Competitor monitoring runs in parallel throughout. You’re never evaluating your own numbers in isolation. Topify surfaces who AI is recommending alongside you (or instead of you) and tracks how that competitive picture shifts week over week.

For teams that want to move from monitoring to optimization, Topify’s one-click AI agent execution connects insight to deployment directly. You define the goal, review the proposed strategy, and launch. No manual workflow between the data and the action.

Pricing starts at $99/month (Basic plan) with a 30-day trial, covering 100 prompts across ChatGPT, Perplexity, and AI Overviews, with 4 projects and 9,000 AI answer analyses included. The Pro plan at $199/month expands to 250 prompts and 10 seats. Enterprise configurations start at $499/month with a dedicated account manager and custom scope. Full details are at Topify’s pricing page.

Trusted by 50+ enterprises and startups, Topify was built by a team that includes founding researchers from OpenAI and Google SEO practitioners, which shows in the depth of its prompt analysis and citation modeling.

AI Reputation Monitoring Checklist: 8 Things to Set Up Before You Start

Before you run a single prompt, get these in place.

- [ ] Define which AI platforms your target audience uses most (don’t default to ChatGPT alone)

- [ ] Build a prompt portfolio of 20-50 queries covering commercial, comparison, and solution-fit categories

- [ ] Run a baseline audit across all platforms and document inclusion rate, sentiment score, and position

- [ ] Set up competitor tracking so your baseline data includes relative benchmarks, not just absolute numbers

- [ ] Configure Source Analysis to identify which third-party domains are being cited in your category

- [ ] Set sentiment alert thresholds so unexpected shifts trigger a review, not just a weekly summary

- [ ] Create a content response SOP that maps source gaps to publishing priorities

- [ ] Establish a weekly monitoring cadence with a named owner responsible for reviewing trend data

Working through this checklist takes an afternoon. Skipping it costs months of visibility drift with no clear explanation.

Conclusion

Traditional brand monitoring was built for a world where users clicked links and read sources. That world is shrinking. With roughly one in six people globally now using generative AI tools to research, evaluate, and decide, AI-generated answers have become a primary reputation channel. Most brands don’t have a system for it yet.

The starting point isn’t a perfect strategy. It’s a baseline. Run your core prompts across the major platforms. See what AI is saying. Measure it against competitors. Then build from there.

Get started with Topify and establish your AI reputation baseline in under 30 minutes.

FAQ

Q: What is an AI reputation monitoring system?

A: An AI reputation monitoring system is a set of tools and workflows that tracks how generative AI platforms, including ChatGPT, Gemini, and Perplexity, describe your brand in their generated answers. It measures visibility (whether your brand appears in relevant queries), sentiment (how it’s described), position (where it ranks relative to competitors in AI responses), and source coverage (which third-party content is shaping the AI’s view of your brand).

Q: How do you measure the effectiveness of an AI reputation monitoring system?

A: The most reliable indicators are the trend in Answer Inclusion Rate over time, Sentiment Score trajectory across platforms, Position relative to direct competitors, and Source Coverage improvement after content interventions. An effective system shows upward trends in inclusion rate and sentiment as content built for generative engine optimization (GEO) begins to get cited by AI platforms.

Q: What are examples of AI reputation monitoring in practice?

A: A SaaS brand might discover that ChatGPT consistently recommends a competitor first for “best project management tool for remote teams,” while Perplexity cites a three-year-old review article to describe their pricing. Those findings trigger specific actions: publishing content that targets the competitor comparison query, and restructuring the pricing page with machine-readable schema. Monitoring then confirms whether those changes shift AI citation behavior over the following weeks.

Q: How much does an AI reputation monitoring system cost?

A: Costs vary by platform scope and feature depth. Entry-level AI reputation monitoring tools typically start at $25 to $99/month for basic prompt tracking. Topify’s Basic plan starts at $99/month and includes a 30-day trial, covering 100 prompts across ChatGPT, Perplexity, and AI Overviews. The Pro plan is $199/month with 250 prompts and 10 seats. Enterprise plans start at $499/month with custom configurations and a dedicated account manager. See the full breakdown at Topify’s pricing page.