ChatGPT, Perplexity, and Gemini answer questions about your brand every day. They describe your product, assess your reputation, and compare you to competitors. Most brands have no idea what those answers look like.

That’s not a PR problem. It’s a measurement problem.

AI reputation monitoring analytics is the discipline that closes this gap. It tracks how AI systems represent your brand across platforms, turns those representations into measurable signals, and gives your team the data to act before the narrative hardens.

By some industry estimates, roughly 30% of brand perception will be shaped directly by AI-generated content by 2026. More than 2 billion people are already exposed to AI-generated search overviews every month. If your monitoring setup doesn’t cover what AI is saying about you, you’re making decisions on incomplete data.

What AI Reputation Monitoring Analytics Actually Measures

This isn’t a renamed version of social listening. The underlying mechanics are different.

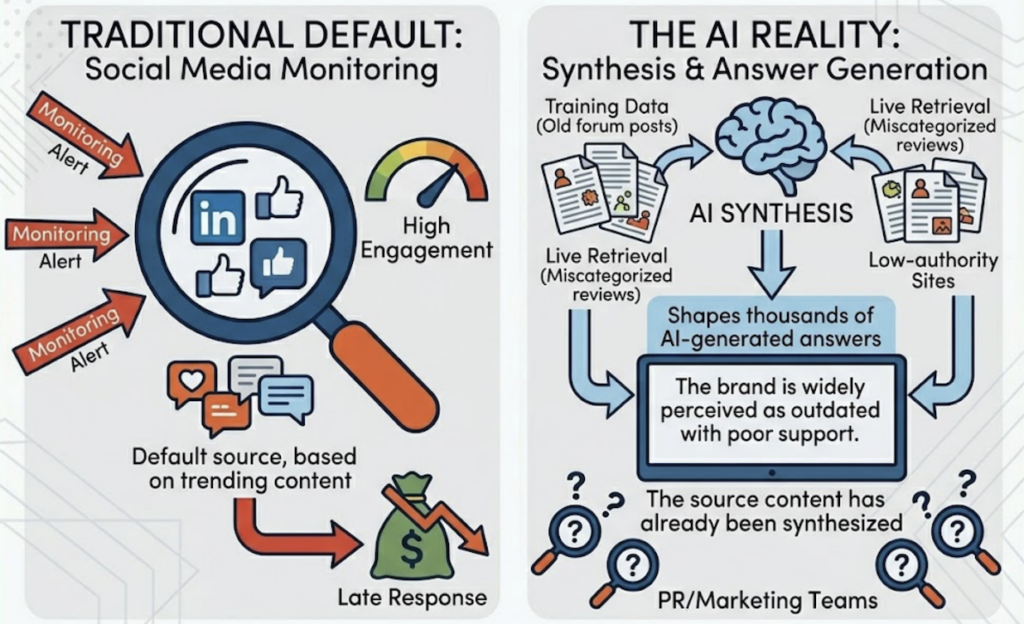

Traditional brand monitoring is built around keyword matching. It scans social posts, news articles, and review platforms for mentions of your brand name. It works well for the media environment it was designed for.

AI reputation monitoring analytics tracks something else entirely: how large language models synthesize information about your brand when responding to user queries. The input isn’t a public post. It’s a user prompt. The output isn’t a tweet or an article. It’s a generated answer with implied authority.

That difference matters. AI systems don’t just repeat what they find. They combine sources, assign weight, and produce a summary that many users treat as fact. A two-year-old forum post and a recent negative review can both end up shaping an AI’s answer about your brand, even if neither received significant engagement on its original platform.

Traditional monitoring would rarely flag either of those as a risk. AI reputation monitoring analytics can.

The Four Core Signals AI Monitoring Tracks

Every AI reputation monitoring system, regardless of the platform or tool, centers on four signal types:

Visibility is how often your brand appears in AI answers to relevant queries. Not just whether you’re mentioned, but whether you’re recommended when users ask about your product category.

Sentiment is the qualitative tone AI models use when describing your brand. The word choices matter: “widely recommended” and “worth considering” carry very different weights in a user’s decision process.

Position is where your brand appears in AI-generated lists or comparisons. First position isn’t just a ranking. It’s an implicit endorsement.

Citation is which external sources AI platforms are using to support their answers about you. Those sources are often third-party review sites, industry publications, or community forums, and understanding them tells you where your brand’s AI narrative is actually being built.

6 Metrics That Tell You If AI Is Helping or Hurting Your Brand

Most teams track vanity metrics. Here’s what actually signals AI reputation health.

Visibility Rate measures what percentage of relevant prompts generate a mention of your brand. If 100 users ask AI about the best solution in your category and your brand appears in 60 answers, your visibility rate is 60%. This is the baseline metric for AI brand presence, calculated as the number of answers that mention your brand divided by the total number of prompts tested. Count each answer once, even if it names your brand several times.
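A minimal Python sketch of that calculation, assuming you have already collected one answer per tested prompt. Matching here is a simple case-insensitive substring check against a list of brand aliases; the function name `visibility_rate` and the brand names are illustrative, and real tools likely use more robust entity matching.

```python
def visibility_rate(answers: list[str], brand_aliases: list[str]) -> float:
    """Share of answers (one per tested prompt) that mention the brand at least once."""
    if not answers:
        return 0.0
    aliases = [alias.lower() for alias in brand_aliases]
    # Each answer counts once, no matter how often the brand appears in it.
    hits = sum(1 for text in answers if any(alias in text.lower() for alias in aliases))
    return hits / len(answers)


# Usage: 3 of 5 answers mention the hypothetical brand "Acme".
answers = [
    "Acme and Globex are both solid picks.",
    "Most teams choose Globex.",
    "ACME is widely recommended for small teams.",
    "Initech is the budget option.",
    "Consider Acme if onboarding speed matters.",
]
print(f"{visibility_rate(answers, ['Acme']):.0%}")  # prints "60%"
```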

Sentiment Score goes beyond positive or negative. Advanced AI reputation monitoring tools analyze the specific descriptors AI models use, linking them to business drivers like price perception, ease of use, or support quality. Brands that integrate sentiment data at this level of granularity see an average 23% improvement in customer satisfaction over 12 months, according to recent industry research.
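To make driver-level sentiment concrete, here is a deliberately simple lexicon-based sketch. The descriptor phrases, driver names, and +1/-1 polarity values are hypothetical; commercial tools likely rely on trained classifiers or LLM-based extraction rather than a fixed word list.

```python
from collections import defaultdict

# Hypothetical lexicon: descriptor phrase -> (business driver, polarity).
LEXICON = {
    "affordable": ("price", 1),
    "expensive": ("price", -1),
    "easy to use": ("ease_of_use", 1),
    "steep learning curve": ("ease_of_use", -1),
    "responsive support": ("support", 1),
    "slow support": ("support", -1),
}


def driver_sentiment(answers: list[str]) -> dict[str, float]:
    """Average polarity per business driver across all descriptor matches."""
    totals: dict[str, int] = defaultdict(int)
    counts: dict[str, int] = defaultdict(int)
    for text in answers:
        lowered = text.lower()
        for phrase, (driver, polarity) in LEXICON.items():
            if phrase in lowered:
                totals[driver] += polarity
                counts[driver] += 1
    return {driver: totals[driver] / counts[driver] for driver in counts}


answers = [
    "Acme is affordable and easy to use.",
    "Acme is expensive for large teams, but easy to use.",
    "Users mention slow support.",
]
print(driver_sentiment(answers))
# prints {'price': 0.0, 'ease_of_use': 1.0, 'support': -1.0}
```

Scoring per driver rather than per brand is what lets a support problem show up even when the overall tone looks neutral.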

Position Ranking tracks where your brand appears in AI-generated lists across platforms. Positions 1 through 3 are widely considered the “golden range,” where user trust and conversion intent are highest. Position 4 and beyond drops off sharply. You need to know your average position across ChatGPT, Perplexity, Gemini, and other platforms separately, not as a blended number.
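A short sketch of per-platform position tracking, assuming each observation has already been reduced to a (platform, position) pair, with `None` when the brand was absent from that answer. The function name and sample data are illustrative.

```python
from collections import defaultdict
from statistics import mean
from typing import Optional


def position_summary(observations: list[tuple[str, Optional[int]]]) -> dict[str, dict[str, float]]:
    """Per-platform average list position and share of appearances in positions 1-3.

    Positions are 1-based. Absences (None) are skipped here because they are
    already captured by visibility rate.
    """
    positions: dict[str, list[int]] = defaultdict(list)
    for platform, position in observations:
        if position is not None:
            positions[platform].append(position)
    return {
        platform: {
            "avg_position": mean(ps),
            "top3_share": sum(1 for p in ps if p <= 3) / len(ps),
        }
        for platform, ps in positions.items()
    }


obs = [
    ("chatgpt", 1), ("chatgpt", 2), ("chatgpt", 6),
    ("perplexity", 4), ("perplexity", 5), ("perplexity", None),
    ("gemini", 2),
]
for platform, stats in position_summary(obs).items():
    print(platform, stats)
```

Blending all six appearances would give an average position of about 3.3, which hides the fact that Perplexity never places the brand in the top three.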

Citation Sources reveal which external domains AI platforms are drawing on to describe your brand. The distribution varies significantly by platform. On ChatGPT, Wikipedia and major news sites account for roughly 48% of citations. Perplexity leans heavily on Reddit and real-time news, at around 46.7%. Google AI Overviews draws on Reddit, product pages, and blogs, at about 21%. Knowing which sources dominate your brand’s AI narrative tells you where to focus your content and PR efforts.
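One way to compute those shares for your own brand is to group cited URLs by domain and platform. This sketch assumes citations have already been extracted from AI answers as (platform, URL) pairs; the `example.com` URLs are placeholders.

```python
from collections import Counter, defaultdict
from urllib.parse import urlparse


def citation_share(citations: list[tuple[str, str]]) -> dict[str, dict[str, float]]:
    """Share of citations per domain, computed separately for each platform."""
    counts: dict[str, Counter] = defaultdict(Counter)
    for platform, url in citations:
        host = (urlparse(url).hostname or "").lower()
        if host.startswith("www."):
            host = host[len("www."):]  # treat www.reddit.com and reddit.com as one domain
        counts[platform][host] += 1
    shares = {}
    for platform, counter in counts.items():
        total = sum(counter.values())
        # most_common() orders domains from most to least cited.
        shares[platform] = {host: n / total for host, n in counter.most_common()}
    return shares


citations = [
    ("perplexity", "https://www.reddit.com/r/saas/comments/abc"),
    ("perplexity", "https://reddit.com/r/marketing/comments/def"),
    ("perplexity", "https://news.example.com/story"),
    ("chatgpt", "https://en.wikipedia.org/wiki/Example"),
]
print(citation_share(citations)["perplexity"])
```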

Mention Volume Trends track changes in how frequently your brand is referenced in AI answers over time. A sudden spike in mentions paired with declining sentiment is often the earliest indicator of a cross-platform reputation issue developing before it surfaces in traditional monitoring channels.
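That early-warning pattern can be expressed as a simple rule over weekly data: flag a week when mentions jump well above their trailing average while sentiment falls below its trailing average. The window length and thresholds below are illustrative defaults, not industry standards, and sentiment is assumed to be a weekly mean on a -1 to 1 scale.

```python
from statistics import mean


def flag_risk_weeks(
    mentions: list[int],
    sentiment: list[float],
    window: int = 4,
    spike_ratio: float = 1.5,
    sentiment_drop: float = 0.1,
) -> list[int]:
    """Return indices of weeks where mentions spike and sentiment declines
    relative to the preceding `window` weeks."""
    if len(mentions) != len(sentiment):
        raise ValueError("mentions and sentiment must cover the same weeks")
    flagged = []
    for week in range(window, len(mentions)):
        base_mentions = mean(mentions[week - window:week])
        base_sentiment = mean(sentiment[week - window:week])
        spiked = mentions[week] >= spike_ratio * base_mentions
        declined = sentiment[week] <= base_sentiment - sentiment_drop
        if spiked and declined:
            flagged.append(week)
    return flagged


mentions = [40, 42, 38, 41, 44, 90]
sentiment = [0.45, 0.50, 0.48, 0.47, 0.46, 0.20]
print(flag_risk_weeks(mentions, sentiment))  # prints [5]
```

Requiring both conditions keeps ordinary launch spikes with stable sentiment from triggering alerts.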

Competitor Gap puts your visibility in context. If a key competitor appears in 75% of relevant AI queries and your brand appears in 40%, you have a 35-point visibility gap. That gap represents queries where customers are hearing a recommendation that doesn’t include you.
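A sketch of the gap calculation at the prompt level, assuming you record which prompt IDs produced an answer mentioning each brand. Besides the headline percentage-point gap, it returns the prompts where the competitor appears and you don’t; that set can be larger than the net gap suggests, because some of your mentions may come on prompts the competitor also wins. Prompt IDs here are placeholders.

```python
def competitor_gap(
    brand_hits: set[str], competitor_hits: set[str], total_prompts: int
) -> tuple[float, set[str]]:
    """Return (gap in percentage points, prompts where only the competitor appears)."""
    gap_points = 100 * (len(competitor_hits) - len(brand_hits)) / total_prompts
    competitor_only = competitor_hits - brand_hits
    return gap_points, competitor_only


prompts = [f"p{i}" for i in range(20)]
competitor = set(prompts[:15])  # 15 of 20 prompts = 75% visibility
brand = set(prompts[10:18])     # 8 of 20 prompts = 40% visibility
gap, missed = competitor_gap(brand, competitor, len(prompts))
print(gap, len(missed))  # prints 35.0 10
```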

Why Most Brand Monitoring Setups Miss the Bulk of the AI Signal

The problem isn’t that teams aren’t working hard. It’s that they’re using tools built for a different media environment.

Mistake 1: Monitoring social, ignoring AI synthesis. Most PR and marketing teams default to social media as the primary source of brand intelligence. But AI models don’t generate answers based on what’s trending on LinkedIn. They pull from their training data and, in some cases, live retrieval, which can include outdated forum posts, miscategorized reviews, and low-authority third-party sites. By the time that content surfaces in a brand monitoring alert, it may have already shaped thousands of AI-generated answers.

Mistake 2: Writing off AI-driven traffic as untrackable. Many teams notice branded search and direct traffic growing without a clear source in GA4 and write it off as unattributable. In practice, a significant portion is often “dark traffic”: users who read an AI answer and then search for the brand by name or type its URL directly. Because AI recommendations don’t always include clickable links, traditional attribution models break down. Treating this traffic as untrackable means losing a key signal about AI’s actual influence on brand discovery.
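A first step is to separate the AI traffic you can see from the traffic you can’t. This sketch buckets sessions by referrer host; the host list is an assumption to verify against your own analytics data, since assistant domains change over time. It only catches clicked links. Dark traffic still has to be inferred by trending direct and branded search sessions against a pre-AI baseline.

```python
from collections import Counter
from urllib.parse import urlparse

# Assumed AI assistant referrer hosts; check these against your own referral reports.
AI_REFERRER_HOSTS = {
    "chatgpt.com",
    "chat.openai.com",
    "perplexity.ai",
    "gemini.google.com",
    "copilot.microsoft.com",
    "claude.ai",
}


def classify_referrer(referrer: str) -> str:
    """Bucket a session as an explicit AI referral, direct, or another referral."""
    host = (urlparse(referrer).hostname or "").lower()
    if not host:
        return "direct"  # no referrer: where much AI-influenced traffic ends up
    if host.startswith("www."):
        host = host[len("www."):]
    if host in AI_REFERRER_HOSTS:
        return "ai_referral"
    return "other_referral"


sessions = [
    "https://chatgpt.com/",
    "",
    "https://www.perplexity.ai/search/x",
    "https://www.google.com/",
]
print(Counter(classify_referrer(r) for r in sessions))
```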

Mistake 3: Monitoring one platform and calling it done. ChatGPT gets most of the attention, but Gemini, Perplexity, and Claude all handle queries differently and draw on different source preferences. ChatGPT tends to respond with higher confidence, and some tests have reported error rates as high as 67% on specific tasks such as attributing news sources, though that is not a general hallucination rate. Claude takes a more cautious approach. The result is that the same brand query can generate meaningfully different answers across platforms, and about 80% of consumers say contradictory AI responses make them doubt the consistency of brand information. If you’re only monitoring one platform, you won’t see those contradictions until someone else points them out.
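Surfacing those contradictions can start with a simple cross-platform comparison. This sketch assumes each answer has already been reduced to a yes/no judgment of whether it recommends your brand, which simplifies real, nuanced answers; the prompts and brand names are hypothetical.

```python
def platform_disagreements(results: dict[str, dict[str, bool]]) -> dict[str, list[str]]:
    """results[prompt][platform] is True when that platform recommends the brand.

    Returns, for each prompt where platforms disagree, the platforms that do not
    recommend the brand.
    """
    disagreements = {}
    for prompt, by_platform in results.items():
        if len(set(by_platform.values())) > 1:
            disagreements[prompt] = sorted(p for p, rec in by_platform.items() if not rec)
    return disagreements


results = {
    "best crm for startups": {"chatgpt": True, "perplexity": True, "gemini": True, "claude": True},
    "acme vs globex": {"chatgpt": True, "perplexity": False, "gemini": True, "claude": False},
}
print(platform_disagreements(results))
# prints {'acme vs globex': ['claude', 'perplexity']}
```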

A 5-Step Framework to Set Up AI Reputation Monitoring Analytics

Getting started doesn’t require a complete technology overhaul. It requires the right structure.

Step 1: Define the prompts your customers actually ask. Start with three categories: commercial queries (“what’s the best [product category] for [use case]”), problem-based queries (“how do I solve [specific pain point]”), and trust-verification queries (“[brand name] vs [competitor]” or “[brand name] reliability”). These prompts become the foundation of your monitoring set.
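A sketch of how the three categories can be expanded into a concrete prompt set from templates. The template wording mirrors the examples above; the category, use cases, pain point, and brand names are hypothetical placeholders. Each template is filled with every combination of the values it actually uses.

```python
from itertools import product
from string import Formatter

TEMPLATES = {
    "commercial": ["what's the best {category} for {use_case}"],
    "problem": ["how do I solve {pain_point}"],
    "trust": ["{brand} vs {competitor}", "{brand} reliability"],
}


def build_prompt_set(templates: dict[str, list[str]], values: dict[str, list[str]]) -> list[tuple[str, str]]:
    """Return (category, prompt) pairs for every combination of placeholder values."""
    prompts = []
    for category, patterns in templates.items():
        for pattern in patterns:
            # Placeholder names used by this template, in order, without duplicates.
            fields = list(dict.fromkeys(name for _, name, _, _ in Formatter().parse(pattern) if name))
            for combo in product(*(values[field] for field in fields)):
                prompts.append((category, pattern.format(**dict(zip(fields, combo)))))
    return prompts


values = {
    "category": ["project management tool"],
    "use_case": ["remote teams", "agencies"],
    "pain_point": ["missed deadlines"],
    "brand": ["Acme"],
    "competitor": ["Globex", "Initech"],
}
for category, prompt in build_prompt_set(TEMPLATES, values):
    print(category, "|", prompt)
```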

Step 2: Map your baseline visibility across platforms. Run your core prompts across ChatGPT, Perplexity, Gemini, and at least one other platform. Because AI answers vary from one run to the next, run each prompt multiple times to get a stable visibility percentage. This baseline tells you where you’re visible, where you’re absent, and where you’re being described incorrectly.
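To judge how stable a visibility percentage is, you can attach a confidence interval to the repeated runs. This sketch uses the Wilson score interval, a standard choice for proportions that is our substitution, not something the tools above are known to use. `ask` is a hypothetical callable standing in for whatever platform API or tool you query; `fake_ask` simulates one.

```python
import math
import random
from typing import Callable


def estimate_visibility(
    ask: Callable[[str], str], prompt: str, brand: str, runs: int = 20
) -> tuple[float, float, float]:
    """Run one prompt `runs` times; return (rate, low, high), where low/high
    bound an approximate 95% Wilson score interval for the mention rate."""
    if runs <= 0:
        raise ValueError("runs must be positive")
    hits = sum(brand.lower() in ask(prompt).lower() for _ in range(runs))
    p = hits / runs
    z = 1.96  # ~95% confidence
    denom = 1 + z**2 / runs
    centre = (p + z**2 / (2 * runs)) / denom
    half = z * math.sqrt(p * (1 - p) / runs + z**2 / (4 * runs**2)) / denom
    return p, centre - half, centre + half


rng = random.Random(42)


def fake_ask(prompt: str) -> str:
    """Hypothetical stand-in for a real platform call; mentions Acme ~60% of the time."""
    return "Try Acme or Globex." if rng.random() < 0.6 else "Try Globex."


rate, low, high = estimate_visibility(fake_ask, "best crm for startups", "Acme", runs=30)
print(f"visibility {rate:.0%} (95% CI {low:.0%}-{high:.0%})")
```

A wide interval is a sign to add more runs before comparing platforms or tracking week-to-week change.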

Step 3: Score sentiment at the prompt level, not the brand level. Overall sentiment scores can hide significant problems. A brand might score well on “enterprise reliability” queries while performing poorly on “ease of onboarding” queries. That’s a product and content signal, not just a communications one. Prompt-level sentiment analysis makes the data actionable.
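A small sketch of why the aggregation level matters. It assumes each answer already has a sentiment score on a -1 to 1 scale from some upstream model, tagged with the prompt group it came from; the groups mirror the example above.

```python
from collections import defaultdict
from statistics import mean


def sentiment_by_prompt_group(scored: list[tuple[str, float]]) -> dict[str, float]:
    """Average sentiment per prompt group; scored holds (prompt_group, score) pairs."""
    groups: dict[str, list[float]] = defaultdict(list)
    for group, score in scored:
        groups[group].append(score)
    return {group: round(mean(scores), 2) for group, scores in groups.items()}


scored = [
    ("enterprise reliability", 0.8),
    ("enterprise reliability", 0.7),
    ("enterprise reliability", 0.9),
    ("ease of onboarding", -0.4),
]
print(round(mean(score for _, score in scored), 2))  # prints 0.5, which looks healthy
print(sentiment_by_prompt_group(scored))
# prints {'enterprise reliability': 0.8, 'ease of onboarding': -0.4}
```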

Step 4: Identify citation gaps. Extract the sources AI platforms are citing when they describe your competitors. Look for third-party review sites, industry directories, and community platforms where competitors have strong presence and you don’t. Also look for citations your competitors hold that are based on outdated or low-quality content. Those are the ones you can displace.
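Finding those gaps is essentially a set difference. This sketch assumes you have the set of domains cited for each competitor and for your own brand; the competitor names and domains are illustrative. Domains that cite several competitors but never you are listed first.

```python
def citation_gaps(competitor_domains: dict[str, set[str]], own_domains: set[str]) -> dict[str, list[str]]:
    """Map each domain that cites at least one competitor but never you
    to the competitors it cites, widest gaps first."""
    gaps: dict[str, list[str]] = {}
    for competitor, domains in competitor_domains.items():
        for domain in domains - own_domains:
            gaps.setdefault(domain, []).append(competitor)
    ordered = sorted(gaps.items(), key=lambda item: (-len(item[1]), item[0]))
    return {domain: sorted(names) for domain, names in ordered}


competitor_domains = {
    "Globex": {"g2.com", "reddit.com", "capterra.com"},
    "Initech": {"g2.com", "industryweekly.example"},
}
own_domains = {"reddit.com"}
print(citation_gaps(competitor_domains, own_domains))
# prints {'g2.com': ['Globex', 'Initech'], 'capterra.com': ['Globex'], 'industryweekly.example': ['Initech']}
```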

Step 5: Set up recurring benchmark reports. AI models and the sources they draw on change frequently, so a visibility rate measured in January may look quite different by March. Weekly reviews should focus on prompt-level fluctuations. Monthly reviews should compare competitor share of voice. Quarterly reviews should assess longer-term sentiment trends and identify any emerging risks before they become visible in traditional channels.
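For the weekly review, a simple starting point is to list prompts whose visibility rate moved more than a set amount since last week. The 15-point threshold is a hypothetical default to tune against your own run-to-run noise; prompts missing from either week are skipped.

```python
def weekly_movers(
    last_week: dict[str, float], this_week: dict[str, float], threshold: float = 0.15
) -> dict[str, float]:
    """Prompts whose visibility rate changed by at least `threshold` (absolute),
    ordered from the largest drop to the largest gain."""
    movers = {}
    for prompt in last_week.keys() & this_week.keys():
        delta = this_week[prompt] - last_week[prompt]
        if abs(delta) >= threshold:
            movers[prompt] = round(delta, 2)
    return dict(sorted(movers.items(), key=lambda item: item[1]))


last_week = {"best crm for startups": 0.60, "acme vs globex": 0.80, "acme reliability": 0.50}
this_week = {"best crm for startups": 0.35, "acme vs globex": 0.85, "acme reliability": 0.70}
print(weekly_movers(last_week, this_week))
```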

What to Look for in an AI Reputation Monitoring Tool

Not all tools in this space are built the same. Four criteria separate genuinely useful platforms from dashboards that generate reports without driving decisions.

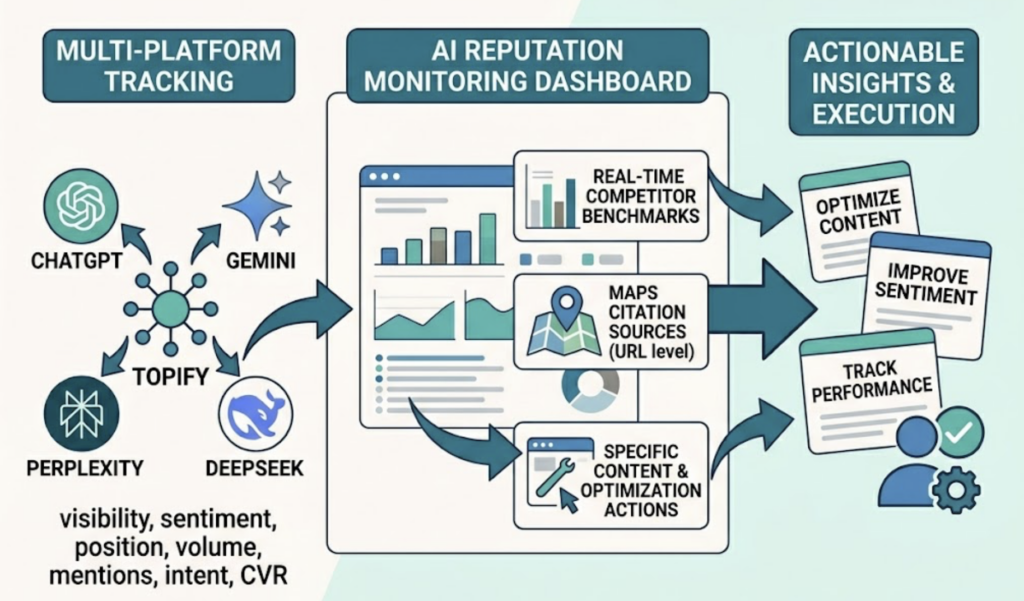

Platform coverage is non-negotiable. Any AI reputation monitoring software that only tracks one or two platforms will give you a structurally incomplete picture. Look for coverage across ChatGPT, Perplexity, Gemini, and ideally DeepSeek and other emerging platforms if your audience is global.

Metric depth determines whether the tool can answer the right questions. Surface-level mention counts aren’t enough. You need prompt-level sentiment breakdowns, citation source mapping, and position tracking by platform, not aggregated.

Automation and update frequency matter at scale. If you’re monitoring hundreds of prompts across multiple platforms, manual workflows don’t work. Look for systems that run queries automatically, surface anomalies proactively, and ideally suggest optimization actions alongside the data.

Actionability is the test most tools fail. Data that doesn’t connect to an action is just reporting. The most useful AI reputation monitoring platforms tell you not only what’s happening but where to focus to change it.

Topify is built around all four of these criteria. It tracks brand performance across ChatGPT, Gemini, Perplexity, DeepSeek, and other major AI platforms using seven core metrics: visibility, sentiment, position, volume, mentions, intent, and CVR (conversion rate). The AI reputation monitoring dashboard surfaces competitor benchmarks in real time, maps citation sources at the URL level, and connects those insights to specific content and optimization actions.

Where Topify separates itself from standard AI reputation monitoring solutions is execution. Most platforms stop at the data layer. Topify includes a one-click agent that can deploy optimization strategies directly from the dashboard, which reduces the gap between identifying a problem and acting on it from weeks to hours.

For teams that need to manage multiple brands or clients, the AI reputation monitoring system scales without requiring parallel manual workflows. Each project runs independently with its own prompt set and reporting cadence.

AI Reputation Monitoring Analytics Pricing: What to Expect

Pricing in this category varies significantly based on three factors: how many prompts you’re monitoring, how many platforms are covered, and whether the tool includes execution features or just reporting.

The market currently breaks into four tiers. Lightweight tools targeting individual brands or early-stage startups typically run $29 to $99 per month, covering 15 to 100 prompts with daily basic reporting. Mid-market platforms for growing teams sit in the $189 to $499 range, with 250 to 400 prompts, multi-seat access, and GEO audit features. Enterprise-grade AI reputation monitoring platforms start around $500 and scale to $2,500 or more per month, adding custom configurations, dedicated account management, and compliance tooling. Full-service managed solutions, which include content production, PR distribution, and active monitoring, typically start around $5,000 monthly.

Topify’s AI reputation monitoring platform starts at $99 per month for the Basic plan, which covers 100 prompts, 9,000 AI answer analyses across ChatGPT, Perplexity, and AI Overviews, and 4 project seats. The Pro plan at $199 per month scales to 250 prompts and 22,500 AI answer analyses. Enterprise plans start at $499 per month and are fully customizable. For teams that need full-service execution alongside monitoring, Topify’s managed GEO service starts at $3,999 per month.
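The analysis quotas appear consistent with one analysis per prompt, per platform, per day over a 30-day month. This is our inference from the published numbers, not a documented Topify formula, and it assumes the Pro plan covers the same three platforms as Basic:

```latex
\begin{aligned}
\text{analyses per month} &= \text{prompts} \times \text{platforms} \times \text{days} \\
\text{Basic: } 100 \times 3 \times 30 &= 9{,}000 \\
\text{Pro: } 250 \times 3 \times 30 &= 22{,}500
\end{aligned}
```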

Compared to the broader market, Topify’s entry price is below the category average for the level of platform coverage and metric depth it provides.

Conclusion

Your brand’s AI reputation isn’t static, and it’s not self-managing. Every day, AI systems are answering questions that directly affect how potential customers, partners, and investors perceive you. Most of that is happening without your knowledge.

AI reputation monitoring analytics gives you the visibility to change that. It’s not a defensive tool. Used well, it’s a growth system: one that tells you exactly which prompts to target, which citation gaps to close, and which competitor advantages to challenge.

The brands that build this capability now, before it becomes standard practice, will have a structural advantage that compounds over time. AI systems reward consistency, authority, and source depth. Building those takes time. Starting later just makes the gap harder to close.