Your team spent months building a clean brand identity. Then a potential customer opened Perplexity and asked, “What are the best tools in [your category]?” The response came back with five competitors, a confident tone, and zero mention of you.

The unsettling part isn’t that you weren’t included. It’s that you had no idea.

That’s the core problem with AI reputation right now. Most brand managers are still using tools built for a world where reputation lives on indexable pages. In the generative era, it doesn’t.

Why Traditional Reputation Tools Leave You Blind to AI

Tools like Google Alerts, Brandwatch, and Meltwater were engineered for a deterministic web. Content gets published to a URL, crawled by a bot, and retrieved based on keyword relevance. That’s how ORM has worked for two decades.

Generative AI breaks every assumption in that model.

When ChatGPT or Gemini answers a query, it synthesizes a unique response in real time. That response doesn’t live on a searchable URL. It’s generated within the context window of a specific prompt, then disappears. There’s no page for a monitoring tool to crawl, no feed to scrape, no alert to trigger.

The result: traditional monitoring coverage of AI-generated content remains effectively 0%. A brand’s reputation can shift dramatically inside the latent space of an LLM while every legacy tool shows green.

What makes this harder is the non-deterministic nature of AI responses. The same query can generate different narratives across different sessions, platforms, or timeframes. This means “brand reputation” in AI search isn’t a fixed fact to track. It’s a probability distribution that shifts continuously.
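To make the “probability distribution” idea concrete, here is a minimal sketch of how a mention rate could be estimated from repeated runs of the same prompt, with an uncertainty range around it. The function name and the sample numbers are hypothetical, and the Wilson score interval is one common statistical choice, not a method the tools named above are known to use.

```python
import math


def wilson_interval(mentions: int, samples: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a mention rate estimated from repeated runs.

    z = 1.96 corresponds to roughly 95% confidence.
    """
    if samples <= 0:
        raise ValueError("samples must be positive")
    p = mentions / samples
    denom = 1 + z**2 / samples
    center = (p + z**2 / (2 * samples)) / denom
    half = z * math.sqrt(p * (1 - p) / samples + z**2 / (4 * samples**2)) / denom
    return max(0.0, center - half), min(1.0, center + half)


# Hypothetical data: the brand appeared in 12 of 40 runs of the same prompt.
low, high = wilson_interval(mentions=12, samples=40)
print(f"observed rate {12 / 40:.0%}, plausible range {low:.0%} to {high:.0%}")
```

The practical takeaway: a single run of a prompt says very little. Even 40 runs leave a wide range, which is why tracking needs repeated sampling rather than one-off spot checks.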

| Feature | Traditional Search (SEO/ORM) | Generative Search (AI Reputation) |
|---|---|---|
| Data Retrieval | Deterministic: retrieves indexed links | Probabilistic: synthesizes new text |
| Primary Metric | Clicks and rankings | Mentions and citations |
| Content Stability | Static: pages remain consistent | Dynamic: responses evolve per prompt |
| Visibility | Publicly searchable via URLs | Ephemeral: exists within chat sessions |
| Authority Signal | Backlinks and PageSpeed | Semantic depth and entity clarity |

What AI Reputation Actually Means in 2026

In the generative era, “AI reputation” isn’t a collection of reviews. It’s a synthesized narrative.

It’s defined by what AI models believe to be true about your brand, based on training data, retrieval sources, and the specific prompt context. Unlike traditional ORM, which aggregates what users say about you, AI reputation is a summary of what the model itself says about you whenever someone asks.

Nearly 37% of consumers now start their search journeys on AI platforms rather than traditional search engines. And AI-driven traffic converts at 15.9%, compared to 1.76% for traditional organic search. The economic stakes of being misrepresented, or invisible, are real.

A complete AI reputation monitoring solution needs to track four dimensions:

Visibility (Mention Rate): How often your brand appears in AI responses for relevant category prompts. This is your share of voice in the generative ecosystem.

Sentiment (Emotional Framing): Not just positive or negative, but how the model frames you. “Reliable market leader” and “budget alternative with occasional bugs” can both score as broadly positive, but the second will tank your enterprise pipeline.

Position (Priority in List): In multi-brand recommendations, being first-mentioned carries meaningfully more authority than being listed fifth.

Source Attribution (Citation Trust): Which domains the AI cites when describing your brand. If it’s citing your technical documentation, authority is high. If it’s citing a three-year-old Reddit thread, that’s a different problem.
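As a rough illustration, three of these dimensions (visibility, position, and source attribution) can be computed from a set of captured AI answers. The sketch below assumes a hypothetical `SampledAnswer` record and made-up brand and domain names. Sentiment is left out because it needs a separate scoring model.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class SampledAnswer:
    """One captured AI response (hypothetical structure for illustration)."""
    prompt: str
    brands_in_order: list[str]  # brands in the order the answer names them
    cited_domains: list[str] = field(default_factory=list)


def visibility_and_position(answers, brand):
    """Return (mention rate, average list position) for one brand."""
    positions = [
        a.brands_in_order.index(brand) + 1  # 1 means first-mentioned
        for a in answers
        if brand in a.brands_in_order
    ]
    mention_rate = len(positions) / len(answers) if answers else 0.0
    avg_position = sum(positions) / len(positions) if positions else None
    return mention_rate, avg_position


def cited_domains_for(answers, brand):
    """Count the domains cited in answers that mention the brand."""
    counts = Counter()
    for a in answers:
        if brand in a.brands_in_order:
            counts.update(a.cited_domains)
    return counts


# Hypothetical sample of three captured answers.
answers = [
    SampledAnswer("best tools in category", ["Acme", "RivalA"], ["docs.acme.example"]),
    SampledAnswer("top options for teams", ["RivalA", "RivalB"]),
    SampledAnswer("which tool should I pick", ["RivalA", "RivalB", "Acme"], ["forum.example"]),
]
rate, position = visibility_and_position(answers, "Acme")
print(f"mention rate {rate:.0%}, average position {position}")
print(cited_domains_for(answers, "Acme").most_common())
```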

The 5 Things a Real AI Reputation Monitoring Solution Must Track

Not every AI reputation monitoring tool covers the same ground. Before evaluating any platform, it helps to know what a complete solution actually tracks.

1. Cross-platform visibility. Brand discovery is fragmented across AI engines. A brand may be well-represented on ChatGPT while remaining invisible on Perplexity or Gemini. This isn’t random: only 11% of cited domains overlap across major AI platforms, because each engine uses a different retrieval architecture with different source preferences. Any AI reputation monitoring software that only covers one platform is showing you a partial picture at best.

2. Sentiment score over time. A score of 80+ on a 0-100 scale typically signals “market leader” framing. Scores below 65 indicate potential reputational risk. More important than any single score is the trajectory. A downward trend over three weeks, even within a “safe” range, signals narrative drift before it becomes a baseline fact for the model.

3. Prompt-level intent breakdown. Knowing your brand was mentioned is not enough. A real AI reputation monitoring system tells you which specific prompts triggered the mention, and which didn’t. Prompts segment by intent: informational (“What is X?”), commercial (“Best X for use case Y”), and comparative (“Is X better than Z?”). Each segment can tell a completely different story about where you’re winning versus where you’re losing the narrative.

4. Competitor positioning. In AI recommendations, the interaction is zero-sum. If a competitor is mentioned instead of you, you don’t get partial credit. Monitoring must track “Share of Model,” the percentage of category mentions that belong to your brand versus rivals. A competitor’s visibility jumping 10% in a week typically signals a successful GEO push that requires a counter-strategy.

5. Source attribution integrity. AI models are only as accurate as what they’re citing. A robust AI reputation monitoring platform audits the domains AI engines use when describing your brand, including citation rate, source authority mapping (Wikipedia vs. unverified forum), and factual accuracy flags for hallucinations or outdated product information.
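To show how “Share of Model” and the intent breakdown fit together, here is a minimal sketch that computes a brand’s share of category mentions per intent segment. The record format, brand names, and the choice to count each brand once per answer are all assumptions made for illustration.

```python
from collections import Counter, defaultdict


def share_of_model(records, brand):
    """Share of category mentions per intent segment.

    records: iterable of (intent, brands_mentioned) pairs, one per AI answer.
    """
    totals = defaultdict(Counter)
    for intent, brands in records:
        totals[intent].update(set(brands))  # count each brand once per answer
    shares = {}
    for intent, counts in totals.items():
        all_mentions = sum(counts.values())
        shares[intent] = counts[brand] / all_mentions if all_mentions else 0.0
    return shares


# Hypothetical captured answers, labeled by prompt intent.
records = [
    ("informational", ["Acme", "RivalA"]),
    ("commercial", ["RivalA", "RivalB"]),
    ("commercial", ["RivalA", "Acme", "RivalB"]),
    ("comparative", ["RivalA"]),
]
for intent, share in share_of_model(records, "Acme").items():
    print(f"{intent}: {share:.0%}")
```

In this made-up sample, the brand holds half of informational mentions but only a fifth of commercial ones and none of the comparative ones, which is the kind of split an aggregate mention rate would hide.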

What Your AI Reputation Monitoring Dashboard Should Actually Show You

Most dashboards show you data. The ones worth using show you what changed, why, and what to do next.

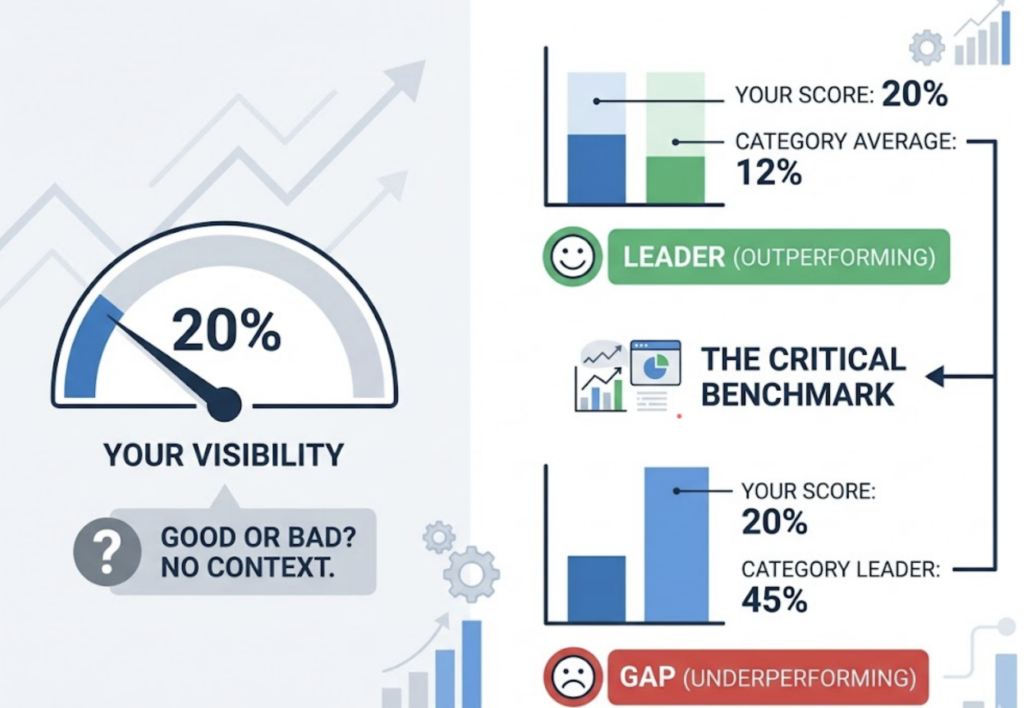

The visibility trend line is the starting point. It maps your brand’s inclusion rate across tracked prompts over time. But visibility in isolation is a vanity metric.

That’s where the category average becomes critical. If your visibility is 20%, that number means nothing without context. A category average of 12% makes you a dominant leader. A category leader sitting at 45% makes 20% a serious gap. An AI reputation monitoring analytics suite without a category benchmark is telling you your score without telling you the game.
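The arithmetic behind that comparison is simple. A minimal sketch, using the figures from the paragraph above and a hypothetical helper name:

```python
def gap_in_points(brand_rate: float, reference_rate: float) -> float:
    """Difference between two visibility rates, in percentage points."""
    return round((brand_rate - reference_rate) * 100, 1)


brand = 0.20

# Scenario 1: the category average is 12%.
print(gap_in_points(brand, 0.12))   # positive: ahead of the category

# Scenario 2: the category leader sits at 45%.
print(gap_in_points(brand, 0.45))   # negative: a gap to close
```

Reporting the gap in percentage points rather than as a relative percentage avoids ambiguity when the rates themselves are already percentages.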

The sentiment timeline works the same way. A sharp drop doesn’t always mean a crisis. It means something happened, and you need to find out what. NLP-based categorization (positive, neutral, negative) across sessions helps surface the shift pattern before it becomes a sustained trend.
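One simple way to separate a one-off dip from a sustained shift is to fit a straight line through recent scores and look at the slope. The sketch below does that with ordinary least squares. The 65-point floor comes from the scoring guidance earlier in this article. The threshold of one point per week and the labels are assumptions for illustration, not settings from any specific product.

```python
def trend_slope(scores: list[float]) -> float:
    """Least-squares slope of scores over equally spaced periods."""
    n = len(scores)
    if n < 2:
        raise ValueError("need at least two scores")
    mean_x = (n - 1) / 2
    mean_y = sum(scores) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(scores))
    var = sum((x - mean_x) ** 2 for x in range(n))
    return cov / var


def sentiment_status(scores, floor=65, max_drop_per_period=1.0):
    """Label a 0-100 sentiment series (thresholds are assumed, not standard)."""
    if scores[-1] < floor:
        return "at risk"
    if trend_slope(scores) <= -max_drop_per_period:
        return "drifting"
    return "stable"


# Hypothetical weekly scores: still in the "safe" range, but sliding.
weekly = [82, 80, 77, 75]
print(trend_slope(weekly), sentiment_status(weekly))
```

Here the latest score is still comfortably above the floor, yet the series is flagged because it loses more than two points a week.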

The competitor overlay adds the competitive dimension. A useful visualization maps brands on two axes: visibility score (how often mentioned) against citation rate (how often trusted as a source). This surfaces the strategic difference between brands with high visibility but low trust, and those with lower visibility but high citation authority. Knowing where you sit relative to competitors tells you whether your next move should be an awareness play or a credibility play.
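The two-axis view boils down to a quadrant check. A minimal sketch, assuming you compare each score against a benchmark such as the category average. The function name, the benchmarks, and the labels for the two quadrants not named above are hypothetical.

```python
def next_move(visibility, citation_rate, visibility_benchmark, citation_benchmark):
    """Map a brand's position on the two axes to a suggested focus."""
    visible = visibility >= visibility_benchmark
    trusted = citation_rate >= citation_benchmark
    if visible and trusted:
        return "defend position"
    if visible:
        return "credibility play"   # mentioned often, rarely cited
    if trusted:
        return "awareness play"     # cited when mentioned, rarely mentioned
    return "awareness and credibility"


# Hypothetical scores against hypothetical category benchmarks.
print(next_move(visibility=0.30, citation_rate=0.05,
                visibility_benchmark=0.20, citation_benchmark=0.10))
```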

This is what a real AI engine optimization platform’s dashboard looks like when the data is actually configured for decision-making, not just reporting.

How Topify Tracks AI Reputation Across Four Dimensions

Topify is built around the five tracking requirements above, integrated into a single platform designed for brand managers and marketing teams who need action-ready intelligence, not raw data exports.

The four core modules map directly to the dimensions that matter.

Visibility Tracking monitors brand inclusion across ChatGPT, Gemini, Perplexity, DeepSeek, and other major AI platforms, with a real-time Visibility Index that shows how often your brand appears in category-defining prompts. You’ll see the trend line, the category average, and the gap in a single view.

Sentiment Analysis scores each brand mention on a proprietary 0-100 scale and identifies the specific drivers pulling sentiment up or down. Is the model framing your pricing negatively? Is a specific feature getting described inaccurately? Topify surfaces the “why,” not just the score.

Source Analysis maps the referral graph for every AI answer, identifying which domains are being cited when AI describes your brand. It also surfaces “source opportunities,” high-authority sites that are already citing competitors but not yet referencing you.

Competitor Monitoring gives a head-to-head view against up to five rivals, tracking share of voice and position within AI recommendations across all covered platforms.

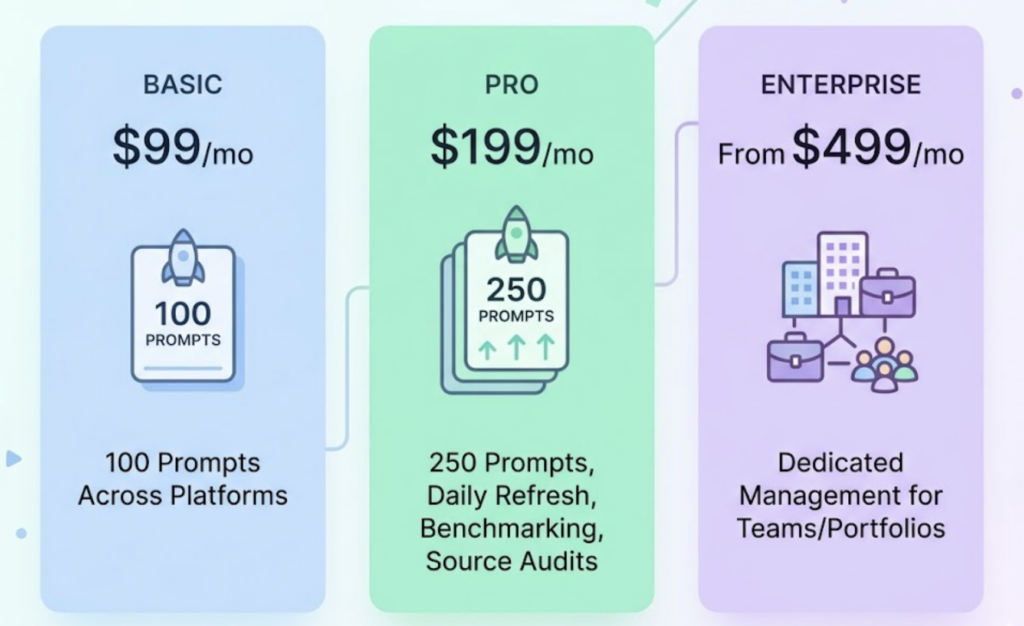

Topify’s Basic plan starts at $99/month, covering 100 prompts across major AI platforms. The Pro plan at $199/month expands to 250 prompts, daily refresh cycles, full competitor benchmarking, and detailed source attribution audits. For enterprise teams managing multiple brands or client portfolios, dedicated account management is available from $499/month. Full pricing details are available on Topify’s website.

From Monitoring to Action: What to Do With AI Reputation Data

Data without a decision framework is just overhead. Here’s how brand managers typically translate Topify’s insights into concrete moves.

Address sentiment at the source. When sentiment trends negative, the path forward isn’t a content blitz. Use Source Analysis to trace the root cause. In many cases, an LLM’s negative bias links back to a single widely-cited source, whether an outdated press release, a biased review aggregator, or a competitor’s comparison page. Publishing corrective content on high-authority domains, or updating the original source, can force a re-evaluation during the next retrieval cycle.

Close visibility gaps with prompt-level targeting. When Competitor Monitoring shows a rival dominating a specific query type (say, “best option for mid-market teams”), the fix is structural. Content needs to directly address those prompt patterns, using question-forward summaries, extractable fact blocks, and consistent product naming that builds entity clarity. This is the core of GEO execution.

Build citation authority where it counts. High visibility with a low citation rate signals that AI engines know your brand exists but don’t trust your site as a source. The action framework here is targeted placement on the domains AI engines already cite: industry directories, trade publications, Wikipedia categories, and niche research hubs relevant to your category. Topify’s Source Analysis identifies exactly which domains to prioritize.
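The underlying idea of a “source opportunity” can be sketched as a set difference: domains that AI answers cite for competitors but never for you, ranked by how often they appear. This is an illustration under assumed inputs with made-up domains, not a description of how Topify’s Source Analysis is implemented.

```python
from collections import Counter


def source_opportunities(brand_citations, competitor_citations, top_n=5):
    """Domains cited for competitors but not for the brand, most frequent first."""
    own = set(brand_citations)
    counts = Counter(d for d in competitor_citations if d not in own)
    return counts.most_common(top_n)


# Hypothetical citation logs collected from AI answers.
brand_citations = ["docs.acme.example", "directory.example"]
competitor_citations = [
    "tradepub.example", "tradepub.example", "tradepub.example",
    "directory.example",
    "research-hub.example", "research-hub.example",
]
print(source_opportunities(brand_citations, competitor_citations))
```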

Conclusion

Traditional ORM tools were built for a world where reputation lives on indexable pages. That world still exists, but it’s no longer where buying decisions start for a growing share of your audience.

AI-driven search interactions are projected to account for 30% of total digital discovery by 2026. Brands without an AI reputation monitoring solution in place won’t know what AI engines are saying about them until the effect shows up in pipeline data. By then, the narrative has already been repeated across thousands of sessions.

The starting point is straightforward: choose a platform that covers multiple AI engines, tracks sentiment over time, shows your visibility trend line against a category average, and gives you the source attribution data to act on what you find. That combination turns AI reputation from an invisible risk into a measurable, manageable channel.

FAQ

Q: What’s the difference between AI reputation monitoring and traditional online reputation management?

A: Traditional ORM aggregates public reviews and social mentions from indexable web pages, focusing on star ratings and sentiment across visible, crawlable content. AI reputation monitoring tracks how Large Language Models synthesize those signals into a conversational narrative. It measures “share of model” and “citation trust” rather than review volume, which are fundamentally different metrics with different drivers.

Q: How often should I monitor my brand’s AI reputation?

A: Daily or weekly monitoring is recommended for active brands. AI models update their retrieval and weighting frequently, and a negative narrative can become a baseline “fact” for a model within weeks. Monthly snapshots are often too slow to catch a drift before it becomes established. Topify’s Pro plan supports daily refresh cycles for this reason.

Q: Can I see how my AI reputation compares to competitors in the same category?

A: Yes. Effective AI reputation monitoring platforms use category averages and competitor overlays to benchmark your Visibility and Sentiment scores against rivals. This context matters: a visibility drop could be brand-specific or part of an industry-wide shift, and category-level benchmarks tell you which intervention makes sense.

Q: What is a visibility trend line and why does it matter in AI reputation tracking?

A: A visibility trend line is a time-series graph tracking the percentage of relevant prompts where your brand appears in AI responses. A single data point tells you where you are. The trend line tells you whether you’re gaining ground, losing it, or holding steady, and whether that movement correlates with a product launch, a PR event, or a competitor’s GEO push. Without the trend line, you’re navigating without a direction.