You open ChatGPT, type “best [your category] tool for mid-sized teams,” and scan the response. Your brand appears. You feel good. You close the tab.

Two days later, a colleague runs the same query. Different answer. Your brand is gone. A competitor you’d never paid much attention to is now the top recommendation.

That’s not a bug. That’s how AI search works, and it’s why manual spot-checks aren’t a tracking strategy.

Manual Spot-Checks Won’t Cut It Anymore

Traditional search engines are largely deterministic: type a query and you get essentially the same ranked list. AI search engines are probabilistic, meaning the same prompt can produce different answers depending on timing, session context, and a sampling parameter called “temperature” that controls how much randomness goes into each response.
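To make “temperature” concrete, here is a minimal sketch. It assumes a toy model that picks one of three hypothetical brands from made-up scores. Real AI engines sample word fragments rather than brands and don't expose their internals this way, so treat it as an illustration of the idea only.

```python
import math
import random

def softmax_with_temperature(scores, temperature):
    """Turn raw scores into probabilities; a higher temperature flattens them."""
    scaled = [s / temperature for s in scores]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def sample_brand(brands, scores, temperature, rng):
    """Pick one brand at random, weighted by its temperature-scaled probability."""
    probs = softmax_with_temperature(scores, temperature)
    return rng.choices(brands, weights=probs, k=1)[0]

# Hypothetical brands and scores, for illustration only.
BRANDS = ["BrandA", "BrandB", "BrandC"]
SCORES = [2.0, 1.5, 0.5]

rng = random.Random(42)
for temperature in (0.2, 1.0):
    picks = [sample_brand(BRANDS, SCORES, temperature, rng) for _ in range(10)]
    print(f"temperature={temperature}: {picks}")
```

At the lower temperature the top-scored brand wins almost every draw. At the higher one the other brands show up more often, which is the effect a single manual check can't see.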

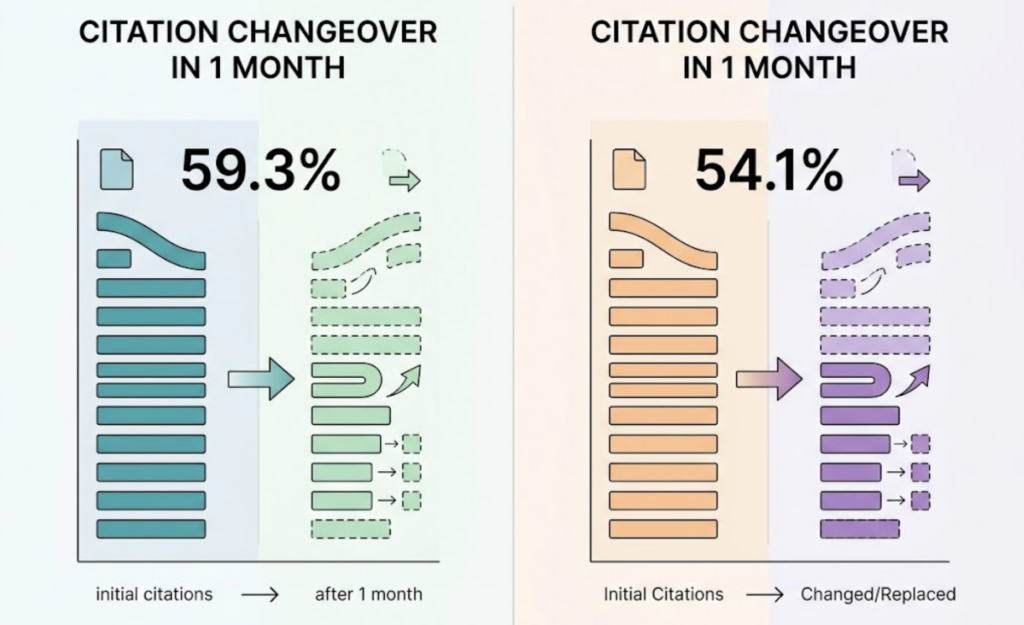

Research shows that 59.3% of domains cited by Google AI Overviews changed within a single month, and ChatGPT’s citation turnover runs at 54.1% over the same period. If you’re checking manually once a week, you’re not tracking visibility. You’re sampling noise.
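The article doesn't say how those turnover figures were calculated. One plausible reading, assumed here, is the share of last month's cited domains that no longer appear this month. The sketch below uses made-up domain names to show the arithmetic.

```python
def citation_turnover(previous, current):
    """Share of previously cited domains missing from the current snapshot."""
    previous, current = set(previous), set(current)
    if not previous:
        return 0.0
    dropped = previous - current
    return len(dropped) / len(previous)

# Hypothetical snapshots of domains cited for one prompt set.
last_month = {"example-reviews.com", "example-wiki.org", "brand-a.example", "forum.example"}
this_month = {"example-wiki.org", "brand-b.example", "forum.example", "news.example"}

turnover = citation_turnover(last_month, this_month)
print(f"{turnover:.1%} of last month's cited domains dropped out")  # 50.0%
```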

The scale of the problem compounds this. AI assistants now generate 45 billion conversations per month globally, accounting for roughly 56% of total search volume. Your brand is being queried constantly, across platforms you may not even be monitoring.

That’s exactly what an AI query tracking tool is built to solve.

What an AI Query Tracking Tool Actually Does

An AI query tracking tool automates what your team has been doing manually, but at a scale and frequency no human process can match. It sends a predefined library of prompts to multiple AI platforms, records every response, and analyzes how your brand appears over time.
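As a rough sketch of that loop, the code below sends a small prompt library to two stand-in engines and records every answer. The `AnswerRecord` type, the `run_prompt_library` helper, and the stub clients are all hypothetical. A real tracker would call each platform's API and store results over time.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Callable, Dict, List

@dataclass
class AnswerRecord:
    platform: str
    prompt: str
    answer: str
    captured_at: datetime

def run_prompt_library(prompts: List[str],
                       clients: Dict[str, Callable[[str], str]]) -> List[AnswerRecord]:
    """Send every prompt to every platform and keep each raw answer."""
    records = []
    for platform, ask in clients.items():
        for prompt in prompts:
            now = datetime.now(timezone.utc)
            records.append(AnswerRecord(platform, prompt, ask(prompt), now))
    return records

def mention_rate(records: List[AnswerRecord], brand: str) -> float:
    """Fraction of recorded answers that name the brand (case-insensitive)."""
    if not records:
        return 0.0
    hits = sum(brand.lower() in r.answer.lower() for r in records)
    return hits / len(records)

# Stub clients stand in for real platform APIs, which are not shown here.
def fake_engine_one(prompt: str) -> str:
    return "Popular options include BrandA and BrandB."

def fake_engine_two(prompt: str) -> str:
    return "Many teams choose BrandB."

records = run_prompt_library(
    ["best project tool for mid-sized teams", "BrandA vs BrandB"],
    {"engine_one": fake_engine_one, "engine_two": fake_engine_two},
)
print(len(records), mention_rate(records, "BrandA"))
```

Keeping the raw answer and a timestamp on every record is what lets you analyze trends later instead of relying on a single snapshot.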

The core distinction from traditional SEO tools is what gets measured. SEO rank trackers monitor positions in static, indexed results. An AI query tracking tool captures dynamic, generated answers, each one the output of a probabilistic model that may cite different sources every time it runs.

You can’t crawl your way to this data. AI responses are synthesized, not indexed. That’s why AI query tracking software exists as its own category, separate from anything your current SEO stack can provide.

One nuance worth understanding: there is a difference between a citation (your domain appears as a source link) and a mention (your brand is directly recommended in the synthesized answer). A brand with high citation rates but low mention rates has authority that isn’t converting into recommendations. An AI query tracking tool quantifies both, so you know exactly which gap to close.
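The citation-versus-mention split can be sketched as two separate rates over the same set of answers. The `Answer` type, the brand names, and the URLs below are made up for illustration. Matching a brand by substring is a simplifying assumption that a production tool would likely replace with something more robust.

```python
from dataclasses import dataclass, field
from typing import List, Tuple
from urllib.parse import urlparse

@dataclass
class Answer:
    text: str
    source_urls: List[str] = field(default_factory=list)

def is_mentioned(answer: Answer, brand: str) -> bool:
    """True if the brand name appears in the generated text."""
    return brand.lower() in answer.text.lower()

def is_cited(answer: Answer, domain: str) -> bool:
    """True if any source link points at the domain or one of its subdomains."""
    for url in answer.source_urls:
        host = (urlparse(url).hostname or "").lower()
        if host == domain or host.endswith("." + domain):
            return True
    return False

def citation_and_mention_rates(answers: List[Answer], brand: str,
                               domain: str) -> Tuple[float, float]:
    total = len(answers)
    if total == 0:
        return 0.0, 0.0
    cited = sum(is_cited(a, domain) for a in answers)
    mentioned = sum(is_mentioned(a, brand) for a in answers)
    return cited / total, mentioned / total

# Hypothetical answers: the domain is cited often, the brand rarely recommended.
answers = [
    Answer("BrandB is a solid pick.", ["https://www.branda.example/blog/guide"]),
    Answer("Consider BrandB or BrandC.", ["https://branda.example/pricing"]),
    Answer("BrandA is a strong choice.",
           ["https://docs.branda.example/", "https://reviews.example/top"]),
    Answer("BrandC leads here.", ["https://reviews.example/top"]),
]

c, m = citation_and_mention_rates(answers, "BrandA", "branda.example")
print(f"citation rate {c:.0%}, mention rate {m:.0%}")  # citation rate 75%, mention rate 25%
```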

The Metrics That Turn Raw AI Answers into Actionable Data

Knowing that your brand “appeared” in an AI answer is a start. It’s not enough.

A properly built AI query tracking dashboard tracks seven core dimensions: visibility (did your brand appear?), sentiment (how was it described?), position (where did it rank relative to competitors?), volume (how many users are querying this topic?), mentions (raw frequency), intent (what stage of the buyer journey does this query represent?), and CVR (the likelihood that an AI mention leads to a brand interaction).

Three things most teams overlook are sentiment, position, and source, meaning which domains an answer cites. AI platforms routinely describe the same brand differently. One engine may call your product “enterprise-grade.” Another may describe it as “a budget alternative.” Neither may match your actual positioning.

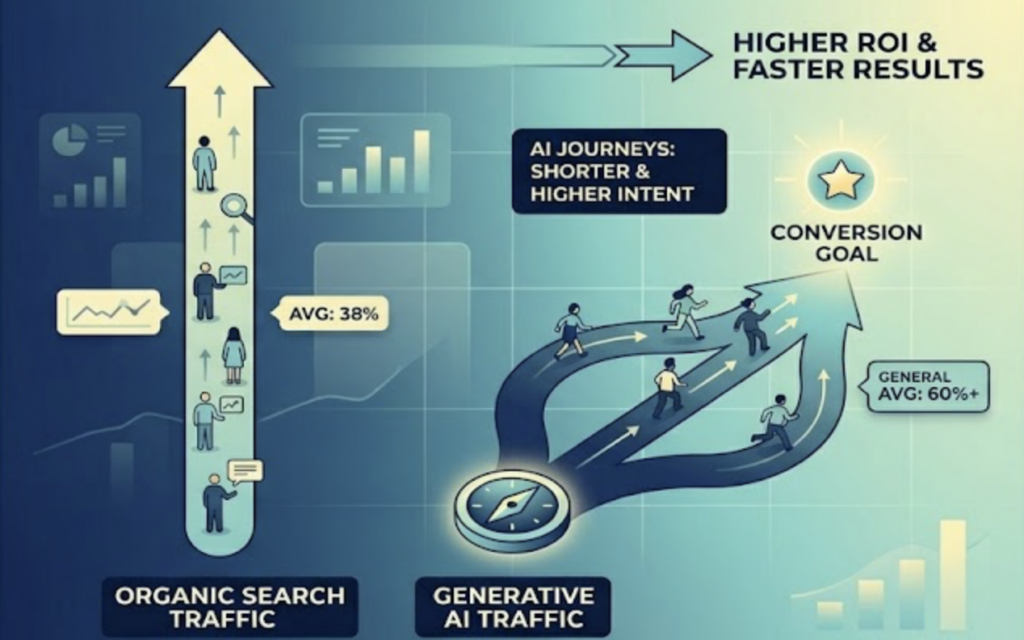

This matters more than it sounds. Research shows that visitors arriving from generative AI sources convert at 4.4x to 23x the rate of traditional organic search traffic. In transportation and logistics, AI-referred visitors convert at 62.76% versus 39.52% for organic. In SaaS and software, the gap runs 57.84% versus 37.17%. Microsoft Advertising data indicates that Copilot-assisted customer journeys are 33% shorter and drive a 76% lift in high-intent conversion rates.

That conversion premium means how AI describes your brand directly affects revenue, not just awareness. Your AI query tracking analytics need to capture sentiment and position, not just presence.

Why Platform Coverage Determines Whether Your Data Is Reliable

Not all AI platforms recommend brands the same way. The differences aren’t cosmetic.

ChatGPT synthesizes answers from a relatively compact source set, averaging 7.92 sources per response, with a strong lean toward Bing-indexed content, Wikipedia, and Reddit. Perplexity is built search-first and averages 21.87 sources per response, consistently favoring official brand sites and authoritative directories. Google AI Overviews is deeply integrated with Google’s Knowledge Graph, and notably, 40% of its citations come from pages ranking outside the traditional top 10 in organic search.

Claude shows a different pattern entirely, citing user-generated content and reviews at 2-4x the rate of other models. What works for ChatGPT visibility often won’t move the needle on Claude.

A brand that performs well on one platform may be invisible on another. A brand well-cited by Gemini may be described negatively by Claude. If your AI query tracking platform only monitors one engine, your data has a structural blind spot built in.

For brands operating globally, this complexity compounds fast. DeepSeek reached 100 million users in seven days after launch, breaking every prior growth record in the category. Platforms like Qwen and Doubao dominate Chinese-language AI search, each with distinct citation preferences. An AI query tracking system that ignores these platforms misses a meaningful share of global AI-driven discovery.

How Topify Tracks AI Queries Across Every Major Platform

Most AI visibility tools cover one or two platforms and market it as comprehensive coverage. Topify tracks ChatGPT, Gemini, Perplexity, DeepSeek, Doubao, Qwen, and other major AI engines across every market where your audience is actually searching.

The platform’s tracking architecture is built around those seven core metrics: visibility, sentiment, position, volume, mentions, intent, and CVR. These aren’t separate reports you have to triangulate manually. They’re unified into a single AI query tracking dashboard so your team can see, in one view, that ChatGPT mentions dropped this week, trace it back to a specific domain that stopped being cited, and understand whether sentiment shifted at the same time.

Topify’s Competitor Monitoring runs in parallel. You don’t just see your own data. You see which competitors AI platforms are recommending instead of you, where they’re gaining ground, and what content they’re being cited for. That’s the difference between knowing you’re losing visibility and knowing why.

The Source Analysis feature goes a level deeper, mapping the exact URLs and domains that AI platforms use when they reference your category. If AI engines consistently cite a third-party review site that doesn’t mention your brand, that’s a specific gap you can close with a targeted piece of content.

Topify’s team includes founding researchers from OpenAI and Google SEO champions, which is reflected in the algorithm’s precision. The Basic plan starts at $99/month, covering 100 prompts and 9,000 AI answer analyses monthly across 4 projects. The Pro plan at $199/month scales to 250 prompts and 22,500 analyses for larger teams. Get started here.

How to Track Your Brand’s Visibility in AI Search Results (Step by Step)

The long-tail question “how can I track my brand’s visibility in AI search results?” has a practical answer that most guides skip past: start with your prompt library, not your platform selection.

Step 1: Build a 30-50 prompt baseline set. Around 25% should be brand-verification queries (“What is [brand]?”, “How does [brand] price its product?”). The remaining 75% should split between category-discovery queries (“What’s the best [category] software for mid-sized teams in 2026?”) and competitive comparison queries (“[Brand] vs [Competitor]: what’s the difference?”).

Step 2: Set your baseline across multiple platforms simultaneously. Run your prompt library across ChatGPT, Perplexity, and Gemini at minimum. Record visibility rate, position, and sentiment for each platform separately. Don’t aggregate them. Platform differences are the insight.

Step 3: Define a share of voice target. In B2B SaaS, a reasonable initial benchmark is a 15% category query mention rate, meaning your brand should appear in AI answers to relevant category prompts at least 15% of the time. Track against this weekly, not monthly.

Step 4: Monitor for hallucinations. Research indicates GPT-4 produces factual errors in news-adjacent content at a 67% rate. If AI platforms are misrepresenting your pricing, describing discontinued features, or mischaracterizing your market position, that’s an active brand reputation problem, not just a visibility issue.

Step 5: Connect AI visibility to downstream business metrics. Track whether increases in AI mention frequency correlate with increases in branded search volume on Google. This “assist effect” is how you make the ROI case to stakeholders who still think in terms of clicks and sessions.
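Steps 1 through 3 can be sketched in a few lines. The code below splits a prompt budget using Step 1's 25/75 mix, then computes a mention rate per platform and compares it with Step 3's 15% benchmark. The function names and the sample results are hypothetical. The even split between discovery and comparison prompts is an assumption, since the steps don't specify one.

```python
from collections import defaultdict

TARGET_MENTION_RATE = 0.15  # Step 3's initial benchmark

def prompt_mix(total, brand_share=0.25):
    """Split a prompt budget into the three Step 1 groups."""
    brand = round(total * brand_share)
    remaining = total - brand
    # Assumption: split the rest evenly; any odd prompt goes to discovery.
    comparison = remaining // 2
    discovery = remaining - comparison
    return {
        "brand_verification": brand,
        "category_discovery": discovery,
        "competitive_comparison": comparison,
    }

def mention_rate_by_platform(results):
    """results: iterable of (platform, brand_was_mentioned) for category prompts."""
    counts = defaultdict(lambda: [0, 0])  # platform -> [mentions, total]
    for platform, mentioned in results:
        counts[platform][0] += int(mentioned)
        counts[platform][1] += 1
    return {p: m / t for p, (m, t) in counts.items()}

# Made-up observations: four category prompts per platform.
results = (
    [("ChatGPT", True)] + [("ChatGPT", False)] * 3
    + [("Perplexity", False)] * 4
    + [("Gemini", True)] * 2 + [("Gemini", False)] * 2
)

rates = mention_rate_by_platform(results)
below_target = [p for p, r in rates.items() if r < TARGET_MENTION_RATE]

print(prompt_mix(40))
print(rates)
print("Below target:", below_target)
```

The rates stay keyed by platform rather than averaged, in line with Step 2's advice not to aggregate.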

An AI query tracking solution like Topify automates steps 2 through 5, surfacing anomalies, competitor shifts, and source changes without manual analysis. That means your team spends time acting on data rather than collecting it.
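For the “assist effect” check in Step 5, a simple starting point is a Pearson correlation between weekly AI mention rate and weekly branded search volume. The weekly figures below are invented for illustration. A high correlation on its own doesn't show that AI mentions caused the search lift, so read it as a signal to investigate rather than proof.

```python
import math

def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length series."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two series of equal length with at least 2 points")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / math.sqrt(var_x * var_y)

# Hypothetical weekly data: AI mention rate and branded search volume.
weekly_mention_rate = [0.10, 0.12, 0.15, 0.14, 0.18, 0.21]
weekly_branded_searches = [900, 940, 1010, 990, 1080, 1150]

r = pearson(weekly_mention_rate, weekly_branded_searches)
print(f"correlation between mention rate and branded search: {r:.2f}")
```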

Conclusion

The gap between brands that are visible in AI search and those that aren’t is widening. By 2028, an estimated $750 billion in consumer spending will be directly influenced by AI search recommendations. The brands showing up consistently aren’t the ones doing the most manual checking. They’re the ones that built a structured AI query tracking system before it became obvious that they needed one.

The starting point isn’t complicated. Define your prompt library. Choose an AI query tracking platform that covers the engines your audience actually uses. Set a share of voice baseline, and track against it week over week. The brands that do this now will have 12 to 18 months of competitive data by the time this becomes standard practice.

Topify is worth a close look if you’re building this infrastructure today. It offers global AI engine coverage, metrics that connect directly to revenue, and execution tools that don’t require a team of analysts to interpret the results. Start here.

FAQ

Q: What’s the difference between an AI query tracking tool and a traditional SEO rank tracker?

A: A traditional SEO rank tracker monitors your position in static, indexed search results. An AI query tracking tool captures something fundamentally different: the probabilistic, generated answers that AI platforms produce each time a user asks a question. Because AI responses aren’t indexed pages, conventional SEO tools can’t access them. You need purpose-built AI query tracking software that directly queries AI engines, records the outputs, and analyzes them at scale over time.

Q: How many AI platforms should I be tracking?

A: At minimum, ChatGPT, Perplexity, and Gemini for most markets. If you have global operations or serve Asian markets, add DeepSeek, Qwen, and Doubao. Each platform uses different citation logic and source preferences, so a brand’s visibility can vary significantly across engines. Single-platform data creates structural blind spots in your reporting.

Q: How often should I run AI query tracking?

A: Weekly at minimum. Given that ChatGPT’s cited domains turn over at a 54.1% monthly rate, monthly checks will miss meaningful shifts. For brands actively running generative engine optimization (GEO) campaigns or competing in crowded categories, daily tracking is the more defensible standard.

Q: How can I track my brand’s visibility in AI search results without spending hours manually?

A: Use an AI query tracking platform that automates prompt execution, records responses over time, and surfaces anomalies automatically. The manual approach, where someone pastes queries into ChatGPT and screenshots the results, doesn’t scale and doesn’t produce trend data. Tools like Topify handle the monitoring layer so your team works with interpreted insights rather than raw AI outputs. Less time copy-pasting, more time acting on what the data shows.