Your domain authority is solid. Your top keywords rank well. Search Console shows impressions trending up. Then a colleague types your category into ChatGPT and your brand doesn’t appear once, while a competitor you’ve outranked on Google for two years gets the first recommendation.

That gap has a name: missing AI query tracking. And your current analytics stack has no way to show it to you.

AI Query Tracking Is Not the Same as AI Traffic Tracking

Most teams conflate these two things, and it’s an expensive mistake.

AI traffic tracking measures what happens after someone clicks a link from an AI response to your website. AI query tracking measures something upstream: whether your brand appears in the AI’s answer at all, across which prompts, on which platforms, and in what context.

The distinction matters because the click is increasingly optional. When a user asks Perplexity “What’s the best project management tool for remote teams?”, they get a synthesized answer. They may never click through to any website. If your brand isn’t in that answer, you’ve lost a potential customer before they ever reached your domain, and your analytics will show nothing unusual.

Google Search Console currently provides no native way to isolate AI Overview impressions from traditional search data. Google officially merges both into the same reporting view. GA4, meanwhile, frequently miscategorizes AI-referred traffic as “Direct” because many AI platforms strip referrer headers before passing traffic. The result: high-value AI visitors quietly enter your funnel labeled as Direct, while the actual source stays invisible.
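Where a referrer does survive, it can at least be bucketed by platform. The snippet below is a minimal sketch of that idea, not a GA4 feature: the hostname list and the `classify_referrer` function are illustrative assumptions, and you would extend the mapping with whatever hosts show up in your own logs.

```python
from typing import Optional
from urllib.parse import urlparse

# Hypothetical mapping of referrer hostnames to AI platforms (an assumption;
# extend it based on the referrers you actually observe).
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "www.perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
}


def classify_referrer(referrer: Optional[str]) -> str:
    """Return an AI platform name, "Direct" for a missing referrer, or "Other"."""
    if not referrer:
        # A stripped referrer header cannot be told apart from direct traffic.
        return "Direct"
    host = (urlparse(referrer).hostname or "").lower()
    return AI_REFERRER_HOSTS.get(host, "Other")


print(classify_referrer("https://chatgpt.com/"))  # ChatGPT
print(classify_referrer(""))                      # Direct
```

The second call shows the limitation described above: once the referrer is gone, no classification rule can recover the source.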

That’s not a minor reporting quirk. Research shows that AI-referred visitors convert at 4.4 times the rate of average organic search visitors. You can’t optimize what you can’t see.

How AI Query Tracking Actually Works

The core mechanism is straightforward: define a set of prompts your target customers are likely to ask AI platforms, submit those prompts, parse the AI’s responses, and record whether your brand was mentioned, where, and how.
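The parse-and-record step can be sketched as follows. This is a simplified illustration that assumes you already have the response text in hand; submitting prompts depends on each platform’s interface and is left out. `MentionRecord`, `detect_mention`, and `record_response` are hypothetical names, and the brand names are placeholders.

```python
import re
from dataclasses import dataclass


@dataclass
class MentionRecord:
    prompt: str
    platform: str
    brand: str
    mentioned: bool


def detect_mention(response_text: str, brand: str) -> bool:
    """Case-insensitive match that ignores the brand name inside longer words."""
    pattern = r"(?<!\w)" + re.escape(brand) + r"(?!\w)"
    return re.search(pattern, response_text, flags=re.IGNORECASE) is not None


def record_response(prompt: str, platform: str, response_text: str, brand: str) -> MentionRecord:
    """Turn one AI response into one tracking record."""
    return MentionRecord(prompt, platform, brand, detect_mention(response_text, brand))


record = record_response(
    prompt="best project management tool for remote teams",
    platform="Perplexity",
    response_text="Popular options include Acme and Globex.",
    brand="Acme",
)
print(record.mentioned)  # True
```

A plain string match is the simplest possible detector. Real brand names with aliases or common-word spellings would need a more careful matching rule.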

In practice, it’s significantly more complex. Modern AI platforms use Retrieval-Augmented Generation (RAG), pulling from live indexes and synthesizing answers that vary based on session context, model temperature, and recent data updates. The same prompt submitted twice can return different brand recommendations. That non-deterministic behavior is exactly why a single manual check is unreliable.
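One way to handle that variability is to submit the same prompt several times and report a rate rather than a yes or no. The sketch below assumes the repeated responses have already been collected; `mention_rate` is a hypothetical helper, and the sample responses are invented for illustration.

```python
def mention_rate(responses: list[str], brand: str) -> float:
    """Fraction of repeated responses to one prompt that mention the brand."""
    if not responses:
        return 0.0
    hits = sum(1 for text in responses if brand.lower() in text.lower())
    return hits / len(responses)


# Four runs of the same prompt, with different answers each time.
runs = [
    "Try Acme or Globex.",
    "Globex is the best fit.",
    "Acme leads this category.",
    "Consider Initech.",
]
print(mention_rate(runs, "Acme"))  # 0.5
```

A single manual check here would have reported either “mentioned” or “not mentioned” depending on which run you happened to see.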

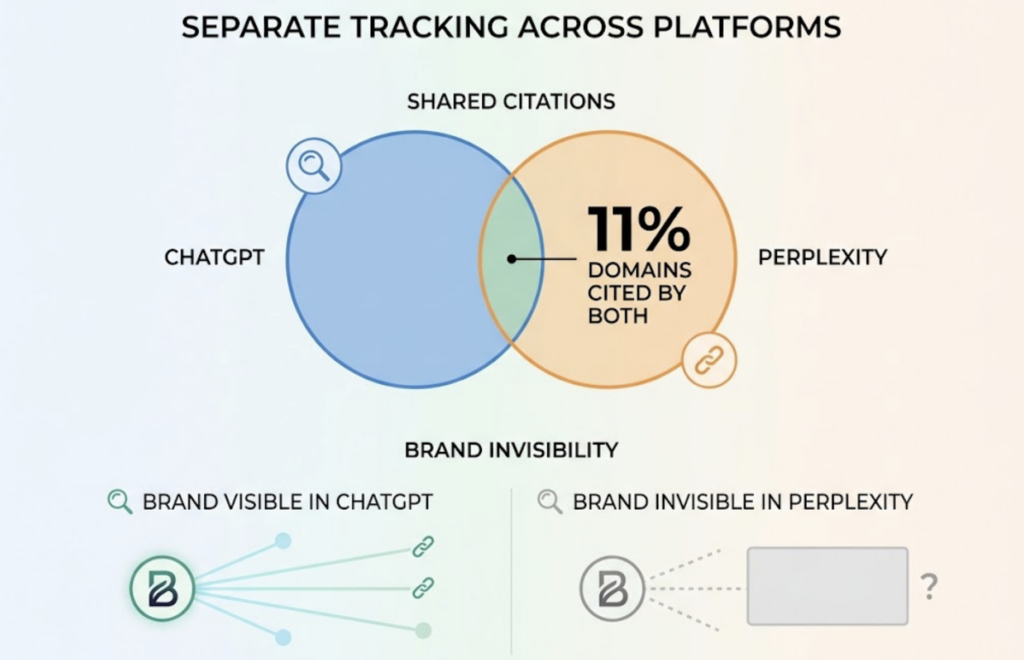

It also means tracking needs to happen across platforms separately. The architectures are fundamentally different. ChatGPT synthesizes from internal knowledge first, pulling external sources selectively for verification. Perplexity is retrieval-first, constructing answers around live web sources and citing heavily. Gemini is search-native, integrated tightly with Google’s index. Research shows that only 11% of domains are cited by both ChatGPT and Perplexity, which means a brand showing up strongly in one engine can be nearly invisible in another.
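To check cross-platform overlap for your own category, you can compare the sets of domains each engine cites. The sketch below uses one possible definition of overlap (shared domains divided by all cited domains); the research figure above may have been computed differently, so treat this as an assumption. The function name and sample domains are hypothetical.

```python
def citation_overlap(domains_a: set[str], domains_b: set[str]) -> float:
    """Percentage of all cited domains that appear in both platforms' citations."""
    union = domains_a | domains_b
    if not union:
        return 0.0
    return 100 * len(domains_a & domains_b) / len(union)


chatgpt_domains = {"a.example", "b.example", "c.example"}
perplexity_domains = {"c.example", "d.example"}
print(citation_overlap(chatgpt_domains, perplexity_domains))  # 25.0
```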

Add DeepSeek, Qwen, and the growing range of AI assistants, and the platform fragmentation problem becomes clear. AI query tracking that covers only ChatGPT isn’t tracking. It’s sampling.

What AI Query Tracking Actually Measures: 7 Metrics That Matter

Once you have a tracking system in place, the data falls into seven categories. Each measures a different dimension of your AI search visibility.

Visibility Rate is the foundational metric: out of all the prompts you’re monitoring, what percentage of AI responses include your brand? This is also expressed as Generative Share of Voice (GSOV), calculated as total brand mentions divided by total queries analyzed, multiplied by 100. A GSOV of 40% means your brand appears in roughly two out of every five relevant AI responses in your category.
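The GSOV calculation is simple enough to sketch in a few lines. The function name and the example counts are hypothetical; the formula is the one stated above.

```python
def generative_share_of_voice(brand_mentions: int, total_queries: int) -> float:
    """GSOV as a percentage: brand mentions / queries analyzed * 100."""
    if total_queries <= 0:
        raise ValueError("total_queries must be positive")
    return 100 * brand_mentions / total_queries


# 40 responses mentioning the brand out of 100 analyzed responses.
print(generative_share_of_voice(40, 100))  # 40.0
```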

Position captures where in the response your brand appears. Research indicates brands mentioned in the first two sentences receive five times more consideration than those cited later in the response. Being mentioned isn’t enough; placement matters.
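A rough way to capture position is to record which sentence first mentions the brand. The sketch below relies on a naive sentence splitter, which is an assumption that will misfire on abbreviations and lists; `first_mention_sentence` is a hypothetical helper and the brand names are placeholders.

```python
import re
from typing import Optional


def first_mention_sentence(response_text: str, brand: str) -> Optional[int]:
    """1-based index of the first sentence mentioning the brand, or None."""
    # Naive split: a sentence ends at ., !, or ? followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", response_text.strip())
    for index, sentence in enumerate(sentences, start=1):
        if brand.lower() in sentence.lower():
            return index
    return None


answer = "Several tools fit remote teams. Acme is a strong pick. Globex is cheaper."
print(first_mention_sentence(answer, "Acme"))    # 2
print(first_mention_sentence(answer, "Globex"))  # 3
```

With this measure, a brand that scores 1 or 2 falls inside the “first two sentences” window described above.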

Sentiment tracks the tone of how AI platforms describe your brand. An AI might mention you while calling you “a budget option” when your positioning is premium. That’s a different problem than not being mentioned at all, and it requires a different fix.

Share of Voice provides competitive context. Your absolute mentions could increase while your share of voice drops because competitors are growing faster. GSOV without competitive benchmarking gives you half the picture.
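The effect is easy to see with numbers. In the sketch below, the mention counts are invented and `share_of_voice` is a hypothetical helper: your mentions rise from 20 to 25, yet your share falls because a rival grew faster.

```python
def share_of_voice(mention_counts: dict[str, int]) -> dict[str, float]:
    """Each brand's percentage of all tracked brand mentions."""
    total = sum(mention_counts.values())
    if total == 0:
        return {brand: 0.0 for brand in mention_counts}
    return {brand: 100 * count / total for brand, count in mention_counts.items()}


last_month = share_of_voice({"you": 20, "rival": 20})
this_month = share_of_voice({"you": 25, "rival": 50})
print(last_month["you"])            # 50.0
print(round(this_month["you"], 1))  # 33.3
```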

Mention Count, Source Attribution (which domains the AI is pulling from when it cites your category), and AI Search Volume (how frequently real users are submitting the prompts you’re tracking) round out the full picture.

Topify tracks all seven of these metrics across ChatGPT, Gemini, Perplexity, DeepSeek, and others in a unified dashboard, surfacing the data in a format that connects visibility trends to specific source changes.

A Practical AI Query Tracking Checklist

Getting started doesn’t require perfecting every variable at once. Here’s what matters in the first 30 days.

Prompt selection. Build a prompt library that covers the full buyer journey: awareness-stage questions (“what tools help with AI search visibility”), evaluation questions (“best AI search analytics platform”), and decision-stage comparisons (“Topify vs [competitor]”). A starting library of 30 to 50 prompts gives enough coverage for a usable first baseline.

Platform coverage. At minimum, track ChatGPT, Perplexity, and Gemini. These three represent the majority of current AI search usage in most Western markets. If your audience skews technical or global, add DeepSeek and Qwen. The research suggests that 250 to 500 high-intent queries tracked consistently across platforms is the threshold for statistical stability.

Baseline measurement. Your first round of data is your baseline. Don’t act on it immediately. Run the same prompts for two to four weeks before drawing conclusions. What you’re looking for is a trend, not a single data point.

Review cadence. A weekly snapshot is enough to catch sudden changes. A monthly deep review is where you diagnose root causes and adjust content strategy. Refresh key pages at least quarterly, because pages that aren’t updated at that frequency are three times more likely to lose AI citations as fresher competitor content displaces them.

Action triggers. Define in advance what data will prompt a response. A visibility drop of more than 10 percentage points on a specific platform suggests a source attribution issue. A sentiment shift toward negative language around your brand points to off-site content needing attention.
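A trigger like the visibility threshold can be written down as a simple rule so nobody has to debate it after the fact. This is a minimal sketch: the function name, the data shape (visibility rate in percent per platform), and the sample numbers are assumptions.

```python
def visibility_drop_alerts(
    previous: dict[str, float],
    current: dict[str, float],
    threshold_points: float = 10.0,
) -> dict[str, float]:
    """Platforms whose visibility rate fell by more than threshold_points."""
    alerts = {}
    for platform, previous_rate in previous.items():
        if platform not in current:
            continue  # no new measurement, nothing to compare
        drop = previous_rate - current[platform]
        if drop > threshold_points:
            alerts[platform] = drop
    return alerts


last_period = {"ChatGPT": 42.0, "Perplexity": 35.0}
this_period = {"ChatGPT": 40.0, "Perplexity": 20.0}
print(visibility_drop_alerts(last_period, this_period))  # {'Perplexity': 15.0}
```

Because single weeks are noisy, it may be safer to feed this rule multi-week averages rather than raw weekly snapshots.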

4 Mistakes That Break AI Query Tracking Before It Starts

These aren’t edge cases. They’re the most common reasons teams invest in tracking and still don’t get useful data.

Tracking only branded prompts. Searching “[your brand name]” in ChatGPT tells you whether the AI knows you exist. It doesn’t tell you whether the AI recommends you when someone asks a category or comparison question, which is where most purchase decisions actually happen. Branded prompts should be a small fraction of your total prompt library.

Only checking ChatGPT. Given that only 11% of domains are cited by both ChatGPT and Perplexity, a brand can look healthy on one platform while being almost completely absent from another. On Perplexity, citations of a brand’s own website account for just 12% of total brand mentions, meaning the platforms that drive AI discovery aren’t always the platforms where you think you have coverage.

Treating it as a one-time audit. AI models update their citation behavior continuously. A snapshot report from three months ago reflects a different information environment than today. On the flip side, a single bad week of data doesn’t indicate a structural problem. Tracking is useful as a continuous signal, not as an annual exercise.

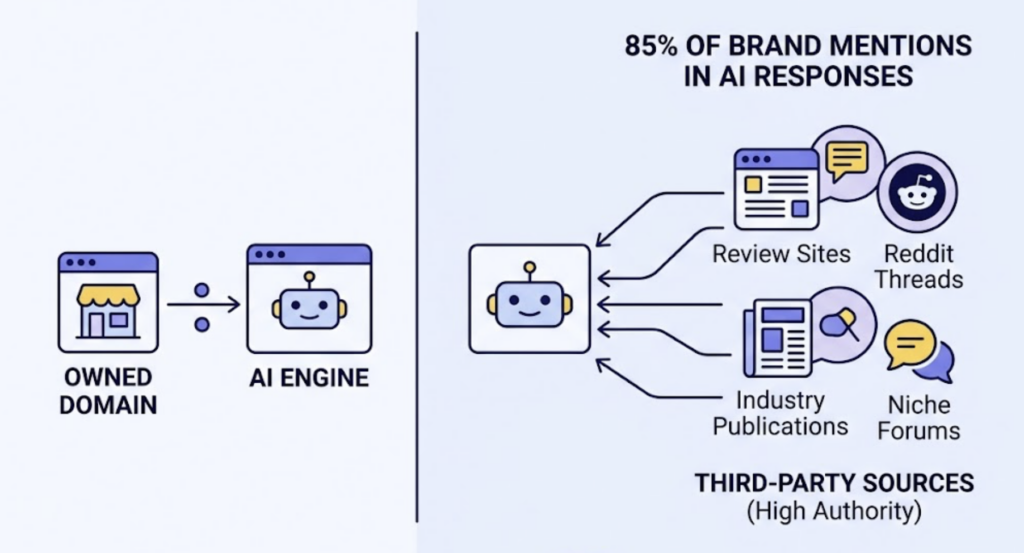

Ignoring source attribution. Knowing that your visibility dropped is half the information you need. The other half is understanding why. AI engines form their recommendations based on what sources they trust for your category. If your brand stops appearing in Perplexity responses, it often means the third-party sources that used to validate your authority have been displaced by fresher competitor mentions. That’s fixable, but only if you can see the source layer.
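If your tracking data includes the URLs an engine cited, a first look at the source layer is just a tally by domain. The sketch below assumes you have those URLs as a list; `cited_domain_counts` is a hypothetical helper, the sample URLs are invented, and `str.removeprefix` requires Python 3.9 or later.

```python
from collections import Counter
from urllib.parse import urlparse


def cited_domain_counts(cited_urls: list[str]) -> Counter:
    """Count citations per domain, treating www.example.com as example.com."""
    counts = Counter()
    for url in cited_urls:
        host = urlparse(url).hostname
        if host:
            counts[host.removeprefix("www.")] += 1
    return counts


citations = [
    "https://www.reddit.com/r/saas/comments/abc",
    "https://reddit.com/r/projectmanagement/comments/def",
    "https://reviews.example.com/best-tools",
]
print(cited_domain_counts(citations).most_common(1))  # [('reddit.com', 2)]
```

Comparing this tally from one period to the next shows which third-party sources gained or lost ground.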

How to Build an AI Query Tracking Strategy That Drives Action

The data is only useful if it connects to decisions. A working strategy follows four steps: Track, Diagnose, Optimize, Measure.

Track means running your prompt library consistently across platforms and recording the output. This is the operational foundation. Topify’s AI Volume Analytics surfaces high-value prompts you might not have identified manually, showing which queries are driving real AI search behavior in your category, not just which queries you assumed were important.

Diagnose means identifying where visibility is weak and understanding the cause. Platform-level gaps suggest structural content issues specific to how that engine retrieves data. A category of prompts with consistently low visibility suggests topical authority problems. Negative sentiment in AI descriptions points to off-site narrative management issues.

Optimize is where the data drives action. Research consistently shows that 85% of brand mentions in AI responses originate from third-party pages rather than owned domains. That means optimizing your own site content is necessary but not sufficient. The bigger lever is ensuring your brand is mentioned accurately and consistently in the sources AI engines trust: high-authority review sites, Reddit threads, industry publications, and niche forums. Source Analysis in Topify identifies which domains AI engines are pulling from for your category, so you know exactly where to focus off-site efforts.

Measure closes the loop by tracking whether changes in source attribution and content structure translate into Visibility and Position improvements over the following four to eight weeks. Research indicates brands that appear in the top three AI recommendations see up to 34% more qualified lead requests compared to those cited later in responses. That’s a revenue-level metric, not a vanity metric.

AI Query Tracking Pricing: What to Expect

Most AI query tracking tools price based on two variables: the number of prompts you track and the number of AI platforms covered. Volume and breadth both drive cost.

At the budget end, tools like Otterly AI start around $29 per month but typically cover basic mention presence without deep sentiment analysis or source attribution. Mid-market platforms like Peec AI start around €85/month and are popular with agencies for multi-brand reporting. Enterprise platforms like seoClarity start at $2,500/month and are built for large global teams needing SOC 2 compliance and historical data retention.

Topify’s pricing is structured around actual usage rather than inflated enterprise bundles. The Basic plan starts at $99/month (billed annually) and includes 100 prompt slots with coverage across ChatGPT, Perplexity, and AI Overviews. The Pro plan at $199/month expands to 250 prompts and 8 projects, which is the right scale for most growth-stage SaaS and mid-market brands running category-level and competitive tracking simultaneously. Enterprise plans start at $499/month with custom configurations and dedicated account management.

To size your prompt budget: start with 5 to 10 awareness-stage prompts per product category, 5 to 10 comparison/evaluation prompts, and 5 branded prompts. For most brands, 30 to 50 prompts cover the core tracking needs at the Basic tier. Scale up when you’re actively running optimization campaigns and need tighter measurement resolution.
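As a quick sanity check, the sizing rule can be turned into arithmetic. The sketch assumes awareness and comparison prompts are counted per product category while branded prompts are counted once overall; that reading, along with the function name, is an assumption.

```python
def prompt_budget(
    categories: int,
    awareness_per_category: int,
    comparison_per_category: int,
    branded_total: int = 5,
) -> int:
    """Total prompt slots needed under the sizing rule described above."""
    return categories * (awareness_per_category + comparison_per_category) + branded_total


print(prompt_budget(categories=2, awareness_per_category=10, comparison_per_category=10))  # 45
print(prompt_budget(categories=3, awareness_per_category=5, comparison_per_category=5))    # 35
```

Both examples land inside the 30 to 50 prompt range.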

Conclusion

Your SEO rankings haven’t disappeared. But they’re no longer the full picture of how buyers discover your brand. AI search is operating as a parallel discovery layer, and for the visitors it’s generating, conversion rates are 4.4 times higher than for standard organic traffic. That value is accruing to brands that can see and measure their AI presence, and compounding against those that can’t.

The starting point isn’t complex. Build a prompt list of 30 to 50 queries your buyers are likely asking. Pick two or three platforms to baseline. Run the tracking for four weeks before making any changes. What you learn in that first month will tell you more about your actual discovery gaps than a year of keyword rank reports.

Get started with Topify to set up your first prompt tracking project and see exactly where your brand stands across AI platforms today.

FAQ

Q: What is AI query tracking? A: AI query tracking is the practice of monitoring whether and how your brand appears in AI-generated responses across platforms like ChatGPT, Perplexity, and Gemini. It involves submitting a defined set of prompts, analyzing the AI’s answers, and recording metrics like visibility rate, position, and sentiment over time.

Q: How does AI query tracking work? A: A set of prompts representing your customers’ likely questions is submitted to AI platforms on a recurring basis. The responses are parsed to detect brand mentions, record where in the response the brand appears, and evaluate the tone. Because AI responses are non-deterministic (the same prompt can return different answers), tracking requires consistent sampling across 250 to 500 prompts to produce statistically stable data.

Q: How often should I update my AI query tracking data? A: A weekly snapshot is enough to catch sudden shifts. Monthly reviews are where you diagnose patterns and adjust strategy. Content that isn’t refreshed quarterly is three times more likely to lose AI citations as fresher competitor material displaces it in the retrieval layer.

Q: What’s the difference between AI query tracking and traditional SEO monitoring? A: Traditional SEO monitoring tracks keyword rankings and organic traffic, both of which measure what happens after a user reaches a search results page. AI query tracking measures what happens before that, specifically whether AI platforms include your brand in the synthesized answers they present to users, many of whom never click through to any website at all.