Your domain authority is solid. Your keyword rankings haven’t moved in months. But when someone opens Perplexity and asks, “What’s the best tool for [your category]?” your brand isn’t in the answer. Not because you’re irrelevant. Because the system tracking your visibility was never built to measure what happens inside an AI conversation.

That gap is exactly what an AI query tracking system is designed to close.

Most Brands Are Tracking the Wrong Queries in AI Search

Traditional keyword tracking tools were built for a different world. They measure where a page ranks on a results page. AI search doesn’t work that way.

When a user types a question into ChatGPT or Perplexity, the model doesn’t match keywords to URLs. It reasons through the query, synthesizes information from its training data and real-time sources, and generates a direct answer. The brand that appears in that answer isn’t necessarily the one with the highest domain authority. It’s the one the model associates most strongly with the intent behind the question.

AI search queries average more than 7 words. Users tend to ask complex, scenario-specific questions like “which CRM offers the best automation workflow for a remote startup team?” Traditional SEO tools have no way to capture these conversations, let alone tell you whether your brand appeared in the response.

That’s the problem. And it’s why tracking the right queries, across the right platforms, with a system built for AI matters more than most marketing teams currently recognize.

What Is an AI Query Tracking System?

An AI query tracking system is the infrastructure that monitors, collects, and analyzes how large language models respond to user prompts, specifically to measure how often a brand appears in AI-generated answers, where it appears, and with what sentiment.

It’s less about “where do we rank” and more about “are we even in the conversation.” The system tracks brand mentions across AI platforms, maps the prompts that trigger those mentions, and traces the sources AI engines pull from when constructing responses.

The difference from traditional SEO software comes down to three things. First, the input: AI tracking works with natural language prompts, not keywords. Second, the output: it measures share of model (SoM) and mention position, not page rankings. Third, the data type: AI responses are probabilistic and shift constantly, meaning static monthly snapshots miss most of what’s actually happening.

How an AI Query Tracking System Works: The 4-Layer Architecture

A professional AI query tracking system runs on four layers, each solving a different part of the measurement problem.

Layer 1: Prompt Library Construction. The system starts by building a library of high-value prompts reflecting how real users talk to AI. This goes beyond brand-name queries. It covers category questions (“best analytics platform for SaaS”), competitive comparisons (“alternatives to [competitor]”), and scenario queries (“how to improve lead scoring with AI”). Advanced platforms also detect “dark queries,” the sub-queries AI models silently generate to gather information when answering a broader question. These rarely show up in Google search data but drive significant AI citation behavior.

Layer 2: Cross-Platform Response Collection. The system simulates real user queries across ChatGPT, Perplexity, Gemini, and other major AI platforms, then captures the full generated responses at scale. Different models have different citation behaviors, and the overlap in sources cited between platforms typically falls below 25%. A brand visible on ChatGPT may be essentially absent from Perplexity’s answers to the same question.

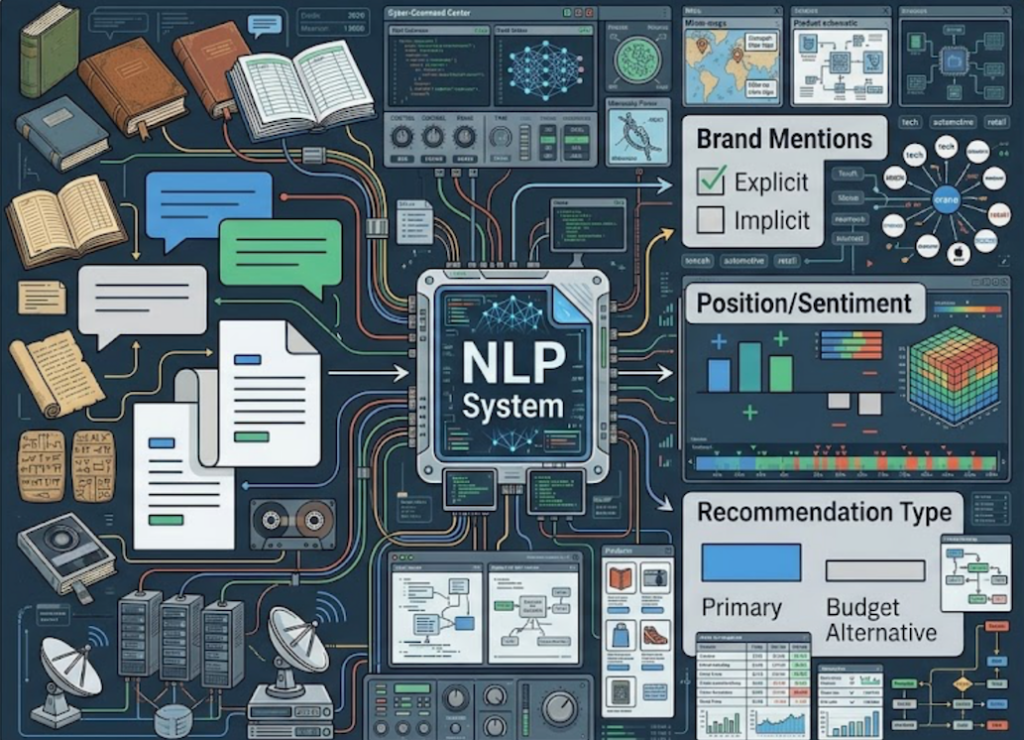

Layer 3: Brand Mention Detection. This is where NLP does the heavy lifting. The system scans collected responses for explicit brand mentions, tracks position, detects sentiment, and flags cases where the brand is cited as a primary recommendation versus a “budget alternative.” It also identifies implicit citations, cases where AI references a concept or dataset that originated with a brand even without naming the brand directly.

Layer 4: Analytics and Reporting. All that raw data gets aggregated into a dashboard showing trends over time, competitor comparisons, and actionable signals. The goal isn’t a report. It’s a direct feed into the content optimization workflow.
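To make Layers 1 and 3 concrete, here is a minimal sketch of a typed prompt library and a simple explicit-mention detector. It is an illustration under stated assumptions, not how any particular platform implements these layers: the `Prompt` and `Mention` types, the `find_mentions` helper, and the brand names are all hypothetical, and plain regex matching stands in for the NLP step (it handles neither sentiment nor implicit citations).

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Prompt:
    text: str
    kind: str  # hypothetical taxonomy: "category", "comparison", "scenario", "brand"


@dataclass(frozen=True)
class Mention:
    brand: str
    rank: int  # 1 = first tracked brand named in the response


def find_mentions(response: str, brands: list[str]) -> list[Mention]:
    """Return tracked brands found in the response, ordered by first appearance."""
    hits = []
    for brand in brands:
        # Whole-word, case-insensitive match on the literal brand name.
        match = re.search(rf"\b{re.escape(brand)}\b", response, flags=re.IGNORECASE)
        if match:
            hits.append((match.start(), brand))
    hits.sort()
    return [Mention(brand=name, rank=i) for i, (_, name) in enumerate(hits, start=1)]


# Layer 1: a tiny prompt library using the example prompts from the text.
library = [
    Prompt("best analytics platform for SaaS", "category"),
    Prompt("alternatives to ExampleCo", "comparison"),
    Prompt("how to improve lead scoring with AI", "scenario"),
]

# Layer 3: scan one collected response (invented here for illustration).
sample_response = (
    "For SaaS teams, Acme Analytics is a common pick; "
    "ExampleCo is a budget alternative."
)
mentions = find_mentions(sample_response, ["ExampleCo", "Acme Analytics", "Nimbus"])
```

In this sketch `rank` only records the order in which tracked brands appear. Telling a primary recommendation apart from a “budget alternative” would need the sentiment and context analysis that the text attributes to the NLP layer.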

Key Metrics an AI Query Tracking Dashboard Should Show

An AI query tracking dashboard that only shows whether a brand appears is leaving most of the value on the table.

The metrics that actually drive decisions are: Visibility Rate (what percentage of tracked prompts return a brand mention), Mention Position (whether the brand is the first mention or a buried reference, which has a meaningful impact on conversion), Sentiment Score (whether the brand is being actively recommended or just passively acknowledged), Query Volume (how often specific prompts are being asked across AI platforms), and Source Coverage (which domains AI is pulling from to build answers about the brand).
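As a rough illustration of how the first two metrics could be computed from collected responses, the sketch below assumes each observation records the prompt, the platform, and the brand's mention rank (or `None` when the brand is absent). The `Observation` type and both function names are hypothetical, and counting a prompt as visible when any platform mentions the brand is one possible definition, not a standard one.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Observation:
    prompt: str
    platform: str
    rank: Optional[int]  # 1 = first brand named; None = brand not mentioned


def visibility_rate(observations: list[Observation]) -> float:
    """Share of tracked prompts where at least one response mentions the brand."""
    prompts = {o.prompt for o in observations}
    visible = {o.prompt for o in observations if o.rank is not None}
    return len(visible) / len(prompts) if prompts else 0.0


def first_mention_share(observations: list[Observation]) -> float:
    """Among responses that mention the brand, the share where it is named first."""
    mentioned = [o for o in observations if o.rank is not None]
    if not mentioned:
        return 0.0
    return sum(o.rank == 1 for o in mentioned) / len(mentioned)


# Invented sample data: four prompts, two of which surface the brand.
observations = [
    Observation("best CRM for startups", "chatgpt", 1),
    Observation("best CRM for startups", "perplexity", None),
    Observation("CRM with workflow automation", "chatgpt", 3),
    Observation("alternatives to ExampleCo", "chatgpt", None),
    Observation("CRM for remote teams", "perplexity", None),
]

rate = visibility_rate(observations)
first_share = first_mention_share(observations)
```

Computing the rate per platform instead of across platforms is a small change (group observations by `platform` first) and is often more useful, given how much citation behavior differs between engines.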

Leading brands tend to score above 65 out of 100 on composite AI visibility scores. For most brands starting from zero, reaching a consistent 30-40% visibility rate on core category prompts is a strong first milestone.

Citation behavior varies significantly by platform. Perplexity cites external sources in over 96% of responses. ChatGPT cites in roughly 50% of cases. Claude cites in almost none. That difference shapes where content investment should go first.

Also worth watching: around 80% of brand mentions in AI responses are neutral statements rather than active recommendations. Moving even a fraction of those into the “recommended” category is where AI query tracking analytics earns its ROI.

The 3 Most Common Mistakes in AI Query Tracking Setup

Most teams that build AI monitoring for the first time make at least one of these mistakes. The result is a dashboard full of data that doesn’t reflect competitive reality.

Mistake 1: Only tracking brand-name queries. If you’re only asking “does AI mention [brand name]?”, you’re missing where most AI decisions actually happen. Category-level queries like “best project management tool for remote teams” or “what software helps with SOC 2 compliance” are where brand associations get built. A well-structured tracking setup puts roughly 70% of its prompts on category and scenario queries, not brand-name lookups. Over-indexing on brand terms hides the visibility losses happening at the top of the acquisition funnel.

Mistake 2: Monitoring only one platform. ChatGPT holds roughly 78% of the AI search market, which makes it an obvious starting point. But Perplexity attracts a different user profile: researchers and technical professionals who spend an average of 9 to 23 minutes per session. Gemini is deeply embedded in Google Workspace and Android. Each platform has its own citation logic and source preferences. Monitoring only one is like running SEO for a single search engine while ignoring all the others. The brands winning in AI search today are tracking across at least three platforms simultaneously.

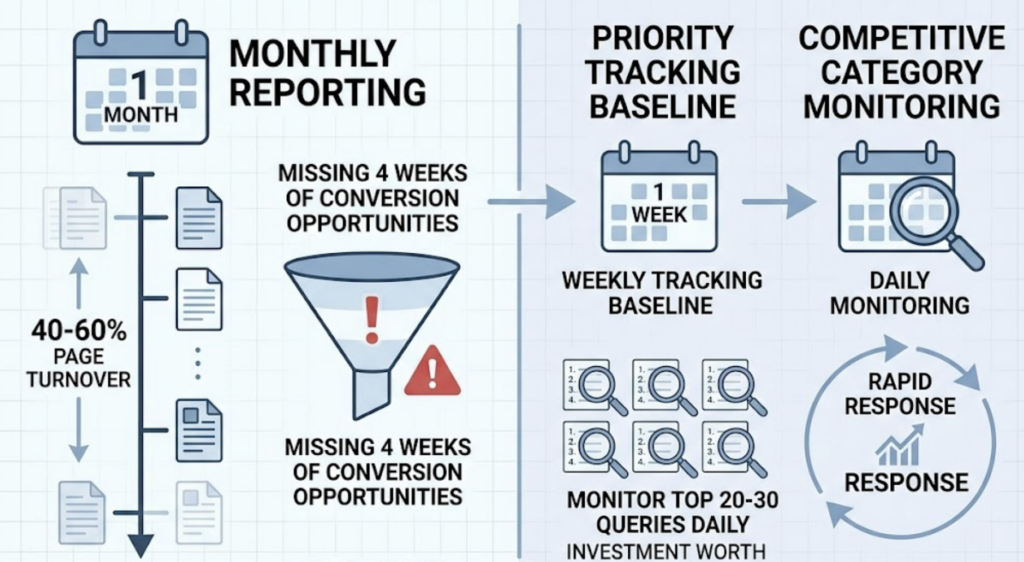

Mistake 3: Running monthly reports. AI citation patterns change fast. The pages AI platforms pull from turn over at a rate of 40-60% per month. By the time a monthly report catches a visibility drop, a brand may have missed four weeks of conversion opportunities. For high-priority prompts, weekly tracking is the baseline. In competitive categories, daily monitoring of the top 20 to 30 queries is worth the investment.
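The first mistake is easy to audit. A minimal sketch, assuming every prompt in the library carries a `kind` label (a hypothetical field), checks the share of category and scenario prompts against the rough 70% guideline mentioned above:

```python
from collections import Counter

NON_BRAND_KINDS = {"category", "scenario"}
TARGET_SHARE = 0.70  # the rough guideline from the text, not a hard rule


def non_brand_share(kinds: list[str]) -> float:
    """Fraction of prompts that are category or scenario queries."""
    counts = Counter(kinds)
    total = sum(counts.values())
    if total == 0:
        return 0.0
    return sum(counts[kind] for kind in NON_BRAND_KINDS) / total


# Invented example: a library that over-indexes on brand-name lookups.
kinds = ["brand"] * 5 + ["category"] * 3 + ["scenario"] * 2
share = non_brand_share(kinds)
needs_rebalancing = share < TARGET_SHARE
```

Here half the library is brand-name lookups, so the check flags it for rebalancing. Whether competitor-comparison prompts should count toward the 70% is a judgment call; this sketch leaves them out.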

A Practical Strategy for Building Your AI Query Tracking System

Here’s a five-step framework that works for teams of any size, whether you’re starting from scratch or replacing a manual spot-check process.

Step 1: Build your intent matrix. Start with 30 to 100 prompts that map directly to your revenue-driving use cases. Include category-level queries, competitor comparison queries, and specific scenario questions. Don’t try to track everything at once. Focus on the prompts where you’re most likely to lose a deal to a competitor.

Step 2: Choose an AI query tracking platform with prompt discovery built in. Manual prompt selection will always miss the queries that matter most. You need AI query tracking software that automatically surfaces high-value prompts, including the dark queries competitors haven’t identified yet, and that tracks across at least ChatGPT, Perplexity, and Gemini.

Step 3: Establish a baseline. Before optimizing anything, record your current visibility rate, sentiment scores, and mention positions across your full prompt set. That first week of data becomes your reference point for measuring whether content changes actually move the needle. Without a baseline, you’re flying blind.

Step 4: Set reporting frequency based on competitive intensity. Weekly is the standard. If you’re in a fast-moving category like AI tools, cybersecurity, or fintech, move to daily tracking for your top 20 prompts.

Step 5: Feed tracking data directly into content updates. When the system shows a visibility drop on a specific query, that’s a content action item. Find out what source AI is now citing instead of yours, then update or create content to reclaim that citation path. The feedback loop between AI query tracking analytics and content execution is what separates brands that improve over time from those that just watch the numbers.
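Steps 3 and 5 amount to a diff between the baseline and the latest run. The sketch below is one way to turn that diff into content action items. It assumes visibility is stored per prompt as a fraction between 0 and 1, and the `visibility_drops` name and the 10-point threshold are illustrative choices rather than recommended values.

```python
def visibility_drops(
    baseline: dict[str, float],
    current: dict[str, float],
    min_drop: float = 0.10,  # hypothetical alert threshold
) -> list[tuple[str, float]]:
    """Return (prompt, drop) pairs where visibility fell by at least min_drop,
    largest drop first."""
    drops = []
    for prompt, base in baseline.items():
        # Assumption: a prompt missing from the current run counts as zero visibility.
        now = current.get(prompt, 0.0)
        delta = round(base - now, 4)
        if delta >= min_drop:
            drops.append((prompt, delta))
    return sorted(drops, key=lambda item: item[1], reverse=True)


# Invented numbers for illustration.
baseline = {
    "best CRM for startups": 0.60,
    "CRM with workflow automation": 0.40,
    "alternatives to ExampleCo": 0.25,
}
current = {
    "best CRM for startups": 0.35,
    "CRM with workflow automation": 0.38,
    "alternatives to ExampleCo": 0.25,
}

action_items = visibility_drops(baseline, current)
```

Each flagged prompt is the starting point for the manual part of Step 5: checking which source the AI now cites instead and deciding what to update.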

Topify handles much of this workflow automatically. Its one-click execution feature lets teams define optimization goals in plain English, review the proposed strategy, and deploy without building a manual process from scratch.

How to Choose the Right AI Query Tracking Tool for Your Team

The criteria that matter most aren’t the ones most tools lead with. Platform coverage and dashboard design are table stakes. What separates useful AI query tracking solutions from expensive data exports is whether they tell you what to do next.

Here’s how the main options compare:

| Tool | Best For | Core Strength | Starting Price |
|---|---|---|---|
| Topify | SMBs, SaaS brands, agile teams | Prompt discovery, 7-metric tracking, one-click execution | $99/mo |
| Profound | Large enterprises, global brands | Deep data, geo and persona simulation | ~$400-500/mo |
| SE Ranking | SEO agencies | Citation format analysis, historical data | $129/mo |
| Otterly.ai | Solo founders, limited budgets | Basic sentiment monitoring | $29/mo |
| Ahrefs Brand Radar | Existing Ahrefs users | SEO data integration | Add-on pricing |

For most teams building an AI query tracking system for the first time, Topify offers the right balance between capability and cost. The Basic plan at $99/month covers 100 prompts and 9,000 AI answer analyses, tracks across ChatGPT, Perplexity, and AI Overviews, and includes High-Value Prompt Discovery that automatically surfaces dark queries.

The Pro plan at $199/month scales to 250 prompts and 22,500 AI answer analyses, with 10 seats and 8 projects. For agencies managing multiple brands, the per-project structure makes reporting cleaner.
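A quick back-of-the-envelope calculation shows what those plan limits could mean for tracking frequency. The even split across three platforms is an assumption made here for illustration (the three named for the Basic plan), not a documented allocation, and the function name is hypothetical.

```python
PLATFORMS = 3  # assumption: analyses split evenly across three platforms


def analyses_per_prompt(prompts: int, analyses: int) -> float:
    """Monthly AI answer analyses available per tracked prompt."""
    return analyses / prompts


# (prompts, monthly analyses) as quoted in the text.
plans = {"Basic": (100, 9_000), "Pro": (250, 22_500)}

per_prompt = {name: analyses_per_prompt(*limits) for name, limits in plans.items()}
per_prompt_per_platform = {name: value / PLATFORMS for name, value in per_prompt.items()}
```

Both plans work out to 90 analyses per prompt per month. Under the even-split assumption that is 30 per platform, or roughly one check per prompt per platform per day.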

The more meaningful difference is what Topify does with the data. Most AI query tracking platforms stop at monitoring. Topify is built around execution. When visibility drops on a key prompt, the system identifies the content actions most likely to recover it and helps deploy them. For teams without a dedicated GEO strategist in-house, that closed loop is the difference between a dashboard and an actual growth channel.

Topify tracks seven core metrics: visibility, sentiment, position, volume, mentions, intent, and CVR. That last metric, estimated conversion visibility rate, is something most AI query tracking dashboards still don’t surface. Knowing a brand appears in AI answers is useful. Knowing which appearances are actually driving users toward a purchase decision is a different level of intelligence entirely.

Get started with Topify to set up your first prompt library and see where your brand stands across major AI platforms within a few minutes.

Conclusion

The brands that treat AI query tracking as a bolt-on to their existing SEO stack will consistently underperform the ones that build it as a primary measurement system. Traditional organic search traffic has already declined 15 to 25% in many categories as users shift to AI-generated answers. That trend isn’t reversing.

Start with a focused set of 30 to 50 high-intent prompts. Track across multiple platforms. Move to weekly reporting on your core queries. And choose an AI query tracking solution that closes the loop between data and execution, not one that just adds another dashboard to ignore. The gap between brands that appear in AI answers and those that don’t is widening faster than most teams realize. The time to build the system is before that gap shows up in revenue.

FAQ

Q: What is an AI query tracking system?

A: An AI query tracking system monitors how users interact with AI platforms like ChatGPT, Perplexity, and Gemini, specifically tracking whether and how often a brand appears in AI-generated responses. It measures share of model (SoM), mention position, sentiment, and the sources AI platforms reference when constructing brand-related answers.

Q: How does an AI query tracking system work?

A: The system simulates real user queries across major AI platforms, captures the full generated responses, and uses NLP to extract brand mentions, analyze sentiment, and identify citation sources. It then aggregates this data into an AI query tracking dashboard showing trends, competitor comparisons, and content optimization signals over time.

Q: What metrics should an AI query tracking dashboard show?

A: The core metrics are visibility rate (how often a brand appears across tracked prompts), mention position (where in the response the brand appears), sentiment score (recommended vs. neutral vs. negative), query volume (how frequently specific prompts are asked across AI platforms), and source coverage (which domains AI pulls from when generating brand-related answers).

Q: What’s the difference between an AI query tracking tool and traditional SEO software?

A: Traditional SEO software tracks keyword rankings and backlinks on indexed web pages. An AI query tracking tool measures brand visibility inside AI-generated conversational answers. The inputs are natural language prompts rather than keywords, the outputs are probabilistic metrics like SoM and citation frequency, and the data changes fast enough that monthly reporting is too slow to be useful.