Your keyword rankings are solid. Your domain authority is holding.

Then someone on your team types your category into Perplexity and

finds three competitors in the answer. Your brand isn’t there. Your

analytics show nothing unusual, because traditional tools weren’t

built to see what AI is doing with your brand.

That’s the blind spot AI query tracking analytics is designed to close.

AI Query Tracking vs. Keyword Tracking: They Measure Completely Different Things

Traditional SEO tracking has a simple logic: a keyword maps to a

ranked URL, a ranked URL earns clicks. You optimize the page,

you move up the list. Clear cause and effect.

AI query tracking doesn’t work that way at all.

When a user asks ChatGPT or Perplexity a question, the model

synthesizes a narrative answer using Retrieval-Augmented Generation

(RAG). It’s not returning a ranked list of pages. It’s deciding

which brands, facts, and sources to include in a generated response.

Your Google ranking position has almost no bearing on that decision.

The data makes this concrete: approximately 70% of the domains

cited in AI-generated responses don’t appear in Google’s top

organic results for the same queries. Ranking well on Google isn’t a

prerequisite for being mentioned by AI.

Here’s what the two systems are actually tracking:

| Feature | Traditional SEO Tracking | AI Query Tracking Analytics |
|---|---|---|
| Primary Metric | Ranking Position (1–100) | Brand Mention Rate & Citation Frequency |
| Unit of Analysis | Short/mid-tail keywords | Conversational prompts & intent maps |
| Output Format | Ordered list of URLs | Synthesized narrative text |
| Visibility Logic | Algorithmic ranking factors | Semantic relevance & information gain |
| Traffic Nature | Click-dependent | Often zero-click / impression-heavy |

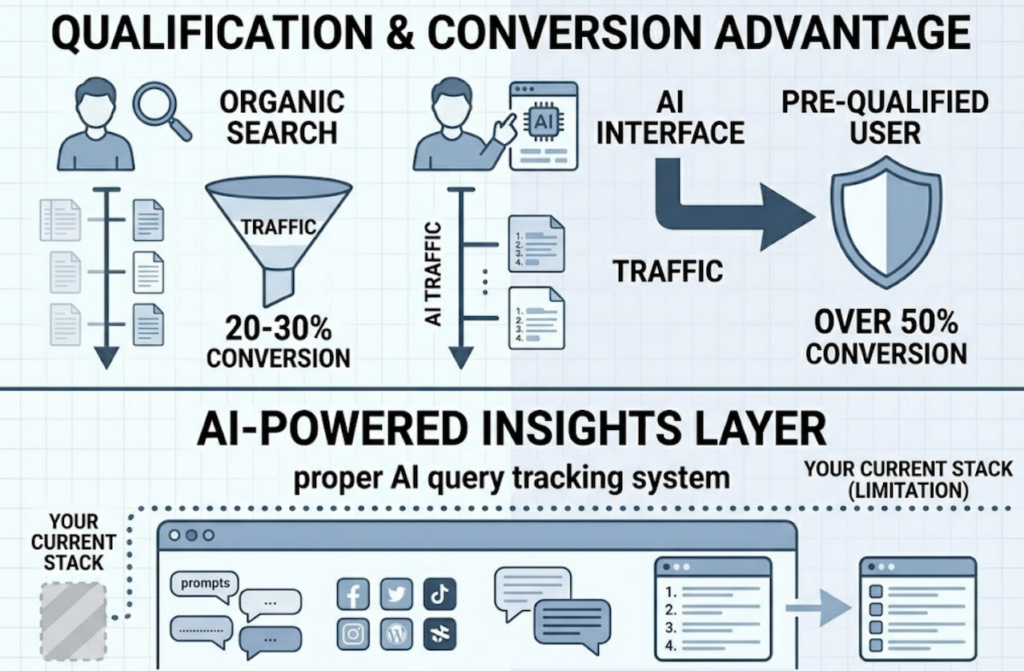

The conversion case for AI traffic is worth noting. In sectors like

SaaS and retail, AI-referred visitors convert at over 50%, compared

to the 20–30% typical of organic search. By the time someone clicks

an AI citation, the AI has already done the qualification work

upstream. The impression matters even when there’s no click.

A proper AI query tracking system tells you which specific prompts

trigger your brand exposure, on which platforms, with what narrative,

and against which competitors. That’s a data layer your current

stack almost certainly can’t see.

5 Metrics That Separate a Useful AI Query Tracking Dashboard from a Vanity Report

Most AI tracking software shows you a mention count.

That’s not enough.

Here’s what a professional AI query tracking dashboard actually

needs to surface, and why each metric carries distinct business value.

Visibility: Share of Voice across platforms

Visibility measures how often your brand appears in AI-generated

answers for a defined prompt set. The key nuance is cross-platform:

there’s only an 11% overlap between the domains ChatGPT cites and

those Perplexity cites for the same queries. A brand with 60%

visibility on ChatGPT for “enterprise security” prompts may have

15% on Perplexity. You need both numbers to understand actual exposure.
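
To make the per-platform split concrete, here is a minimal sketch of how visibility could be computed from logged AI responses. The record layout, the `visibility_by_platform` helper, and the sample brands are illustrative assumptions, not any tool's actual schema, and the matching is a naive substring check.

```python
from collections import defaultdict

def visibility_by_platform(records, brand):
    """Return {platform: share of responses that mention `brand`}.

    `records` is an iterable of (platform, prompt, response_text) tuples.
    Matching is a case-insensitive substring check (an assumption; real
    tracking would need alias and entity handling).
    """
    totals = defaultdict(int)
    hits = defaultdict(int)
    needle = brand.lower()
    for platform, _prompt, text in records:
        totals[platform] += 1
        if needle in text.lower():
            hits[platform] += 1
    return {platform: hits[platform] / totals[platform] for platform in totals}

# Hypothetical sample data, for illustration only.
records = [
    ("chatgpt", "best enterprise security tools", "Acme and Globex lead the field."),
    ("chatgpt", "enterprise security for banks", "Consider Acme for compliance."),
    ("perplexity", "best enterprise security tools", "Globex and Initech are popular."),
    ("perplexity", "enterprise security for banks", "Acme is one option."),
]
scores = visibility_by_platform(records, "Acme")
```

Keeping one score per platform, rather than a single blended number, is what exposes the kind of gap described above.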

Position: Where in the narrative your brand lands

In AI answers, “mentioned” and “recommended first” are completely

different outcomes. Position tracking distinguishes whether your

brand is the primary recommendation, a secondary mention, or a

footnote citation. Mention volume without position data tells you

almost nothing about influence.
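
One rough way to approximate position is to look at the order in which tracked brands are first named in a response. The sketch below assumes that order of first mention is a usable proxy for prominence, which will not always hold, and it does not detect footnote citations. The function names and sample brands are hypothetical.

```python
def mention_rank(response_text, brand, competitors):
    """Return the 1-based order in which `brand` is first named among all
    tracked brands, or None if `brand` does not appear in the response."""
    text = response_text.lower()
    first_seen = {}
    for name in [brand, *competitors]:
        index = text.find(name.lower())
        if index != -1:
            first_seen[name] = index
    if brand not in first_seen:
        return None
    ordered = sorted(first_seen, key=first_seen.get)
    return ordered.index(brand) + 1

def position_label(rank):
    """Map a rank to a coarse label (a simplification for this sketch)."""
    if rank is None:
        return "absent"
    return "primary" if rank == 1 else "secondary"

answer = "For most teams, Globex is the top pick. Acme is a solid alternative."
acme_rank = mention_rank(answer, "Acme", ["Globex", "Initech"])
```

Here `acme_rank` is 2, so `position_label(acme_rank)` reports a secondary mention even though a plain mention count would treat both brands the same.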

Volume: AI prompt-level search demand

Not all queries are worth tracking. Volume data shows which

prompts are gaining real traction in generative AI responses,

rather than relying on estimated keyword counts from a traditional tool. Topify

surfaces this through its High-Value Prompt Discovery feature,

which automatically identifies the queries already driving

impressions in AI Overviews, even when those queries aren’t

yet generating clicks.

Sentiment: How the AI actually describes your brand

This one gets overlooked most often. An AI might mention your

brand in 80% of relevant responses while consistently describing

your pricing as “complex” or your product as “better suited for

small teams.” That’s negative visibility, and it compounds quietly.

A sentiment index built on NLP classifies the tone of every

mention so your team catches narrative drift before it becomes

a positioning problem.

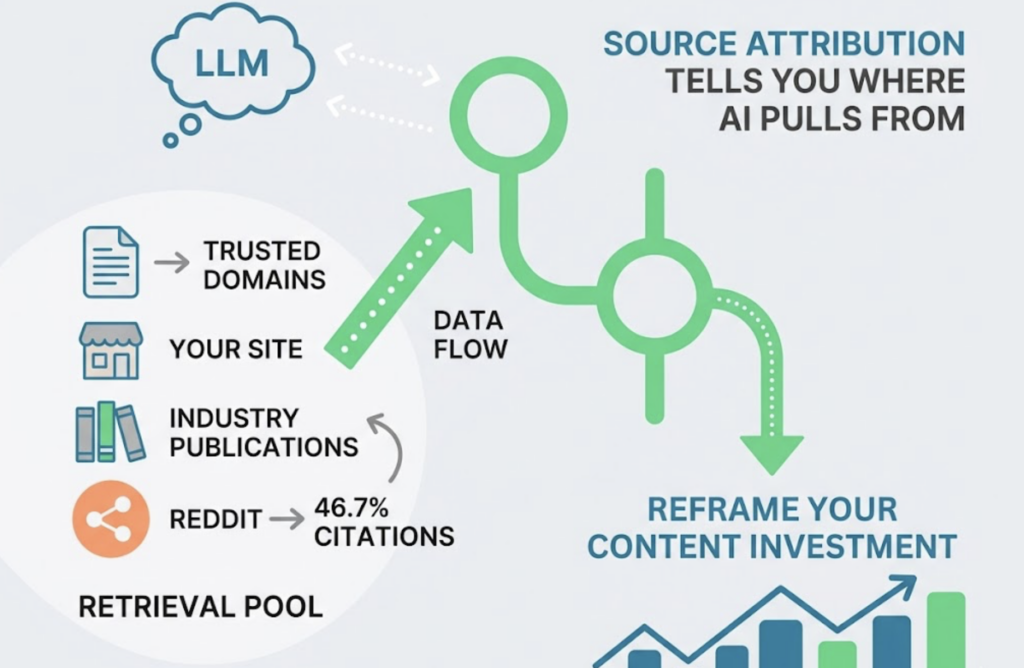

Source: Which domains the AI is citing when it mentions you

LLMs don’t generate information from nothing. They pull from a

retrieval pool of trusted domains. Source attribution tells you

whether the AI is pulling from your own site, industry publications,

or community platforms. Perplexity, for instance, draws

46.7% of its top citations from Reddit. That single data point

completely reframes where your content investment should go.
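
Source attribution of this kind comes down to tallying the domains behind each cited URL. A minimal sketch, assuming you have already collected the citation URLs from a set of responses (the sample URLs and helper names are made up for illustration):

```python
from collections import Counter
from urllib.parse import urlparse

def citation_domains(cited_urls):
    """Count citations per domain, treating 'www.' hosts as the bare domain."""
    counts = Counter()
    for url in cited_urls:
        host = urlparse(url).netloc.lower()
        if host.startswith("www."):
            host = host[4:]
        if host:
            counts[host] += 1
    return counts

def domain_share(counts):
    """Convert raw counts into each domain's share of all citations."""
    total = sum(counts.values())
    return {domain: n / total for domain, n in counts.items()} if total else {}

# Hypothetical citation URLs, for illustration only.
cited = [
    "https://www.reddit.com/r/sysadmin/comments/abc",
    "https://reddit.com/r/netsec/comments/xyz",
    "https://example.com/blog/post",
    "https://trade-journal.example/review",
]
counts = citation_domains(cited)
shares = domain_share(counts)
```

In this sample, `shares["reddit.com"]` is 0.5, which is the kind of concentration that would point content effort toward community platforms.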

Five metrics. Five different levers. A dashboard that only shows

mentions is leaving four of them dark.

Guest Posts Don’t Just Build Backlinks Anymore. They Seed AI Citation Pools.

For years, guest posting was primarily a PageRank play. Publish on

a high-DA site, earn a backlink, pass authority to your domain.

The strategy was Google-first, link-first, click-first.

Generative search has shifted the logic entirely.

AI models use RAG to build answers from sources they consider

authoritative. When Perplexity or ChatGPT retrieves content to

answer a query, it favors third-party earned media over your own

site for informational and comparative prompts. If your website

calls your product “the fastest in the category,” an AI may treat

it as a marketing claim. If a respected trade publication says the

same thing in a guest post, the AI is significantly more likely to

cite it as verified fact. Research into Generative Engine

Optimization shows that content with expert quotes and third-party

citations can boost brand visibility in AI responses by up to 40%.

This is exactly where AI discoverability guest post tracking tools

change the workflow for content teams. The process becomes specific

and measurable:

- Use Source Analysis to identify which third-party domains the AI is already citing for your target queries.
- Prioritize guest post outreach to those exact domains.
- After publishing, track whether your visibility score for those prompts improves and whether the AI is now citing that article directly.

Topify’s Source Analysis makes this loop

traceable. You’re not guessing which publications matter to AI

citation models. You look at the data, target accordingly, then

validate the result with the next tracking cycle.

The strategic reframe here is worth stating plainly: guest posts

are no longer just a backlink tactic. They’re a seeding mechanism

for AI citation pools. The off-site “billboard” effect matters

in a world where your goal is to be mentioned in the AI answer,

regardless of whether anyone clicks through to your site.

From Zero to Baseline: Your First AI Query Tracking System in 7 Days

The setup barrier is lower than most teams expect. Here’s a

structured five-step process that takes an AI query tracking system

from nothing to an operational baseline inside a week.

Day 1–2: Build a Prompt Library

Don’t start with a keyword list. Start with natural-language

prompts that reflect how real users talk to AI assistants. Industry

practice suggests a starting set of 25 to 100 high-value queries,

organized by intent: informational (“How does X work?”),

comparative (“Brand A vs. Brand B”), and transactional (“Best

solution for Y”). Topify’s High-Value Prompt Discovery automates

this step by surfacing queries already generating AI Overview

impressions for your category, so you’re not guessing which

prompts actually matter.
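
If you are building the library by hand, a small typed structure keeps the intent labels consistent. This is a sketch under assumed names (`Prompt`, `group_by_intent`) with made-up example prompts. It is not an export format from any tracking tool.

```python
from collections import defaultdict
from dataclasses import dataclass

INTENTS = ("informational", "comparative", "transactional")

@dataclass(frozen=True)
class Prompt:
    text: str
    intent: str

    def __post_init__(self):
        # Reject labels outside the three intent categories.
        if self.intent not in INTENTS:
            raise ValueError(f"unknown intent: {self.intent!r}")

def group_by_intent(prompts):
    """Return {intent: [prompt text, ...]} for a list of Prompt objects."""
    grouped = defaultdict(list)
    for prompt in prompts:
        grouped[prompt.intent].append(prompt.text)
    return dict(grouped)

# Hypothetical starter entries, one per intent.
library = [
    Prompt("How does zero-trust network access work?", "informational"),
    Prompt("Acme vs. Globex for endpoint protection", "comparative"),
    Prompt("Best endpoint protection for a 200-person company", "transactional"),
]
grouped = group_by_intent(library)
```

Validating the intent at creation time means a typo in a label fails immediately instead of silently creating a fourth category in your reports.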

Day 2–3: Deploy across platforms

Because ChatGPT and Perplexity overlap on only about 11% of cited domains,

single-platform tracking produces a systematically distorted

picture. Your baseline deployment should cover at minimum ChatGPT,

Gemini, Claude, and Perplexity. Each has different source

preferences and citation logic.

Day 3–4: Document your baseline

For each prompt in your library, record three things: Is your

brand mentioned? Where in the response does it appear? What’s the

sentiment? This becomes your “AI market share” snapshot — the

number every future content action gets measured against.

Day 4–5: Bind content actions to tracking nodes

Every tactic needs a measurement point. Publishing a guest post?

Flag the date and the target query set. Updating a product page?

Same process. This binding is what turns an AI query tracking

solution from a passive reporting tool into an optimization loop.
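
In practice, binding an action to a tracking node can be as simple as comparing the mention rate for the target prompt set before and after the flagged date. The sketch below assumes each observation is a (date, mentioned) pair. The dates and values are invented, and a before/after difference on its own does not prove the action caused the change.

```python
from datetime import date

def _rate(flags):
    """Share of True values, or None when there is nothing to average."""
    return sum(flags) / len(flags) if flags else None

def mention_rate_around(observations, action_date):
    """Return (rate before, rate on or after) the flagged action date."""
    before = [hit for day, hit in observations if day < action_date]
    after = [hit for day, hit in observations if day >= action_date]
    return _rate(before), _rate(after)

# Hypothetical: a guest post went live on 10 March for one prompt set.
guest_post_live = date(2025, 3, 10)
observations = [
    (date(2025, 3, 3), False),
    (date(2025, 3, 3), True),
    (date(2025, 3, 5), False),
    (date(2025, 3, 7), False),
    (date(2025, 3, 12), True),
    (date(2025, 3, 14), True),
    (date(2025, 3, 17), False),
    (date(2025, 3, 19), True),
]
before, after = mention_rate_around(observations, guest_post_live)
```

With this sample, the rate moves from 0.25 to 0.75. Tracking the same prompt set without any intervention gives you a sense of normal week-to-week noise to compare against.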

Day 6–7: Set KPIs and reporting cadence

Shift your team away from click-based KPIs. The metrics that matter

now: AI Mention Rate (what percentage of category queries mention

your brand), Primary Source Rate (how often your own content is the

top citation), and Share of Voice movement week over week. Weekly

reporting cycles work well for most teams. The goal isn’t data

volume — it’s detecting signal fast enough to act.
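
As a sketch of how these KPIs could be calculated from a week of logged results: each result is assumed to be a dict with the brands named in the response and the cited URLs in order. The field names, the sample domains, and the Share of Voice definition (your mentions divided by all tracked-brand mentions) are assumptions for illustration, since teams define these slightly differently.

```python
from urllib.parse import urlparse

def mention_rate(results, brand):
    """Share of responses that name `brand`."""
    if not results:
        return 0.0
    return sum(brand in r["brands"] for r in results) / len(results)

def primary_source_rate(results, own_domain):
    """Share of responses whose first citation is on `own_domain`."""
    if not results:
        return 0.0
    def is_own(url):
        host = urlparse(url).netloc.lower()
        return host == own_domain or host.endswith("." + own_domain)
    top_citations = [r["citations"][0] for r in results if r["citations"]]
    return sum(is_own(url) for url in top_citations) / len(results)

def share_of_voice(results, brand):
    """`brand` mentions as a share of all tracked-brand mentions."""
    total = sum(len(r["brands"]) for r in results)
    ours = sum(brand in r["brands"] for r in results)
    return ours / total if total else 0.0

# Hypothetical weekly snapshots.
last_week = [
    {"brands": ["Globex"], "citations": ["https://globex.example/guide"]},
    {"brands": ["Acme", "Globex"],
     "citations": ["https://news.example/review", "https://acme.example/pricing"]},
]
this_week = [
    {"brands": ["Acme", "Globex"], "citations": ["https://www.acme.example/docs"]},
    {"brands": ["Acme"], "citations": []},
]
sov_change = share_of_voice(this_week, "Acme") - share_of_voice(last_week, "Acme")
```

A positive `sov_change` means your brand took a larger share of tracked mentions than the week before, which is the movement the weekly report should highlight.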

4 Gaps Most AI Query Tracking Platforms Won’t Tell You About

87% of enterprises plan to increase their AI visibility budgets.

A lot of that spending is about to go toward tools that

weren’t built for the job.

Legacy SEO platforms have started bolting on “AI features.” Most

of them are surface additions — a mention counter, maybe a

sentiment label — layered on infrastructure that wasn’t designed

for prompt-level tracking. Here’s what to check before committing

to any AI query tracking software.

Multi-platform coverage

A tool that only monitors ChatGPT is monitoring one slice of an

increasingly fragmented AI search landscape. A professional AI

query tracking platform needs real coverage across ChatGPT,

Gemini, Claude, and Perplexity at minimum. Each platform has

different source preferences and different brand treatment patterns.

Tracking one is not a proxy for the others.

Prompt-level granularity

Aggregate mention volume isn’t actionable. You need to know which

specific prompt triggered the mention, what narrative surrounded

it, and whether the response changed when the query was rephrased.

Tools that only surface total mention counts give you the illusion

of intelligence without the data to act on.

Source URL diagnosis

The most operationally useful feature in any AI query tracking

tool is the ability to trace citations back to specific domains

and URLs. Topify integrates with Google Search Console data to

surface query-URL pairs — showing exactly which pages on your

site or on external sites are triggering AI mentions. That’s the

input your content and PR teams actually need to prioritize work.

Real-time competitive benchmarking

In zero-click AI search, competitive visibility is the new keyword

difficulty. Your AI query tracking platform should show where

rivals hold narrative dominance — for example, consistently

appearing as the “easiest to implement” option in comparison

prompts — so your team can identify positioning gaps and address

them directly.

Here’s how the current market compares across these four requirements:

| Platform | Best For | Core Advantage | Price |
|---|---|---|---|
| Topify | In-house teams & agencies | GSC integration + Source URL diagnosis + multi-platform tracking | From $99/mo |
| BrightEdge Catalyst | Enterprise SEO | Executive-ready governance reporting | Custom |
| Authoritas | Agencies / SaaS | UI-crawled tracking for real-world accuracy | Credit-based |
| Scrunch AI | Growth teams | Persona-based tracking across 7+ platforms | $300+/mo |
| GetMint | PR / Reputation | Source diagnosis for outdated citations | €99+/mo |

The practical decision is straightforward. If you need prompt-level

granularity, source attribution, and competitive benchmarking in a

single AI query tracking dashboard without enterprise-tier pricing,

Topify covers what most of the market doesn’t.

Conclusion

Google rankings and AI visibility have decoupled. With AI search

traffic up nearly 800% over two years and roughly 60% of queries

ending without a click, your brand’s real exposure increasingly

lives inside AI-generated narratives that traditional analytics

can’t measure.

The starting point is smaller than it sounds. Pick 25 to 50

high-value prompts in your category. Run them across ChatGPT,

Gemini, Claude, and Perplexity. Document what the AI says about you,

where you appear, and what it’s citing. That baseline is the

foundation everything else is built on.

Get started with Topify to run that

first audit. The High-Value Prompt Discovery feature handles

most of the prompt identification automatically, so you’re not

guessing which queries matter.

FAQ

Q1: What’s the difference between AI query tracking and traditional keyword rank tracking?

A: Traditional keyword tracking measures the numerical position of a URL on a search results page. AI query tracking measures how often a brand appears in generated answers, where it sits within the narrative, how the AI describes it, and which sources the AI is citing. The unit of analysis shifts from “keyword to rank” to “prompt to generated narrative to brand mention.”

Q2: Which AI platforms should I include in my AI query tracking system?

A: At minimum: ChatGPT, Gemini, Claude, and Perplexity. Each uses different source preferences: Perplexity draws nearly half its top citations from Reddit, while ChatGPT leans more on brand domains and established publications. Research shows only an 11% overlap between the domains ChatGPT and Perplexity cite for the same queries, so single-platform tracking gives you a misleading picture.

Q3: How do guest posts improve AI discoverability, and how do I measure the impact?

A: AI models use RAG to pull facts from third-party domains they consider authoritative. A guest post on a high-authority industry site places your brand’s claims inside that citation pool. To measure the impact, use Source Analysis to first identify which domains the AI is already citing for your target queries, publish on those domains, then track whether your visibility score for those prompts increases in the following weeks.

Q4: How many AI queries should I track when starting out?

A: 25 to 100 prompts is the recommended range for an initial prompt library. Organize them by three intent categories: informational, comparative, and transactional. This gives you a meaningful baseline without the data noise of tracking hundreds of long-tail variations simultaneously. You can always expand the library once you’ve established your first baseline.