Google’s AI Overviews follows a completely different set of rules than traditional search rankings. Here’s what actually determines whether your brand gets cited, and what to do about it.

You open an incognito window, type your target query, and see an AI Overview at the top of the page. It’s confident. It’s thorough. It cites four sources.

Your brand isn’t one of them. You’re ranking #1 right below it.

This isn’t an edge case anymore. It’s the new default, and it’s happening to brands across every category. The gap between organic ranking and AI citation is no longer a rounding error; it’s a structural split that’s reshaping where discovery actually happens.

AI Overviews Isn’t a Ranking Feature. It’s a Citation Engine.

Most SEO teams treat AI Overviews like an extension of the standard results page. It’s not. The underlying logic is fundamentally different.

Traditional organic ranking evaluates documents on authority signals: backlinks, user engagement, keyword relevance, and domain trust accumulated over years. Those signals still determine where you land in the ten blue links. But AI Overviews doesn’t simply pick its sources from the ten blue links. It runs its own retrieval pipeline.

The system uses Retrieval-Augmented Generation (RAG), powered by Google’s Gemini models. It retrieves hundreds of candidate documents, chunks them into 150- to 300-word segments, and selects sources based on structural extractability and factual density, not ranking position.
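To see what chunk-level parsing means for a page, here is a minimal sketch of fixed-size word chunking. It is not Google’s actual pipeline, whose internals are not public; the function name `chunk_words` and the plain word-count split are assumptions chosen for illustration, and production systems may split on headings or sentences instead.

```python
def chunk_words(text: str, chunk_size: int = 300) -> list[str]:
    """Split text into consecutive chunks of at most chunk_size words.

    Hypothetical helper: a plain word-count split, used only to show
    how a long page turns into independent segments.
    """
    words = text.split()
    return [
        " ".join(words[i:i + chunk_size])
        for i in range(0, len(words), chunk_size)
    ]


# A 700-word page becomes three segments that are evaluated separately.
page = " ".join(f"w{i}" for i in range(700))
print([len(chunk.split()) for chunk in chunk_words(page)])  # → [300, 300, 100]
```

The practical point: each segment has to make sense without the paragraphs around it, because a retrieval step may see it in isolation.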

The numbers confirm how far citation selection has drifted from ranking position. In early 2024, roughly 75% to 76% of AI Overview citations came from pages in the top 10 organic results. By early 2026, following the Gemini 3 rollout, that overlap had collapsed to between 17% and 38%. A brand ranking #1 today is cited in AI Overviews only 33% of the time.

Ranking first means you won the relevance contest. It doesn’t mean the AI trusts your content enough to quote it.
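You can measure the same overlap for your own queries. The sketch below assumes you have already collected, by hand or with a tracking tool, the URLs cited in an AI Overview and the top 10 organic URLs for the same query; the function name `top10_overlap` and the example URLs are hypothetical.

```python
def top10_overlap(cited_urls: list[str], organic_top10: list[str]) -> float:
    """Share of AI Overview citations that also appear in the organic top 10."""
    cited = set(cited_urls)
    if not cited:
        return 0.0
    return len(cited & set(organic_top10)) / len(cited)


# Hypothetical data: four cited sources, only one of them ranks in the top 10.
cited = ["a.example/guide", "b.example/thread", "c.example/report", "d.example/faq"]
top10 = ["a.example/guide", "e.example/page", "f.example/page"]
print(top10_overlap(cited, top10))  # → 0.25
```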

4 Real Reasons Google’s AI Skips Your Content

The exclusion is rarely random. There are four structural patterns that block high-ranking content from being cited.

1. Your answer is buried.

AI systems don’t read your article from top to bottom. They parse it in chunks. If your core answer doesn’t appear in the first 20% to 30% of the page, the retrieval system may skip the page entirely. Data from CXL shows that 55% of AI citations come from the top 30% of a page, while only 21% come from the bottom 40%.

A lengthy introduction, a brand story, or a “why this matters” warm-up is invisible to the extraction layer.
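A rough way to test for a buried answer is to check how far down the page your core answer first appears. This is a sketch under simple assumptions: position is measured in characters of plain text, the 30% threshold is taken from the figures above, and the function names are hypothetical.

```python
from typing import Optional


def answer_position(page_text: str, answer_snippet: str) -> Optional[float]:
    """Return where the snippet starts as a fraction of the page (0.0 = top).

    Returns None when the snippet does not occur in the page at all.
    """
    haystack = page_text.lower()
    index = haystack.find(answer_snippet.lower())
    if index == -1 or not haystack:
        return None
    return index / len(haystack)


def is_answer_buried(page_text: str, answer_snippet: str, threshold: float = 0.30) -> bool:
    """True when the answer is missing or starts below the threshold."""
    position = answer_position(page_text, answer_snippet)
    return position is None or position > threshold


answer = "The answer is 42."
print(is_answer_buried(answer + " filler" * 100, answer))  # → False
print(is_answer_buried("intro " * 50 + answer, answer))    # → True
```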

2. Your E-E-A-T signals aren’t machine-readable.

In traditional SEO, E-E-A-T is a relative signal. In AI retrieval, it’s a binary filter. Gemini’s models are designed to avoid hallucinations, so they heavily favor sources with computationally verifiable trust: author credentials with linked professional profiles, original data with quantitative findings, and active entity presence in Google’s Knowledge Graph.

Research from Wellows found that 96% of AI Overview citations originate from sources with strong, machine-readable E-E-A-T signals. A page with a generic “Editorial Team” byline, even at Rank #1, will often lose to a Rank #7 page where the author is a named expert with a verifiable LinkedIn profile.

Proper author metadata alone correlates with a 40% increase in citation frequency.
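In practice, machine-readable authorship is usually expressed as schema.org markup in JSON-LD. The sketch below builds an Article object with a nested Person author and wraps it in a script tag. The author name, job title, profile URL, headline, and date are placeholders, and the helper names are hypothetical; only the schema.org types and properties (`Person`, `Article`, `jobTitle`, `sameAs`, `datePublished`) are real vocabulary.

```python
import json


def person_jsonld(name: str, job_title: str, profile_urls: list[str]) -> dict:
    """Describe an author as a schema.org Person with linked profiles."""
    return {
        "@type": "Person",
        "name": name,
        "jobTitle": job_title,
        "sameAs": list(profile_urls),  # external profiles that identify the same person
    }


def article_jsonld(headline: str, author: dict, date_published: str) -> dict:
    """Describe a page as a schema.org Article with a named author."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "datePublished": date_published,
        "author": author,
    }


def to_script_tag(data: dict) -> str:
    """Wrap JSON-LD in the script tag that goes into the page's HTML."""
    return '<script type="application/ld+json">\n' + json.dumps(data, indent=2) + "\n</script>"


# Placeholder values for illustration only.
author = person_jsonld("Jane Doe", "Head of Search", ["https://www.linkedin.com/in/example"])
print(to_script_tag(article_jsonld("Example headline", author, "2026-01-15")))
```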

3. Your brand only vouches for itself.

The AI uses a process called “Query Fan-Out.” When it processes a user’s search, it generates multiple sub-queries to cross-validate information. If your brand appears in answers to the main query but not in the sub-queries, and if no third-party sources independently mention you, the model treats your authority as unconfirmed.

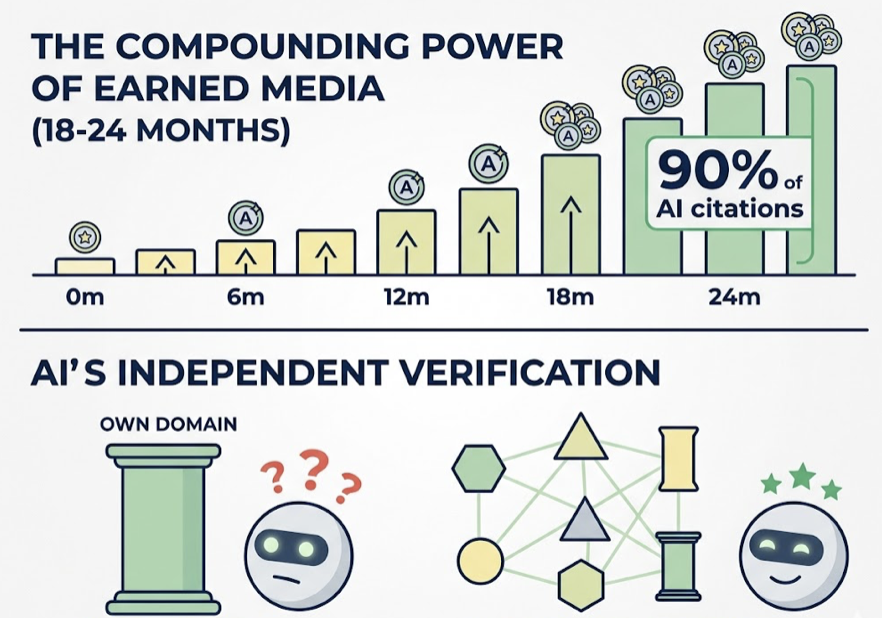

Earned media placements drive 90% of AI citations, and their effect compounds over 18 to 24 months per placement. A brand that appears exclusively on its own domain, regardless of how authoritative that domain is, presents an entity that AI can’t independently verify.

4. You’re missing the structured data layer.

AI synthesis engines prefer content that explicitly defines its own structure. FAQPage and HowTo schema markup deliver a 73% selection boost for AI Overview inclusion. Without it, the model struggles to separate your core answer from your navigation, your disclaimers, and your CTA copy. Sites without Organization, Person, and Article schema are essentially anonymous to the retrieval pipeline.
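For reference, FAQPage markup is a list of Question objects, each with an accepted Answer. The sketch below generates that JSON-LD from question-and-answer pairs; the function name `faq_jsonld` is hypothetical, and the sample pair is taken from this article’s own FAQ.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> dict:
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


faq = faq_jsonld([
    ("Does ranking #1 on Google help with AI Overviews?", "It helps, but not reliably."),
])
print(json.dumps(faq, indent=2))
```

The output goes into a `<script type="application/ld+json">` tag on the page, and the marked-up questions and answers should match the visible text.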

What Google Actually Cites (and Why It Surprises People)

If you study which sources AI Overviews consistently pulls from, a pattern emerges that most brand teams don’t expect.

Reddit accounts for 21% of all AI Overview citations. Community forums, industry reports, and independently published data appear at rates that far exceed their organic rankings. These sources aren’t winning because of domain authority. They’re winning because they provide structured, first-person answers to specific questions, exactly what a synthesis engine needs.

Google’s model favors three content types above all others: direct answer passages (50 to 70 words that resolve a query without requiring additional context), original quantitative data with cited sources, and third-party corroboration that validates what a brand claims about itself.

The irony is that your brand’s most polished content, the well-written, narrative-driven pillar pages, is often the hardest for AI to cite. It reads beautifully and extracts poorly.

Are You Being Excluded, or Just Outranked?

These are two completely different problems, and most teams are measuring only one of them.

Organic ranking tells you where you sit relative to other URLs on a given keyword. It’s a graded scale (positions 1 through 10) that shifts gradually. AI Overview visibility is binary: you’re cited or you’re not. And it changes fast. Research from Authoritas indicates that 70% of AI Overview citations change within two to three months.

You can hold steady at Rank #1 for six months while your AI citation rate drops from 40% to 0%. Standard rank tracking won’t catch it. You’ll see stable “visibility” in your dashboard while losing the top of the page to a synthesis that doesn’t include you.
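Tracking this does not need to be elaborate. As a minimal sketch, assume you log one observation per query check: the month and the set of domains cited in the AI Overview. The data shape, the function name `citation_rate_by_month`, and the example domains are assumptions for illustration.

```python
from collections import defaultdict


def citation_rate_by_month(observations, brand_domain: str) -> dict:
    """Compute the share of checks per month in which the brand was cited.

    observations: iterable of (month, cited_domains) pairs, one per query check.
    """
    checks = defaultdict(int)
    hits = defaultdict(int)
    for month, cited_domains in observations:
        checks[month] += 1
        if brand_domain in cited_domains:
            hits[month] += 1
    return {month: hits[month] / checks[month] for month in sorted(checks)}


# Hypothetical log: cited in 2 of 5 checks in January, 0 of 5 in June.
log = (
    [("2026-01", {"brand.example", "forum.example"})] * 2
    + [("2026-01", {"forum.example"})] * 3
    + [("2026-06", {"forum.example", "report.example"})] * 5
)
print(citation_rate_by_month(log, "brand.example"))  # → {'2026-01': 0.4, '2026-06': 0.0}
```

A rank tracker would show the same position for both months; only the citation log exposes the drop.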

Only 16% of large U.S. brands currently track AI search performance in any systematic way. The remaining 84% are navigating with a blind spot that’s growing faster than they realize.

Tools like Topify are built specifically for this gap. Its Visibility Tracking monitors how frequently your brand appears in AI answers across ChatGPT, Gemini, Perplexity, and AI Overviews. The Position Tracking feature shows where you rank relative to competitors inside those citations, not in the blue links beneath them. And Source Analysis identifies which third-party domains the AI is pulling from when it talks about your category, so you know exactly where your citation footprint is thin.

The CTR math makes this urgent. When an AI Overview is present, organic click-through rates collapse from 1.76% to 0.61%, a drop of more than 60%. But brands that do appear inside the AI Overview earn 35% more organic clicks and 91% more paid clicks than those excluded. The funnel isn’t disappearing. It’s being filtered by the AI before users ever see your link.

Building Content That AI Overviews Actually Cites

The structural fixes aren’t theoretical. They’re specific and implementable.

Lead with the answer. Every page targeting an informational query should open with a 50- to 70-word paragraph that directly resolves the query. No preamble, no framing, no “in this article we’ll explore.” The AI needs the answer in the first scroll, not the fifth.

Restructure headings as questions. H2 and H3 headings phrased as direct questions (e.g., “What does AI Overviews look for in a source?”) create retrieval-ready surfaces. Follow each with a one- or two-sentence answer before expanding.

Make authorship verifiable. Every article needs a named author with an active, indexed professional profile. Add Person schema with credentials. If your current content uses collective bylines, that’s the first thing to fix.

Implement FAQPage schema. It’s the single highest-leverage technical change for AI Overview inclusion, delivering a 73% selection boost. It doesn’t require a redesign. It requires a schema implementation.

Build the off-site citation network. Publish original data that other sites will reference. Secure PR placements in industry publications. Contribute to relevant Reddit threads with substantive answers. Even five to ten high-authority placements in six months can trigger the entity authority threshold that unlocks consistent citation.
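The on-page items in this checklist are easy to audit in bulk. The sketch below checks two of them: whether the opening paragraph falls in the 50- to 70-word range, and what share of headings are phrased as questions. It assumes you have already extracted the lead paragraph and the heading texts from the page; the function name `audit_page` and the returned keys are hypothetical.

```python
def audit_page(lead_paragraph: str, headings: list[str],
               min_words: int = 50, max_words: int = 70) -> dict:
    """Check the lead-answer length and the share of question-style headings."""
    lead_words = len(lead_paragraph.split())
    question_headings = [h for h in headings if h.strip().endswith("?")]
    share = len(question_headings) / len(headings) if headings else 0.0
    return {
        "lead_words": lead_words,
        "lead_in_range": min_words <= lead_words <= max_words,
        "question_heading_share": share,
    }


# Hypothetical page: a 60-word lead paragraph and two headings.
report = audit_page(
    " ".join(["word"] * 60),
    ["What does AI Overviews look for in a source?", "Conclusion"],
)
print(report)
# → {'lead_words': 60, 'lead_in_range': True, 'question_heading_share': 0.5}
```

A word count only confirms length. Whether the paragraph actually resolves the query still needs a human read.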

AI Overviews Optimization Requires Ongoing Monitoring

One round of optimization isn’t enough. AI Overview citations are volatile by design.

The Gemini 3 update introduced a 32% increase in the number of source URLs per response, which means Google is reaching deeper into the index than before. Smaller, more specialized brands now have real opportunities to out-cite larger incumbents who rely on broad, generic content. But that window requires you to know when you’re in and when you’ve been dropped.

Content published within the last 90 days receives preferential treatment in citation selection. A static page that ranked #1 three years ago will often lose its citation to a fresher Reddit thread or an updated industry report. Freshness isn’t a bonus factor anymore; it’s a maintenance requirement.
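If freshness is a maintenance requirement, it helps to have a list of pages that have aged out of the window. This sketch assumes you can export each page’s last substantive update date, for example from your CMS; the 90-day default comes from the figure above, and the function name `stale_pages` and the example paths are hypothetical.

```python
from datetime import date, timedelta


def stale_pages(last_updated: dict[str, date], today: date, max_age_days: int = 90) -> list[str]:
    """Return the URLs whose last update is older than max_age_days."""
    cutoff = today - timedelta(days=max_age_days)
    return sorted(url for url, updated in last_updated.items() if updated < cutoff)


# Hypothetical inventory checked on 1 June 2026.
pages = {
    "/guide-to-ai-overviews": date(2026, 5, 1),
    "/pillar-page": date(2023, 1, 1),
}
print(stale_pages(pages, today=date(2026, 6, 1)))  # → ['/pillar-page']
```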

Topify’s Competitor Monitoring tracks which brands are being cited in AI answers across your category, and how their citation share changes over time. That visibility matters because what gets the AI to cite you today may not be sufficient in 60 days. The optimization cycle for AI Overviews is closer to a content operations workflow than a traditional SEO audit.

By late 2026, AI Overviews are projected to appear on 70% to 80% of all searches. The brands that treat this as a ranking problem will keep optimizing for a metric that no longer controls the most visible part of the page.

Conclusion

Ranking #1 is still worth doing. It’s just not the same as being cited.

AI Overviews operates as a citation engine with its own criteria: structural extractability, machine-readable authority, third-party validation, and content freshness. The overlap with traditional ranking signals is shrinking fast, from 76% in 2024 to as low as 17% in 2026.

The brands that adapt early will hold a compounding advantage. Citation placements build entity authority that lasts 18 to 24 months. The brands that wait will find the gap harder to close as AI summaries become the default answer surface for most searches.

The question isn’t whether AI Overviews matters for your category. It’s whether you’ll know when you’re in it or when you’ve been quietly removed.

FAQ

Does ranking #1 on Google help with AI Overviews?

It helps, but not reliably. While 92% of AI Overviews link to at least one domain in the organic top 10, a Rank #1 page is only cited 33% of the time. The correlation between ranking position and AI citation is declining as Google’s models prioritize structural extractability and consensus signals.

How often does Google update AI Overviews citations?

More frequently than most teams expect. Research from Authoritas indicates that 70% of AI Overview citations change within two to three months. Unlike organic rankings that drift gradually, AI citations are binary: a source is either in the trusted set or removed entirely in a single update cycle.

Can small brands compete in AI Overviews against big players?

Yes, and they often win. Smaller, specialized brands frequently achieve disproportionate AI visibility because they provide more granular, specific answers than broad enterprise sites. The Gemini 3 update increased the number of sources per AI response by 32%, reaching deeper into the index where specialized content lives.

What’s the fastest way to get cited in AI Overviews?

Restructure your highest-traffic pages with an “Answer-First” format: a direct 50- to 70-word answer at the top of the page, followed by FAQPage and Article schema implementation. Pair that with five to ten high-authority PR placements to build the off-site citation footprint. This combination delivers the highest measurable increase in citation probability in the shortest timeframe.