Someone searches “best project management tool for remote teams” on ChatGPT. The response names three products. Yours isn’t one of them.

You don’t know this happened. Your competitor does.

That’s the gap most brands are operating in right now. Traditional tools such as GA4 and Google Search Console only track what happens after someone arrives at your site. They can’t see the thousands of moments where AI shapes a buyer’s perception before any click occurs. Brand mentions in AI answers correlate three times more strongly with AI visibility than traditional backlink profiles do. Yet most teams have no system to track them.

This guide breaks down the five metrics that tell you whether your brand is actually winning in AI search — and what to do when you’re not.

Why AI Citations Are a Different Beast Than Backlinks

Getting a backlink is simple enough to understand: another site links to yours, and that signals authority to Google. AI citations work differently, and the difference matters.

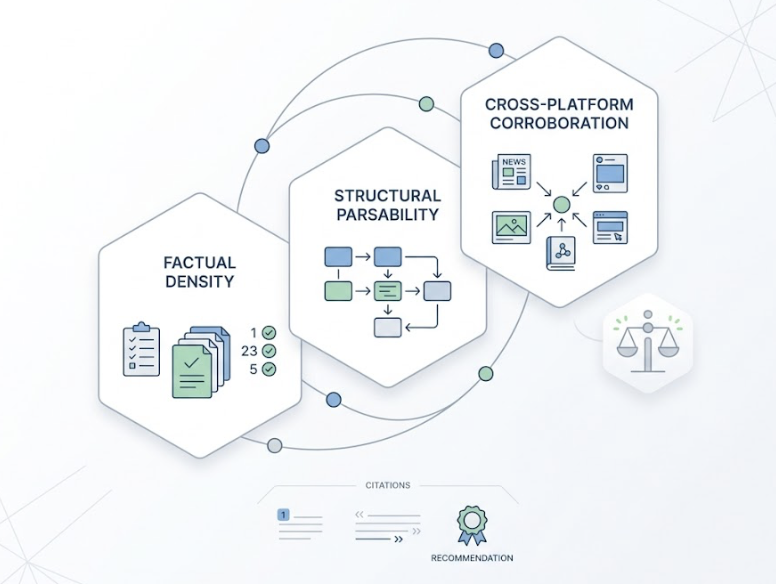

AI models don’t evaluate “who links to you.” They evaluate factual density, structural parsability, and cross-platform corroboration. A citation in a ChatGPT response might appear as a footnote, a passing mention, or a direct recommendation — and those are not the same thing commercially.

Here’s the thing: being cited doesn’t mean being recommended. Being recommended doesn’t mean being named first. And being named first on one platform tells you nothing about your position on another.

GA4 and Search Console track destination traffic. They don’t track the “share of model” — the instances where AI shaped purchase intent without generating a click. That’s where brands are bleeding visibility without realizing it.

| Feature | Traditional Backlinks | AI Citations |
|---|---|---|
| Primary Signal | Link Equity / PageRank | Entity Relevance / Factual Density |
| Control Mechanism | Site editors / Webmasters | LLM Retrieval Algorithms (RAG) |
| Visibility Format | Anchor text on a web page | Footnotes, summaries, direct mentions |
| User Intent | Navigation / Exploration | Information satisfaction / Recommendation |
| Success Metric | Click-Through Rate (CTR) | Visibility Rate / Share of Voice |
| Data Tracking | GA4 / Search Console | AI-specific monitoring (e.g., Topify) |

Metric 1: Visibility Rate — Are You Even in the Room?

Visibility Rate answers the most basic question: for the prompts your potential customers are typing into ChatGPT or Perplexity right now, how often does your brand appear?

The calculation is straightforward. If you test 100 prompts relevant to your category and your brand is mentioned in 30 of them, your Visibility Rate is 30%. But the number alone isn’t the insight — the benchmark is.

| Performance Tier | Visibility Rate | What It Means |
|---|---|---|
| Pre-Visibility | 0% – 15% | Invisible to AI search; high displacement risk |
| Developing | 15% – 30% | Cited occasionally; early traction |
| Category Presence | 30% – 50% | Regularly in the consideration set |
| Category Leadership | 50% – 75% | Recognized as top-tier in the niche |
| Category Dominance | 75% – 100% | The consensus answer for relevant queries |

Most mid-market brands fall in the 15–30% range. Most don’t know it.
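As a rough illustration of the calculation and the tiers above, here is a minimal Python sketch. The function names and the simple substring match are assumptions made for the example, not how any particular tool detects mentions. Because the tier ranges share their boundary values, the sketch also assumes each lower bound is inclusive.

```python
def visibility_rate(responses: list[str], brand: str) -> float:
    """Percentage of AI responses that mention the brand (case-insensitive)."""
    if not responses:
        return 0.0
    needle = brand.lower()
    hits = sum(1 for text in responses if needle in text.lower())
    return 100.0 * hits / len(responses)


# Tier floors taken from the table; treating each floor as inclusive is an assumption.
TIERS = [
    (75.0, "Category Dominance"),
    (50.0, "Category Leadership"),
    (30.0, "Category Presence"),
    (15.0, "Developing"),
    (0.0, "Pre-Visibility"),
]


def performance_tier(rate: float) -> str:
    """Map a Visibility Rate (0-100) to its performance tier."""
    for floor, name in TIERS:
        if rate >= floor:
            return name
    raise ValueError("rate must be between 0 and 100")


# Hypothetical data: 100 responses, 30 of which mention the made-up brand "Acme".
responses = ["Acme is a solid pick for remote teams."] * 30 + ["Try another tool."] * 70
rate = visibility_rate(responses, "Acme")
print(rate, performance_tier(rate))
```

A real prompt set would also need to handle brand aliases and misspellings, which a plain substring match will miss.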

What makes this metric harder to manage than search rankings is platform fragmentation. ChatGPT, Gemini, and Perplexity use different retrieval architectures — and the overlap of domains they cite for the same query can be as low as 11%. Your brand can rank well in ChatGPT and be essentially absent from Perplexity for identical queries.
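One way to quantify that fragmentation for your own prompts is to compare the sets of domains each platform cites. The sketch below uses Jaccard overlap (shared domains divided by all distinct domains). That choice of measure is an assumption for illustration, since the 11% figure above may have been computed differently, and the domain sets are invented sample data.

```python
def domain_overlap(a: set[str], b: set[str]) -> float:
    """Jaccard overlap of two sets of cited domains, as a percentage."""
    union = a | b
    if not union:
        return 0.0
    return 100.0 * len(a & b) / len(union)


# Hypothetical domains cited by two platforms for the same query.
platform_a = {"wikipedia.org", "reddit.com", "forbes.com"}
platform_b = {"reddit.com", "youtube.com", "gartner.com"}

print(domain_overlap(platform_a, platform_b))
```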

Topify Visibility Tracking monitors brand presence across these ecosystems simultaneously, providing a normalized score that shows where you’re strong and where the gaps are. Without cross-platform tracking, you’re making strategy decisions based on a fraction of the picture.

Metric 2: Citation Source — Who’s Vouching for You?

Here’s the number that surprises most brand teams: 85% of brand mentions in AI answers come from third-party domains. Only 15% come from a brand’s own website.

Your content strategy alone can’t carry your AI visibility. What matters is whether the right external sources are talking about you.
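To see how your own split compares with the 85/15 figure, you could classify the URLs cited alongside your brand by host. This is a minimal sketch using Python's standard `urllib.parse`. The function name, the sample URLs, and the `acme.example` domain are all hypothetical.

```python
from urllib.parse import urlparse


def third_party_share(cited_urls: list[str], own_domain: str) -> float:
    """Percentage of cited URLs hosted outside the brand's own domain."""
    hosts = [(urlparse(url).hostname or "") for url in cited_urls]
    if not hosts:
        return 0.0
    own = own_domain.lower()
    # Treat the bare domain and any subdomain (docs., www., ...) as first-party.
    third = sum(1 for h in hosts if h != own and not h.endswith("." + own))
    return 100.0 * third / len(hosts)


# Hypothetical citations collected from AI answers that mention the brand.
urls = [
    "https://www.reddit.com/r/projectmanagement/",
    "https://www.g2.com/categories/project-management",
    "https://acme.example/pricing",
    "https://docs.acme.example/getting-started",
]
print(third_party_share(urls, "acme.example"))
```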

AI models seek corroboration. The more a brand appears across trusted external sources, the more likely it is to be retrieved and recommended. The hierarchy looks roughly like this:

- Public forums: Reddit drives nearly 50% of top sources for Perplexity and features prominently in Gemini results

- Industry review platforms: G2, Capterra, and Yelp provide the social proof models use to validate recommendations

- Encyclopedia and news: Wikipedia and major publishers anchor ChatGPT’s general knowledge layer

The top cited domains for each platform in 2025 look like this:

| Rank | ChatGPT | Gemini | Perplexity |
|---|---|---|---|
| 1 | Wikipedia (7.8%) | Reddit (2.2%) | Reddit (6.6%) |
| 2 | Reddit (1.8%) | YouTube (1.9%) | YouTube (2.0%) |
| 3 | Forbes (1.1%) | Quora (1.5%) | Gartner (1.0%) |
| 4 | G2 (1.1%) | LinkedIn (1.3%) | LinkedIn (0.8%) |
| 5 | TechRadar (0.9%) | Gartner (0.7%) | Yelp (0.8%) |

The strategic question isn’t just “are we on these platforms.” It’s “which specific URLs are carrying our competitors’ visibility, and are we absent from those exact locations?”
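That question reduces to a simple set comparison once you have a log of which brands each cited URL mentions. The following is a sketch under that assumption. The data structure, the function name, and the example URLs and brand names are hypothetical.

```python
def citation_gaps(
    mentions: dict[str, set[str]], brand: str, competitors: set[str]
) -> list[str]:
    """URLs that mention at least one competitor but not the brand."""
    return sorted(
        url
        for url, brands in mentions.items()
        if brands & competitors and brand not in brands
    )


# Hypothetical log: cited URL -> brands named on that page.
mentions = {
    "https://example.com/best-pm-tools": {"RivalOne", "RivalTwo"},
    "https://example.org/remote-team-stack": {"Acme", "RivalOne"},
    "https://example.net/async-work-guide": {"RivalTwo"},
    "https://example.com/unrelated": set(),
}
print(citation_gaps(mentions, "Acme", {"RivalOne", "RivalTwo"}))
```

The resulting list is the set of locations where competitors are visible and you are not, which is the raw material for a PR target list.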

Topify Source Analysis reverse-engineers which domains are fueling competitor citations. That data becomes a PR and content roadmap — target the sources AI trusts, earn the mentions, and eventually those mentions surface in the retrieval layer.

Metric 3: Position in Answer — First Mention or Footnote?

Visibility Rate tells you how often you show up. Position tells you whether showing up is actually working.

In a conversational AI response, the first recommendation carries something researchers call “recommendation bias.” Up to 74% of users choose the AI’s first mentioned option. The difference between being named first and being listed third isn’t just aesthetic — it has a direct impact on whether anyone goes looking for your brand after that interaction.

A useful scoring framework for quantifying this:

| Placement Quality | Points | Description |
|---|---|---|
| Primary Citation with Link | 5 | Named first; includes a direct URL |
| Primary Citation (No Link) | 4 | Named first; no link |
| Secondary Mention with Link | 3 | Listed as an option; linked |
| Secondary Mention (No Link) | 2 | Listed as an option; not linked |
| Passing Mention | 1 | Brief mention, no recommendation |
| Absent | 0 | Brand doesn’t appear |

A brand could have a 40% Visibility Rate but average only 1.5 on this scale across the responses where it appears, meaning it’s consistently being listed as “others also include” rather than as the lead recommendation. That’s a very different strategic problem than low visibility, and it requires a different fix.
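A small sketch of how that average could be computed from the scoring table follows. The placement labels and function name are made up for the example. Whether "Absent" responses count toward the average is not specified above, so the sketch exposes both options through an assumed `include_absent` flag.

```python
from statistics import mean

# Points from the scoring table; the short keys are hypothetical labels.
PLACEMENT_POINTS = {
    "primary_link": 5,
    "primary_no_link": 4,
    "secondary_link": 3,
    "secondary_no_link": 2,
    "passing": 1,
    "absent": 0,
}


def average_position_score(placements: list[str], include_absent: bool = False) -> float:
    """Mean placement score, optionally counting responses where the brand is absent."""
    scores = [
        PLACEMENT_POINTS[p]
        for p in placements
        if include_absent or p != "absent"
    ]
    return float(mean(scores)) if scores else 0.0


# Hypothetical results for 10 prompts: the brand appears in 4 (40% Visibility Rate).
placements = ["passing", "secondary_no_link", "passing", "secondary_no_link"] + ["absent"] * 6

print(average_position_score(placements))                       # appearances only
print(average_position_score(placements, include_absent=True))  # all prompts
```

The two averages answer different questions: the first describes the role the AI gives you when you appear, while the second blends placement quality with how often you appear at all.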

Topify Position Tracking surfaces this distribution by brand, by competitor, and by prompt type — so you can see not just whether you’re being mentioned, but what kind of role the AI is casting you in.

Metric 4: Sentiment Score — What Is AI Actually Saying About You?

Being visible isn’t always a win. If the AI is consistently describing your brand as “an older option worth considering for smaller teams,” that’s visibility working against you.

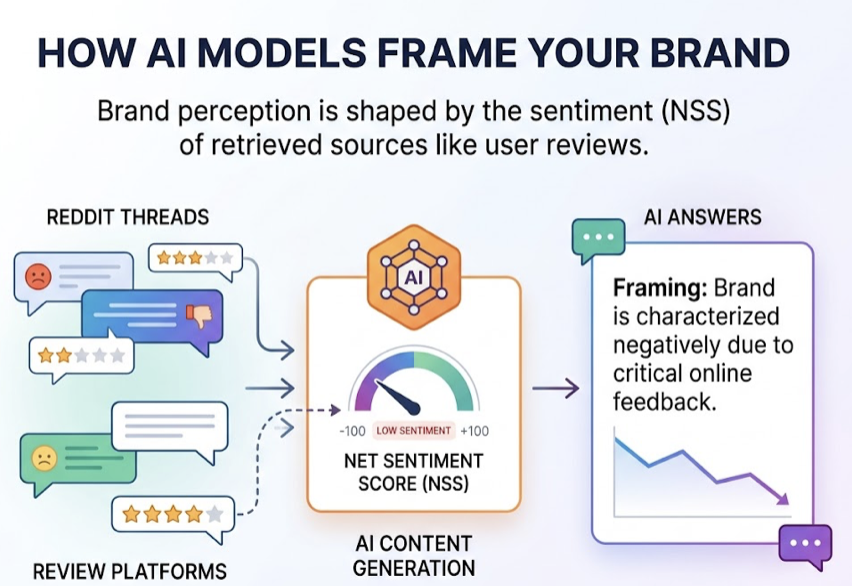

AI models characterize brands based on the sentiment of the sources they retrieve. If Reddit threads and review platforms are critical of your product, those attitudes tend to show up in how AI answers frame you. The Net Sentiment Score (NSS) captures this on a scale from -100 to +100.

The thresholds matter:

| NSS Range | Perception Status | Strategic Action |
|---|---|---|
| +60 to +100 | Brand Advocacy | Leverage for high-intent marketing |
| +20 to +60 | Healthy Reputation | Maintain trajectory; optimize for intent |
| 0 to +20 | Vulnerable / Neutral | Focus on earning “enthusiastic” mentions |
| Below 0 | Crisis Zone | Identify and correct negative source material |
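The exact NSS formula is not given here. A common way to get a score on a -100 to +100 scale is the share of positive mentions minus the share of negative mentions, and the sketch below assumes that definition. It is an illustration, not Topify's method, and it treats the shared boundary values (+20, +60) as belonging to the higher band.

```python
def net_sentiment_score(positive: int, negative: int, neutral: int = 0) -> float:
    """Assumed formula: (positive - negative) / total mentions, scaled to -100..+100."""
    total = positive + negative + neutral
    if total == 0:
        return 0.0
    return 100.0 * (positive - negative) / total


def perception_status(nss: float) -> str:
    """Map an NSS value to the perception bands in the table."""
    if nss >= 60:
        return "Brand Advocacy"
    if nss >= 20:
        return "Healthy Reputation"
    if nss >= 0:
        return "Vulnerable / Neutral"
    return "Crisis Zone"


# Hypothetical tally of how 100 AI answers framed the brand.
nss = net_sentiment_score(positive=50, negative=10, neutral=40)
print(nss, perception_status(nss))
```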

The hallucination category deserves specific attention. AI occasionally generates factually incorrect claims about brands — invented pricing, wrong founding dates, fabricated product limitations. These aren’t just reputation problems; they’re retrieval problems. The fix requires identifying which source material is feeding the error and correcting it upstream.

Topify Sentiment Analysis uses NLP to detect shifts in AI’s attitudinal tone toward your brand across platforms. A sudden NSS drop is often a leading indicator of a narrative forming on Reddit or review platforms — before it reaches traditional media.

Metric 5: CVR — Does Being Cited Actually Drive Action?

The prior four metrics measure what’s happening inside the AI response. CVR (Conversion Visibility Rate) asks whether any of it is translating to commercial outcomes.

AI-referred traffic is a different animal than traditional search traffic. A user who arrives at your site after reading a ChatGPT recommendation has already been through the research and comparison phase. The AI handled it. That changes the conversion math significantly:

- B2B SaaS: AI-referred visitors convert at 12–15%, vs. 2.5–4% for traditional organic search — roughly a 4x lift

- E-commerce: AI traffic converts 42% better than traditional paid search, with users spending 48% more time on-site

- Lead generation: AI-referred sign-up conversions have been measured at 1.66% vs. 0.15% for traditional organic — an 11x difference
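The multiples quoted above are plain ratios of conversion rates, which is easy to check. In this sketch the function name is hypothetical, and pairing the low ends and the high ends of the B2B SaaS ranges is an assumption about how "roughly 4x" was derived.

```python
def conversion_lift(ai_rate: float, baseline_rate: float) -> float:
    """How many times higher the AI-referred conversion rate is than the baseline."""
    if baseline_rate <= 0:
        raise ValueError("baseline_rate must be positive")
    return ai_rate / baseline_rate


# Lead generation: 1.66% vs. 0.15%
lead_gen = conversion_lift(1.66, 0.15)

# B2B SaaS: 12-15% vs. 2.5-4%, comparing like ends of each range
saas_low = conversion_lift(12.0, 2.5)
saas_high = conversion_lift(15.0, 4.0)

print(f"lead gen: {lead_gen:.2f}x, B2B SaaS: {saas_high:.2f}x to {saas_low:.2f}x")
```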

Not all prompts carry the same conversion potential, though. Prompt intent changes everything:

| Prompt Intent | Conversion Potential | What It Drives |
|---|---|---|
| Informational (“What is…”) | Low | Brand imprinting / Awareness |
| Comparison (“Brand X vs Y”) | Medium | Consideration / Validation |
| Transactional (“Best tool for…”) | High | Direct conversion / Purchase |

The challenge is that most of these interactions are “zero-click” — users don’t always visit your site after seeing you mentioned. Topify CVR correlates these invisible influence moments with Branded Search Lift, the measurable increase in users searching for your brand by name in the days following AI exposure.

That’s the closest proxy to attribution that currently exists for this channel.
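Branded Search Lift can be approximated as the percentage change in average daily branded searches between a baseline window and the window after AI exposure. The sketch below assumes that simple definition. The window lengths and the sample counts are invented, and a real analysis would also need to control for seasonality and other campaigns.

```python
from statistics import mean


def branded_search_lift(baseline_daily: list[int], post_daily: list[int]) -> float:
    """Percent change in mean daily branded searches, post-exposure vs. baseline."""
    if not baseline_daily or not post_daily:
        raise ValueError("both windows need at least one day of data")
    base = mean(baseline_daily)
    if base == 0:
        raise ValueError("baseline mean must be non-zero")
    return 100.0 * (mean(post_daily) - base) / base


# Hypothetical daily branded-search counts (for example, exported from Search Console).
baseline = [100, 100, 100]
after_exposure = [120, 130, 110]
print(branded_search_lift(baseline, after_exposure))
```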

These 5 Metrics Don’t Work in Isolation

Tracking each number separately misses the point. The value is in reading them together as a diagnostic system.

A high Visibility Rate with a low Sentiment Score means you’re visible, but the AI is saying something unfavorable. Fix the source material, not the visibility strategy. A strong Position Score on informational prompts with weak CVR suggests you’re winning awareness but not conversion-stage queries — the prompt library needs rebalancing toward transactional intent.

Here’s a practical operating framework:

| Metric | Check Frequency | Warning Threshold | Response |
|---|---|---|---|
| Visibility Rate | Weekly | Below 20% | Audit content for parsability and entity clarity |
| Citation Source | Monthly | Competitor share 2x yours | Target high-citation 3rd-party domains via PR |
| Position (APS) | Weekly | Avg score below 0.5 | Improve unique data points and information gain |
| Sentiment (NSS) | Daily | Score below 0 | Identify and correct negative source material |
| CVR / Branded Search | Monthly | Declining trend | Realign prompt library toward commercial intent |
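The framework translates naturally into a threshold check that can run on whatever numbers you already collect. The following is a sketch only. The `MetricSnapshot` fields are hypothetical names, and modeling "declining trend" as a negative period-over-period change is an assumption.

```python
from dataclasses import dataclass


@dataclass
class MetricSnapshot:
    visibility_rate: float            # percent, 0-100
    own_citation_share: float         # percent of tracked citations
    competitor_citation_share: float  # percent of tracked citations
    avg_position_score: float         # 0-5 scale
    net_sentiment_score: float        # -100 to +100
    branded_search_change: float      # percent change vs. prior period (assumed trend proxy)


def triggered_warnings(s: MetricSnapshot) -> list[str]:
    """Return the response for every warning threshold the snapshot crosses."""
    alerts = []
    if s.visibility_rate < 20:
        alerts.append("Visibility Rate: audit content for parsability and entity clarity")
    if s.competitor_citation_share >= 2 * s.own_citation_share:
        alerts.append("Citation Source: target high-citation third-party domains via PR")
    if s.avg_position_score < 0.5:
        alerts.append("Position: improve unique data points and information gain")
    if s.net_sentiment_score < 0:
        alerts.append("Sentiment: identify and correct negative source material")
    if s.branded_search_change < 0:
        alerts.append("CVR / Branded Search: realign prompt library toward commercial intent")
    return alerts


# Hypothetical snapshot: low visibility and a competitor with more than 2x the citation share.
snapshot = MetricSnapshot(18.0, 5.0, 12.0, 1.2, 25.0, 3.0)
for alert in triggered_warnings(snapshot):
    print(alert)
```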

The operational problem is that these signals live in different places — AI responses, review platforms, search trend data, traffic analytics. Topify consolidates them into a single dashboard, identifying specific “Citation Gaps” where your brand should appear but doesn’t, and providing a prioritized action list for content and PR teams.

Without that consolidation, most teams end up checking metrics inconsistently and reacting to problems weeks after they develop.

Conclusion

The three recommendation slots in a ChatGPT or Perplexity response are the new prime real estate of the internet. Most brands don’t know whether they’re in those slots or not — and for the ones that don’t know, the answer is usually “not often enough.”

Visibility Rate, Citation Source, Position, Sentiment, and CVR are the five numbers that tell you the truth. Track them together, act on the gaps, and you move from being indexed to being recommended.

The brands doing this now will be significantly harder to displace in six months. The ones waiting will be catching up.

FAQ

How often should I check my AI citation metrics?

Weekly for Visibility Rate and Position — AI models update frequently, and citation patterns can shift overnight after a model update. Sentiment should be monitored daily for enterprise brands, specifically to catch hallucinations or emerging negative narratives before they scale. Citation Source analysis is typically most useful on a monthly cadence, since the domain-level signals move more slowly.

Can I track AI citations without a paid tool?

You can do a rough version manually — run 20–50 prompts across ChatGPT, Gemini, and Perplexity once a week and log what you find. The problem is accuracy. AI responses are probabilistic; a single run of a prompt doesn’t represent what your audience is actually seeing. Paid tools like Topify iterate each prompt dozens of times across different models and IP locations to produce a statistically significant normalized score. Manual tracking is better than nothing, but it tends to give teams false confidence in incomplete data.
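To see why a single run is unreliable, it helps to put an uncertainty range around a manually measured mention rate. The sketch below uses the Wilson score interval at roughly 95% confidence. That is a standard statistical technique chosen for illustration, not a description of how any paid tool computes its scores.

```python
import math


def wilson_interval(hits: int, runs: int, z: float = 1.96) -> tuple[float, float]:
    """Approximate 95% Wilson score interval for the true mention rate (0-1)."""
    if runs <= 0:
        raise ValueError("runs must be positive")
    p = hits / runs
    denom = 1 + z * z / runs
    center = (p + z * z / (2 * runs)) / denom
    half = z * math.sqrt(p * (1 - p) / runs + z * z / (4 * runs * runs)) / denom
    return max(0.0, center - half), min(1.0, center + half)


# One run that happened to mention the brand vs. 30 mentions in 100 repeated runs.
single_low, single_high = wilson_interval(1, 1)
many_low, many_high = wilson_interval(30, 100)

print(f"1 of 1 runs:     {single_low:.0%} to {single_high:.0%}")
print(f"30 of 100 runs:  {many_low:.0%} to {many_high:.0%}")
```

The single-run interval spans most of the possible range, while repeated runs narrow it considerably, which is the practical argument for iterating each prompt many times.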

How is AI citation tracking different from traditional brand monitoring?

Social listening tracks what humans say to other humans — reviews, posts, comments. AI citation tracking measures what the machine says to potential buyers during the decision phase. A brand could be mentioned 10,000 times on social media; if those mentions aren’t being retrieved by AI models, the brand is invisible in the AI search funnel. The fix is also structurally different: improving AI visibility requires content optimization for parsability and earning corroborating mentions on high-weight domains — not community management.