You search your own brand name in ChatGPT. Your competitor appears. You don’t.

That’s not a fluke. It’s a signal — and it’s one most marketing teams don’t catch until the damage is already done. AI citation isn’t broken. The brand’s digital presence just isn’t giving the model a reason to mention it.

Here’s what’s actually happening, why it happens, and what to do about it.

AI Citation Is a Trust Competition, Not a Ranking Race

Traditional SEO is a popularity contest. You earn backlinks, optimize pages, chase positions. The winner gets clicks.

AI citation works differently. When ChatGPT, Perplexity, or Google’s AI Overviews synthesizes a response, it’s not picking the best-ranked page. It’s picking the most verifiable entity — the brand it trusts enough to put its credibility behind.

That distinction matters more than most teams realize.

A brand can rank on page one of Google and still be invisible in AI answers. The AI has already retrieved ten sources. It synthesizes three names. If your brand isn’t one of them, you don’t exist in that interaction — regardless of your domain authority.

This is what researchers call the shift from the “link economy” to the “citation economy.” The goal is no longer to drive a click. It’s to become part of the truth the AI delivers.

Your Official Website Isn’t Enough

Here’s the misconception that gets brands in trouble: a strong website doesn’t equal AI visibility.

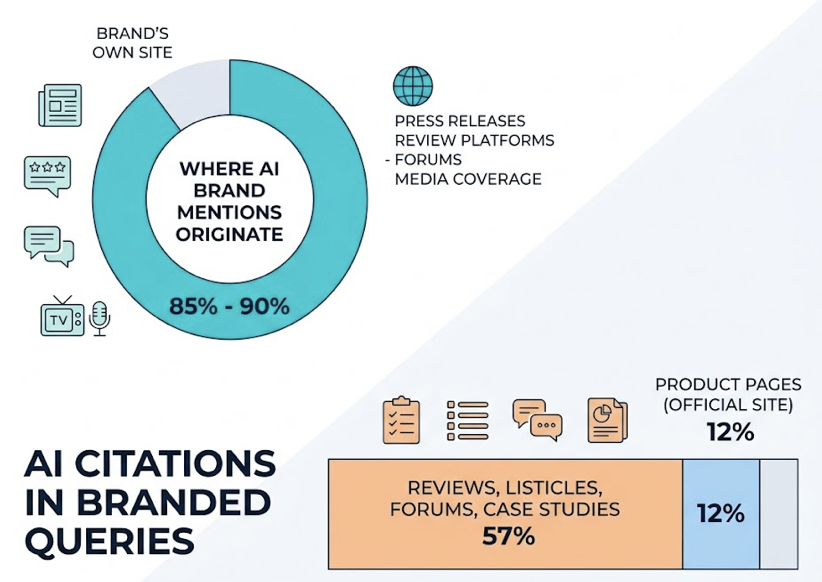

Research shows that 85% to 90% of AI brand mentions originate from external domains — press releases, review platforms, forums, and media coverage — not the brand’s own site. In branded queries specifically, reviews, listicles, forums, and case studies account for 57% of AI citations. Product pages on the official site capture roughly 12%.

AI models prefer third-party validation for the same reason customers do. If only you are saying you’re great, that’s marketing. If G2, Reddit, and TechRadar are independently saying it, that’s evidence.

Brands that rely entirely on owned channels are building a technically polished presence that AI actively discounts.

5 Reasons AI Drops Your Brand From Its Answers

Understanding the failure mode is the first step to fixing it. There are five specific signals that tell an AI to omit a brand.

1. Thin third-party coverage. If your brand has minimal presence in industry publications, no reviews on G2 or Yelp, and no mention in forum discussions on Reddit or Quora, the AI simply lacks enough external material to recommend you with confidence. It defaults to brands that have been cited extensively across the web.

2. Content dilution by competitors. Even if you’re searchable, your share of AI citations can quietly erode. If competitors are publishing more detailed comparisons, updated industry reports, and authoritative guides on your core topics, the model’s probability of mentioning you drops — without your SEO rankings moving at all. This “ecosystem drift” is invisible in traditional analytics.

3. Misalignment between your content and query intent. AI systems extract discrete chunks of content to satisfy specific questions. If your pages bury the answer behind a slow build-up, the retrieval-augmented generation (RAG) system that feeds the model may fail to extract a usable response. AI prefers front-loaded content, where the answer appears in the first 30% of the text. When a competitor’s page is more “extractable,” it gets cited instead.

4. Stale or low-influence citation sources. Not all mentions are equal. AI models lean on a “kingmaker” set of domains (Wikipedia, Reddit, Forbes, TechRadar) for their default recommendations. If your brand doesn’t appear on those platforms, or if the sites that do cite you are unmaintained or obscure, the model discounts those citations.

5. Outdated brand data. Freshness matters. Content updated within the last 30 days is cited up to 6 times more often than content older than 12 months. If your pricing, features, or positioning are stale across the web, the AI learns an outdated version of your brand. In fast-moving categories, citation priority can be lost in as little as 14 days without fresh signals.

The Gap Between Searchable and Citable

There’s a phrase that captures this problem well: “ghost citation.” Your content is trusted enough to be retrieved during the AI’s research phase. But your brand isn’t well-known enough in the right places to be named in the final response.

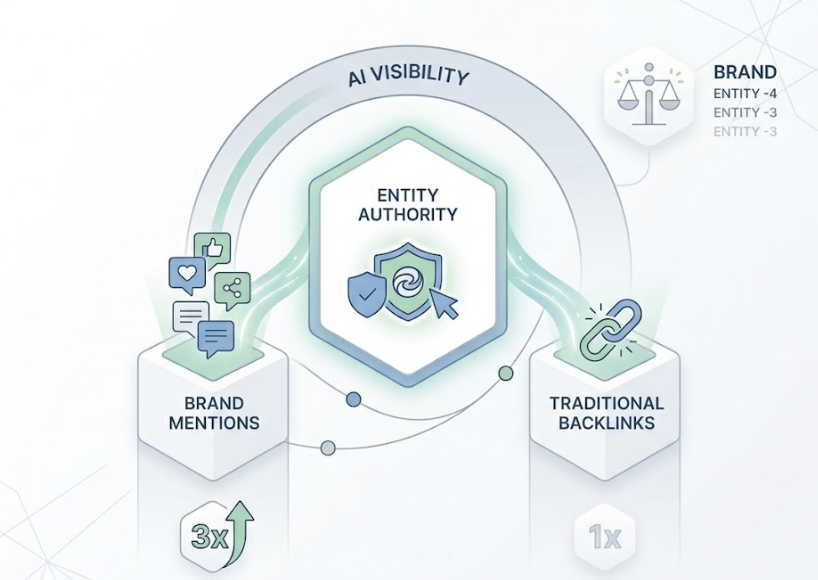

Being citable requires what researchers call “Entity Authority” — the clear, consistent recognition of your brand as a distinct entity across the web. That authority is built through brand web mentions, which correlate three times more strongly with AI visibility than traditional backlinks.

Reddit and Wikipedia alone account for over 66% of all LLM citations in certain categories. For B2B brands, consistent signals across LinkedIn, Crunchbase, and G2 are essential for entity recognition.

That’s the gap most brands still can’t see.

If you’re not measuring where AI citations for your category are coming from, you’re flying blind. And you can’t fix a problem you’re not measuring.

What Most Teams Are Still Measuring (and Why It’s Not Enough)

Traffic. Rankings. Click-through rates.

These are lagging indicators. By the time organic traffic drops from AI-driven queries, the brand has already lost citation authority. The model moved on weeks ago.

The industry has aligned around a different set of metrics for the AI era:

| Metric | What It Measures |
|---|---|
| Inclusion Rate | % of relevant prompts where your brand is explicitly mentioned |
| Citation Rate | % of AI responses that link to your owned assets as a source |
| AI Share of Voice | Your mention frequency vs. total competitor mentions |
| Sentiment Score | Whether the AI describes your brand positively, neutrally, or negatively |
| Position Index | Where you appear in the response (first-named vs. fifth-named) |
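
To make these definitions concrete, here is a minimal Python sketch of four of the five metrics. It assumes you have already collected AI answers and logged, for each one, which brands were named (in order) and which domains were linked. The `Response` record, the brand names, and the domains are hypothetical, not any vendor's schema. Two judgment calls are baked in: Share of Voice divides by all brand mentions, yours included, and Position Index is reported as a simple average rank. Sentiment Score is left out because it needs a classifier.

```python
from dataclasses import dataclass, field


@dataclass
class Response:
    """One AI answer to one prompt (hypothetical record shape)."""
    brands_in_order: list[str]  # brands named, in order of appearance
    cited_domains: list[str] = field(default_factory=list)  # domains linked as sources


def inclusion_rate(responses, brand):
    """Share of responses that name the brand at all."""
    if not responses:
        return 0.0
    hits = sum(1 for r in responses if brand in r.brands_in_order)
    return hits / len(responses)


def citation_rate(responses, owned_domain):
    """Share of responses that link to the brand's own domain."""
    if not responses:
        return 0.0
    hits = sum(1 for r in responses if owned_domain in r.cited_domains)
    return hits / len(responses)


def share_of_voice(responses, brand):
    """Brand mentions divided by all brand mentions (assumption: yours included)."""
    total = sum(len(r.brands_in_order) for r in responses)
    if total == 0:
        return 0.0
    ours = sum(r.brands_in_order.count(brand) for r in responses)
    return ours / total


def mean_position(responses, brand):
    """Average 1-based rank in responses that name the brand, or None if never named."""
    positions = [
        r.brands_in_order.index(brand) + 1
        for r in responses
        if brand in r.brands_in_order
    ]
    return sum(positions) / len(positions) if positions else None


# Made-up sample data: four answers collected for one category.
sample = [
    Response(["Acme", "Globex", "Initech"], ["g2.com", "acme.example"]),
    Response(["Globex", "Initech"], ["reddit.com"]),
    Response(["Globex", "Acme"], ["techradar.com"]),
    Response(["Initech"]),
]

print(inclusion_rate(sample, "Acme"))         # 0.5
print(citation_rate(sample, "acme.example"))  # 0.25
print(share_of_voice(sample, "Acme"))         # 0.25
print(mean_position(sample, "Acme"))          # 1.5
```

In this sample, "Acme" shows up in half the answers but earns only a quarter of all brand mentions, which is the kind of gap that traffic and ranking reports never surface.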

Topify tracks all five of these across ChatGPT, Gemini, Perplexity, and other major AI platforms. Its Source Analysis feature goes a layer deeper: it identifies which specific external domains the AI is currently citing for your target prompts — revealing exactly where your citation gaps are and which competitor pages the model treats as authoritative.

That’s the difference between knowing you’re invisible and knowing why.

How to Get Back Into AI Answers

Reclaiming AI citation is a multi-pronged effort that targets both what the model has learned from training and what it retrieves in real time.

Build citable third-party coverage. The fastest lever is earned media. Pursue guest posts, analyst inclusions in Gartner or Forrester reports, and quotes in industry publications. Actively build presence on G2, Capterra, and Trustpilot; brands with profiles on those platforms have a 3x higher citation probability. Foster discussions on Reddit and industry forums, which account for 11% of citations and are heavily weighted for human validation.

Optimize existing content for extractability. Lead with direct answers. Place the core response in the first 30% of every page. Replace vague statements with numerical data — this “statistics addition” approach can boost AI visibility by 40%. Use tables, bulleted lists, and clear Q&A sections. LLMs prefer atomic knowledge blocks that are easy to extract and cite.
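
A rough way to audit the 30% rule is to check where the key answer first appears in a page's text. The sketch below is an illustration, not how any AI system actually scores pages: it measures position by character offset, and the function names and sample copy are made up.

```python
from typing import Optional


def answer_position(text: str, answer: str) -> Optional[float]:
    """Return where `answer` first appears as a fraction of the text length.

    0.0 means the very start. Returns None if the answer is not found.
    """
    if not text:
        return None
    idx = text.lower().find(answer.lower())
    if idx == -1:
        return None
    return idx / len(text)


def is_front_loaded(text: str, answer: str, threshold: float = 0.30) -> bool:
    """True if the answer starts within the first `threshold` share of the text."""
    pos = answer_position(text, answer)
    return pos is not None and pos <= threshold


# Made-up page copy: same facts, different ordering.
filler = "Background detail. " * 20
front = "Acme costs $49 per month. " + filler
buried = filler + "Acme costs $49 per month."

print(is_front_loaded(front, "$49 per month"))   # True
print(is_front_loaded(buried, "$49 per month"))  # False
```

Running a check like this across your top pages gives you a quick list of the ones that make the reader, and the retriever, wait for the answer.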

Fill the competitive gaps your brand is missing. Test 20 to 30 high-value prompts relevant to your category. See who the AI recommends and which domains are driving those citations. If the AI is citing a specific listicle on TechRadar for a prompt you should own, that’s your next PR target.
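
If you log the source URLs the AI cites for each test prompt, a few lines of Python will show which domains keep coming up. This is a sketch under stated assumptions: the prompts and URLs are placeholders, and each domain is counted once per prompt so a single link-heavy answer can't dominate the tally.

```python
from collections import Counter
from urllib.parse import urlparse


def domain_of(url: str) -> str:
    """Extract the host from a URL and drop a leading 'www.'."""
    host = urlparse(url).hostname or ""
    return host[4:] if host.startswith("www.") else host


def top_cited_domains(citations_by_prompt, n=5):
    """Rank domains by the number of prompts whose answers cite them."""
    counts = Counter()
    for urls in citations_by_prompt.values():
        # A set counts each domain at most once per prompt.
        domains = {domain_of(u) for u in urls}
        domains.discard("")  # skip URLs with no parseable host
        counts.update(domains)
    return counts.most_common(n)


# Placeholder log: prompt -> URLs cited in the AI's answer.
citations = {
    "best crm for startups": [
        "https://www.techradar.com/best/crm",
        "https://www.g2.com/categories/crm",
    ],
    "acme vs globex": [
        "https://www.g2.com/compare/acme-vs-globex",
        "https://reddit.com/r/sales/comments/1",
        "https://www.g2.com/products/acme",
    ],
    "is acme worth it": [
        "https://www.reddit.com/r/saas/comments/2",
        "https://www.g2.com/products/acme/reviews",
    ],
}

for domain, prompts in top_cited_domains(citations, 3):
    print(domain, prompts)
```

The domains at the top of that list are the ones shaping answers in your category, so they are the first places to earn coverage.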

Topify’s One-Click Execution turns this from a research exercise into an action item. You define the goal; the platform identifies the prompt-level gaps and deploys the content strategy without requiring manual workflows.

Track It After You Fix It

Here’s a number worth knowing: 45.5% of AI citations change every time an AI Overview re-runs for the same query.

Citation recovery isn’t a project with a finish line. It’s a maintenance cadence. AI models are retrained periodically, and their retrieval indices can update daily. A brand can be cited this week and dropped next week if it stops generating fresh signals.
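
You can track this volatility for your own prompts by comparing the cited sources from two runs of the same query. The sketch below uses one possible definition of churn, the share of previously cited domains that no longer appear. That is an assumption and may not match how the 45.5% figure above was calculated. The domain lists are made up.

```python
def citation_churn(previous, current):
    """Share of previously cited domains that are missing from the current run."""
    prev_set = set(previous)
    if not prev_set:
        return 0.0
    dropped = prev_set - set(current)
    return len(dropped) / len(prev_set)


# Made-up example: the same prompt, run one week apart.
last_week = ["g2.com", "reddit.com", "techradar.com", "forbes.com"]
this_week = ["g2.com", "reddit.com", "capterra.com", "wikipedia.org"]

print(citation_churn(last_week, this_week))  # 0.5
```

A churn number that climbs for your most important prompts is an early signal that the sources behind the answer are shifting.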

The monitoring priorities look like this:

| Content Type | Frequency | Action |
|---|---|---|
| Product Pages | Monthly | Update pricing, specs, and schema |
| Data-Heavy Guides | Quarterly | Replace stats older than 12 months |
| Landing Pages | Bi-Monthly | Refresh intros, check internal link consistency |
| Foundational Explainers | Annually | Verify accuracy, update “Last Updated” date |
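
A cadence like this is easy to enforce with a small script that flags pages past their refresh window. The sketch below is illustrative only: the day counts are approximations of the table, "Bi-Monthly" is assumed to mean every two months (60 days), and the URLs and dates are invented.

```python
from datetime import date

# Maximum age in days before a page is due for refresh.
# Assumption: "Bi-Monthly" is read as every two months.
REFRESH_DAYS = {
    "Product Pages": 30,
    "Data-Heavy Guides": 90,
    "Landing Pages": 60,
    "Foundational Explainers": 365,
}


def pages_due(pages, today):
    """Return URLs whose last update is older than their content type allows.

    `pages` is a list of (url, content_type, last_updated) tuples.
    """
    due = []
    for url, content_type, last_updated in pages:
        age_days = (today - last_updated).days
        if age_days > REFRESH_DAYS[content_type]:
            due.append(url)
    return due


# Invented inventory for illustration.
inventory = [
    ("https://acme.example/pricing", "Product Pages", date(2025, 4, 1)),
    ("https://acme.example/benchmark-guide", "Data-Heavy Guides", date(2025, 4, 1)),
    ("https://acme.example/what-is-crm", "Foundational Explainers", date(2024, 1, 1)),
]

print(pages_due(inventory, today=date(2025, 6, 1)))
```

With this inventory, the pricing page (61 days old against a 30-day window) and the explainer (over a year old) are flagged, while the guide is still within its quarter.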

Using Topify’s Visibility Tracking and Competitor Monitoring, teams can watch for sentiment shifts (where mentions are trending negative), position changes (whether you’re first-named or fifth in a response), and new rivals entering the AI answer space for your category.

The goal isn’t to win once. It’s to stay in the retrieval set as the landscape shifts.

Conclusion

AI search doesn’t reward the most optimized brand. It rewards the most verifiable one.

If your brand has disappeared from AI citations — or never appeared in the first place — the cause is almost always the same: insufficient third-party coverage, misaligned content structure, stale data, or low presence on the platforms AI models actually trust.

The fix isn’t complicated. But it requires measuring the right things, targeting the right sources, and treating citation recovery as an ongoing discipline rather than a one-time task.

The brands that do this now are building a durable advantage. The ones that wait are losing ground to competitors who are already in the model’s answers.

FAQ

What’s the difference between AI citation and SEO ranking?

Traditional SEO focuses on ranking a URL in a list of links to generate clicks. AI citation focuses on your brand being mentioned and sourced within a synthesized answer. Visibility is measured by Inclusion Rate and AI Share of Voice, not organic position.

How long does it take to see changes in AI citations?

For models like Perplexity or Google AI Overviews, structured content improvements can influence citations within a few days to a few weeks as their indices refresh. Broader parametric authority — shaping what the model has learned in training — typically takes 6 to 12 months of consistent third-party coverage.

What types of content are most likely to be cited by AI?

Front-loaded content that answers the question immediately, pages with high fact density and expert quotes, and structured formats like tables or Q&A sections. Third-party reviews and independent media are cited significantly more often than brand-owned blog posts.

How can a small brand compete with a large brand for AI citations?

By being more specific. Large brands have broad but shallow coverage. A brand that provides deep, precise, and niche-specific expertise can earn citations that generic national pages can’t match. Precision and entity clarity often beat raw scale in the selective logic of AI.