Your domain authority is solid. Your backlink profile is clean. Your top pages rank in position one for keywords that matter. Then a prospect opens Perplexity and types the exact question your product answers, and your brand isn’t in the response.

Traditional SEO tools can’t explain this. They weren’t built to. They track HTML hyperlinks, not the probabilistic logic that determines what a language model synthesizes into an answer. That’s the gap AI citation tracking tools are designed to close.

Your Backlink Profile Tells You Nothing About AI Citations

For two decades, marketers treated domain authority as a universal proxy for visibility. High DA meant high rankings. High rankings meant traffic. The logic was linear.

AI search has broken that chain.

Research shows that Domain Authority has a measured correlation of just r=0.18 with AI citation frequency, which explains less than 4% of the variance in AI visibility. In practice, this means a brand with a DA of 80 can be completely absent from a ChatGPT or Perplexity response while a niche industry blog with a DA of 30 gets cited consistently. The reason is structural: traditional search engines rank largely on link equity and domain age, while AI systems use retrieval-augmented generation (RAG), a probabilistic process, to synthesize answers from semantically dense sources.
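The “less than 4%” figure follows from the correlation itself: the share of variance explained by a linear correlation is r squared. A quick check, using only the r=0.18 value quoted above:

```python
# Share of variance explained by a linear correlation is r squared.
r = 0.18  # correlation between Domain Authority and AI citation frequency, as quoted in the text

variance_explained = r ** 2
print(f"r^2 = {variance_explained:.4f} ({variance_explained:.1%} of variance)")
```

That works out to roughly 3.2%, which is where the “less than 4%” claim comes from.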

The business stakes are real. An AI Overview reduces the click-through rate for the first organic position by between 34.5% and 61%. At the same time, when a brand is cited inside an AI response, its organic clicks increase by 35% and its paid clicks by 91% compared to queries where the brand is absent. Visibility has shifted from “ranking” to “winning the citation.” These are not the same thing, and they don’t respond to the same tools.

What Is an AI Citation Tracking Tool?

An AI citation tracking tool is a software platform that monitors, measures, and analyzes which URLs, domains, and brands generative AI systems reference when answering user queries.

The distinction between a “mention” and a “citation” is worth being precise about. A mention is when an AI includes your brand name in its text without attribution. A citation is an explicit reference to a source URL or domain. Tracking mentions tells you about brand awareness. Tracking citations tells you whether AI is actually directing users to your content.
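To make the mention/citation distinction concrete, here is a minimal sketch of how a single AI response could be classified. The function name `classify_presence`, the brand “Acme,” and the domain `acme.example` are all hypothetical; real tools likely use richer parsing than a URL regex.

```python
import re
from urllib.parse import urlparse

# Rough URL matcher for plain-text responses (an assumption; real parsers are stricter).
URL_PATTERN = re.compile(r"https?://[^\s()\[\]<>\"']+")

def classify_presence(response_text: str, brand: str, domain: str) -> str:
    """Return 'citation', 'mention', or 'absent' for one AI response."""
    for url in URL_PATTERN.findall(response_text):
        host = (urlparse(url.rstrip(".,;:")).hostname or "").lower()
        # A citation is an explicit reference to the brand's domain or a subdomain.
        if host == domain or host.endswith("." + domain):
            return "citation"
    # A mention is the brand name appearing without a source link.
    if re.search(rf"\b{re.escape(brand)}\b", response_text, re.IGNORECASE):
        return "mention"
    return "absent"

print(classify_presence("Acme is a popular option.", "Acme", "acme.example"))
print(classify_presence("See https://www.acme.example/guide for details.", "Acme", "acme.example"))
```

The check for a citation runs first, so a response that both names the brand and links to it counts as a citation.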

What makes this category different from traditional analytics is the mechanism. These tools send structured prompts to AI platforms like ChatGPT, Perplexity, and Gemini, parse the returned responses, and extract the source attribution data. Over time, they aggregate this into visibility metrics: how often your domain appears, on which platforms, for which topic clusters, and how that compares against your top competitors.

This is what’s meant by “AI citation tracking tool” in practice: it’s less a single feature and more a monitoring architecture built around the behavioral logic of AI answer engines.

How AI Citation Tracking Tools Work

Most tools follow a three-step process: send a standardized prompt to an AI platform, parse the response, and extract the source attribution data.

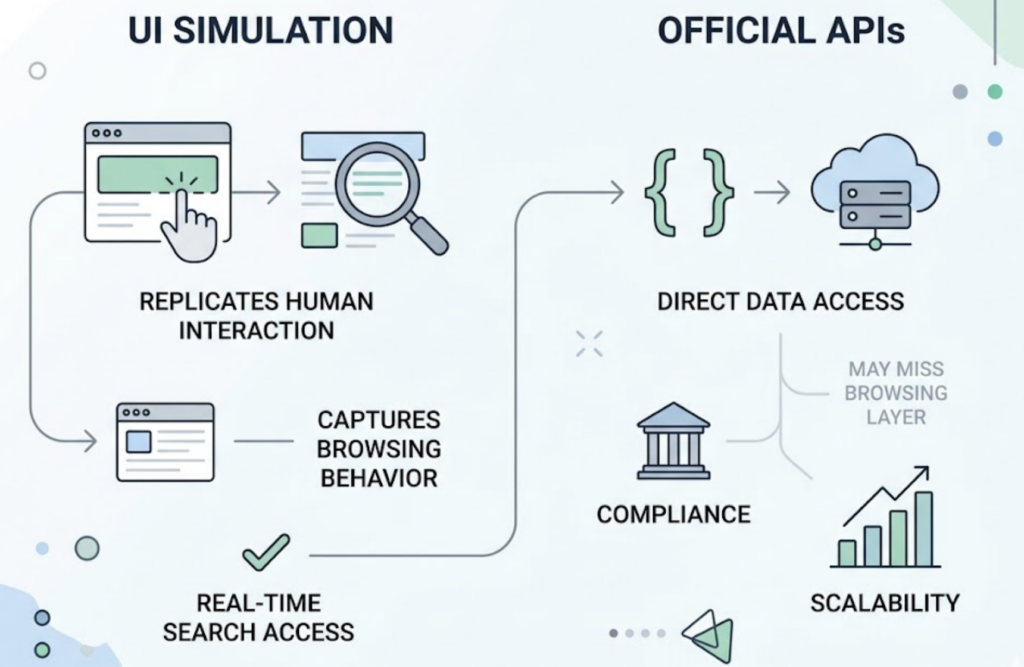
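The three steps can be sketched in a few lines. This is an illustration under stated assumptions, not how any particular vendor implements it: `ask` stands in for whatever client sends a prompt to a platform, and `fake_ask` returns canned answers with made-up `.example` domains so the sketch runs without network access.

```python
import re
from collections import Counter
from typing import Callable
from urllib.parse import urlparse

URL_PATTERN = re.compile(r"https?://[^\s()\[\]<>\"']+")

def extract_cited_domains(response_text: str) -> list[str]:
    """Steps 2 and 3: parse a response and pull out the cited domains."""
    domains = []
    for url in URL_PATTERN.findall(response_text):
        host = (urlparse(url.rstrip(".,;:")).hostname or "").lower()
        if host:
            domains.append(host.removeprefix("www."))
    return domains

def run_tracking(prompts: list[str], ask: Callable[[str], str]) -> Counter:
    """Send each prompt, parse the answer, and tally cited domains."""
    tally: Counter = Counter()
    for prompt in prompts:
        response_text = ask(prompt)  # step 1: send the standardized prompt
        # Count each domain at most once per response.
        tally.update(set(extract_cited_domains(response_text)))
    return tally

def fake_ask(prompt: str) -> str:
    """Hypothetical stand-in for a real platform client."""
    canned = {
        "best crm for startups": "Try Vendor A (https://www.vendor-a.example/crm) "
                                 "or see the roundup at https://reviews.example/best-crm.",
        "what is a crm": "A CRM stores customer data. Source: https://vendor-a.example/what-is-crm",
    }
    return canned.get(prompt, "")

tally = run_tracking(["best crm for startups", "what is a crm"], fake_ask)
print(tally.most_common())
```

Passing the client in as a callable keeps the parsing logic the same whether responses come from an official API or from UI simulation.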

Where tools differ is in how they access that data. Some use UI simulation, essentially replicating a human user’s interaction with the web interface of ChatGPT or Perplexity. This captures the full browsing and real-time search behavior that often isn’t available in raw API calls. Others use official APIs, which offer better scalability and compliance but may miss the “web browsing” layer that shapes real user responses.

The tracking itself operates at three levels of precision. Domain-level tracking tells you whether your site is being cited at all. URL-level attribution tells you which specific pages are driving those citations. Topic-level mapping tells you the types of queries where you appear versus where you don’t. Only the last two levels give you anything actionable.
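The three levels are different roll-ups of the same raw observations. A minimal sketch, assuming each observation is a (topic cluster, cited URL) pair; the sample data is invented for illustration:

```python
from collections import Counter, defaultdict
from urllib.parse import urlparse

# Hypothetical observations: (topic cluster of the prompt, URL cited in the answer).
observations = [
    ("pricing", "https://example.com/pricing-guide"),
    ("pricing", "https://example.org/compare"),
    ("technical specs", "https://example.com/specs"),
    ("technical specs", "https://example.com/specs"),
    ("technical specs", "https://example.com/api-docs"),
]

def domain_of(url: str) -> str:
    return (urlparse(url).hostname or "").lower()

# Domain level: is the site cited at all?
domain_level = Counter(domain_of(url) for _, url in observations)

# URL level: which specific pages earn the citations?
url_level = Counter(url for _, url in observations)

# Topic level: for which kinds of queries does each domain appear?
topic_level: dict[str, Counter] = defaultdict(Counter)
for topic, url in observations:
    topic_level[topic][domain_of(url)] += 1

print(domain_level)
print(url_level.most_common(1))
print({topic: dict(counts) for topic, counts in topic_level.items()})
```

In this sample, the domain-level view alone would hide that example.com’s citations come almost entirely from its specs pages, and that it is contested on pricing queries.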

One data point that surprises most teams: only 11% of domains are cited by both ChatGPT and Perplexity for the same set of queries. “AI” is not a monolithic audience. Perplexity averages 21.87 citations per question, about 2.8x more than ChatGPT, and draws heavily from Reddit (46.7% of its citations). ChatGPT answers 60% of queries from pre-trained parametric knowledge without triggering a web search at all. Google AI Overviews cite an average of 35.2 sources per complex query and overlap strongly with the top 10 organic results (93.67% of the time). Tracking one platform and calling it done isn’t a strategy.
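Cross-platform overlap is simple to measure once you have the set of cited domains per platform. The sketch below uses the Jaccard index (shared domains divided by all domains seen on either platform). That definition is an assumption: the source of the 11% figure may define overlap differently, and the domain sets here are invented.

```python
def overlap_share(domains_a: set[str], domains_b: set[str]) -> float:
    """Share of all cited domains that appear on both platforms (Jaccard index)."""
    union = domains_a | domains_b
    if not union:
        return 0.0
    return len(domains_a & domains_b) / len(union)

# Hypothetical cited-domain sets for the same prompt set on two platforms.
chatgpt_domains = {"a.example", "b.example", "c.example"}
perplexity_domains = {"c.example", "d.example", "e.example", "f.example", "g.example"}

share = overlap_share(chatgpt_domains, perplexity_domains)
print(f"{share:.1%} of cited domains appear on both platforms")
```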

5 Signs a Citation Tracking Tool Is Actually Worth Using

Most tools promise “AI visibility.” Fewer deliver the intelligence needed to act on it. Here’s what separates useful tools from dashboard noise.

Platform breadth. A tool that only tracks ChatGPT misses the majority of the AI search landscape. Look for coverage across ChatGPT, Perplexity, Gemini, and emerging platforms. Model version matters too: citation behavior varies between “instant” and “reasoning” model variants.

URL-level attribution. Domain-level reporting tells you whether your site exists in the AI’s world. URL-level attribution tells you which article is doing the work, and more importantly, which articles aren’t. This is the data you need to make content decisions.

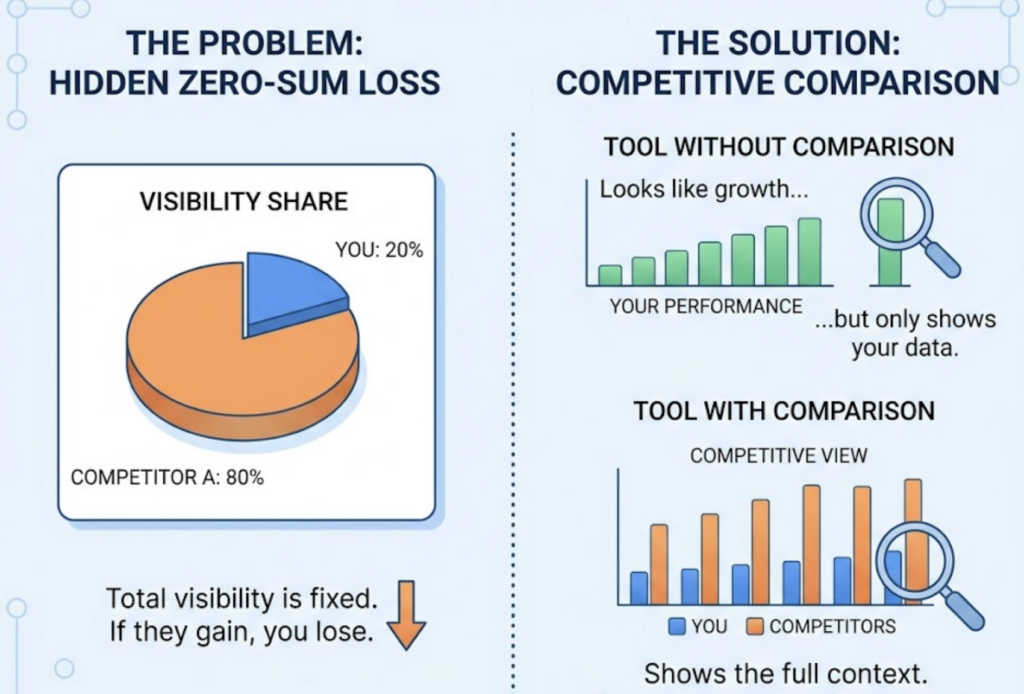

Competitive share of voice. In generative search, visibility is zero-sum. If a competitor appears in 80% of relevant AI responses and you appear in 20%, you’re losing ground even if your absolute numbers look stable. A tool without side-by-side competitive comparison is giving you half the picture. Tools like Profound and others in the market offer this; the question is the depth and granularity of what they surface.
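As a rough illustration of the share-of-voice calculation, the sketch below treats each AI response as the set of domains it cites and reports the fraction of responses in which each tracked domain appears. The domain names and sample data are hypothetical, and commercial tools may weight position or prominence as well.

```python
def share_of_voice(responses: list[set[str]], domains: list[str]) -> dict[str, float]:
    """Fraction of responses in which each tracked domain is cited."""
    total = len(responses)
    if total == 0:
        return {domain: 0.0 for domain in domains}
    return {
        domain: sum(domain in cited for cited in responses) / total
        for domain in domains
    }

# Five hypothetical responses to relevant prompts, each reduced to its cited domains.
responses = [
    {"competitor.example"},
    {"competitor.example", "you.example"},
    {"competitor.example"},
    {"competitor.example", "reviews.example"},
    {"reviews.example"},
]

sov = share_of_voice(responses, ["you.example", "competitor.example"])
print(sov)
```

This sample reproduces the 80% versus 20% split described above.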

Historical trend data. AI citation patterns are more volatile than they appear. BrightEdge research indicates that 96.8% of citations are stable week-to-week, but when they shift, they tend to shift completely: domains go from cited to not cited in a single model update cycle. Without historical data, you can’t tell whether a drop is noise or a signal.
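With weekly snapshots stored, a week-over-week comparison takes only a few lines. This sketch assumes “stable” means a domain cited last week is still cited this week; the BrightEdge figure may rest on a different definition, and the snapshot data is invented.

```python
def weekly_change(previous: set[str], current: set[str]) -> dict:
    """Compare two weekly snapshots of cited domains."""
    retained = previous & current
    return {
        # Share of last week's cited domains that are still cited.
        "stability": len(retained) / len(previous) if previous else 1.0,
        "dropped": sorted(previous - current),
        "gained": sorted(current - previous),
    }

last_week = {"a.example", "b.example", "c.example", "d.example"}
this_week = {"a.example", "b.example", "c.example", "e.example"}

change = weekly_change(last_week, this_week)
print(change)
```

A run of snapshots makes it possible to see whether a domain in `dropped` reappears the following week (noise) or stays gone (signal).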

Topic and intent mapping. A brand may be cited consistently for “technical specifications” but never for “pricing comparisons.” Tools that connect citations to specific prompt types help teams prioritize optimization for queries that actually sit in the buyer’s journey, not just the traffic-heavy terms.
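One simple way to connect citations to prompt types is to label each tracked prompt with an intent and compute a citation rate per label. The keyword rules below are a deliberately crude, hypothetical heuristic, and “Acme” is a placeholder brand; production tools presumably classify intent with something more robust.

```python
import re
from collections import defaultdict

# Hypothetical keyword rules, checked in order; the first match wins.
INTENT_RULES = [
    ("pricing", re.compile(r"\b(price|pricing|cost)\b", re.IGNORECASE)),
    ("comparison", re.compile(r"\b(best|vs|versus|compare|alternatives?)\b", re.IGNORECASE)),
    ("definition", re.compile(r"^\s*what (is|are)\b", re.IGNORECASE)),
]

def intent_of(prompt: str) -> str:
    for label, pattern in INTENT_RULES:
        if pattern.search(prompt):
            return label
    return "other"

def citation_rate_by_intent(results: list[tuple[str, bool]]) -> dict[str, float]:
    """results holds (prompt, was_our_domain_cited) pairs."""
    cited: dict[str, int] = defaultdict(int)
    total: dict[str, int] = defaultdict(int)
    for prompt, was_cited in results:
        label = intent_of(prompt)
        total[label] += 1
        cited[label] += int(was_cited)
    return {label: cited[label] / total[label] for label in total}

results = [
    ("What is the API rate limit for Acme?", True),
    ("What are Acme's technical specifications?", True),
    ("Acme pricing compared to competitors", False),
    ("How much does Acme cost per seat?", False),
    ("Best tools for log monitoring", True),
]
rates = citation_rate_by_intent(results)
print(rates)
```

In this sample the brand is cited for every definition-style prompt and for none of the pricing prompts, which is the kind of gap the paragraph above describes.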

Common Mistakes Teams Make When Tracking AI Citations

The most common mistake is treating brand mentions as citations. An AI can say your company name a dozen times in a response without creating any path for a user to reach your website. AI models also disagree on the same query 54.5% of the time, so a mention count taken from any one model is an unreliable signal to begin with. Citation count, tied to a specific URL, is the metric worth tracking.

The second mistake is the single-audit approach. Teams run a one-time check of their AI visibility, document the results, and file them away. In practice, AI citation patterns shift every few weeks as models update their retrieval parameters. Successful teams build citation tracking into their continuous monitoring workflows, not their quarterly reporting cycle.

Third: ignoring competitor source data. The most actionable insight from citation tracking often isn’t about your own pages at all. If a competitor is consistently cited through third-party comparison sites or industry roundups, the implication is that publishing more content on your own domain won’t fix the gap. The fix is a digital PR strategy to win mentions on those external sources.

Finally, over-optimization. The research is clear: keyword stuffing reduces the likelihood of being cited by AI systems. Adding statistics, on the other hand, improves AI citation visibility by up to 41%. Original research and first-party data generate 4.31x more citation occurrences per URL than generic blog posts. The optimization lever isn’t keyword density. It’s evidence density.

How Topify’s Source Analysis Handles AI Citation Tracking

Most visibility tools answer the question “Is your brand appearing in AI responses?” Topify is built to answer the more useful question: “What content is driving those appearances, and where are the gaps?”

The Source Analysis feature maps which domains and specific URLs AI platforms cite in response to your tracked prompts. You can see your own citation footprint and your competitors’ at the same time: which third-party sources are giving them authority, which topics you’re missing coverage on, and where adding a single well-structured page could shift your citation share measurably.

Topify covers ChatGPT, Gemini, Perplexity, DeepSeek, and other platforms, which matters given how differently those platforms behave. Its tracking architecture also connects to seven core GEO metrics: visibility, sentiment, position, volume, mentions, intent, and CVR. This means citation data doesn’t sit in isolation. It connects to the broader picture of how AI systems are representing your brand relative to your category.

For teams that need to move quickly without a five-figure enterprise contract, Topify’s Basic plan starts at $99/mo, covering 100 tracked prompts and 9,000 AI answer analyses across four projects. The Pro plan at $199/mo expands to 250 prompts and 22,500 analyses. Both include a 30-day trial to establish a baseline before committing to a monitoring cadence.

The market has several other options worth being aware of. Enterprise platforms like Profound focus on compliance and large-scale simulation, making them a fit for global brands with formal reporting requirements and security needs like SOC 2 Type II. Lighter tools serve startups that need basic sentiment snapshots at lower cost. The mid-tier, where Topify sits, is built for content and SEO teams that need actionable intelligence on a working cadence, not just quarterly audits.

A Practical Checklist for Setting Up AI Citation Tracking

Getting from “we should be tracking this” to an operational system doesn’t require months of configuration. Here’s a framework that moves fast.

Step 1: Define your core query set. Identify 20-40 prompts that map to your buyer’s awareness and consideration stages. These are the questions where you need to appear. Start with “What is…” and “Best… for…” formats before moving to transactional terms.

Step 2: Inventory your target URLs. List the specific pages on your domain intended to answer those queries. These become your “citation candidates” and the pages you’ll prioritize for GEO optimization.

Step 3: Establish a multi-platform baseline. At minimum, set up tracking across ChatGPT and Perplexity before expanding. Document your starting share of voice against three to five competitors. You need a baseline before you can measure movement.

Step 4: Audit competitor citation sources. Before optimizing your own content, identify which external domains the AI is citing for your target queries. If those are third-party review sites or aggregators, your content roadmap needs to include an outreach strategy, not just on-site publishing.

Step 5: Review citation trends monthly. Weekly is better for volatile categories. Monthly is the floor. When citation share drops, correlate the change with model update dates and recent competitor content activity.

Step 6: Execute targeted content updates. Based on gap analysis, update existing pages with statistics, structured Q&A sections, and clear heading hierarchies. Implementing FAQPage and HowTo schema increases citation inclusion likelihood by 20-30%. These are measurable changes you can test against a control group of prompts.

Step 7: Connect citation data to traffic. Monitor referral traffic from perplexity.ai and chatgpt.com (formerly chat.openai.com) in GA4 to close the loop between citation share and business outcomes. This turns citation tracking from an SEO vanity metric into a revenue-attributable signal.
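For Step 7, the core task is recognizing which referrers are AI platforms. The sketch below works on referrer URLs you have already exported from your analytics tool; it does not call any GA4 API. The host list is an assumption to extend as needed, and it includes both chat.openai.com and chatgpt.com, the hostname ChatGPT currently uses.

```python
from typing import Optional
from urllib.parse import urlparse

# Assumed mapping of referrer hostnames to AI platforms; extend as needed.
AI_REFERRER_HOSTS = {
    "perplexity.ai": "Perplexity",
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",  # older ChatGPT hostname
}

def ai_source(referrer_url: str) -> Optional[str]:
    """Return the AI platform for a referrer URL, or None if it is not one."""
    host = (urlparse(referrer_url).hostname or "").lower().removeprefix("www.")
    return AI_REFERRER_HOSTS.get(host)

# Hypothetical referrer URLs from an analytics export.
referrers = [
    "https://www.perplexity.ai/search?q=best+crm",
    "https://chatgpt.com/",
    "https://www.google.com/",
]
for url in referrers:
    print(url, "->", ai_source(url))
```

Tagging sessions this way lets you compare AI-referred traffic against citation share for the same period.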

Conclusion

Traditional SEO gave you a ranking. AI search gives you a citation, or it doesn’t. The difference determines whether a user reaches your content at all, and the data shows that brands cited inside AI responses see 35% more organic clicks and 91% more paid clicks than on queries where they’re absent.

The tools exist to track, measure, and systematically improve your citation footprint across the platforms where your buyers are actually searching. The starting point is knowing where you stand: which domains are winning citations in your category, which of your own pages are doing the work, and where the gaps are. From there, the optimization is methodical. If you’re ready to establish that baseline, Topify’s 30-day trial is a practical place to start.

FAQ

Q: What is an AI citation tracking tool? A: It’s a software platform that monitors which URLs, domains, and brands generative AI systems like ChatGPT, Perplexity, and Gemini reference when responding to user queries. Unlike traditional analytics, it tracks explicit source attribution inside AI-generated answers, not just brand mentions.

Q: How does AI citation tracking differ from traditional backlink monitoring? A: Backlink monitoring tracks HTML hyperlinks between web pages. AI citation tracking monitors which content sources language models reference in their synthesized answers. The two signals are only weakly correlated: Domain Authority explains less than 4% of variance in AI citation frequency.

Q: What’s the best AI citation tracking tool for small teams? A: It depends on what your team needs. For teams that want actionable source intelligence without enterprise pricing, Topify’s Basic plan at $99/mo covers the core tracking workflow. For teams that primarily need brand mention monitoring at low volume, lighter-tier tools may suffice. The deciding factor is whether you need URL-level attribution and competitor comparison, or just a snapshot of brand presence.

Q: How often should I review my AI citation data? A: Monthly is the minimum for most teams. AI models update their retrieval behavior regularly, and citation patterns can shift quickly. If you’re in a competitive category or actively running content optimization sprints, weekly monitoring lets you catch drops before they compound.
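An FAQ like this one is a natural candidate for the FAQPage schema mentioned in Step 6. A minimal sketch that builds the JSON-LD from question/answer pairs, using the schema.org `FAQPage`, `Question`, and `Answer` types; the helper name `faq_jsonld` is hypothetical and the answer text is abbreviated from the first question above:

```python
import json

def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)

markup = faq_jsonld([
    (
        "What is an AI citation tracking tool?",
        "A software platform that monitors which URLs, domains, and brands "
        "generative AI systems reference when responding to user queries.",
    ),
])
print(markup)
```

The output goes inside a `<script type="application/ld+json">` tag on the page that displays the same questions and answers.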