You searched for your own brand on ChatGPT. Your competitor showed up. You didn’t.

It’s not because their product is better. It’s because AI platforms are pulling from a set of sources that your content hasn’t entered yet. That’s the gap AI citation tracking is designed to close.

This guide walks through how citation tracking works, why different AI platforms cite different sources, and how to build a systematic strategy to improve your brand’s citation rate across ChatGPT, Gemini, and Perplexity.

Your Brand Isn’t Invisible. It’s Just Not Being Cited.

There’s a distinction most brands miss: being mentioned by AI is not the same as being cited.

A mention means an AI model references your brand name in its response, typically drawing from its parametric knowledge, the information absorbed during pre-training. A citation means the AI actively retrieved your content as a source during its response generation, usually surfacing a link or source card alongside its answer.

That difference matters enormously. Brands with strong offline awareness often get mentioned but not cited. Meanwhile, smaller brands with well-structured, data-dense content get cited repeatedly, because they fit what the retrieval layer of AI systems is actually looking for.

Here’s the business case for fixing this: according to research on AI Overviews, when a brand is cited in an AI-generated answer, it earns around 1.20% organic CTR. When it’s absent from citations, that drops to 0.52%. The gap translates directly to traffic and revenue, particularly as AI-driven consumer spending is projected to reach $750 billion by 2028.

What AI Citation Tracking Actually Measures

AI citation tracking isn’t one metric. It’s a three-layer diagnostic.

The first layer is citation source mapping: which domains are AI platforms actually pulling from when they answer prompts relevant to your category? The second is citation rate: how often does your domain appear as a referenced source across a defined set of tracked prompts? The third is competitive citation gap: what sources are being cited for your competitors that aren’t being cited for you?
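As a rough illustration, the three layers can be computed from a hand-logged audit. The sketch below assumes the log is a dict mapping each tracked prompt to the URLs cited in the answer. The function names, the data shape, and the example domains are hypothetical and not part of any platform’s or tool’s API. The gap here is expressed as the prompts where a competitor is cited and you are not, which is one possible reading of the third layer.

```python
from collections import Counter
from urllib.parse import urlparse


def domain_of(url: str) -> str:
    """Return the host of a URL, lowercased, without a leading 'www.'."""
    host = urlparse(url).netloc.lower()
    return host[4:] if host.startswith("www.") else host


def source_map(results: dict[str, list[str]]) -> Counter:
    """Layer 1: count how often each domain is cited across all prompts."""
    return Counter(domain_of(u) for urls in results.values() for u in urls)


def citation_rate(results: dict[str, list[str]], domain: str) -> float:
    """Layer 2: share of tracked prompts whose citations include `domain`."""
    if not results:
        return 0.0
    hits = sum(
        any(domain_of(u) == domain for u in urls) for urls in results.values()
    )
    return hits / len(results)


def gap_prompts(results: dict[str, list[str]], own: str, rival: str) -> list[str]:
    """Layer 3: prompts where `rival` is cited and `own` is not."""
    gaps = []
    for prompt, urls in results.items():
        domains = {domain_of(u) for u in urls}
        if rival in domains and own not in domains:
            gaps.append(prompt)
    return gaps


# Hypothetical audit log: prompt -> URLs cited in the AI answer.
results = {
    "best crm tools": [
        "https://www.rival.example/guide",
        "https://en.wikipedia.org/wiki/CRM",
    ],
    "crm for startups": [
        "https://mybrand.example/blog/startups",
        "https://rival.example/pricing",
    ],
    "mybrand vs rival": ["https://www.reddit.com/r/crm/1"],
    "crm pricing": ["https://rival.example/pricing"],
}

print(source_map(results).most_common(1))
print(citation_rate(results, "mybrand.example"))
print(gap_prompts(results, "mybrand.example", "rival.example"))
```

Matching is exact on the host, so subdomains such as `blog.mybrand.example` would need to be normalized first if they should count toward the same brand.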

Together, these three layers tell you something traditional SEO analytics can’t: why AI recommends the brands it recommends, and what you’d need to change to get cited instead.

This is fundamentally different from backlink analysis. Research shows that brand mention frequency correlates with AI visibility at a coefficient of 0.664, versus only 0.218 for backlinks. The authority signals AI systems use aren’t the same ones Google uses.

Why ChatGPT, Gemini, and Perplexity Don’t Cite the Same Sources

One strategy doesn’t cover all three.

Each major AI platform has a distinct retrieval logic, and understanding those differences is where most citation-building strategies fall apart.

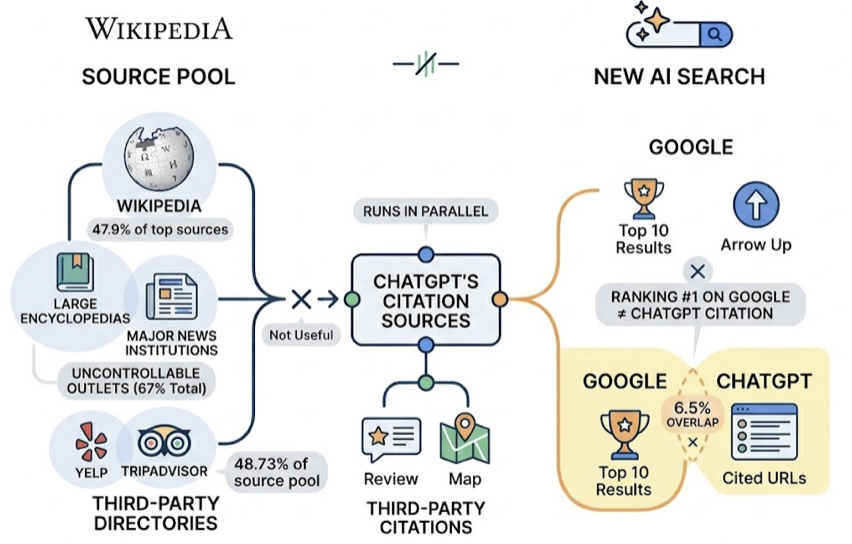

ChatGPT accounts for roughly 78% of AI-driven clicks globally, but its citation behavior is surprisingly hard to influence directly. Around 67% of ChatGPT’s top 1,000 most-cited sources are outlets marketers can’t easily control, such as large encyclopedias and major news institutions. Wikipedia alone accounts for 47.9% of its top citation sources, and third-party directories like Yelp and TripAdvisor represent 48.73% of its source pool. Perhaps most striking: ChatGPT’s cited URLs overlap with Google’s top 10 results by only 6.5%. Ranking first on Google is no guarantee of appearing in ChatGPT’s answers.

Gemini behaves almost oppositely. Because it’s built on Google’s infrastructure, 93.67% of its citations link to domains that already rank in Google’s top results. It also shows a strong preference for brand-owned content: 52.15% of its citations point directly to a brand’s official website. If your own domain is authoritative and well-structured in Google’s index, Gemini is the platform where that investment pays off most directly.

Perplexity targets a different audience entirely and cites accordingly. Reddit accounts for 46.7% of its core citation sources, and niche, vertical-specific content makes up 24% of its references. For categories where user discussions and community reviews carry weight, Perplexity is often the platform where smaller brands can gain citation traction faster than on ChatGPT.

The practical implication: a single “optimize for AI” strategy misses the structural differences between these three platforms.

How to Audit Your Content for AI Citation Potential

Most brands start citation tracking by looking at where they appear. The more useful starting point is looking at where they don’t.

The audit process breaks into four steps. First, define a prompt set: 20 to 50 queries that represent how your target audience searches for solutions in your category. Include decision-stage prompts like “best [category] tools” and comparison prompts like “[your brand] vs [competitor].” Second, run those prompts across ChatGPT, Gemini, and Perplexity and log which URLs appear as cited sources. Third, check whether your domain appears, and in which position. Fourth, analyze what’s being cited instead, including specific URLs, their content format, and what data or structure they contain that yours might lack.
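The four steps above can be sketched as a small logging structure. This is a minimal sketch under stated assumptions: the prompt templates come from the examples in the text, while `AuditRow`, `build_prompts`, and `position_of` are hypothetical names. How the answers are collected is deliberately left out, since that depends on each platform.

```python
from dataclasses import dataclass
from typing import Optional
from urllib.parse import urlparse

PLATFORMS = ("ChatGPT", "Gemini", "Perplexity")


@dataclass
class AuditRow:
    """One logged answer: which URLs a platform cited for a prompt."""
    platform: str
    prompt: str
    cited_urls: list[str]


def build_prompts(category: str, brand: str, competitors: list[str]) -> list[str]:
    """Step 1: expand decision-stage and comparison templates into prompts."""
    prompts = [f"best {category} tools"]
    prompts += [f"{brand} vs {rival}" for rival in competitors]
    return prompts


def position_of(domain: str, cited_urls: list[str]) -> Optional[int]:
    """Step 3: 1-based position of `domain` among the cited URLs, or None."""
    for index, url in enumerate(cited_urls, start=1):
        host = urlparse(url).netloc.lower()
        if host.startswith("www."):
            host = host[4:]
        if host == domain:
            return index
    return None


# Step 2 is manual in this sketch: paste the cited URLs into a row.
row = AuditRow(
    platform="Perplexity",
    prompt=build_prompts("crm", "MyBrand", ["Rival"])[0],
    cited_urls=["https://rival.example/guide", "https://www.mybrand.example/blog"],
)
print(row.prompt, position_of("mybrand.example", row.cited_urls))
```

Step 4, analyzing what is cited instead, starts from the rows where `position_of` returns `None`.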

This is where Topify’s Source Analysis becomes useful in practice. Rather than running this manually across dozens of prompts and three platforms, Topify tracks the exact domains and URLs that AI platforms are citing for your defined prompt set, and flags where your competitors are being pulled in while your content is being passed over. The tool was built specifically for this step: not just telling you your brand’s visibility score, but showing you the citation layer underneath it.

The audit typically surfaces one of two problems: either your content isn’t being indexed by AI crawlers at all, or it’s being retrieved but not selected, because it doesn’t match the structural patterns AI systems prefer when extracting evidence for their answers.

Reverse Engineering Your Competitor’s Citation Sources

Once you’ve mapped your own citation gaps, the next move is understanding why your competitors are filling them.

Start with the specific URLs being cited, not just the domains. A competitor might be getting cited not from their homepage or product pages, but from a third-party comparison article, a Reddit thread, a G2 review page, or a white paper hosted on an industry association’s site. Each of those citation pathways has a different strategic implication.

Then analyze the content structure of those high-citation pages. Research on GEO from Princeton, Georgia Tech, and other institutions found that adding statistics to content improves AI visibility by up to 40%, and embedding expert quotes has the same effect. If a competitor’s cited content leads with a specific number (“ROI improved by 36%”) while yours offers a general claim (“effectively improves efficiency”), AI systems will almost always extract the specific one.

Look also for citation concentration risk. If a competitor’s citations cluster heavily around one or two third-party sources, that’s a vulnerability you can work around by building a broader citation surface across more domains.
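One simple way to put a number on concentration is the share of a competitor’s citations that come from their top one or two source domains. The sketch below assumes you already have a flat list of the domains behind each citation. The function name, the sample data, and the choice of the top two sources as the cutoff are illustrative assumptions, not an established metric.

```python
from collections import Counter


def top_share(cited_domains: list[str], k: int = 2) -> float:
    """Fraction of all citations that come from the k most-cited domains."""
    counts = Counter(cited_domains)
    total = sum(counts.values())
    if total == 0:
        return 0.0
    return sum(n for _, n in counts.most_common(k)) / total


# Hypothetical: domains behind ten citations of one competitor.
rival_sources = ["g2.com"] * 6 + ["reddit.com"] * 3 + ["industry.example"]
print(f"{top_share(rival_sources):.0%} of citations come from the top 2 sources")
```

A value close to 1.0 means the competitor depends on very few sources, which is the situation the paragraph above describes as a vulnerability.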

Topify’s Competitor Monitoring runs this analysis at scale, tracking which sources are generating citations for competing brands across platforms, and surfacing the patterns you’d otherwise need weeks of manual research to identify.

Building Content That Earns AI Citations

The content that earns AI citations has a specific structure. It’s not about length or keyword density.

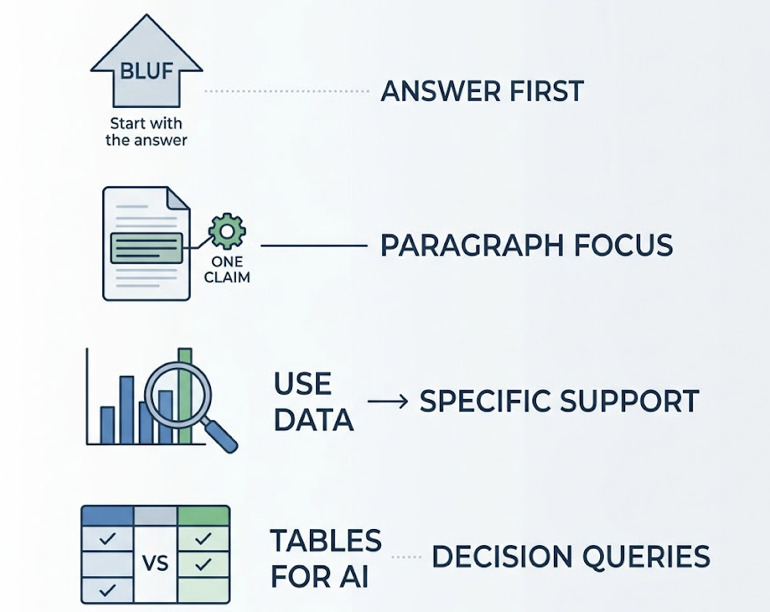

AI platforms that cite sources rely on retrieval-augmented generation (RAG), which means they’re not reading full articles and forming opinions. They’re scanning for extractable chunks: short, self-contained segments of text that directly answer a specific sub-question and can be pulled into a response as evidence.

AI doesn’t cite great brands. It cites great sources.

The practical implications for content structure are concrete. Each section of your content should open with the answer before the explanation, what researchers call BLUF (Bottom Line Up Front). Each paragraph should focus on one fact or claim, kept to two to four sentences. Every major assertion should be supported by a specific data point, not a general claim. Comparison tables outperform prose for decision-stage queries, because they match the format AI systems prefer when generating structured recommendations.
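Two of these rules, paragraph length and the presence of a specific data point, are easy to check mechanically. The sketch below is a rough heuristic, not a validated scoring method: the function name is hypothetical, sentence splitting is a naive regex that will miscount abbreviations, and “contains a digit” is only a proxy for “supported by a data point.”

```python
import re


def check_paragraph(text: str) -> dict:
    """Flag whether a paragraph has 2 to 4 sentences and contains a number."""
    # Naive split: break after ., !, or ? when followed by whitespace.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return {
        "sentences": len(sentences),
        "length_ok": 2 <= len(sentences) <= 4,
        "has_number": bool(re.search(r"\d", text)),
    }


specific = "ROI improved by 36% in the first year. Churn fell by 12%."
vague = "It effectively improves efficiency."
print(check_paragraph(specific))
print(check_paragraph(vague))
```

Running a check like this over a draft gives a quick list of paragraphs to revisit, which still need a human read for whether the number actually supports the claim.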

Technical accessibility matters too. Roughly 65% of AI bot visits target content published or updated within the past year, so keeping pages current matters. Three checks are baseline requirements for AI indexability: confirm that your robots.txt doesn’t block GPTBot or OAI-SearchBot, implement structured data schemas like FAQPage and HowTo, and make sure your content renders server-side rather than through client-side JavaScript.
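The robots.txt check can be done with Python’s standard-library `urllib.robotparser`. The sketch below parses a robots.txt body you have already fetched and reports which of the two crawlers named above would be blocked for a given URL. The helper name `blocked_crawlers`, the sample rules, and the example URL are assumptions for illustration.

```python
from urllib.robotparser import RobotFileParser

AI_CRAWLERS = ("GPTBot", "OAI-SearchBot")


def blocked_crawlers(robots_txt: str, url: str, agents=AI_CRAWLERS) -> list[str]:
    """Return the user agents that the given robots.txt forbids from fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, url)]


# Hypothetical robots.txt that blocks GPTBot and allows everyone else.
sample = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

print(blocked_crawlers(sample, "https://example.com/blog/post"))
```

This only covers the robots.txt item. Structured data and server-side rendering need separate checks, for example by inspecting the raw HTML response for the content and the schema markup.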

GEO research shows that for brands currently ranking around position five in traditional search, these optimizations can increase AI visibility by up to 115%. That’s the magnitude of the opportunity for brands that haven’t yet structured their content for AI retrieval.

From Citation Tracking to Citation Growth: Closing the Loop

Citation tracking only creates value if it feeds back into a repeatable improvement cycle.

The loop looks like this: track which prompts your brand is being cited for, identify the gaps where competitors appear and you don’t, produce content that targets those specific citation gaps, distribute that content across the channels that carry citation weight for each platform (Wikipedia and major media for ChatGPT, your own domain for Gemini, Reddit and vertical forums for Perplexity), then re-measure citation rate across your prompt set.
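The distribution and re-measurement steps of this loop can be written down as a small planning helper. The channel mapping below is taken from the sentence above; the function names, data shapes, and sample numbers are hypothetical, and the rates are assumed to come from whatever tracking method you already use.

```python
# Channels that carry citation weight per platform, as listed in the text.
CHANNELS = {
    "ChatGPT": ["Wikipedia", "major media"],
    "Gemini": ["own domain"],
    "Perplexity": ["Reddit", "vertical forums"],
}


def plan_round(gaps: dict[str, list[str]]) -> list[tuple[str, str, list[str]]]:
    """Turn per-platform gap prompts into (platform, prompt, channels) tasks."""
    return [
        (platform, prompt, CHANNELS[platform])
        for platform, prompts in gaps.items()
        if platform in CHANNELS
        for prompt in prompts
    ]


def rate_delta(before: dict[str, float], after: dict[str, float]) -> dict[str, float]:
    """Change in citation rate per platform between two measurement rounds."""
    return {p: round(after[p] - before[p], 4) for p in before if p in after}


# Hypothetical gaps and two rounds of measured citation rates.
tasks = plan_round({"Gemini": ["best crm tools"], "Perplexity": ["crm pricing"]})
change = rate_delta(
    {"ChatGPT": 0.25, "Gemini": 0.50},
    {"ChatGPT": 0.40, "Gemini": 0.50},
)
print(tasks)
print(change)
```

Keeping the prompt set fixed between rounds is what makes the deltas comparable; changing the prompts resets the baseline.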

The conversion data makes the case for running this cycle consistently. Traffic arriving through AI citations converts at dramatically higher rates than traditional organic search: ChatGPT-sourced visitors convert at 14.2%, roughly 5.1x the 2.8% baseline for Google organic. Perplexity-sourced sessions last 41% longer on average. The volume is still smaller than organic search, but AI-driven traffic grew 7x between 2024 and 2025, and the trajectory is clear.
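The arithmetic behind the multiplier is simple to reproduce. The sketch below uses the two conversion rates quoted above; the 1,000-visit figure is an arbitrary assumption chosen only to make the comparison concrete.

```python
CHATGPT_RATE_PCT = 14.2        # conversion rate quoted for ChatGPT-sourced visitors
GOOGLE_ORGANIC_RATE_PCT = 2.8  # baseline quoted for Google organic


def conversions(visits: int, rate_pct: float) -> float:
    """Expected conversions for a number of visits at a percentage rate."""
    return visits * rate_pct / 100


lift = CHATGPT_RATE_PCT / GOOGLE_ORGANIC_RATE_PCT
print(f"lift: {lift:.1f}x")  # about 5.1x

visits = 1000  # hypothetical traffic volume
print(f"AI-sourced: {conversions(visits, CHATGPT_RATE_PCT):.0f}")
print(f"organic:    {conversions(visits, GOOGLE_ORGANIC_RATE_PCT):.0f}")
```

At equal traffic the gap is large, but because AI-sourced volume is still smaller than organic, the absolute number of conversions depends on how many visits each channel actually delivers.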

Topify is designed to close this loop with less manual overhead. The platform tracks citation rate across ChatGPT, Gemini, Perplexity, and other AI platforms, surfaces the source-level data behind competitor citations, and connects citation changes to brand visibility metrics over time. For teams running this analysis manually, the difference is the shift from one-time audits to a continuously updated view of where your brand stands in the citation layer of AI search.

Starting at $99/month, Topify’s Basic plan covers ChatGPT, Perplexity, and AI Overviews for 100 prompts. For teams managing multiple clients or categories, the Pro plan at $199/month expands to 250 prompts and 22,500 AI answer analyses per month.

Conclusion

AI citation tracking isn’t a nice-to-have for GEO strategy. It’s the diagnostic layer everything else depends on.

You can’t improve what you can’t see. And right now, most brands are optimizing for AI visibility without knowing which specific sources AI is pulling from, where their competitors are being cited instead, or what structural changes to their content would actually move the citation rate.

The research is clear on what AI systems value: specific data over vague claims, structured formats over dense prose, multi-platform presence over single-channel authority. Brands that build their content around those principles, and track their citation rate systematically, are the ones that will hold ground as AI search continues to grow.

FAQ

What makes a website a trusted citation source for AI platforms?

Trusted citation sources tend to share a few structural traits: they use clear heading hierarchies that allow AI to extract specific sections, they support claims with verifiable statistics, and they’re referenced across multiple third-party domains rather than only on their own properties. Domain authority plays a role, particularly for Gemini, but it’s not the only factor. Content that’s structured for extraction, not just for reading, consistently outperforms high-authority content that’s written in dense, undifferentiated prose.

Why is AI citation tracking essential for a GEO strategy?

GEO without citation tracking is optimization without feedback. You can restructure content, add data, and build authority signals, but without tracking which prompts you’re being cited for and where competitors are being cited instead, you can’t verify that any of it is working. Citation tracking turns GEO from a set of best practices into a measurable, improvable channel.

How do you get your website cited by ChatGPT and Gemini?

The paths are different for each. For ChatGPT, the highest-leverage citations often come through third-party platforms: Wikipedia mentions, directory listings, media coverage, and forum discussions that establish your brand as part of the broader internet consensus. For Gemini, your own domain is the primary lever. Well-structured brand content that aligns with Google’s quality signals and Knowledge Graph entities is what Gemini prioritizes. Building in both directions, rather than focusing on one, produces the most durable citation presence.

How does domain authority influence AI citation likelihood?

Domain authority correlates with AI citation frequency, but the relationship varies by platform. Gemini shows the strongest correlation, with 93.67% of its citations linking to domains already ranking in Google’s top results. ChatGPT shows a much weaker one, with only 6.5% overlap between its cited sources and Google’s top 10. Domain authority therefore matters for Gemini optimization but is a less reliable predictor for ChatGPT, where third-party validation and content structure tend to matter more.

How do you measure the impact of earned citations on AI brand visibility?

The clearest measurement approach is tracking citation rate (the percentage of your target prompts where your domain appears as a cited source) over time, alongside brand visibility metrics across AI platforms. As citation rate improves, you should expect to see corresponding increases in AI visibility scores, particularly for the platforms where your citation-building activity is concentrated. Conversion data is a secondary but important signal: traffic arriving through AI citations typically converts at 4x to 6x the rate of traditional organic search, so shifts in AI-sourced traffic quality are a meaningful downstream indicator.