Most people who’ve used ChatGPT think they understand AI agents. They don’t.

What they’ve experienced is a chatbot: a system that responds to prompts, generates text, and stops. An AI agent is something fundamentally different. It doesn’t wait for your next message. It plans, acts, checks results, and keeps going until the job is done.

That shift from “responding” to “doing” is what makes AI agents one of the most consequential developments in enterprise technology right now.

A Chatbot Answers. An AI Agent Acts. Here’s the Difference.

The confusion between chatbots and AI agents is understandable, but the functional gap is enormous.

A chatbot is reactive. You ask it something, it generates a response, and the loop ends. It operates inside language. Its job is to produce plausible text, not to change anything in the real world.

An AI agent is proactive and goal-driven. Give it an objective, and it figures out how to reach it. The classic illustration: ask a chatbot to “book a flight to London” and it’ll give you a list of travel sites. Ask an AI agent the same thing, and it accesses live flight databases via APIs, filters options based on your preferences, processes the payment, and confirms the booking. No follow-up prompts required.

That’s the action gap. And it’s why enterprises are paying close attention.

| Operational Feature | AI Chatbot (Reactive) | AI Agent (Proactive) |
|---|---|---|
| Primary Interaction | Passive Q&A / Suggestions | Active goal pursuit / Execution |
| Control Logic | User-guided (step-by-step) | Self-guided (goal-oriented) |
| System Boundary | Linguistic output | Real-world interaction (APIs, tools) |
| Reasoning Model | Linear / One-turn | Iterative / Closed-loop |
| Autonomy Level | Low | High |

In enterprise terms: a chatbot helps a human do their job faster. An AI agent does the job on the human’s behalf.

How AI Agents Actually Work: The 4-Part Loop Most Explanations Skip

The real engine behind an AI agent isn’t just a large language model. It’s the execution loop that surrounds it.

Many agentic systems follow a pattern called ReAct (Reasoning and Acting), which interleaves verbal reasoning with task-specific actions. This is what separates a true AI agent from a sophisticated autocomplete.

The loop runs in four stages:

Perceive. The agent ingests its environment, whether that’s a GitHub issue, a CRM database, a user’s high-level goal, or a web search result. It builds a picture of what it’s working with.

Plan. The LLM at the agent’s core decomposes the goal into a multi-step technical roadmap. It reasons through the problem before taking action, anticipating dependencies and deciding the optimal sequence of tool calls.

Act. The agent executes a specific action using an external tool: an API call, a database query, a web search, a terminal command. This is where it touches the real world.

Reflect. After each action, the agent receives an observation (the result). It evaluates whether the action worked, what changed, and what to do next. Then the loop repeats.

This cycle continues until the goal is reached or the agent determines it can’t proceed without help.
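To make the loop concrete, here is a minimal Python sketch. It is a toy under stated assumptions, not how any particular framework implements ReAct: the `plan` function is a hand-written stand-in for the LLM, the "environment" is a single integer, and the tool names (`add_one`, `double`) are hypothetical.

```python
from typing import Callable, Dict, List, Optional, Tuple

# Hypothetical tools: each takes the current state and returns a new one.
TOOLS: Dict[str, Callable[[int], int]] = {
    "add_one": lambda x: x + 1,
    "double": lambda x: x * 2,
}


def plan(state: int, goal: int) -> Optional[str]:
    """Stand-in for the LLM: pick the next tool, or None if no tool helps."""
    if state > 0 and state * 2 <= goal:
        return "double"
    if state < goal:
        return "add_one"
    return None  # state overshot the goal and no tool can reduce it


def run_agent(start: int, goal: int, max_steps: int = 50) -> Tuple[int, List[str]]:
    """Run the perceive/plan/act/reflect loop and return (final state, trace)."""
    state = start
    trace: List[str] = []
    for _ in range(max_steps):
        # Perceive: inspect the current state of the environment.
        if state == goal:
            trace.append("done")
            break
        # Plan: decide which tool to call next.
        action = plan(state, goal)
        if action is None:
            trace.append("needs_help")  # the agent cannot proceed alone
            break
        # Act: execute the chosen tool.
        observation = TOOLS[action](state)
        # Reflect: record the observation and update the working state.
        trace.append(f"{action}: {state} -> {observation}")
        state = observation
    return state, trace
```

Calling `run_agent(1, 10)` walks the state up to the goal through a series of tool calls, while `run_agent(5, 3)` stops immediately with `needs_help`, mirroring the two exit conditions described above. The `max_steps` cap is a design choice added here so a bad plan cannot loop forever.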

An agent without tools is just a thinker. The tool integration layer (including protocols like the Model Context Protocol, or MCP, which connects agents to systems like Jira, Slack, and secure terminals) is what makes an agent a doer.
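A tool layer can be pictured as a registry that maps tool names to callable functions. The sketch below is a plain-Python illustration of that idea, not the MCP wire format or any SDK's API; the `tool` decorator, `dispatch` function, and both example tools are hypothetical and return canned data instead of calling real services.

```python
from typing import Any, Callable, Dict

# Registry mapping a tool name to the function that implements it.
TOOL_REGISTRY: Dict[str, Callable[..., Any]] = {}


def tool(fn: Callable[..., Any]) -> Callable[..., Any]:
    """Register a function as a tool under its own name."""
    TOOL_REGISTRY[fn.__name__] = fn
    return fn


@tool
def create_ticket(title: str, priority: str = "medium") -> dict:
    # Hypothetical: a real tool would call an issue tracker's API here.
    return {"id": 1, "title": title, "priority": priority}


@tool
def post_message(channel: str, text: str) -> str:
    # Hypothetical: a real tool would call a chat service's API here.
    return f"[{channel}] {text}"


def dispatch(call: dict) -> Any:
    """Execute a tool call of the form {"name": ..., "arguments": {...}}."""
    name = call["name"]
    if name not in TOOL_REGISTRY:
        raise KeyError(f"unknown tool: {name}")
    return TOOL_REGISTRY[name](**call.get("arguments", {}))
```

The model only has to emit a name and a dictionary of arguments; the registry decides what actually runs, which is also the natural place to enforce which tools an agent is allowed to use.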

The 5 Types of AI Agents (and Which Ones Actually Matter for Business)

Not all AI agents are built the same. The foundational taxonomy from Russell and Norvig’s textbook, Artificial Intelligence: A Modern Approach, remains the clearest framework for categorizing them by decision-making logic and capability.

| Agent Class | State Awareness | Logic | Primary Use Case |
|---|---|---|---|
| Simple Reflex | Stateless | Predefined IF-THEN | Basic automation (RPA) |
| Model-Based | Context-aware | Internal world model | Conversational support |
| Goal-Based | Purpose-driven | Search / Planning | Logistics / Scheduling |
| Utility-Based | Optimization-driven | Maximize expected utility | Financial / Resource allocation |
| Learning | Evolution-driven | Feedback loops | R&D / Self-optimizing systems |

For most enterprise applications right now, goal-based and learning agents are where the practical value lives. Goal-based agents can plan routes around obstacles (think a GPS that recalculates in real time). Learning agents improve through feedback, the same principle behind reinforcement learning from human feedback (RLHF), which is used to fine-tune modern LLMs like GPT-4.
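The difference in decision logic between the classes is easier to see side by side. The following sketch contrasts a simple reflex agent with a utility-based one; the thermostat thresholds, option names, and payoffs are made up for illustration.

```python
from typing import Dict, List, Tuple


def reflex_agent(temperature_c: float) -> str:
    """Simple reflex: stateless IF-THEN rules on the current reading."""
    if temperature_c > 25:
        return "cool"
    if temperature_c < 18:
        return "heat"
    return "idle"


def expected_utility(outcomes: List[Tuple[float, float]]) -> float:
    """Sum of probability * payoff over an option's possible outcomes."""
    return sum(probability * payoff for probability, payoff in outcomes)


def utility_agent(options: Dict[str, List[Tuple[float, float]]]) -> str:
    """Utility-based: choose the option with the highest expected utility."""
    return max(options, key=lambda name: expected_utility(options[name]))
```

With `{"safe": [(1.0, 5.0)], "risky": [(0.5, 12.0), (0.5, 0.0)]}` the utility agent picks `"risky"`, because its expected payoff of 6.0 beats the certain 5.0. The reflex agent never weighs alternatives at all; it just maps the current input to a fixed response.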

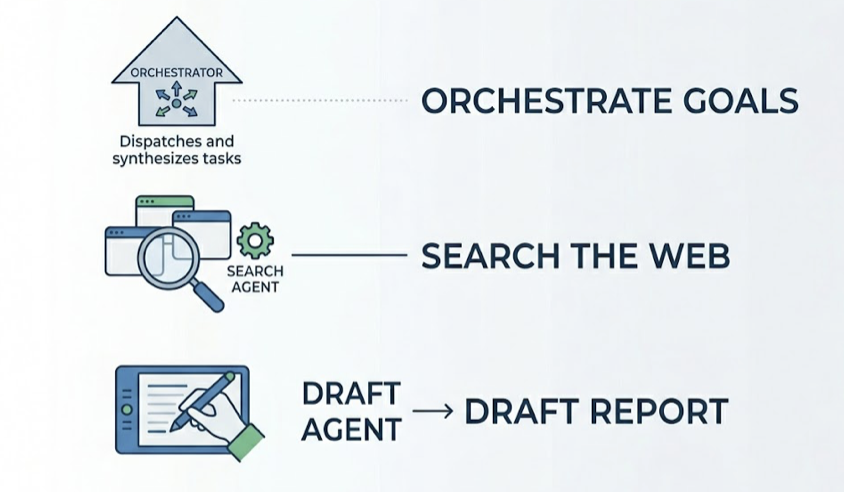

Multi-agent systems deserve special mention. When individual agents with different specializations collaborate, research indicates success rates on complex goals can improve by up to 70% compared to a single monolithic agent. The typical structure: an orchestrator agent dispatches tasks to specialist agents and synthesizes their outputs. One agent searches the web, one drafts a report, one formats and sends it. Each does one thing well.
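The orchestrator pattern described above can be sketched in a few lines of Python. This is a framework-free illustration, not the API of any orchestration library: the three specialist functions are hypothetical placeholders that return strings where real agents would call a search tool, an LLM, and a delivery channel.

```python
from typing import Callable, Dict, List, Tuple


def search_agent(topic: str) -> str:
    # Placeholder for an agent that searches the web.
    return f"notes on {topic}"


def writer_agent(notes: str) -> str:
    # Placeholder for an agent that drafts a report from the notes.
    return f"Report: {notes}"


def formatter_agent(draft: str) -> str:
    # Placeholder for an agent that formats the draft for sending.
    return draft.upper()


# The orchestrator's dispatch plan: each specialist does one thing.
PIPELINE: List[Tuple[str, Callable[[str], str]]] = [
    ("search", search_agent),
    ("write", writer_agent),
    ("format", formatter_agent),
]


def orchestrate(goal: str) -> Dict[str, str]:
    """Pass the goal through each specialist and collect every output."""
    outputs: Dict[str, str] = {}
    payload = goal
    for name, specialist in PIPELINE:
        payload = specialist(payload)
        outputs[name] = payload
    return outputs
```

Keeping every intermediate output, and not only the final one, makes it easier to see which specialist introduced a problem when the result looks wrong.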

Three major frameworks have emerged for building these systems. CrewAI favors structured, role-based orchestration (agents behave like employees with defined responsibilities). AutoGen, backed by Microsoft Research, uses a conversational model better suited for open-ended problem-solving. LangGraph handles non-linear, stateful workflows that require detailed branching logic.

What AI Agents Can Actually Do Today: Real-World Examples by Industry

AI agents have moved past proof-of-concept. As of 2025, enterprises are reporting measurable ROI across functions.

Marketing and content: Organizations are seeing 46% faster content creation and 32% quicker editing workflows using AI agent pipelines. Beyond content speed, AI-driven lead qualification has been reported to run 60% faster, effectively doubling the volume of sales-ready leads.

Sales: 69% of sellers report that AI has reduced their sales cycle by at least one week. Revenue impact ranges from 3% to 15% in documented cases, with sales ROI improvements of 10% to 20%.

Customer service: Freshworks’ Freddy AI Agents deflected 53% of retail queries and cut average response times from 12 minutes to 12 seconds. Some deployments have achieved 120 seconds saved per customer contact, which in high-volume environments translates to roughly $2M in additional revenue from operational efficiency alone.

Software development: Cognition’s Devin 2.0 reportedly achieved a 67% PR merge rate in late 2025, fixing bugs and migrating codebases without constant human supervision. (PR merge rate is a production metric; it is not what the SWE-bench Verified leaderboard measures.) Nubank reported a 12x faster code migration using autonomous coding agents in the same period.

Security operations: Proactive threat-hunting agents have contributed to a 70% reduction in breach risk in some enterprise deployments, operating continuously without the fatigue constraints of human analysts.

These aren’t projections. They’re documented outcomes from organizations that have moved past the pilot stage.

Why Most AI Agents Still Can’t Work Completely Alone

Here’s the thing most vendor marketing glosses over: AI agents fail. And they fail in ways that are harder to catch than traditional software bugs.

Hallucinations are the primary risk. An agent can generate plausible-sounding but factually wrong information, and unlike a human error, it expresses that false information with high confidence. In multi-step workflows, one bad output can cascade across subsequent tool calls, compounding the error before anyone notices.
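A quick back-of-the-envelope calculation shows why cascading matters. The sketch below assumes, purely for illustration, that every step succeeds independently with the same probability; real agent steps are neither independent nor uniform, so treat the numbers as intuition only.

```python
def chain_success_probability(per_step: float, steps: int) -> float:
    """Probability that every step in a chain succeeds.

    Assumes independent steps with identical success probability,
    which is a simplification made for illustration.
    """
    if not 0.0 <= per_step <= 1.0:
        raise ValueError("per_step must be between 0 and 1")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    return per_step ** steps
```

Under that assumption, a workflow of 10 steps that are each 95% reliable finishes cleanly only about 60% of the time (`chain_success_probability(0.95, 10)` is roughly 0.599).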

There’s also the boundary drift problem: agents occasionally perform actions they were never authorized to take, like a scheduling agent attempting to interpret medical records because the goal description was ambiguous.

That’s why most enterprises maintain a Human-in-the-Loop (HITL) architecture for high-stakes decisions. Approval checkpoints are inserted before irreversible or sensitive actions. Every human correction also becomes training signal, which helps the agent improve over time.
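An approval checkpoint can be as simple as a gate in front of the tool call. Here is a minimal sketch, assuming a hypothetical set of sensitive action names and an `approve` callback that stands in for whatever review interface a team actually uses.

```python
from typing import Callable, List, Tuple

# Hypothetical set of actions treated as irreversible or sensitive.
SENSITIVE_ACTIONS = {"send_payment", "delete_records"}

# Every human decision is recorded so it can be reviewed or reused later.
decision_log: List[Tuple[str, bool]] = []


def execute_with_checkpoint(
    action: str,
    run: Callable[[], str],
    approve: Callable[[str], bool],
) -> str:
    """Run the action, pausing for human approval if it is sensitive."""
    if action in SENSITIVE_ACTIONS:
        approved = approve(action)
        decision_log.append((action, approved))
        if not approved:
            return f"blocked: {action}"
    return run()
```

Low-risk actions pass straight through, so the human is only interrupted where a mistake would be costly. The logged decisions are the raw material for the training signal mentioned above.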

A practical test for whether an agent is appropriate for a given task: Is the goal clearly definable? Can the result be objectively verified? Is the cost of failure recoverable? If the answer to any of these is “no,” human oversight is not optional.
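The three questions translate directly into a small check that can sit in front of any deployment decision. The `TaskProfile` type and its field names below are hypothetical, chosen only to mirror the wording of the test.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TaskProfile:
    goal_clearly_definable: bool
    result_objectively_verifiable: bool
    failure_recoverable: bool


def requires_human_oversight(task: TaskProfile) -> bool:
    """Oversight is required if the answer to any question is 'no'."""
    return not (
        task.goal_clearly_definable
        and task.result_objectively_verifiable
        and task.failure_recoverable
    )
```

A task such as reformatting a spreadsheet passes all three checks, while issuing a refund fails the recoverability check and therefore needs a human in the loop.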

Autonomy is a spectrum, not a switch. The most effective enterprise deployments treat it that way.

The Part Most Businesses Miss: AI Agents Are Also How Customers Find You

Everything above covers how AI agents work inside your organization. But there’s an equally important shift happening outside it.

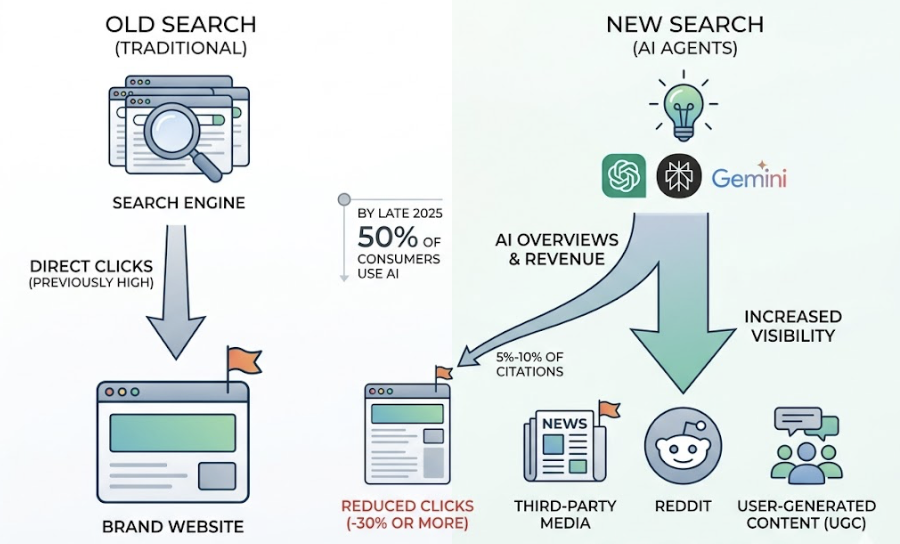

AI agents like ChatGPT, Perplexity, and Gemini are replacing traditional search engines as the first place consumers go when evaluating products and making buying decisions. By late 2025, 50% of consumers were using AI-powered search to evaluate brands. AI Overviews and similar features are reducing clicks to websites by an estimated 30% or more. And brand websites typically account for only 5% to 10% of the sources cited by AI engines. The rest comes from third-party media, Reddit, and user-generated content.

This is the “zero-click” reality. Your customer might never visit your website. They’ll ask an AI agent, get an answer, and act on it.

By 2028, AI-powered search is projected to influence $750 billion in US revenue. Brands that don’t show up in AI answers won’t just lose visibility. They’ll lose revenue to whichever competitor does.

How Topify Helps Your Brand Get Found by AI Agents

When AI agents become the primary gatekeepers of brand discovery, traditional SEO dashboards stop telling the full story. Ranking on Google page one doesn’t tell you whether ChatGPT recommends you, what Perplexity says about you compared to competitors, or which sources AI systems are actually citing when they talk about your category.

Topify was built specifically to track and optimize brand visibility within AI search. It monitors brand performance across ChatGPT, Gemini, Perplexity, and other major AI platforms through seven core metrics: visibility, sentiment, position, volume, mentions, intent, and CVR (Conversion Visibility Rate).

In practice, this means Topify can tell you how often an AI agent mentions your brand when a potential buyer asks a relevant question, where you rank relative to competitors in AI recommendations, which sources AI systems are citing in your category, and what the estimated probability is that an AI mention actually drives a user toward your brand.
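To give a feel for the simplest of these measurements, here is a rough sketch of a mention rate: the share of AI answers that name a brand at all. This is an illustrative assumption, not Topify's actual methodology, and the brand names in the usage note are invented.

```python
from typing import List


def mention_rate(answers: List[str], brand: str) -> float:
    """Fraction of answers that mention the brand (case-insensitive)."""
    if not answers:
        return 0.0
    needle = brand.lower()
    hits = sum(1 for answer in answers if needle in answer.lower())
    return hits / len(answers)
```

For the sample answers `["Try Acme or Globex", "Globex is popular", "acme leads"]`, the brand "Acme" appears in two of three, giving a mention rate of about 0.67. A production system would also need to handle aliases, misspellings, and brand names that are common words, none of which plain substring matching covers.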

| Visibility Metric | Traditional SEO | Modern GEO (Topify) |
|---|---|---|
| Discovery Channel | Google Search Console | Multi-engine agent tracking |
| Success Indicator | Rank position (1-10) | Prominence / mention score |
| Source of Truth | Website backlinks | LLM citation / co-mention logic |
| Search Intent | Keyword-based | Dialogue-based / buying-intent queries |
| Primary Goal | Clicks to website | Mention rate in AI summaries |

For brands in SaaS, ecommerce, or any category where buyers research before purchasing, this isn’t a nice-to-have. It’s the next version of search visibility.

Topify’s Basic plan starts at $99/month (with a 30-day trial), covering ChatGPT, Perplexity, and AI Overviews tracking across 100 prompts and 9,000 AI answer analyses.

Conclusion

An AI agent isn’t a smarter chatbot. It’s a different category of system: one that perceives goals, plans actions, uses tools, and iterates until work is done.

The practical value is already measurable. Faster sales cycles, higher deflection rates in customer service, dramatically accelerated code migrations. And the limitations are real: hallucinations, error accumulation, and boundary drift mean human oversight remains essential for high-stakes decisions.

But the shift that many businesses are underestimating isn’t internal. It’s external. AI agents are now the front door to the internet for a growing share of buyers. Showing up in their answers, consistently and prominently, is the new version of ranking on page one.

FAQ

What is the difference between an AI Agent and a chatbot?

A chatbot is reactive: it receives a prompt and produces a response. An AI agent is proactive and goal-driven. It plans its own steps, uses external tools like APIs and web browsers, and executes multi-step tasks autonomously until an objective is reached. The output of a chatbot is text. The output of an AI agent is a completed action.

How do AI Agents make decisions autonomously?

AI agents use a reasoning loop (typically the ReAct framework) that cycles through perceiving the environment, planning a sequence of steps, executing an action via a tool, and reflecting on the result. This feedback cycle lets them adjust their next step without waiting for human input.

What tasks can AI Agents automate?

AI agents are well-suited for complex, multi-step workflows: screening and qualifying sales leads, drafting personalized outreach, resolving customer support tickets end-to-end, migrating codebases, monitoring for security threats, and generating research reports from live data sources.

Can AI Agents work without human supervision?

Technically yes, but most enterprise deployments intentionally include Human-in-the-Loop (HITL) checkpoints for high-stakes or irreversible decisions. Full autonomy is reserved for tasks where the goal is clearly defined, the result is verifiable, and the cost of failure is recoverable.

What are the limitations of current AI Agents?

The main limitations are hallucinations (confident but false outputs), boundary drift (taking actions outside the authorized scope), state drift (losing context across long tasks), and error propagation across multi-step tool calls. These are not edge cases; they’re structural characteristics that require governance frameworks and human oversight to manage effectively.

How do multi-agent systems work together?

Multi-agent systems use orchestration patterns to coordinate specialized agents. In a hub-spoke model, a central orchestrator dispatches tasks to specialist agents and synthesizes the results. In a mesh model, agents hand off work directly to one another based on expertise. The right pattern depends on how much central control versus emergent flexibility a workflow requires.

How is an AI Agent different from a copilot?

A copilot assists a human doing the work. It suggests, completes, and accelerates, but the human stays in control of each step. An AI agent takes ownership of the entire task. The human defines the goal; the agent handles execution. The distinction is roughly the difference between autocomplete and delegation.