Your brand might be cited by ChatGPT dozens of times today. You probably don’t know about any of it.

That’s not a hypothetical. As AI platforms like ChatGPT, Perplexity, Gemini, and Google AI Overviews increasingly synthesize the web for users, they pull from brand content, product pages, and thought leadership pieces, often without sending a single referral ping to your analytics. Traditional tracking infrastructure, built on cookies and referrers, can’t see most of this. The result is a growing layer of “dark visibility” that most marketing teams are measuring with tools built for a completely different era.

AI citation trackers exist to close that gap. But the market has matured fast in 2026, and not every tool is built the same way.

We ran hundreds of prompts across ChatGPT, Perplexity, Gemini, and Google AI Overviews to evaluate which platforms actually tell you when AI is citing your brand, and which ones are just estimating.

Last Year’s SEO Dashboard Won’t Show You This

Here’s the thing most teams don’t realize until it’s too late: AI search doesn’t work like Google’s blue links. When a user asks Perplexity for the best SaaS project management tool, the platform doesn’t list ten options. It picks two or three, explains why, and sometimes links directly to the ones it trusts most.

That link is a citation. And AI-referred visitors who arrive through a citation convert at rates up to 6x higher than standard organic traffic, because they’ve already received a vetted recommendation from a system they trust.

The tricky part? AI responses vary by up to 70% for the same prompt across different sessions. You can’t just check once and call it done.
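One way to turn repeated checks into a usable number is to run the same prompt many times and report the share of answers that include your brand, together with a confidence interval. The sketch below is a minimal illustration under stated assumptions: you already have one True/False result per sampled response, the function name `visibility_with_interval` is hypothetical, and the Wilson score interval is a standard statistics choice, not a method any tool in this article is known to use.

```python
import math


def visibility_with_interval(appearances: list[bool], z: float = 1.96) -> tuple[float, float, float]:
    """Return (rate, low, high): share of sampled responses naming the brand,
    plus a Wilson score interval (z=1.96 is roughly 95% confidence)."""
    n = len(appearances)
    if n == 0:
        raise ValueError("need at least one sampled response")
    p = sum(appearances) / n
    # Wilson interval: stays sensible for small n, unlike p +/- z*sqrt(p(1-p)/n)
    denom = 1 + z**2 / n
    centre = (p + z**2 / (2 * n)) / denom
    margin = (z / denom) * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2))
    return p, max(0.0, centre - margin), min(1.0, centre + margin)


# Hypothetical data: the same prompt run 10 times vs 1,000 times, same 30% hit rate
small = [True] * 3 + [False] * 7
large = [True] * 300 + [False] * 700
for label, sample in (("10 runs", small), ("1,000 runs", large)):
    rate, low, high = visibility_with_interval(sample)
    print(f"{label}: {rate:.0%} (95% CI {low:.0%} to {high:.0%})")
```

With a 30% hit rate, 10 runs leave an interval of roughly 11% to 60%, while 1,000 runs narrow it to roughly 27% to 33%. That spread is the practical reason a one-off check can’t be trusted.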

Citation vs. Mention: Why Only One of These Drives Revenue

Before evaluating any tool, it’s worth getting this distinction right.

A mention is when an AI names your brand in a response but doesn’t link to your site. A citation is a formal attribution: a clickable link, a footnote, or a reference card that sends actual traffic your way.

This matters because mentions and citations require completely different optimization strategies.

| Feature | Brand Mention | AI Citation |
|---|---|---|
| Visual format | Text-only name in the response | Clickable link, footnote, or reference card |
| Traffic impact | Minimal, awareness only | Significant, drives high-intent referral traffic |
| Optimization signal | Brand exists in AI training data | Content is structured for RAG retrieval |
| Primary goal | Share of Voice | Attribution and lead generation |

A high mention rate with low citations is a diagnostic signal: your brand has recognition, but your content isn’t structured authoritatively enough for the AI to treat it as a primary source. The fix is different from what most SEO tools recommend.
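To measure that split yourself, each sampled response has to be labeled as a citation, a mention, or neither. A minimal sketch, assuming each response is available as its answer text plus a list of cited URLs (collecting those is platform-specific and not shown). Here a brand counts as "cited" if any cited URL is on its domain and "mentioned" if its name appears in the text without such a link. `classify_response`, the brands, and the sample data are hypothetical, and plain substring matching will over-count brand names that appear inside other words.

```python
from urllib.parse import urlparse


def classify_response(text: str, cited_urls: list[str], brand: str, domain: str) -> str:
    """Label one AI response for a brand: 'citation', 'mention', or 'absent'."""
    domain = domain.lower()

    def on_brand_site(url: str) -> bool:
        host = (urlparse(url).hostname or "").lower()
        # Treat subdomains such as www.acme.com or blog.acme.com as the brand's site
        return host == domain or host.endswith("." + domain)

    if any(on_brand_site(u) for u in cited_urls):
        return "citation"  # a link, footnote, or source card points at your site
    if brand.lower() in text.lower():
        return "mention"   # named in the text, but no link to your site
    return "absent"


def mention_and_citation_rates(responses, brand, domain):
    """Return (mention-only rate, citation rate) over (text, cited_urls) pairs."""
    labels = [classify_response(text, urls, brand, domain) for text, urls in responses]
    n = len(labels)
    return labels.count("mention") / n, labels.count("citation") / n


# Hypothetical sampled responses: (answer text, cited URLs)
responses = [
    ("Acme and Globex are popular choices.", []),
    ("Acme is a strong option.", ["https://www.acme.com/pricing"]),
    ("Globex leads this category.", ["https://globex.io/"]),
    ("Many teams pick Acme for reporting.", ["https://www.reddit.com/r/saas"]),
]
mention_rate, citation_rate = mention_and_citation_rates(responses, "Acme", "acme.com")
print(f"mentions without a link: {mention_rate:.0%}, citations: {citation_rate:.0%}")
# mentions without a link: 50%, citations: 25%
```

A mention-only rate running well above the citation rate, as in this toy sample, is the pattern described above: recognition without source status.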

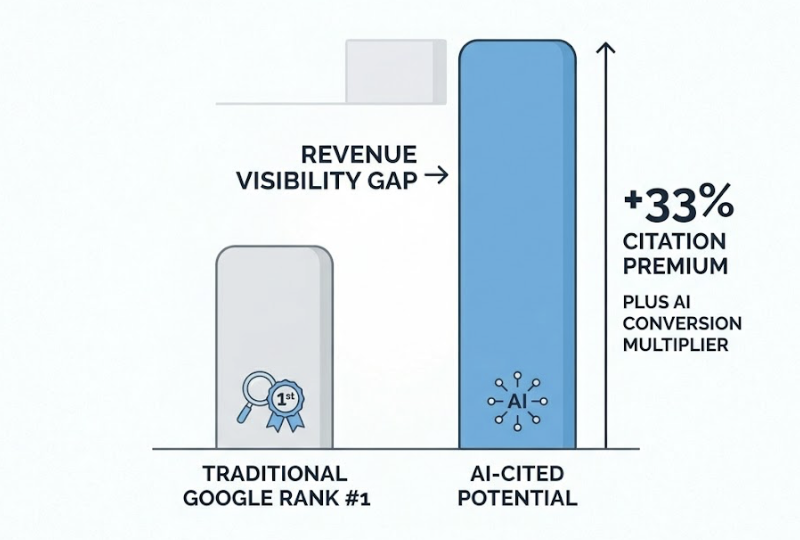

The gap between your current performance and your AI-cited potential is what researchers call the Revenue Visibility Gap. If you rank number one on Google for a query but aren’t cited in the AI Overview for that same query, you’re missing a predicted 33% citation premium, plus the AI conversion multiplier that comes with it.

The 5 Things That Actually Separate Reliable AI Citation Trackers

Not all tools measure citation data the same way. Here’s what separates the useful ones from the ones that generate a lot of charts without driving decisions.

Platform coverage. There’s only a 13.7% overlap between the citations provided by Google AI Overviews and Google’s AI Mode for the same queries. If two of Google’s own AI surfaces agree that rarely, a tool that only monitors ChatGPT is giving you a fraction of the picture. You need coverage across ChatGPT, Perplexity, Gemini, AI Overviews, and regional models to identify platform-specific gaps.

Prompt volume and refresh rate. Because AI responses are non-deterministic, a single check is a snapshot, not a signal. Reliable tools support prompt matrices of 50 to 150 queries that mirror actual buyer journeys. Perplexity’s content retrieval logic refreshes in hours, not weeks. A tool checking citations once a month is perpetually behind.

Source-level granularity. Knowing your brand appears in 20% of responses is a start. Knowing which external domains the AI cited instead of your site is actionable. The best tools map the exact URLs pulling weight in generated answers, including third-party review sites, Reddit threads, and industry publications.

Actionability of output. A report that says “your visibility dropped 8%” isn’t useful without a path to correction. Top-tier tools pair citation data with content recommendations: information density audits, Schema markup gaps, and specific pages the AI is skipping over.

Pricing vs. data depth. Budget matters, but cheap tools that check a handful of prompts across one platform will miss more than they catch. The right floor for a professional baseline is typically in the $99 to $199 per month range, depending on team size and prompt volume.

AI Citation Tracking Tools in 2026, Ranked

Here’s a quick overview of the main platforms, followed by a deeper look at the ones worth your time.

| Tool | Platforms Covered | Citation Depth | Starting Price | Best For |
|---|---|---|---|---|
| Topify | ChatGPT, Gemini, Perplexity, AI Overviews, DeepSeek, and more | High: 7-dimensional analysis + CVR | ~$37/mo | Full-spectrum visibility and GEO execution |
| Otterly AI | ChatGPT, Gemini, Perplexity, AI Overviews, Claude, Copilot | Medium: GEO Audit + Share of Voice | $29/mo | SMBs and agencies |
| Profound | 10+ platforms including ChatGPT, Claude, Perplexity | High: server log validation | $99/mo | Enterprise-grade monitoring |
| Airefs | ChatGPT, Perplexity, regional LLMs | High: source mapping | $24/mo | Lean teams |
| AthenaHQ | 8 AI platforms | Medium: recommendation focus | $295/mo | Action-oriented teams |

Topify: The Platform That Connects Citations to Revenue

Topify stands out in 2026 because it’s one of the few platforms built around a question most tools don’t ask: not just “is your brand being cited?” but “what is that citation actually worth?”

Its Source Analysis feature maps the exact domains and URLs the AI platforms pull from when generating answers. You don’t just see that a competitor ranked above you. You see which third-party sources the AI used to justify that ranking, and whether those sources include review sites you’ve ignored, Reddit threads you’re not participating in, or technical pages with higher factual density than yours.

That source-level transparency feeds directly into an action plan. Topify’s Action Center lets teams deploy fixes, from clarifying entity signals to updating structured data, without switching between tools. The analysis drives the execution from a single dashboard.

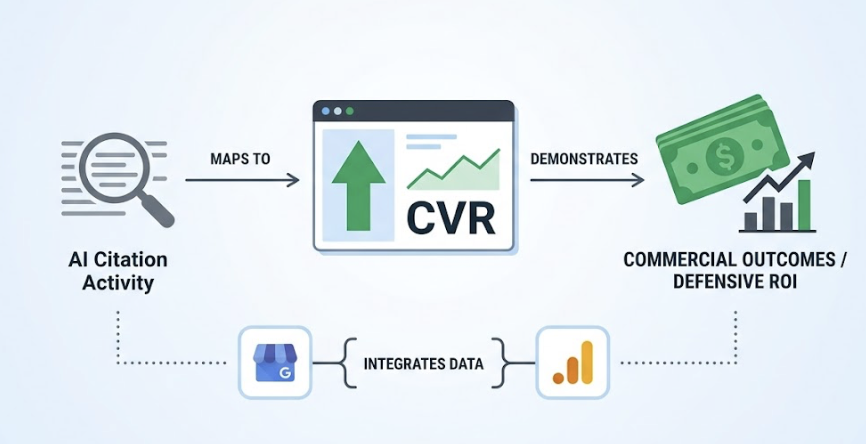

What makes Topify’s approach genuinely different is its Conversion Visibility Rate (CVR) metric. CVR maps AI citation activity to actual commercial outcomes by integrating first-party data from Google Search Console and GA4. This makes the ROI of citation tracking defensible to leadership, not just to the marketing team. Research shows AI-referred visitors deliver a 4.4x higher conversion value than standard organic traffic, and CVR makes that premium visible at the brand level.

Topify also tracks across a wider engine set than most competitors, including ChatGPT, Gemini, Perplexity, AI Overviews, and DeepSeek, with full seven-dimensional reporting across all of them. Plans start at approximately $37 per month for a base tier, scaling to $199 per month for growth teams that need expanded prompt volume and multi-project tracking.

If your team needs to prove that AI visibility investments translate to pipeline, Topify is where that case gets built.

Otterly AI: Accessible for SMBs and Agencies

Otterly AI is a practical choice for smaller teams that need broad platform coverage without enterprise-level complexity. Its GEO Audit scores content against 25-plus citation-readiness factors, and its AI Visibility Score provides a consolidated view of mention rate and citation frequency over time. Gartner has recognized it as a Cool Vendor in the space. At $29 per month, it’s one of the most accessible entry points for teams starting to measure AI visibility systematically.

Profound: Built for Enterprise Validation

Profound’s standout feature is its Agent Analytics, which uses server log data to provide direct evidence of AI crawler activity. You can see how bots from OpenAI or Anthropic crawl and parse your site’s technical structure. It monitors share of voice and sentiment across 10-plus platforms and is the right tool for global brands with complex competitive landscapes and strict data governance requirements. Plans start at $99 per month.
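Profound’s implementation isn’t described here, but the underlying idea of finding AI crawlers in your own server logs can be approximated with a short script. A minimal sketch, assuming access logs in the Apache/Nginx "combined" format, where the user agent is the last quoted field. The user-agent tokens below are ones OpenAI, Anthropic, and Perplexity have published, but the list changes over time and user agents can be spoofed, so treat the counts as indicative and check each vendor’s current documentation and IP ranges. The sample log lines are hypothetical. Note that crawler visits show what AI systems read, not whether they cite you.

```python
import re
from collections import Counter

# User-agent substrings published by AI vendors (an assumption: verify against
# current vendor docs, since this list goes stale).
AI_CRAWLERS = {
    "GPTBot": "OpenAI",
    "OAI-SearchBot": "OpenAI",
    "ChatGPT-User": "OpenAI",
    "ClaudeBot": "Anthropic",
    "PerplexityBot": "Perplexity",
    "Perplexity-User": "Perplexity",
}

# Combined log format: ... "METHOD /path PROTO" status bytes "referer" "user-agent"
LINE_RE = re.compile(r'"[A-Z]+ (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<ua>[^"]*)"')


def count_ai_crawler_hits(lines) -> Counter:
    """Count requests per (crawler token, path) for known AI user agents."""
    hits = Counter()
    for line in lines:
        m = LINE_RE.search(line)
        if not m:
            continue  # skip lines that aren't in combined format
        for token in AI_CRAWLERS:
            if token in m.group("ua"):
                hits[(token, m.group("path"))] += 1
                break
    return hits


sample_log = [
    '203.0.113.5 - - [10/Mar/2026:12:00:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" '
    '"Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko); compatible; GPTBot/1.1; +https://openai.com/gptbot"',
    '198.51.100.7 - - [10/Mar/2026:12:01:00 +0000] "GET /blog/guide HTTP/1.1" 200 8042 "-" '
    '"Mozilla/5.0 (compatible; ClaudeBot/1.0; +claudebot@anthropic.com)"',
    '192.0.2.9 - - [10/Mar/2026:12:02:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" '
    '"Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
]
for (token, path), n in count_ai_crawler_hits(sample_log).most_common():
    print(f"{AI_CRAWLERS[token]:<10} {token:<14} {path} x{n}")
```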

Airefs: Source Mapping for Lean Teams

For startups and solo founders, Airefs offers solid source-level transparency at $24 per month. It’s built around reverse-engineering which external domains are driving citations for your category, and its regional LLM coverage makes it useful for brands in markets where ChatGPT isn’t the primary AI platform.

What Most Citation Reports Don’t Tell You

Here’s something 90% of basic tools miss entirely: the why behind a citation.

Knowing your brand appeared in 22% of sampled prompts doesn’t explain what earned those appearances. LLMs don’t just search the web. They look for third-party validation to minimize hallucination risk, a process researchers call the Consensus Mechanism. That means the AI isn’t just reading your site. It’s reading everything that references your site, your category, and your competitors.

The data on this is striking. About 85% of non-paid AI citations come from earned media and third-party validation, not brand-owned content. Wikipedia alone accounts for roughly 27% of all citations across major AI platforms. Reddit threads, industry review sites, and G2 category pages often outrank well-resourced brand pages in AI retrieval because they carry more third-party consensus signals.

Reverse-engineering citations means identifying which specific external pages influenced the AI’s decision to cite your brand, or to cite a competitor instead of you. That’s the analysis that turns citation tracking from a reporting exercise into a content acquisition strategy.
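A first pass at this analysis is a tally: across the sampled responses that did not cite you, which domains did the AI cite instead? A minimal sketch, assuming you have already collected each response’s cited URLs. The function names and sample URLs are hypothetical. Stripping "www." is a simplification; a production tool would use the Public Suffix List. A frequency count also shows correlation only, not which page actually swayed the model.

```python
from collections import Counter
from urllib.parse import urlparse


def source_host(url: str) -> str:
    """Lower-cased hostname with a leading 'www.' removed (simplified)."""
    host = (urlparse(url).hostname or "").lower()
    return host[4:] if host.startswith("www.") else host


def competitor_source_domains(responses: list[list[str]], own_domain: str) -> Counter:
    """Count cited domains across responses that did NOT cite own_domain."""
    counts = Counter()
    for cited_urls in responses:
        hosts = {source_host(u) for u in cited_urls}
        if any(h == own_domain or h.endswith("." + own_domain) for h in hosts):
            continue  # you were cited in this response; skip it
        counts.update(hosts)  # a set, so each domain counts once per response
    return counts


# Hypothetical cited URLs from three sampled answers
sampled = [
    ["https://en.wikipedia.org/wiki/Project_management", "https://www.g2.com/categories/project-management"],
    ["https://www.reddit.com/r/projectmanagement/", "https://www.g2.com/categories/project-management"],
    ["https://www.acme.com/features", "https://en.wikipedia.org/wiki/Kanban"],
]
for domain, n in competitor_source_domains(sampled, "acme.com").most_common():
    print(f"{domain}: cited in {n} responses that skipped you")
```

Domains that keep appearing in this tally, such as review sites or community threads, are the candidates for earned-media and content work.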

Citation Data Is Only Half the Picture

A high citation rate doesn’t automatically mean things are going well.

Brands can appear frequently in AI responses and still take reputation damage if the tone of those responses is consistently negative. “Brand X is a powerful tool but has poor customer support” is a citation. It’s not a win.

This is why multi-dimensional tracking matters. Topify has popularized a seven-metric framework that’s becoming the industry standard for GEO reporting in 2026.

Visibility Rate: The percentage of target prompts where the brand appears. Industry leaders typically target 30% or above.

Sentiment Score: A 0-100 scale analyzing the tone the AI uses when referencing your brand. High visibility with low sentiment is a reputation risk.

Position Rank: The first brand mentioned in an AI response is framed as the primary authority. Later mentions are secondary alternatives. Position matters.

Volume Density: The number of prompt analyses backing the data. AI non-determinism means statistical confidence requires thousands of samples.

Mention Frequency: Raw reference count, including text-only mentions, which measures brand salience even without link attribution.

Intent Alignment: Whether the brand is being cited at the right stage of the funnel. “How-to” citations are less valuable than “best solution” citations for transactional queries.

CVR (Conversion Visibility Rate): The measured impact of citation activity on lead generation and pipeline.

Together, these seven dimensions let a team diagnose whether they’re in the Reputation Risk quadrant (high visibility, low sentiment) or the Distribution Problem quadrant (high sentiment, low visibility), and build a targeted response accordingly.
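Several of these dimensions can be computed directly from sampled answers, and the quadrant check then becomes a pair of thresholds. A minimal sketch under stated assumptions: each sampled answer records the brands it names in order, and a 0-100 sentiment score for your brand comes from a separate scorer (not shown). The 30% visibility target comes from the framework above. The sentiment floor of 50 is a hypothetical threshold, and so are the two quadrant labels the framework doesn’t name. This is not Topify’s implementation.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Optional


@dataclass
class SampledAnswer:
    brands_in_order: list[str]          # brands in the order the answer names them
    sentiment: Optional[float] = None   # 0-100 tone score for your brand (from a separate scorer)


def summarize(answers: list[SampledAnswer], brand: str,
              visibility_target: float = 0.30, sentiment_floor: float = 50.0) -> dict:
    """Compute a few of the seven metrics and place the brand in a quadrant."""
    if not answers:
        raise ValueError("no sampled answers")
    present = [a for a in answers if brand in a.brands_in_order]
    visibility = len(present) / len(answers)                            # Visibility Rate
    positions = [a.brands_in_order.index(brand) + 1 for a in present]  # 1 = named first
    scores = [a.sentiment for a in present if a.sentiment is not None]
    sentiment = mean(scores) if scores else None

    if sentiment is None:
        quadrant = "Not enough sentiment data"
    elif visibility >= visibility_target and sentiment < sentiment_floor:
        quadrant = "Reputation Risk"
    elif visibility < visibility_target and sentiment >= sentiment_floor:
        quadrant = "Distribution Problem"
    elif visibility >= visibility_target:
        quadrant = "High visibility, high sentiment"
    else:
        quadrant = "Low visibility, low sentiment"

    return {
        "volume": len(answers),                                   # Volume Density
        "visibility_rate": visibility,
        "avg_position": mean(positions) if positions else None,  # Position Rank
        "sentiment": sentiment,                                   # Sentiment Score
        "quadrant": quadrant,
    }


# Hypothetical sample: the brand shows up often but is described unfavorably
answers = [
    SampledAnswer(["Globex", "Acme"], sentiment=35),
    SampledAnswer(["Acme", "Initech"], sentiment=40),
    SampledAnswer(["Globex", "Initech"]),
    SampledAnswer(["Acme"], sentiment=30),
]
print(summarize(answers, "Acme"))
```

In practice the volume would be in the thousands rather than four, for the statistical reasons given under Volume Density.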

How to Pick the Right AI Citation Tracker for Your Team

The right tool depends on your team’s size, technical maturity, and how directly you need to tie citation activity to revenue.

| Team Type | Recommended Tool | Core Reasoning |
|---|---|---|
| Startups and solo founders | Airefs | Low barrier to entry at $24/mo, solid source tracking for prompt testing |
| SMBs and boutique agencies | Otterly AI | Comprehensive GEO Audit and automated reporting at an accessible price |
| Growth-stage tech teams | Topify | GSC/GA4 integration and CVR make it the right tool for proving ROI and executing changes fast |
| Global enterprises | Profound | Server log validation and multi-market governance for complex organizations |

One more consideration: the cost of not tracking. For an enterprise team, manual AI response auditing costs roughly $14,200 per employee per year once you factor in the hours spent checking platforms, logging results, and maintaining prompt libraries. That’s about $1,183 a month, so a platform like Topify at $199 per month pays for itself within the first month if it takes over even a sixth of that manual work.
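The break-even math is easy to rerun with your own cost and pricing figures. A minimal sketch: the usage plugs in the article’s $14,200-per-year and $199-per-month numbers. Expressing break-even as the share of manual work a tool must replace is a framing added here, and it assumes saved auditing time is the tool’s only benefit. `break_even_share` is a hypothetical name.

```python
def break_even_share(manual_cost_per_year: float, tool_cost_per_month: float) -> float:
    """Fraction of one person's manual auditing cost a tool must replace to cover itself."""
    manual_cost_per_month = manual_cost_per_year / 12
    return tool_cost_per_month / manual_cost_per_month


manual_per_year = 14_200  # article's estimate per enterprise employee
tool_per_month = 199      # article's Topify growth-tier price
print(f"Manual auditing: ${manual_per_year / 12:,.0f} per month")
print(f"Tool: ${tool_per_month * 12:,} per year")
print(f"Break-even share of manual work: {break_even_share(manual_per_year, tool_per_month):.0%}")
```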

Conclusion

In 2026, the goal isn’t to be the first search result. It’s to be the trusted node that the AI uses to build its answer. Citation tracking is the infrastructure that tells you whether you’re there yet.

The right tool for most growth-stage teams is Topify: it’s the only platform in the current market that combines source-level citation transparency, multi-engine coverage, and direct revenue attribution through CVR. It converts citation data into action, and action into measurable pipeline.

For teams at earlier stages, Airefs or Otterly AI provide a strong starting point. For global enterprises needing server-log-level validation, Profound scales to that complexity.

Pick the tool that matches where your team is today. But start tracking. The gap between your traditional SEO performance and your AI citation reality is almost certainly larger than you think.

FAQ

Can AI citation trackers work with Perplexity and Gemini, not just ChatGPT?

Yes. Modern platforms like Topify, Otterly AI, and Profound are built specifically for the fragmented engine landscape. They track visibility across Google AI Overviews, Gemini, Perplexity, Claude, and regional models. It’s worth noting that there’s only a 13.7% overlap between citations provided by Google AI Overviews and Google’s AI Mode for the same queries, so multi-engine tracking isn’t optional if you want an accurate picture.

How often should I check my AI citation data?

The refresh rate of your tool should align with the engines it monitors. Perplexity and Google AI Overviews update their retrieval logic frequently, so weekly tracking is the recommended standard for most teams. About 40 to 60% of citation sources rotate monthly, which means monthly reporting alone will consistently miss shifts in your competitive position.
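You can check how fast sources rotate in your own category by saving the set of cited source domains from each tracking run and comparing consecutive runs. A minimal sketch with two assumptions. First, "rotation" is defined here as the share of last period’s sources that dropped out, because the figure above doesn’t specify a definition. Second, the same set arithmetic gives a cross-engine overlap number, and Jaccard similarity is used below, but the source of the 13.7% figure may have measured overlap differently. The domains are hypothetical.

```python
def source_rotation(previous: set[str], current: set[str]) -> float:
    """Share of last period's cited sources that no longer appear this period."""
    if not previous:
        return 0.0
    return len(previous - current) / len(previous)


def jaccard_overlap(a: set[str], b: set[str]) -> float:
    """Overlap of two citation sets as |A & B| / |A | B| (Jaccard similarity)."""
    union = a | b
    return len(a & b) / len(union) if union else 1.0


# Hypothetical cited-domain sets from two monthly runs of the same prompt set
march = {"g2.com", "reddit.com", "en.wikipedia.org", "capterra.com", "acme.com"}
april = {"g2.com", "en.wikipedia.org", "techradar.com", "zapier.com", "acme.com"}
print(f"rotation: {source_rotation(march, april):.0%}")
print(f"overlap: {jaccard_overlap(march, april):.0%}")
```

Two of March’s five sources are gone by April here, a 40% rotation. Running weekly instead of monthly shows when a source drops out rather than only that it did.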

Is AI citation tracking different from traditional backlink monitoring?

Fundamentally, yes. Backlinks measure a static relationship between domains, the kind of signal Google’s traditional index is built on. AI citations measure real-time inclusion in generated responses. A site can have a strong backlink profile and zero AI citations if its content is poorly structured for LLM retrieval. The two metrics track different kinds of authority.

What’s the minimum budget to get reliable AI citation data?

Basic tracking can start at $10 to $24 per month with tools like Airefs, which works for startups testing a small prompt set. A professional baseline for a mid-sized team typically starts around $99 to $199 per month, providing the prompt volume and multi-engine coverage necessary for strategic decision-making.

How do I calculate the Revenue Visibility Gap for my brand?

Map your traditional SERP positions against your AI citation status. If you rank number one on Google for a query but aren’t cited in the AI Overview, you’re missing an estimated 33% citation probability for that position. The gap is the difference between your current organic revenue from that query and the potential revenue if you captured the AI Conversion Premium, which runs 4.4x to 6x the value of standard organic traffic.
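This answer boils down to a short calculation. The sketch below takes it literally under stated assumptions. For each query where you rank first on Google but aren’t cited in the AI Overview, potential revenue is current organic revenue multiplied by the 4.4x to 6x premium, and the gap is the difference. That applies the premium to the query’s entire current revenue, which is a strong simplification, since no traffic model is given. It also leaves out the 33% figure; if you read that as a probability, multiplying the gap by 0.33 would give an expected value instead. `QueryStatus` and the sample queries are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class QueryStatus:
    query: str
    google_rank: int
    cited_in_ai_overview: bool
    monthly_organic_revenue: float  # revenue currently attributed to this query (e.g. via GA4)


def revenue_visibility_gap(rows: list[QueryStatus], premium_low: float = 4.4,
                           premium_high: float = 6.0) -> dict[str, tuple[float, float]]:
    """Gap range for #1-ranked queries missing from the AI Overview.
    Literal reading: potential = revenue * premium; gap = potential - revenue."""
    gaps = {}
    for r in rows:
        if r.google_rank == 1 and not r.cited_in_ai_overview:
            gaps[r.query] = (r.monthly_organic_revenue * (premium_low - 1),
                             r.monthly_organic_revenue * (premium_high - 1))
    return gaps


rows = [
    QueryStatus("best project management tool", 1, False, 2_000),
    QueryStatus("kanban software for startups", 1, True, 1_500),
    QueryStatus("gantt chart template", 4, False, 800),
]
for query, (low, high) in revenue_visibility_gap(rows).items():
    print(f"{query}: ${low:,.0f} to ${high:,.0f} per month")
```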