Your Google Analytics dashboard looks fine. Traffic is stable. Rankings haven’t moved.

But somewhere right now, a potential customer is asking ChatGPT which tool to use in your category — and your brand isn’t in the answer.

That’s the gap most marketing teams still can’t see.

As AI search becomes the default way people discover products, the metric that matters is no longer “Did they click?” It’s “Did the AI mention us at all?” This guide breaks down what AI citation tracking is, how it works across ChatGPT, Perplexity, and Gemini, and what you can do about it starting this week.

You Can’t See AI Traffic in Google Analytics. That’s the Problem.

Between 65% and 69% of all Google searches end without a click to an external website. On mobile, that number climbs to nearly 77%.

This isn’t a traffic problem. It’s a measurement problem.

When an AI engine answers a query, it does the browsing on the user’s behalf. It visits your site, extracts relevant facts, and synthesizes an answer — all without generating a session in your analytics. You provided the data. You got zero credit in GA4.

The dangerous part: a brand can be the most-cited authority in ChatGPT responses and still see a declining traffic report internally. Marketing teams perceive a failure that isn’t actually there.

What makes this worth tracking anyway? Visitors who do click through from AI citations browse 12% more pages per visit and bounce 23% less than traditional search traffic. AI referrals convert at rates up to 9 times higher than the Google organic baseline. The volume is smaller. The intent is sharper.

What an AI Citation Actually Is (Hint: It’s Not a Backlink)

A backlink is a static hyperlink added by a human editor. An AI citation is a probabilistic outcome — the model decided, during synthesis, that your content was the most accurate and contextually relevant source for the answer.

The difference matters because the signals are completely different. Backlinks demonstrate popularity and social proof. AI citations demonstrate factual legitimacy. You can have thousands of backlinks and zero AI citations.

There are three ways a brand actually appears in a generative answer:

Direct Citations are clickable links in a “Sources” box or as footnotes. This is the only modality that shows up in GA4 as referral traffic.

Brand Mentions name the brand in the body of the answer without a link. This builds Share of Voice and entity authority but stays completely invisible to click-based analytics.

Recommended Rankings are the comparative lists AI produces — “Top 3 CRM tools for startups.” Where you land in that list drives user perception, even if your name isn’t linked.

Traditional tools like Ahrefs and Semrush can’t see any of this. They index crawlable URLs and backlinks. They can’t read the non-deterministic text an LLM generates inside a private chat session.

3 Questions an AI Citation Tracker Should Answer

Not all tracking tools are built the same. Before choosing one, make sure it’s designed to answer these three questions — in order.

Is your brand being mentioned at all?

Start here. Research indicates that 98.8% of local businesses and 26% of major brands are currently invisible in AI-generated recommendations for their primary categories. Being absent from an AI answer is functionally equivalent to being removed from the consideration set.

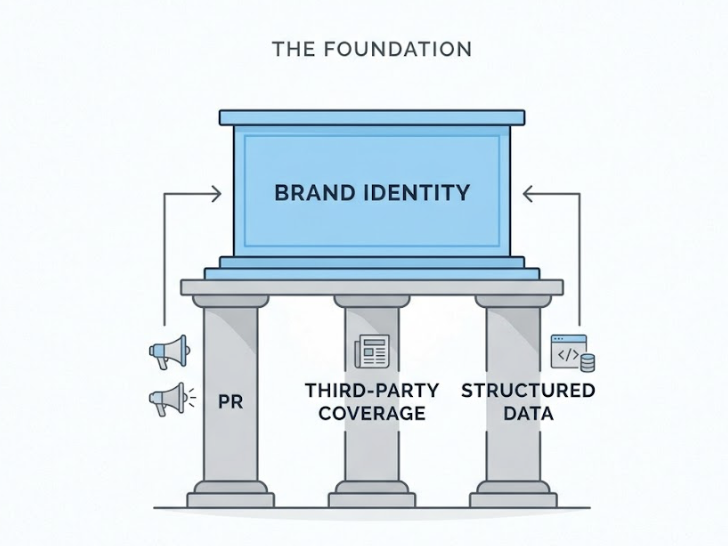

This first question measures entity clarity: does the model recognize your brand as a distinct, authoritative entity with defined attributes? If the answer is no, no amount of content optimization will fix it until you build multi-source corroboration through PR, third-party coverage, and structured data.

What sources is AI citing when it talks about your category?

Here’s where it gets counterintuitive. AI models often don’t cite your own website. Instead, they rely on a narrow set of domains they’ve determined to be authoritative.

For example, 88% of review-platform citations in AI Overviews go to just five sites: Gartner, G2, Capterra, Software Advice, and TrustRadius. That means if your brand isn’t covered on those platforms, you’re structurally absent from a huge portion of category queries — regardless of how good your own site is.

Understanding these retrieval patterns lets you reverse-engineer visibility by targeting the domains AI actually trusts.

How do you rank against competitors in AI answers?

The final layer is competitive. If AI mentions a competitor 80% of the time for purchase-intent queries and mentions you 20% of the time, you have a visibility deficit that no traditional dashboard will surface.

A solid tracker calculates Share of Voice across platforms and identifies Citation Gaps — specific prompts where competitors are recommended and you’re absent.
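One way to make these two metrics concrete is sketched below. It assumes you already have the raw answer text for each prompt, and it counts a brand as mentioned whenever its name appears as a case-insensitive substring, which is a deliberate simplification. The brand names, prompts, and function names are hypothetical.

```python
from collections import Counter


def share_of_voice(responses, brands):
    """Fraction of responses that mention each brand (case-insensitive substring match)."""
    counts = Counter()
    for text in responses:
        lowered = text.lower()
        for brand in brands:
            if brand.lower() in lowered:
                counts[brand] += 1
    total = len(responses)
    return {brand: (counts[brand] / total if total else 0.0) for brand in brands}


def citation_gaps(results_by_prompt, own_brand, competitors):
    """Prompts where at least one competitor is mentioned and own_brand never is."""
    gaps = []
    for prompt, responses in results_by_prompt.items():
        sov = share_of_voice(responses, [own_brand, *competitors])
        if sov[own_brand] == 0 and any(sov[c] > 0 for c in competitors):
            gaps.append(prompt)
    return gaps


# Hypothetical captured answers, keyed by prompt.
results = {
    "best crm for startups": ["Try AcmeCRM or BetaDesk.", "BetaDesk is a popular pick."],
    "crm with free tier": ["AcmeCRM and OurBrand both offer free tiers."],
}
all_responses = [r for answers in results.values() for r in answers]
sov = share_of_voice(all_responses, ["OurBrand", "AcmeCRM", "BetaDesk"])
gaps = citation_gaps(results, "OurBrand", ["AcmeCRM", "BetaDesk"])
```

In this toy data, OurBrand appears in one of three answers, and the "best crm for startups" prompt is flagged as a gap because only competitors show up there.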

How AI Citation Tracking Works Under the Hood

The technical challenge here is real. Unlike a search engine that returns a stable list of links, an LLM can produce different answers to the same prompt minutes apart.

AI citation trackers handle this by simulating human interactions at scale. They run large libraries of prompts (conversational questions that mirror real user behavior) across multiple platforms. Because of model volatility, they use high-frequency sampling: each prompt gets run dozens or hundreds of times across different locations and settings, so the resulting visibility score reflects a stable average rather than a single lucky or unlucky response.
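To see why the number of runs matters, here is a minimal sketch that puts a confidence interval around a mention rate. It uses the Wilson score interval, which is a standard choice for proportions but an assumption here; the article’s sources don’t say which statistic any particular tracker uses. The run counts are made up.

```python
import math


def wilson_interval(hits, runs, z=1.96):
    """Approximate 95% Wilson score interval for a mention rate of hits/runs."""
    if runs == 0:
        return (0.0, 1.0)
    p = hits / runs
    denom = 1 + z**2 / runs
    center = (p + z**2 / (2 * runs)) / denom
    half = z * math.sqrt(p * (1 - p) / runs + z**2 / (4 * runs**2)) / denom
    return (max(0.0, center - half), min(1.0, center + half))


# Same observed 40% mention rate, very different certainty.
low_10, high_10 = wilson_interval(4, 10)      # 10 runs of one prompt
low_200, high_200 = wilson_interval(80, 200)  # 200 runs of the same prompt
```

With 10 runs the interval spans roughly 17% to 69%, too wide to tell a real change from noise. With 200 runs it narrows to roughly 33% to 47%.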

Most serious tools follow the logic of the RAG (Retrieval-Augmented Generation) pipeline. They monitor which URLs the AI is pulling in real time, track which specific passages from those URLs were extracted for synthesis, and record which sources were ultimately credited in the final response. This breakdown pinpoints where the failure happens: a retrieval issue (the site isn’t being crawled) versus a synthesis issue (the content isn’t structured clearly enough to be used).
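The stage-by-stage logic can be sketched as a small classifier. The three boolean fields below are hypothetical: they stand in for whatever signals a tracker can actually observe at each stage. The "attribution" label for the third stage is my own naming and does not come from any specific tool.

```python
from dataclasses import dataclass


@dataclass
class PromptRun:
    retrieved: bool  # the URL was among the pages the engine fetched
    extracted: bool  # a passage from the URL was used during synthesis
    credited: bool   # the URL was cited in the final answer


def diagnose(run: PromptRun) -> str:
    """Return the first pipeline stage at which the page dropped out."""
    if not run.retrieved:
        return "retrieval"    # the page never reached the model
    if not run.extracted:
        return "synthesis"    # fetched, but nothing usable was pulled from it
    if not run.credited:
        return "attribution"  # used, but not credited as a source
    return "cited"


stage = diagnose(PromptRun(retrieved=True, extracted=False, credited=False))
```

Here `stage` is `"synthesis"`: the page was fetched but not used, which points at content structure rather than crawlability.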

Continuous monitoring matters more here than in traditional SEO. A model update can shift a brand from primary source to completely omitted overnight. And source decay is real: the median citation half-life for non-network domains is roughly 4.5 weeks. Content that isn’t refreshed falls out of the citation pool on a rolling basis.
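As a rough illustration of what a 4.5-week half-life implies, the sketch below assumes simple exponential decay. That is a modeling assumption on my part: a median half-life does not guarantee every domain decays this way, and the function name is hypothetical.

```python
def citation_survival(weeks, half_life_weeks=4.5):
    """Expected fraction of citations still present after `weeks`, assuming exponential decay."""
    return 0.5 ** (weeks / half_life_weeks)


after_one_half_life = citation_survival(4.5)  # 0.5
after_two_half_lives = citation_survival(9)   # 0.25
after_a_quarter = citation_survival(13)       # roughly 0.135
```

Under this assumption, content left untouched for a quarter would keep only about one in seven of its original citations.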

ChatGPT vs. Perplexity vs. Gemini: Do They Cite the Same Sources?

They don’t. There’s only an 11% domain overlap between sources cited by ChatGPT and those cited by Perplexity for identical queries. That’s the number that kills single-platform monitoring strategies.
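You can run the same kind of comparison on your own captured citations. The sketch below measures overlap as the Jaccard index of cited domains (shared domains divided by all distinct domains). That definition is an assumption, since the 11% figure’s exact methodology isn’t stated here. The URLs are illustrative.

```python
from urllib.parse import urlparse


def cited_domains(urls):
    """Normalize a list of cited URLs to a set of hostnames without a leading 'www.'."""
    domains = set()
    for url in urls:
        host = urlparse(url).hostname or ""
        if host.startswith("www."):
            host = host[4:]
        if host:
            domains.add(host)
    return domains


def domain_overlap(urls_a, urls_b):
    """Jaccard overlap between the domains cited by two platforms."""
    a, b = cited_domains(urls_a), cited_domains(urls_b)
    union = a | b
    return len(a & b) / len(union) if union else 0.0


chatgpt_urls = [
    "https://en.wikipedia.org/wiki/Customer_relationship_management",
    "https://www.g2.com/categories/crm",
    "https://www.example.com/blog/crm-guide",
]
perplexity_urls = [
    "https://g2.com/categories/crm",
    "https://www.reddit.com/r/sales/",
    "https://www.youtube.com/watch?v=abc",
]
overlap = domain_overlap(chatgpt_urls, perplexity_urls)
```

In this example one domain out of five is shared, so `overlap` is 0.2.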

| Platform | Avg. Citations per Response | Freshness Sensitivity | Key Bias |
|---|---|---|---|
| ChatGPT | 7.92 | Moderate (60-day window) | High-authority domains, Wikipedia, major news |
| Perplexity | 21.87 | Extreme (30-day window) | Reddit, YouTube, niche technical docs |
| Gemini / AI Mode | 8.34 | Moderate (90-day window) | E-E-A-T signals, Google Knowledge Graph |

ChatGPT’s citation behavior

ChatGPT’s web search draws heavily on the Bing index, alongside OpenAI’s own crawlers. It favors a small set of high-authority sources: major publications, Wikipedia, established industry journals. It’s 3.5 times more likely to cite an established industry journal than a niche blog. For B2B brands, it functions as a curator of established reputations, not a discovery engine for emerging players.

Perplexity’s citation behavior

Perplexity is built for recency. It cites nearly three times more sources per response than ChatGPT and actively surfaces secondary sources — Reddit threads, YouTube videos, specialized documentation. 82% of its cited content was updated within the last 30 days. If your content publishing cadence is slow, Perplexity will quietly deprioritize you.

Gemini’s citation behavior

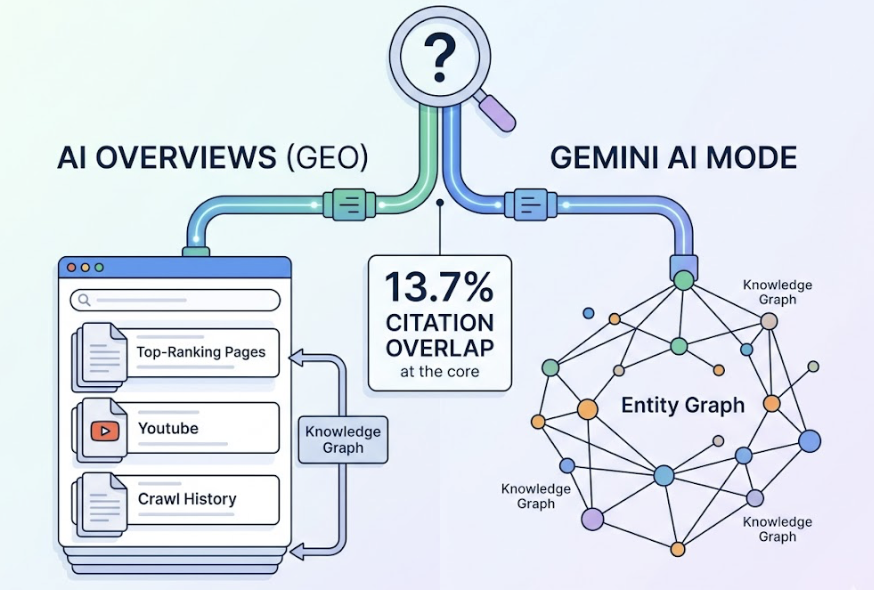

Google’s AI systems draw from two decades of crawl history and a proprietary Knowledge Graph. They weight E-E-A-T signals heavily. There’s also a meaningful internal divergence: Google AI Overviews and the Gemini-powered AI Mode only cite the same URLs 13.7% of the time. AI Overviews lean toward top-ranking pages and YouTube. AI Mode behaves more like a conversational assistant pulling from a broader entity graph.

One-platform monitoring misses almost everything that matters.

How to Start Tracking Your AI Citations in 30 Days

The transition from keyword tracking to citation tracking follows a four-week rhythm.

Week 1: Build your Core Prompt Set. Stop tracking keywords. Start tracking prompts — the conversational questions your target customers actually ask. Compile 30 to 50 prompts covering brand-specific questions, category comparisons, and problem-aware queries. Run them through an AI visibility checker to establish a baseline score across all three platforms.
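A Core Prompt Set can start as a small, tagged list. The sketch below generates one from a few templates covering the three prompt types named above. The templates, brand, and category strings are placeholders; real prompts should come from the questions your customers actually ask.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Prompt:
    text: str
    intent: str  # "brand", "comparison", or "problem"


def build_prompt_set(brand, category, audiences, problems):
    """Expand simple templates into a tagged list of prompts."""
    prompts = [
        Prompt(f"Is {brand} a good {category}?", "brand"),
        Prompt(f"What are the alternatives to {brand}?", "brand"),
    ]
    prompts += [Prompt(f"What is the best {category} for {a}?", "comparison") for a in audiences]
    prompts += [Prompt(f"How do I {p}?", "problem") for p in problems]
    return prompts


prompt_set = build_prompt_set(
    brand="OurBrand",
    category="CRM",
    audiences=["startups", "agencies"],
    problems=["track sales leads without a spreadsheet"],
)
```

Tagging each prompt with its intent lets you break the baseline score down by prompt type later.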

Week 2: Run cross-platform capture and source analysis. Extract every cited URL and brand mention from the AI responses across ChatGPT, Perplexity, and Gemini. Topify’s Source Analysis feature is built for exactly this step: it reverse-engineers which third-party domains are driving competitor citations and outputs a prioritized PR target list — the external sites that need your content to appear before AI will trust you.
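If you want to prototype the capture step by hand before adopting a tool, the extraction itself can be approximated with regular expressions. This is a minimal sketch that assumes answers arrive as plain text with inline URLs. It is not how Topify or any other product works internally, and the sample answer is invented.

```python
import re

URL_PATTERN = re.compile(r"https?://[^\s)\]>]+")


def extract_citations(answer_text, brands):
    """Pull cited URLs and whole-word brand mentions out of one AI answer."""
    urls = [u.rstrip(".,;:") for u in URL_PATTERN.findall(answer_text)]
    mentions = [
        b for b in brands
        if re.search(rf"\b{re.escape(b)}\b", answer_text, re.IGNORECASE)
    ]
    return {"urls": urls, "mentions": mentions}


answer = (
    "OurBrand and AcmeCRM are solid options [1]. "
    "Sources: [1] https://www.g2.com/categories/crm."
)
captured = extract_citations(answer, ["OurBrand", "AcmeCRM", "BetaDesk"])
```

Here `captured["urls"]` holds the single G2 link, with the trailing period stripped, and `captured["mentions"]` lists OurBrand and AcmeCRM but not BetaDesk.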

Week 3: Identify Citation Gaps. With the data captured, map the prompts where competitors appear and you don’t. Analyze the authority weight of your mentions. Are you being recommended as a primary solution or buried as a footnote in the third sentence?
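Prominence can be approximated by where in the answer a brand first appears. The sketch below splits an answer into sentences with a naive regular expression and reports the index of the first mention. Both the splitting rule and the idea of using sentence position as a proxy for authority weight are simplifying assumptions.

```python
import re


def mention_prominence(answer_text, brand):
    """Return (index_of_first_mentioning_sentence, total_sentences), or None if absent."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", answer_text.strip()) if s]
    for index, sentence in enumerate(sentences):
        if brand.lower() in sentence.lower():
            return index, len(sentences)
    return None


answer = (
    "AcmeCRM is the top pick for startups. "
    "BetaDesk is a close second. "
    "Some teams also use OurBrand."
)
leader = mention_prominence(answer, "AcmeCRM")   # (0, 3)
ours = mention_prominence(answer, "OurBrand")    # (2, 3)
```

A first mention at index 0 suggests the brand is framed as the primary recommendation. An index of 2 out of 3 sentences is the "footnote in the third sentence" case.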

Week 4: Optimize and monitor. Increase fact density in your core content (concrete statistics, named sources). Improve structural clarity (H1/H2 hierarchy, FAQ schema). Implement Organization and Product schema markup. Then monitor whether your Visibility Score and Share of Voice respond. Brands using systematic GEO approaches have reported significant increases in AI mentions within two weeks of targeted optimization.
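For the schema step, Organization markup is usually embedded as JSON-LD inside a script tag. The sketch below builds a minimal example with Python’s `json` module. The property names (`name`, `url`, `sameAs`) come from schema.org’s Organization type, while every value is a placeholder to replace with your own details.

```python
import json

# Placeholder values; only the schema.org property names are real.
organization = {
    "@context": "https://schema.org",
    "@type": "Organization",
    "name": "OurBrand",
    "url": "https://www.example.com",
    "sameAs": [
        "https://www.linkedin.com/company/example",
        "https://www.g2.com/products/example",
    ],
}

json_ld = json.dumps(organization, indent=2)
script_tag = f'<script type="application/ld+json">\n{json_ld}\n</script>'
```

The `sameAs` links are where the multi-source corroboration idea meets markup: they point a crawler at third-party profiles that describe the same entity.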

5 Signs Your Brand Is Losing Ground in AI Citations Right Now

These are diagnostic signals, not vanity metrics. If you’re seeing two or more of these, the problem is already compounding.

1. Your rankings are stable but your AI Visibility Score is declining. This is the clearest sign of low extractability. Your content exists but isn’t structured clearly enough for a model to pull facts from it with confidence. Verbose content without structured data fails the synthesis test even when it ranks.

2. Competitors dominate transactional prompts. If Topify’s Source Share data shows competitors cited in 70%+ of purchase-intent queries (“What is the best [product] for [use case]?”) while you’re under 10%, you have a multi-source corroboration problem. AI sees competitors discussed across many authoritative domains. It sees you only on your own site.

3. Sentiment is shifting toward neutral. When AI citation tracking reveals that mentions of your brand are accumulating caveats or becoming factually hedged, the model is likely retrieving outdated or negative content from Reddit or Quora. Your reputation moat is leaking.

4. You’re disappearing from niche, long-tail queries. Research shows that citation changes are overwhelmingly binary: a domain goes from cited to not cited rather than sliding down gradually. Disappearing from fringe queries first is the early warning that your content freshness is falling below the model’s threshold.

5. High impressions, falling CTR in Search Console. If your brand appears in AI Overviews but your click-through rate on those queries has dropped, and you’re not the primary cited source in the answer, you’re effectively supplying data that helps a competitor win the customer’s decision.

Conclusion

AI citations are the new first impression. A potential customer who never visits your website can still form a complete opinion about your brand based on how — or whether — an AI describes you.

The measurement tools most marketing teams rely on were built for a different era. Zero-click search and generative synthesis have made a significant portion of brand discovery invisible to traditional analytics. That gap is only widening.

The path forward isn’t complicated, but it requires a different set of metrics. Identify your Core Prompt Set. Run cross-platform capture. Find your Citation Gaps. Optimize for fact density and entity clarity. Then monitor whether the model’s behavior actually changes.

Brands that build this workflow now will have a significant data advantage over those that start when the shift is already complete.

FAQ

Is an AI citation tracker the same as a rank tracker?

No. A rank tracker measures where a URL sits in an ordered list of links. An AI citation tracker measures how frequently your brand is mentioned, how prominently it’s positioned, and what sentiment surrounds it inside a synthesized narrative answer. Rank trackers measure where you are. Citation trackers measure whether you’re recommended at all.

How often does AI change what it cites?

High-authority sources are relatively stable — 96.8% of citations remain consistent week-to-week. But when changes happen, they’re usually binary. Content either stays in the citation pool or drops out entirely. Pages updated within the last 14 days are cited 2.3 times more often than older content.

Do I need separate tools for ChatGPT and Perplexity?

Not necessarily separate tools, but you do need coverage of both. With only 11% overlap in cited sources between the two platforms, single-platform monitoring gives you a severely incomplete picture. A reliable tracker needs to cover ChatGPT, Perplexity, and Gemini at minimum to reflect how your target audience actually searches.

Can I track competitor citations too?

Yes, and you should. Running identical prompts for competitor brands lets you calculate relative Share of Voice and map the specific Citation Gaps where competitors are winning discovery opportunities you’re currently missing.